Muhammad Saad Saeed

Robust Harmful Meme Detection under Missing Modalities via Shared Representation Learning

Feb 01, 2026Abstract:Internet memes are powerful tools for communication, capable of spreading political, psychological, and sociocultural ideas. However, they can be harmful and can be used to disseminate hate toward targeted individuals or groups. Although previous studies have focused on designing new detection methods, these often rely on modal-complete data, such as text and images. In real-world settings, however, modalities like text may be missing due to issues like poor OCR quality, making existing methods sensitive to missing information and leading to performance deterioration. To address this gap, in this paper, we present the first-of-its-kind work to comprehensively investigate the behavior of harmful meme detection methods in the presence of modal-incomplete data. Specifically, we propose a new baseline method that learns a shared representation for multiple modalities by projecting them independently. These shared representations can then be leveraged when data is modal-incomplete. Experimental results on two benchmark datasets demonstrate that our method outperforms existing approaches when text is missing. Moreover, these results suggest that our method allows for better integration of visual features, reducing dependence on text and improving robustness in scenarios where textual information is missing. Our work represents a significant step forward in enabling the real-world application of harmful meme detection, particularly in situations where a modality is absent.

Linking Faces and Voices Across Languages: Insights from the FAME 2026 Challenge

Dec 23, 2025Abstract:Over half of the world's population is bilingual and people often communicate under multilingual scenarios. The Face-Voice Association in Multilingual Environments (FAME) 2026 Challenge, held at ICASSP 2026, focuses on developing methods for face-voice association that are effective when the language at test-time is different than the training one. This report provides a brief summary of the challenge.

Realism to Deception: Investigating Deepfake Detectors Against Face Enhancement

Sep 08, 2025Abstract:Face enhancement techniques are widely used to enhance facial appearance. However, they can inadvertently distort biometric features, leading to significant decrease in the accuracy of deepfake detectors. This study hypothesizes that these techniques, while improving perceptual quality, can degrade the performance of deepfake detectors. To investigate this, we systematically evaluate whether commonly used face enhancement methods can serve an anti-forensic role by reducing detection accuracy. We use both traditional image processing methods and advanced GAN-based enhancements to evaluate the robustness of deepfake detectors. We provide a comprehensive analysis of the effectiveness of these enhancement techniques, focusing on their impact on Na\"ive, Spatial, and Frequency-based detection methods. Furthermore, we conduct adversarial training experiments to assess whether exposure to face enhancement transformations improves model robustness. Experiments conducted on the FaceForensics++, DeepFakeDetection, and CelebDF-v2 datasets indicate that even basic enhancement filters can significantly reduce detection accuracy achieving ASR up to 64.63\%. In contrast, GAN-based techniques further exploit these vulnerabilities, achieving ASR up to 75.12\%. Our results demonstrate that face enhancement methods can effectively function as anti-forensic tools, emphasizing the need for more resilient and adaptive forensic methods.

Modality Invariant Multimodal Learning to Handle Missing Modalities: A Single-Branch Approach

Aug 14, 2024

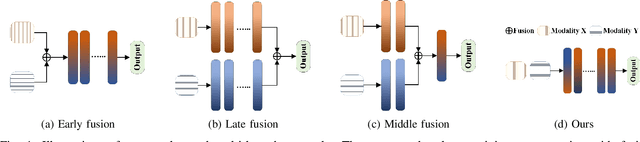

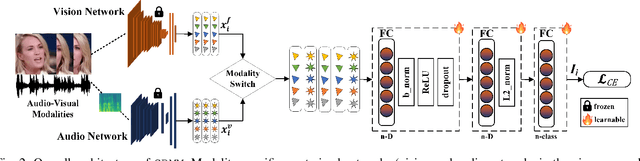

Abstract:Multimodal networks have demonstrated remarkable performance improvements over their unimodal counterparts. Existing multimodal networks are designed in a multi-branch fashion that, due to the reliance on fusion strategies, exhibit deteriorated performance if one or more modalities are missing. In this work, we propose a modality invariant multimodal learning method, which is less susceptible to the impact of missing modalities. It consists of a single-branch network sharing weights across multiple modalities to learn inter-modality representations to maximize performance as well as robustness to missing modalities. Extensive experiments are performed on four challenging datasets including textual-visual (UPMC Food-101, Hateful Memes, Ferramenta) and audio-visual modalities (VoxCeleb1). Our proposed method achieves superior performance when all modalities are present as well as in the case of missing modalities during training or testing compared to the existing state-of-the-art methods.

Chameleon: Images Are What You Need For Multimodal Learning Robust To Missing Modalities

Jul 23, 2024Abstract:Multimodal learning has demonstrated remarkable performance improvements over unimodal architectures. However, multimodal learning methods often exhibit deteriorated performances if one or more modalities are missing. This may be attributed to the commonly used multi-branch design containing modality-specific streams making the models reliant on the availability of a complete set of modalities. In this work, we propose a robust textual-visual multimodal learning method, Chameleon, that completely deviates from the conventional multi-branch design. To enable this, we present the unification of input modalities into one format by encoding textual modality into visual representations. As a result, our approach does not require modality-specific branches to learn modality-independent multimodal representations making it robust to missing modalities. Extensive experiments are performed on four popular challenging datasets including Hateful Memes, UPMC Food-101, MM-IMDb, and Ferramenta. Chameleon not only achieves superior performance when all modalities are present at train/test time but also demonstrates notable resilience in the case of missing modalities.

Face-voice Association in Multilingual Environments (FAME) Challenge 2024 Evaluation Plan

Apr 16, 2024Abstract:The advancements of technology have led to the use of multimodal systems in various real-world applications. Among them, the audio-visual systems are one of the widely used multimodal systems. In the recent years, associating face and voice of a person has gained attention due to presence of unique correlation between them. The Face-voice Association in Multilingual Environments (FAME) Challenge 2024 focuses on exploring face-voice association under a unique condition of multilingual scenario. This condition is inspired from the fact that half of the world's population is bilingual and most often people communicate under multilingual scenario. The challenge uses a dataset namely, Multilingual Audio-Visual (MAV-Celeb) for exploring face-voice association in multilingual environments. This report provides the details of the challenge, dataset, baselines and task details for the FAME Challenge.

Single-branch Network for Multimodal Training

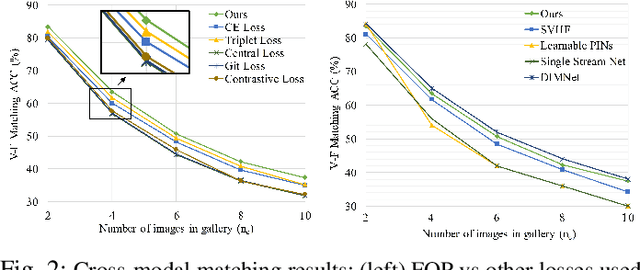

Mar 10, 2023Abstract:With the rapid growth of social media platforms, users are sharing billions of multimedia posts containing audio, images, and text. Researchers have focused on building autonomous systems capable of processing such multimedia data to solve challenging multimodal tasks including cross-modal retrieval, matching, and verification. Existing works use separate networks to extract embeddings of each modality to bridge the gap between them. The modular structure of their branched networks is fundamental in creating numerous multimodal applications and has become a defacto standard to handle multiple modalities. In contrast, we propose a novel single-branch network capable of learning discriminative representation of unimodal as well as multimodal tasks without changing the network. An important feature of our single-branch network is that it can be trained either using single or multiple modalities without sacrificing performance. We evaluated our proposed single-branch network on the challenging multimodal problem (face-voice association) for cross-modal verification and matching tasks with various loss formulations. Experimental results demonstrate the superiority of our proposed single-branch network over the existing methods in a wide range of experiments. Code: https://github.com/msaadsaeed/SBNet

Speaker Recognition in Realistic Scenario Using Multimodal Data

Feb 25, 2023

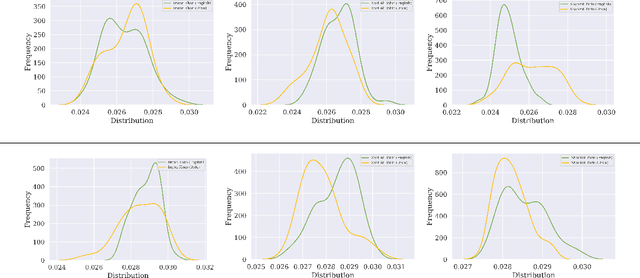

Abstract:In recent years, an association is established between faces and voices of celebrities leveraging large scale audio-visual information from YouTube. The availability of large scale audio-visual datasets is instrumental in developing speaker recognition methods based on standard Convolutional Neural Networks. Thus, the aim of this paper is to leverage large scale audio-visual information to improve speaker recognition task. To achieve this task, we proposed a two-branch network to learn joint representations of faces and voices in a multimodal system. Afterwards, features are extracted from the two-branch network to train a classifier for speaker recognition. We evaluated our proposed framework on a large scale audio-visual dataset named VoxCeleb$1$. Our results show that addition of facial information improved the performance of speaker recognition. Moreover, our results indicate that there is an overlap between face and voice.

Learning Branched Fusion and Orthogonal Projection for Face-Voice Association

Aug 22, 2022

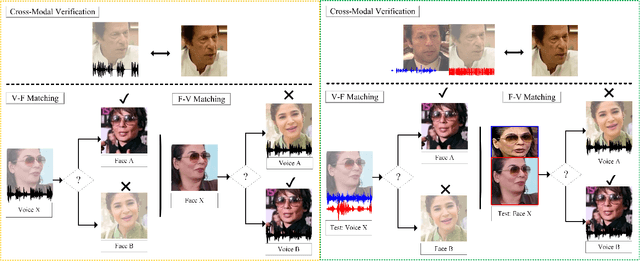

Abstract:Recent years have seen an increased interest in establishing association between faces and voices of celebrities leveraging audio-visual information from YouTube. Prior works adopt metric learning methods to learn an embedding space that is amenable for associated matching and verification tasks. Albeit showing some progress, such formulations are, however, restrictive due to dependency on distance-dependent margin parameter, poor run-time training complexity, and reliance on carefully crafted negative mining procedures. In this work, we hypothesize that an enriched representation coupled with an effective yet efficient supervision is important towards realizing a discriminative joint embedding space for face-voice association tasks. To this end, we propose a light-weight, plug-and-play mechanism that exploits the complementary cues in both modalities to form enriched fused embeddings and clusters them based on their identity labels via orthogonality constraints. We coin our proposed mechanism as fusion and orthogonal projection (FOP) and instantiate in a two-stream network. The overall resulting framework is evaluated on VoxCeleb1 and MAV-Celeb datasets with a multitude of tasks, including cross-modal verification and matching. Results reveal that our method performs favourably against the current state-of-the-art methods and our proposed formulation of supervision is more effective and efficient than the ones employed by the contemporary methods. In addition, we leverage cross-modal verification and matching tasks to analyze the impact of multiple languages on face-voice association. Code is available: \url{https://github.com/msaadsaeed/FOP}

Fusion and Orthogonal Projection for Improved Face-Voice Association

Dec 20, 2021

Abstract:We study the problem of learning association between face and voice, which is gaining interest in the computer vision community lately. Prior works adopt pairwise or triplet loss formulations to learn an embedding space amenable for associated matching and verification tasks. Albeit showing some progress, such loss formulations are, however, restrictive due to dependency on distance-dependent margin parameter, poor run-time training complexity, and reliance on carefully crafted negative mining procedures. In this work, we hypothesize that enriched feature representation coupled with an effective yet efficient supervision is necessary in realizing a discriminative joint embedding space for improved face-voice association. To this end, we propose a light-weight, plug-and-play mechanism that exploits the complementary cues in both modalities to form enriched fused embeddings and clusters them based on their identity labels via orthogonality constraints. We coin our proposed mechanism as fusion and orthogonal projection (FOP) and instantiate in a two-stream pipeline. The overall resulting framework is evaluated on a large-scale VoxCeleb dataset with a multitude of tasks, including cross-modal verification and matching. Results show that our method performs favourably against the current state-of-the-art methods and our proposed supervision formulation is more effective and efficient than the ones employed by the contemporary methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge