Marcel A. J. van Gerven

Symbolic Discovery of Stochastic Differential Equations with Genetic Programming

Mar 10, 2026

Abstract:Automated scientific discovery aims to improve scientific understanding through machine learning. A central approach in this field is symbolic regression, which uses genetic programming or sparse regression to learn interpretable mathematical expressions to explain observed data. Conventionally, the focus of symbolic regression is on identifying ordinary differential equations. The general view is that noise only complicates the recovery of deterministic dynamics. However, explicitly learning a symbolic function of the noise component in stochastic differential equations enhances modelling capacity, increases knowledge gain and enables generative sampling. We introduce a method for symbolic discovery of stochastic differential equations based on genetic programming, jointly optimizing drift and diffusion functions via the maximum likelihood estimate. Our results demonstrate accurate recovery of governing equations, efficient scaling to higher-dimensional systems, robustness to sparsely sampled problems and generalization to stochastic partial differential equations. This work extends symbolic regression toward interpretable discovery of stochastic dynamical systems, contributing to the automation of science in a noisy and dynamic world.
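
To make the idea of scoring drift and diffusion jointly by likelihood concrete, here is a minimal sketch. It assumes a scalar SDE and a Gaussian Euler-Maruyama transition density, which the abstract does not specify; the names `euler_maruyama_nll` and `simulate`, and the example drift and diffusion functions, are hypothetical and not taken from the paper.

```python
import math
import random


def euler_maruyama_nll(xs, dt, drift, diffusion):
    """Negative log-likelihood of a scalar path, assuming the transition
    x[k+1] ~ N(x[k] + drift(x[k]) * dt, diffusion(x[k])**2 * dt)."""
    nll = 0.0
    for x_now, x_next in zip(xs[:-1], xs[1:]):
        mean = x_now + drift(x_now) * dt
        var = diffusion(x_now) ** 2 * dt
        nll += 0.5 * math.log(2.0 * math.pi * var)
        nll += (x_next - mean) ** 2 / (2.0 * var)
    return nll


def simulate(x0, dt, n_steps, drift, diffusion, rng):
    """Generate a path with the Euler-Maruyama scheme (illustrative data only)."""
    xs = [x0]
    for _ in range(n_steps):
        x = xs[-1]
        noise = rng.gauss(0.0, 1.0) * math.sqrt(dt)
        xs.append(x + drift(x) * dt + diffusion(x) * noise)
    return xs


# Hypothetical ground truth: dx = -x dt + 0.5 dW.
rng = random.Random(0)
true_drift = lambda x: -x
true_diffusion = lambda x: 0.5
path = simulate(1.0, 0.01, 2000, true_drift, true_diffusion, rng)

# A candidate with the wrong diffusion term should score a higher NLL.
nll_true = euler_maruyama_nll(path, 0.01, true_drift, true_diffusion)
nll_wrong = euler_maruyama_nll(path, 0.01, true_drift, lambda x: 2.0)
```

In a symbolic search, a score of this kind could serve as the fitness of a candidate (drift, diffusion) pair, so that a mis-specified noise term is penalized even when the drift is correct.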

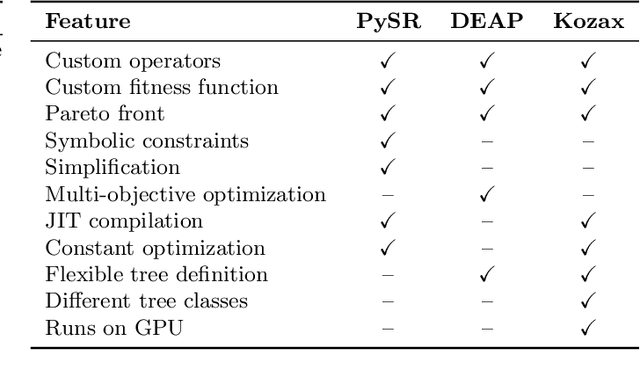

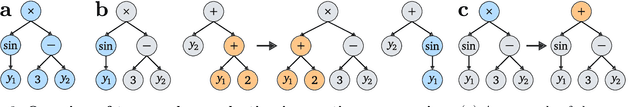

Kozax: Flexible and Scalable Genetic Programming in JAX

Feb 05, 2025

Abstract:Genetic programming is an optimization algorithm inspired by natural selection which automatically evolves the structure of computer programs. The resulting computer programs are interpretable and efficient compared to black-box models with fixed structure. The fitness evaluation in genetic programming suffers from high computational requirements, limiting the performance on difficult problems. To reduce the runtime, many implementations of genetic programming require a specific data format, making the applicability limited to specific problem classes. Consequently, there is no efficient genetic programming framework that is usable for a wide range of tasks. To this end, we developed Kozax, a genetic programming framework that evolves symbolic expressions for arbitrary problems. We implemented Kozax using JAX, a framework for high-performance and scalable machine learning, which allows the fitness evaluation to scale efficiently to large populations or datasets on GPU. Furthermore, Kozax offers constant optimization, custom operator definition and simultaneous evolution of multiple trees. We demonstrate successful applications of Kozax to discover equations of natural laws, recover equations of hidden dynamic variables and evolve a control policy. Overall, Kozax provides a general, fast, and scalable library to optimize white-box solutions in the realm of scientific computing.
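
As a rough illustration of what evaluating the fitness of a symbolic expression involves, the sketch below represents a candidate as a nested tuple and scores it by mean squared error. This is a generic pure-Python example and does not use or reflect the Kozax API; the tree encoding, `OPERATORS`, `evaluate` and `fitness` are all assumptions made for illustration.

```python
import operator

# Hypothetical operator set; a real framework would allow custom operators.
OPERATORS = {"add": operator.add, "sub": operator.sub, "mul": operator.mul}


def evaluate(tree, variables):
    """Recursively evaluate a tree of the form (op, left, right),
    where leaves are variable names (str) or numeric constants."""
    if isinstance(tree, tuple):
        name, left, right = tree
        return OPERATORS[name](evaluate(left, variables), evaluate(right, variables))
    if isinstance(tree, str):
        return variables[tree]
    return float(tree)


def fitness(tree, inputs, targets):
    """Mean squared error of the tree's predictions (lower is better)."""
    errors = [(evaluate(tree, v) - t) ** 2 for v, t in zip(inputs, targets)]
    return sum(errors) / len(errors)


# Candidate expression: x * 2.0 + y
candidate = ("add", ("mul", "x", 2.0), "y")
inputs = [{"x": 1.0, "y": 0.5}, {"x": -2.0, "y": 3.0}]
targets = [2.5, -1.0]
score = fitness(candidate, inputs, targets)
```

This loop runs once per candidate and per data point, which is the cost the abstract describes as the bottleneck that vectorized evaluation on GPU is meant to reduce.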

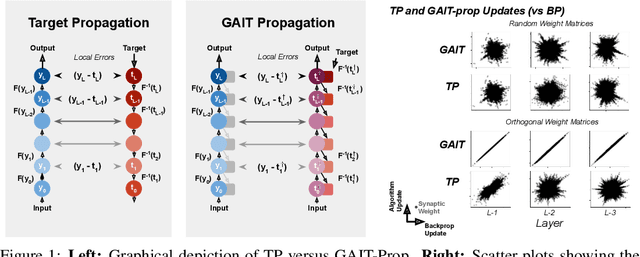

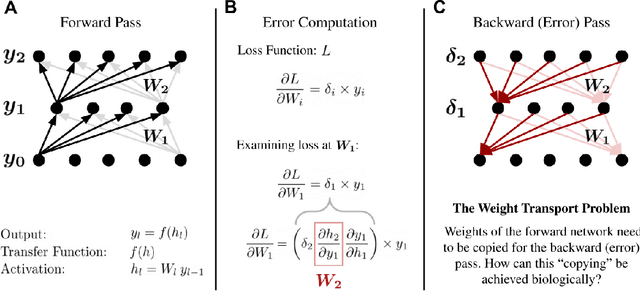

GAIT-prop: A biologically plausible learning rule derived from backpropagation of error

Jul 01, 2020

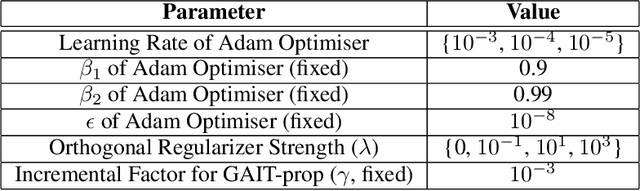

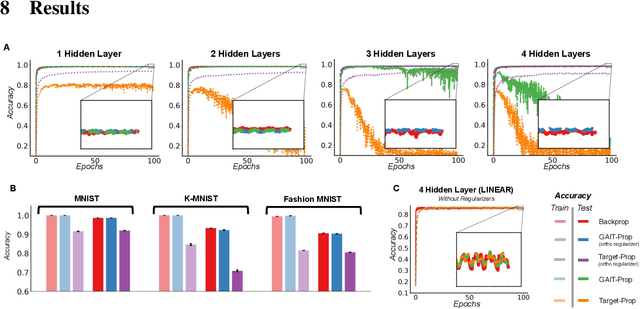

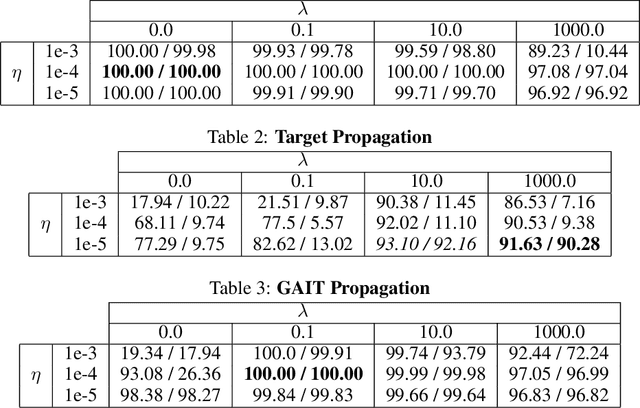

Abstract:Traditional backpropagation of error, though a highly successful algorithm for learning in artificial neural network models, includes features which are biologically implausible for learning in real neural circuits. An alternative called target propagation proposes to solve this implausibility by using a top-down model of neural activity to convert an error at the output of a neural network into layer-wise and plausible 'targets' for every unit. These targets can then be used to produce weight updates for network training. However, thus far, target propagation has been heuristically proposed without demonstrable equivalence to backpropagation. Here, we derive an exact correspondence between backpropagation and a modified form of target propagation (GAIT-prop) where the target is a small perturbation of the forward pass. Specifically, backpropagation and GAIT-prop give identical updates when synaptic weight matrices are orthogonal. In a series of simple computer vision experiments, we show near-identical performance between backpropagation and GAIT-prop with a soft orthogonality-inducing regularizer.
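
The abstract does not state the form of the soft orthogonality-inducing regularizer. One common choice, assumed here purely for illustration, is the squared Frobenius norm of `W^T W - I`; the function name `orthogonality_penalty` is hypothetical.

```python
import math


def orthogonality_penalty(W):
    """Squared Frobenius norm of (W^T W - I) for a matrix given as a list of rows.
    The penalty is zero exactly when the columns of W are orthonormal."""
    n_rows, n_cols = len(W), len(W[0])
    penalty = 0.0
    for i in range(n_cols):
        for j in range(n_cols):
            gram = sum(W[k][i] * W[k][j] for k in range(n_rows))
            target = 1.0 if i == j else 0.0
            penalty += (gram - target) ** 2
    return penalty


# A rotation matrix is orthogonal, so its penalty is (numerically) zero.
theta = 0.3
rotation = [[math.cos(theta), -math.sin(theta)],
            [math.sin(theta), math.cos(theta)]]
# Stretching one axis breaks orthogonality and is penalized.
stretched = [[2.0, 0.0], [0.0, 1.0]]

penalty_rotation = orthogonality_penalty(rotation)
penalty_stretched = orthogonality_penalty(stretched)
```

Adding a small multiple of such a term to the training loss pushes weight matrices toward the orthogonal regime in which, per the abstract, the two learning rules give identical updates.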

Explainable 3D Convolutional Neural Networks by Learning Temporal Transformations

Jun 29, 2020

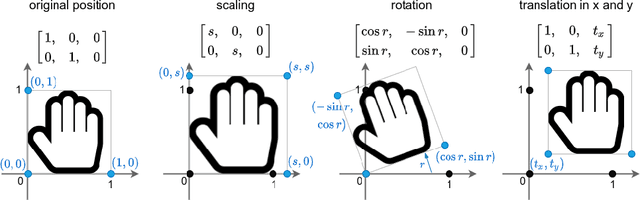

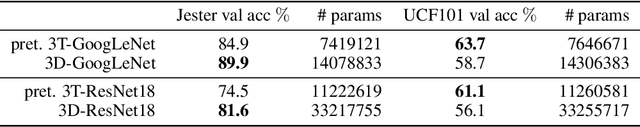

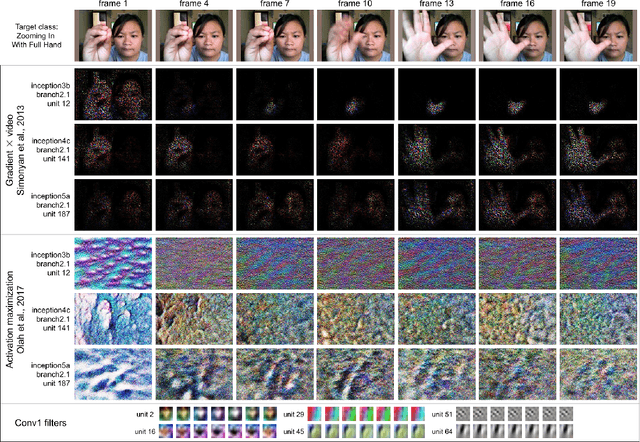

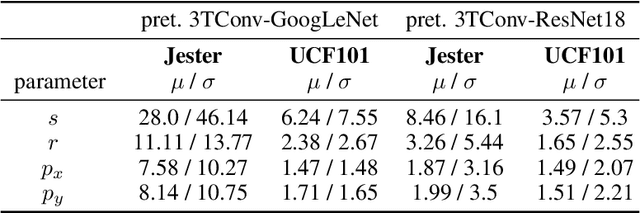

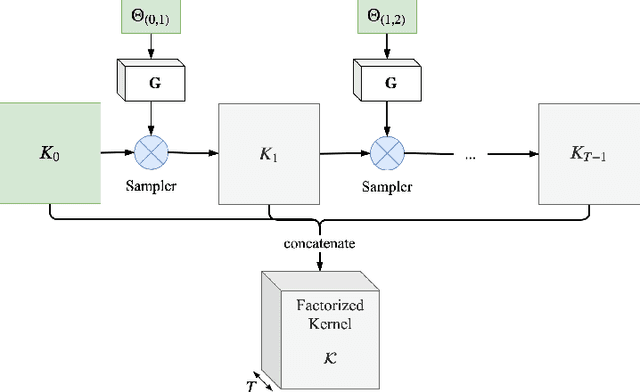

Abstract:In this paper we introduce the temporally factorized 3D convolution (3TConv) as an interpretable alternative to the regular 3D convolution (3DConv). In a 3TConv the 3D convolutional filter is obtained by learning a 2D filter and a set of temporal transformation parameters, resulting in a sparse filter where the 2D slices are sequentially dependent on each other in the temporal dimension. We demonstrate that 3TConv learns temporal transformations that afford a direct interpretation. The temporal parameters can be used in combination with various existing 2D visualization methods. We also show that insight about what the model learns can be achieved by analyzing the transformation parameter statistics on a layer and model level. Finally, we implicitly demonstrate that, in popular ConvNets, the 2DConv can be replaced with a 3TConv and that the weights can be transferred to yield pretrained 3TConvs. Pretrained 3TConvnets leverage more than a decade of work on traditional 2DConvNets by being able to make use of features that have been proven to deliver excellent results on image classification benchmarks.

Spike-Timing-Dependent Inference of Synaptic Weights

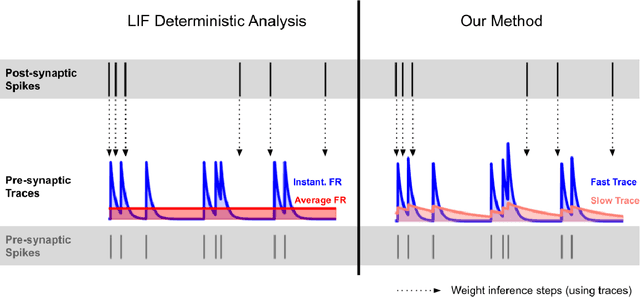

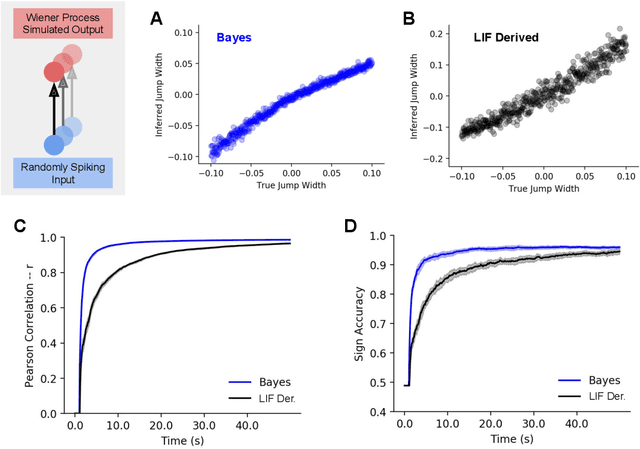

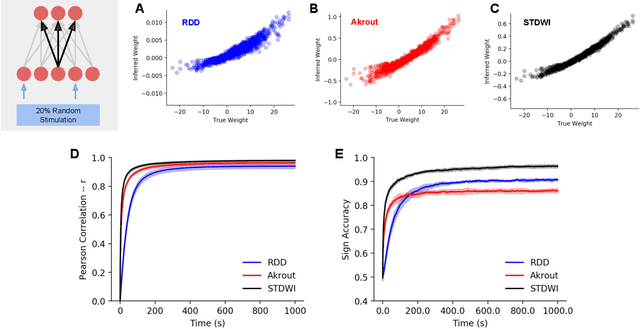

Mar 09, 2020

Abstract:A potential solution to the weight transport problem, which questions the biological plausibility of the backpropagation of error algorithm, is proposed. We derive our method based upon an (approximate) analysis of the dynamics of leaky integrate-and-fire neurons. We thereafter validate our method and show that the use of spike timing alone out-competes existing biologically plausible methods for synaptic weight inference in spiking neural network models. Furthermore, our proposed method is also more flexible, being applicable to any spiking neuron model, is conservative in how many parameters are required for implementation and can be deployed in an online fashion with minimal computational overhead. These features, together with its biological plausibility, make it an attractive candidate technique for weight inference at single synapses.

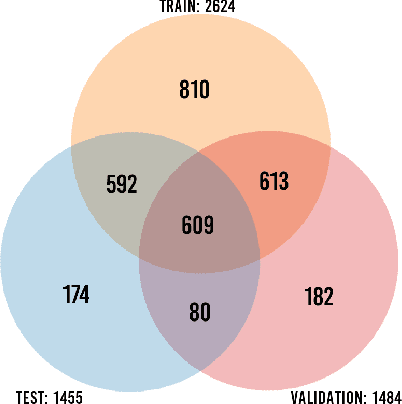

Background Hardly Matters: Understanding Personality Attribution in Deep Residual Networks

Dec 20, 2019

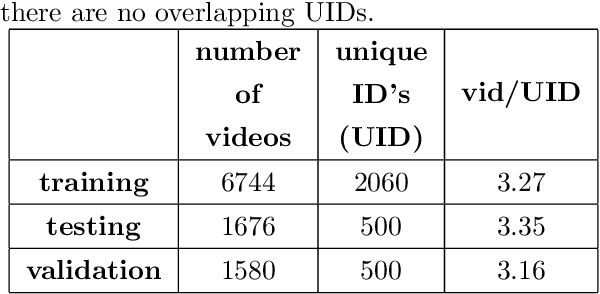

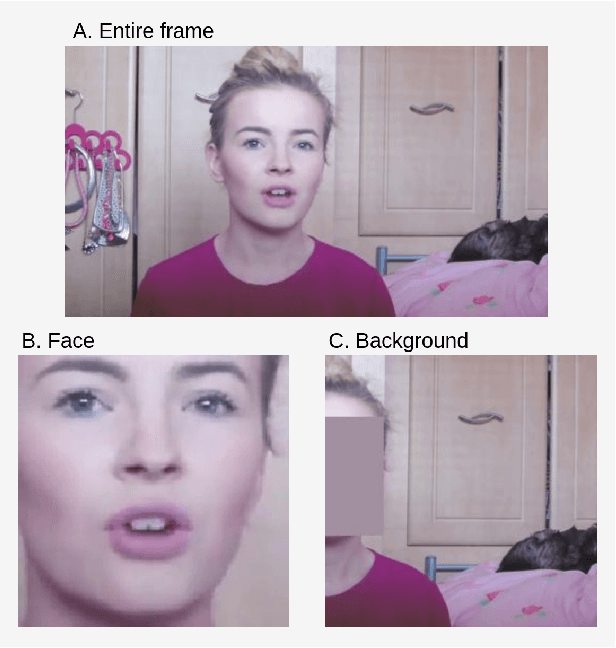

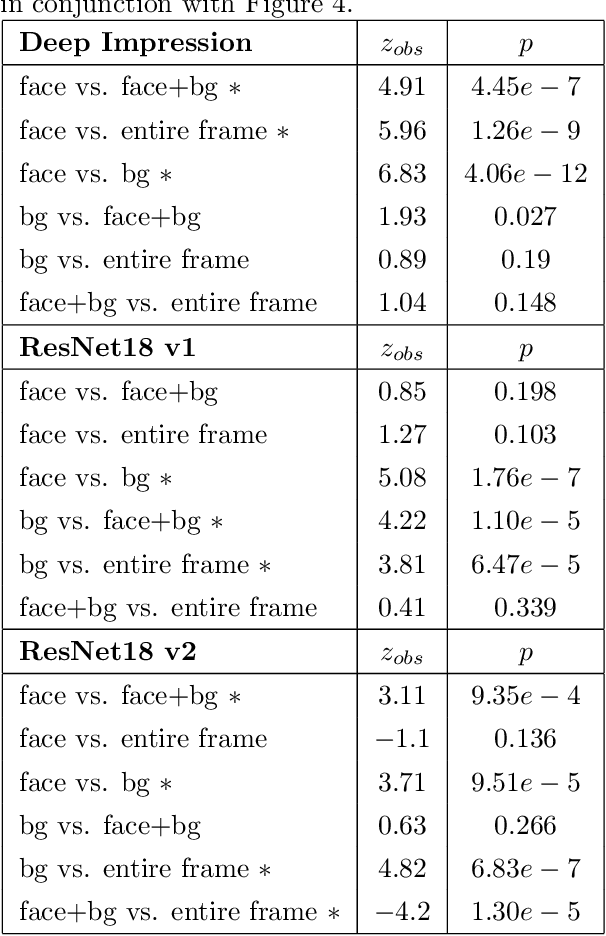

Abstract:Perceived personality traits attributed to an individual do not have to correspond to their actual personality traits and may be determined in part by the context in which one encounters a person. These apparent traits determine, to a large extent, how other people will behave towards them. Deep neural networks are increasingly being used to perform automated personality attribution (e.g., job interviews). It is important that we understand the driving factors behind the predictions, in humans and in deep neural networks. This paper explicitly studies the effect of the image background on apparent personality prediction while addressing two important confounds present in existing literature: overlapping data splits and including facial information in the background. Surprisingly, we found no evidence that background information improves model predictions for apparent personality traits. In fact, when background is explicitly added to the input, a decrease in performance was measured across all models.

Temporal Factorization of 3D Convolutional Kernels

Dec 09, 2019

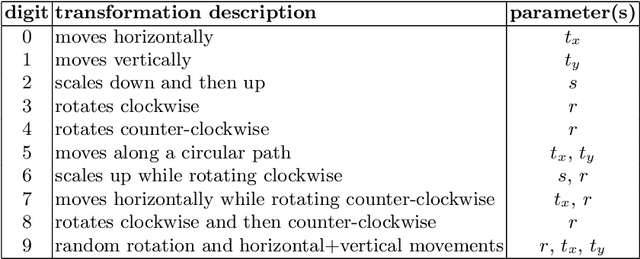

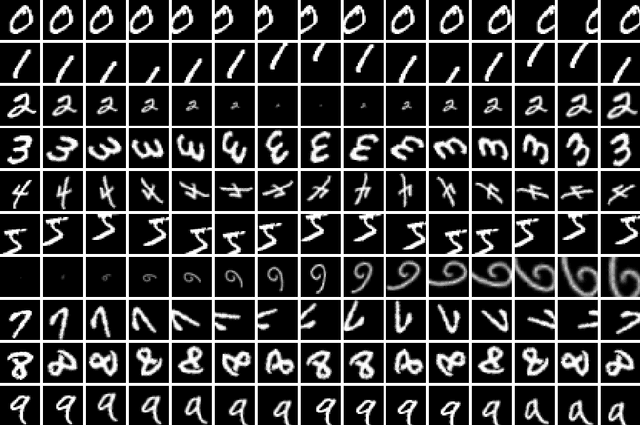

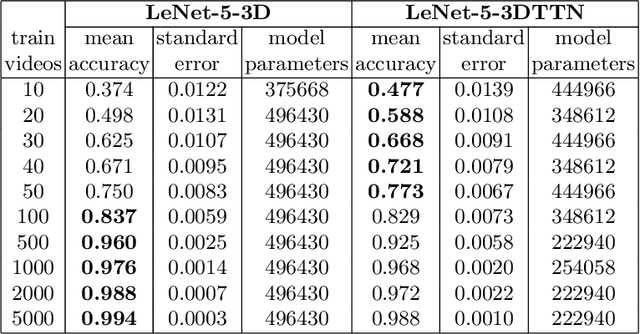

Abstract:3D convolutional neural networks are difficult to train because they are parameter-expensive and data-hungry. To solve these problems we propose a simple technique for learning 3D convolutional kernels efficiently requiring less training data. We achieve this by factorizing the 3D kernel along the temporal dimension, reducing the number of parameters and making training from data more efficient. Additionally we introduce a novel dataset called Video-MNIST to demonstrate the performance of our method. Our method significantly outperforms the conventional 3D convolution in the low data regime (1 to 5 videos per class). Finally, our model achieves competitive results in the high data regime (>10 videos per class) using up to 45% fewer parameters.

Causal inference using Bayesian non-parametric quasi-experimental design

Nov 15, 2019

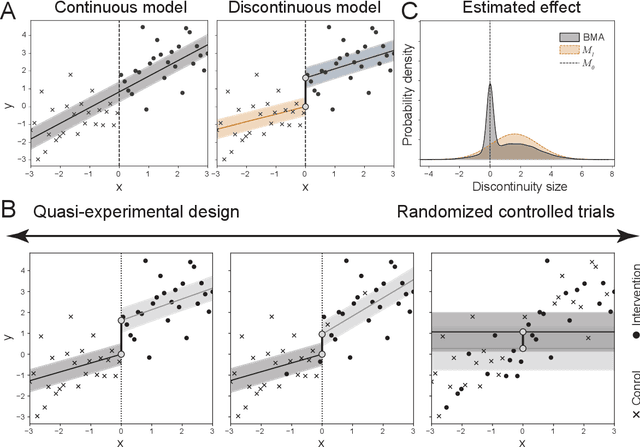

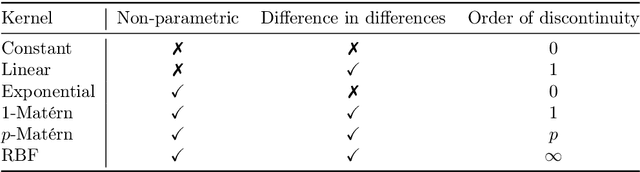

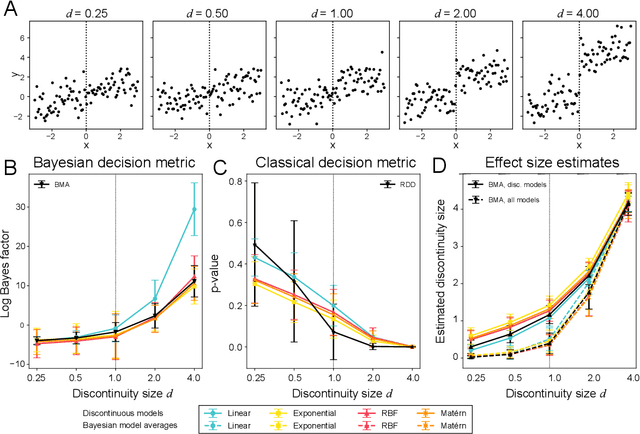

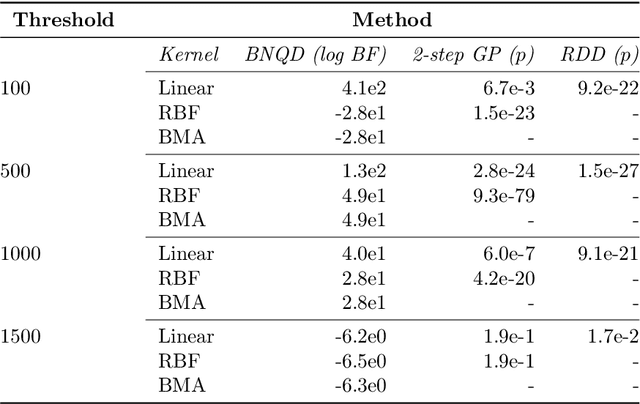

Abstract:The de facto standard for causal inference is the randomized controlled trial, where one compares a manipulated group with a control group in order to determine the effect of an intervention. However, this research design is not always realistically possible due to pragmatic or ethical concerns. In these situations, quasi-experimental designs may provide a solution, as these allow for causal conclusions at the cost of additional design assumptions. In this paper, we provide a generic framework for quasi-experimental design using Bayesian model comparison, and we show how it can be used as an alternative to several common research designs. We provide a theoretical motivation for a Gaussian process based approach and demonstrate its convenient use in a number of simulations. Finally, we apply the framework to determine the effect of population-based thresholds for municipality funding in France, of the 2005 smoking ban in Sicily on the number of acute coronary events, and of the effect of an alleged historical phantom border in the Netherlands on Dutch voting behaviour.

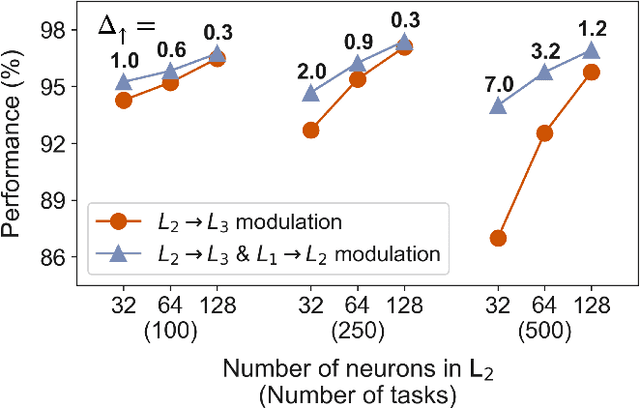

Modulation of early visual processing alleviates capacity limits in solving multiple tasks

Jul 30, 2019

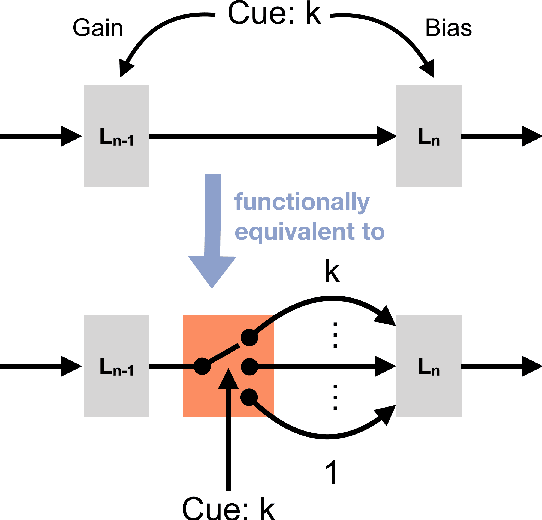

Abstract:In daily life situations, we have to perform multiple tasks given a visual stimulus, which requires task-relevant information to be transmitted through our visual system. When it is not possible to transmit all the possibly relevant information to higher layers, due to a bottleneck, task-based modulation of early visual processing might be necessary. In this work, we report how the effectiveness of modulating the early processing stage of an artificial neural network depends on the information bottleneck faced by the network. The bottleneck is quantified by the number of tasks the network has to perform and the neural capacity of the later stage of the network. The effectiveness is gauged by the performance on multiple object detection tasks, where the network is trained with a recent multi-task optimization scheme. By associating neural modulations with task-based switching of the state of the network and characterizing when such switching is helpful in early processing, our results provide a functional perspective towards understanding why task-based modulation of early neural processes might be observed in the primate visual cortex.

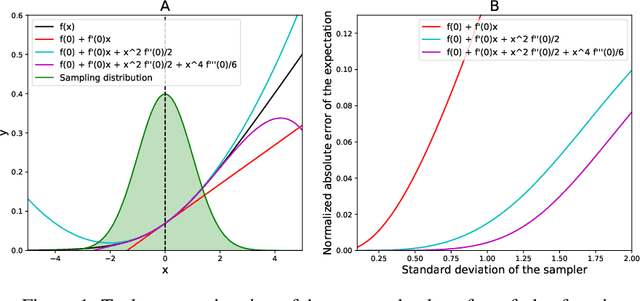

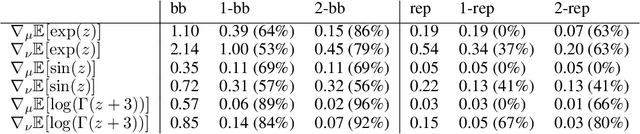

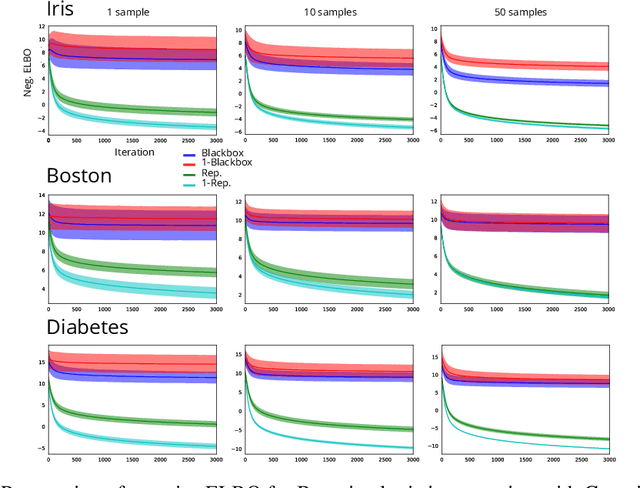

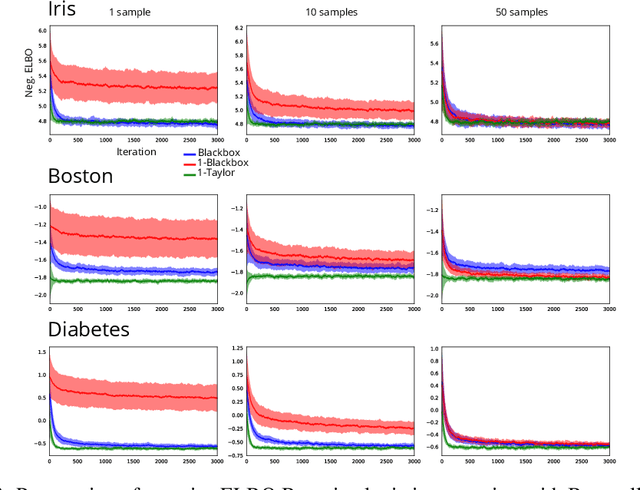

Perturbative estimation of stochastic gradients

Apr 08, 2019

Abstract:In this paper we introduce a family of stochastic gradient estimation techniques based on the perturbative expansion around the mean of the sampling distribution. We characterize the bias and variance of the resulting Taylor-corrected estimators using the Lagrange error formula. Furthermore, we introduce a family of variance reduction techniques that can be applied to other gradient estimators. Finally, we show that these new perturbative methods can be extended to discrete functions using analytic continuation. Using this technique, we derive a new gradient descent method for training stochastic networks with binary weights. In our experiments, we show that the perturbative correction improves the convergence of stochastic variational inference both in the continuous and in the discrete case.
