Jiali Wang

Robust and Efficient Communication in Multi-Agent Reinforcement Learning

Nov 14, 2025

Abstract:Multi-agent reinforcement learning (MARL) has made significant strides in enabling coordinated behaviors among autonomous agents. However, most existing approaches assume that communication is instantaneous, reliable, and has unlimited bandwidth; these conditions are rarely met in real-world deployments. This survey systematically reviews recent advances in robust and efficient communication strategies for MARL under realistic constraints, including message perturbations, transmission delays, and limited bandwidth. Furthermore, because the challenges of low-latency reliability, bandwidth-intensive data sharing, and communication-privacy trade-offs are central to practical MARL systems, we focus on three applications involving cooperative autonomous driving, distributed simultaneous localization and mapping, and federated learning. Finally, we identify key open challenges and future research directions, advocating a unified approach that co-designs communication, learning, and robustness to bridge the gap between theoretical MARL models and practical implementations.

Sub-diffraction terahertz backpropagation compressive imaging

May 05, 2025

Abstract:Terahertz single-pixel imaging (TSPI) has garnered significant attention due to its simplicity and cost-effectiveness. However, the relatively long wavelength of THz waves limits sub-diffraction-scale imaging resolution. Although the TSPI technique can achieve sub-wavelength resolution, it requires harsh experimental conditions and time-consuming processes. Here, we propose a sub-diffraction THz backpropagation compressive imaging technique. We illuminate the object with monochromatic continuous-wave THz radiation. The transmitted THz wave is modulated by prearranged patterns generated on the back surface of a 500-μm-thick silicon wafer, realized through photoexcited carriers using a 532-nm laser. The modulated THz wave is then recorded by a single-element detector. An untrained neural network is employed to iteratively reconstruct the object image with an ultralow compression ratio of 1.5625% under a physical model constraint, thus reducing the long sampling times. To further suppress diffraction-field effects, the network embeds angular spectrum propagation (ASP) theory to model the diffraction of THz waves during propagation; it thereby retrieves near-field information from the object, enabling sub-diffraction imaging with a spatial resolution of ~λ₀/7 (λ₀ = 833.3 μm at 0.36 THz) and eliminating the need for ultrathin photomodulators. This approach provides an efficient solution for advancing THz microscopic imaging and addressing other inverse imaging challenges.
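The figures quoted in this abstract can be checked with a few lines of arithmetic. The sketch below is only a sanity check, not part of the authors' method: it assumes the rounded value c = 3 × 10⁸ m/s (which reproduces the quoted 833.3 μm), and the 64 × 64 image size is a hypothetical example chosen to show what a 1.5625% (that is, 1/64) compression ratio means in measurement counts.

```python
# Sanity check of the numbers quoted in the abstract (illustrative only).
C_ROUNDED = 3.0e8  # speed of light in m/s, rounded (assumption)


def wavelength_um(freq_thz: float) -> float:
    """Free-space wavelength in micrometres for a frequency given in THz."""
    return C_ROUNDED / (freq_thz * 1e12) * 1e6


lambda0_um = wavelength_um(0.36)   # wavelength at 0.36 THz
resolution_um = lambda0_um / 7     # quoted resolution of about lambda0 / 7

# Compression ratio: measurements taken divided by pixels reconstructed.
# The 64 x 64 image size is hypothetical; the abstract does not state it.
pixels = 64 * 64
compression_ratio = 1.5625 / 100
measurements = round(pixels * compression_ratio)

print(f"lambda0 = {lambda0_um:.1f} um, resolution = {resolution_um:.0f} um")
print(f"{measurements} measurements for {pixels} pixels")
```

Under these assumptions, a resolution of λ₀/7 corresponds to roughly 119 μm, and a hypothetical 4096-pixel image would need only 64 single-pixel measurements.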

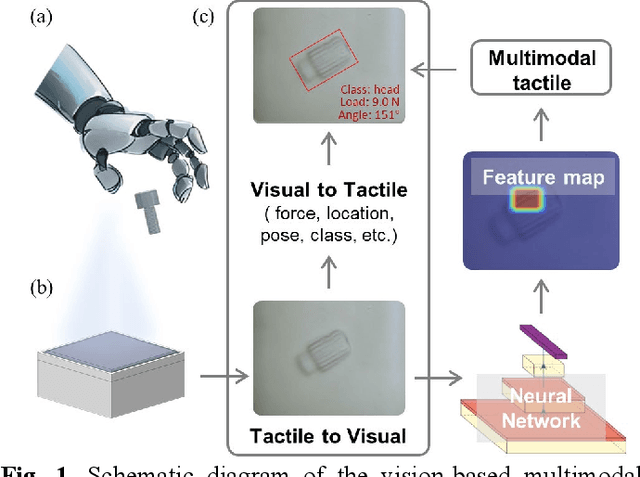

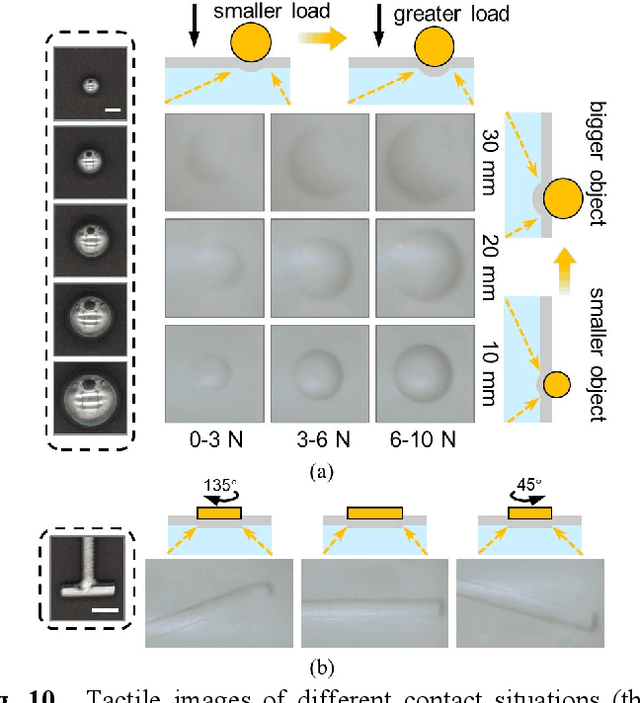

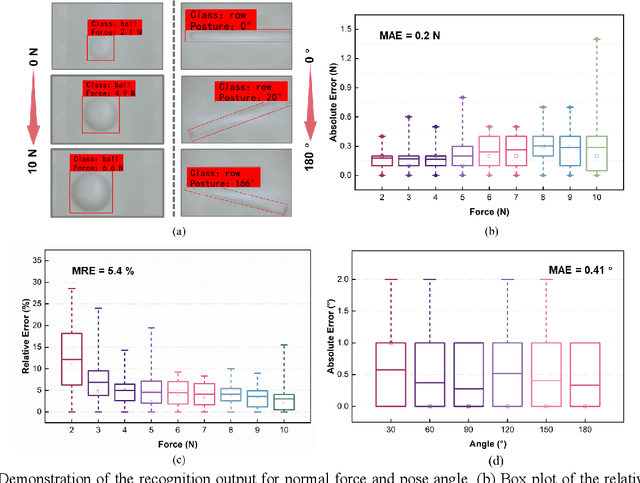

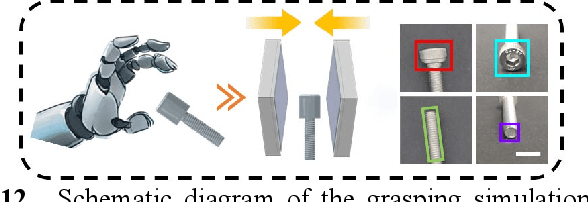

A Vision-Based Tactile Sensing System for Multimodal Contact Information Perception via Neural Network

Oct 03, 2023

Abstract:In general, robotic dexterous hands are equipped with various sensors for acquiring multimodal contact information such as position, force, and pose of the grasped object. This multi-sensor-based design adds complexity to the robotic system. In contrast, vision-based tactile sensors employ specialized optical designs to enable the extraction of tactile information across different modalities within a single system. Nonetheless, the decoupling design for different modalities in common systems is often independent. Therefore, as the dimensionality of tactile modalities increases, data processing and decoupling become more complex, which limits the application of such sensors to some extent. Here, we developed a multimodal sensing system based on a vision-based tactile sensor, which utilizes visual representations of tactile information to perceive the multimodal contact information of the grasped object. The visual representations contain extensive content that can be decoupled by a deep neural network to obtain multimodal contact information such as classification, position, posture, and force of the grasped object. The results show that the tactile sensing system can perceive multimodal tactile information using a single sensor and without separate data decoupling designs for each tactile modality, which reduces the complexity of the tactile system and demonstrates the potential for multimodal tactile integration in various fields such as biomedicine, biology, and robotics.

Federated Linear Bandit Learning via Over-the-Air Computation

Aug 28, 2023

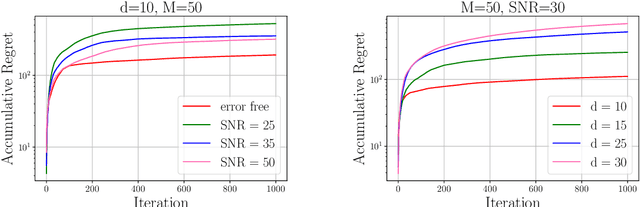

Abstract:In this paper, we investigate federated contextual linear bandit learning within a wireless system that comprises a server and multiple devices. Each device interacts with the environment, selects an action based on the received reward, and sends model updates to the server. The primary objective is to minimize cumulative regret across all devices within a finite time horizon. To reduce the communication overhead, devices communicate with the server via over-the-air computation (AirComp) over noisy fading channels, where the channel noise may distort the signals. In this context, we propose a customized federated linear bandits scheme, where each device transmits an analog signal, and the server receives a superposition of these signals distorted by channel noise. A rigorous mathematical analysis is conducted to determine the regret bound of the proposed scheme. Both theoretical analysis and numerical experiments demonstrate the competitive performance of our proposed scheme in terms of regret bounds in various settings.
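The core idea of over-the-air computation, that the server receives a noisy superposition of the devices' analog signals rather than each signal separately, can be sketched as follows. This is a minimal illustration under simplifying assumptions, not the scheme proposed in the paper: fading and power control are ignored, the noise is modeled as additive Gaussian, and the function name `aircomp_aggregate` is hypothetical.

```python
import random


def aircomp_aggregate(updates, noise_std, rng):
    """Return the sum of all device updates plus additive Gaussian noise.

    Models an idealized channel (assumption: no fading, perfect alignment),
    where simultaneous analog transmissions add up at the receiver.
    """
    dim = len(updates[0])
    received = []
    for j in range(dim):
        superposed = sum(u[j] for u in updates)              # signals add in the air
        received.append(superposed + rng.gauss(0.0, noise_std))  # channel noise
    return received


rng = random.Random(0)
# Three devices, each holding a 2-dimensional model update (made-up values).
updates = [[1.0, 2.0], [0.5, -1.0], [2.5, 0.0]]

noiseless = aircomp_aggregate(updates, 0.0, rng)  # exact sum of the updates
noisy = aircomp_aggregate(updates, 0.1, rng)      # sum distorted by noise
print(noiseless, noisy)
```

The communication saving comes from the fact that one channel use delivers the aggregate regardless of the number of devices; the price is that the server only ever sees the distorted sum, which is what a regret analysis in this setting has to account for.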

TROPHY: A Topologically Robust Physics-Informed Tracking Framework for Tropical Cyclones

Jul 28, 2023

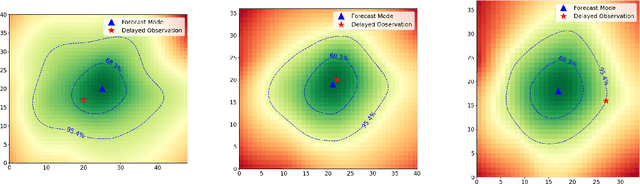

Abstract:Tropical cyclones (TCs) are among the most destructive weather systems. Realistically and efficiently detecting and tracking TCs is critical for assessing their impacts and risks. Recently, a multilevel robustness framework has been introduced to study the critical points of time-varying vector fields. The framework quantifies the robustness of critical points across varying neighborhoods. By relating the multilevel robustness with critical point tracking, the framework has demonstrated its potential in cyclone tracking. An advantage is that it identifies cyclonic features using only 2D wind vector fields, which is encouraging as most tracking algorithms require multiple dynamic and thermodynamic variables at different altitudes. A disadvantage is that the framework does not scale well computationally for datasets containing a large number of cyclones. This paper introduces a topologically robust physics-informed tracking framework (TROPHY) for TC tracking. The main idea is to integrate physical knowledge of TCs to drastically improve the computational efficiency of the multilevel robustness framework for large-scale climate datasets. First, during preprocessing, we propose a physics-informed feature selection strategy to filter out 90% of critical points that are short-lived and have low stability, thus preserving good candidates for TC tracking. Second, during in-processing, we impose constraints during the multilevel robustness computation to focus only on physics-informed neighborhoods of TCs. We apply TROPHY to 30 years of 2D wind fields from reanalysis data in ERA5 and generate a number of TC tracks. In comparison with the observed tracks, we demonstrate that TROPHY can capture TC characteristics that are comparable to and sometimes even better than those from a well-validated TC tracking algorithm that requires multiple dynamic and thermodynamic scalar fields.

Green Federated Learning Over Cloud-RAN with Limited Fronthaul Capacity and Quantized Neural Networks

Apr 30, 2023

Abstract:In this paper, we propose an energy-efficient federated learning (FL) framework for energy-constrained devices over a cloud radio access network (Cloud-RAN), where each device adopts quantized neural networks (QNNs) to train a local FL model and transmits the quantized model parameters to the remote radio heads (RRHs). Each RRH receives the signals from devices over the wireless link and forwards the signals to the server via the fronthaul link. We rigorously develop an energy consumption model for the local training at devices through the use of QNNs and communication models over Cloud-RAN. Based on the proposed energy consumption model, we formulate an energy minimization problem that optimizes the fronthaul rate allocation, user transmit power allocation, and QNN precision levels while satisfying the limited fronthaul capacity constraint and ensuring the convergence of the proposed FL model to a target accuracy. To solve this problem, we analyze the convergence rate and propose efficient algorithms based on the alternative optimization technique. Simulation results show that the proposed FL framework can significantly reduce energy consumption compared to other conventional approaches. We draw the conclusion that the proposed framework holds great potential for achieving a sustainable and environmentally friendly FL in Cloud-RAN.
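To make the precision-versus-payload trade-off concrete, the sketch below applies a plain uniform quantizer to a handful of weights and counts the bits a device would have to transmit. It is an illustration only, under stated assumptions: the abstract does not specify the quantizer, so uniform rounding on [-w_max, w_max] is swapped in here, and the names `quantize` and `payload_bits` are hypothetical. The paper's energy model and optimization are not reproduced.

```python
def quantize(weights, bits, w_max=1.0):
    """Uniformly quantize weights onto 2**bits levels spanning [-w_max, w_max].

    Assumption: a simple uniform quantizer, not the paper's QNN scheme.
    """
    steps = 2 ** bits - 1          # number of intervals between levels
    step = 2 * w_max / steps       # spacing between adjacent levels
    out = []
    for w in weights:
        clipped = max(-w_max, min(w_max, w))       # clip to the valid range
        index = round((clipped + w_max) / step)    # nearest level index
        out.append(-w_max + index * step)
    return out


def payload_bits(num_params, bits):
    """Bits needed to send num_params parameters at the given precision."""
    return num_params * bits


weights = [0.31, -0.77, 0.05, 1.40]   # made-up example weights
for bits in (2, 4, 8):
    q = quantize(weights, bits)
    print(bits, [round(v, 3) for v in q], payload_bits(len(weights), bits))
```

Lower precision shrinks the payload linearly in the bit width while the worst-case rounding error per in-range weight grows to half a quantization step, which is the kind of tension a precision-level optimization has to balance against a convergence target.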

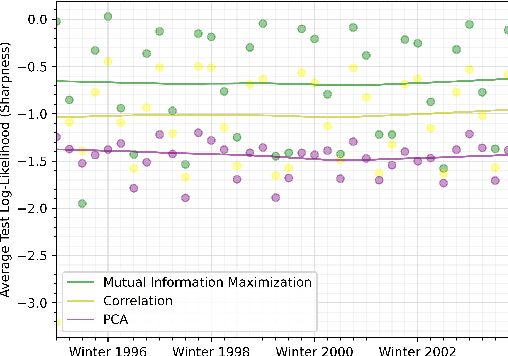

A Deep Learning Approach to Probabilistic Forecasting of Weather

Mar 24, 2022

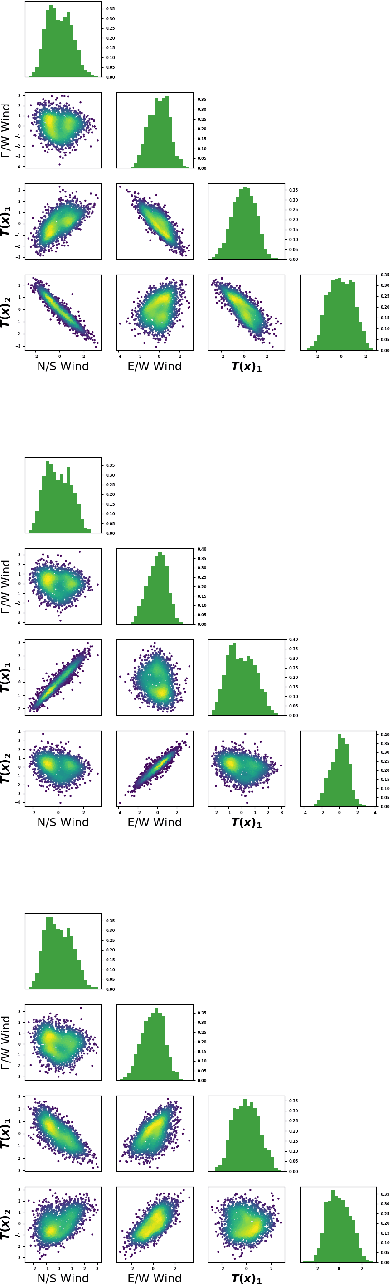

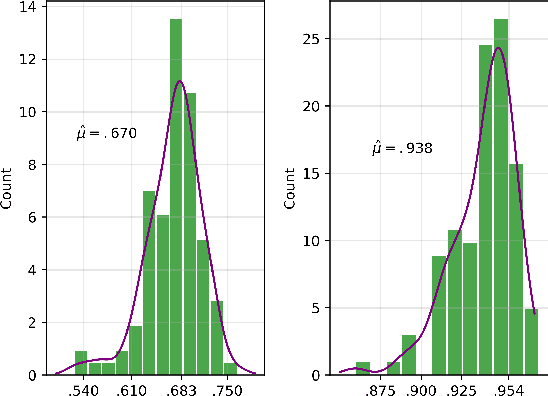

Abstract:We discuss an approach to probabilistic forecasting based on two chained machine-learning steps: a dimensional reduction step that learns a reduction map of predictor information to a low-dimensional space in a manner designed to preserve information about forecast quantities; and a density estimation step that uses the probabilistic machine learning technique of normalizing flows to compute the joint probability density of reduced predictors and forecast quantities. This joint density is then renormalized to produce the conditional forecast distribution. In this method, probabilistic calibration testing plays the role of a regularization procedure, preventing overfitting in the second step, while effective dimensional reduction from the first step is the source of forecast sharpness. We verify the method using a 22-year 1-hour cadence time series of Weather Research and Forecasting (WRF) simulation data of surface wind on a grid.
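The renormalization step mentioned above is the standard identity relating a joint density to a conditional one. As a sketch, with notation that is assumed here rather than taken from the paper ($z$ for the reduced predictors produced by the first step, $y$ for the forecast quantities, and $p(z, y)$ for the joint density estimated by the normalizing flow):

```latex
p(y \mid z) \;=\; \frac{p(z, y)}{p(z)}
          \;=\; \frac{p(z, y)}{\displaystyle\int p(z, y')\, \mathrm{d}y'}
```

The denominator is the marginal density of the reduced predictors, so for a fixed $z$ the right-hand side integrates to one over $y$ and is a valid forecast distribution.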

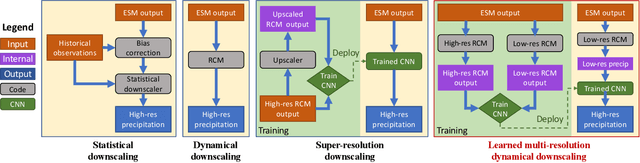

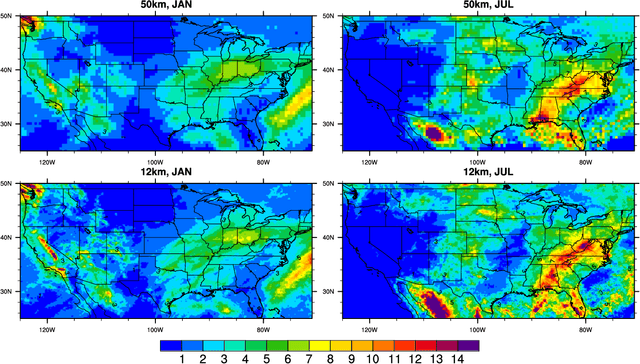

Fast and accurate learned multiresolution dynamical downscaling for precipitation

Jan 18, 2021

Abstract:This study develops a neural network-based approach for emulating high-resolution modeled precipitation data with comparable statistical properties but at greatly reduced computational cost. The key idea is to use a combination of low- and high-resolution simulations to train a neural network to map from the former to the latter. Specifically, we define two types of CNNs, one that stacks variables directly and one that encodes each variable before stacking, and we train each CNN type both with a conventional loss function, such as mean square error (MSE), and with a conditional generative adversarial network (CGAN), for a total of four CNN variants. We compare the four new CNN-derived high-resolution precipitation results with precipitation generated from original high-resolution simulations, a bilinear interpolator, and the state-of-the-art CNN-based super-resolution (SR) technique. Results show that the SR technique produces results similar to those of the bilinear interpolator, with smoother spatial and temporal distributions and smaller data variabilities and extremes than the original high-resolution simulations. While the new CNNs trained by MSE generate better results over some regions than the interpolator and SR technique do, their predictions are still not as close to the original high-resolution simulations. The CNNs trained by CGAN generate more realistic and physically reasonable results, better capturing not only data variability in time and space but also extremes such as intense and long-lasting storms. The newly proposed CNN-based downscaling approach can downscale precipitation from 50 km to 12 km in 14 min for 30 years once the network is trained (training takes 4 hours using 1 GPU), while conventional dynamical downscaling would take 1 month using 600 CPU cores to generate simulations at a resolution of 12 km over the contiguous United States.
