Fan Fei

Modular Safety Guardrails Are Necessary for Foundation-Model-Enabled Robots in the Real World

Feb 03, 2026

Abstract:The integration of foundation models (FMs) into robotics has accelerated real-world deployment, while introducing new safety challenges arising from open-ended semantic reasoning and embodied physical action. These challenges require safety notions beyond physical constraint satisfaction. In this paper, we characterize FM-enabled robot safety along three dimensions: action safety (physical feasibility and constraint compliance), decision safety (semantic and contextual appropriateness), and human-centered safety (conformance to human intent, norms, and expectations). We argue that existing approaches, including static verification, monolithic controllers, and end-to-end learned policies, are insufficient in settings where tasks, environments, and human expectations are open-ended, long-tailed, and subject to adaptation over time. To address this gap, we propose modular safety guardrails, consisting of monitoring (evaluation) and intervention layers, as an architectural foundation for comprehensive safety across the autonomy stack. Beyond modularity, we highlight possible cross-layer co-design opportunities through representation alignment and conservatism allocation to enable faster, less conservative, and more effective safety enforcement. We call on the community to explore richer guardrail modules and principled co-design strategies to advance safe real-world physical AI deployment.

PacTure: Efficient PBR Texture Generation on Packed Views with Visual Autoregressive Models

May 28, 2025

Abstract:We present PacTure, a novel framework for generating physically-based rendering (PBR) material textures from an untextured 3D mesh, a text description, and an optional image prompt. Early 2D generation-based texturing approaches generate textures sequentially from different views, resulting in long inference times and globally inconsistent textures. More recent approaches adopt multi-view generation with cross-view attention to enhance global consistency, which, however, limits the resolution for each view. In response to these weaknesses, we first introduce view packing, a novel technique that significantly increases the effective resolution for each view during multi-view generation without imposing additional inference cost, by formulating the arrangement of multi-view maps as a 2D rectangle bin packing problem. In contrast to UV mapping, it preserves the spatial proximity essential for image generation and maintains full compatibility with current 2D generative models. To further reduce the inference cost, we enable fine-grained control and multi-domain generation within the next-scale prediction autoregressive framework to create an efficient multi-view multi-domain generative backbone. Extensive experiments show that PacTure outperforms state-of-the-art methods in both quality of generated PBR textures and efficiency in training and inference.

VidLA: Video-Language Alignment at Scale

Mar 21, 2024

Abstract:In this paper, we propose VidLA, an approach for video-language alignment at scale. There are two major limitations of previous video-language alignment approaches. First, they do not capture both short-range and long-range temporal dependencies and typically employ complex hierarchical deep network architectures that are hard to integrate with existing pretrained image-text foundation models. To effectively address this limitation, we instead keep the network architecture simple and use a set of data tokens that operate at different temporal resolutions in a hierarchical manner, accounting for the temporally hierarchical nature of videos. By employing a simple two-tower architecture, we are able to initialize our video-language model with pretrained image-text foundation models, thereby boosting the final performance. Second, existing video-language alignment works struggle due to the lack of semantically aligned large-scale training data. To overcome it, we leverage recent LLMs to curate the largest video-language dataset to date with better visual grounding. Furthermore, unlike existing video-text datasets which only contain short clips, our dataset is enriched with video clips of varying durations to aid our temporally hierarchical data tokens in extracting better representations at varying temporal scales. Overall, empirical results show that our proposed approach surpasses state-of-the-art methods on multiple retrieval benchmarks, especially on longer videos, and performs competitively on classification benchmarks.

Bio-Inspired Adversarial Attack Against Deep Neural Networks

Jun 30, 2021

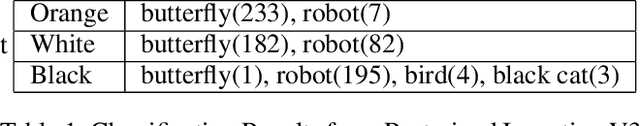

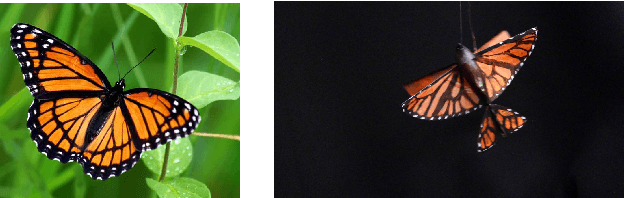

Abstract:The paper develops a new adversarial attack against deep neural networks (DNN), based on applying bio-inspired design to moving physical objects. To the best of our knowledge, this is the first work to introduce physical attacks with a moving object. Instead of following the dominating attack strategy in the existing literature, i.e., to introduce minor perturbations to a digital input or a stationary physical object, we show two new successful attack strategies in this paper. We show that by superimposing several patterns onto one physical object, a DNN becomes confused and picks one of the patterns to assign a class label. Our experiment with three flapping wing robots demonstrates the possibility of developing an adversarial camouflage to cause a targeted mistake by DNN. We also show that certain motion can reduce the dependency among consecutive frames in a video and make an object detector "blind", i.e., unable to detect that an object exists in the video. Hence in a successful physical attack against DNN, targeted motion against the system should also be considered.

* Published in SafeAI 2020

Acting Is Seeing: Navigating Tight Space Using Flapping Wings

Mar 01, 2019

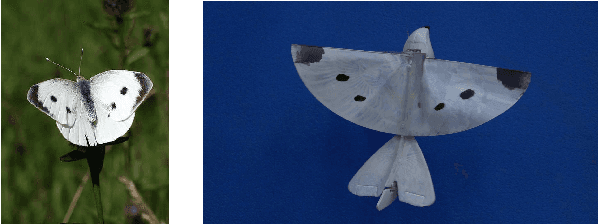

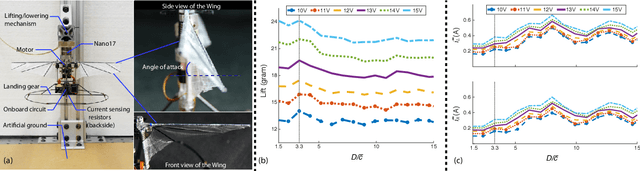

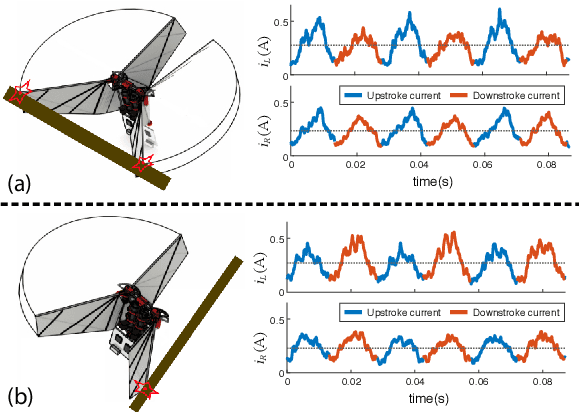

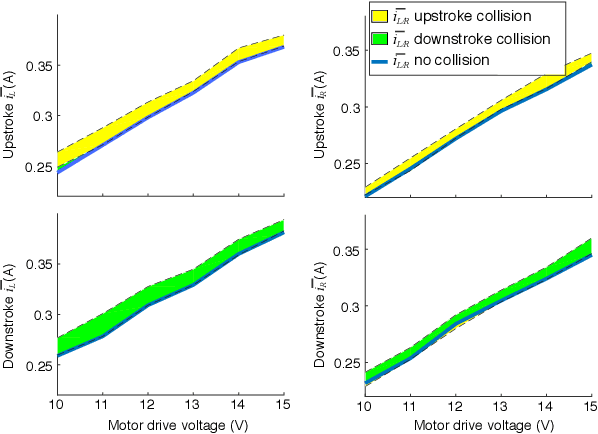

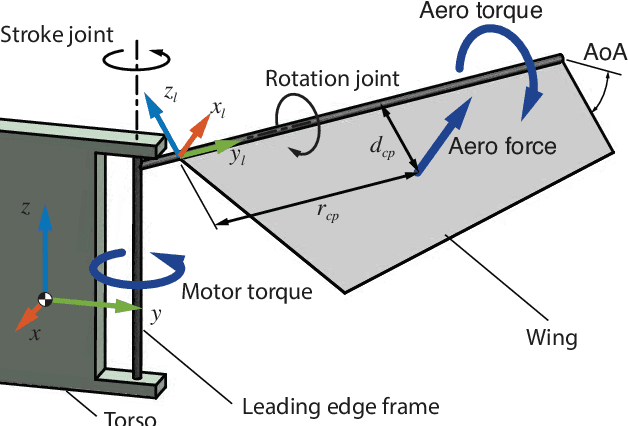

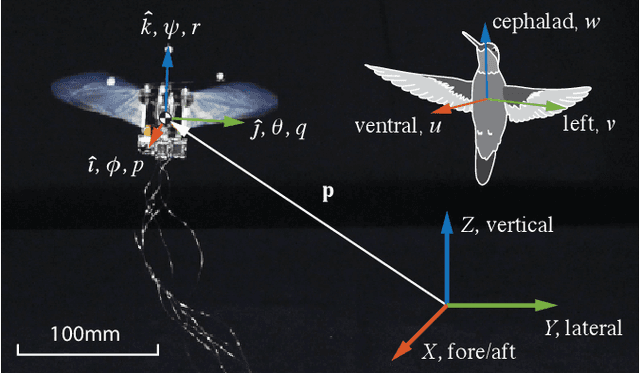

Abstract:Wings of flying animals can not only generate lift and control torques but also can sense their surroundings. Such dual functions of sensing and actuation coupled in one element are particularly useful for small sized bio-inspired robotic flyers, whose weight, size, and power are under stringent constraint. In this work, we present the first flapping-wing robot using its flapping wings for environmental perception and navigation in tight space, without the need for any visual feedback. As the test platform, we introduce the Purdue Hummingbird, a flapping-wing robot with 17cm wingspan and 12 grams weight, with a pair of 30-40Hz flapping wings driven by only two actuators. By interpreting the wing loading feedback and its variations, the vehicle can detect the presence of environmental changes such as grounds, walls, stairs, obstacles and wind gust. The instantaneous wing loading can be obtained through the measurements and interpretation of the current feedback by the motors that actuate the wings. The effectiveness of the proposed approach is experimentally demonstrated on several challenging flight tasks without vision: terrain following, wall following and going through a narrow corridor. To ensure flight stability, a robust controller was designed for handling unforeseen disturbances during the flight. Sensing and navigating one's environment through actuator loading is a promising method for mobile robots, and it can serve as an alternative or complementary method to visual perception.

Flappy Hummingbird: An Open Source Dynamic Simulation of Flapping Wing Robots and Animals

Feb 25, 2019

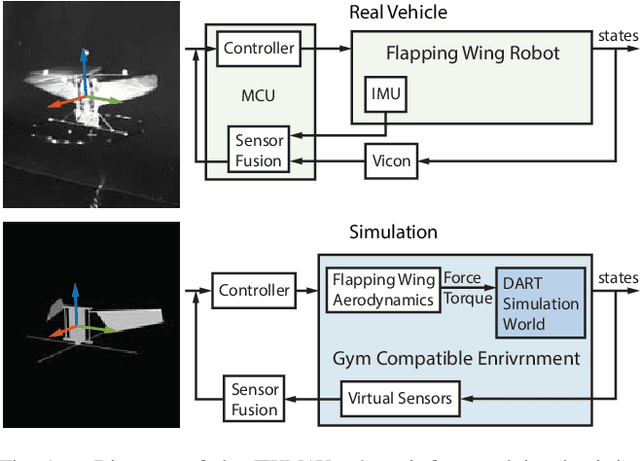

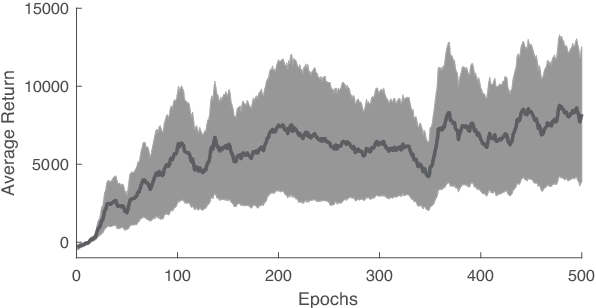

Abstract:Insects and hummingbirds exhibit extraordinary flight capabilities and can simultaneously master seemingly conflicting goals: stable hovering and aggressive maneuvering, unmatched by small scale man-made vehicles. Flapping Wing Micro Air Vehicles (FWMAVs) hold great promise for closing this performance gap. However, design and control of such systems remain challenging due to various constraints. Here, we present an open source high fidelity dynamic simulation for FWMAVs to serve as a testbed for the design, optimization and flight control of FWMAVs. For simulation validation, we recreated the hummingbird-scale robot developed in our lab in the simulation. System identification was performed to obtain the model parameters. The force generation, open-loop and closed-loop dynamic response between simulated and experimental flights were compared and validated. The unsteady aerodynamics and the highly nonlinear flight dynamics present challenging control problems for conventional and learning control algorithms such as Reinforcement Learning. The interface of the simulation is fully compatible with OpenAI Gym environment. As a benchmark study, we present a linear controller for hovering stabilization and a Deep Reinforcement Learning control policy for goal-directed maneuvering. Finally, we demonstrate direct simulation-to-real transfer of both control policies onto the physical robot, further demonstrating the fidelity of the simulation.

Learning Extreme Hummingbird Maneuvers on Flapping Wing Robots

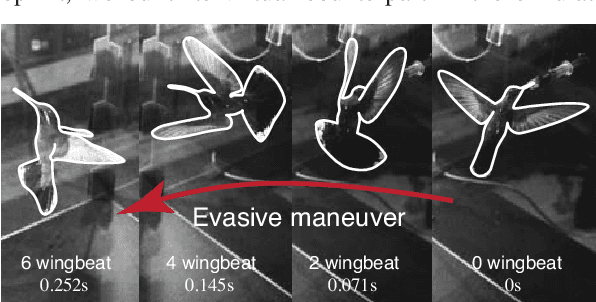

Feb 25, 2019

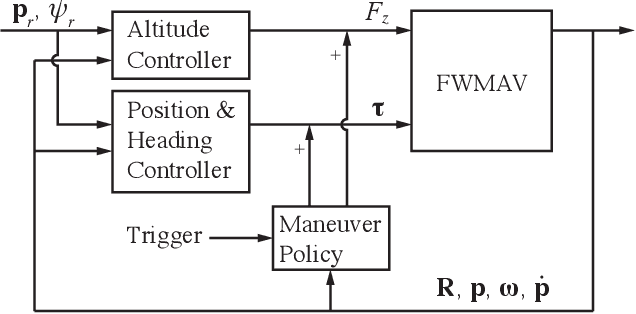

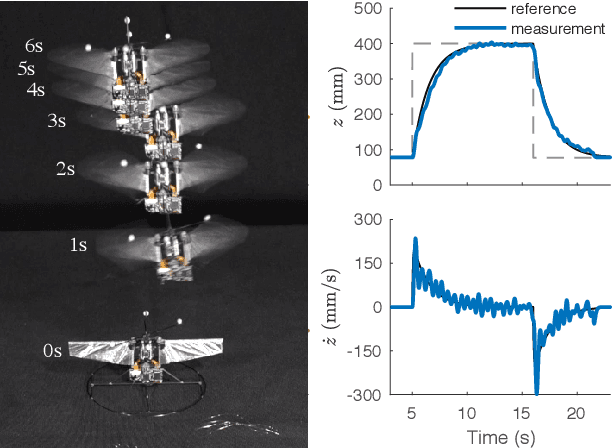

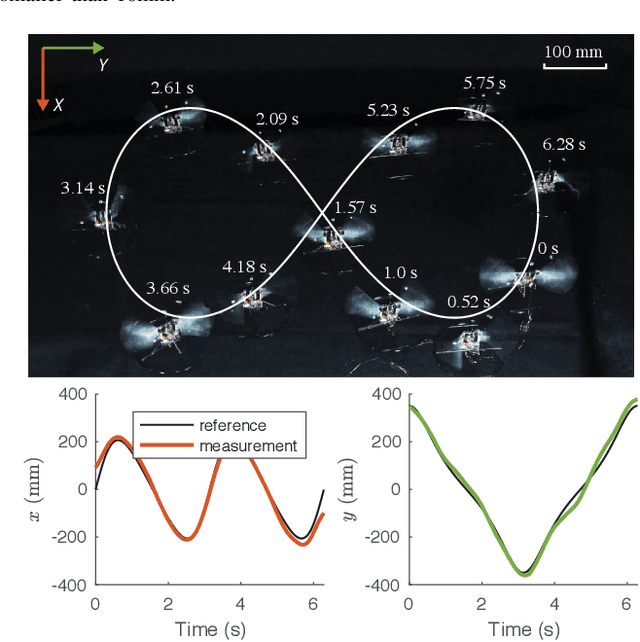

Abstract:Biological studies show that hummingbirds can perform extreme aerobatic maneuvers during fast escape. Given a sudden looming visual stimulus at hover, a hummingbird initiates a fast backward translation coupled with a 180-degree yaw turn, which is followed by instant posture stabilization in just under 10 wingbeats. Considering the wingbeat frequency of 40Hz, this aggressive maneuver is carried out in just 0.2 seconds. Inspired by the hummingbirds' near-maximal performance during such extreme maneuvers, we developed a flight control strategy and experimentally demonstrated that such maneuverability can be achieved by an at-scale 12-gram hummingbird robot equipped with just two actuators. The proposed hybrid control policy combines model-based nonlinear control with model-free reinforcement learning. We use model-based nonlinear control for nominal flight control, as the dynamic model is relatively accurate for these conditions. However, during extreme maneuvers, the modeling error becomes unmanageable. A model-free reinforcement learning policy trained in simulation was optimized to 'destabilize' the system and maximize the performance during maneuvering. The hybrid policy manifests a maneuver that is close to that observed in hummingbirds. Direct simulation-to-real transfer is achieved, demonstrating the hummingbird-like fast evasive maneuvers on the at-scale hummingbird robot.

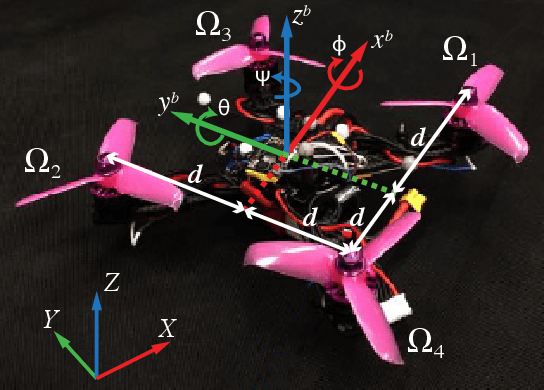

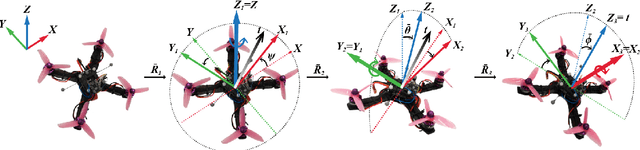

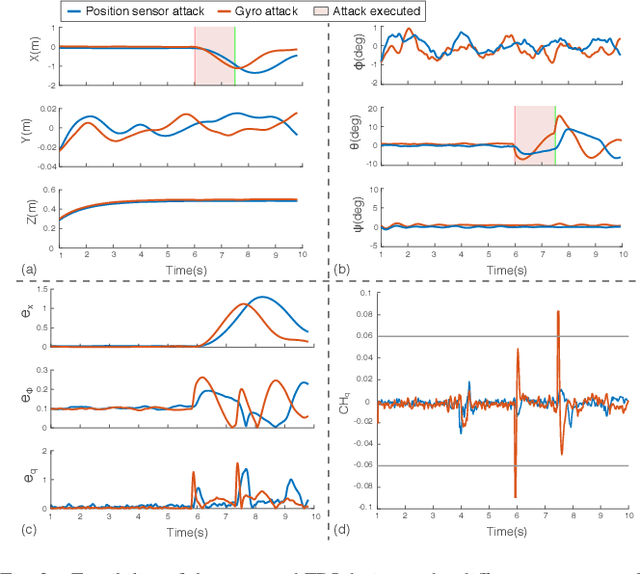

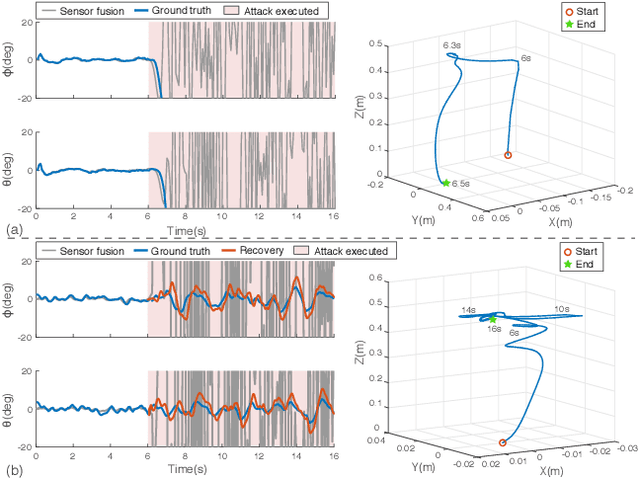

Redundancy-Free UAV Sensor Fault Isolation And Recovery

Nov 30, 2018

Abstract:The sensory system of unmanned aerial vehicles (UAVs) plays an important role in flight safety. Thus, any sensor fault/failure can have potentially catastrophic effects on vehicle control. Recent advances in adversarial studies have demonstrated successful sensing fault generation by targeting the physical vulnerabilities of the sensors. This poses new security challenges for sensor fault detection and isolation (FDI) and fault recovery (FR) research because the conventional redundancy-based fault-tolerant design is not effective against such faults. To address these challenges, we present a redundancy-free method for UAV sensor FDI and FR. In the FDI design, we used a basic state estimator for a rough early warning of faults. We then refine the design by considering the unmeasurable actuator state and modeling uncertainties. Under such novel strategies, the proposed method achieves fine-grained fault isolation. Based on this method, we further designed a redundancy-free FR method by using complementary sensor estimations. In particular, position and attitude feedback can provide backup feedback for each other through geometric correlation. The effectiveness of our approach is validated through simulation of several challenging sensor failure scenarios. The recovery performance is experimentally demonstrated by a challenging flight task: restoring control after completely losing attitude sensory feedback. With the protection of FDI and FR, flight safety is ensured. This UAV security enhancement method is promising to be generalized for other types of vehicles and can serve as a complement to other fault-tolerant methodologies.
