Dongwei Ren

RoomEditor++: A Parameter-Sharing Diffusion Architecture for High-Fidelity Furniture Synthesis

Dec 19, 2025

Abstract:Virtual furniture synthesis, which seamlessly integrates reference objects into indoor scenes while maintaining geometric coherence and visual realism, holds substantial promise for home design and e-commerce applications. However, this field remains underexplored due to the scarcity of reproducible benchmarks and the limitations of existing image composition methods in achieving high-fidelity furniture synthesis while preserving background integrity. To overcome these challenges, we first present RoomBench++, a comprehensive and publicly available benchmark dataset tailored for this task. It consists of 112,851 training pairs and 1,832 testing pairs drawn from both real-world indoor videos and realistic home design renderings, thereby supporting robust training and evaluation under practical conditions. Then, we propose RoomEditor++, a versatile diffusion-based architecture featuring a parameter-sharing dual diffusion backbone, which is compatible with both U-Net and DiT architectures. This design unifies the feature extraction and inpainting processes for reference and background images. Our in-depth analysis reveals that the parameter-sharing mechanism enforces aligned feature representations, facilitating precise geometric transformations, texture preservation, and seamless integration. Extensive experiments validate that RoomEditor++ is superior to state-of-the-art approaches in terms of quantitative metrics, qualitative assessments, and human preference studies, while highlighting its strong generalization to unseen indoor scenes and general scenes without task-specific fine-tuning. The dataset and source code are available at https://github.com/stonecutter-21/roomeditor.

Unleashing Degradation-Carrying Features in Symmetric U-Net: Simpler and Stronger Baselines for All-in-One Image Restoration

Dec 11, 2025

Abstract:All-in-one image restoration aims to handle diverse degradations (e.g., noise, blur, adverse weather) within a unified framework, yet existing methods increasingly rely on complex architectures (e.g., Mixture-of-Experts, diffusion models) and elaborate degradation prompt strategies. In this work, we reveal a critical insight: well-crafted feature extraction inherently encodes degradation-carrying information, and a symmetric U-Net architecture is sufficient to unleash these cues effectively. By aligning feature scales across encoder-decoder and enabling streamlined cross-scale propagation, our symmetric design preserves intrinsic degradation signals robustly, rendering simple additive fusion in skip connections sufficient for state-of-the-art performance. Our primary baseline, SymUNet, is built on this symmetric U-Net and achieves better results across benchmark datasets than existing approaches while reducing computational cost. We further propose a semantic enhanced variant, SE-SymUNet, which integrates direct semantic injection from frozen CLIP features via simple cross-attention to explicitly amplify degradation priors. Extensive experiments on several benchmarks validate the superiority of our methods. Both baselines SymUNet and SE-SymUNet establish simpler and stronger foundations for future advancements in all-in-one image restoration. The source code is available at https://github.com/WenlongJiao/SymUNet.

BasicAVSR: Arbitrary-Scale Video Super-Resolution via Image Priors and Enhanced Motion Compensation

Oct 30, 2025

Abstract:Arbitrary-scale video super-resolution (AVSR) aims to enhance the resolution of video frames, potentially at various scaling factors, which presents several challenges regarding spatial detail reproduction, temporal consistency, and computational complexity. In this paper, we propose a strong baseline BasicAVSR for AVSR by integrating four key components: 1) adaptive multi-scale frequency priors generated from image Laplacian pyramids, 2) a flow-guided propagation unit to aggregate spatiotemporal information from adjacent frames, 3) a second-order motion compensation unit for more accurate spatial alignment of adjacent frames, and 4) a hyper-upsampling unit to generate scale-aware and content-independent upsampling kernels. To meet diverse application demands, we instantiate three propagation variants: (i) a unidirectional RNN unit for strictly online inference, (ii) a unidirectional RNN unit empowered with a limited lookahead that tolerates a small output delay, and (iii) a bidirectional RNN unit designed for offline tasks where computational resources are less constrained. Experimental results demonstrate the effectiveness and adaptability of our model across these different scenarios. Through extensive experiments, we show that BasicAVSR significantly outperforms existing methods in terms of super-resolution quality, generalization ability, and inference speed. Our work not only advances the state-of-the-art in AVSR but also extends its core components to multiple frameworks for diverse scenarios. The code is available at https://github.com/shangwei5/BasicAVSR.

Motion-Aware Adaptive Pixel Pruning for Efficient Local Motion Deblurring

Jul 10, 2025

Abstract:Local motion blur in digital images originates from the relative motion between dynamic objects and static imaging systems during exposure. Existing deblurring methods face significant challenges in addressing this problem due to their inefficient allocation of computational resources and inadequate handling of spatially varying blur patterns. To overcome these limitations, we first propose a trainable mask predictor that identifies blurred regions in the image. During training, we employ blur masks to exclude sharp regions. For inference optimization, we implement structural reparameterization by converting $3\times 3$ convolutions to computationally efficient $1\times 1$ convolutions, enabling pixel-level pruning of sharp areas to reduce computation. Second, we develop an intra-frame motion analyzer that translates relative pixel displacements into motion trajectories, establishing adaptive guidance for region-specific blur restoration. Our method is trained end-to-end using a combination of reconstruction loss, reblur loss, and mask loss guided by annotated blur masks. Extensive experiments demonstrate superior performance over state-of-the-art methods on both local and global blur datasets while reducing FLOPs by 49% compared to SOTA models (e.g., LMD-ViT). The source code is available at https://github.com/shangwei5/M2AENet.

Efficient RAW Image Deblurring with Adaptive Frequency Modulation

May 30, 2025

Abstract:Image deblurring plays a crucial role in enhancing visual clarity across various applications. Although most deep learning approaches primarily focus on sRGB images, which inherently lose critical information during the image signal processing pipeline, RAW images, being unprocessed and linear, possess superior restoration potential but remain underexplored. Deblurring RAW images presents unique challenges, particularly in handling frequency-dependent blur while maintaining computational efficiency. To address these issues, we propose Frequency Enhanced Network (FrENet), a framework specifically designed for RAW-to-RAW deblurring that operates directly in the frequency domain. We introduce a novel Adaptive Frequency Positional Modulation module, which dynamically adjusts frequency components according to their spectral positions, thereby enabling precise control over the deblurring process. Additionally, frequency domain skip connections are adopted to further preserve high-frequency details. Experimental results demonstrate that FrENet surpasses state-of-the-art deblurring methods in RAW image deblurring, achieving significantly better restoration quality while maintaining high efficiency in terms of reduced MACs. Furthermore, FrENet's adaptability enables it to be extended to sRGB images, where it delivers comparable or superior performance compared to methods specifically designed for sRGB data. The code will be available at https://github.com/WenlongJiao/FrENet.

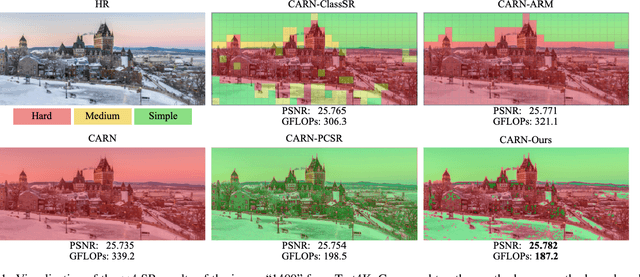

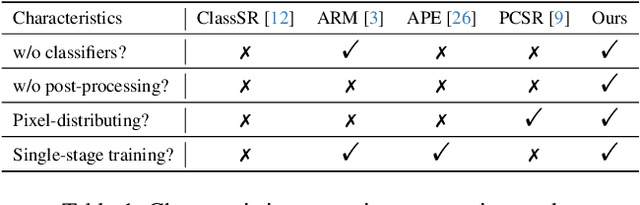

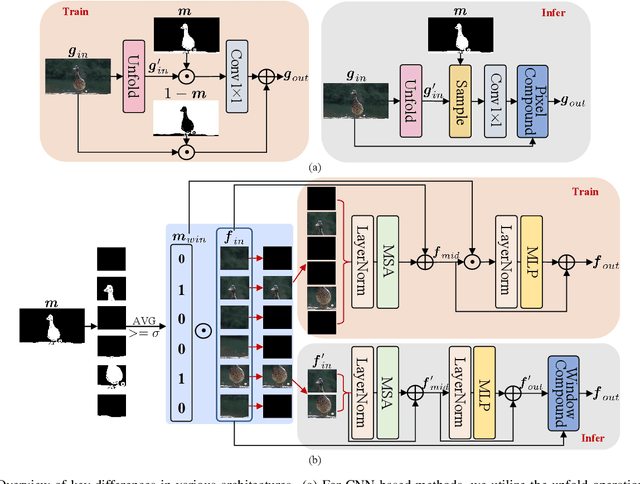

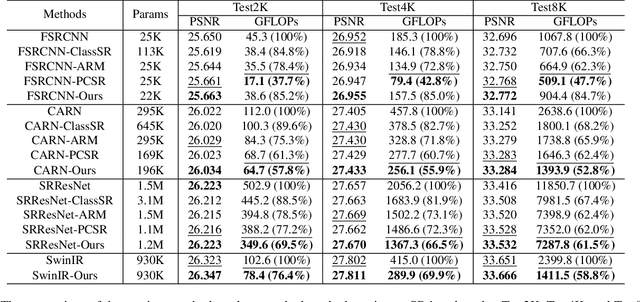

High-Frequency Prior-Driven Adaptive Masking for Accelerating Image Super-Resolution

May 11, 2025

Abstract:The primary challenge in accelerating image super-resolution lies in reducing computation while maintaining performance and adaptability. Motivated by the observation that high-frequency regions (e.g., edges and textures) are most critical for reconstruction, we propose a training-free adaptive masking module for acceleration that dynamically focuses computation on these challenging areas. Specifically, our method first extracts high-frequency components via Gaussian blur subtraction and adaptively generates binary masks using K-means clustering to identify regions requiring intensive processing. Our method can be easily integrated with both CNNs and Transformers. For CNN-based architectures, we replace standard $3 \times 3$ convolutions with an unfold operation followed by $1 \times 1$ convolutions, enabling pixel-wise sparse computation guided by the mask. For Transformer-based models, we partition the mask into non-overlapping windows and selectively process tokens based on their average values. During inference, unnecessary pixels or windows are pruned, significantly reducing computation. Moreover, our method supports dilation-based mask adjustment to control the processing scope without retraining, and is robust to unseen degradations (e.g., noise, compression). Extensive experiments on benchmarks demonstrate that our method reduces FLOPs by 24-43% for state-of-the-art models (e.g., CARN, SwinIR) while achieving comparable or better quantitative metrics. The source code is available at https://github.com/shangwei5/AMSR.
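
The masking idea in this abstract can be sketched in a few lines of dependency-free Python. This is only an illustrative toy under stated assumptions, not the authors' implementation: the fixed 3x3 kernel `GAUSS3`, the replicate padding, the two-cluster scalar K-means in `two_means_mask`, and the choice to leave skipped pixels unchanged in `masked_conv3` are all assumptions made here, and every function name is hypothetical.

```python
GAUSS3 = [1, 2, 1, 2, 4, 2, 1, 2, 1]  # assumed 3x3 Gaussian-like weights, sum = 16

def unfold3(img, y, x):
    """Gather the 3x3 neighborhood of (y, x) into a 9-vector (replicate padding)."""
    h, w = len(img), len(img[0])
    return [img[min(max(y + dy, 0), h - 1)][min(max(x + dx, 0), w - 1)]
            for dy in (-1, 0, 1) for dx in (-1, 0, 1)]

def conv3_at(img, kernel9, y, x):
    """A 3x3 filter at one pixel, written as a dot product over the unfolded patch."""
    return sum(wt * v for wt, v in zip(kernel9, unfold3(img, y, x)))

def high_frequency(img):
    """|image - blurred image|: large near edges, zero in flat areas."""
    h, w = len(img), len(img[0])
    return [[abs(img[y][x] - conv3_at(img, GAUSS3, y, x) / 16.0) for x in range(w)]
            for y in range(h)]

def two_means_mask(values, iters=20):
    """Cluster scalar responses into two groups; 1 marks the high-response cluster."""
    flat = [v for row in values for v in row]
    lo, hi = min(flat), max(flat)
    for _ in range(iters):
        low = [v for v in flat if abs(v - lo) <= abs(v - hi)]
        high = [v for v in flat if abs(v - lo) > abs(v - hi)]
        if not high:  # constant input: nothing to mark
            break
        new_lo, new_hi = sum(low) / len(low), sum(high) / len(high)
        if (new_lo, new_hi) == (lo, hi):
            break
        lo, hi = new_lo, new_hi
    return [[1 if abs(v - lo) > abs(v - hi) else 0 for v in row] for row in values]

def masked_conv3(img, kernel9, mask):
    """Evaluate the filter only where mask == 1; other pixels are copied through
    (an assumption for illustration, not a claim about the paper)."""
    out = [row[:] for row in img]
    for y, row in enumerate(mask):
        for x, keep in enumerate(row):
            if keep:
                out[y][x] = conv3_at(img, kernel9, y, x)
    return out

# A 6x6 image with one vertical step edge between columns 2 and 3.
image = [[0.0, 0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(6)]
mask = two_means_mask(high_frequency(image))
smooth = [g / 16.0 for g in GAUSS3]
sparse_out = masked_conv3(image, smooth, mask)
print(mask[0])        # [0, 0, 1, 1, 0, 0]
print(sparse_out[0])  # [0.0, 0.0, 0.25, 0.75, 1.0, 1.0]
```

In this toy case only the 12 pixels adjacent to the edge (out of 36) are processed. Writing the filter as a dot product over an unfolded patch is what makes per-pixel skipping straightforward, since each output pixel becomes an independent computation.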

Generative Inbetweening through Frame-wise Conditions-Driven Video Generation

Dec 16, 2024

Abstract:Generative inbetweening aims to generate intermediate frame sequences by utilizing two key frames as input. Although remarkable progress has been made in video generation models, generative inbetweening still faces challenges in maintaining temporal stability due to the ambiguous interpolation path between two key frames. This issue becomes particularly severe when there is a large motion gap between input frames. In this paper, we propose a straightforward yet highly effective Frame-wise Conditions-driven Video Generation (FCVG) method that significantly enhances the temporal stability of interpolated video frames. Specifically, our FCVG provides an explicit condition for each frame, making it much easier to identify the interpolation path between two input frames and thus ensuring temporally stable production of visually plausible video frames. To achieve this, we suggest extracting matched lines from two input frames that can then be easily interpolated frame by frame, serving as frame-wise conditions seamlessly integrated into existing video generation models. In extensive evaluations covering diverse scenarios such as natural landscapes, complex human poses, camera movements and animations, existing methods often exhibit incoherent transitions across frames. In contrast, our FCVG demonstrates the capability to generate temporally stable videos using both linear and non-linear interpolation curves. Our project page and code are available at https://fcvg-inbetween.github.io/.
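
As a rough illustration of interpolating matched lines frame by frame, the sketch below blends the endpoints of already-matched line segments at evenly spaced time steps. It assumes the matching has been done elsewhere and that each line is a pair of 2D endpoints; the names `lerp_point`, `interpolate_lines`, and the `curve` parameter are hypothetical and do not come from the FCVG code.

```python
def lerp_point(p, q, t):
    """Linear blend between 2D points p (t = 0) and q (t = 1)."""
    return ((1 - t) * p[0] + t * q[0], (1 - t) * p[1] + t * q[1])

def interpolate_lines(lines_start, lines_end, num_frames, curve=lambda t: t):
    """Return one list of line segments per intermediate frame.

    lines_start[i] and lines_end[i] are assumed to be the same line seen in the
    two key frames, each given as ((x1, y1), (x2, y2)). `curve` remaps the
    normalized time, so a non-linear curve changes the pacing of the motion.
    """
    conditions = []
    for k in range(1, num_frames + 1):
        t = curve(k / (num_frames + 1))
        conditions.append([(lerp_point(a0, a1, t), lerp_point(b0, b1, t))
                           for (a0, b0), (a1, b1) in zip(lines_start, lines_end)])
    return conditions

# One horizontal line that moves from y = 0 to y = 8 between the key frames.
start = [((0.0, 0.0), (4.0, 0.0))]
end = [((0.0, 8.0), (4.0, 8.0))]

linear = interpolate_lines(start, end, 3)
eased = interpolate_lines(start, end, 3, curve=lambda t: t * t)

print([frame[0][0][1] for frame in linear])  # [2.0, 4.0, 6.0]
print([frame[0][0][1] for frame in eased])   # [0.5, 2.0, 4.5]
```

Each intermediate frame gets its own explicit set of lines, which is the sense in which the conditions are frame-wise; how such lines are rendered and fed into a video generation model is outside this sketch.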

Reblurring-Guided Single Image Defocus Deblurring: A Learning Framework with Misaligned Training Pairs

Sep 26, 2024

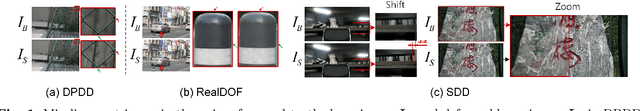

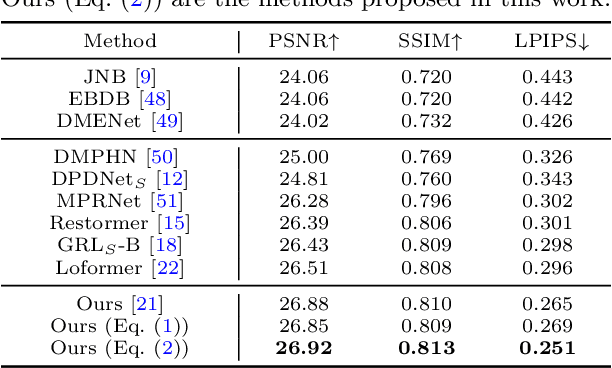

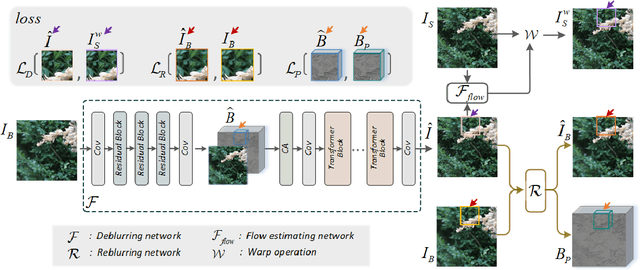

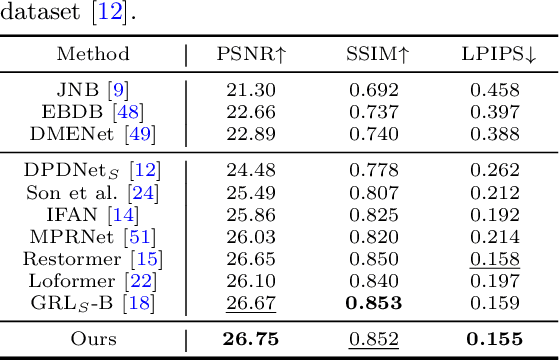

Abstract:For single image defocus deblurring, acquiring well-aligned training pairs (or training triplets), i.e., a defocus blurry image, an all-in-focus sharp image (and a defocus blur map), is an intricate task for the development of deblurring models. Existing image defocus deblurring methods typically rely on training data collected by specialized imaging equipment, presupposing that these pairs or triplets are perfectly aligned. However, in practical scenarios involving the collection of real-world data, direct acquisition of training triplets is infeasible, and training pairs inevitably encounter spatial misalignment issues. In this work, we introduce a reblurring-guided learning framework for single image defocus deblurring, enabling the learning of a deblurring network even with misaligned training pairs. Specifically, we first propose a baseline defocus deblurring network that utilizes spatially varying defocus blur map as degradation prior to enhance the deblurring performance. Then, to effectively learn the baseline defocus deblurring network with misaligned training pairs, our reblurring module ensures spatial consistency between the deblurred image, the reblurred image and the input blurry image by reconstructing spatially variant isotropic blur kernels. Moreover, the spatially variant blur derived from the reblurring module can serve as pseudo supervision for defocus blur map during training, interestingly transforming training pairs into training triplets. Additionally, we have collected a new dataset specifically for single image defocus deblurring (SDD) with typical misalignments, which not only substantiates our proposed method but also serves as a benchmark for future research.

SelfDRSC++: Self-Supervised Learning for Dual Reversed Rolling Shutter Correction

Aug 21, 2024

Abstract:Modern consumer cameras commonly employ the rolling shutter (RS) imaging mechanism, via which images are captured by scanning scenes row-by-row, resulting in RS distortion for dynamic scenes. To correct RS distortion, existing methods adopt a fully supervised learning manner that requires high framerate global shutter (GS) images as ground-truth for supervision. In this paper, we propose an enhanced Self-supervised learning framework for Dual reversed RS distortion Correction (SelfDRSC++). Firstly, we introduce a lightweight DRSC network that incorporates a bidirectional correlation matching block to refine the joint optimization of optical flows and corrected RS features, thereby improving correction performance while reducing network parameters. Subsequently, to effectively train the DRSC network, we propose a self-supervised learning strategy that ensures cycle consistency between input and reconstructed dual reversed RS images. The RS reconstruction in SelfDRSC++ can be interestingly formulated as a specialized instance of video frame interpolation, where each row in reconstructed RS images is interpolated from predicted GS images by utilizing RS distortion time maps. By achieving superior performance while simplifying the training process, SelfDRSC++ enables feasible one-stage self-supervised training. Additionally, besides start and end RS scanning time, SelfDRSC++ allows supervision of GS images at arbitrary intermediate scanning times, thus enabling the learned DRSC network to generate high framerate GS videos. The code and trained models are available at https://github.com/shangwei5/SelfDRSC_plusplus.

Thin-Plate Spline-based Interpolation for Animation Line Inbetweening

Aug 17, 2024

Abstract:Animation line inbetweening is a crucial step in animation production aimed at enhancing animation fluidity by predicting intermediate line arts between two key frames. However, existing methods face challenges in effectively addressing sparse pixels and significant motion in line art key frames. In the literature, Chamfer Distance (CD) is commonly adopted for evaluating inbetweening performance. Despite achieving favorable CD values, existing methods often generate interpolated frames with line disconnections, especially for scenarios involving large motion. Motivated by this observation, we propose a simple yet effective interpolation method for animation line inbetweening that adopts thin-plate spline-based transformation to estimate coarse motion more accurately by modeling the keypoint correspondence between two key frames, particularly for large motion scenarios. Building upon the coarse estimation, a motion refine module is employed to further enhance motion details before final frame interpolation using a simple UNet model. Furthermore, to more accurately assess the performance of animation line inbetweening, we refine the CD metric and introduce a novel metric termed Weighted Chamfer Distance, which demonstrates a higher consistency with visual perception quality. Additionally, we incorporate Earth Mover's Distance and conduct a user study to provide a more comprehensive evaluation. Our method outperforms existing approaches by delivering high-quality interpolation results with enhanced fluidity. The code is available at https://github.com/Tian-one/tps-inbetween.
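
To make the remark about favorable CD values despite line disconnections concrete, here is a minimal sketch of one common symmetric form of Chamfer Distance on binary line images. Normalization conventions vary between papers, so the averaging used here is an assumption, and this is not the Weighted Chamfer Distance proposed in the abstract; `chamfer_distance` and `line_pixels` are hypothetical helper names.

```python
import math

def line_pixels(binary_image):
    """Collect (row, col) coordinates of the non-zero pixels of a line drawing."""
    return [(r, c) for r, row in enumerate(binary_image)
            for c, v in enumerate(row) if v]

def chamfer_distance(points_a, points_b):
    """Sum of the two mean nearest-neighbor distances between non-empty point sets."""
    def one_sided(src, dst):
        return sum(min(math.dist(p, q) for q in dst) for p in src) / len(src)
    return one_sided(points_a, points_b) + one_sided(points_b, points_a)

ground_truth = [
    [0, 0, 0, 0],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
]
# Prediction A: the stroke is intact but shifted down by one row.
shifted = [
    [0, 0, 0, 0],
    [0, 0, 0, 0],
    [1, 1, 1, 1],
]
# Prediction B: the stroke is in the right place but broken in the middle.
broken = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
]

gt_points = line_pixels(ground_truth)
cd_shifted = chamfer_distance(line_pixels(shifted), gt_points)
cd_broken = chamfer_distance(line_pixels(broken), gt_points)
print(cd_shifted)  # 2.0
print(cd_broken)   # 0.25
```

In this toy case the disconnected stroke scores far better than the intact but shifted one, which is the kind of mismatch with visual quality that motivates complementing plain CD with other metrics.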
