Benjamin Kiefer

Learning-Based Distance Estimation for 360° Single-Sensor Setups

Jun 25, 2025

Abstract: Accurate distance estimation is a fundamental challenge in robotic perception, particularly in omnidirectional imaging, where traditional geometric methods struggle with lens distortions and environmental variability. In this work, we propose a neural network-based approach for monocular distance estimation using a single 360° fisheye lens camera. Unlike classical trigonometric techniques that rely on precise lens calibration, our method directly learns and infers the distance of objects from raw omnidirectional inputs, offering greater robustness and adaptability across diverse conditions. We evaluate our approach on three 360° datasets (LOAF, ULM360, and a newly captured dataset Boat360), each representing distinct environmental and sensor setups. Our experimental results demonstrate that the proposed learning-based model outperforms traditional geometry-based methods and other learning baselines in both accuracy and robustness. These findings highlight the potential of deep learning for real-time omnidirectional distance estimation, making our approach particularly well-suited for low-cost applications in robotics, autonomous navigation, and surveillance.

Lightweight Multi-Frame Integration for Robust YOLO Object Detection in Videos

Jun 25, 2025

Abstract: Modern image-based object detection models, such as YOLOv7, primarily process individual frames independently, thus ignoring valuable temporal context naturally present in videos. Meanwhile, existing video-based detection methods often introduce complex temporal modules, significantly increasing model size and computational complexity. In practical applications such as surveillance and autonomous driving, transient challenges including motion blur, occlusions, and abrupt appearance changes can severely degrade single-frame detection performance. To address these issues, we propose a straightforward yet highly effective strategy: stacking multiple consecutive frames as input to a YOLO-based detector while supervising only the output corresponding to a single target frame. This approach leverages temporal information with minimal modifications to existing architectures, preserving simplicity, computational efficiency, and real-time inference capability. Extensive experiments on the challenging MOT20Det and our BOAT360 datasets demonstrate that our method improves detection robustness, especially for lightweight models, effectively narrowing the gap between compact and heavy detection networks. Additionally, we contribute the BOAT360 benchmark dataset, comprising annotated fisheye video sequences captured from a boat, to support future research in multi-frame video object detection in challenging real-world scenarios.
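
The frame-stacking idea can be illustrated with a minimal sketch. The function name `stack_frames`, the window size, the choice of the last frame in the window as the target, and the clamping at the start of a clip are all assumptions made for illustration; the abstract does not specify them. Frames are plain nested lists (channels x height x width) so the example runs without any deep learning library.

```python
from typing import List, Sequence

Frame = List[List[List[float]]]  # channels x height x width


def stack_frames(frames: Sequence[Frame], target: int, num_frames: int = 3) -> Frame:
    """Concatenate `num_frames` consecutive frames ending at `target` along the channel axis.

    Indices before the start of the clip are clamped to frame 0 (an assumption),
    so every target frame yields an input with the same number of channels.
    """
    if not 0 <= target < len(frames):
        raise IndexError("target frame index out of range")
    stacked: Frame = []
    for offset in range(num_frames - 1, -1, -1):
        index = max(target - offset, 0)
        stacked.extend(frames[index])  # append this frame's channels
    return stacked


def make_frame(value: float, channels: int = 3, height: int = 2, width: int = 2) -> Frame:
    """Build a constant-valued toy frame for demonstration."""
    return [[[value] * width for _ in range(height)] for _ in range(channels)]


# Five toy RGB frames whose pixel values equal their frame index.
clip = [make_frame(float(i)) for i in range(5)]

# Input for target frame 3: channels of frames 1, 2, 3 (9 channels in total).
# Only the labels of frame 3 would be used as supervision.
stacked_input = stack_frames(clip, target=3, num_frames=3)
```

In such a setup only the first layer of the detector would need to accept the larger channel count; the rest of the network and the loss would stay as they are.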

Generating Synthetic Data via Augmentations for Improved Facial Resemblance in DreamBooth and InstantID

May 06, 2025

Abstract: The personalization of Stable Diffusion for generating professional portraits from amateur photographs is a burgeoning area, with applications in various downstream contexts. This paper investigates the impact of augmentations on improving facial resemblance when using two prominent personalization techniques: DreamBooth and InstantID. Through a series of experiments with diverse subject datasets, we assessed the effectiveness of various augmentation strategies on the generated headshots' fidelity to the original subject. We introduce FaceDistance, a wrapper around FaceNet, to rank the generations based on facial similarity, which aided in our assessment. Ultimately, this research provides insights into the role of augmentations in enhancing facial resemblance in SDXL-generated portraits, informing strategies for their effective deployment in downstream applications.
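
Ranking generations by facial similarity can be sketched as follows. This is not the FaceDistance implementation: the function names are hypothetical, the embeddings are hand-written stand-ins for what a face embedding model such as FaceNet would produce, and Euclidean distance is an assumed choice of metric.

```python
import math
from typing import Dict, List, Sequence, Tuple


def euclidean_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two embedding vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimensionality")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def rank_by_face_distance(
    reference: Sequence[float],
    candidates: Dict[str, Sequence[float]],
) -> List[Tuple[str, float]]:
    """Return (name, distance) pairs sorted from most to least similar."""
    scored = [(name, euclidean_distance(reference, emb)) for name, emb in candidates.items()]
    return sorted(scored, key=lambda item: item[1])


# Toy 2-D embeddings; real face embeddings have far more dimensions.
reference = [0.0, 1.0]
candidates = {
    "gen_a": [0.0, 0.9],
    "gen_b": [1.0, 0.0],
    "gen_c": [0.3, 1.0],
}

ranking = rank_by_face_distance(reference, candidates)
for name, distance in ranking:
    print(f"{name}: {distance:.3f}")
```

A smaller distance means the generated portrait sits closer to the subject in embedding space, so the first entry of `ranking` is the best match under this metric.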

3rd Workshop on Maritime Computer Vision (MaCVi) 2025: Challenge Results

Jan 17, 2025

Abstract: The 3rd Workshop on Maritime Computer Vision (MaCVi) 2025 addresses maritime computer vision for Unmanned Surface Vehicles (USV) and underwater applications. This report offers a comprehensive overview of the findings from the challenges. We provide both statistical and qualitative analyses, evaluating trends from over 700 submissions. All datasets, evaluation code, and the leaderboard are available to the public at https://macvi.org/workshop/macvi25.

Approximate Supervised Object Distance Estimation on Unmanned Surface Vehicles

Jan 09, 2025

Abstract: Unmanned surface vehicles (USVs) and boats are increasingly important in maritime operations, yet their deployment is limited by costly sensors and complexity. LiDAR, radar, and depth cameras are costly, yield sparse point clouds, or are noisy, and they require extensive calibration. Here, we introduce a novel approach for approximate distance estimation in USVs using supervised object detection. We collected a dataset comprising images with manually annotated bounding boxes and corresponding distance measurements. Leveraging this data, we propose a specialized branch of an object detection model that not only detects objects but also predicts their distances from the USV. This method offers a cost-efficient and intuitive alternative to conventional distance measurement techniques, aligning more closely with human estimation capabilities. We demonstrate its application in a marine assistance system that alerts operators to nearby objects such as boats, buoys, or other waterborne hazards.

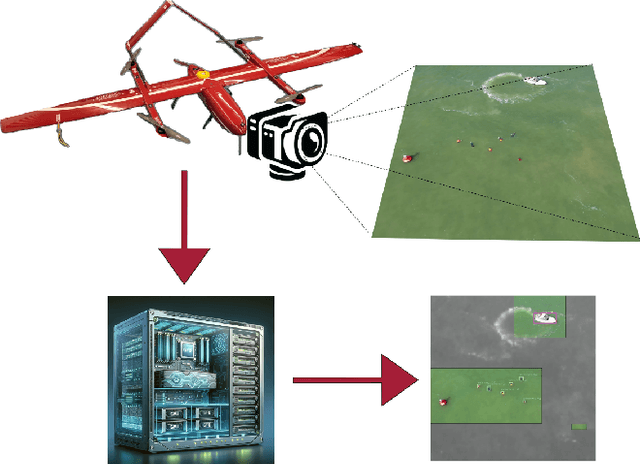

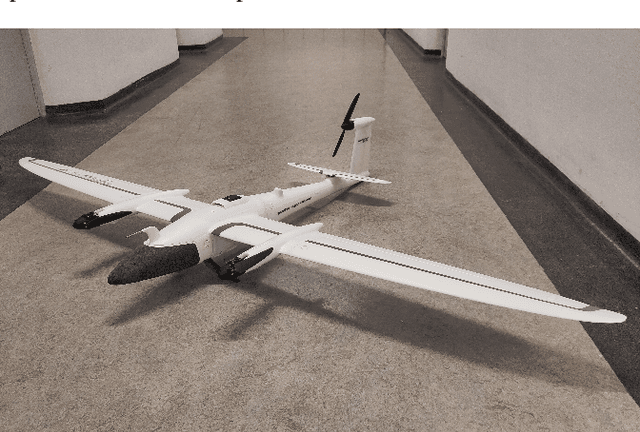

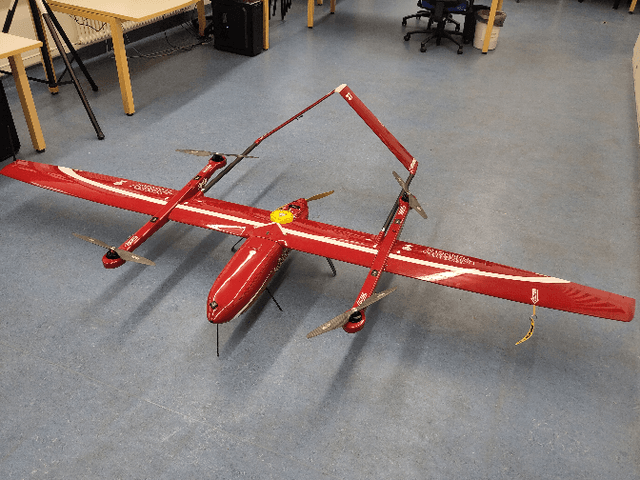

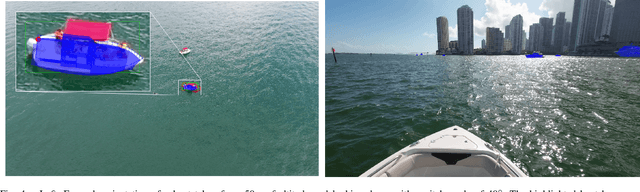

UAV-Assisted Maritime Search and Rescue: A Holistic Approach

Mar 21, 2024

Abstract: In this paper, we explore the application of Unmanned Aerial Vehicles (UAVs) in maritime search and rescue (mSAR) missions, focusing on medium-sized fixed-wing drones and quadcopters. We address the challenges and limitations inherent in operating different classes of UAVs, particularly in search operations. Our research includes the development of a comprehensive software framework designed to enhance the efficiency and efficacy of SAR operations. This framework combines preliminary detection onboard UAVs with advanced object detection at ground stations, aiming to reduce visual strain and improve decision-making for operators. It will be made publicly available upon publication. We conduct experiments to evaluate various Region of Interest (RoI) proposal methods, especially by imposing simulated limited bandwidth on them, an important consideration in remote or offshore operations. This forces the algorithm to prioritize some predictions over others.
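
Prioritizing predictions under a bandwidth limit can be illustrated with a simple greedy selection. This is a sketch under stated assumptions, not the framework's method: the `RoI` class, the use of pixel count as a proxy for transmission cost, and the score-ordered greedy rule are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class RoI:
    x: int
    y: int
    width: int
    height: int
    score: float  # confidence assigned by the onboard proposal method

    @property
    def cost(self) -> int:
        """Pixel count, used here as a stand-in for transmitted data size."""
        return self.width * self.height


def select_rois(rois: Sequence[RoI], pixel_budget: int) -> List[RoI]:
    """Greedily keep the highest-scoring RoIs that still fit into the budget."""
    selected: List[RoI] = []
    remaining = pixel_budget
    for roi in sorted(rois, key=lambda r: r.score, reverse=True):
        if roi.cost <= remaining:
            selected.append(roi)
            remaining -= roi.cost
    return selected


proposals = [
    RoI(0, 0, 100, 100, score=0.9),      # 10,000 pixels
    RoI(300, 50, 200, 200, score=0.8),   # 40,000 pixels, too large for the budget
    RoI(50, 400, 50, 50, score=0.5),     # 2,500 pixels
    RoI(600, 10, 100, 50, score=0.3),    # 5,000 pixels
]

selected = select_rois(proposals, pixel_budget=20_000)
```

With a budget of 20,000 pixels, the second proposal is dropped despite its high score because it alone exceeds the remaining budget, while the two smaller, lower-scoring regions are still transmitted.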

The 2nd Workshop on Maritime Computer Vision 2024

Nov 23, 2023

Abstract: The 2nd Workshop on Maritime Computer Vision (MaCVi) 2024 addresses maritime computer vision for Unmanned Aerial Vehicles (UAV) and Unmanned Surface Vehicles (USV). Three challenge categories are considered: (i) UAV-based Maritime Object Tracking with Re-identification, (ii) USV-based Maritime Obstacle Segmentation and Detection, (iii) USV-based Maritime Boat Tracking. The USV-based Maritime Obstacle Segmentation and Detection category features three sub-challenges, including a new embedded challenge addressing efficient inference on real-world embedded devices. This report offers a comprehensive overview of the findings from the challenges. We provide both statistical and qualitative analyses, evaluating trends from over 195 submissions. All datasets, evaluation code, and the leaderboard are available to the public at https://macvi.org/workshop/macvi24.

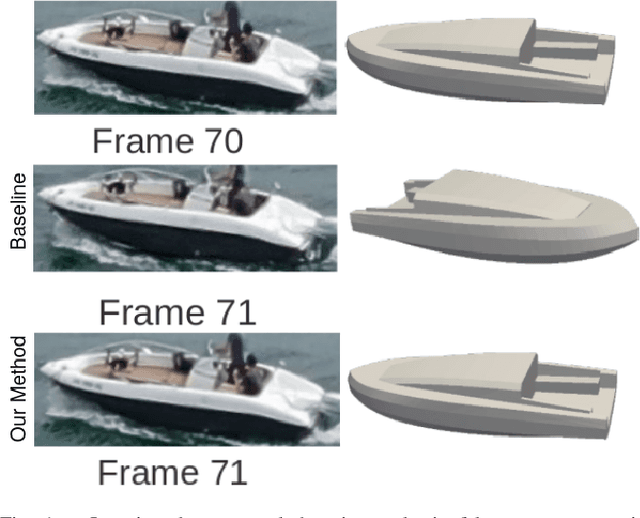

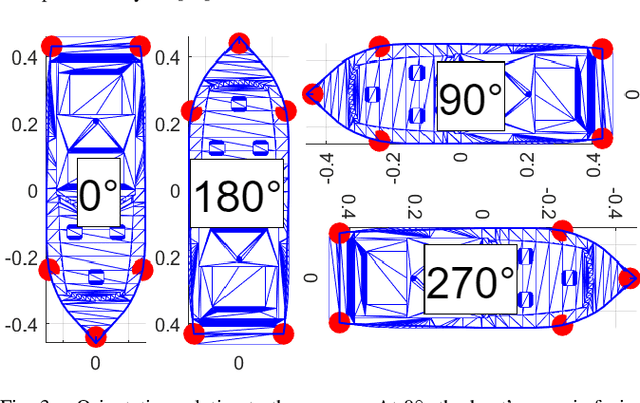

Stable Yaw Estimation of Boats from the Viewpoint of UAVs and USVs

Jun 24, 2023

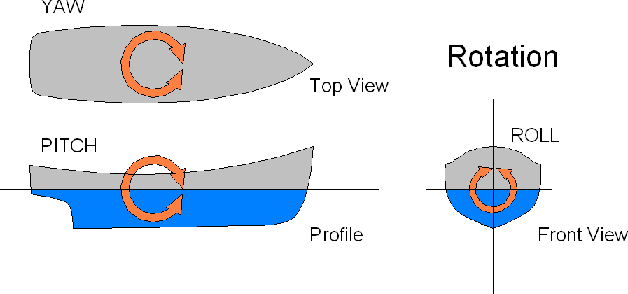

Abstract: Yaw estimation of boats from the viewpoint of unmanned aerial vehicles (UAVs) and unmanned surface vehicles (USVs) or boats is a crucial task in various applications such as 3D scene rendering, trajectory prediction, and navigation. However, the lack of literature on yaw estimation of objects from the viewpoint of UAVs has motivated us to address this domain. In this paper, we propose a method based on HyperPosePDF for predicting the orientation of boats in the 6D space. For that, we use existing datasets, such as PASCAL3D+, and our own datasets, SeaDronesSee-3D and BOArienT, which we annotated manually. We extend HyperPosePDF to work in video-based scenarios, such that it yields robust orientation predictions across time. Naively applying HyperPosePDF on video data yields single-point predictions, resulting in far-off predictions and often incorrect symmetric orientations due to unseen or visually different data. To alleviate this issue, we propose aggregating the probability distributions of pose predictions, resulting in significantly improved performance, as shown in our experimental evaluation. Our proposed method could significantly benefit downstream tasks in marine robotics.
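
The benefit of aggregating distributions instead of per-frame point estimates can be shown on a toy example. The sketch below simplifies heavily: it assumes each frame yields a discretized distribution over yaw bins and that aggregation is a plain average over a window, neither of which is confirmed by the abstract. The function names are hypothetical.

```python
from typing import List, Sequence


def aggregate_distributions(per_frame: Sequence[Sequence[float]]) -> List[float]:
    """Average per-frame yaw distributions and renormalize the result."""
    if not per_frame:
        raise ValueError("need at least one distribution")
    num_bins = len(per_frame[0])
    totals = [0.0] * num_bins
    for dist in per_frame:
        if len(dist) != num_bins:
            raise ValueError("all distributions must use the same bins")
        for i, p in enumerate(dist):
            totals[i] += p
    norm = sum(totals)
    return [t / norm for t in totals]


def most_likely_yaw(dist: Sequence[float]) -> float:
    """Return the center (in degrees) of the bin with the highest probability."""
    bin_width = 360.0 / len(dist)
    best = max(range(len(dist)), key=dist.__getitem__)
    return best * bin_width + bin_width / 2


# Four 90-degree bins. The second frame prefers the symmetric (flipped) orientation.
frames = [
    [0.6, 0.1, 0.3, 0.0],
    [0.4, 0.1, 0.5, 0.0],
    [0.7, 0.1, 0.2, 0.0],
]

per_frame_yaws = [most_likely_yaw(f) for f in frames]  # one point estimate per frame
aggregated = aggregate_distributions(frames)
aggregated_yaw = most_likely_yaw(aggregated)           # estimate from pooled evidence
```

Taken on its own, the second frame would report the flipped orientation, whereas the pooled distribution keeps the orientation supported by the neighboring frames.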

Memory Maps for Video Object Detection and Tracking on UAVs

Mar 06, 2023

Abstract: This paper introduces a novel approach to video object detection and tracking on Unmanned Aerial Vehicles (UAVs). By incorporating metadata, the proposed approach creates a memory map of object locations in actual world coordinates, providing a more robust and interpretable representation of object locations in both image space and the real world. We use this representation to boost confidences, resulting in improved performance for several temporal computer vision tasks, such as video object detection, short and long-term single and multi-object tracking, and video anomaly detection. These findings confirm the benefits of metadata in enhancing the capabilities of UAVs in the field of temporal computer vision and pave the way for further advancements in this area.
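
A memory map that boosts confidences can be sketched as a sparse grid over world coordinates. Everything specific here is an assumption for illustration: the `MemoryMap` class, the cell size, the decay factor, and the boosting formula are hypothetical, and the projection from image space to world coordinates via UAV metadata is omitted (detections are assumed to arrive already in meters).

```python
import math
from typing import Dict, Tuple


class MemoryMap:
    """Sparse grid that remembers detection evidence per world-coordinate cell."""

    def __init__(self, cell_size_m: float = 5.0, decay: float = 0.9, boost_weight: float = 0.5):
        self.cell_size_m = cell_size_m
        self.decay = decay
        self.boost_weight = boost_weight
        self._cells: Dict[Tuple[int, int], float] = {}

    def _cell(self, x: float, y: float) -> Tuple[int, int]:
        return (math.floor(x / self.cell_size_m), math.floor(y / self.cell_size_m))

    def step(self) -> None:
        """Fade all remembered evidence; call once per frame."""
        self._cells = {cell: value * self.decay for cell, value in self._cells.items()}

    def boost(self, x: float, y: float, confidence: float) -> float:
        """Raise a detection's confidence if its cell holds remembered evidence."""
        memory = self._cells.get(self._cell(x, y), 0.0)
        return min(1.0, confidence + self.boost_weight * memory * (1.0 - confidence))

    def update(self, x: float, y: float, confidence: float) -> None:
        """Store the strongest confidence seen so far in the detection's cell."""
        cell = self._cell(x, y)
        self._cells[cell] = max(self._cells.get(cell, 0.0), confidence)


memory_map = MemoryMap()

# Frame 1: a weak detection at (12 m, 7 m) finds no prior evidence.
first = memory_map.boost(12.0, 7.0, 0.4)
memory_map.update(12.0, 7.0, 0.8)  # a confident detection is remembered

# Frame 2: evidence fades slightly, then a weak detection in the same cell is boosted.
memory_map.step()
second = memory_map.boost(13.0, 8.0, 0.4)
elsewhere = memory_map.boost(100.0, 100.0, 0.4)  # far away: no boost
```

Because the grid is indexed in world coordinates rather than pixels, remembered evidence stays attached to a location even when the camera moves between frames.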

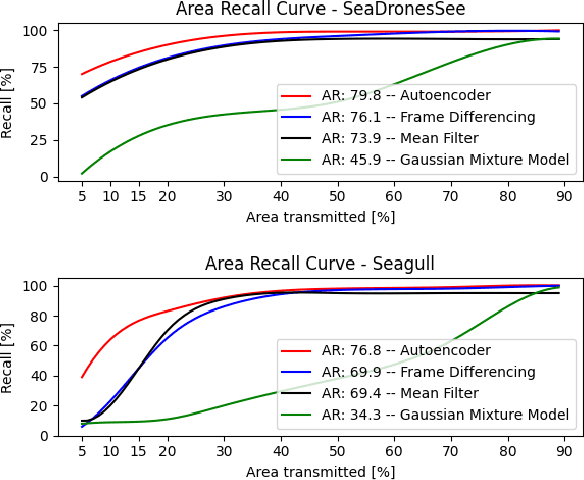

Fast Region of Interest Proposals on Maritime UAVs

Jan 27, 2023

Abstract: Unmanned aerial vehicles assist in maritime search and rescue missions by flying over large search areas to autonomously search for objects or people. Reliably detecting objects of interest requires fast models that can be deployed on embedded hardware. Moreover, with increasing distance to the ground station only part of the video data can be transmitted. In this work, we consider the problem of finding meaningful region of interest proposals in a video stream on an embedded GPU. Current object or anomaly detectors are not suitable due to their slow speed, especially on limited hardware and for large image resolutions. Lastly, objects of interest, such as pieces of wreckage, are often not known a priori. Therefore, we propose an end-to-end future frame prediction model running in real-time on embedded GPUs to generate region proposals. We analyze its performance on large-scale maritime datasets and demonstrate its benefits over traditional and modern methods.
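
One way to see how frame prediction can yield class-agnostic region proposals is to flag image blocks where a predicted frame disagrees with the observed one. The sketch below is only that intuition, not the proposed end-to-end model: the learned predictor is replaced by a hand-written "predicted" frame, and the block size, threshold, and function name are hypothetical.

```python
from typing import List, Sequence, Tuple

Image = Sequence[Sequence[float]]  # grayscale, height x width
Box = Tuple[int, int, int, int]    # (left, top, width, height)


def propose_regions(predicted: Image, actual: Image, block: int = 2, threshold: float = 0.2) -> List[Box]:
    """Return blocks whose mean absolute prediction error exceeds `threshold`."""
    height, width = len(actual), len(actual[0])
    proposals: List[Box] = []
    for top in range(0, height, block):
        for left in range(0, width, block):
            rows = range(top, min(top + block, height))
            cols = range(left, min(left + block, width))
            errors = [abs(actual[r][c] - predicted[r][c]) for r in rows for c in cols]
            if sum(errors) / len(errors) > threshold:
                proposals.append((left, top, len(cols), len(rows)))
    return proposals


# Stand-in for a model output: the predictor expects uniform water everywhere.
predicted_frame = [[0.1] * 4 for _ in range(4)]

# Observed frame: an unexpected bright object appears in the lower-left corner.
actual_frame = [
    [0.1, 0.1, 0.1, 0.1],
    [0.1, 0.1, 0.1, 0.1],
    [0.9, 0.9, 0.1, 0.1],
    [0.9, 0.9, 0.1, 0.1],
]

region_proposals = propose_regions(predicted_frame, actual_frame)
print(region_proposals)
```

Since nothing in this procedure depends on object classes, it would also flag objects that are not known a priori, such as pieces of wreckage.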
