Anna Saranti

Be Careful When Evaluating Explanations Regarding Ground Truth

Nov 08, 2023

Abstract: Evaluating explanations of image classifiers regarding ground truth, e.g. segmentation masks defined by human perception, primarily evaluates the quality of the models under consideration rather than the explanation methods themselves. Driven by this observation, we propose a framework for *jointly* evaluating the robustness of safety-critical systems that *combine* a deep neural network with an explanation method. These are increasingly used in real-world applications like medical image analysis or robotics. We introduce a fine-tuning procedure to (mis)align model–explanation pipelines with ground truth and use it to quantify the potential discrepancy between worst and best-case scenarios of human alignment. Experiments across various model architectures and post-hoc local interpretation methods provide insights into the robustness of vision transformers and the overall vulnerability of such AI systems to potential adversarial attacks.
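To make "evaluating explanations regarding ground truth" concrete, here is a minimal sketch of one possible alignment score: the share of positive attribution mass that falls inside a segmentation mask. The function name `relevance_mass_in_mask` and the toy data are assumptions for illustration, not the metric or the fine-tuning procedure used in the paper. In line with the abstract's point, a low score may reflect what the model relies on rather than a flaw in the explanation method.

```python
def relevance_mass_in_mask(attribution, mask):
    """Fraction of positive attribution mass that lies inside the mask.

    attribution: 2D list of floats (one value per pixel).
    mask: 2D list of 0/1 values with the same shape (1 = ground-truth region).
    Returns 0.0 when there is no positive attribution at all.
    """
    total = 0.0
    inside = 0.0
    for attr_row, mask_row in zip(attribution, mask):
        for value, in_mask in zip(attr_row, mask_row):
            positive = max(value, 0.0)  # ignore negative relevance
            total += positive
            if in_mask:
                inside += positive
    return inside / total if total > 0 else 0.0


# Toy 3x3 example (hypothetical values): the mask covers the middle column.
attribution = [
    [0.0, 0.5, 0.0],
    [0.1, 1.0, 0.2],
    [0.0, 0.2, 0.0],
]
mask = [
    [0, 1, 0],
    [0, 1, 0],
    [0, 1, 0],
]
score = relevance_mass_in_mask(attribution, mask)
print(round(score, 2))
```

A score near 1 means the explanation concentrates on the annotated region; it says nothing by itself about whether the model or the explanation method is responsible for a mismatch.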

Explaining and visualizing black-box models through counterfactual paths

Aug 01, 2023

Abstract: Explainable AI (XAI) is an increasingly important area of machine learning research, which aims to make black-box models transparent and interpretable. In this paper, we propose a novel approach to XAI that uses the so-called counterfactual paths generated by conditional permutations of features. The algorithm measures feature importance by identifying sequential permutations of features that most influence changes in model predictions. It is particularly suitable for generating explanations based on counterfactual paths in knowledge graphs incorporating domain knowledge. Counterfactual paths introduce an additional graph dimension to current XAI methods in both explaining and visualizing black-box models. Experiments with synthetic and medical data demonstrate the practical applicability of our approach.
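The idea of ranking features by sequential permutations can be sketched as follows. This is a simplified illustration under stated assumptions, not the authors' algorithm: it uses plain (unconditional) shuffles and a greedy search, whereas the paper describes conditional permutations and knowledge-graph paths. The function name `greedy_permutation_path`, the toy linear model, and the data are hypothetical.

```python
import random


def greedy_permutation_path(predict, rows, path_len, seed=0):
    """Greedily build a path of features to permute, one after another.

    At each step, every remaining feature is shuffled across rows (on top of
    the features already permuted), and the feature whose shuffle changes the
    current predictions the most is appended to the path.
    Returns a list of (feature_index, mean_absolute_prediction_change).
    """
    rng = random.Random(seed)
    current = [list(r) for r in rows]
    remaining = list(range(len(rows[0])))
    path = []
    for _ in range(min(path_len, len(remaining))):
        current_preds = [predict(r) for r in current]
        best = None
        for j in remaining:
            column = [r[j] for r in current]
            rng.shuffle(column)  # unconditional permutation (an assumption)
            candidate = [r[:j] + [v] + r[j + 1:] for r, v in zip(current, column)]
            change = sum(
                abs(predict(c) - p) for c, p in zip(candidate, current_preds)
            ) / len(rows)
            if best is None or change > best[0]:
                best = (change, j, candidate)
        change, j, current = best
        path.append((j, change))
        remaining.remove(j)
    return path


# Toy black box: feature 0 matters most, feature 2 a little, feature 1 not at all.
def toy_model(row):
    return 10 * row[0] + 0 * row[1] + 1 * row[2]


rows = [[i, (i * 7) % 20, (i * 3) % 20] for i in range(20)]
path = greedy_permutation_path(toy_model, rows, path_len=3)
print([feature for feature, _ in path])
```

Each step measures change relative to the already-permuted data, so the reported value is the incremental effect of adding that feature to the path.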

A Practical Tutorial on Explainable AI Techniques

Nov 13, 2021

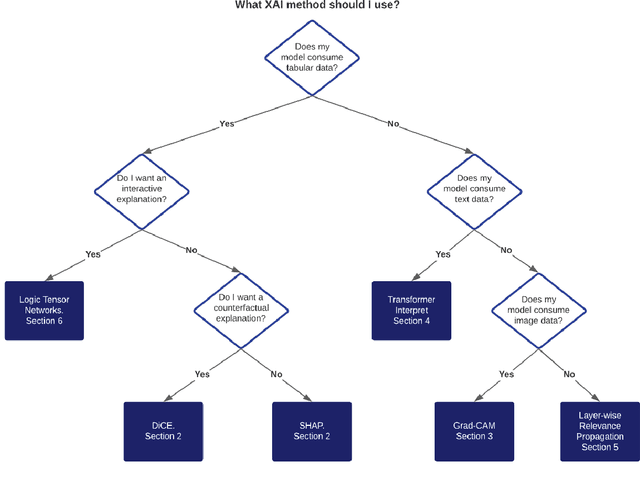

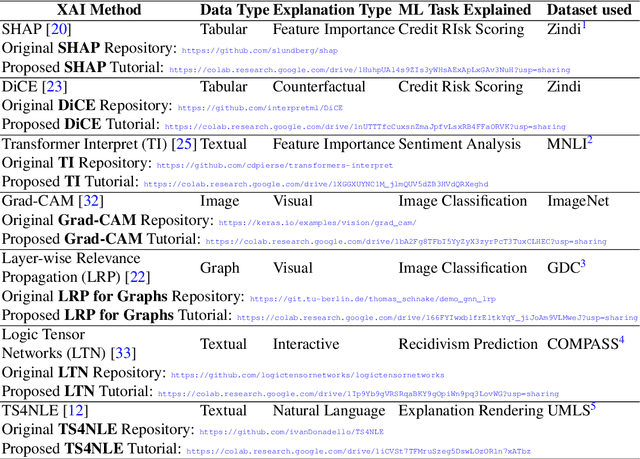

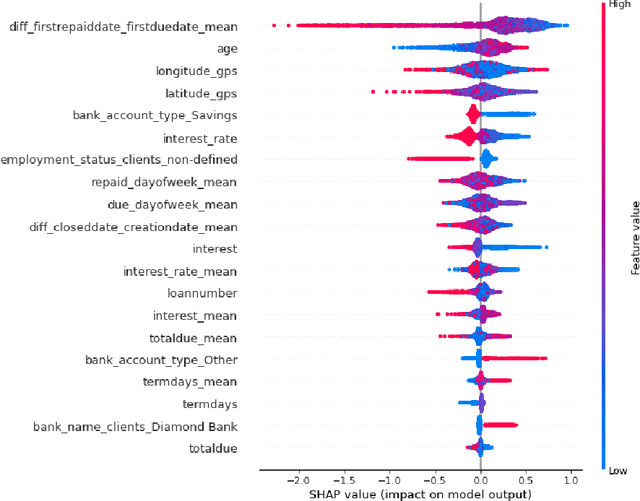

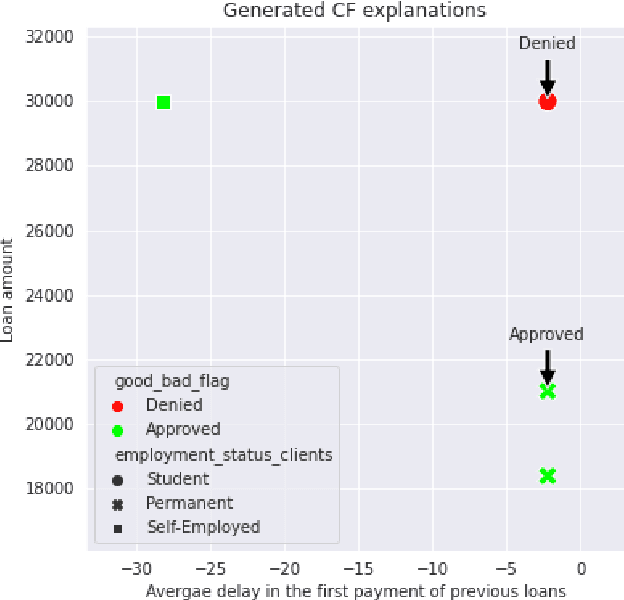

Abstract: Recent years have been characterized by an upsurge of opaque automatic decision support systems, such as Deep Neural Networks (DNNs). Although they have great generalization and prediction skills, their functioning does not allow obtaining detailed explanations of their behaviour. As opaque machine learning models are increasingly being employed to make important predictions in critical environments, the danger is to create and use decisions that are not justifiable or legitimate. Therefore, there is a general agreement on the importance of endowing machine learning models with explainability. The reason is that EXplainable Artificial Intelligence (XAI) techniques can serve to verify and certify model outputs and enhance them with desirable notions such as trustworthiness, accountability, transparency and fairness. This tutorial is meant to be the go-to handbook for any audience with a computer science background aiming at getting intuitive insights into machine learning models, accompanied by straightforward, fast, and intuitive explanations out of the box. We believe that these methods provide a valuable contribution for applying XAI techniques to readers' particular day-to-day models, datasets and use-cases. The tutorial's flowchart figure acts as a map for the reader and should help them find the ideal method for their type of data. For each proposed method, the reader will find a description, an example of use, and a Python notebook that they can easily modify to apply it to their own use case.

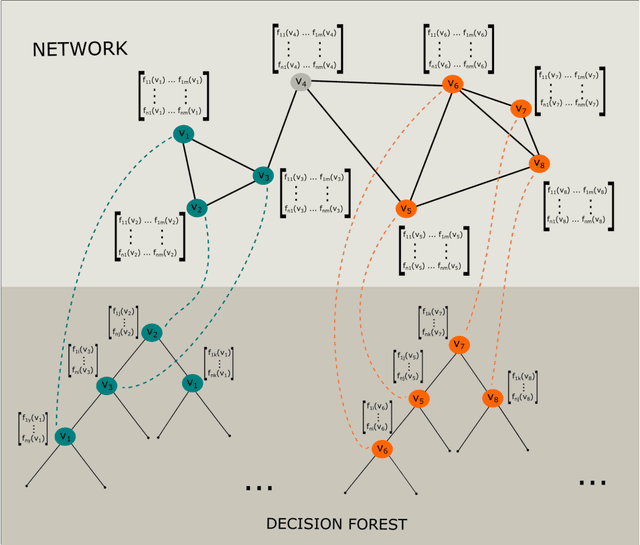

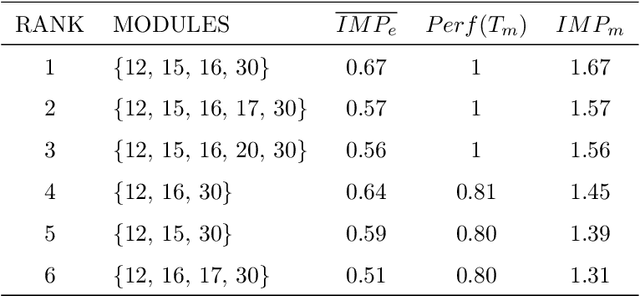

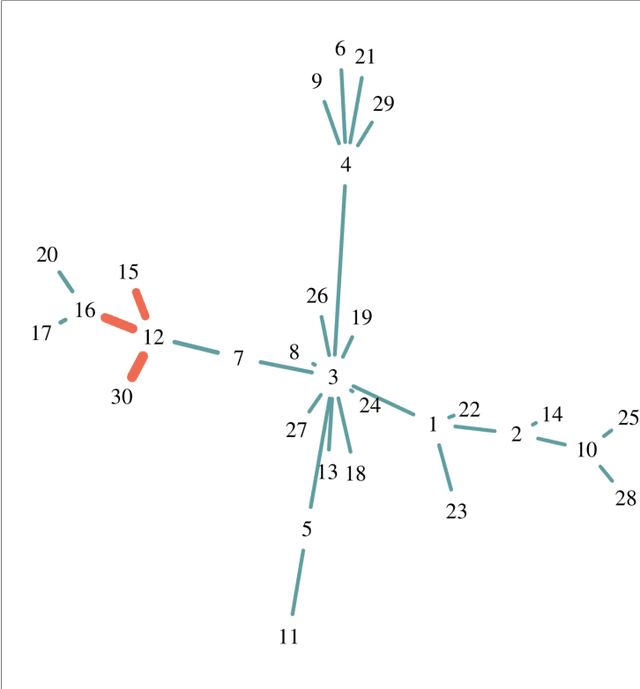

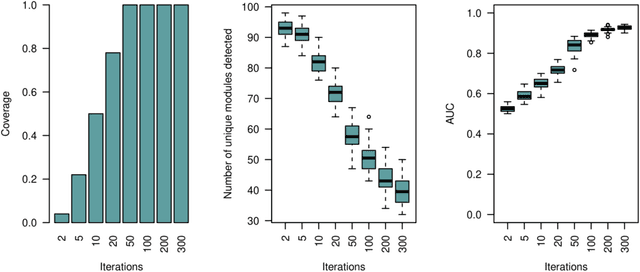

Network Module Detection from Multi-Modal Node Features with a Greedy Decision Forest for Actionable Explainable AI

Aug 26, 2021

Abstract: Network-based algorithms are used in most domains of research and industry in a wide variety of applications and are of great practical use. In this work, we demonstrate subnetwork detection based on multi-modal node features using a new Greedy Decision Forest for better interpretability. The latter will be a crucial factor in retaining experts and gaining their trust in such algorithms in the future. To demonstrate a concrete application example, we focus in this paper on bioinformatics and systems biology with a special focus on biomedicine. However, our methodological approach is applicable in many other domains as well. Systems biology serves as a very good example of a field in which statistical data-driven machine learning enables the analysis of large amounts of multi-modal biomedical data. This is important to reach the future goal of precision medicine, where the complexity of patients is modeled on a system level to best tailor medical decisions, health practices and therapies to the individual patient. Our glass-box approach could help to uncover disease-causing network modules from multi-omics data to better understand diseases such as cancer.

The FeatureCloud AI Store for Federated Learning in Biomedicine and Beyond

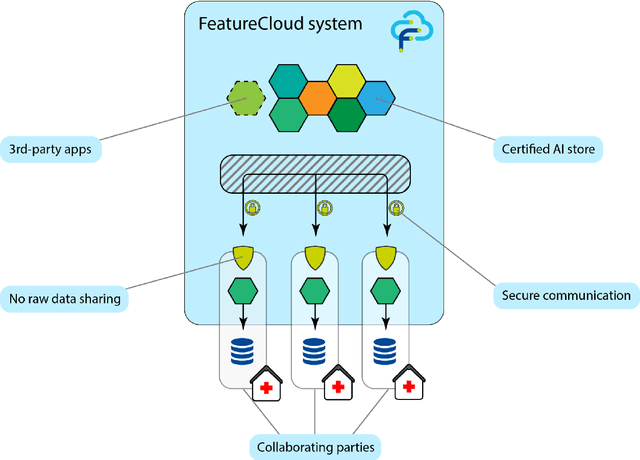

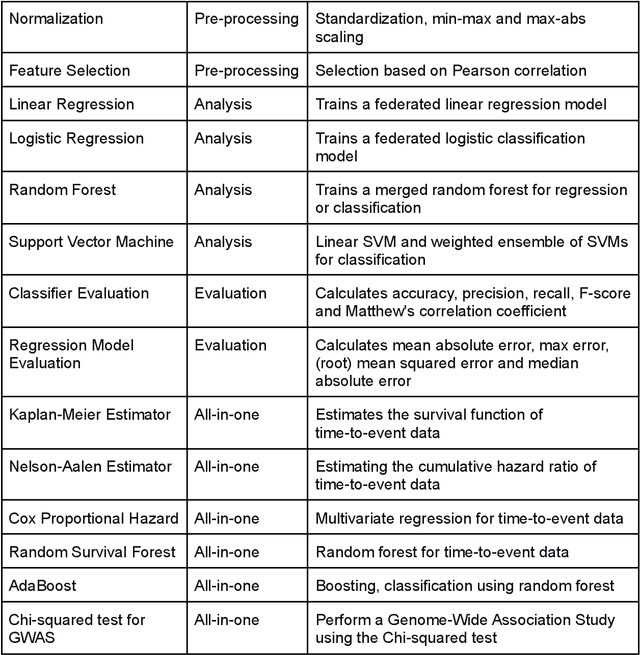

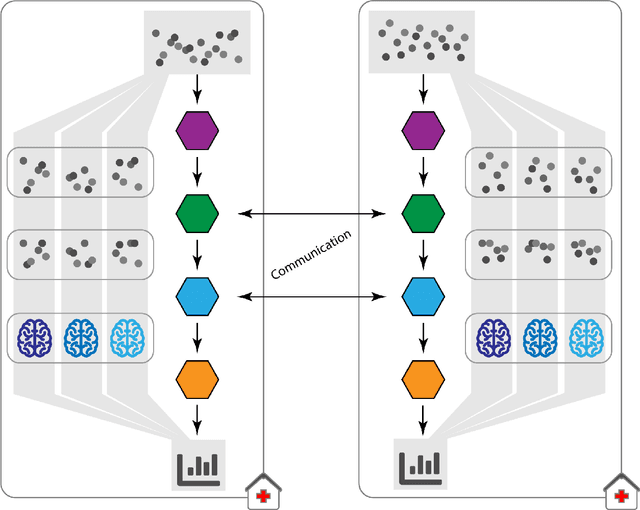

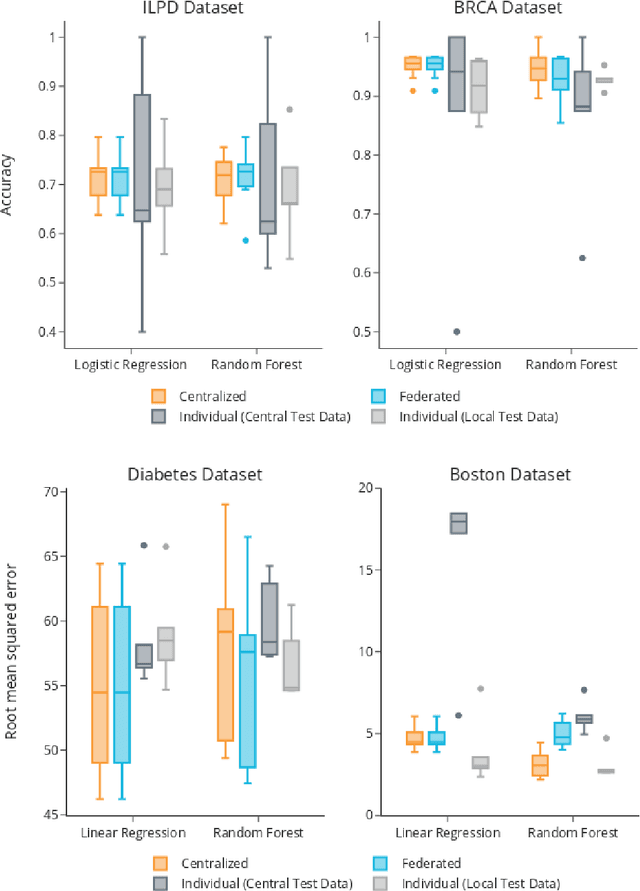

May 12, 2021

Abstract: Machine Learning (ML) and Artificial Intelligence (AI) have shown promising results in many areas and are driven by the increasing amount of available data. However, this data is often distributed across different institutions and cannot be shared due to privacy concerns. Privacy-preserving methods, such as Federated Learning (FL), allow for training ML models without sharing sensitive data, but their implementation is time-consuming and requires advanced programming skills. Here, we present the FeatureCloud AI Store for FL as an all-in-one platform for biomedical research and other applications. It removes large parts of this complexity for developers and end-users by providing an extensible AI Store with a collection of ready-to-use apps. We show that the federated apps produce similar results to centralized ML, scale well for a typical number of collaborators and can be combined with Secure Multiparty Computation (SMPC), thereby making FL algorithms safely and easily applicable in biomedical and clinical environments.

KANDINSKYPatterns -- An experimental exploration environment for Pattern Analysis and Machine Intelligence

Feb 28, 2021

Abstract: Machine intelligence is very successful at standard recognition tasks when it has high-quality training data. There is still a significant gap between machine-level pattern recognition and human-level concept learning. Humans can learn under uncertainty from only a few examples and generalize these concepts to solve new problems. The growing interest in explainable machine intelligence requires experimental environments and diagnostic tests to analyze weaknesses in existing approaches and to drive progress in the field. In this paper, we discuss existing diagnostic tests and test data sets such as CLEVR, CLEVRER, CLOSURE, CURI, Bongard-LOGO, V-PROM, and present our own experimental environment: The KANDINSKYPatterns, named after the Russian artist Wassily Kandinsky, who made theoretical contributions to compositivity, i.e. that all perceptions consist of geometrically elementary individual components. This was experimentally proven by Hubel & Wiesel in the 1960s and became the basis for machine learning approaches such as the Neocognitron and, later, Deep Learning. While KANDINSKYPatterns have computationally controllable properties on the one hand, bringing ground truth, they are also easily distinguishable by human observers, i.e., controlled patterns can be described by both humans and algorithms, making them another important contribution to international research in machine intelligence.
