Alicia Casals

Automatic Feature Detection in Lung Ultrasound Images using Wavelet and Radon Transforms

Jun 22, 2023

Abstract: Objective: Lung ultrasonography is a significant advance toward harmless lung imaging. This work investigates the automatic localization of diagnostically significant features in lung ultrasound images, namely the pleural line, A-lines, and B-lines. Study Design: Wavelet and Radon transforms are used to denoise the images and highlight clinically significant patterns. The proposed framework is developed and validated using three lung ultrasound image datasets: two contain synthetic data, and the third is taken from the publicly available POCUS dataset. The performance of the proposed method is evaluated on 200 real images. Results: Comparing the localized patterns against the baselines yields promising F2-scores of 62%, 86%, and 100% for B-lines, A-lines, and the pleural line, respectively. Conclusion: The high F-scores attained show that the developed technique is an effective way to automatically extract lung patterns from ultrasound images.
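For readers unfamiliar with the metric reported above, the F2-score is the F-beta score with beta = 2, which weights recall more heavily than precision. The following is a minimal sketch of the standard formula, not the authors' evaluation code; the function name `fbeta_score` and the example counts are hypothetical and chosen only for illustration.

```python
def fbeta_score(tp: int, fp: int, fn: int, beta: float = 2.0) -> float:
    """Standard F-beta score from detection counts (beta=2 gives the F2-score).

    tp, fp, fn are true positives, false positives and false negatives.
    The counts used below are illustrative and are not taken from the paper.
    """
    if tp == 0:
        # No correct detections: precision and recall are both zero.
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    b2 = beta * beta
    return (1.0 + b2) * precision * recall / (b2 * precision + recall)


# Hypothetical example: 6 correct detections, 4 false alarms, no misses.
score = fbeta_score(tp=6, fp=4, fn=0)
print(f"F2 = {score:.3f}")
```

Because beta is greater than 1, a detector that misses few features but raises some false alarms scores higher than one with the opposite trade-off.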

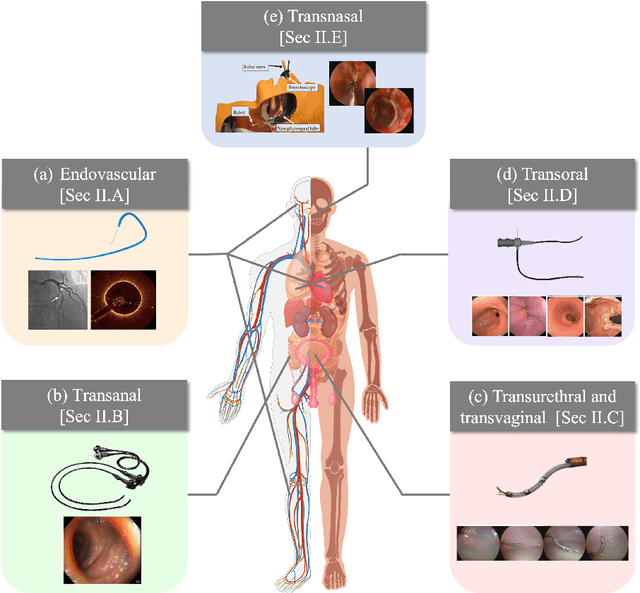

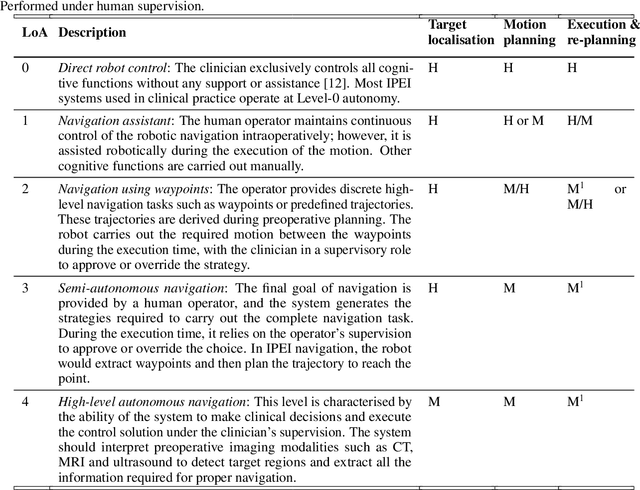

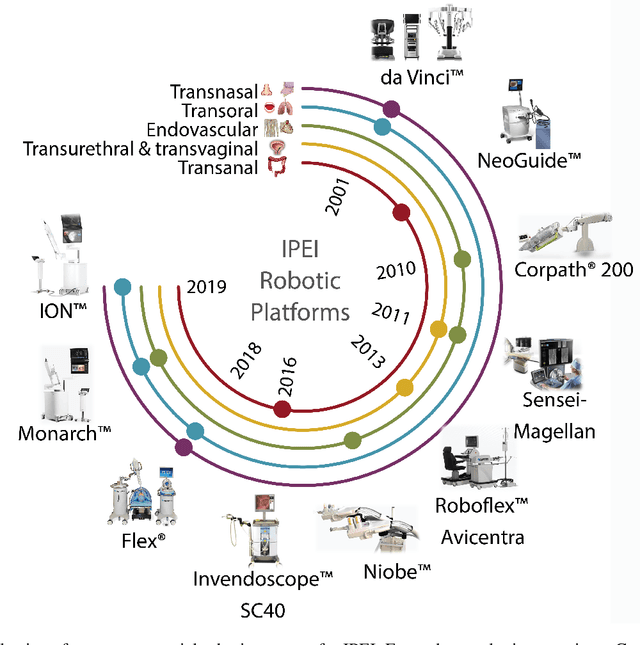

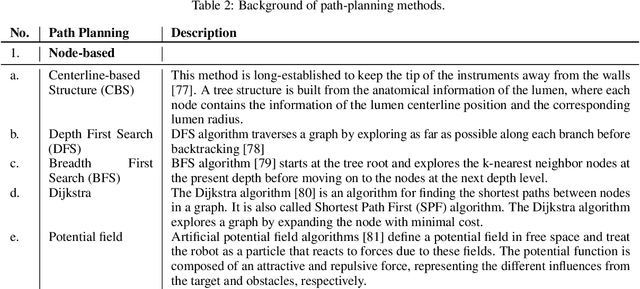

Autonomous Navigation for Robot-assisted Intraluminal and Endovascular Procedures: A Systematic Review

May 06, 2023

Abstract: Increased demand for less invasive procedures has accelerated the adoption of Intraluminal Procedures (IP) and Endovascular Interventions (EI) performed through body lumens and vessels. Because navigation through lumens and vessels is complex, there is growing interest in autonomous navigation techniques that allow IP and EI instruments to reach the target area. Current research efforts are directed toward increasing the Level of Autonomy (LoA) during the navigation phase. A key ingredient of autonomous navigation is Motion Planning (MP). This paper provides an overview of MP techniques, categorizing them by LoA, and investigates advances for the different clinical scenarios. Through a systematic literature analysis using the PRISMA method, the study summarizes relevant works and examines the clinical aim, LoA, adopted MP techniques, and validation types. We identify the limitations of the corresponding MP methods and provide directions to improve the robustness of the algorithms in dynamic intraluminal environments. MP for IP and EI can be classified into four subgroups: node-based, sampling-based, optimization-based, and learning-based techniques, with a notable rise in learning-based approaches in recent years. One of the review's contributions is the identification of the limiting factors in IP and EI robotic systems that hinder higher levels of autonomous navigation. In the future, navigation is bound to become more autonomous, placing the clinician in a supervisory position to improve control precision and reduce workload.

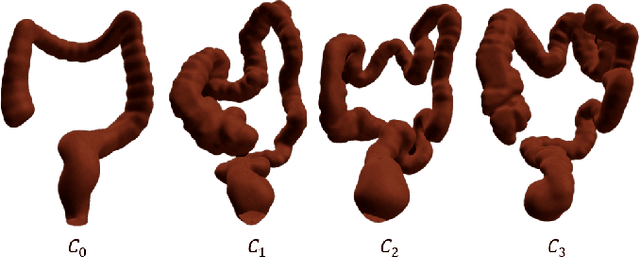

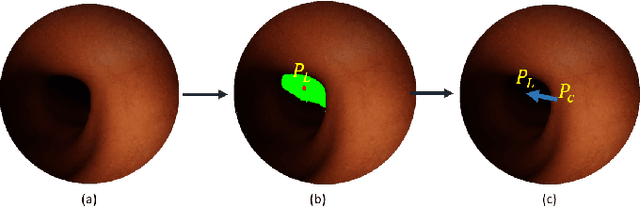

Constrained Reinforcement Learning and Formal Verification for Safe Colonoscopy Navigation

Mar 16, 2023

Abstract: The field of robotic Flexible Endoscopes (FEs) has progressed significantly, offering a promising solution to reduce patient discomfort. However, the limited autonomy of most robotic FEs results in non-intuitive and challenging manoeuvres, constraining their application in clinical settings. While previous studies have employed lumen tracking for autonomous navigation, they fail to adapt to obstructions and sharp turns when the endoscope faces the colon wall. In this work, we propose a Deep Reinforcement Learning (DRL)-based navigation strategy that eliminates the need for lumen tracking. However, DRL methods pose safety risks because they do not account for potential hazards associated with the actions taken. To ensure safety, we exploit a Constrained Reinforcement Learning (CRL) method to restrict the policy to a predefined safety regime. Moreover, we present a model selection strategy that utilises Formal Verification (FV) to choose a policy that is entirely safe before deployment. We validate our approach in a virtual colonoscopy environment and report that, out of the 300 trained policies, we could identify three that are entirely safe. Our work demonstrates that CRL, combined with model selection through FV, can improve the robustness and safety of robotic behaviour in surgical applications.

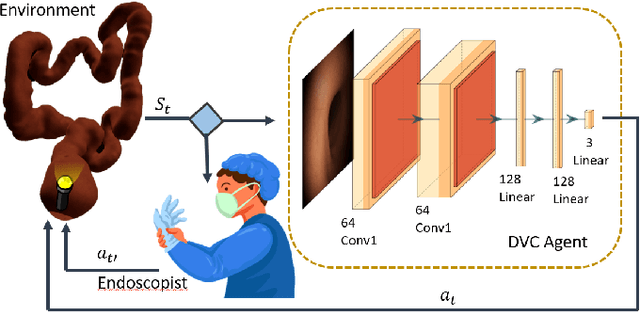

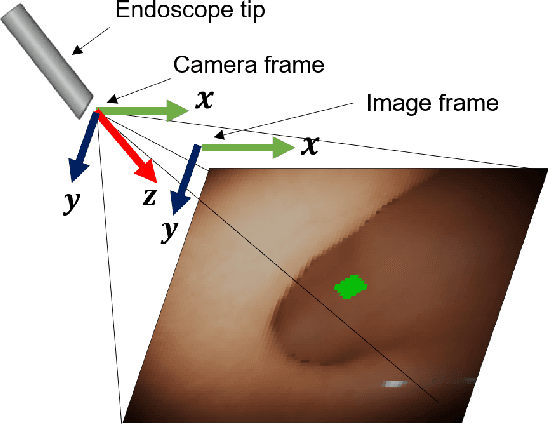

Colonoscopy Navigation using End-to-End Deep Visuomotor Control: A User Study

Jun 30, 2022

Abstract: Flexible endoscopes for colonoscopy present several limitations due to their inherent complexity, resulting in patient discomfort and a lack of intuitiveness for clinicians. Robotic devices together with autonomous control represent a viable solution to reduce the workload of endoscopists and the training time while improving the overall procedure outcome. Prior works on autonomous endoscope control use heuristic policies that limit their generalisation to the unstructured and highly deformable colon environment and require frequent human intervention. This work proposes an image-based control of the endoscope using Deep Reinforcement Learning, called Deep Visuomotor Control (DVC), to exhibit adaptive behaviour in convoluted sections of the colon tract. DVC learns a mapping between the endoscopic images and the control signal of the endoscope. A first user study with 20 expert gastrointestinal endoscopists was carried out to compare their navigation performance with DVC policies using a realistic virtual simulator. The results indicate that DVC shows equivalent performance on several assessment parameters while being safer. Moreover, a second user study with 20 novice participants was performed to demonstrate easier human supervision compared to a state-of-the-art heuristic control policy. Seamless supervision of colonoscopy procedures would enable interventionists to focus on the medical decision rather than on the control problem of the endoscope.

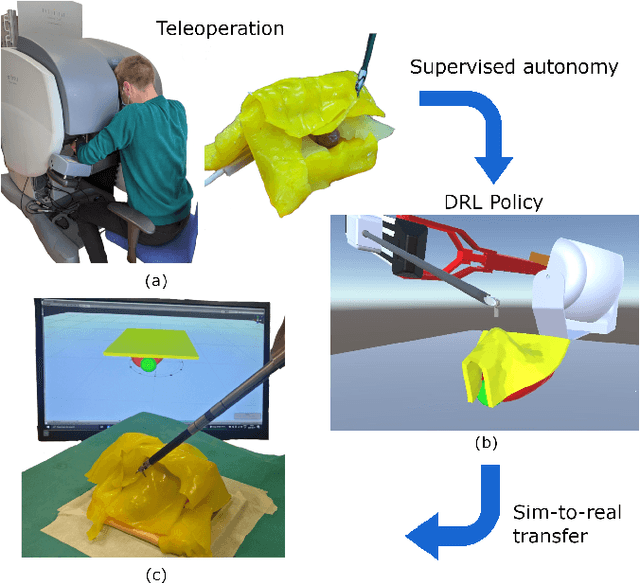

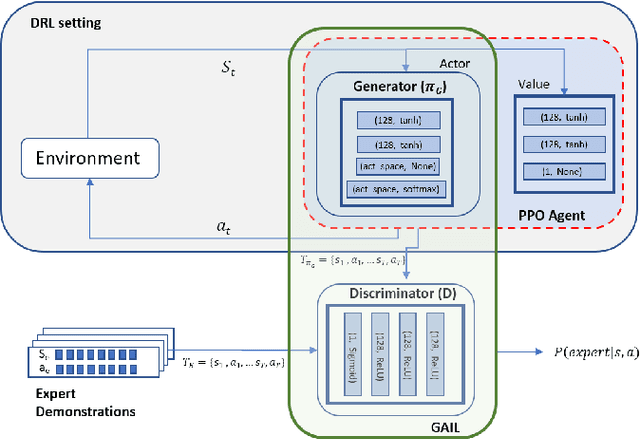

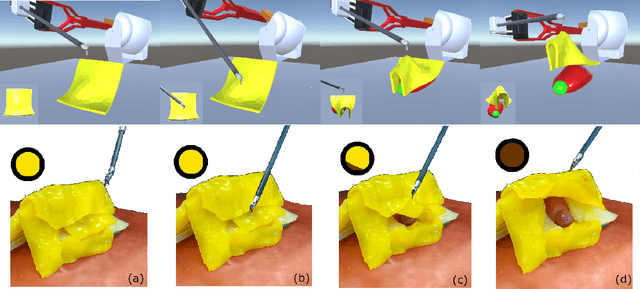

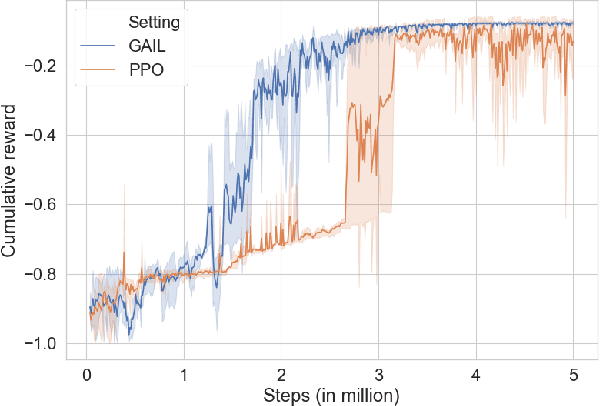

Learning from Demonstrations for Autonomous Soft-tissue Retraction

Oct 01, 2021

Abstract: The current research focus in Robot-Assisted Minimally Invasive Surgery (RAMIS) is directed towards increasing the level of robot autonomy, to place surgeons in a supervisory position. Although Learning from Demonstrations (LfD) approaches are among the preferred ways for an autonomous surgical system to learn expert gestures, they require a high number of demonstrations and show poor generalization to the variable conditions of the surgical environment. In this work, we propose an LfD methodology based on Generative Adversarial Imitation Learning (GAIL) that is built on a Deep Reinforcement Learning (DRL) setting. GAIL combines generative adversarial networks, which learn the distribution of expert trajectories, with a DRL setting that ensures generalisation of trajectories with human-like behaviour. We consider the automation of tissue retraction, a common RAMIS task that involves soft-tissue manipulation to expose a region of interest. In our proposed methodology, a small set of expert trajectories can be acquired through the da Vinci Research Kit (dVRK) and used to train the proposed LfD method inside a simulated environment. Results indicate that our methodology can accomplish the tissue retraction task with human-like behaviour while being more sample-efficient than the baseline DRL method. Finally, we show that the learnt policies can be successfully transferred to the real robotic platform and deployed for soft-tissue retraction on a synthetic phantom.

Safe Reinforcement Learning using Formal Verification for Tissue Retraction in Autonomous Robotic-Assisted Surgery

Sep 06, 2021

Abstract: Deep Reinforcement Learning (DRL) is a viable solution for automating repetitive surgical subtasks due to its ability to learn complex behaviours in a dynamic environment. This task automation could lead to a reduced cognitive workload for the surgeon, increased precision in critical aspects of the surgery, and fewer patient-related complications. However, current DRL methods do not guarantee any safety criteria, as they maximise cumulative rewards without considering the risks associated with the actions performed. Due to this limitation, the application of DRL in the safety-critical paradigm of robot-assisted Minimally Invasive Surgery (MIS) has been constrained. In this work, we introduce a Safe-DRL framework that incorporates safety constraints for the automation of surgical subtasks via DRL training. We validate our approach in a virtual scene that replicates a tissue retraction task commonly occurring in multiple phases of an MIS procedure. Furthermore, to evaluate the safe behaviour of the robotic arms, we formulate a formal verification tool for DRL methods that provides the probability of unsafe configurations. Our results indicate that a formal analysis guarantees safety with high confidence, such that the robotic instruments operate within the safe workspace and avoid hazardous interaction with other anatomical structures.

A Recurrent Convolutional Neural Network Approach for Sensorless Force Estimation in Robotic Surgery

May 22, 2018

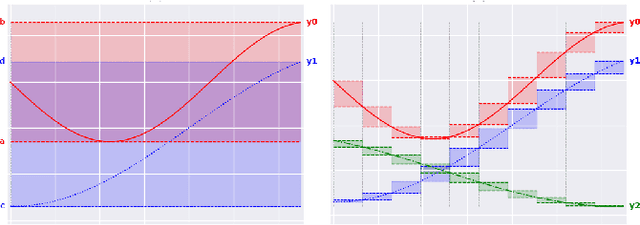

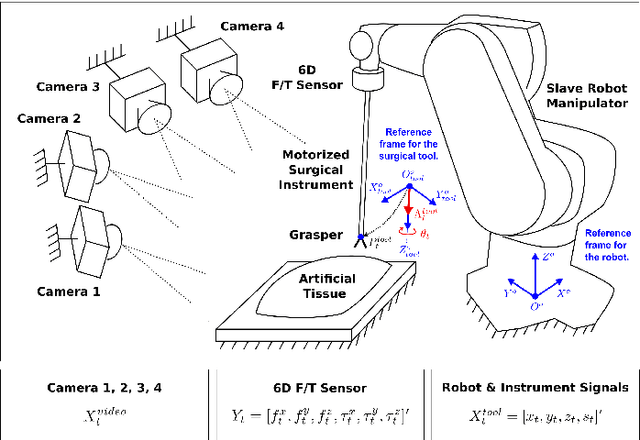

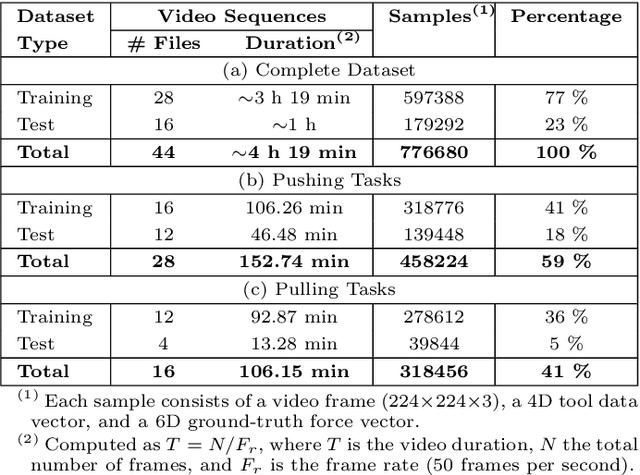

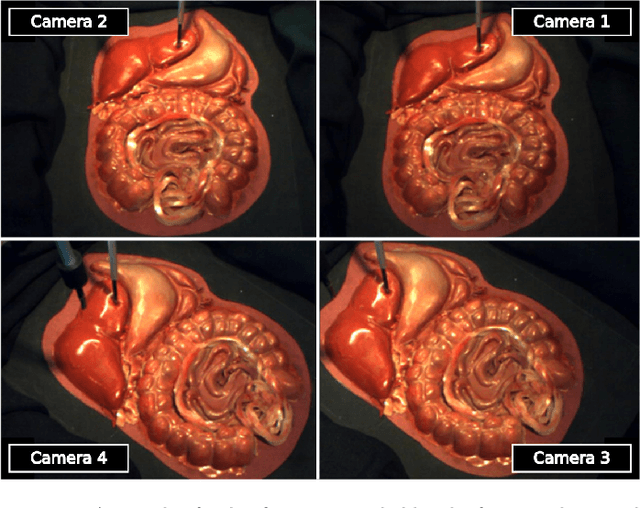

Abstract: Providing force feedback as relevant information in current Robot-Assisted Minimally Invasive Surgery systems constitutes a technological challenge due to the constraints imposed by the surgical environment. In this context, Sensorless Force Estimation techniques represent a potential solution, making it possible to sense the interaction forces between the surgical instruments and soft tissues. Specifically, if visual feedback is available for observing soft-tissue deformation, this feedback can be used to estimate the forces applied to these tissues. To this end, a force estimation model based on Convolutional Neural Networks and Long Short-Term Memory networks is proposed in this work. This model is designed to process both the spatiotemporal information present in video sequences and the temporal structure of tool data (the surgical tool-tip trajectory and its grasping status). A series of analyses are carried out to reveal the advantages of the proposal and the challenges that remain for real applications. This research work focuses on two surgical task scenarios, referred to as pushing and pulling tissue. For these two scenarios, different input data modalities and their effect on the force estimation quality are investigated. These input data modalities are tool data, video sequences, and a combination of both. The results suggest that the force estimation quality is better when both the tool data and the video sequences are processed by the neural network model. Moreover, this study reveals the need for a loss function designed to promote the modeling of smooth and sharp details found in force signals. Finally, the results show that modeling the forces due to pulling tasks is more challenging than for the simpler pushing actions.

Robust Cardiac Motion Estimation using Ultrafast Ultrasound Data: A Low-Rank-Topology-Preserving Approach

Apr 25, 2017

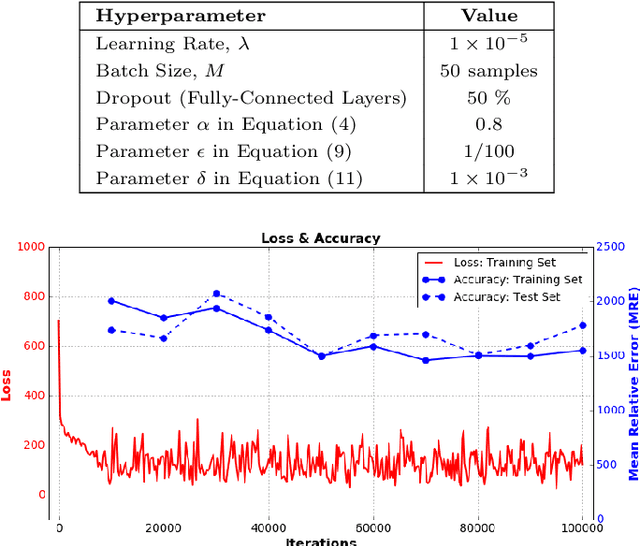

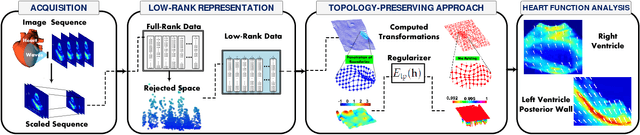

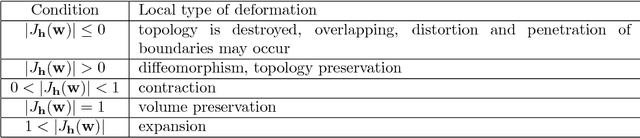

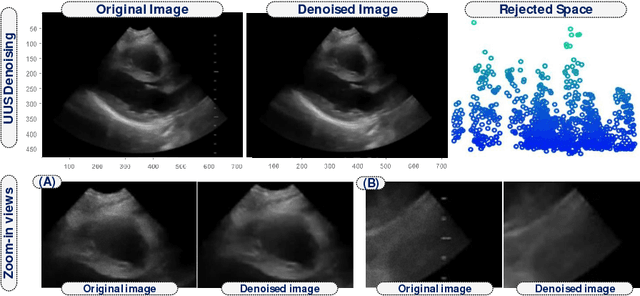

Abstract: Cardiac motion estimation is an important diagnostic tool to detect heart diseases, and it has been explored with modalities such as MRI and conventional ultrasound (US) sequences. US cardiac motion estimation still presents challenges because of the complex motion patterns and the presence of noise. In this work, we propose a novel approach to estimate the cardiac motion using ultrafast ultrasound data. Our solution is based on a variational formulation characterized by the L2-regularized class. The displacement is represented by a lattice of B-splines, and we ensure robustness by applying a maximum likelihood type estimator. While this is an important part of our solution, the main highlight of this paper is the combination of a low-rank data representation with topology preservation. Low-rank data representation (achieved by finding the k dominant singular values of a Casorati matrix arranged from the data sequence) speeds up the global solution and achieves noise reduction. Topology preservation (achieved by monitoring the Jacobian determinant), in turn, makes it possible to rule out distortions while carefully controlling the size of allowed expansions and contractions. Our variational approach is carried out on a realistic dataset as well as on a simulated one. We demonstrate how our proposed variational solution deals with complex deformations through careful numerical experiments. While maintaining the accuracy of the solution, the low-rank preprocessing is shown to speed up the convergence of the variational problem. Beyond cardiac motion estimation, our approach is promising for the analysis of other organs that experience motion.
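The low-rank step described above can be illustrated with a truncated SVD of a Casorati matrix, in which each column is one vectorised frame of the sequence. This is a minimal sketch under stated assumptions rather than the authors' implementation: the function name `low_rank_sequence`, the `(T, H, W)` array layout, and the synthetic data are all hypothetical, and how the paper chooses k is not specified here.

```python
import numpy as np


def low_rank_sequence(frames: np.ndarray, k: int) -> np.ndarray:
    """Rank-k approximation of an image sequence via its Casorati matrix.

    frames: array of shape (T, H, W), i.e. T frames of H x W pixels
            (an assumed layout, chosen for this sketch).
    k:      number of dominant singular values to keep.
    """
    t, h, w = frames.shape
    # Casorati matrix: one column per frame, one row per pixel.
    casorati = frames.reshape(t, h * w).T  # shape (H*W, T)
    u, s, vt = np.linalg.svd(casorati, full_matrices=False)
    # Keep only the k dominant singular triplets.
    approx = (u[:, :k] * s[:k]) @ vt[:k, :]
    # Fold the columns back into frames.
    return approx.T.reshape(t, h, w)


# Synthetic example: a rank-2 sequence corrupted by small additive noise.
rng = np.random.default_rng(0)
spatial = rng.standard_normal((2, 4, 5))   # two spatial patterns
temporal = rng.standard_normal((6, 2))     # their weights over six frames
clean = np.tensordot(temporal, spatial, axes=1)
noisy = clean + 0.01 * rng.standard_normal(clean.shape)
denoised = low_rank_sequence(noisy, k=2)
```

Discarding the small singular values removes components that vary incoherently across frames, which is the sense in which truncation acts as noise reduction; it also reduces the amount of data a later estimation step has to process.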
