Eleonora Tagliabue

Sim-To-Real Transfer for Visual Reinforcement Learning of Deformable Object Manipulation for Robot-Assisted Surgery

Jun 10, 2024Abstract:Automation holds the potential to assist surgeons in robotic interventions, shifting their mental work load from visuomotor control to high level decision making. Reinforcement learning has shown promising results in learning complex visuomotor policies, especially in simulation environments where many samples can be collected at low cost. A core challenge is learning policies in simulation that can be deployed in the real world, thereby overcoming the sim-to-real gap. In this work, we bridge the visual sim-to-real gap with an image-based reinforcement learning pipeline based on pixel-level domain adaptation and demonstrate its effectiveness on an image-based task in deformable object manipulation. We choose a tissue retraction task because of its importance in clinical reality of precise cancer surgery. After training in simulation on domain-translated images, our policy requires no retraining to perform tissue retraction with a 50% success rate on the real robotic system using raw RGB images. Furthermore, our sim-to-real transfer method makes no assumptions on the task itself and requires no paired images. This work introduces the first successful application of visual sim-to-real transfer for robotic manipulation of deformable objects in the surgical field, which represents a notable step towards the clinical translation of cognitive surgical robotics.

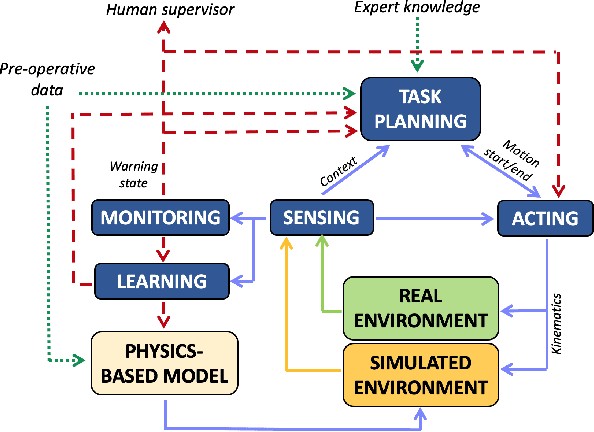

Deliberation in autonomous robotic surgery: a framework for handling anatomical uncertainty

Mar 10, 2022

Abstract:Autonomous robotic surgery requires deliberation, i.e. the ability to plan and execute a task adapting to uncertain and dynamic environments. Uncertainty in the surgical domain is mainly related to the partial pre-operative knowledge about patient-specific anatomical properties. In this paper, we introduce a logic-based framework for surgical tasks with deliberative functions of monitoring and learning. The DEliberative Framework for Robot-Assisted Surgery (DEFRAS) estimates a pre-operative patient-specific plan, and executes it while continuously measuring the applied force obtained from a biomechanical pre-operative model. Monitoring module compares this model with the actual situation reconstructed from sensors. In case of significant mismatch, the learning module is invoked to update the model, thus improving the estimate of the exerted force. DEFRAS is validated both in simulated and real environment with da Vinci Research Kit executing soft tissue retraction. Compared with state-of-the art related works, the success rate of the task is improved while minimizing the interaction with the tissue to prevent unintentional damage.

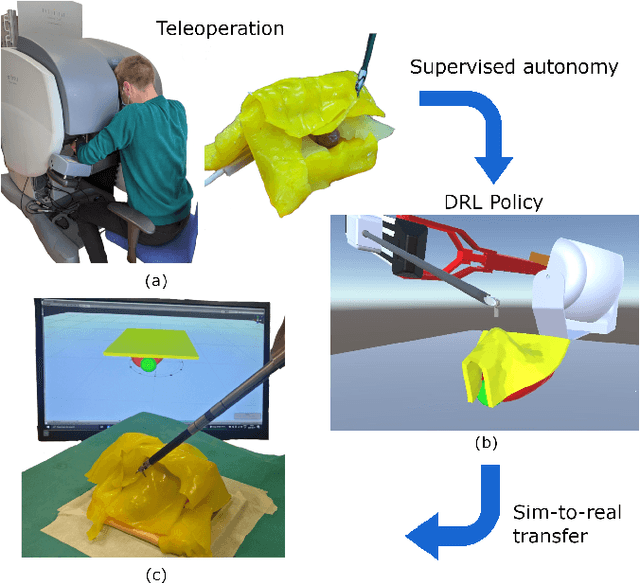

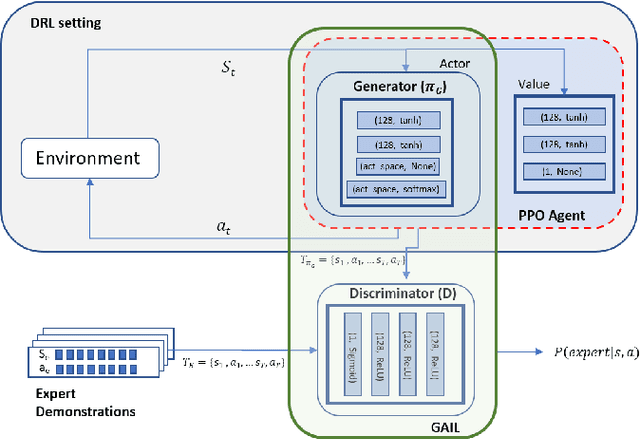

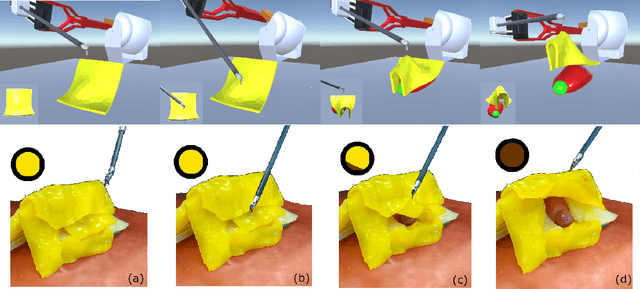

Learning from Demonstrations for Autonomous Soft-tissue Retraction

Oct 01, 2021

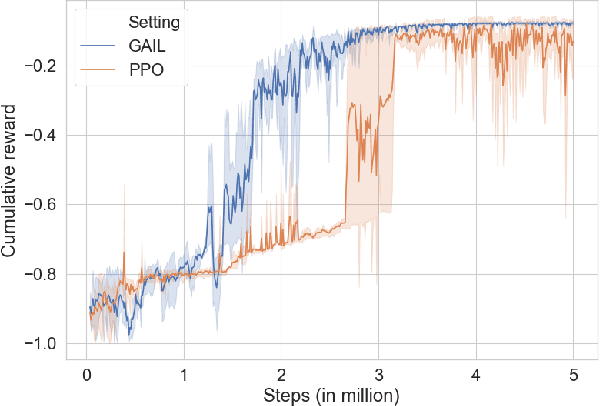

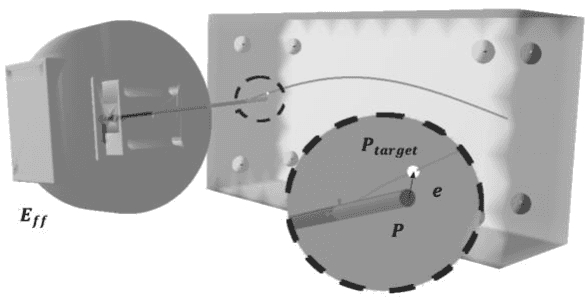

Abstract:The current research focus in Robot-Assisted Minimally Invasive Surgery (RAMIS) is directed towards increasing the level of robot autonomy, to place surgeons in a supervisory position. Although Learning from Demonstrations (LfD) approaches are among the preferred ways for an autonomous surgical system to learn expert gestures, they require a high number of demonstrations and show poor generalization to the variable conditions of the surgical environment. In this work, we propose an LfD methodology based on Generative Adversarial Imitation Learning (GAIL) that is built on a Deep Reinforcement Learning (DRL) setting. GAIL combines generative adversarial networks to learn the distribution of expert trajectories with a DRL setting to ensure generalisation of trajectories providing human-like behaviour. We consider automation of tissue retraction, a common RAMIS task that involves soft tissues manipulation to expose a region of interest. In our proposed methodology, a small set of expert trajectories can be acquired through the da Vinci Research Kit (dVRK) and used to train the proposed LfD method inside a simulated environment. Results indicate that our methodology can accomplish the tissue retraction task with human-like behaviour while being more sample-efficient than the baseline DRL method. Towards the end, we show that the learnt policies can be successfully transferred to the real robotic platform and deployed for soft tissue retraction on a synthetic phantom.

Robotic needle steering in deformable tissues with extreme learning machines

Apr 02, 2021

Abstract:Control strategies for robotic needle steering in soft tissues must account for complex interactions between the needle and the tissue to achieve accurate needle tip positioning. Recent findings show faster robotic command rate can improve the control stability in realistic scenarios. This study proposes the use of Extreme Learning Machines to provide fast commands for robotic needle steering. A synthetic dataset based on the inverse finite element simulation control framework is used to train the model. Results show the model is capable to infer commands 66% faster than the inverse simulation and reaches acceptable precision even on previously unseen trajectories.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge