Adrienne Raglin

CAMO: Causality-Guided Adversarial Multimodal Domain Generalization for Crisis Classification

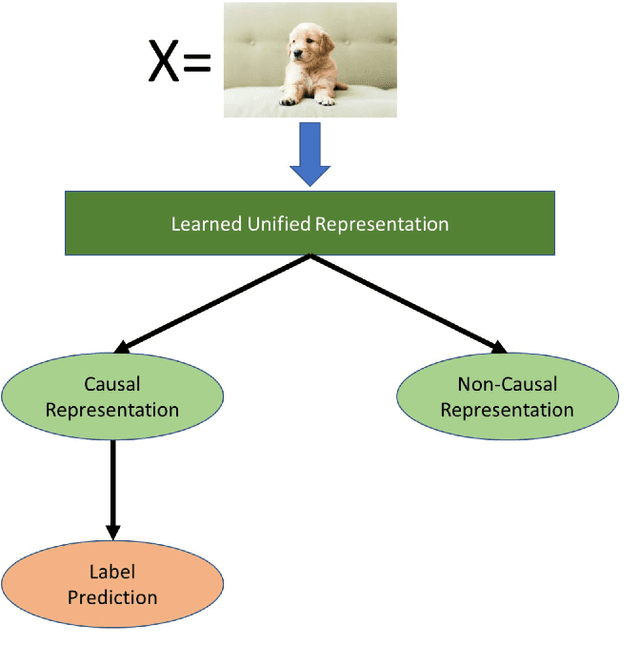

Dec 08, 2025Abstract:Crisis classification in social media aims to extract actionable disaster-related information from multimodal posts, which is a crucial task for enhancing situational awareness and facilitating timely emergency responses. However, the wide variation in crisis types makes achieving generalizable performance across unseen disasters a persistent challenge. Existing approaches primarily leverage deep learning to fuse textual and visual cues for crisis classification, achieving numerically plausible results under in-domain settings. However, they exhibit poor generalization across unseen crisis types because they 1. do not disentangle spurious and causal features, resulting in performance degradation under domain shift, and 2. fail to align heterogeneous modality representations within a shared space, which hinders the direct adaptation of established single-modality domain generalization (DG) techniques to the multimodal setting. To address these issues, we introduce a causality-guided multimodal domain generalization (MMDG) framework that combines adversarial disentanglement with unified representation learning for crisis classification. The adversarial objective encourages the model to disentangle and focus on domain-invariant causal features, leading to more generalizable classifications grounded in stable causal mechanisms. The unified representation aligns features from different modalities within a shared latent space, enabling single-modality DG strategies to be seamlessly extended to multimodal learning. Experiments on the different datasets demonstrate that our approach achieves the best performance in unseen disaster scenarios.

SERN: Simulation-Enhanced Realistic Navigation for Multi-Agent Robotic Systems in Contested Environments

Oct 22, 2024

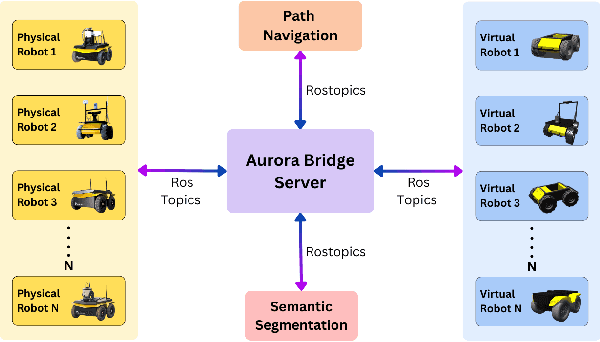

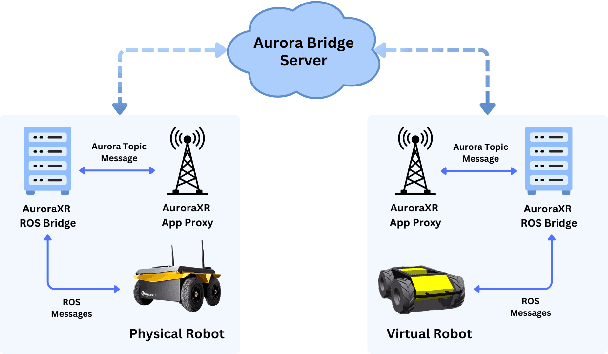

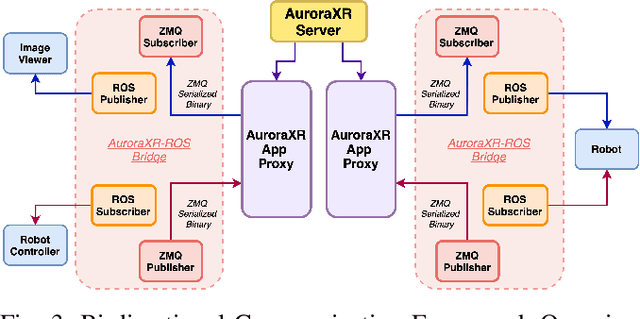

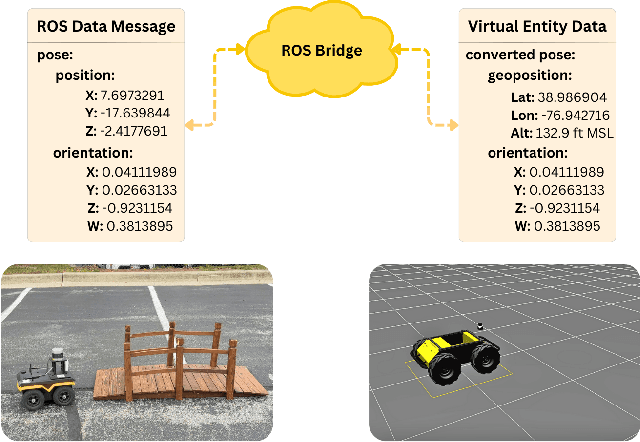

Abstract:The increasing deployment of autonomous systems in complex environments necessitates efficient communication and task completion among multiple agents. This paper presents SERN (Simulation-Enhanced Realistic Navigation), a novel framework integrating virtual and physical environments for real-time collaborative decision-making in multi-robot systems. SERN addresses key challenges in asset deployment and coordination through a bi-directional communication framework using the AuroraXR ROS Bridge. Our approach advances the SOTA through accurate real-world representation in virtual environments using Unity high-fidelity simulator; synchronization of physical and virtual robot movements; efficient ROS data distribution between remote locations; and integration of SOTA semantic segmentation for enhanced environmental perception. Our evaluations show a 15% to 24% improvement in latency and up to a 15% increase in processing efficiency compared to traditional ROS setups. Real-world and virtual simulation experiments with multiple robots demonstrate synchronization accuracy, achieving less than 5 cm positional error and under 2-degree rotational error. These results highlight SERN's potential to enhance situational awareness and multi-agent coordination in diverse, contested environments.

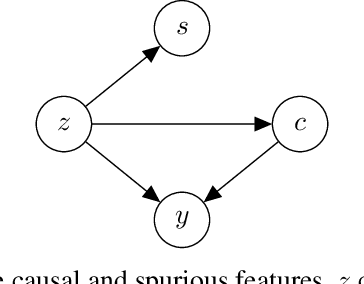

Domain Generalization -- A Causal Perspective

Sep 30, 2022

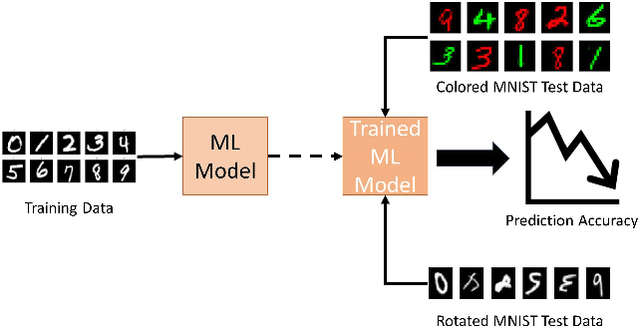

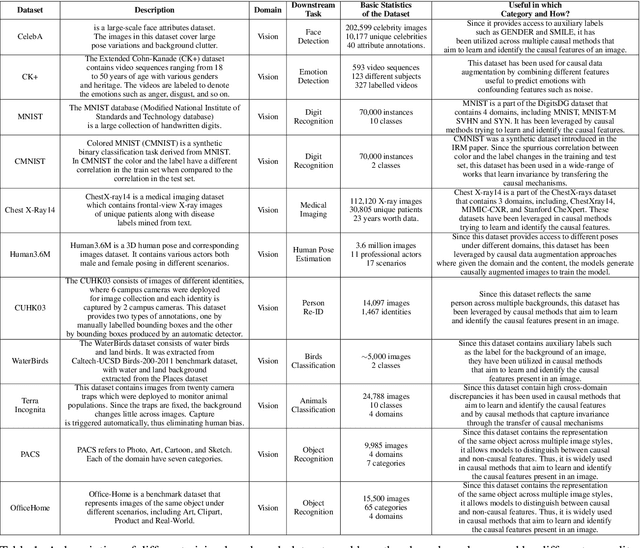

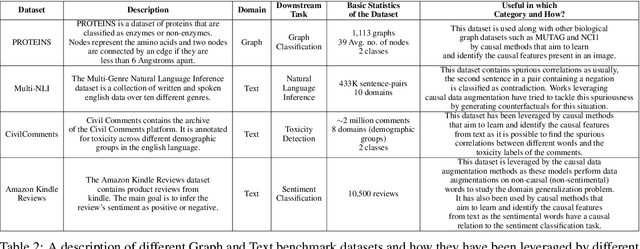

Abstract:Machine learning models have gained widespread success, from healthcare to personalized recommendations. One of the preliminary assumptions of these models is the independent and identical distribution. Therefore, the train and test data are sampled from the same observation per this assumption. However, this assumption seldom holds in the real world due to distribution shifts. Since the models rely heavily on this assumption, they exhibit poor generalization capabilities. Over the recent years, dedicated efforts have been made to improve the generalization capabilities of these models. The primary idea behind these methods is to identify stable features or mechanisms that remain invariant across the different distributions. Many generalization approaches employ causal theories to describe invariance since causality and invariance are inextricably intertwined. However, current surveys deal with the causality-aware domain generalization methods on a very high-level. Furthermore, none of the existing surveys categorize the causal domain generalization methods based on the problem and causal theories these methods leverage. To this end, we present a comprehensive survey on causal domain generalization models from the aspects of the problem and causal theories. Furthermore, this survey includes in-depth insights into publicly accessible datasets and benchmarks for domain generalization in various domains. Finally, we conclude the survey with insights and discussions on future research directions. Finally, we conclude the survey with insights and discussions on future research directions.

Image-Audio Encoding to Improve C2 Decision-Making in Multi-Domain Environment

Jun 03, 2021

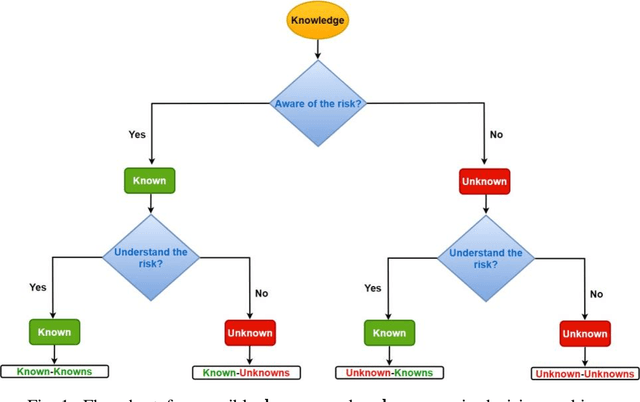

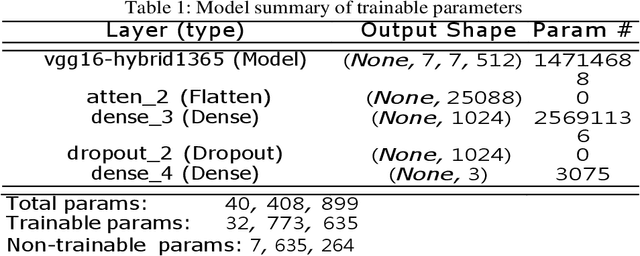

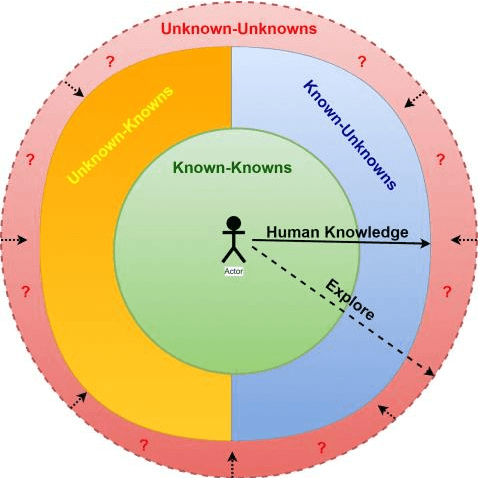

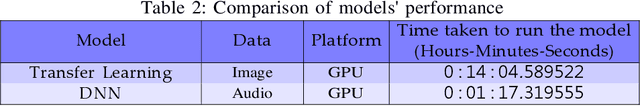

Abstract:The military is investigating methods to improve communication and agility in its multi-domain operations (MDO). Nascent popularity of Internet of Things (IoT) has gained traction in public and government domains. Its usage in MDO may revolutionize future battlefields and may enable strategic advantage. While this technology offers leverage to military capabilities, it comes with challenges where one is the uncertainty and associated risk. A key question is how can these uncertainties be addressed. Recently published studies proposed information camouflage to transform information from one data domain to another. As this is comparatively a new approach, we investigate challenges of such transformations and how these associated uncertainties can be detected and addressed, specifically unknown-unknowns to improve decision-making.

* Published in: The 25th International Command and Control Research and Technology Symposium (ICCRTS - 2020)

IoT Solutions with Multi-Sensor Fusion and Signal-Image Encoding for Secure Data Transfer and Decision Making

Jun 02, 2021

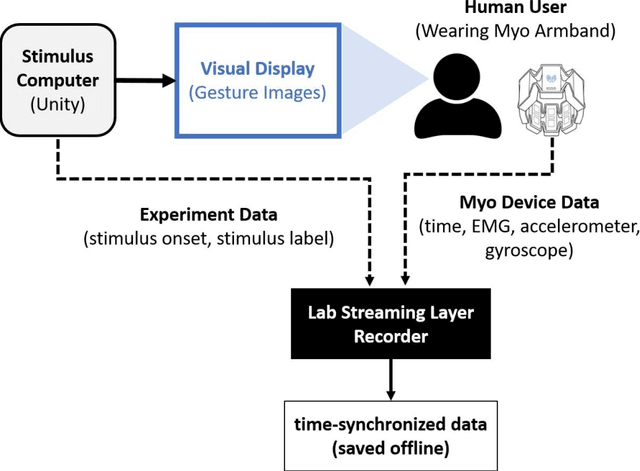

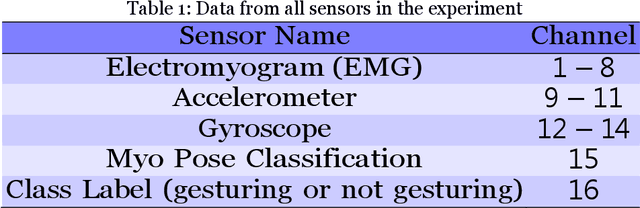

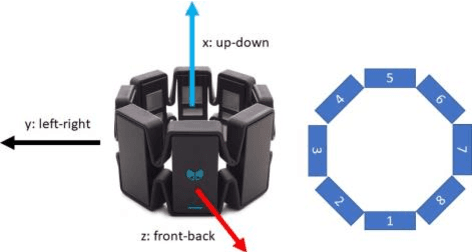

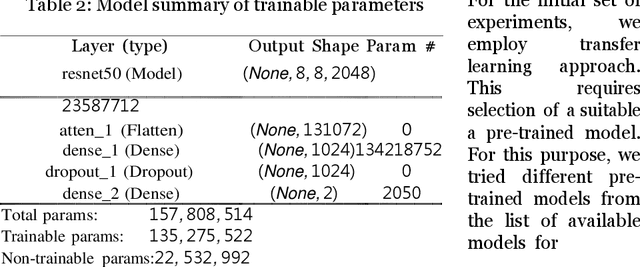

Abstract:Deployment of Internet of Things (IoT) devices and Data Fusion techniques have gained popularity in public and government domains. This usually requires capturing and consolidating data from multiple sources. As datasets do not necessarily originate from identical sensors, fused data typically results in a complex data problem. Because military is investigating how heterogeneous IoT devices can aid processes and tasks, we investigate a multi-sensor approach. Moreover, we propose a signal to image encoding approach to transform information (signal) to integrate (fuse) data from IoT wearable devices to an image which is invertible and easier to visualize supporting decision making. Furthermore, we investigate the challenge of enabling an intelligent identification and detection operation and demonstrate the feasibility of the proposed Deep Learning and Anomaly Detection models that can support future application that utilizes hand gesture data from wearable devices.

Causal Inference for Time series Analysis: Problems, Methods and Evaluation

Feb 11, 2021

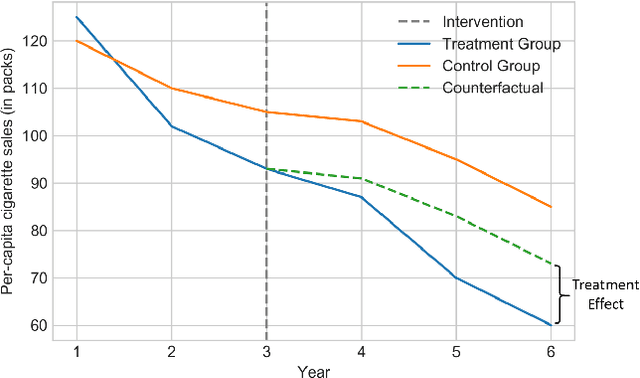

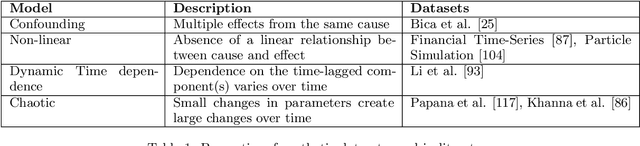

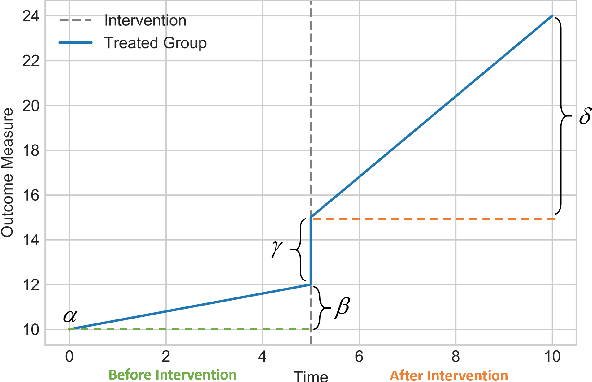

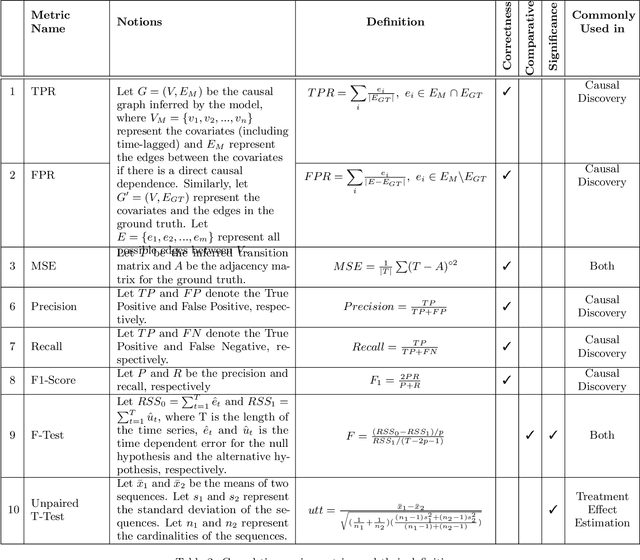

Abstract:Time series data is a collection of chronological observations which is generated by several domains such as medical and financial fields. Over the years, different tasks such as classification, forecasting, and clustering have been proposed to analyze this type of data. Time series data has been also used to study the effect of interventions over time. Moreover, in many fields of science, learning the causal structure of dynamic systems and time series data is considered an interesting task which plays an important role in scientific discoveries. Estimating the effect of an intervention and identifying the causal relations from the data can be performed via causal inference. Existing surveys on time series discuss traditional tasks such as classification and forecasting or explain the details of the approaches proposed to solve a specific task. In this paper, we focus on two causal inference tasks, i.e., treatment effect estimation and causal discovery for time series data, and provide a comprehensive review of the approaches in each task. Furthermore, we curate a list of commonly used evaluation metrics and datasets for each task and provide in-depth insight. These metrics and datasets can serve as benchmarks for research in the field.

Causal Adversarial Network for Learning Conditional and Interventional Distributions

Sep 21, 2020

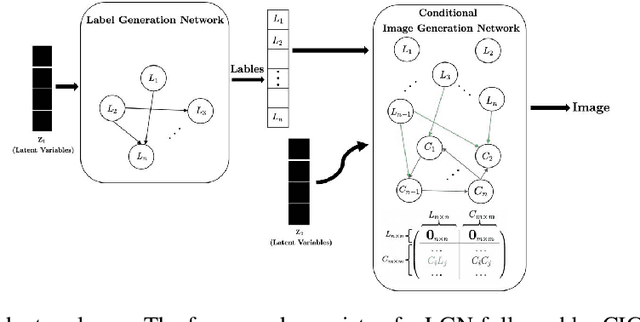

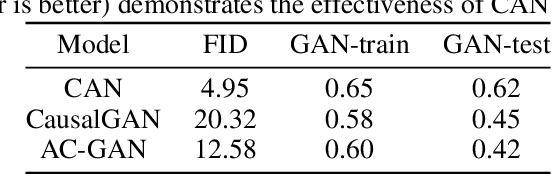

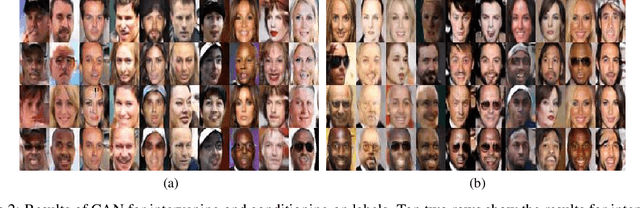

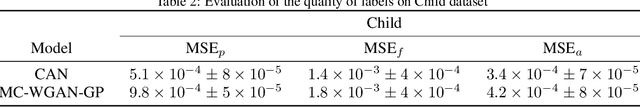

Abstract:We propose a generative Causal Adversarial Network (CAN) for learning and sampling from conditional and interventional distributions. In contrast to the existing CausalGAN which requires the causal graph to be given, our proposed framework learns the causal relations from the data and generates samples accordingly. The proposed CAN comprises a two-fold process namely Label Generation Network (LGN) and Conditional Image Generation Network (CIGN). The LGN is a GAN-based architecture which learns and samples from the causal model over labels. The sampled labels are then fed to CIGN, a conditional GAN architecture, which learns the relationships amongst labels and pixels and pixels themselves and generates samples based on them. This framework is equipped with an intervention mechanism which enables. the model to generate samples from interventional distributions. We quantitatively and qualitatively assess the performance of CAN and empirically show that our model is able to generate both interventional and conditional samples without having access to the causal graph for the application of face generation on CelebA data.

Causal Interpretability for Machine Learning -- Problems, Methods and Evaluation

Mar 19, 2020

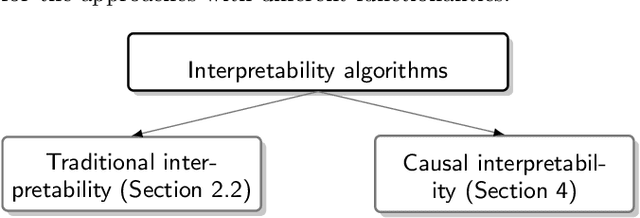

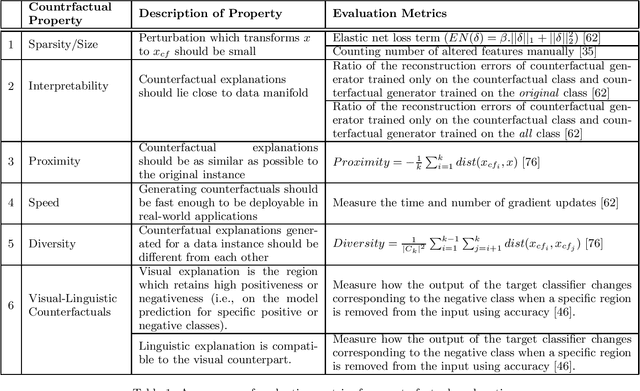

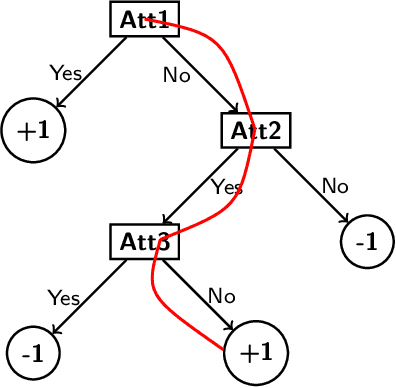

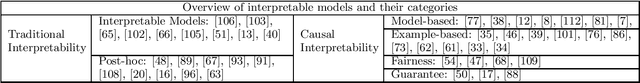

Abstract:Machine learning models have had discernible achievements in a myriad of applications. However, most of these models are black-boxes, and it is obscure how the decisions are made by them. This makes the models unreliable and untrustworthy. To provide insights into the decision making processes of these models, a variety of traditional interpretable models have been proposed. Moreover, to generate more human-friendly explanations, recent work on interpretability tries to answer questions related to causality such as "Why does this model makes such decisions?" or "Was it a specific feature that caused the decision made by the model?". In this work, models that aim to answer causal questions are referred to as causal interpretable models. The existing surveys have covered concepts and methodologies of traditional interpretability. In this work, we present a comprehensive survey on causal interpretable models from the aspects of the problems and methods. In addition, this survey provides in-depth insights into the existing evaluation metrics for measuring interpretability, which can help practitioners understand for what scenarios each evaluation metric is suitable.

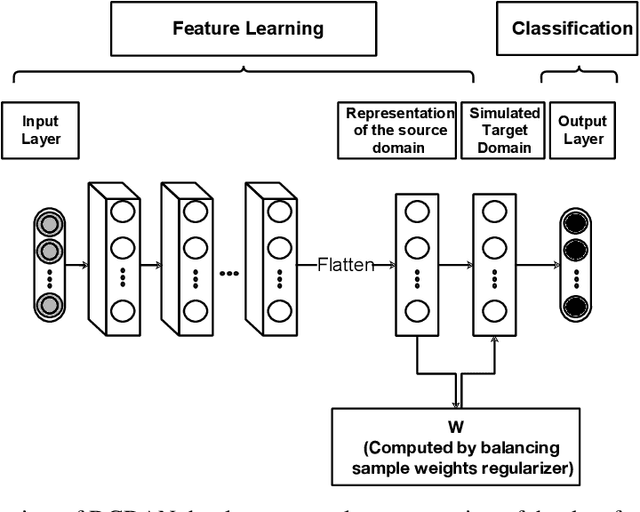

Deep causal representation learning for unsupervised domain adaptation

Oct 28, 2019

Abstract:Studies show that the representations learned by deep neural networks can be transferred to similar prediction tasks in other domains for which we do not have enough labeled data. However, as we transition to higher layers in the model, the representations become more task-specific and less generalizable. Recent research on deep domain adaptation proposed to mitigate this problem by forcing the deep model to learn more transferable feature representations across domains. This is achieved by incorporating domain adaptation methods into deep learning pipeline. The majority of existing models learn the transferable feature representations which are highly correlated with the outcome. However, correlations are not always transferable. In this paper, we propose a novel deep causal representation learning framework for unsupervised domain adaptation, in which we propose to learn domain-invariant causal representations of the input from the source domain. We simulate a virtual target domain using reweighted samples from the source domain and estimate the causal effect of features on the outcomes. The extensive comparative study demonstrates the strengths of the proposed model for unsupervised domain adaptation via causal representations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge