Zhuowen Zou

Hyperdimensional Quantum Factorization

Jun 13, 2024

Abstract:This paper presents a quantum algorithm for efficiently decoding hypervectors, a crucial process in extracting atomic elements from hypervectors - an essential task in Hyperdimensional Computing (HDC) models for interpretable learning and information retrieval. HDC employs high-dimensional vectors and efficient operators to encode and manipulate information, representing complex objects from atomic concepts. When one attempts to decode a hypervector that is the product (binding) of multiple hypervectors, the factorization becomes prohibitively costly with classical optimization-based methods and specialized recurrent networks, an inherent consequence of the binding operation. We propose HDQF, an innovative quantum computing approach, to address this challenge. By exploiting parallels between HDC and quantum computing and capitalizing on quantum algorithms' speedup capabilities, HDQF encodes potential factors as a quantum superposition using qubit states and bipolar vector representation. This yields a quadratic speedup over classical search methods and effectively mitigates Hypervector Factorization capacity issues.

Generalized Holographic Reduced Representations

May 15, 2024

Abstract:Deep learning has achieved remarkable success in recent years. Central to its success is its ability to learn representations that preserve task-relevant structure. However, massive energy, compute, and data costs are required to learn general representations. This paper explores Hyperdimensional Computing (HDC), a computationally and data-efficient brain-inspired alternative. HDC acts as a bridge between connectionist and symbolic approaches to artificial intelligence (AI), allowing explicit specification of representational structure as in symbolic approaches while retaining the flexibility of connectionist approaches. However, HDC's simplicity poses challenges for encoding complex compositional structures, especially in its binding operation. To address this, we propose Generalized Holographic Reduced Representations (GHRR), an extension of Fourier Holographic Reduced Representations (FHRR), a specific HDC implementation. GHRR introduces a flexible, non-commutative binding operation, enabling improved encoding of complex data structures while preserving HDC's desirable properties of robustness and transparency. In this work, we introduce the GHRR framework, prove its theoretical properties and its adherence to HDC properties, explore its kernel and binding characteristics, and perform empirical experiments showcasing its flexible non-commutativity, enhanced decoding accuracy for compositional structures, and improved memorization capacity compared to FHRR.

HDReason: Algorithm-Hardware Codesign for Hyperdimensional Knowledge Graph Reasoning

Mar 09, 2024

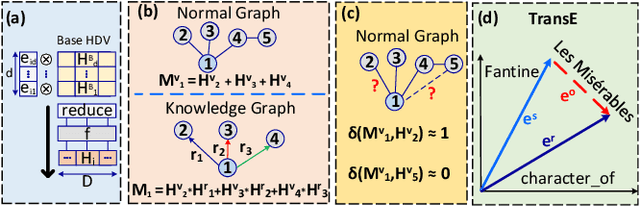

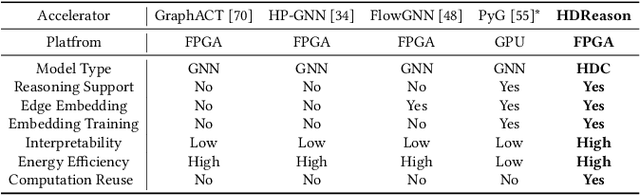

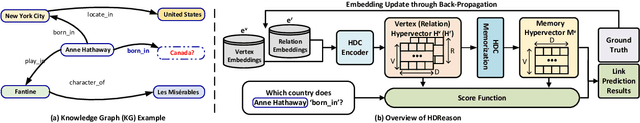

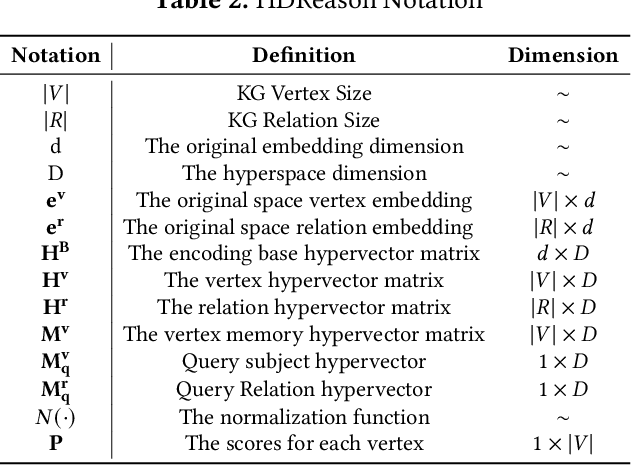

Abstract:In recent times, a plethora of hardware accelerators have been put forth for graph learning applications such as vertex classification and graph classification. However, previous works have paid little attention to Knowledge Graph Completion (KGC), a task that is well-known for its significantly higher algorithm complexity. The state-of-the-art KGC solutions based on graph convolution neural network (GCN) involve extensive vertex/relation embedding updates and complicated score functions, which are inherently cumbersome for acceleration. As a result, existing accelerator designs are no longer optimal, and a novel algorithm-hardware co-design for KG reasoning is needed. Recently, brain-inspired HyperDimensional Computing (HDC) has been introduced as a promising solution for lightweight machine learning, particularly for graph learning applications. In this paper, we leverage HDC for an intrinsically more efficient and acceleration-friendly KGC algorithm. We also co-design an acceleration framework named HDReason targeting FPGA platforms. On the algorithm level, HDReason achieves a balance between high reasoning accuracy, strong model interpretability, and less computation complexity. In terms of architecture, HDReason offers reconfigurability, high training throughput, and low energy consumption. When compared with NVIDIA RTX 4090 GPU, the proposed accelerator achieves an average 10.6x speedup and 65x energy efficiency improvement. When conducting cross-models and cross-platforms comparison, HDReason yields an average 4.2x higher performance and 3.4x better energy efficiency with similar accuracy versus the state-of-the-art FPGA-based GCN training platform.

HEAL: Brain-inspired Hyperdimensional Efficient Active Learning

Feb 17, 2024

Abstract:Drawing inspiration from the outstanding learning capability of our human brains, Hyperdimensional Computing (HDC) emerges as a novel computing paradigm, and it leverages high-dimensional vector presentation and operations for brain-like lightweight Machine Learning (ML). Practical deployments of HDC have significantly enhanced the learning efficiency compared to current deep ML methods on a broad spectrum of applications. However, boosting the data efficiency of HDC classifiers in supervised learning remains an open question. In this paper, we introduce Hyperdimensional Efficient Active Learning (HEAL), a novel Active Learning (AL) framework tailored for HDC classification. HEAL proactively annotates unlabeled data points via uncertainty and diversity-guided acquisition, leading to a more efficient dataset annotation and lowering labor costs. Unlike conventional AL methods that only support classifiers built upon deep neural networks (DNN), HEAL operates without the need for gradient or probabilistic computations. This allows it to be effortlessly integrated with any existing HDC classifier architecture. The key design of HEAL is a novel approach for uncertainty estimation in HDC classifiers through a lightweight HDC ensemble with prior hypervectors. Additionally, by exploiting hypervectors as prototypes (i.e., compact representations), we develop an extra metric for HEAL to select diverse samples within each batch for annotation. Our evaluation shows that HEAL surpasses a diverse set of baselines in AL quality and achieves notably faster acquisition than many BNN-powered or diversity-guided AL methods, recording 11 times to 40,000 times speedup in acquisition runtime per batch.

Spiking Hyperdimensional Network: Neuromorphic Models Integrated with Memory-Inspired Framework

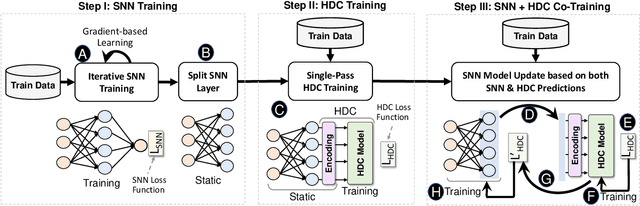

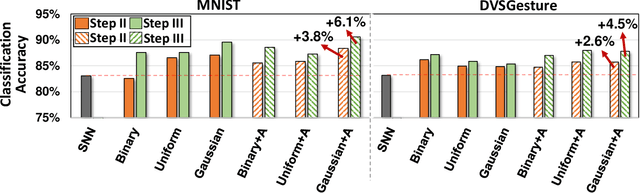

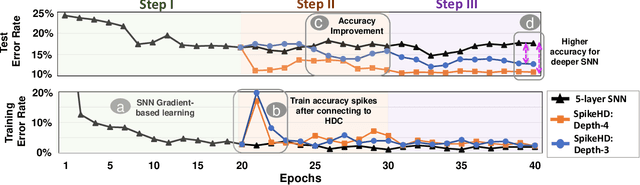

Oct 01, 2021

Abstract:Recently, brain-inspired computing models have shown great potential to outperform today's deep learning solutions in terms of robustness and energy efficiency. Particularly, Spiking Neural Networks (SNNs) and HyperDimensional Computing (HDC) have shown promising results in enabling efficient and robust cognitive learning. Despite the success, these two brain-inspired models have different strengths. While SNN mimics the physical properties of the human brain, HDC models the brain on a more abstract and functional level. Their design philosophies demonstrate complementary patterns that motivate their combination. With the help of the classical psychological model on memory, we propose SpikeHD, the first framework that fundamentally combines Spiking neural network and hyperdimensional computing. SpikeHD generates a scalable and strong cognitive learning system that better mimics brain functionality. SpikeHD exploits spiking neural networks to extract low-level features by preserving the spatial and temporal correlation of raw event-based spike data. Then, it utilizes HDC to operate over SNN output by mapping the signal into high-dimensional space, learning the abstract information, and classifying the data. Our extensive evaluation on a set of benchmark classification problems shows that SpikeHD provides the following benefit compared to SNN architecture: (1) significantly enhance learning capability by exploiting two-stage information processing, (2) enables substantial robustness to noise and failure, and (3) reduces the network size and required parameters to learn complex information.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge