Yury Polyanskiy

The Radio-Frequency Transformer for Signal Separation

Mar 10, 2026

Abstract: We study a problem of signal separation: estimating a signal of interest (SOI) contaminated by an unknown non-Gaussian background/interference. Given training data consisting of examples of the SOI and the interference, we show how to build a fully data-driven signal separator. To that end, we learn a good discrete tokenizer for the SOI and then train an end-to-end transformer with a cross-entropy loss. Training with a cross-entropy loss shows substantial improvements over the conventional mean-squared error (MSE). Our tokenizer is a modification of Google's SoundStream, which incorporates additional transformer layers and switches from VQVAE to finite-scalar quantization (FSQ). Across real and synthetic mixtures from the MIT RF Challenge dataset, our method achieves competitive performance, including a 122x reduction in bit-error rate (BER) over prior state-of-the-art techniques for separating a QPSK signal from 5G interference. The learned representation adapts to the interference type without side information and shows zero-shot generalization to unseen mixtures at inference time, underscoring its potential beyond RF. Although we instantiate our approach on radio-frequency mixtures, we expect the same architecture to apply to gravitational-wave data (e.g., LIGO strain) and other scientific sensing problems that require data-driven modeling of background and noise.
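The abstract names finite-scalar quantization (FSQ) as the tokenizer's quantizer. The following is a minimal sketch of the basic FSQ idea only, assuming an odd number of levels per latent dimension and a tanh bounding function. The function names `fsq_quantize` and `fsq_token` and the level counts are illustrative assumptions, not details taken from the paper.

```python
import math

def fsq_quantize(z, levels):
    """Bound each latent coordinate with tanh, then round to one of L integer levels.

    Assumes every entry of `levels` is odd, so the codes are the integers
    -(L-1)/2, ..., (L-1)/2 (hypothetical configuration).
    """
    codes = []
    for value, num_levels in zip(z, levels):
        half = (num_levels - 1) / 2
        codes.append(int(round(math.tanh(value) * half)))
    return codes

def fsq_token(codes, levels):
    """Map the per-dimension codes to a single token index (mixed-radix encoding)."""
    index = 0
    for code, num_levels in zip(codes, levels):
        digit = code + (num_levels - 1) // 2  # shift code into 0..L-1
        index = index * num_levels + digit
    return index

levels = [5, 5, 5]                      # implicit codebook of 5 * 5 * 5 = 125 tokens
codes = fsq_quantize([0.0, 10.0, -10.0], levels)
token = fsq_token(codes, levels)
```

Because the codebook is an implicit product grid, there are no codebook vectors to learn; the resulting token index is what a cross-entropy loss would be computed over.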

WaterSIC: information-theoretically (near) optimal linear layer quantization

Mar 05, 2026

Abstract: This paper considers the problem of converting a given dense linear layer to low precision. The tradeoff between compressed length and output discrepancy is analyzed information theoretically (IT). It is shown that the popular GPTQ algorithm may have an arbitrarily large gap to the IT limit. To alleviate this problem, a novel algorithm, termed "WaterSIC", is proposed and is shown to be within a rate gap of 0.255 bits to the IT limit, uniformly over all possible covariance matrices of input activations. The key innovation of WaterSIC is to allocate different quantization rates to different columns (in-features) of the weight matrix, mimicking the classical IT solution known as "waterfilling". Applying WaterSIC to the Llama and Qwen families of LLMs establishes new state-of-the-art performance for all quantization rates from 1 to 4 bits.
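For readers unfamiliar with the classical solution the abstract refers to, here is a minimal sketch of textbook reverse waterfilling for independent Gaussian components. It is not the WaterSIC algorithm; the function name `reverse_waterfill` and the example variances are illustrative assumptions.

```python
import math

def reverse_waterfill(variances, total_rate_bits, iters=200):
    """Textbook reverse waterfilling for independent Gaussian components.

    Component i with variance v_i gets rate max(0, 0.5 * log2(v_i / theta)),
    where the water level theta is chosen so the rates sum to the budget.
    """
    def rates(theta):
        return [max(0.0, 0.5 * math.log2(v / theta)) for v in variances]

    lo, hi = 1e-300, max(variances)
    for _ in range(iters):
        mid = math.sqrt(lo * hi)          # bisection on a logarithmic scale
        if sum(rates(mid)) > total_rate_bits:
            lo = mid                      # too much rate: raise the water level
        else:
            hi = mid                      # within budget: try a lower level
    return hi, rates(hi)

theta, per_component = reverse_waterfill([4.0, 1.0, 0.25], total_rate_bits=2.0)
```

In this example the water level settles at 0.5, so the component with variance 0.25 lies below the water level and receives zero rate, while the other two receive 1.5 and 0.5 bits. Unequal allocation of this kind is the behavior the abstract says WaterSIC mimics across columns.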

Universal priors: solving empirical Bayes via Bayesian inference and pretraining

Feb 16, 2026

Abstract: We theoretically justify the recent empirical finding of [Teh et al., 2025] that a transformer pretrained on synthetically generated data achieves strong performance on empirical Bayes (EB) problems. We take an indirect approach to this question: rather than analyzing the model architecture or training dynamics, we ask why a pretrained Bayes estimator, trained under a prespecified training distribution, can adapt to arbitrary test distributions. Focusing on Poisson EB problems, we identify the existence of universal priors such that training under these priors yields a near-optimal regret bound of $\widetilde{O}(\frac{1}{n})$ uniformly over all test distributions. Our analysis leverages the classical phenomenon of posterior contraction in Bayesian statistics, showing that the pretrained transformer adapts to unknown test distributions precisely through posterior contraction. This perspective also explains the phenomenon of length generalization, in which the test sequence length exceeds the training length, as the model performs Bayesian inference using a generalized posterior.

Price of universality in vector quantization is at most 0.11 bit

Feb 05, 2026

Abstract: Fast computation of a matrix product $W^\top X$ is a workhorse of modern LLMs. To make their deployment more efficient, a popular approach is to use a low-precision approximation $\widehat W$ in place of the true $W$ ("weight-only quantization"). Information theory demonstrates that an optimal algorithm for reducing the precision of $W$ depends on the (second-order) statistics of $X$ and requires a careful alignment of the vector quantization codebook with the PCA directions of $X$ (a process known as "waterfilling allocation"). Dependence of the codebook on the statistics of $X$, however, is highly impractical. This paper proves that there exists a universal codebook that is simultaneously near-optimal for all possible statistics of $X$, in the sense of being at least as good as an $X$-adapted waterfilling codebook with rate reduced by 0.11 bit per dimension. Such a universal codebook would be an ideal candidate for the low-precision storage format, a topic of active modern research, but alas the existence proof is non-constructive. Equivalently, our result shows the existence of a net in $\mathbb{R}^n$ that is a nearly optimal covering of a sphere simultaneously with respect to all Hilbert norms.

YuriiFormer: A Suite of Nesterov-Accelerated Transformers

Jan 30, 2026

Abstract: We propose a variational framework that interprets transformer layers as iterations of an optimization algorithm acting on token embeddings. In this view, self-attention implements a gradient step of an interaction energy, while MLP layers correspond to gradient updates of a potential energy. Standard GPT-style transformers emerge as vanilla gradient descent on the resulting composite objective, implemented via Lie-Trotter splitting between these two energy functionals. This perspective enables principled architectural design using classical optimization ideas. As a proof of concept, we introduce a Nesterov-style accelerated transformer that preserves the same attention and MLP oracles. The resulting architecture consistently outperforms a nanoGPT baseline on TinyStories and OpenWebText, demonstrating that optimization-theoretic insights can translate into practical gains.
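The contrast between plain splitting and a Nesterov-style update can be illustrated on a toy problem. The sketch below is not the architecture from the paper, whose exact update rule is not given in the abstract. It assumes two scalar quadratic energies as stand-ins for the attention and MLP oracles and a standard look-ahead momentum update; all names and constants are hypothetical.

```python
def grad_interaction(x, a=1.0):
    """Toy stand-in for the attention oracle: gradient of (a / 2) * x**2."""
    return a * x

def grad_potential(x, b=0.5):
    """Toy stand-in for the MLP oracle: gradient of (b / 2) * x**2."""
    return b * x

def gd_layers(x, n_layers, step=0.1):
    """Plain splitting: each layer takes one gradient step per oracle, in sequence."""
    for _ in range(n_layers):
        x = x - step * grad_interaction(x)
        x = x - step * grad_potential(x)
    return x

def nesterov_layers(x, n_layers, step=0.1, momentum=0.5):
    """Same two oracles, each queried at a look-ahead point, with a shared velocity."""
    v = 0.0
    for _ in range(n_layers):
        for oracle in (grad_interaction, grad_potential):
            lookahead = x + momentum * v
            v = momentum * v - step * oracle(lookahead)
            x = x + v
    return x

x_gd = gd_layers(1.0, n_layers=30)
x_nes = nesterov_layers(1.0, n_layers=30)
```

With these constants, both variants drive the toy state toward the minimizer at zero, and the momentum variant ends closer to it after the same number of layers and the same oracle calls.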

High-Rate Quantized Matrix Multiplication: Theory and Practice

Jan 23, 2026

Abstract: This work investigates the problem of quantized matrix multiplication (MatMul), which has become crucial for the efficient deployment of large language models (LLMs). We consider two settings: 1) generic MatMul, where both matrices must be quantized (weight+activation quantization); and 2) weight-only quantization, where the second matrix is only known through the covariance matrix $\Sigma_X$ of its columns. For each setting, we first review the fundamental information-theoretic tradeoff between quantization rate and distortion (high-rate theory), and then analyze the performance of several popular quantization schemes, comparing them to these fundamental limits. Specifically, we discuss the rate loss (compared to information-theoretic optima) of absmax INT and floating-point (FP) quantization, for which we also derive remarkably accurate heuristic approximations. Weight-only quantization is related to the problem of weighted mean squared error (WMSE) source coding, whose classical (reverse) waterfilling solution dictates how one should distribute rate between coordinates of the vector. We show how waterfilling can be used to improve practical LLM quantization algorithms (GPTQ), which at present allocate rate equally. This new scheme (termed "WaterSIC") only uses scalar INT quantizers, but its high-rate performance is basis free (it depends only on the determinant of $\Sigma_X$ and, thus, unlike existing schemes, is immune to applying random rotations) and is within a multiplicative factor of $\frac{2\pi e}{12}$ (or 0.25 bit/entry) of the information-theoretic distortion limit (!). GPTQ's performance is affected by the choice of basis, but for a random rotation and actual $\Sigma_X$ from Llama-3-8B we find GPTQ to be within 0.1 bit (depending on the layer type) of WaterSIC, suggesting that GPTQ with random rotation is also near optimal (for high-rate quantization).
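The link between the multiplicative distortion factor and the quoted rate gap can be checked directly. Assuming the usual high-rate scaling in which distortion is proportional to $2^{-2R}$ at rate $R$ bits per entry, a distortion factor $c$ corresponds to a rate gap $\Delta R = \frac{1}{2}\log_2 c$. With $c = \frac{2\pi e}{12}$:

$$\Delta R = \frac{1}{2}\log_2\frac{2\pi e}{12} \approx \frac{1}{2}\log_2(1.4233) \approx 0.2546 \text{ bit/entry},$$

which rounds to the 0.25 bit/entry stated in the abstract.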

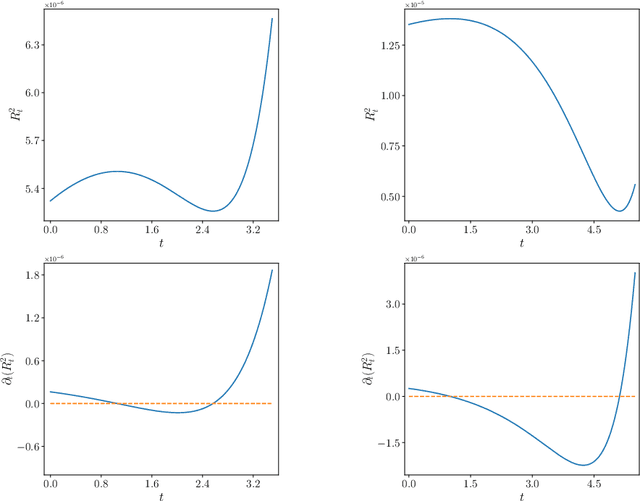

Synchronization of mean-field models on the circle

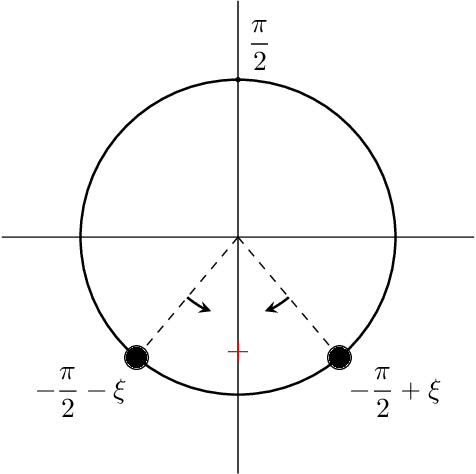

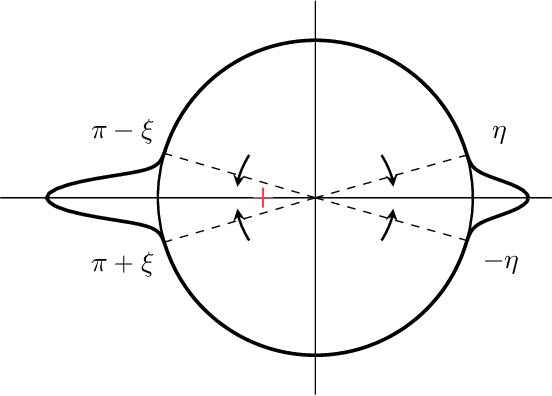

Jul 30, 2025

Abstract: This paper considers a mean-field model of $n$ interacting particles whose state space is the unit circle, a generalization of the classical Kuramoto model. Global synchronization is said to occur if, after starting from almost any initial state, all particles coalesce to a common point on the circle. We propose a general synchronization criterion in terms of the $L_1$-norm of the third derivative of the particle interaction function. As an application, we resolve a conjecture for the so-called self-attention dynamics (a stylized model of transformers) by showing synchronization for all $\beta \ge -0.16$, which significantly extends the previous bound of $0\le \beta \le 1$ from Criscitiello, Rebjock, McRae, and Boumal (2024). We also show that global synchronization does not occur when $\beta < -2/3$.
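A small simulation can make the notion of synchronization concrete. The sketch below assumes the self-attention dynamics take the angular form $\dot\theta_i = \frac{1}{n}\sum_j e^{\beta\cos(\theta_j-\theta_i)}\sin(\theta_j-\theta_i)$; that form is not stated in the abstract. It integrates the system with a plain Euler scheme, and the function names, step size, and initial angles are all illustrative choices.

```python
import math

def attention_drift(angles, beta):
    """Drift of each particle under the assumed self-attention interaction on the circle."""
    n = len(angles)
    drift = []
    for ti in angles:
        total = 0.0
        for tj in angles:
            d = tj - ti
            total += math.exp(beta * math.cos(d)) * math.sin(d)
        drift.append(total / n)
    return drift

def simulate(angles, beta, dt=0.05, steps=400):
    """Explicit Euler integration of the particle system."""
    angles = list(angles)
    for _ in range(steps):
        drift = attention_drift(angles, beta)
        angles = [t + dt * d for t, d in zip(angles, drift)]
    return angles

start = [0.0, 0.5, 1.0, 1.5, 2.0]        # all particles within a half-circle
end = simulate(start, beta=1.0)
spread = max(end) - min(end)             # angular spread after integration
```

Starting inside a half-circle is the easy case, in which the particles contract to a common point. The abstract's result concerns the much harder statement that this happens from almost any initial state.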

Quantitative Clustering in Mean-Field Transformer Models

Apr 20, 2025

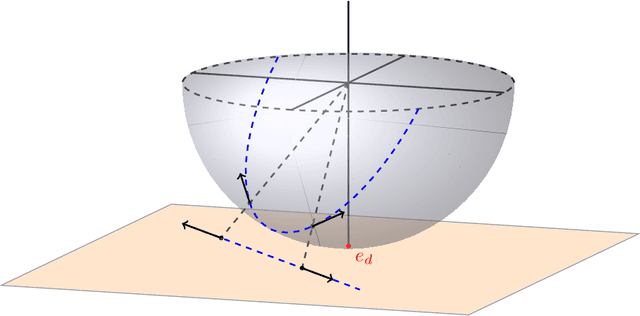

Abstract: The evolution of tokens through deep transformer models can be modeled as an interacting particle system that has been shown to exhibit an asymptotic clustering behavior akin to the synchronization phenomenon in Kuramoto models. In this work, we investigate the long-time clustering of mean-field transformer models. More precisely, under some assumptions on the parameters of transformer models, we establish exponential rates of contraction to a Dirac point mass: any suitably regular mean-field initialization synchronizes exponentially fast, with quantitative rates.

Nonparametric MLE for Gaussian Location Mixtures: Certified Computation and Generic Behavior

Mar 26, 2025

Abstract: We study the nonparametric maximum likelihood estimator $\widehat{\pi}$ for Gaussian location mixtures in one dimension. It has been known since (Lindsay, 1983) that given an $n$-point dataset, this estimator always returns a mixture with at most $n$ components, and more recently (Wu-Polyanskiy, 2020) a sharp $O(\log n)$ bound was given for subgaussian data. In this work we study computational aspects of $\widehat{\pi}$. We provide an algorithm which, for small enough $\varepsilon>0$, computes an $\varepsilon$-approximation of $\widehat\pi$ in Wasserstein distance in time $K+Cnk^2\log\log(1/\varepsilon)$. Here $K$ is data-dependent but independent of $\varepsilon$, while $C$ is an absolute constant and $k=|\mathrm{supp}(\widehat{\pi})|\leq n$ is the number of atoms in $\widehat\pi$. We also certifiably compute the exact value of $|\mathrm{supp}(\widehat\pi)|$ in finite time. These guarantees hold almost surely whenever the dataset $(x_1,\dots,x_n)\in [-cn^{1/4},cn^{1/4}]$ consists of independent points from a probability distribution with a density (relative to Lebesgue measure). We also show that the distribution of $\widehat\pi$ conditioned to be $k$-atomic admits a density on the associated $2k-1$ dimensional parameter space for all $k\leq \sqrt{n}/3$, and we establish almost sure locally linear convergence of the EM algorithm. One key tool is a classical Fourier analytic estimate for non-degenerate curves.

Residual connections provably mitigate oversmoothing in graph neural networks

Jan 04, 2025

Abstract: Graph neural networks (GNNs) have achieved remarkable empirical success in processing and representing graph-structured data across various domains. However, a significant challenge known as "oversmoothing" persists, where vertex features become nearly indistinguishable in deep GNNs, severely restricting their expressive power and practical utility. In this work, we analyze the asymptotic oversmoothing rates of deep GNNs with and without residual connections by deriving explicit convergence rates for a normalized vertex similarity measure. Our analytical framework is grounded in the multiplicative ergodic theorem. Furthermore, we demonstrate that adding residual connections effectively mitigates or prevents oversmoothing across several broad families of parameter distributions. The theoretical findings are strongly supported by numerical experiments.
