Yunyi Zhu

MIT CSAIL, Cambridge, MA, USA

TactStyle: Generating Tactile Textures with Generative AI for Digital Fabrication

Mar 03, 2025

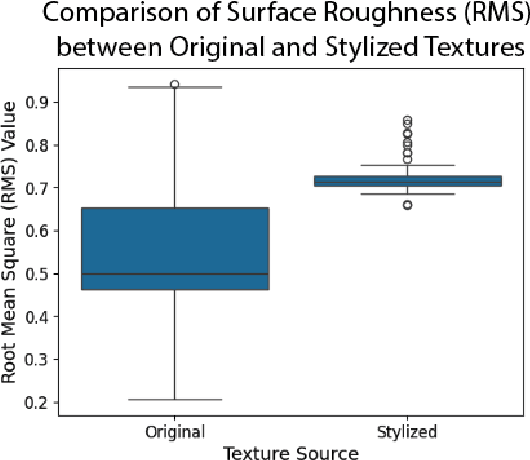

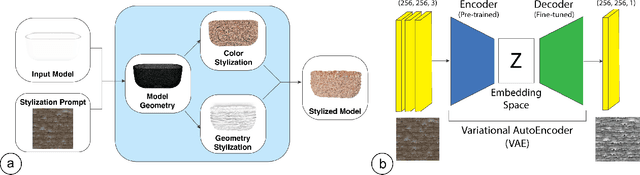

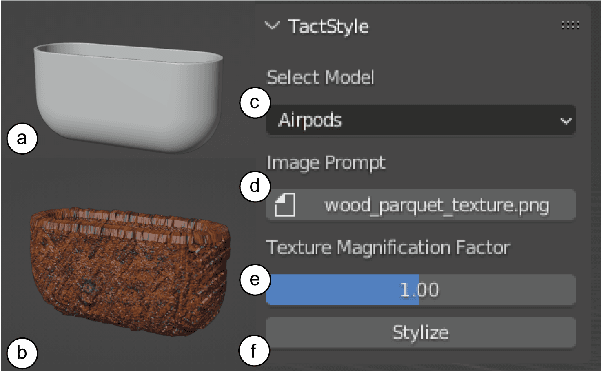

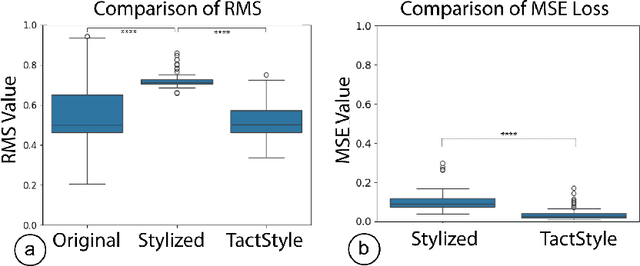

Abstract:Recent work in Generative AI enables the stylization of 3D models based on image prompts. However, these methods do not incorporate tactile information, leading to designs that lack the expected tactile properties. We present TactStyle, a system that allows creators to stylize 3D models with images while incorporating the expected tactile properties. TactStyle accomplishes this using a modified image-generation model fine-tuned to generate heightfields for given surface textures. By optimizing 3D model surfaces to embody a generated texture, TactStyle creates models that match the desired style and replicate the tactile experience. We utilize a large-scale dataset of textures to train our texture generation model. In a psychophysical experiment, we evaluate the tactile qualities of a set of 3D-printed original textures and TactStyle's generated textures. Our results show that TactStyle successfully generates a wide range of tactile features from a single image input, enabling a novel approach to haptic design.

Generative AI in Color-Changing Systems: Re-Programmable 3D Object Textures with Material and Design Constraints

Apr 25, 2024Abstract:Advances in Generative AI tools have allowed designers to manipulate existing 3D models using text or image-based prompts, enabling creators to explore different design goals. Photochromic color-changing systems, on the other hand, allow for the reprogramming of surface texture of 3D models, enabling easy customization of physical objects and opening up the possibility of using object surfaces for data display. However, existing photochromic systems require the user to manually design the desired texture, inspect the simulation of the pattern on the object, and verify the efficacy of the generated pattern. These manual design, inspection, and verification steps prevent the user from efficiently exploring the design space of possible patterns. Thus, by designing an automated workflow desired for an end-to-end texture application process, we can allow rapid iteration on different practicable patterns. In this workshop paper, we discuss the possibilities of extending generative AI systems, with material and design constraints for reprogrammable surfaces with photochromic materials. By constraining generative AI systems to colors and materials possible to be physically realized with photochromic dyes, we can create tools that would allow users to explore different viable patterns, with text and image-based prompts. We identify two focus areas in this topic: photochromic material constraints and design constraints for data-encoded textures. We highlight the current limitations of using generative AI tools to create viable textures using photochromic material. Finally, we present possible approaches to augment generative AI methods to take into account the photochromic material constraints, allowing for the creation of viable photochromic textures rapidly and easily.

Novel View Synthesis for High-fidelity Headshot Scenes

May 31, 2022

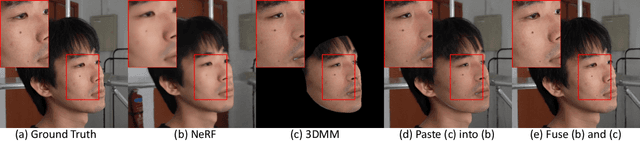

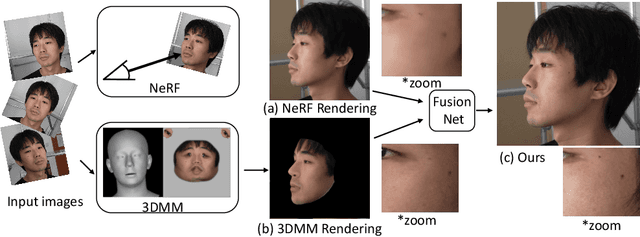

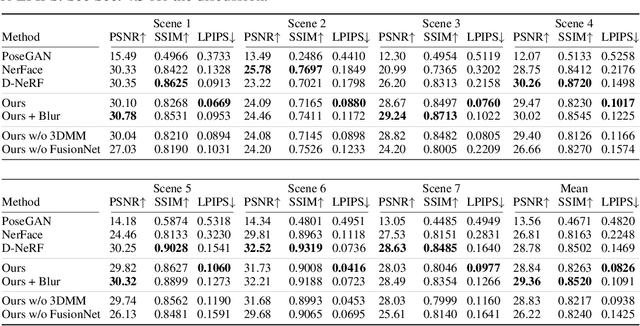

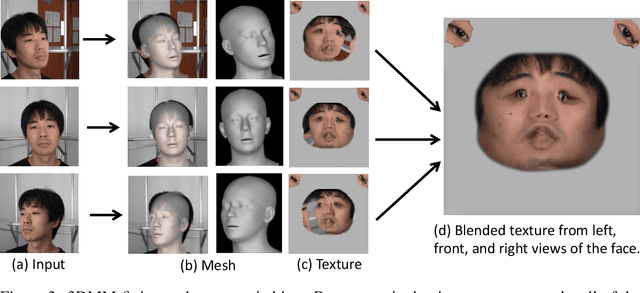

Abstract:Rendering scenes with a high-quality human face from arbitrary viewpoints is a practical and useful technique for many real-world applications. Recently, Neural Radiance Fields (NeRF), a rendering technique that uses neural networks to approximate classical ray tracing, have been considered as one of the promising approaches for synthesizing novel views from a sparse set of images. We find that NeRF can render new views while maintaining geometric consistency, but it does not properly maintain skin details, such as moles and pores. These details are important particularly for faces because when we look at an image of a face, we are much more sensitive to details than when we look at other objects. On the other hand, 3D Morpable Models (3DMMs) based on traditional meshes and textures can perform well in terms of skin detail despite that it has less precise geometry and cannot cover the head and the entire scene with background. Based on these observations, we propose a method to use both NeRF and 3DMM to synthesize a high-fidelity novel view of a scene with a face. Our method learns a Generative Adversarial Network (GAN) to mix a NeRF-synthesized image and a 3DMM-rendered image and produces a photorealistic scene with a face preserving the skin details. Experiments with various real-world scenes demonstrate the effectiveness of our approach. The code will be available on https://github.com/showlab/headshot .

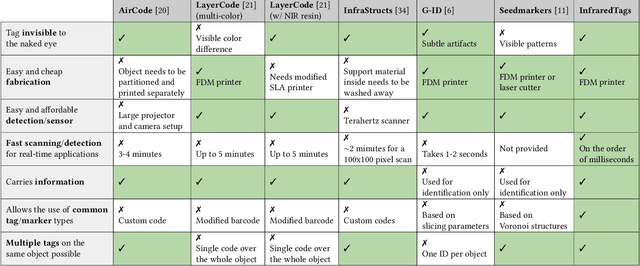

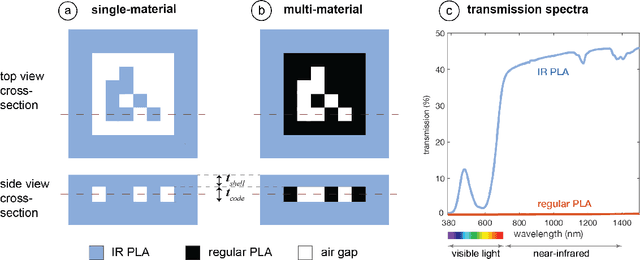

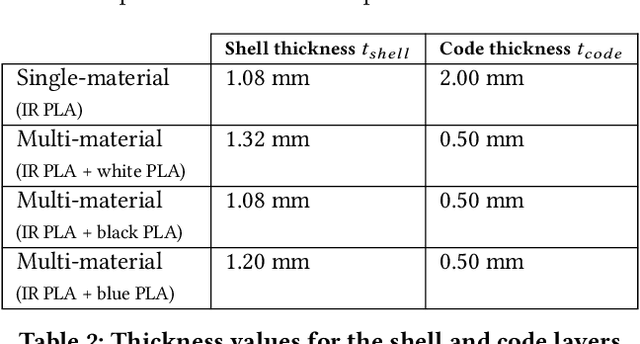

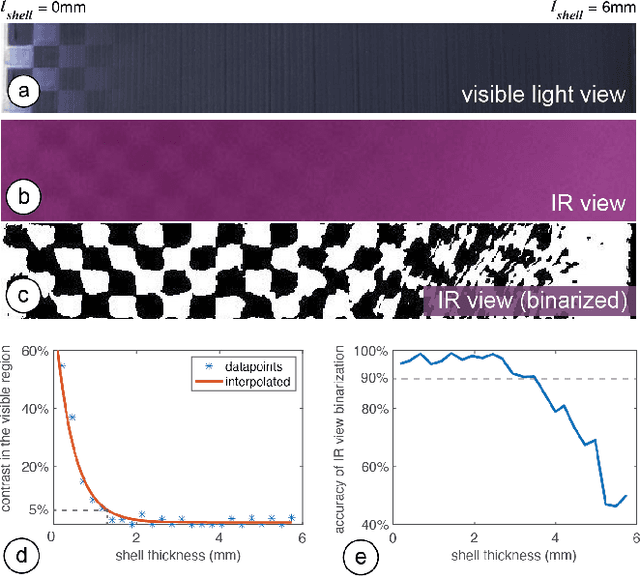

InfraredTags: Embedding Invisible AR Markers and Barcodes Using Low-Cost, Infrared-Based 3D Printing and Imaging Tools

Feb 12, 2022

Abstract:Existing approaches for embedding unobtrusive tags inside 3D objects require either complex fabrication or high-cost imaging equipment. We present InfraredTags, which are 2D markers and barcodes imperceptible to the naked eye that can be 3D printed as part of objects, and detected rapidly by low-cost near-infrared cameras. We achieve this by printing objects from an infrared-transmitting filament, which infrared cameras can see through, and by having air gaps inside for the tag's bits, which appear at a different intensity in the infrared image. We built a user interface that facilitates the integration of common tags (QR codes, ArUco markers) with the object geometry to make them 3D printable as InfraredTags. We also developed a low-cost infrared imaging module that augments existing mobile devices and decodes tags using our image processing pipeline. Our evaluation shows that the tags can be detected with little near-infrared illumination (0.2lux) and from distances as far as 250cm. We demonstrate how our method enables various applications, such as object tracking and embedding metadata for augmented reality and tangible interactions.

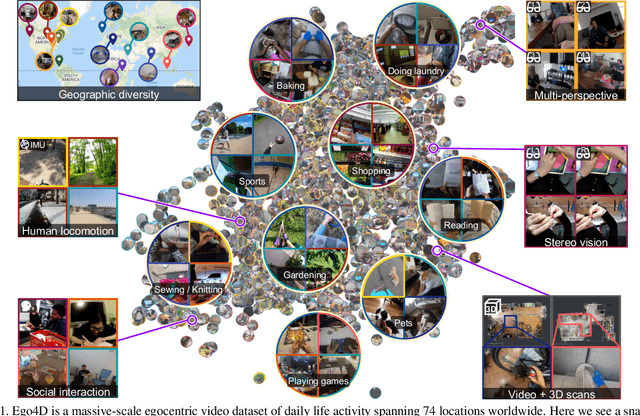

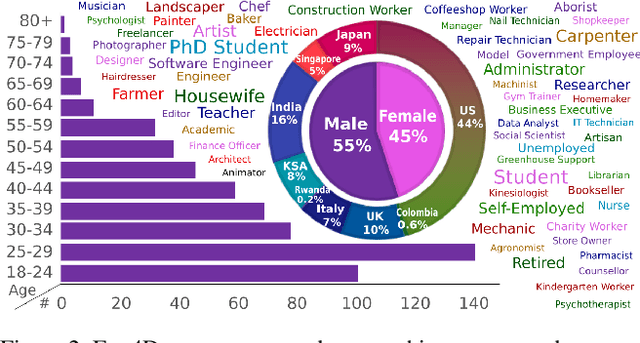

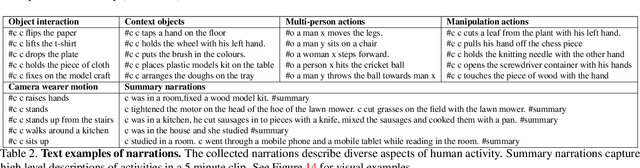

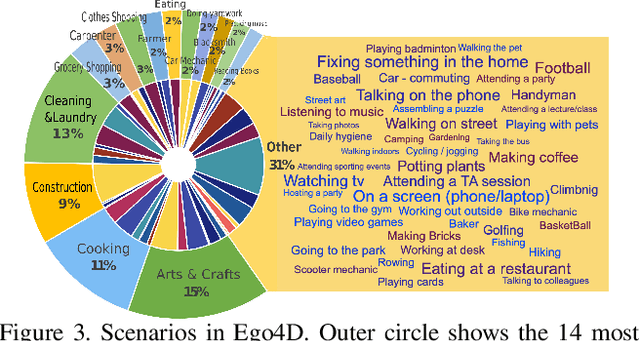

Ego4D: Around the World in 3,000 Hours of Egocentric Video

Oct 13, 2021

Abstract:We introduce Ego4D, a massive-scale egocentric video dataset and benchmark suite. It offers 3,025 hours of daily-life activity video spanning hundreds of scenarios (household, outdoor, workplace, leisure, etc.) captured by 855 unique camera wearers from 74 worldwide locations and 9 different countries. The approach to collection is designed to uphold rigorous privacy and ethics standards with consenting participants and robust de-identification procedures where relevant. Ego4D dramatically expands the volume of diverse egocentric video footage publicly available to the research community. Portions of the video are accompanied by audio, 3D meshes of the environment, eye gaze, stereo, and/or synchronized videos from multiple egocentric cameras at the same event. Furthermore, we present a host of new benchmark challenges centered around understanding the first-person visual experience in the past (querying an episodic memory), present (analyzing hand-object manipulation, audio-visual conversation, and social interactions), and future (forecasting activities). By publicly sharing this massive annotated dataset and benchmark suite, we aim to push the frontier of first-person perception. Project page: https://ego4d-data.org/

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge