Xurong Li

Subwavelength Imaging using a Solid-Immersion Diffractive Optical Processor

Jan 17, 2024

Abstract: Phase imaging is widely used in biomedical imaging, sensing, and material characterization, among other fields. However, direct imaging of phase objects with subwavelength resolution remains a challenge. Here, we demonstrate subwavelength imaging of phase and amplitude objects based on all-optical diffractive encoding and decoding. To resolve subwavelength features of an object, the diffractive imager uses a thin, high-index solid-immersion layer to transmit high-frequency information of the object to a spatially optimized diffractive encoder, which converts/encodes the high-frequency information of the input into low-frequency spatial modes for transmission through air. The subsequent diffractive decoder layers (in air) are jointly designed with the encoder using deep-learning-based optimization and communicate with the encoder layer to create magnified images of input objects at the output, revealing subwavelength features that would otherwise be washed away by the diffraction limit. We demonstrate that this all-optical collaboration between a diffractive solid-immersion encoder and the subsequent decoder layers in air can resolve subwavelength phase and amplitude features of input objects in a highly compact design. For an experimental proof of concept, we used terahertz radiation and developed a fabrication method for creating monolithic multi-layer diffractive processors. With these monolithically fabricated diffractive encoder-decoder pairs, we demonstrated phase-to-intensity transformations and all-optically reconstructed subwavelength phase features of input objects by directly transforming them into magnified intensity features at the output. With its compact and cost-effective design, this solid-immersion-based diffractive imager can find wide-ranging applications in bioimaging, endoscopy, sensing, and materials characterization.
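The role of the high-index layer can be illustrated with the standard propagation condition: in a medium of refractive index n, a transverse spatial frequency f (cycles per unit length) propagates only if f < n/λ; above that it is evanescent and decays before reaching a distant sensor. The sketch below is a simplified illustration of that condition, not the authors' design code. It does not model the diffractive encoder that remaps these frequencies into low-frequency modes for air. The wavelength of 0.75 mm (about 0.4 THz) and index of 1.7 are assumed, illustrative values, not parameters taken from the paper.

```python
def max_propagating_spatial_freq(wavelength: float, n: float = 1.0) -> float:
    """Largest transverse spatial frequency (cycles per unit length) that can
    propagate in a medium of refractive index n; higher ones are evanescent."""
    return n / wavelength


def propagates(period: float, wavelength: float, n: float = 1.0) -> bool:
    """True if a periodic feature with the given period (same length unit as
    wavelength) diffracts into a propagating first order at normal incidence,
    i.e. sin(theta) = wavelength / (n * period) < 1."""
    return 1.0 / period < max_propagating_spatial_freq(wavelength, n)


# Illustrative (assumed) numbers: a 0.6 mm feature period at a 0.75 mm wavelength.
wavelength_mm = 0.75
period_mm = 0.6
in_air = propagates(period_mm, wavelength_mm, n=1.0)      # subwavelength: evanescent in air
in_solid = propagates(period_mm, wavelength_mm, n=1.7)    # assumed high-index layer
print(f"propagates in air: {in_air}, in n=1.7 layer: {in_solid}")
```

With these numbers, the 0.6 mm feature is lost in air but still carried by the assumed n = 1.7 layer, which is the kind of high-frequency information the solid-immersion encoder must then convert before it leaves the layer.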

Machine Vision using Diffractive Spectral Encoding

May 15, 2020

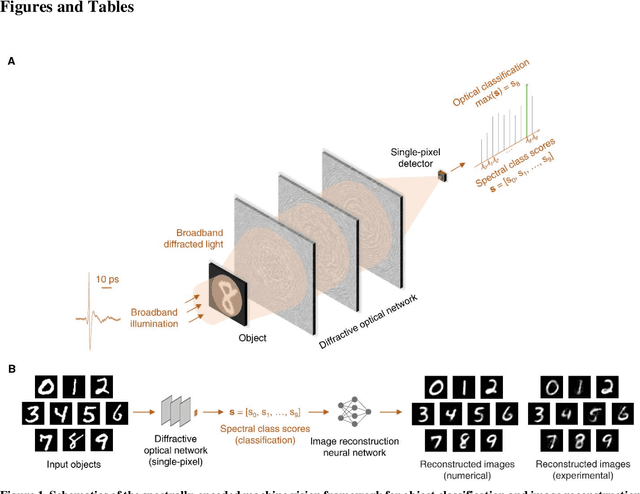

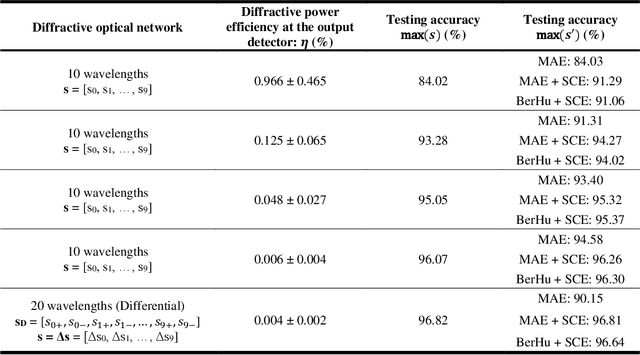

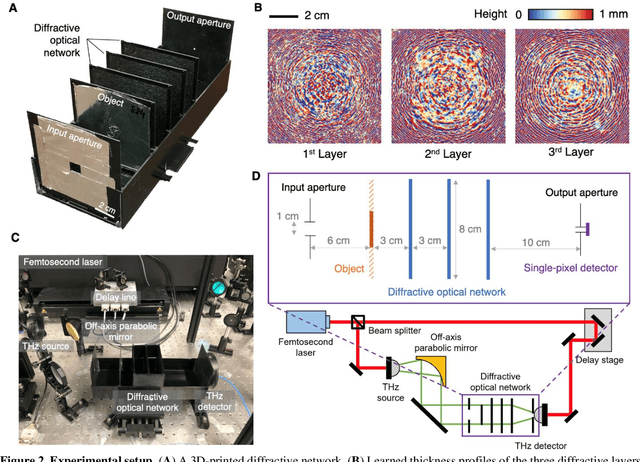

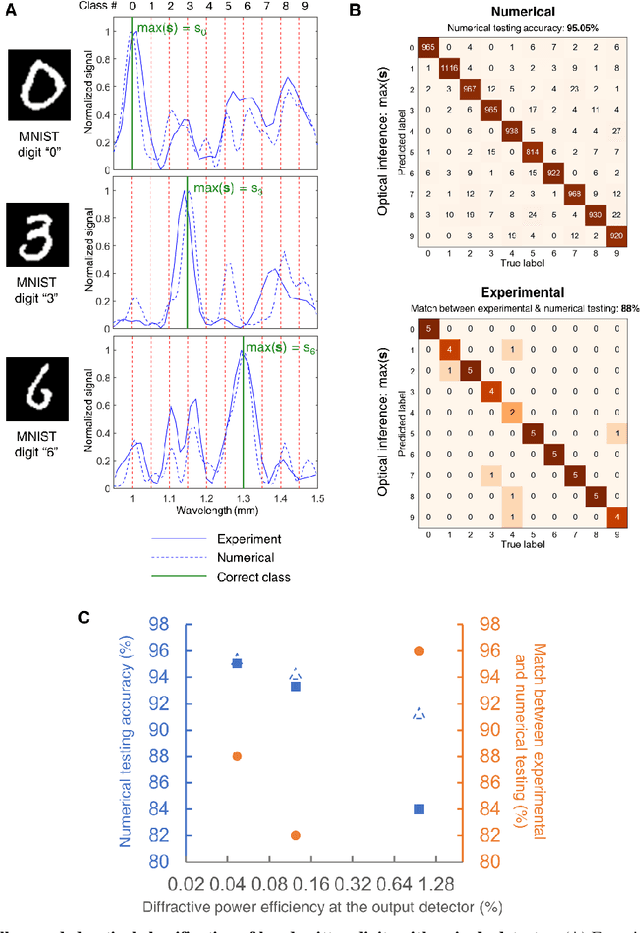

Abstract: Machine vision systems mostly rely on lens-based optical imaging architectures that relay the spatial information of objects onto high-pixel-count opto-electronic sensor arrays, followed by digital processing of this information. Here, we demonstrate an optical machine vision system that uses trainable matter in the form of diffractive layers to transform and encode the spatial information of objects into the power spectrum of the diffracted light, which is used to perform optical classification of objects with a single-pixel spectroscopic detector. Using a time-domain spectroscopy setup with a plasmonic nanoantenna-based detector, we experimentally validated this framework in the terahertz spectrum by optically classifying images of handwritten digits through detecting the spectral power of the diffracted light at ten distinct wavelengths, each representing one class/digit. We also report the coupling of this spectral encoding, achieved through a diffractive optical network, with a shallow electronic neural network, separately trained to reconstruct the images of handwritten digits based solely on the spectral information encoded in these ten distinct wavelengths within the diffracted light. These reconstructed images demonstrate task-specific image decompression and can also be cycled back as new inputs to the same diffractive network to improve its optical object classification. This framework merges the power of deep learning with the spatial and spectral processing capabilities of trainable matter, and can also be extended to other spectral-domain measurement systems to enable new 3D imaging and sensing modalities integrated with spectrally encoded classification tasks performed through diffractive networks.
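The readout described above, where each of the ten wavelengths stands for one digit, can be sketched as a simple decision rule over the detected spectral powers. This is a minimal sketch that assumes a plain maximum-power rule (the class whose wavelength carries the most power wins). The paper may apply normalization or another decision scheme, and the function name and power values below are hypothetical.

```python
from typing import Sequence

NUM_CLASSES = 10  # one wavelength channel per handwritten digit, per the abstract


def classify_from_spectrum(powers: Sequence[float]) -> int:
    """Hypothetical max-power readout: return the index (digit) of the
    wavelength channel with the largest detected spectral power."""
    if len(powers) != NUM_CLASSES:
        raise ValueError(f"expected {NUM_CLASSES} power values, got {len(powers)}")
    # On ties, max() keeps the first (lowest-index) channel.
    return max(range(NUM_CLASSES), key=lambda i: powers[i])


# Hypothetical detector readings at the ten class wavelengths.
readings = [0.10, 0.05, 0.30, 0.90, 0.20, 0.10, 0.00, 0.40, 0.20, 0.10]
print(classify_from_spectrum(readings))
```

Here the fourth channel (index 3) carries the most power, so the sketch predicts digit 3. Because only ten scalar powers are read out, a single-pixel spectroscopic detector suffices in place of a sensor array.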

Adversarial Examples versus Cloud-based Detectors: A Black-box Empirical Study

Jan 13, 2019

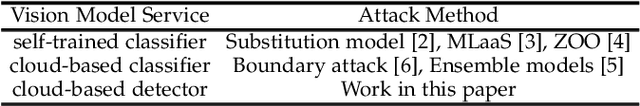

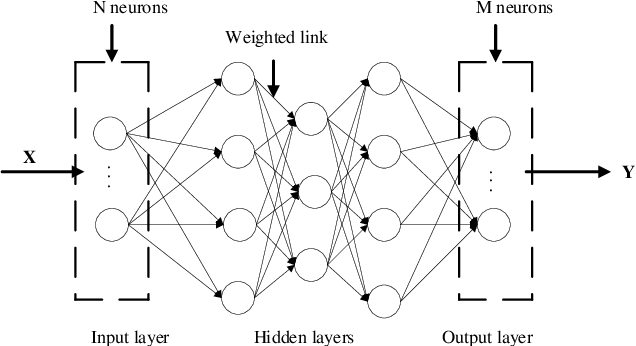

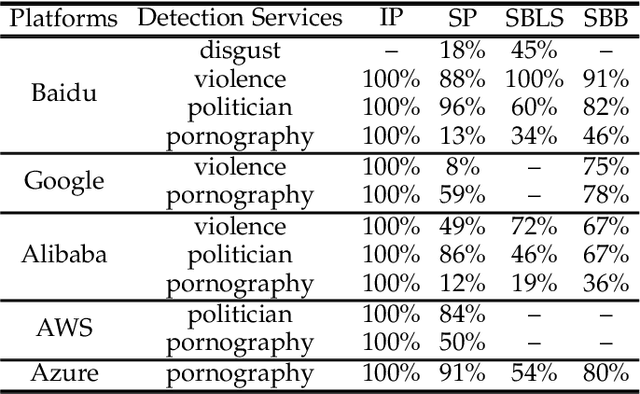

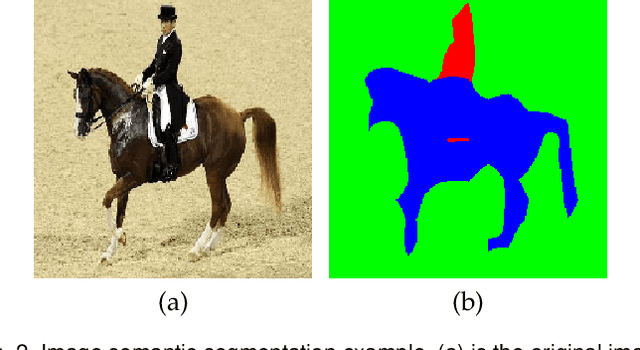

Abstract: Deep learning has been broadly leveraged by major cloud providers such as Google, AWS, and Baidu to offer various computer-vision-related services, including image auto-classification, object identification, and illegal image detection. While many recent works have demonstrated that deep learning classification models are vulnerable to adversarial examples, real-world cloud-based image detection services are more complex than classification, and there is little literature on adversarial example attacks against detection services. In this paper, we mainly focus on studying the security of real-world cloud-based image detectors. Specifically, (1) based on effective semantic segmentation, we propose four different attacks to generate semantics-aware adversarial examples by interacting only with black-box APIs; and (2) we make the first attempt to conduct an extensive empirical study of black-box attacks against real-world cloud-based image detectors. Through evaluations on five popular cloud platforms, including AWS, Azure, Google Cloud, Baidu Cloud, and Alibaba Cloud, we demonstrate that our IP attack has a success rate of approximately 100%, and semantic segmentation based attacks (e.g., SP, SBLS, SBB) have a success rate over 90% across different detection services, such as violence, politician, and pornography detection. We discuss possible defenses to address these security challenges in cloud-based detectors.
