Nezih T. Yardimci

Terahertz Pulse Shaping Using Diffractive Legos

Jun 30, 2020

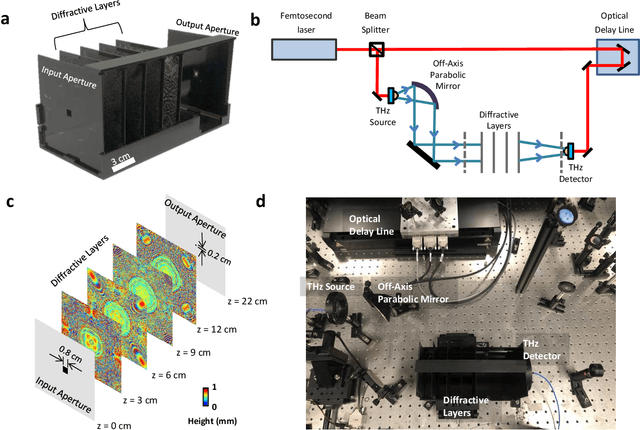

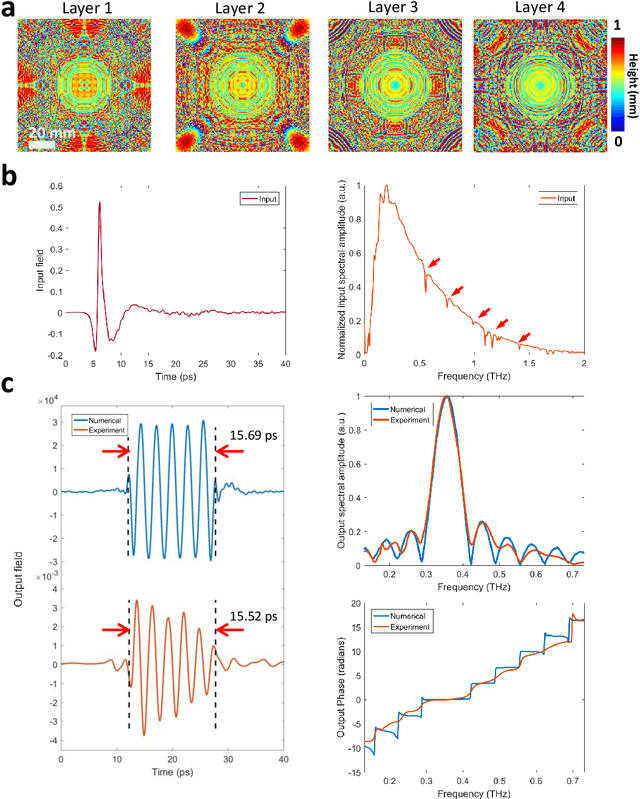

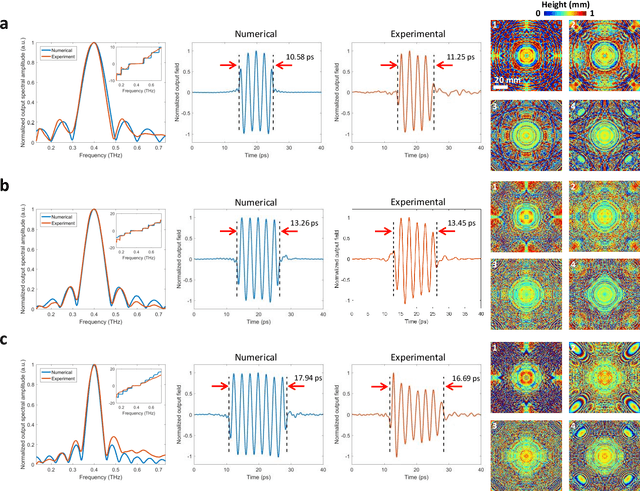

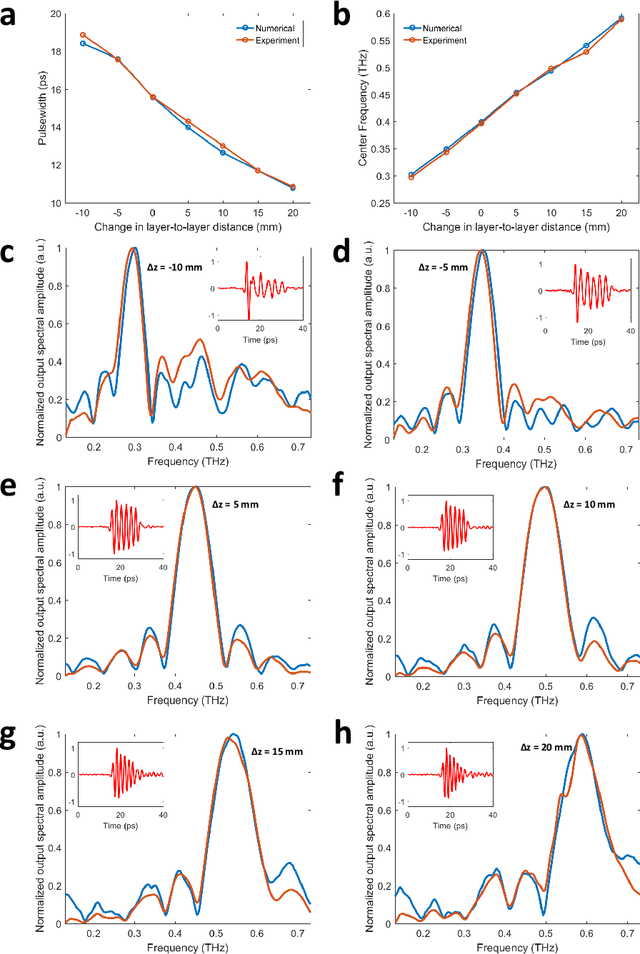

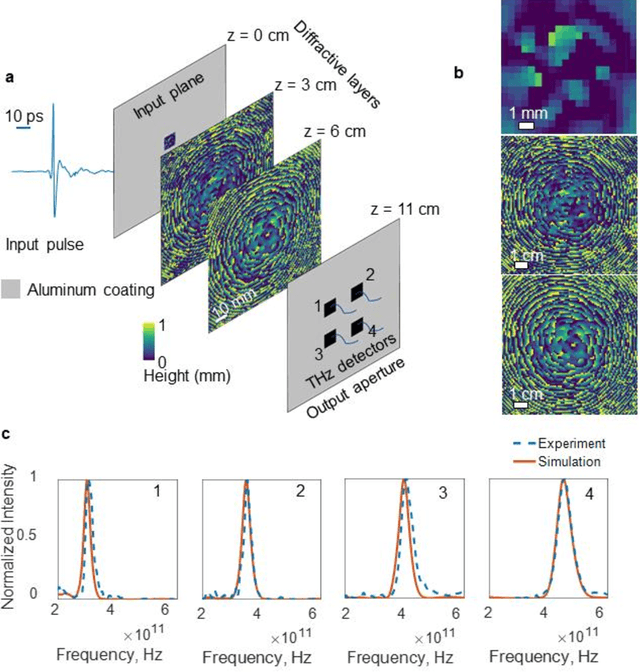

Abstract: Recent advances in deep learning have been providing non-intuitive solutions to various inverse problems in optics. At the intersection of machine learning and optics, diffractive networks merge wave-optics with deep learning to design task-specific elements to all-optically perform various tasks such as object classification and machine vision. Here, we present a diffractive network, which is used to shape an arbitrary broadband pulse into a desired optical waveform, forming a compact pulse engineering system. We experimentally demonstrate the synthesis of square pulses with different temporal-widths by manufacturing passive diffractive layers that collectively control both the spectral amplitude and the phase of an input terahertz pulse. Our results constitute the first demonstration of direct pulse shaping in terahertz spectrum, where a complex-valued spectral modulation function directly acts on terahertz frequencies. Furthermore, a Lego-like physical transfer learning approach is presented to illustrate pulse-width tunability by replacing part of an existing network with newly trained diffractive layers, demonstrating its modularity. This learning-based diffractive pulse engineering framework can find broad applications in e.g., communications, ultra-fast imaging and spectroscopy.
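As a rough numerical picture of what a complex-valued spectral modulation function does to a pulse, the sketch below multiplies the spectrum of a sampled pulse by a transfer function and transforms back. The function name `shape_pulse`, the Gaussian input pulse, and the particular transfer function (uniform 0.5 amplitude with a linear phase) are assumptions chosen for illustration. This is not the diffractive design procedure from the paper, only the Fourier-domain view of amplitude and phase control.

```python
import numpy as np

def shape_pulse(pulse, transfer):
    """Apply a complex-valued spectral modulation to a sampled pulse."""
    spectrum = np.fft.fft(pulse)
    return np.fft.ifft(spectrum * transfer)

# Hypothetical input: a Gaussian pulse centered at sample 64.
n = 256
t = np.arange(n)
pulse = np.exp(-0.5 * ((t - 64) / 4.0) ** 2)

# Hypothetical modulation: halve the spectral amplitude and add a linear
# spectral phase, which corresponds to a pure delay in the time domain.
freqs = np.fft.fftfreq(n)  # frequency axis in cycles per sample
delay_samples = 5
transfer = 0.5 * np.exp(-2j * np.pi * freqs * delay_samples)

# The imaginary part is numerical noise for this real-valued example.
shaped = shape_pulse(pulse, transfer).real
```

Here the amplitude term scales the pulse and the phase term moves it in time. A frequency-dependent amplitude and a nonlinear phase would change the pulse shape itself, which is the regime the abstract describes.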

Misalignment Resilient Diffractive Optical Networks

May 23, 2020

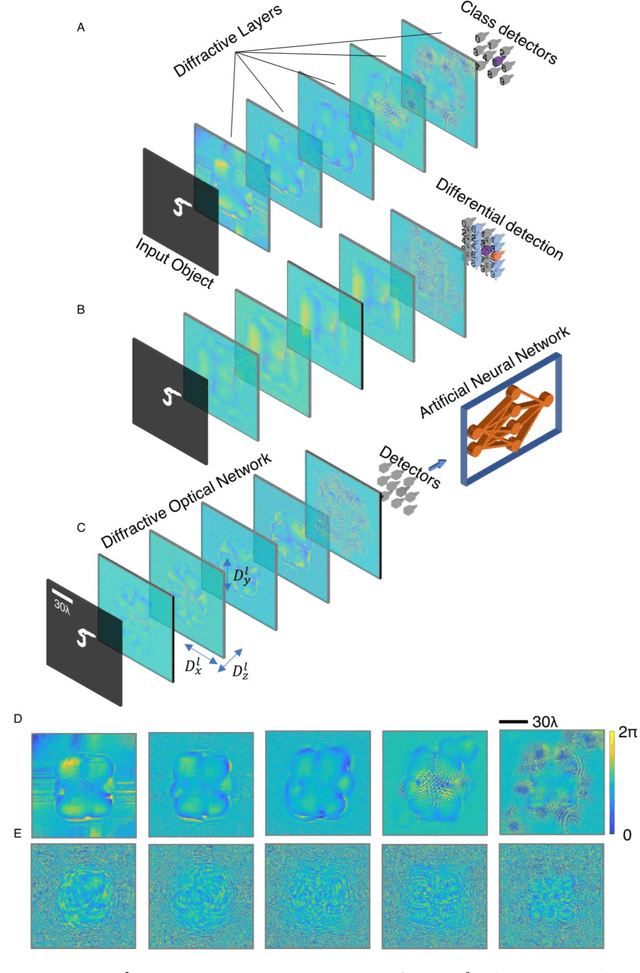

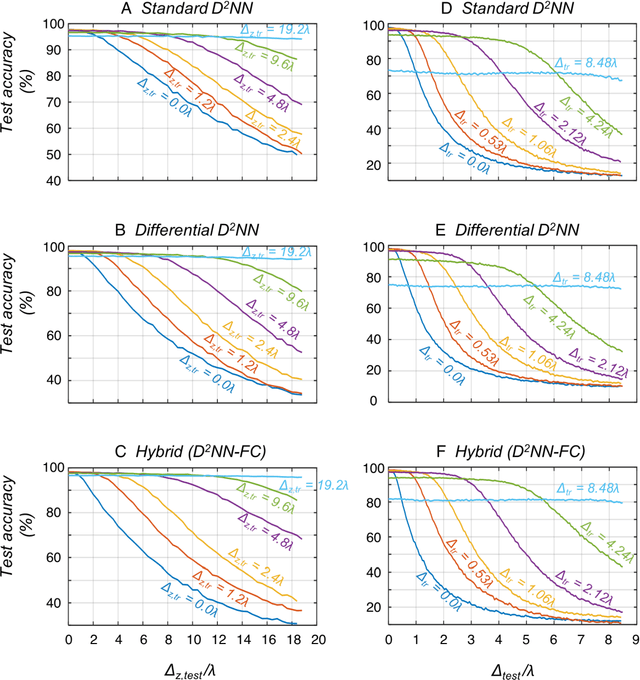

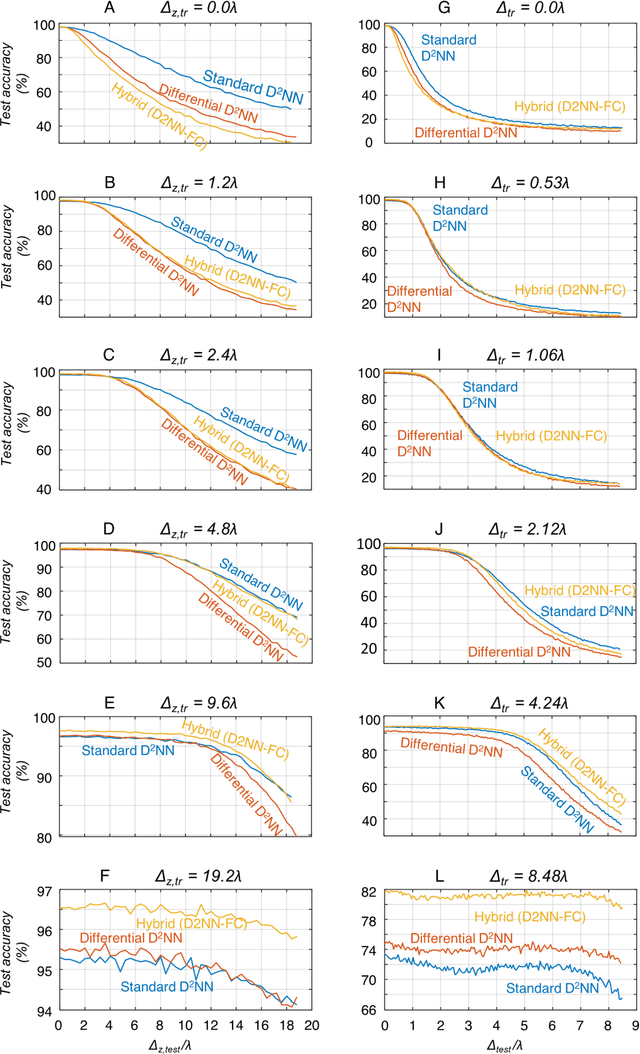

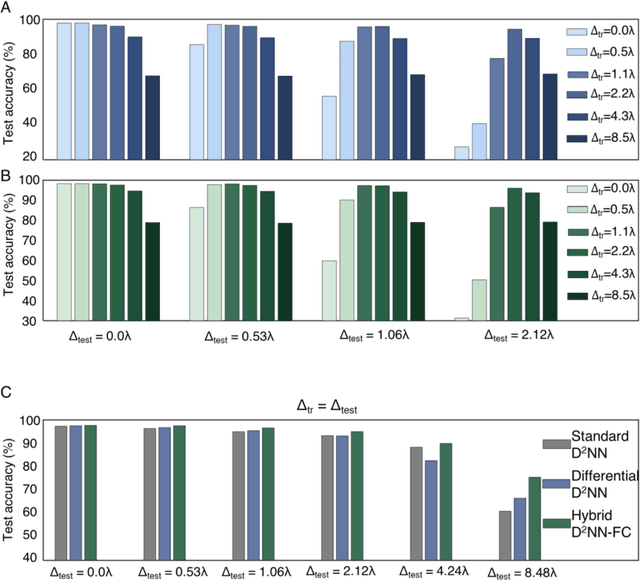

Abstract: As an optical machine learning framework, Diffractive Deep Neural Networks (D2NN) take advantage of data-driven training methods used in deep learning to devise light-matter interaction in 3D for performing a desired statistical inference task. Multi-layer optical object recognition platforms designed with this diffractive framework have been shown to generalize to unseen image data achieving e.g., >98% blind inference accuracy for hand-written digit classification. The multi-layer structure of diffractive networks offers significant advantages in terms of their diffraction efficiency, inference capability and optical signal contrast. However, the use of multiple diffractive layers also brings practical challenges for the fabrication and alignment of these diffractive systems for accurate optical inference. Here, we introduce and experimentally demonstrate a new training scheme that significantly increases the robustness of diffractive networks against 3D misalignments and fabrication tolerances in the physical implementation of a trained diffractive network. By modeling the undesired layer-to-layer misalignments in 3D as continuous random variables in the optical forward model, diffractive networks are trained to maintain their inference accuracy over a large range of misalignments; we term this diffractive network design as vaccinated D2NN (v-D2NN). We further extend this vaccination strategy to the training of diffractive networks that use differential detectors at the output plane as well as to jointly-trained hybrid (optical-electronic) networks to reveal that all of these diffractive designs improve their resilience to misalignments by taking into account possible 3D fabrication variations and displacements during their training phase.
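The idea of treating layer-to-layer misalignments as continuous random variables in the forward model can be sketched as follows. Everything here is an assumption made for illustration: the uniform distributions, the helper names `sample_misalignment` and `shift_field`, and the use of the Fourier shift theorem to apply a sub-pixel lateral displacement. The abstract does not specify these details.

```python
import numpy as np

def sample_misalignment(rng, max_xy, max_z):
    """Draw one random 3D displacement (assumed uniform, in pixel units)."""
    dx, dy = rng.uniform(-max_xy, max_xy, size=2)
    dz = rng.uniform(-max_z, max_z)
    return dx, dy, dz

def shift_field(field, dx, dy):
    """Laterally shift a 2D field by (dx, dy) pixels; fractional values allowed.

    Uses the Fourier shift theorem, so the shift is circular.
    dx moves along columns (axis 1), dy along rows (axis 0).
    """
    ny, nx = field.shape
    fx = np.fft.fftfreq(nx)
    fy = np.fft.fftfreq(ny)
    phase = np.exp(-2j * np.pi * (fy[:, None] * dy + fx[None, :] * dx))
    return np.fft.ifft2(np.fft.fft2(field) * phase)

# Hypothetical training-time use: each forward pass sees a freshly
# displaced copy of every layer, so the design cannot rely on perfect alignment.
rng = np.random.default_rng(0)
layers = [np.exp(1j * rng.uniform(0, 2 * np.pi, (8, 8))) for _ in range(3)]
displaced = []
for layer in layers:
    dx, dy, dz = sample_misalignment(rng, max_xy=1.5, max_z=0.5)
    # dz would perturb the layer-to-layer propagation distance (not modeled here).
    displaced.append(shift_field(layer, dx, dy))
```

Averaging the training loss over many such random draws is what would push a design toward tolerance to displacement, under these assumptions.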

Machine Vision using Diffractive Spectral Encoding

May 15, 2020

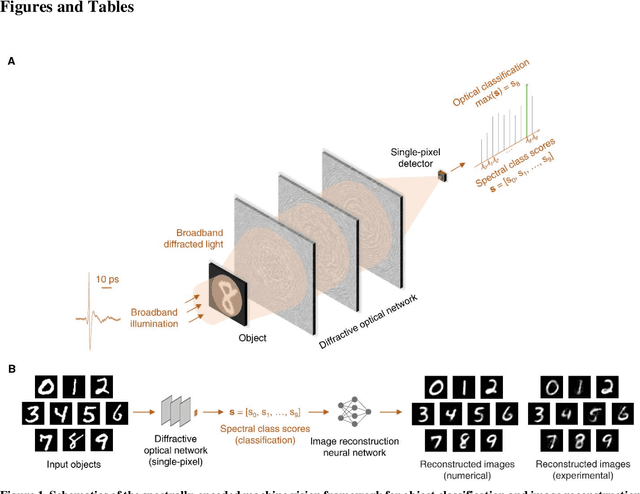

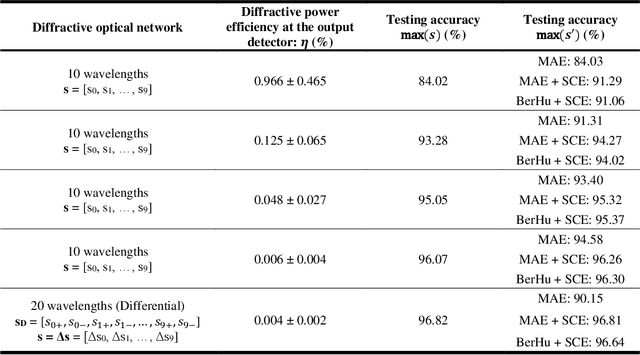

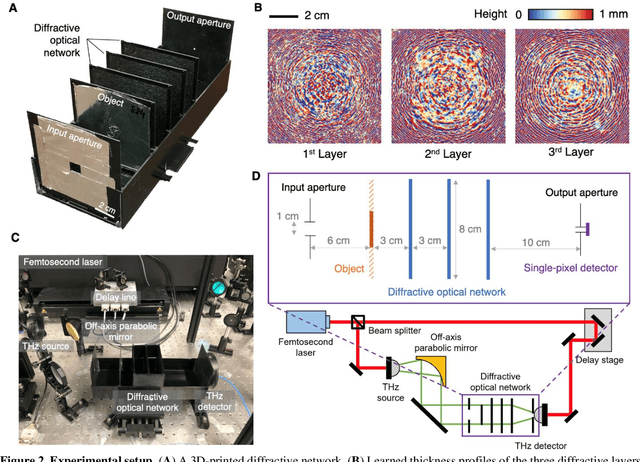

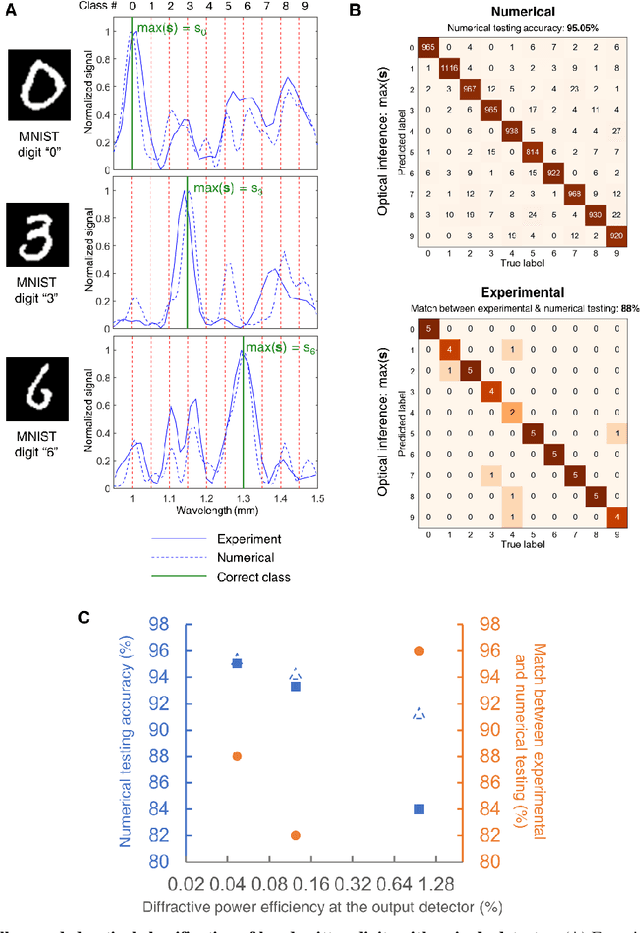

Abstract: Machine vision systems mostly rely on lens-based optical imaging architectures that relay the spatial information of objects onto high pixel-count opto-electronic sensor arrays, followed by digital processing of this information. Here, we demonstrate an optical machine vision system that uses trainable matter in the form of diffractive layers to transform and encode the spatial information of objects into the power spectrum of the diffracted light, which is used to perform optical classification of objects with a single-pixel spectroscopic detector. Using a time-domain spectroscopy setup with a plasmonic nanoantenna-based detector, we experimentally validated this framework at terahertz spectrum to optically classify the images of handwritten digits by detecting the spectral power of the diffracted light at ten distinct wavelengths, each representing one class/digit. We also report the coupling of this spectral encoding achieved through a diffractive optical network with a shallow electronic neural network, separately trained to reconstruct the images of handwritten digits based on solely the spectral information encoded in these ten distinct wavelengths within the diffracted light. These reconstructed images demonstrate task-specific image decompression and can also be cycled back as new inputs to the same diffractive network to improve its optical object classification. This unique framework merges the power of deep learning with the spatial and spectral processing capabilities of trainable matter, and can also be extended to other spectral-domain measurement systems to enable new 3D imaging and sensing modalities integrated with spectrally encoded classification tasks performed through diffractive networks.
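The read-out step described above, where each of ten wavelengths stands for one digit, can be illustrated with a small sketch. It assumes a maximum-power decision rule and a synthetic single-pixel waveform. The function name `classify_from_waveform`, the frequency bins, and the amplitudes are all hypothetical and are not measured data.

```python
import numpy as np

def classify_from_waveform(waveform, class_bins):
    """Pick the class whose assigned frequency bin carries the most power."""
    power = np.abs(np.fft.rfft(waveform)) ** 2
    return int(np.argmax(power[class_bins]))

# Synthetic time-domain trace from a single-pixel detector (hypothetical).
n = 1000
t = np.arange(n)
class_bins = np.arange(10, 101, 10)  # ten frequency bins, one per digit 0-9

# Pretend the diffractive layers routed most of the power to the bin for digit 7.
amplitudes = np.full(10, 0.2)
amplitudes[7] = 1.0
waveform = sum(a * np.sin(2 * np.pi * k * t / n)
               for a, k in zip(amplitudes, class_bins))

predicted = classify_from_waveform(waveform, class_bins)
```

In this picture the detector never forms an image: the class decision comes from comparing ten numbers extracted from one spectrum.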

Design of Task-Specific Optical Systems Using Broadband Diffractive Neural Networks

Sep 14, 2019

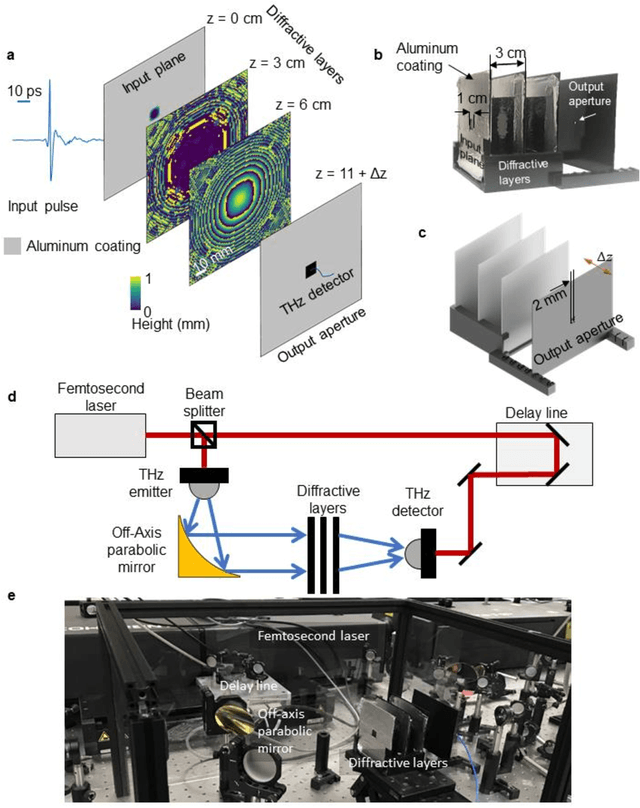

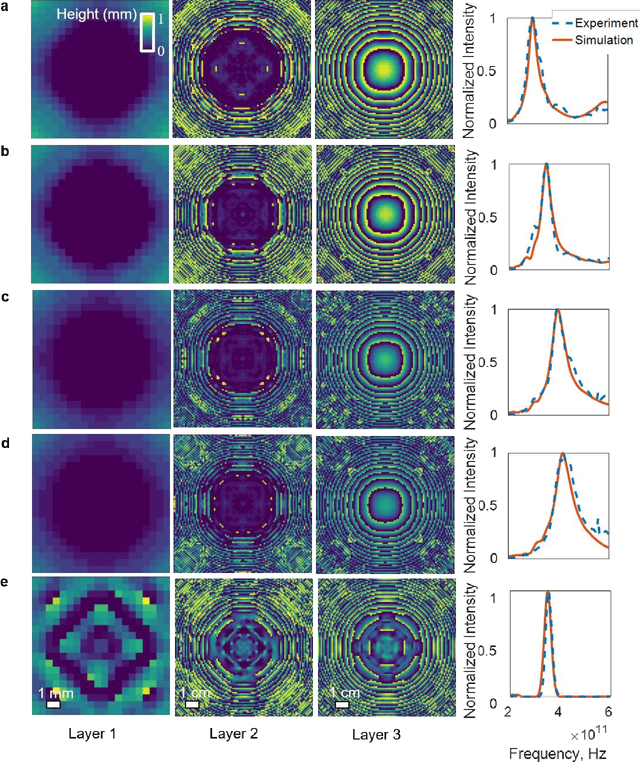

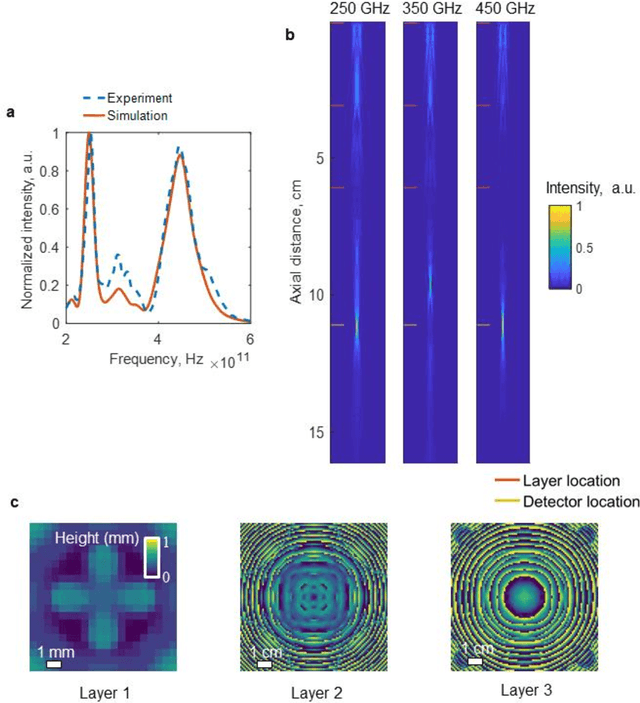

Abstract: We report a broadband diffractive optical neural network design that simultaneously processes a continuum of wavelengths generated by a temporally-incoherent broadband source to all-optically perform a specific task learned using deep learning. We experimentally validated the success of this broadband diffractive neural network architecture by designing, fabricating and testing seven different multi-layer, diffractive optical systems that transform the optical wavefront generated by a broadband THz pulse to realize (1) a series of tunable, single passband as well as dual passband spectral filters, and (2) spatially-controlled wavelength de-multiplexing. Merging the native or engineered dispersion of various material systems with a deep learning-based design strategy, broadband diffractive neural networks help us engineer light-matter interaction in 3D, diverging from intuitive and analytical design methods to create task-specific optical components that can all-optically perform deterministic tasks or statistical inference for optical machine learning.

All-Optical Machine Learning Using Diffractive Deep Neural Networks

Jul 26, 2018

Abstract: We introduce an all-optical Diffractive Deep Neural Network (D2NN) architecture that can learn to implement various functions after deep learning-based design of passive diffractive layers that work collectively. We experimentally demonstrated the success of this framework by creating 3D-printed D2NNs that learned to implement handwritten digit classification and the function of an imaging lens at terahertz spectrum. With the existing plethora of 3D-printing and other lithographic fabrication methods as well as spatial-light-modulators, this all-optical deep learning framework can perform, at the speed of light, various complex functions that computer-based neural networks can implement, and will find applications in all-optical image analysis, feature detection and object classification, also enabling new camera designs and optical components that can learn to perform unique tasks using D2NNs.
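A forward pass through a stack of passive diffractive layers can be sketched as free-space propagation alternating with per-pixel phase modulation. The sketch below uses the angular spectrum method for propagation, which is a standard choice made here for illustration and not necessarily the forward model used in the paper. The function names, grid size, wavelength, pixel pitch, and layer spacing are all hypothetical, and the phase masks are random rather than trained.

```python
import numpy as np

def angular_spectrum_propagate(field, wavelength, pitch, distance):
    """Propagate a 2D complex field over `distance` in free space."""
    ny, nx = field.shape
    fx = np.fft.fftfreq(nx, d=pitch)
    fy = np.fft.fftfreq(ny, d=pitch)
    fxx, fyy = np.meshgrid(fx, fy)
    arg = (1.0 / wavelength) ** 2 - fxx ** 2 - fyy ** 2
    # Complex sqrt: negative arguments give evanescent (decaying) components.
    kz = 2 * np.pi * np.sqrt(arg.astype(complex))
    transfer = np.exp(1j * kz * distance)
    return np.fft.ifft2(np.fft.fft2(field) * transfer)

def diffractive_forward(field, phase_masks, wavelength, pitch, spacing):
    """Propagate to each layer, apply its phase mask, then reach the detector."""
    for phase in phase_masks:
        field = angular_spectrum_propagate(field, wavelength, pitch, spacing)
        field = field * np.exp(1j * phase)  # passive, phase-only layer
    field = angular_spectrum_propagate(field, wavelength, pitch, spacing)
    return np.abs(field) ** 2  # intensity at the output plane

# Hypothetical example in arbitrary length units, with untrained random masks.
rng = np.random.default_rng(1)
input_field = rng.standard_normal((16, 16)) + 0j
masks = [rng.uniform(0, 2 * np.pi, (16, 16)) for _ in range(3)]
intensity = diffractive_forward(input_field, masks,
                                wavelength=1.0, pitch=1.0, spacing=5.0)
```

In a trained design, the phase masks would be the learnable parameters, optimized so that the output intensity pattern encodes the desired function, for example by concentrating power on a detector region assigned to the correct class.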
