Vincent Christlein

Pattern Recognition Lab, FAU Erlangen-Nürnberg

DRetHTR: Linear-Time Decoder-Only Retentive Network for Handwritten Text Recognition

Feb 19, 2026Abstract:State-of-the-art handwritten text recognition (HTR) systems commonly use Transformers, whose growing key-value (KV) cache makes decoding slow and memory-intensive. We introduce DRetHTR, a decoder-only model built on Retentive Networks (RetNet). Compared to an equally sized decoder-only Transformer baseline, DRetHTR delivers 1.6-1.9x faster inference with 38-42% less memory usage, without loss of accuracy. By replacing softmax attention with softmax-free retention and injecting multi-scale sequential priors, DRetHTR avoids a growing KV cache: decoding is linear in output length in both time and memory. To recover the local-to-global inductive bias of attention, we propose layer-wise gamma scaling, which progressively enlarges the effective retention horizon in deeper layers. This encourages early layers to model short-range dependencies and later layers to capture broader context, mitigating the flexibility gap introduced by removing softmax. Consequently, DRetHTR achieves best reported test character error rates of 2.26% (IAM-A, en), 1.81% (RIMES, fr), and 3.46% (Bentham, en), and is competitive on READ-2016 (de) with 4.21%. This demonstrates that decoder-only RetNet enables Transformer-level HTR accuracy with substantially improved decoding speed and memory efficiency.

Enhancing IMU-Based Online Handwriting Recognition via Contrastive Learning with Zero Inference Overhead

Feb 04, 2026Abstract:Online handwriting recognition using inertial measurement units opens up handwriting on paper as input for digital devices. Doing it on edge hardware improves privacy and lowers latency, but entails memory constraints. To address this, we propose Error-enhanced Contrastive Handwriting Recognition (ECHWR), a training framework designed to improve feature representation and recognition accuracy without increasing inference costs. ECHWR utilizes a temporary auxiliary branch that aligns sensor signals with semantic text embeddings during the training phase. This alignment is maintained through a dual contrastive objective: an in-batch contrastive loss for general modality alignment and a novel error-based contrastive loss that distinguishes between correct signals and synthetic hard negatives. The auxiliary branch is discarded after training, which allows the deployed model to keep its original, efficient architecture. Evaluations on the OnHW-Words500 dataset show that ECHWR significantly outperforms state-of-the-art baselines, reducing character error rates by up to 7.4% on the writer-independent split and 10.4% on the writer-dependent split. Finally, although our ablation studies indicate that solving specific challenges require specific architectural and objective configurations, error-based contrastive loss shows its effectiveness for handling unseen writing styles.

Few-Shot Domain Adaptation with Temporal References and Static Priors for Glacier Calving Front Delineation

Jan 29, 2026Abstract:During benchmarking, the state-of-the-art model for glacier calving front delineation achieves near-human performance. However, when applied in a real-world setting at a novel study site, its delineation accuracy is insufficient for calving front products intended for further scientific analyses. This site represents an out-of-distribution domain for a model trained solely on the benchmark dataset. By employing a few-shot domain adaptation strategy, incorporating spatial static prior knowledge, and including summer reference images in the input time series, the delineation error is reduced from 1131.6 m to 68.7 m without any architectural modifications. These methodological advancements establish a framework for applying deep learning-based calving front segmentation to novel study sites, enabling calving front monitoring on a global scale.

Coarse-to-Fine Non-rigid Multi-modal Image Registration for Historical Panel Paintings based on Crack Structures

Jan 22, 2026Abstract:Art technological investigations of historical panel paintings rely on acquiring multi-modal image data, including visual light photography, infrared reflectography, ultraviolet fluorescence photography, x-radiography, and macro photography. For a comprehensive analysis, the multi-modal images require pixel-wise alignment, which is still often performed manually. Multi-modal image registration can reduce this laborious manual work, is substantially faster, and enables higher precision. Due to varying image resolutions, huge image sizes, non-rigid distortions, and modality-dependent image content, registration is challenging. Therefore, we propose a coarse-to-fine non-rigid multi-modal registration method efficiently relying on sparse keypoints and thin-plate-splines. Historical paintings exhibit a fine crack pattern, called craquelure, on the paint layer, which is captured by all image systems and is well-suited as a feature for registration. In our one-stage non-rigid registration approach, we employ a convolutional neural network for joint keypoint detection and description based on the craquelure and a graph neural network for descriptor matching in a patch-based manner, and filter matches based on homography reprojection errors in local areas. For coarse-to-fine registration, we introduce a novel multi-level keypoint refinement approach to register mixed-resolution images up to the highest resolution. We created a multi-modal dataset of panel paintings with a high number of keypoint annotations, and a large test set comprising five multi-modal domains and varying image resolutions. The ablation study demonstrates the effectiveness of all modules of our refinement method. Our proposed approaches achieve the best registration results compared to competing keypoint and dense matching methods and refinement methods.

AMD-HookNet++: Evolution of AMD-HookNet with Hybrid CNN-Transformer Feature Enhancement for Glacier Calving Front Segmentation

Dec 16, 2025Abstract:The dynamics of glaciers and ice shelf fronts significantly impact the mass balance of ice sheets and coastal sea levels. To effectively monitor glacier conditions, it is crucial to consistently estimate positional shifts of glacier calving fronts. AMD-HookNet firstly introduces a pure two-branch convolutional neural network (CNN) for glacier segmentation. Yet, the local nature and translational invariance of convolution operations, while beneficial for capturing low-level details, restricts the model ability to maintain long-range dependencies. In this study, we propose AMD-HookNet++, a novel advanced hybrid CNN-Transformer feature enhancement method for segmenting glaciers and delineating calving fronts in synthetic aperture radar images. Our hybrid structure consists of two branches: a Transformer-based context branch to capture long-range dependencies, which provides global contextual information in a larger view, and a CNN-based target branch to preserve local details. To strengthen the representation of the connected hybrid features, we devise an enhanced spatial-channel attention module to foster interactions between the hybrid CNN-Transformer branches through dynamically adjusting the token relationships from both spatial and channel perspectives. Additionally, we develop a pixel-to-pixel contrastive deep supervision to optimize our hybrid model by integrating pixelwise metric learning into glacier segmentation. Through extensive experiments and comprehensive quantitative and qualitative analyses on the challenging glacier segmentation benchmark dataset CaFFe, we show that AMD-HookNet++ sets a new state of the art with an IoU of 78.2 and a HD95 of 1,318 m, while maintaining a competitive MDE of 367 m. More importantly, our hybrid model produces smoother delineations of calving fronts, resolving the issue of jagged edges typically seen in pure Transformer-based approaches.

EcoScapes: LLM-Powered Advice for Crafting Sustainable Cities

Dec 16, 2025Abstract:Climate adaptation is vital for the sustainability and sometimes the mere survival of our urban areas. However, small cities often struggle with limited personnel resources and integrating vast amounts of data from multiple sources for a comprehensive analysis. To overcome these challenges, this paper proposes a multi-layered system combining specialized LLMs, satellite imagery analysis and a knowledge base to aid in developing effective climate adaptation strategies. The corresponding code can be found at https://github.com/Photon-GitHub/EcoScapes.

Multi-temporal Calving Front Segmentation

Dec 12, 2025Abstract:The calving fronts of marine-terminating glaciers undergo constant changes. These changes significantly affect the glacier's mass and dynamics, demanding continuous monitoring. To address this need, deep learning models were developed that can automatically delineate the calving front in Synthetic Aperture Radar imagery. However, these models often struggle to correctly classify areas affected by seasonal conditions such as ice melange or snow-covered surfaces. To address this issue, we propose to process multiple frames from a satellite image time series of the same glacier in parallel and exchange temporal information between the corresponding feature maps to stabilize each prediction. We integrate our approach into the current state-of-the-art architecture Tyrion and accomplish a new state-of-the-art performance on the CaFFe benchmark dataset. In particular, we achieve a Mean Distance Error of 184.4 m and a mean Intersection over Union of 83.6.

Data Augmentation via Latent Diffusion Models for Detecting Smell-Related Objects in Historical Artworks

Sep 18, 2025Abstract:Finding smell references in historic artworks is a challenging problem. Beyond artwork-specific challenges such as stylistic variations, their recognition demands exceptionally detailed annotation classes, resulting in annotation sparsity and extreme class imbalance. In this work, we explore the potential of synthetic data generation to alleviate these issues and enable accurate detection of smell-related objects. We evaluate several diffusion-based augmentation strategies and demonstrate that incorporating synthetic data into model training can improve detection performance. Our findings suggest that leveraging the large-scale pretraining of diffusion models offers a promising approach for improving detection accuracy, particularly in niche applications where annotations are scarce and costly to obtain. Furthermore, the proposed approach proves to be effective even with relatively small amounts of data, and scaling it up provides high potential for further enhancements.

SSL4SAR: Self-Supervised Learning for Glacier Calving Front Extraction from SAR Imagery

Jul 02, 2025Abstract:Glaciers are losing ice mass at unprecedented rates, increasing the need for accurate, year-round monitoring to understand frontal ablation, particularly the factors driving the calving process. Deep learning models can extract calving front positions from Synthetic Aperture Radar imagery to track seasonal ice losses at the calving fronts of marine- and lake-terminating glaciers. The current state-of-the-art model relies on ImageNet-pretrained weights. However, they are suboptimal due to the domain shift between the natural images in ImageNet and the specialized characteristics of remote sensing imagery, in particular for Synthetic Aperture Radar imagery. To address this challenge, we propose two novel self-supervised multimodal pretraining techniques that leverage SSL4SAR, a new unlabeled dataset comprising 9,563 Sentinel-1 and 14 Sentinel-2 images of Arctic glaciers, with one optical image per glacier in the dataset. Additionally, we introduce a novel hybrid model architecture that combines a Swin Transformer encoder with a residual Convolutional Neural Network (CNN) decoder. When pretrained on SSL4SAR, this model achieves a mean distance error of 293 m on the "CAlving Fronts and where to Find thEm" (CaFFe) benchmark dataset, outperforming the prior best model by 67 m. Evaluating an ensemble of the proposed model on a multi-annotator study of the benchmark dataset reveals a mean distance error of 75 m, approaching the human performance of 38 m. This advancement enables precise monitoring of seasonal changes in glacier calving fronts.

Comparison Study: Glacier Calving Front Delineation in Synthetic Aperture Radar Images With Deep Learning

Jan 09, 2025

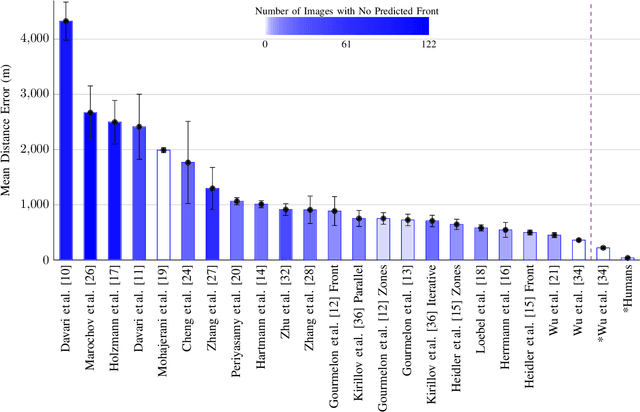

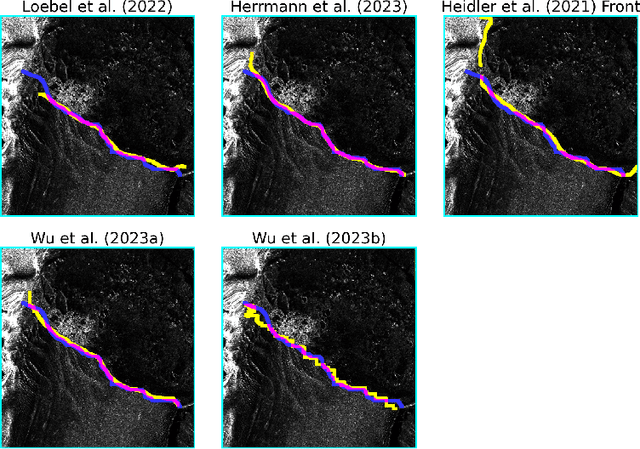

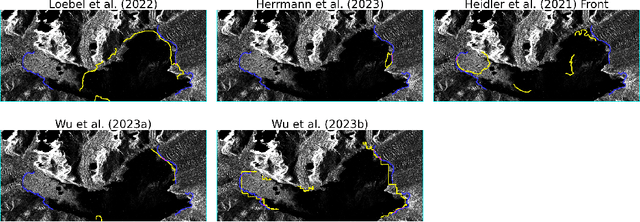

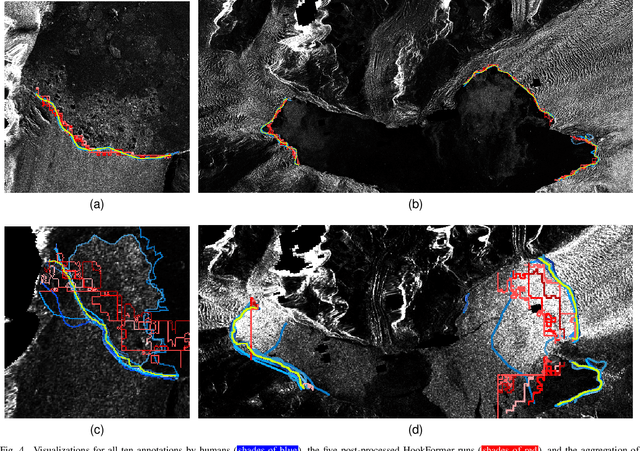

Abstract:Calving front position variation of marine-terminating glaciers is an indicator of ice mass loss and a crucial parameter in numerical glacier models. Deep Learning (DL) systems can automatically extract this position from Synthetic Aperture Radar (SAR) imagery, enabling continuous, weather- and illumination-independent, large-scale monitoring. This study presents the first comparison of DL systems on a common calving front benchmark dataset. A multi-annotator study with ten annotators is performed to contrast the best-performing DL system against human performance. The best DL model's outputs deviate 221 m on average, while the average deviation of the human annotators is 38 m. This significant difference shows that current DL systems do not yet match human performance and that further research is needed to enable fully automated monitoring of glacier calving fronts. The study of Vision Transformers, foundation models, and the inclusion and processing strategy of more information are identified as avenues for future research.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge