Tristan Perez

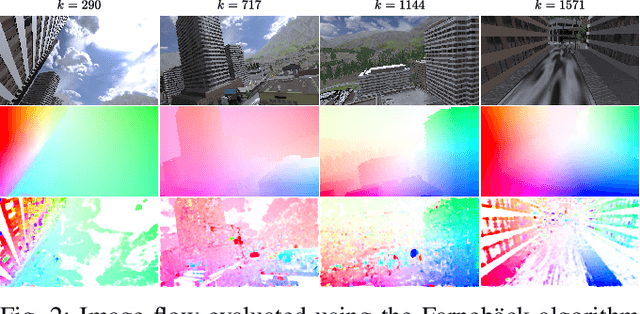

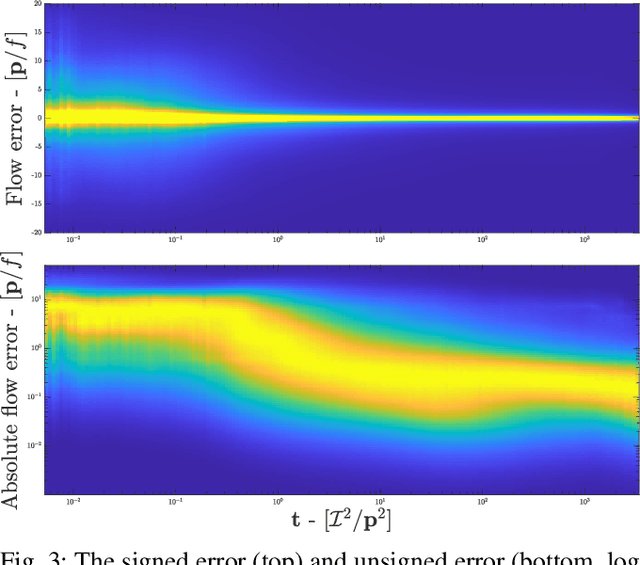

A Heteroscedastic Likelihood Model for Two-frame Optical Flow

Oct 14, 2020

Abstract: Machine vision is an important sensing technology used in mobile robotic systems. Advancing the autonomy of such systems requires accurate characterisation of sensor uncertainty. Vision includes intrinsic uncertainty due to the camera sensor and extrinsic uncertainty due to environmental lighting and texture, which propagate through the image processing algorithms used to produce visual measurements. To faithfully characterise visual measurements, we must take into account these uncertainties. In this paper, we propose a new class of likelihood functions that characterises the uncertainty of the error distribution of two-frame optical flow and enables a heteroscedastic dependence on texture. We employ the proposed class to characterise the Farneback and Lucas-Kanade optical flow algorithms and achieve close agreement with their respective empirical error distributions over a wide range of texture in a simulated environment. The utility of the proposed likelihood model is demonstrated in a visual odometry ego-motion simulation study, which results in a 30-83% reduction in position drift rate compared to traditional methods employing a Gaussian error assumption. The development of an empirically congruent likelihood model advances the requisite tool-set for vision-based Bayesian inference and enables sensor data fusion with GPS, LiDAR and IMU to advance robust autonomous navigation.

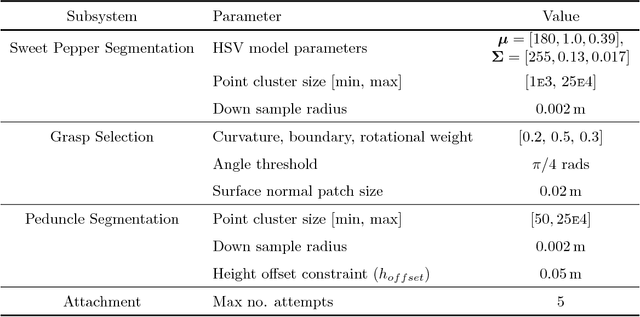

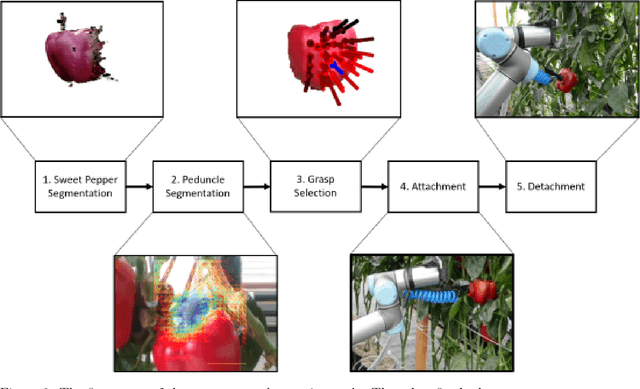

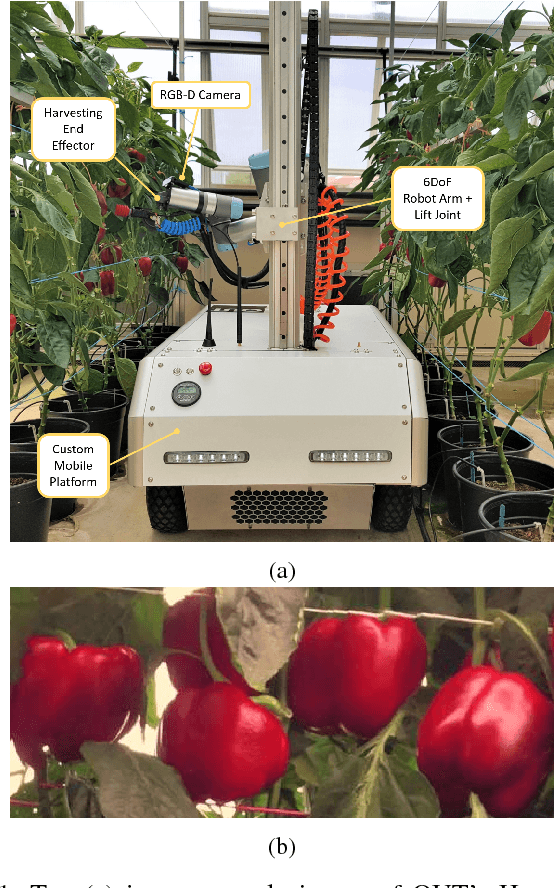

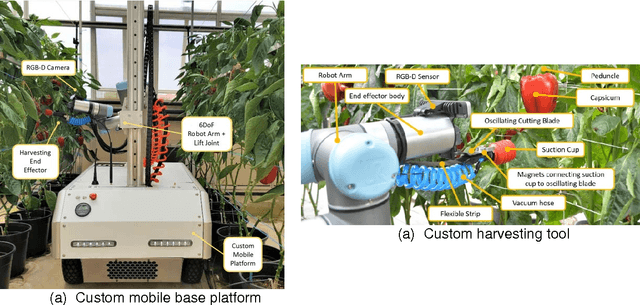

A Sweet Pepper Harvesting Robot for Protected Cropping Environments

Oct 29, 2018

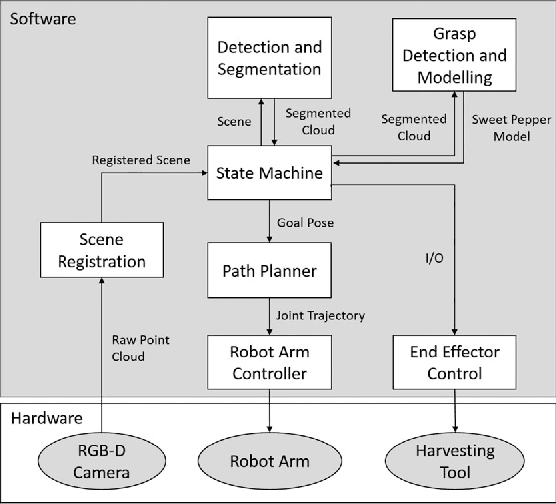

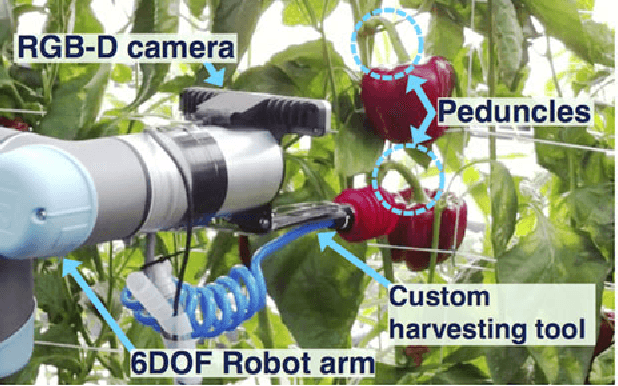

Abstract: Using robots to harvest sweet peppers in protected cropping environments has remained unsolved despite considerable effort by the research community over several decades. In this paper, we present the robotic harvester, Harvey, designed for sweet peppers in protected cropping environments. Harvey achieved a 76.5% success rate (within a modified scenario), improving upon our prior work, which achieved 58%, and related sweet pepper harvesting work, which achieved 33%. This improvement was primarily achieved through the introduction of a novel peduncle segmentation system using an efficient deep convolutional neural network, in conjunction with 3D post-filtering to detect the critical cutting location. We benchmark the peduncle segmentation against prior art, demonstrating a considerable improvement in performance with an F1 score of 0.564 compared to 0.302. The robotic harvester uses a perception pipeline to detect a target sweet pepper and an appropriate grasp and cutting pose, which are used to determine the trajectory of a multi-modal harvesting tool that grasps the sweet pepper and cuts it from the plant. A novel decoupling mechanism enables the gripping and cutting operations to be performed independently. We perform an in-depth analysis of the full robotic harvesting system to highlight bottlenecks and failure points that future work could address.

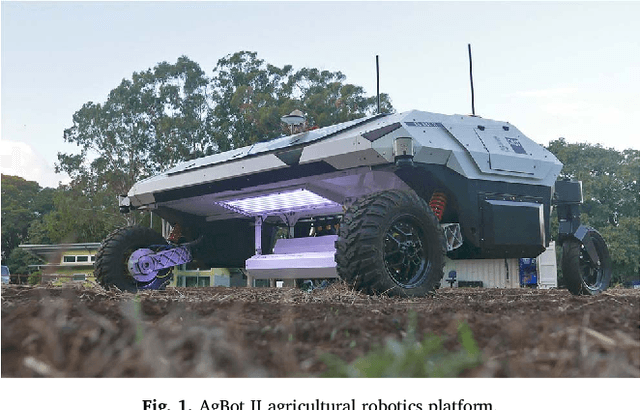

A Rapidly Deployable Classification System using Visual Data for the Application of Precision Weed Management

Apr 26, 2018

Abstract: In this work we demonstrate a rapidly deployable weed classification system that uses visual data to enable autonomous precision weeding without making prior assumptions about which weed species are present in a given field. Previous work in this area relies on having prior knowledge of the weed species present in the field. This assumption cannot always hold true for every field, and thus limits the use of weed classification systems based on this assumption. In this work, we obviate this assumption and introduce a rapidly deployable approach able to operate on any field without any weed species assumptions prior to deployment. We present a three-stage pipeline for the implementation of our weed classification system consisting of initial field surveillance, offline processing and selective labelling, and automated precision weeding. The key characteristic of our approach is the combination of plant clustering and selective labelling, which is what enables our system to operate without prior weed species knowledge. Testing on field data, we are able to label 12.3 times fewer images than traditional full labelling whilst reducing classification accuracy by only 14%.

* 36 pages, 14 figures, published in Computers and Electronics in Agriculture, Vol. 148

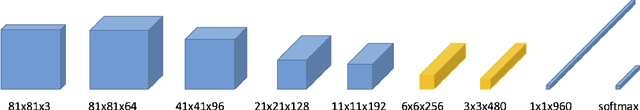

In-Field Peduncle Detection of Sweet Peppers for Robotic Harvesting: a comparative study

Sep 29, 2017

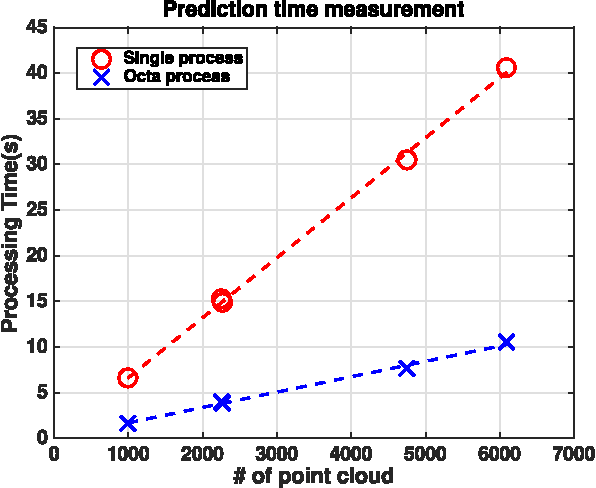

Abstract: Robotic harvesting of crops has the potential to disrupt current agricultural practices. A key element to enabling robotic harvesting is to safely remove the crop from the plant, which often involves locating and cutting the peduncle, the part of the crop that attaches it to the main stem of the plant. In this paper we present a comparative study of two methods for performing peduncle detection. The first method is based on classic colour and geometric features obtained from the scene with a support vector machine classifier, referred to as PFH-SVM. The second method is an efficient deep neural network approach, MiniInception, that is able to be deployed on a robotic platform. In both cases we employ a secondary filtering process that enforces reasonable assumptions about the crop structure, such as the proximity of the peduncle to the crop. Our tests are conducted on Harvey, a sweet pepper harvesting robot, and are evaluated in a greenhouse using two varieties of sweet pepper, Ducati and Mercuno. We demonstrate that the MiniInception method achieves impressive accuracy and considerably outperforms the PFH-SVM approach, achieving F1 scores of 0.564 and 0.302, respectively.
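For readers unfamiliar with the metric quoted above, the F1 score is the harmonic mean of precision and recall. The following is a minimal sketch of that standard definition only; the function name and the example counts are illustrative assumptions and are not taken from the paper or its evaluation code.

```python
def f1_score(true_positives: int, false_positives: int, false_negatives: int) -> float:
    """Harmonic mean of precision and recall, computed from raw detection counts.

    The counts passed in are hypothetical; they do not come from the paper.
    """
    predicted = true_positives + false_positives
    actual = true_positives + false_negatives
    # Guard against division by zero when nothing is predicted or nothing is present.
    precision = true_positives / predicted if predicted else 0.0
    recall = true_positives / actual if actual else 0.0
    if precision + recall == 0.0:
        return 0.0
    return 2.0 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # Illustrative counts only: 8 correct detections, 2 false alarms, 2 misses.
    print(f1_score(8, 2, 2))
```

Because the harmonic mean is pulled towards the smaller of the two values, a detector cannot obtain a high F1 score by trading away either precision or recall.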

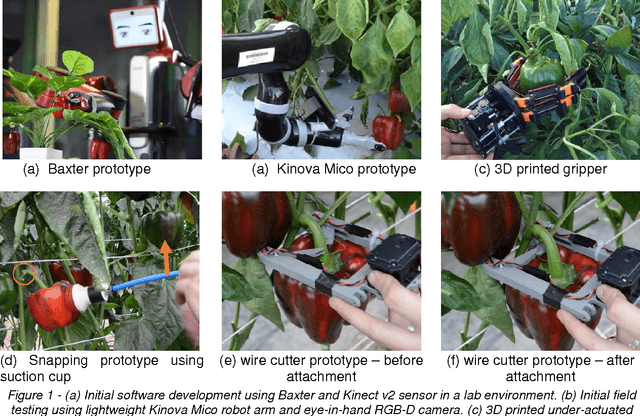

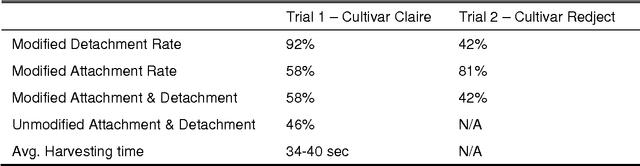

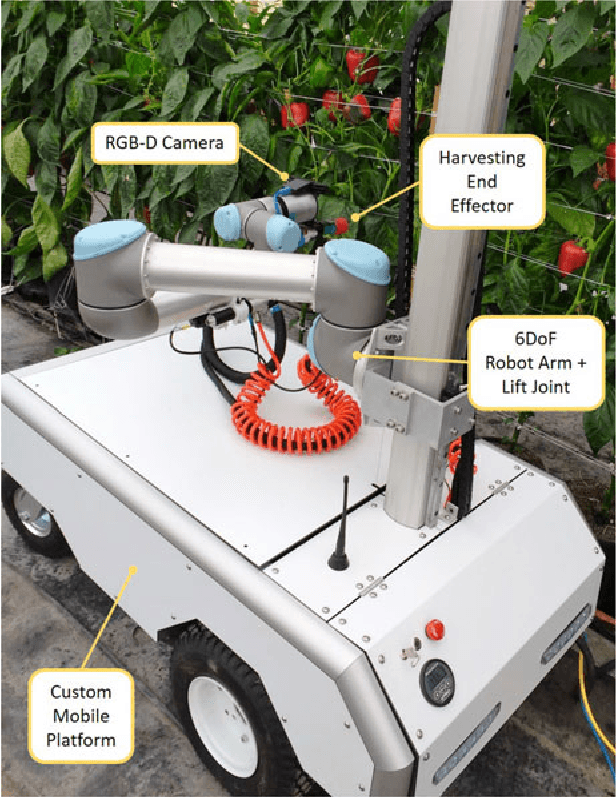

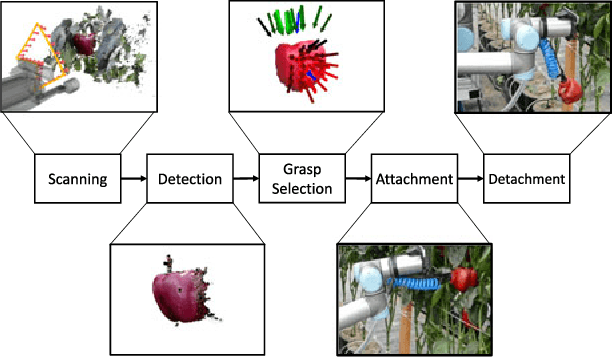

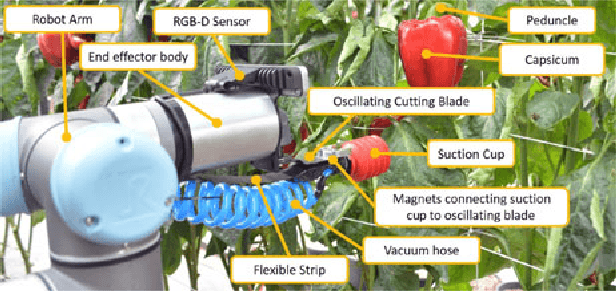

Lessons Learnt from Field Trials of a Robotic Sweet Pepper Harvester

Jun 19, 2017

Abstract: In this paper, we present the lessons learnt during the development of a new robotic harvester (Harvey) that can autonomously harvest sweet pepper (capsicum) in protected cropping environments. Robotic harvesting offers an attractive potential solution to reducing labour costs while enabling more regular and selective harvesting, optimising crop quality, scheduling and therefore profit. Our approach combines effective vision algorithms with a novel end-effector design to enable successful harvesting of sweet peppers. We demonstrate a simple and effective vision-based algorithm for crop detection, a grasp selection method, and a novel end-effector design for harvesting. To reduce the complexity of motion planning and to minimise occlusions we focus on picking sweet peppers in a protected cropping environment where plants are grown on planar trellis structures. Initial field trials in protected cropping environments, with two cultivars, demonstrate the efficacy of this approach. The results show that the robot harvester can successfully detect, grasp, and detach crop from the plant within a real protected cropping system. The novel contributions of this work have resulted in significant and encouraging improvements in sweet pepper picking success rates compared with the state-of-the-art. Future work will look at detecting sweet pepper peduncles and improving the total harvesting cycle time for each sweet pepper. The methods presented in this paper provide steps towards the goal of fully autonomous and reliable crop picking systems that will revolutionise the horticulture industry by reducing labour costs, maximising the quality of produce, and ultimately improving the sustainability of farming enterprises.

Autonomous Sweet Pepper Harvesting for Protected Cropping Systems

Jun 07, 2017

Abstract: In this letter, we present a new robotic harvester (Harvey) that can autonomously harvest sweet pepper in protected cropping environments. Our approach combines effective vision algorithms with a novel end-effector design to enable successful harvesting of sweet peppers. Initial field trials in protected cropping environments, with two cultivars, demonstrate the efficacy of this approach, achieving a 46% success rate for unmodified crop and 58% for modified crop. Furthermore, for the more favourable cultivar we were also able to detach 90% of sweet peppers, indicating that improvements in the grasping success rate would result in greatly improved harvesting performance.

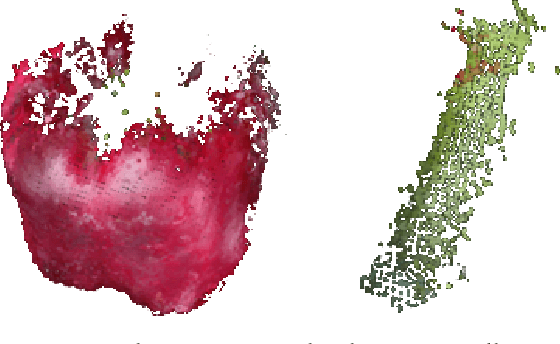

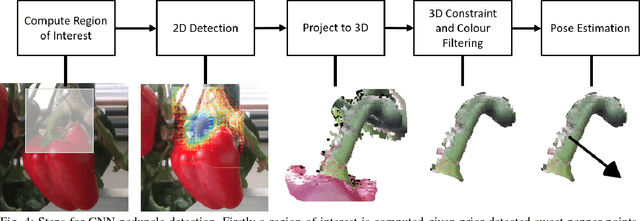

Peduncle Detection of Sweet Pepper for Autonomous Crop Harvesting - Combined Colour and 3D Information

Jan 30, 2017

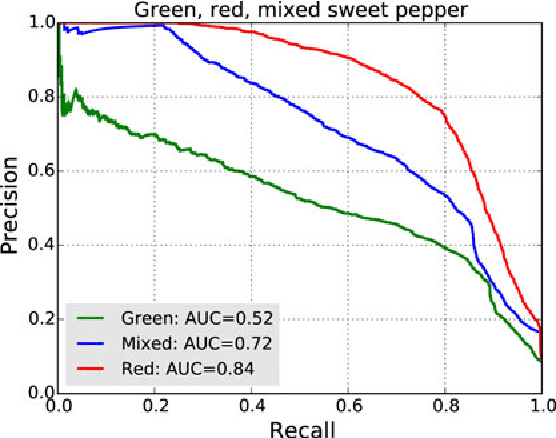

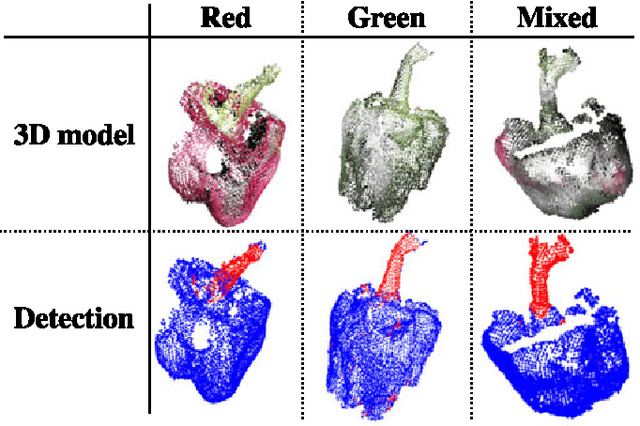

Abstract: This paper presents a 3D visual detection method for the challenging task of detecting peduncles of sweet peppers (Capsicum annuum) in the field. The peduncle is the part of the crop that attaches it to the main stem of the plant, and cutting it cleanly is one of the most difficult stages of the harvesting process. Accurate peduncle detection in 3D space is therefore a vital step in reliable autonomous harvesting of sweet peppers, as this can lead to precise cutting while avoiding damage to the surrounding plant. This paper makes use of both colour and geometry information acquired from an RGB-D sensor and utilises a supervised-learning approach for the peduncle detection task. The performance of the proposed method is demonstrated and evaluated using qualitative and quantitative results (the Area-Under-the-Curve (AUC) of the detection precision-recall curve). We are able to achieve an AUC of 0.71 for peduncle detection on field-grown sweet peppers. We release a set of manually annotated 3D sweet pepper and peduncle images to assist the research community in performing further research on this topic.
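As a rough illustration of the AUC metric mentioned above, the sketch below integrates a precision-recall curve with the trapezoidal rule. The abstract does not state how the AUC was computed, so the integration rule, the function name `pr_auc`, and the sample points are all assumptions made for illustration.

```python
from typing import Sequence


def pr_auc(recalls: Sequence[float], precisions: Sequence[float]) -> float:
    """Area under a precision-recall curve via the trapezoidal rule.

    Assumption: trapezoidal integration over recall; the paper may use a
    different interpolation scheme.
    """
    if len(recalls) != len(precisions):
        raise ValueError("recalls and precisions must have the same length")
    # Sort the operating points by recall so the integration runs left to right.
    points = sorted(zip(recalls, precisions))
    area = 0.0
    for (r0, p0), (r1, p1) in zip(points, points[1:]):
        area += (r1 - r0) * (p0 + p1) / 2.0
    return area


if __name__ == "__main__":
    # Hypothetical operating points, not data from the paper.
    print(pr_auc([0.0, 0.5, 1.0], [1.0, 0.5, 0.0]))
```

An AUC close to 1 means the detector keeps high precision across the whole range of recall, while lower values indicate that precision falls off as more peduncles are recovered.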
