A Heteroscedastic Likelihood Model for Two-frame Optical Flow

Oct 14, 2020

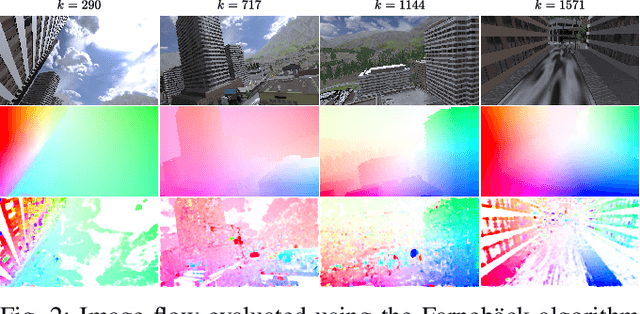

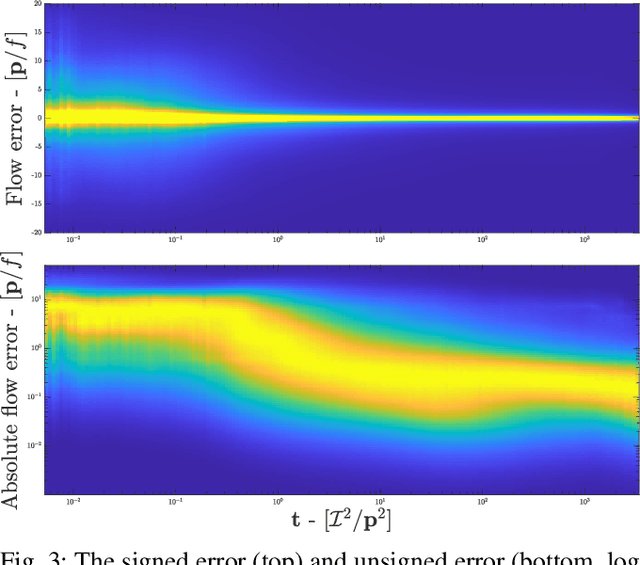

Machine vision is an important sensing technology in mobile robotic systems. Advancing the autonomy of such systems requires accurate characterisation of sensor uncertainty. Vision carries intrinsic uncertainty from the camera sensor and extrinsic uncertainty from environmental lighting and texture, both of which propagate through the image processing algorithms used to produce visual measurements. To characterise visual measurements faithfully, these uncertainties must be taken into account. In this paper, we propose a new class of likelihood functions that characterises the error distribution of two-frame optical flow and allows a heteroscedastic dependence on texture. We employ the proposed class to characterise the Farneback and Lucas-Kanade optical flow algorithms and achieve close agreement with their respective empirical error distributions over a wide range of texture in a simulated environment. The utility of the proposed likelihood model is demonstrated in a visual odometry ego-motion simulation study, which shows a 30-83% reduction in position drift rate compared with traditional methods that assume Gaussian errors. The development of an empirically congruent likelihood model advances the requisite tool-set for vision-based Bayesian inference and enables sensor data fusion with GPS, LiDAR and IMU to advance robust autonomous navigation.
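To make the term "heteroscedastic dependence on texture" concrete, the following is a minimal sketch in which the spread of the flow-error distribution varies with a texture measure of the local image patch. It is not the likelihood class proposed in the paper: the Gaussian density is used here only for simplicity, and the names `texture_measure` and `flow_error_scale` and the parameters `a` and `b` are hypothetical choices made for illustration.

```python
import numpy as np


def texture_measure(patch):
    """Hypothetical texture proxy: mean squared gradient magnitude of a patch."""
    gy, gx = np.gradient(patch.astype(float))
    return float(np.mean(gx**2 + gy**2))


def flow_error_scale(texture, a=0.05, b=1.0):
    """Hypothetical scale model: the error spread shrinks as texture increases."""
    return a + b / (1.0 + texture)


def gaussian_loglik(error, scale):
    """Log-density of a zero-mean Gaussian with standard deviation `scale`."""
    return -0.5 * np.log(2.0 * np.pi * scale**2) - 0.5 * (error / scale) ** 2


rng = np.random.default_rng(0)
# A nearly uniform patch (little texture) and a noisy patch (more texture).
flat_patch = np.full((8, 8), 0.5) + 0.001 * rng.standard_normal((8, 8))
textured_patch = rng.random((8, 8))

flat_scale = flow_error_scale(texture_measure(flat_patch))
textured_scale = flow_error_scale(texture_measure(textured_patch))

# The same flow error is scored against a texture-dependent spread.
flow_error = 3.0
print("low texture :", flat_scale, gaussian_loglik(flow_error, flat_scale))
print("high texture:", textured_scale, gaussian_loglik(flow_error, textured_scale))
```

Under these assumptions, a large flow error is penalised less in a low-texture region, where the modelled spread is wider, than in a well-textured region. A homoscedastic model would instead apply a single fixed spread everywhere.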