Tao Teng

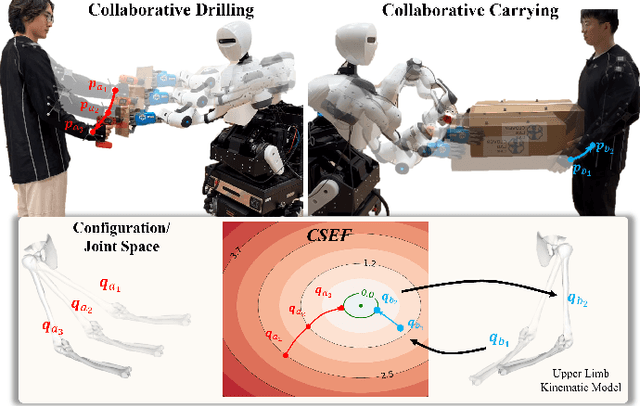

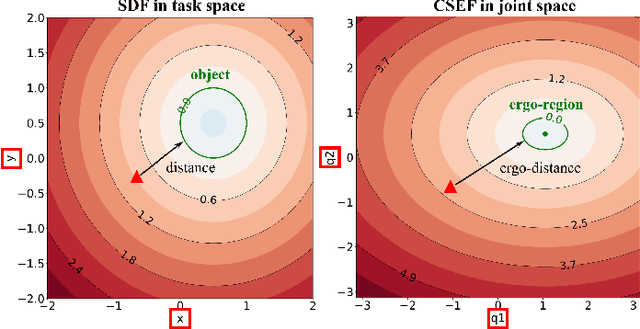

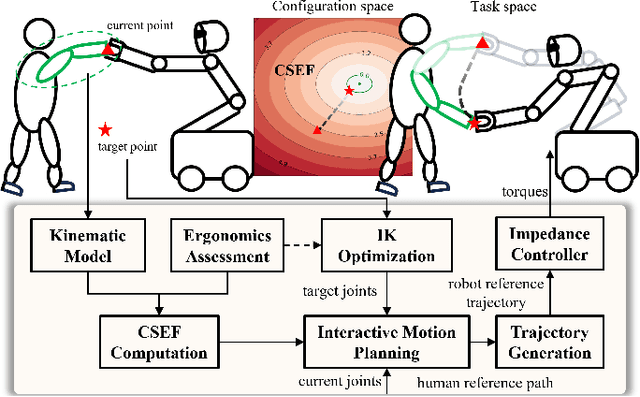

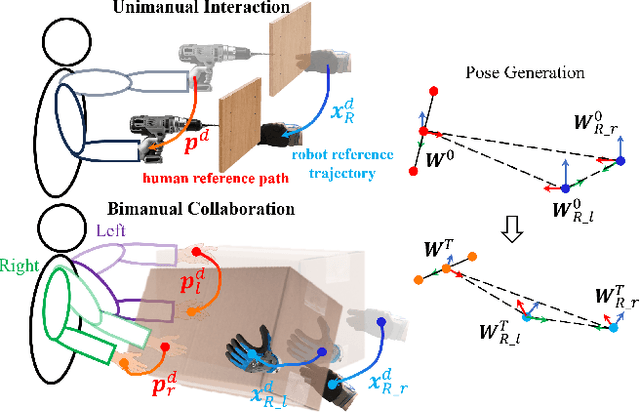

Interactive Motion Planning for Human-Robot Collaboration Based on Human-Centric Configuration Space Ergonomic Field

Dec 16, 2025

Abstract:Industrial human-robot collaboration requires motion planning that is collision-free, responsive, and ergonomically safe to reduce fatigue and musculoskeletal risk. We propose the Configuration Space Ergonomic Field (CSEF), a continuous and differentiable field over the human joint space that quantifies ergonomic quality and provides gradients for real-time ergonomics-aware planning. An efficient algorithm constructs CSEF from established metrics with joint-wise weighting and task conditioning, and we integrate it into a gradient-based planner compatible with impedance-controlled robots. In a 2-DoF benchmark, CSEF-based planning achieves higher success rates, lower ergonomic cost, and faster computation than a task-space ergonomic planner. Hardware experiments with a dual-arm robot in unimanual guidance, collaborative drilling, and bimanual cocarrying show faster ergonomic cost reduction, closer tracking to optimized joint targets, and lower muscle activation than a point-to-point baseline. CSEF-based planning method reduces average ergonomic scores by up to 10.31% for collaborative drilling tasks and 5.60% for bimanual co-carrying tasks while decreasing activation in key muscle groups, indicating practical benefits for real-world deployment.

Towards Deploying VLA without Fine-Tuning: Plug-and-Play Inference-Time VLA Policy Steering via Embodied Evolutionary Diffusion

Nov 18, 2025Abstract:Vision-Language-Action (VLA) models have demonstrated significant potential in real-world robotic manipulation. However, pre-trained VLA policies still suffer from substantial performance degradation during downstream deployment. Although fine-tuning can mitigate this issue, its reliance on costly demonstration collection and intensive computation makes it impractical in real-world settings. In this work, we introduce VLA-Pilot, a plug-and-play inference-time policy steering method for zero-shot deployment of pre-trained VLA without any additional fine-tuning or data collection. We evaluate VLA-Pilot on six real-world downstream manipulation tasks across two distinct robotic embodiments, encompassing both in-distribution and out-of-distribution scenarios. Experimental results demonstrate that VLA-Pilot substantially boosts the success rates of off-the-shelf pre-trained VLA policies, enabling robust zero-shot generalization to diverse tasks and embodiments. Experimental videos and code are available at: https://rip4kobe.github.io/vla-pilot/.

ManiDP: Manipulability-Aware Diffusion Policy for Posture-Dependent Bimanual Manipulation

Oct 27, 2025Abstract:Recent work has demonstrated the potential of diffusion models in robot bimanual skill learning. However, existing methods ignore the learning of posture-dependent task features, which are crucial for adapting dual-arm configurations to meet specific force and velocity requirements in dexterous bimanual manipulation. To address this limitation, we propose Manipulability-Aware Diffusion Policy (ManiDP), a novel imitation learning method that not only generates plausible bimanual trajectories, but also optimizes dual-arm configurations to better satisfy posture-dependent task requirements. ManiDP achieves this by extracting bimanual manipulability from expert demonstrations and encoding the encapsulated posture features using Riemannian-based probabilistic models. These encoded posture features are then incorporated into a conditional diffusion process to guide the generation of task-compatible bimanual motion sequences. We evaluate ManiDP on six real-world bimanual tasks, where the experimental results demonstrate a 39.33$\%$ increase in average manipulation success rate and a 0.45 improvement in task compatibility compared to baseline methods. This work highlights the importance of integrating posture-relevant robotic priors into bimanual skill diffusion to enable human-like adaptability and dexterity.

Whole-Body Coordination for Dynamic Object Grasping with Legged Manipulators

Aug 10, 2025Abstract:Quadrupedal robots with manipulators offer strong mobility and adaptability for grasping in unstructured, dynamic environments through coordinated whole-body control. However, existing research has predominantly focused on static-object grasping, neglecting the challenges posed by dynamic targets and thus limiting applicability in dynamic scenarios such as logistics sorting and human-robot collaboration. To address this, we introduce DQ-Bench, a new benchmark that systematically evaluates dynamic grasping across varying object motions, velocities, heights, object types, and terrain complexities, along with comprehensive evaluation metrics. Building upon this benchmark, we propose DQ-Net, a compact teacher-student framework designed to infer grasp configurations from limited perceptual cues. During training, the teacher network leverages privileged information to holistically model both the static geometric properties and dynamic motion characteristics of the target, and integrates a grasp fusion module to deliver robust guidance for motion planning. Concurrently, we design a lightweight student network that performs dual-viewpoint temporal modeling using only the target mask, depth map, and proprioceptive state, enabling closed-loop action outputs without reliance on privileged data. Extensive experiments on DQ-Bench demonstrate that DQ-Net achieves robust dynamic objects grasping across multiple task settings, substantially outperforming baseline methods in both success rate and responsiveness.

Human-Like Robot Impedance Regulation Skill Learning from Human-Human Demonstrations

Feb 19, 2025Abstract:Humans are experts in collaborating with others physically by regulating compliance behaviors based on the perception of their partner states and the task requirements. Enabling robots to develop proficiency in human collaboration skills can facilitate more efficient human-robot collaboration (HRC). This paper introduces an innovative impedance regulation skill learning framework for achieving HRC in multiple physical collaborative tasks. The framework is designed to adjust the robot compliance to the human partner states while adhering to reference trajectories provided by human-human demonstrations. Specifically, electromyography (EMG) signals from human muscles are collected and analyzed to extract limb impedance, representing compliance behaviors during demonstrations. Human endpoint motions are captured and represented using a probabilistic learning method to create reference trajectories and corresponding impedance profiles. Meanwhile, an LSTMbased module is implemented to develop task-oriented impedance regulation policies by mapping the muscle synergistic contributions between two demonstrators. Finally, we propose a wholebody impedance controller for a human-like robot, coordinating joint outputs to achieve the desired impedance and reference trajectory during task execution. Experimental validation was conducted through a collaborative transportation task and two interactive Tai Chi pushing hands tasks, demonstrating superior performance from the perspective of interactive forces compared to a constant impedance control method.

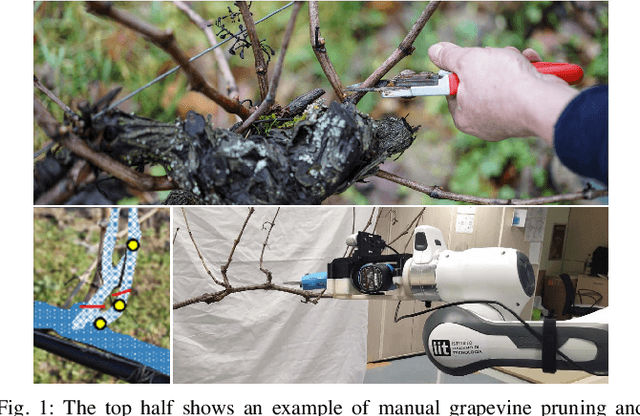

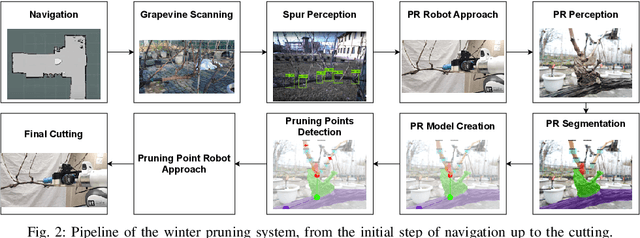

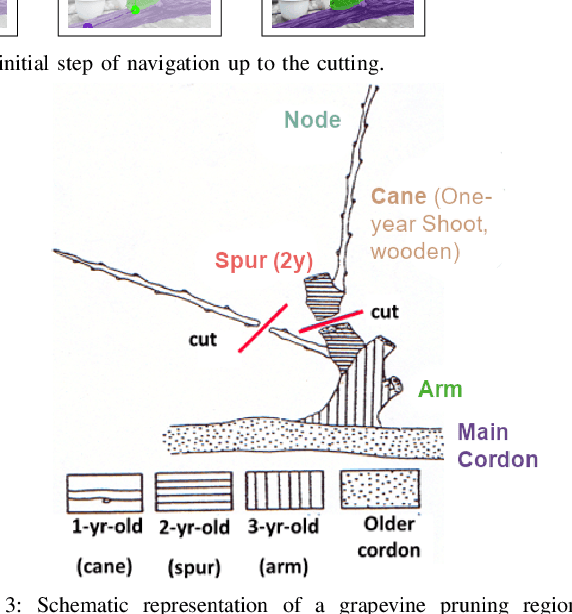

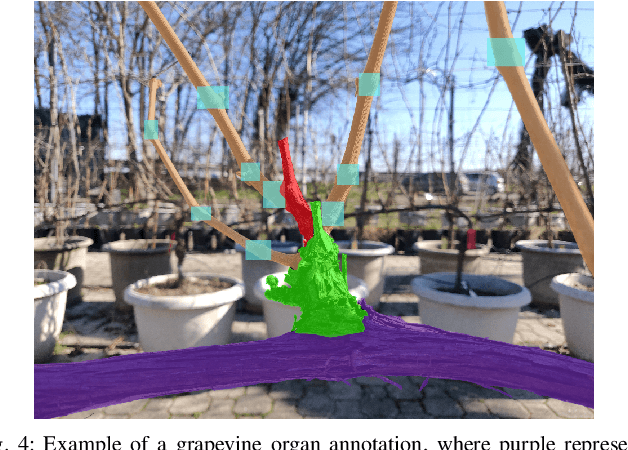

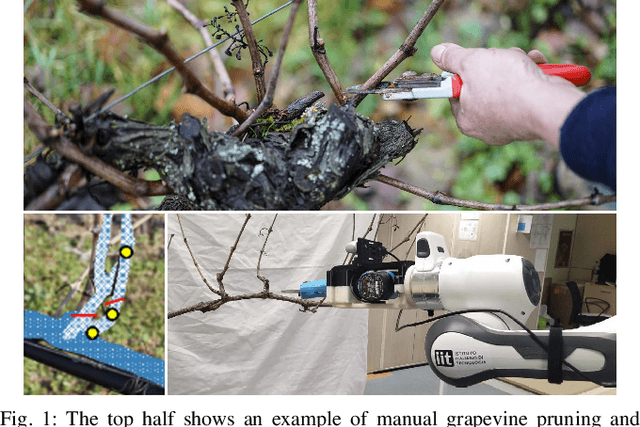

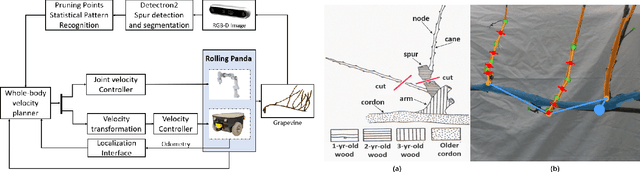

Towards Precise Pruning Points Detection using Semantic-Instance-Aware Plant Models for Grapevine Winter Pruning Automation

Sep 15, 2021

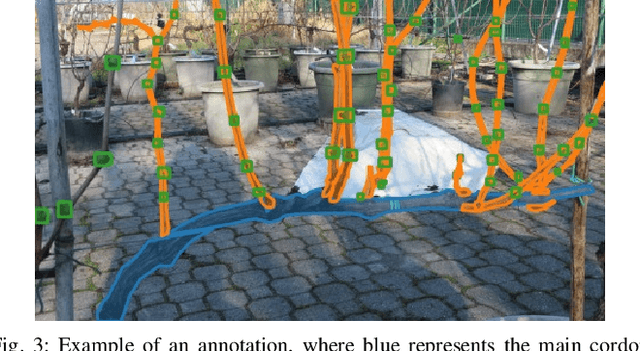

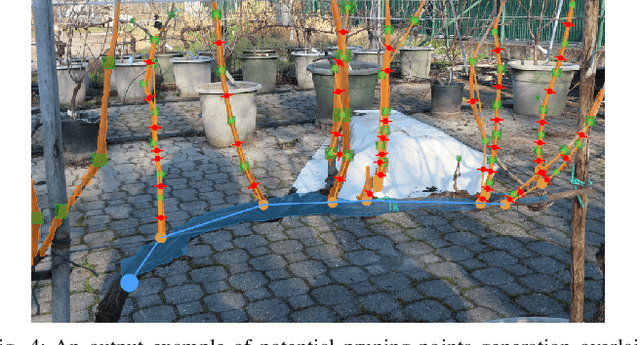

Abstract:Grapevine winter pruning is a complex task, that requires skilled workers to execute it correctly. The complexity makes it time consuming. It is an operation that requires about 80-120 hours per hectare annually, making an automated robotic system that helps in speeding up the process a crucial tool in large-size vineyards. We will describe (a) a novel expert annotated dataset for grapevine segmentation, (b) a state of the art neural network implementation and (c) generation of pruning points following agronomic rules, leveraging the simplified structure of the plant. With this approach, we are able to generate a set of pruning points on the canes, paving the way towards a correct automation of grapevine winter pruning.

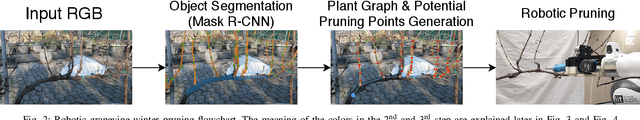

Grapevine Winter Pruning Automation: On Potential Pruning Points Detection through 2D Plant Modeling using Grapevine Segmentation

Jun 08, 2021

Abstract:Grapevine winter pruning is a complex task, that requires skilled workers to execute it correctly. The complexity of this task is also the reason why it is time consuming. Considering that this operation takes about 80-120 hours/ha to be completed, and therefore is even more crucial in large-size vineyards, an automated system can help to speed up the process. To this end, this paper presents a novel multidisciplinary approach that tackles this challenging task by performing object segmentation on grapevine images, used to create a representative model of the grapevine plants. Second, a set of potential pruning points is generated from this plant representation. We will describe (a) a methodology for data acquisition and annotation, (b) a neural network fine-tuning for grapevine segmentation, (c) an image processing based method for creating the representative model of grapevines, starting from the inferred segmentation and (d) potential pruning points detection and localization, based on the plant model which is a simplification of the grapevine structure. With this approach, we are able to identify a significant set of potential pruning points on the canes, that can be used, with further selection, to derive the final set of the real pruning points.

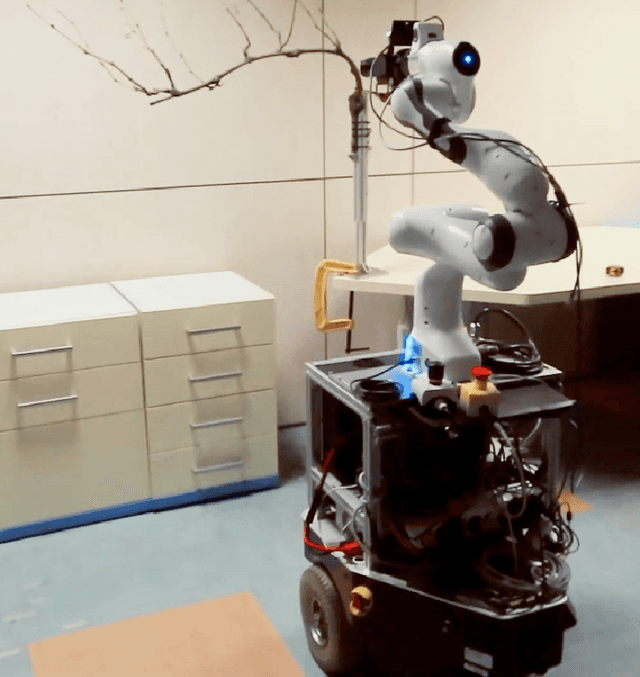

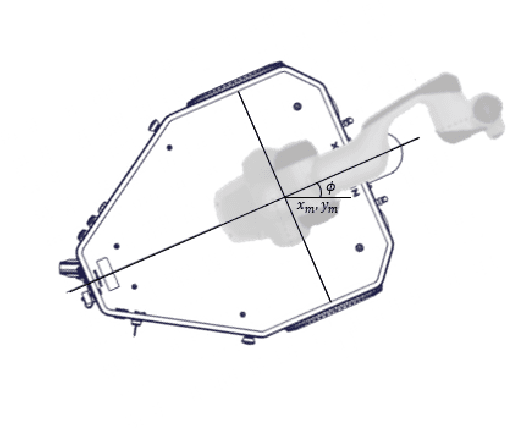

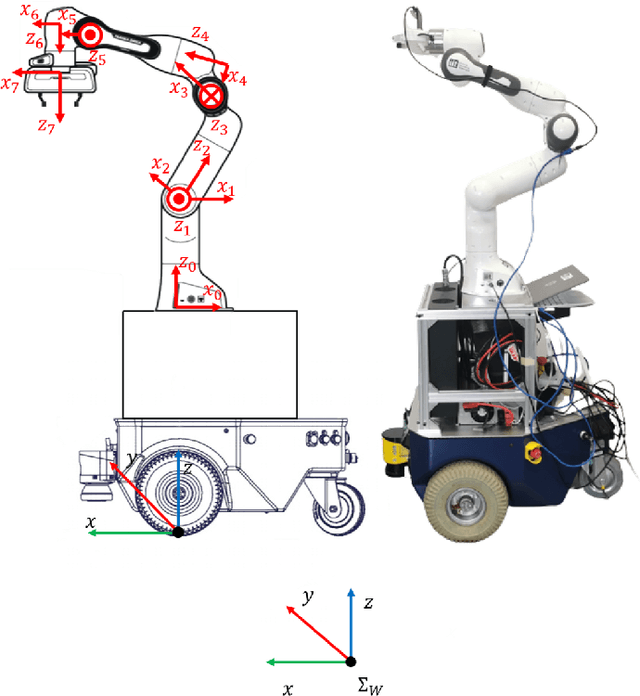

Whole-Body Control on Non-holonomic Mobile Manipulation for Grapevine Winter Pruning Automation

May 22, 2021

Abstract:Mobile manipulators that combine mobility and manipulability, are increasingly being used for various unstructured application scenarios in the field, e.g. vineyards. Therefore, the coordinated motion of the mobile base and manipulator is an essential feature of the overall performance. In this paper, we explore a whole-body motion controller of a robot which is composed of a 2-DoFs non-holonomic wheeled mobile base with a 7-DoFs manipulator (non-holonomic wheeled mobile manipulator, NWMM) This robotic platform is designed to efficiently undertake complex grapevine pruning tasks. In the control framework, a task priority coordinated motion of the NWMM is guaranteed. Lower-priority tasks are projected into the null space of the top-priority tasks so that higher-priority tasks are completed without interruption from lower-priority tasks. The proposed controller was evaluated in a grapevine spur pruning experiment scenario.

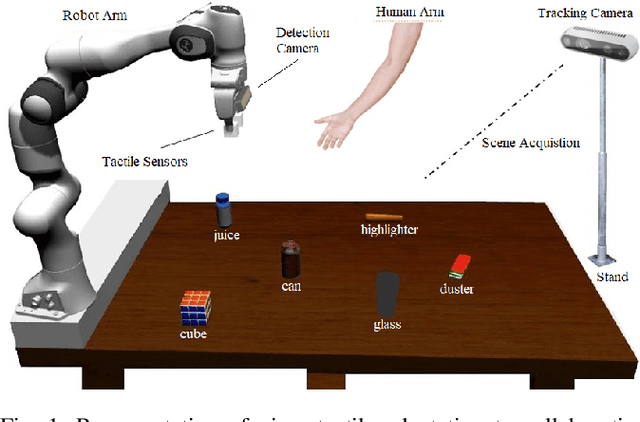

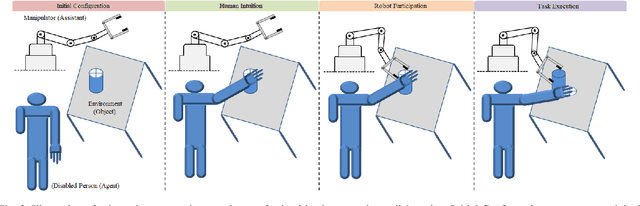

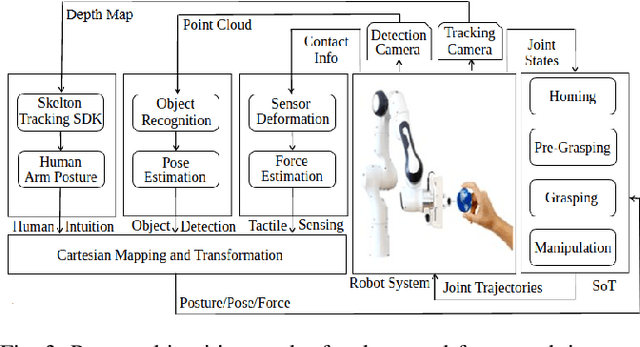

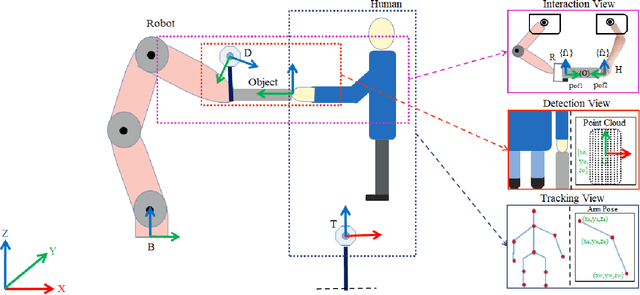

Formulating Intuitive Stack-of-Tasks with Visuo-Tactile Perception for Collaborative Human-Robot Fine Manipulation

Mar 09, 2021

Abstract:Enabling robots to work in close proximity with humans necessitates to employ not only multi-sensory information for coordinated and autonomous interactions but also a control framework that ensures adaptive and flexible collaborative behavior. Such a control framework needs to integrate accuracy and repeatability of robots with cognitive ability and adaptability of humans for co-manipulation. In this regard, an intuitive stack of tasks (iSOT) formulation is proposed, that defines the robots actions based on human ergonomics and task progress. The framework is augmented with visuo-tactile perception for flexible interaction and autonomous adaption. The visual information using depth cameras, monitors and estimates the object pose and human arm gesture while the tactile feedback provides exploration skills for maintaining the desired contact to avoid slippage. Experiments conducted on robot system with human partnership for assembly and disassembly tasks confirm the effectiveness and usability of proposed framework.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge