Rui Cai

Summarize Before You Speak with ARACH: A Training-Free Inference-Time Plug-In for Enhancing LLMs via Global Attention Reallocation

Mar 10, 2026Abstract:Large language models (LLMs) achieve remarkable performance, yet further gains often require costly training. This has motivated growing interest in post-training techniques-especially training-free approaches that improve models at inference time without updating weights. Most training-free methods treat the model as a black box and improve outputs via input/output-level interventions, such as prompt design and test-time scaling through repeated sampling, reranking/verification, or search. In contrast, they rarely offer a plug-and-play mechanism to intervene in a model's internal computation. We propose ARACH(Attention Reallocation via an Adaptive Context Hub), a training-free inference-time plug-in that augments LLMs with an adaptive context hub to aggregate context and reallocate attention. Extensive experiments across multiple language modeling tasks show consistent improvements with modest inference overhead and no parameter updates. Attention analyses further suggest that ARACH mitigates the attention sink phenomenon. These results indicate that engineering a model's internal computation offers a distinct inference-time strategy, fundamentally different from both prompt-based test-time methods and training-based post-training approaches.

Xiaomi-Robotics-0: An Open-Sourced Vision-Language-Action Model with Real-Time Execution

Feb 13, 2026Abstract:In this report, we introduce Xiaomi-Robotics-0, an advanced vision-language-action (VLA) model optimized for high performance and fast and smooth real-time execution. The key to our method lies in a carefully designed training recipe and deployment strategy. Xiaomi-Robotics-0 is first pre-trained on large-scale cross-embodiment robot trajectories and vision-language data, endowing it with broad and generalizable action-generation capabilities while avoiding catastrophic forgetting of the visual-semantic knowledge of the underlying pre-trained VLM. During post-training, we propose several techniques for training the VLA model for asynchronous execution to address the inference latency during real-robot rollouts. During deployment, we carefully align the timesteps of consecutive predicted action chunks to ensure continuous and seamless real-time rollouts. We evaluate Xiaomi-Robotics-0 extensively in simulation benchmarks and on two challenging real-robot tasks that require precise and dexterous bimanual manipulation. Results show that our method achieves state-of-the-art performance across all simulation benchmarks. Moreover, Xiaomi-Robotics-0 can roll out fast and smoothly on real robots using a consumer-grade GPU, achieving high success rates and throughput on both real-robot tasks. To facilitate future research, code and model checkpoints are open-sourced at https://xiaomi-robotics-0.github.io

CARPE: Context-Aware Image Representation Prioritization via Ensemble for Large Vision-Language Models

Jan 20, 2026Abstract:Recent advancements in Large Vision-Language Models (LVLMs) have pushed them closer to becoming general-purpose assistants. Despite their strong performance, LVLMs still struggle with vision-centric tasks such as image classification, underperforming compared to their base vision encoders, which are often CLIP-based models. To address this limitation, we propose Context-Aware Image Representation Prioritization via Ensemble (CARPE), a novel, model-agnostic framework which introduces vision-integration layers and a context-aware ensemble strategy to identify when to prioritize image representations or rely on the reasoning capabilities of the language model. This design enhances the model's ability to adaptively weight visual and textual modalities and enables the model to capture various aspects of image representations, leading to consistent improvements in generalization across classification and vision-language benchmarks. Extensive experiments demonstrate that CARPE not only improves performance on image classification benchmarks but also enhances results across various vision-language benchmarks. Finally, CARPE is designed to be effectively integrated with most open-source LVLMs that consist of a vision encoder and a language model, ensuring its adaptability across diverse architectures.

Diagnosing and Mitigating Modality Interference in Multimodal Large Language Models

May 26, 2025

Abstract:Multimodal Large Language Models (MLLMs) have demonstrated impressive capabilities across tasks, yet they often exhibit difficulty in distinguishing task-relevant from irrelevant signals, particularly in tasks like Visual Question Answering (VQA), which can lead to susceptibility to misleading or spurious inputs. We refer to this broader limitation as the Cross-Modality Competency Problem: the model's inability to fairly evaluate all modalities. This vulnerability becomes more evident in modality-specific tasks such as image classification or pure text question answering, where models are expected to rely solely on one modality. In such tasks, spurious information from irrelevant modalities often leads to significant performance degradation. We refer to this failure as Modality Interference, which serves as a concrete and measurable instance of the cross-modality competency problem. We further design a perturbation-based causal diagnostic experiment to verify and quantify this problem. To mitigate modality interference, we propose a novel framework to fine-tune MLLMs, including perturbation-based data augmentations with both heuristic perturbations and adversarial perturbations via Projected Gradient Descent (PGD), and a consistency regularization strategy applied to model outputs with original and perturbed inputs. Experiments on multiple benchmark datasets (image-heavy, text-heavy, and VQA tasks) and multiple model families with different scales demonstrate significant improvements in robustness and cross-modality competency, indicating our method's effectiveness in boosting unimodal reasoning ability while enhancing performance on multimodal tasks.

Boosting Knowledge Graph-based Recommendations through Confidence-Aware Augmentation with Large Language Models

Feb 06, 2025

Abstract:Knowledge Graph-based recommendations have gained significant attention due to their ability to leverage rich semantic relationships. However, constructing and maintaining Knowledge Graphs (KGs) is resource-intensive, and the accuracy of KGs can suffer from noisy, outdated, or irrelevant triplets. Recent advancements in Large Language Models (LLMs) offer a promising way to improve the quality and relevance of KGs for recommendation tasks. Despite this, integrating LLMs into KG-based systems presents challenges, such as efficiently augmenting KGs, addressing hallucinations, and developing effective joint learning methods. In this paper, we propose the Confidence-aware KG-based Recommendation Framework with LLM Augmentation (CKG-LLMA), a novel framework that combines KGs and LLMs for recommendation task. The framework includes: (1) an LLM-based subgraph augmenter for enriching KGs with high-quality information, (2) a confidence-aware message propagation mechanism to filter noisy triplets, and (3) a dual-view contrastive learning method to integrate user-item interactions and KG data. Additionally, we employ a confidence-aware explanation generation process to guide LLMs in producing realistic explanations for recommendations. Finally, extensive experiments demonstrate the effectiveness of CKG-LLMA across multiple public datasets.

Dynamic Adapter with Semantics Disentangling for Cross-lingual Cross-modal Retrieval

Dec 18, 2024

Abstract:Existing cross-modal retrieval methods typically rely on large-scale vision-language pair data. This makes it challenging to efficiently develop a cross-modal retrieval model for under-resourced languages of interest. Therefore, Cross-lingual Cross-modal Retrieval (CCR), which aims to align vision and the low-resource language (the target language) without using any human-labeled target-language data, has gained increasing attention. As a general parameter-efficient way, a common solution is to utilize adapter modules to transfer the vision-language alignment ability of Vision-Language Pretraining (VLP) models from a source language to a target language. However, these adapters are usually static once learned, making it difficult to adapt to target-language captions with varied expressions. To alleviate it, we propose Dynamic Adapter with Semantics Disentangling (DASD), whose parameters are dynamically generated conditioned on the characteristics of the input captions. Considering that the semantics and expression styles of the input caption largely influence how to encode it, we propose a semantic disentangling module to extract the semantic-related and semantic-agnostic features from the input, ensuring that generated adapters are well-suited to the characteristics of input caption. Extensive experiments on two image-text datasets and one video-text dataset demonstrate the effectiveness of our model for cross-lingual cross-modal retrieval, as well as its good compatibility with various VLP models.

Zero-Shot Relational Learning for Multimodal Knowledge Graphs

Apr 09, 2024Abstract:Relational learning is an essential task in the domain of knowledge representation, particularly in knowledge graph completion (KGC).While relational learning in traditional single-modal settings has been extensively studied, exploring it within a multimodal KGC context presents distinct challenges and opportunities. One of the major challenges is inference on newly discovered relations without any associated training data. This zero-shot relational learning scenario poses unique requirements for multimodal KGC, i.e., utilizing multimodality to facilitate relational learning. However, existing works fail to support the leverage of multimodal information and leave the problem unexplored. In this paper, we propose a novel end-to-end framework, consisting of three components, i.e., multimodal learner, structure consolidator, and relation embedding generator, to integrate diverse multimodal information and knowledge graph structures to facilitate the zero-shot relational learning. Evaluation results on two multimodal knowledge graphs demonstrate the superior performance of our proposed method.

Masked Face Dataset Generation and Masked Face Recognition

Nov 13, 2023

Abstract:In the post-pandemic era, wearing face masks has posed great challenge to the ordinary face recognition. In the previous study, researchers has applied pretrained VGG16, and ResNet50 to extract features on the elaborate curated existing masked face recognition (MFR) datasets, RMFRD and SMFRD. To make the model more adaptable to the real world situation where the sample size is smaller and the camera environment has greater changes, we created a more challenging masked face dataset ourselves, by selecting 50 identities with 1702 images from Labelled Faces in the Wild (LFW) Dataset, and simulated face masks through key point detection. The another part of our study is to solve the masked face recognition problem, and we chose models by referring to the former state of the art results, instead of directly using pretrained models, we fine tuned the model on our new dataset and use the last linear layer to do the classification directly. Furthermore, we proposed using data augmentation strategy to further increase the test accuracy, and fine tuned a new networks beyond the former study, one of the most SOTA networks, Inception ResNet v1. The best test accuracy on 50 identity MFR has achieved 95%.

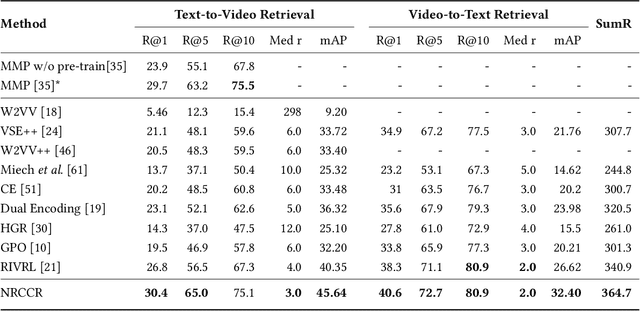

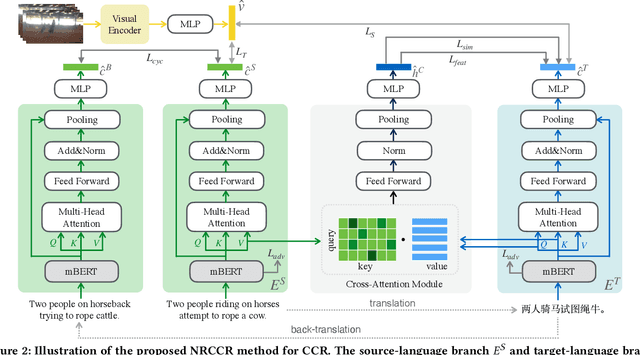

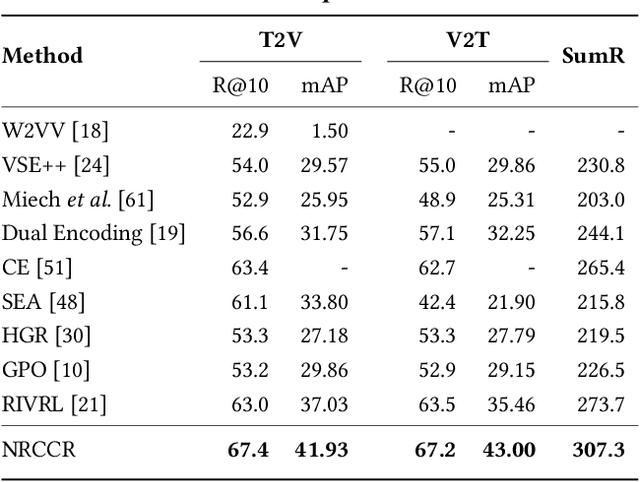

Cross-Lingual Cross-Modal Retrieval with Noise-Robust Learning

Aug 26, 2022

Abstract:Despite the recent developments in the field of cross-modal retrieval, there has been less research focusing on low-resource languages due to the lack of manually annotated datasets. In this paper, we propose a noise-robust cross-lingual cross-modal retrieval method for low-resource languages. To this end, we use Machine Translation (MT) to construct pseudo-parallel sentence pairs for low-resource languages. However, as MT is not perfect, it tends to introduce noise during translation, rendering textual embeddings corrupted and thereby compromising the retrieval performance. To alleviate this, we introduce a multi-view self-distillation method to learn noise-robust target-language representations, which employs a cross-attention module to generate soft pseudo-targets to provide direct supervision from the similarity-based view and feature-based view. Besides, inspired by the back-translation in unsupervised MT, we minimize the semantic discrepancies between origin sentences and back-translated sentences to further improve the noise robustness of the textual encoder. Extensive experiments are conducted on three video-text and image-text cross-modal retrieval benchmarks across different languages, and the results demonstrate that our method significantly improves the overall performance without using extra human-labeled data. In addition, equipped with a pre-trained visual encoder from a recent vision-and-language pre-training framework, i.e., CLIP, our model achieves a significant performance gain, showing that our method is compatible with popular pre-training models. Code and data are available at https://github.com/HuiGuanLab/nrccr.

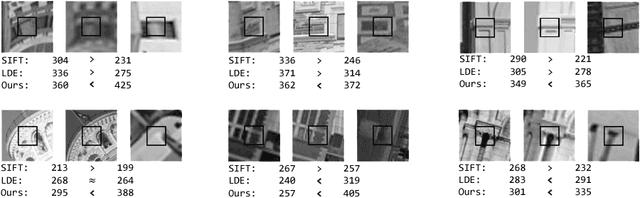

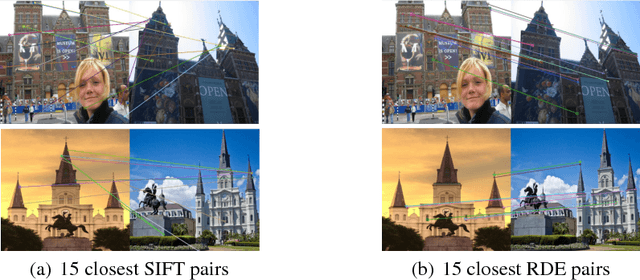

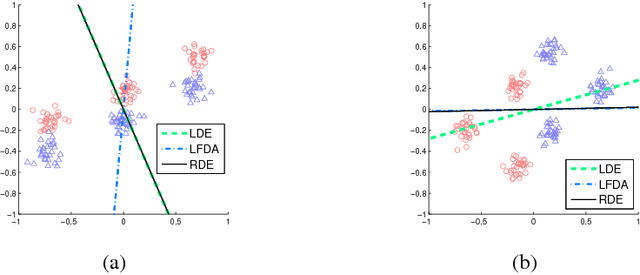

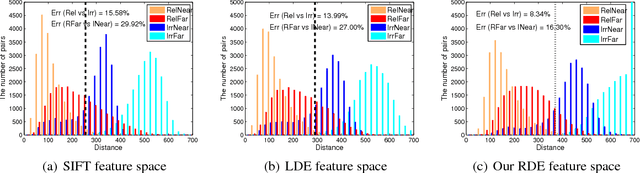

Regularized Discriminant Embedding for Visual Descriptor Learning

Jan 16, 2013

Abstract:Images can vary according to changes in viewpoint, resolution, noise, and illumination. In this paper, we aim to learn representations for an image, which are robust to wide changes in such environmental conditions, using training pairs of matching and non-matching local image patches that are collected under various environmental conditions. We present a regularized discriminant analysis that emphasizes two challenging categories among the given training pairs: (1) matching, but far apart pairs and (2) non-matching, but close pairs in the original feature space (e.g., SIFT feature space). Compared to existing work on metric learning and discriminant analysis, our method can better distinguish relevant images from irrelevant, but look-alike images.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge