Kye-Hyeon Kim

Transferable Candidate Proposal with Bounded Uncertainty

Dec 07, 2023Abstract:From an empirical perspective, the subset chosen through active learning cannot guarantee an advantage over random sampling when transferred to another model. While it underscores the significance of verifying transferability, experimental design from previous works often neglected that the informativeness of a data subset can change over model configurations. To tackle this issue, we introduce a new experimental design, coined as Candidate Proposal, to find transferable data candidates from which active learning algorithms choose the informative subset. Correspondingly, a data selection algorithm is proposed, namely Transferable candidate proposal with Bounded Uncertainty (TBU), which constrains the pool of transferable data candidates by filtering out the presumably redundant data points based on uncertainty estimation. We verified the validity of TBU in image classification benchmarks, including CIFAR-10/100 and SVHN. When transferred to different model configurations, TBU consistency improves performance in existing active learning algorithms. Our code is available at https://github.com/gokyeongryeol/TBU.

PVANet: Lightweight Deep Neural Networks for Real-time Object Detection

Dec 09, 2016

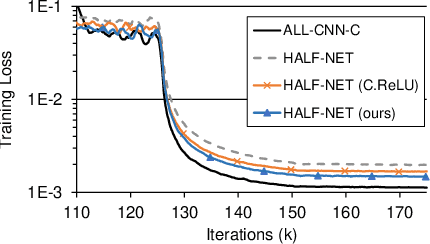

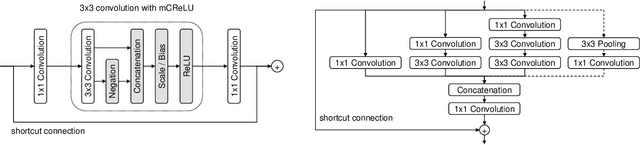

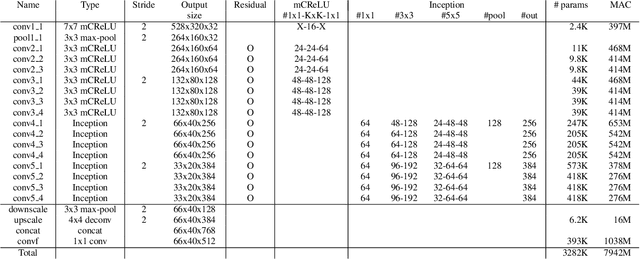

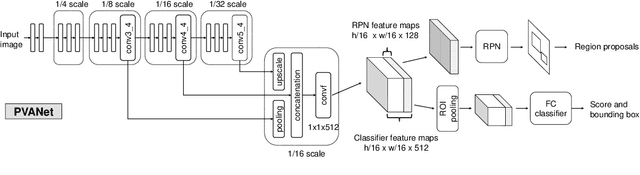

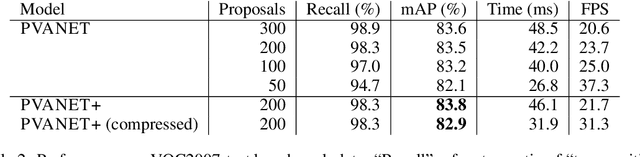

Abstract:In object detection, reducing computational cost is as important as improving accuracy for most practical usages. This paper proposes a novel network structure, which is an order of magnitude lighter than other state-of-the-art networks while maintaining the accuracy. Based on the basic principle of more layers with less channels, this new deep neural network minimizes its redundancy by adopting recent innovations including C.ReLU and Inception structure. We also show that this network can be trained efficiently to achieve solid results on well-known object detection benchmarks: 84.9% and 84.2% mAP on VOC2007 and VOC2012 while the required compute is less than 10% of the recent ResNet-101.

PVANET: Deep but Lightweight Neural Networks for Real-time Object Detection

Sep 30, 2016

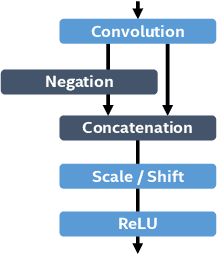

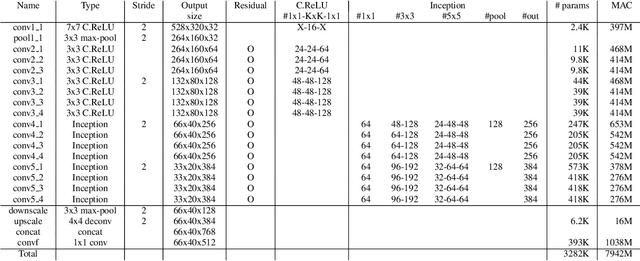

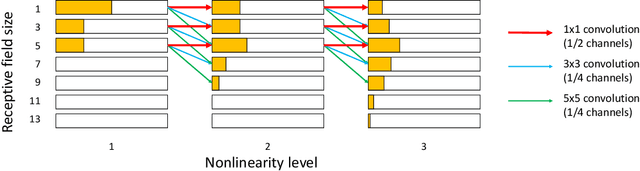

Abstract:This paper presents how we can achieve the state-of-the-art accuracy in multi-category object detection task while minimizing the computational cost by adapting and combining recent technical innovations. Following the common pipeline of "CNN feature extraction + region proposal + RoI classification", we mainly redesign the feature extraction part, since region proposal part is not computationally expensive and classification part can be efficiently compressed with common techniques like truncated SVD. Our design principle is "less channels with more layers" and adoption of some building blocks including concatenated ReLU, Inception, and HyperNet. The designed network is deep and thin and trained with the help of batch normalization, residual connections, and learning rate scheduling based on plateau detection. We obtained solid results on well-known object detection benchmarks: 83.8% mAP (mean average precision) on VOC2007 and 82.5% mAP on VOC2012 (2nd place), while taking only 750ms/image on Intel i7-6700K CPU with a single core and 46ms/image on NVIDIA Titan X GPU. Theoretically, our network requires only 12.3% of the computational cost compared to ResNet-101, the winner on VOC2012.

Regularized Discriminant Embedding for Visual Descriptor Learning

Jan 16, 2013

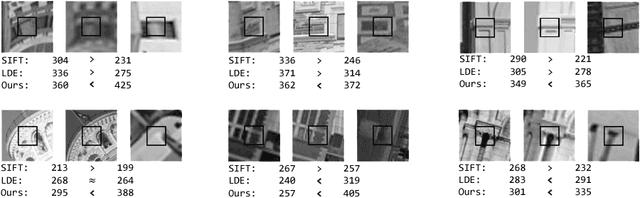

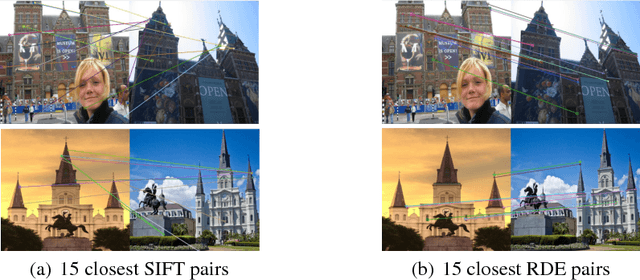

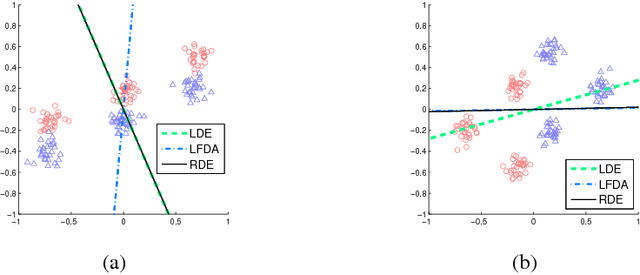

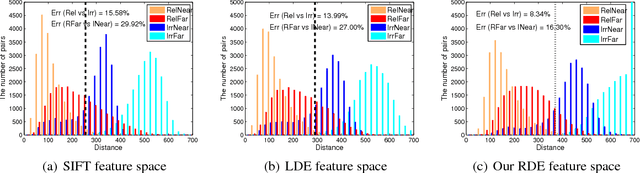

Abstract:Images can vary according to changes in viewpoint, resolution, noise, and illumination. In this paper, we aim to learn representations for an image, which are robust to wide changes in such environmental conditions, using training pairs of matching and non-matching local image patches that are collected under various environmental conditions. We present a regularized discriminant analysis that emphasizes two challenging categories among the given training pairs: (1) matching, but far apart pairs and (2) non-matching, but close pairs in the original feature space (e.g., SIFT feature space). Compared to existing work on metric learning and discriminant analysis, our method can better distinguish relevant images from irrelevant, but look-alike images.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge