Qiming Bao

CoRA: Optimizing Low-Rank Adaptation with Common Subspace of Large Language Models

Aug 31, 2024

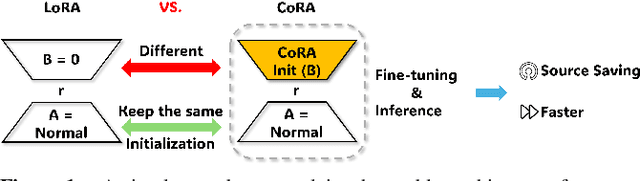

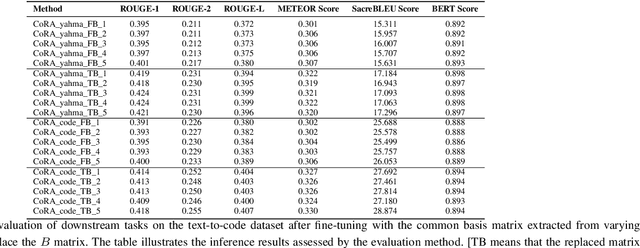

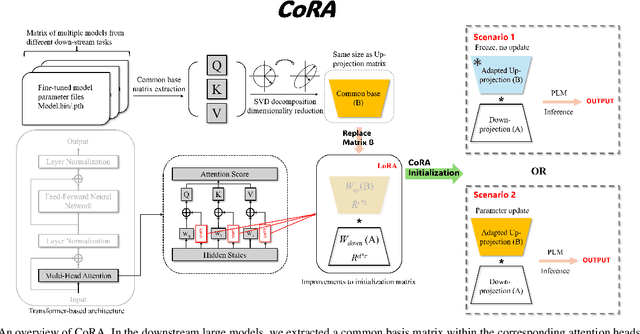

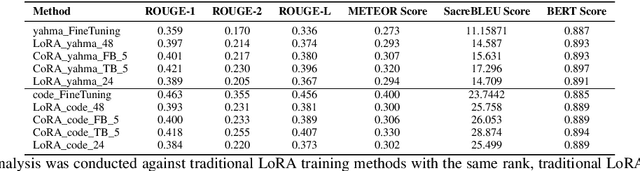

Abstract:In fine-tuning large language models (LLMs), conserving computational resources while maintaining effectiveness and improving outcomes within the same computational constraints is crucial. The Low-Rank Adaptation (LoRA) strategy balances efficiency and performance in fine-tuning large models by reducing the number of trainable parameters and computational costs. However, current advancements in LoRA might be focused on its fine-tuning methodologies, with not as much exploration as might be expected into further compression of LoRA. Since most of LoRA's parameters might still be superfluous, this may lead to unnecessary wastage of computational resources. In this paper, we propose \textbf{CoRA}: leveraging shared knowledge to optimize LoRA training by substituting its matrix $B$ with a common subspace from large models. Our two-fold method includes (1) Freezing the substitute matrix $B$ to halve parameters while training matrix $A$ for specific tasks and (2) Using the substitute matrix $B$ as an enhanced initial state for the original matrix $B$, achieving improved results with the same parameters. Our experiments show that the first approach achieves the same efficacy as the original LoRA fine-tuning while being more efficient than halving parameters. At the same time, the second approach has some improvements compared to LoRA's original fine-tuning performance. They generally attest to the effectiveness of our work.

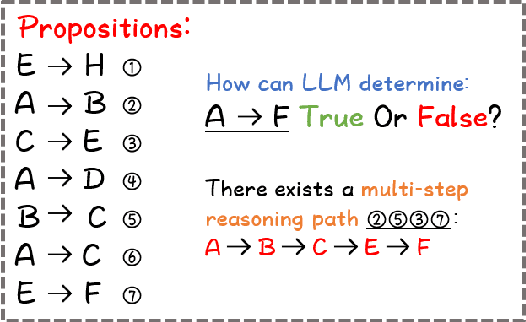

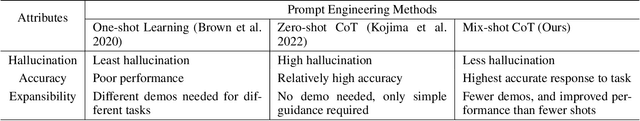

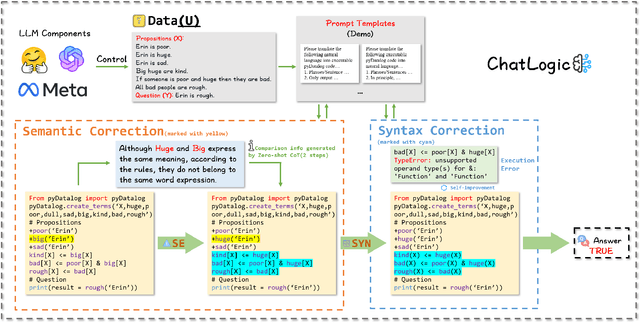

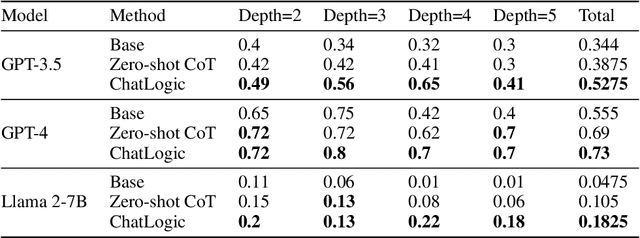

ChatLogic: Integrating Logic Programming with Large Language Models for Multi-Step Reasoning

Jul 14, 2024

Abstract:Large language models (LLMs) such as ChatGPT and GPT-4 have demonstrated impressive capabilities in various generative tasks. However, their performance is often hampered by limitations in accessing and leveraging long-term memory, leading to specific vulnerabilities and biases, especially during long interactions. This paper introduces ChatLogic, an innovative framework specifically targeted at LLM reasoning tasks that can enhance the performance of LLMs in multi-step deductive reasoning tasks by integrating logic programming. In ChatLogic, the language model plays a central role, acting as a controller and participating in every system operation stage. We propose a novel method of converting logic problems into symbolic integration with an inference engine. This approach leverages large language models' situational understanding and imitation skills and uses symbolic memory to enhance multi-step deductive reasoning capabilities. Our results show that the ChatLogic framework significantly improves the multi-step reasoning capabilities of LLMs. The source code and data are available at \url{https://github.com/Strong-AI-Lab/ChatLogic}

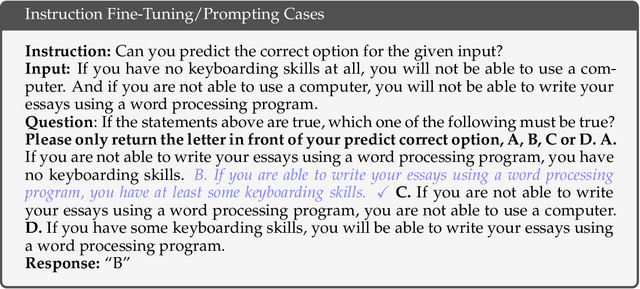

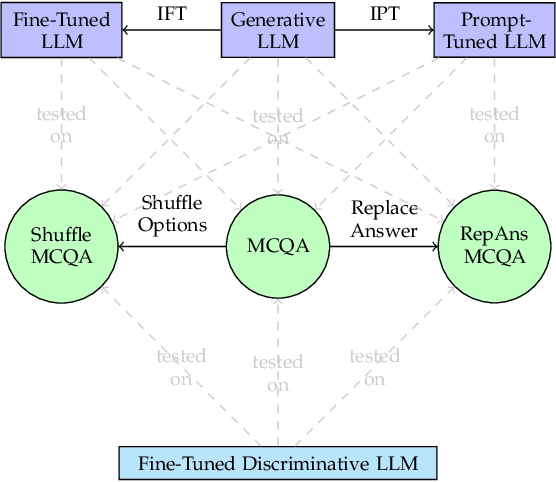

A Systematic Evaluation of Large Language Models on Out-of-Distribution Logical Reasoning Tasks

Oct 18, 2023

Abstract:Large language models (LLMs), such as GPT-3.5 and GPT-4, have greatly advanced the performance of artificial systems on various natural language processing tasks to human-like levels. However, their generalisation and robustness to perform logical reasoning remain under-evaluated. To probe this ability, we propose three new logical reasoning datasets named "ReClor-plus", "LogiQA-plus" and "LogiQAv2-plus", each featuring three subsets: the first with randomly shuffled options, the second with the correct choices replaced by "none of the other options are correct", and a combination of the previous two subsets. We carry out experiments on these datasets with both discriminative and generative LLMs and show that these simple tricks greatly hinder the performance of the language models. Despite their superior performance on the original publicly available datasets, we find that all models struggle to answer our newly constructed datasets. We show that introducing task variations by perturbing a sizable training set can markedly improve the model's generalisation and robustness in logical reasoning tasks. Moreover, applying logic-driven data augmentation for fine-tuning, combined with prompting can enhance the generalisation performance of both discriminative large language models and generative large language models. These results offer insights into assessing and improving the generalisation and robustness of large language models for logical reasoning tasks. We make our source code and data publicly available \url{https://github.com/Strong-AI-Lab/Logical-and-abstract-reasoning}.

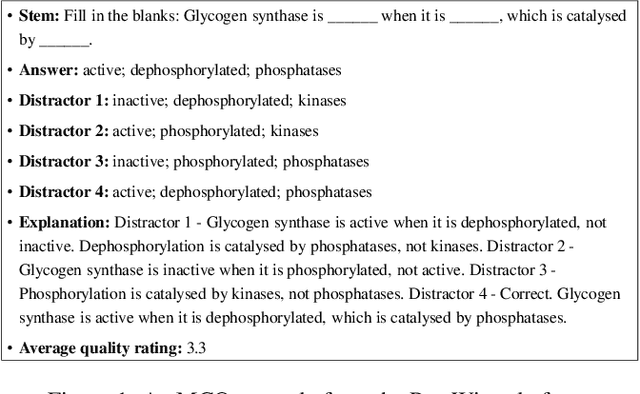

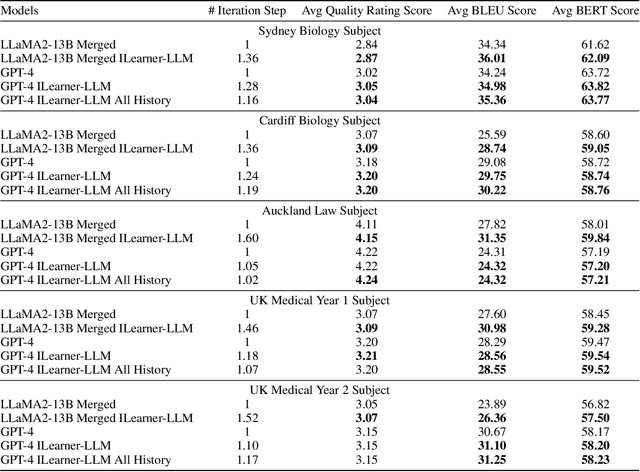

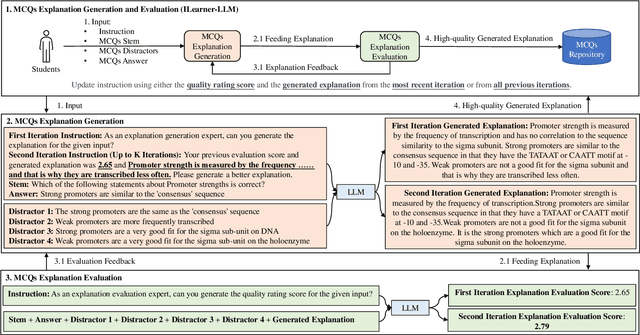

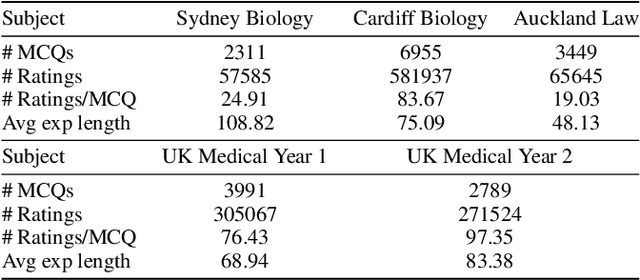

Exploring Self-Reinforcement for Improving Learnersourced Multiple-Choice Question Explanations with Large Language Models

Sep 19, 2023

Abstract:Learnersourcing involves students generating and sharing learning resources with their peers. When learnersourcing multiple-choice questions, creating explanations for the generated questions is a crucial step as it facilitates a deeper understanding of the related concepts. However, it is often difficult for students to craft effective explanations due to limited subject understanding and a tendency to merely restate the question stem, distractors, and correct answer. To help scaffold this task, in this work we propose a self-reinforcement large-language-model framework, with the goal of generating and evaluating explanations automatically. Comprising three modules, the framework generates student-aligned explanations, evaluates these explanations to ensure their quality and iteratively enhances the explanations. If an explanation's evaluation score falls below a defined threshold, the framework iteratively refines and reassesses the explanation. Importantly, our framework emulates the manner in which students compose explanations at the relevant grade level. For evaluation, we had a human subject-matter expert compare the explanations generated by students with the explanations created by the open-source large language model Vicuna-13B, a version of Vicuna-13B that had been fine-tuned using our method, and by GPT-4. We observed that, when compared to other large language models, GPT-4 exhibited a higher level of creativity in generating explanations. We also found that explanations generated by GPT-4 were ranked higher by the human expert than both those created by the other models and the original student-created explanations. Our findings represent a significant advancement in enriching the learnersourcing experience for students and enhancing the capabilities of large language models in educational applications.

Large Language Models Are Not Abstract Reasoners

May 31, 2023Abstract:Large Language Models have shown tremendous performance on a large variety of natural language processing tasks, ranging from text comprehension to common sense reasoning. However, the mechanisms responsible for this success remain unknown, and it is unclear whether LLMs can achieve human-like cognitive capabilities or whether these models are still fundamentally limited. Abstract reasoning is a fundamental task for cognition, consisting of finding and applying a general pattern from few data. Evaluating deep neural architectures on this task could give insight into their potential limitations regarding reasoning and their broad generalisation abilities, yet this is currently an under-explored area. In this paper, we perform extensive evaluations of state-of-the-art LLMs on abstract reasoning tasks, showing that they achieve very limited performance in contrast with other natural language tasks, and we investigate the reasons for this difference. We apply techniques that have been shown to improve performance on other NLP tasks and show that in most cases their impact on abstract reasoning performance is limited. In the course of this work, we have generated a new benchmark for evaluating language models on abstract reasoning tasks.

Contrastive Learning with Logic-driven Data Augmentation for Logical Reasoning over Text

May 21, 2023Abstract:Pre-trained large language model (LLM) is under exploration to perform NLP tasks that may require logical reasoning. Logic-driven data augmentation for representation learning has been shown to improve the performance of tasks requiring logical reasoning, but most of these data rely on designed templates and therefore lack generalization. In this regard, we propose an AMR-based logical equivalence-driven data augmentation method (AMR-LE) for generating logically equivalent data. Specifically, we first parse a text into the form of an AMR graph, next apply four logical equivalence laws (contraposition, double negation, commutative and implication laws) on the AMR graph to construct a logically equivalent/inequivalent AMR graph, and then convert it into a logically equivalent/inequivalent sentence. To help the model to better learn these logical equivalence laws, we propose a logical equivalence-driven contrastive learning training paradigm, which aims to distinguish the difference between logical equivalence and inequivalence. Our AMR-LE (Ensemble) achieves #2 on the ReClor leaderboard https://eval.ai/web/challenges/challenge-page/503/leaderboard/1347 . Our model shows better performance on seven downstream tasks, including ReClor, LogiQA, MNLI, MRPC, RTE, QNLI, and QQP. The source code and dataset are public at https://github.com/Strong-AI-Lab/Logical-Equivalence-driven-AMR-Data-Augmentation-for-Representation-Learning .

Input-length-shortening and text generation via attention values

Mar 14, 2023Abstract:Identifying words that impact a task's performance more than others is a challenge in natural language processing. Transformers models have recently addressed this issue by incorporating an attention mechanism that assigns greater attention (i.e., relevance) scores to some words than others. Because of the attention mechanism's high computational cost, transformer models usually have an input-length limitation caused by hardware constraints. This limitation applies to many transformers, including the well-known bidirectional encoder representations of the transformer (BERT) model. In this paper, we examined BERT's attention assignment mechanism, focusing on two questions: (1) How can attention be employed to reduce input length? (2) How can attention be used as a control mechanism for conditional text generation? We investigated these questions in the context of a text classification task. We discovered that BERT's early layers assign more critical attention scores for text classification tasks compared to later layers. We demonstrated that the first layer's attention sums could be used to filter tokens in a given sequence, considerably decreasing the input length while maintaining good test accuracy. We also applied filtering, which uses a compute-efficient semantic similarities algorithm, and discovered that retaining approximately 6\% of the original sequence is sufficient to obtain 86.5\% accuracy. Finally, we showed that we could generate data in a stable manner and indistinguishable from the original one by only using a small percentage (10\%) of the tokens with high attention scores according to BERT's first layer.

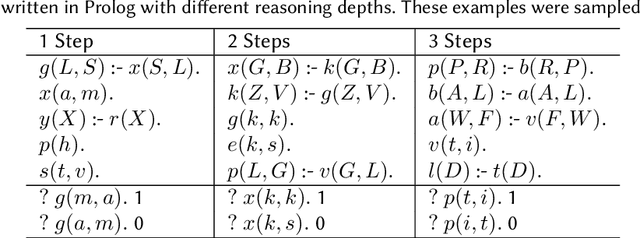

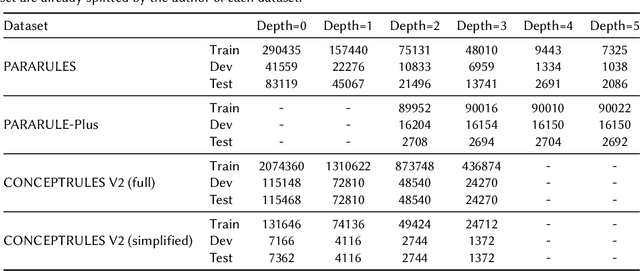

Multi-Step Deductive Reasoning Over Natural Language: An Empirical Study on Out-of-Distribution Generalisation

Jul 28, 2022

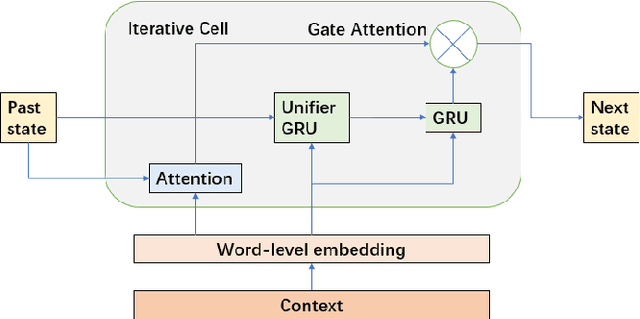

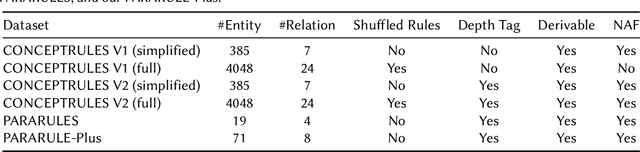

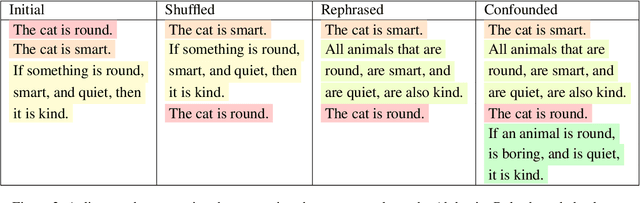

Abstract:Combining deep learning with symbolic logic reasoning aims to capitalize on the success of both fields and is drawing increasing attention. Inspired by DeepLogic, an end-to-end model trained to perform inference on logic programs, we introduce IMA-GloVe-GA, an iterative neural inference network for multi-step reasoning expressed in natural language. In our model, reasoning is performed using an iterative memory neural network based on RNN with a gate attention mechanism. We evaluate IMA-GloVe-GA on three datasets: PARARULES, CONCEPTRULES V1 and CONCEPTRULES V2. Experimental results show DeepLogic with gate attention can achieve higher test accuracy than DeepLogic and other RNN baseline models. Our model achieves better out-of-distribution generalisation than RoBERTa-Large when the rules have been shuffled. Furthermore, to address the issue of unbalanced distribution of reasoning depths in the current multi-step reasoning datasets, we develop PARARULE-Plus, a large dataset with more examples that require deeper reasoning steps. Experimental results show that the addition of PARARULE-Plus can increase the model's performance on examples requiring deeper reasoning depths. The source code and data are available at https://github.com/Strong-AI-Lab/Multi-Step-Deductive-Reasoning-Over-Natural-Language.

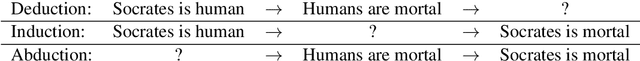

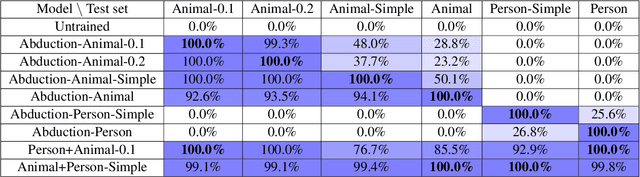

AbductionRules: Training Transformers to Explain Unexpected Inputs

Mar 23, 2022

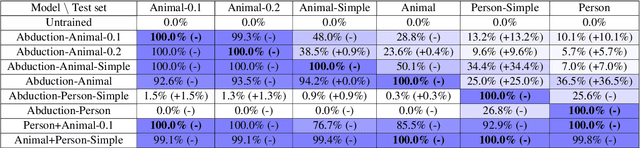

Abstract:Transformers have recently been shown to be capable of reliably performing logical reasoning over facts and rules expressed in natural language, but abductive reasoning - inference to the best explanation of an unexpected observation - has been underexplored despite significant applications to scientific discovery, common-sense reasoning, and model interpretability. We present AbductionRules, a group of natural language datasets designed to train and test generalisable abduction over natural-language knowledge bases. We use these datasets to finetune pretrained Transformers and discuss their performance, finding that our models learned generalisable abductive techniques but also learned to exploit the structure of our data. Finally, we discuss the viability of this approach to abductive reasoning and ways in which it may be improved in future work.

Relating Blindsight and AI: A Review

Dec 09, 2021

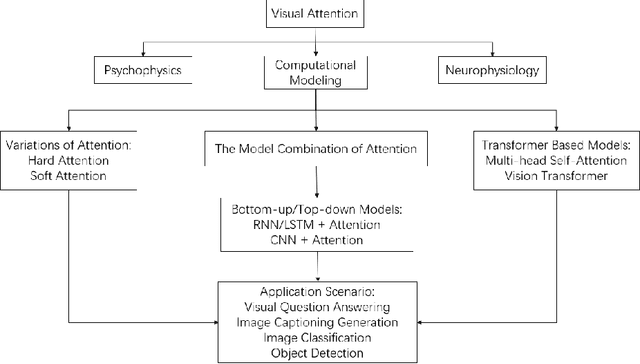

Abstract:Processes occurring in brains, a.k.a. biological neural networks, can and have been modeled within artificial neural network architectures. Due to this, we have conducted a review of research on the phenomenon of blindsight in an attempt to generate ideas for artificial intelligence models. Blindsight can be considered as a diminished form of visual experience. If we assume that artificial networks have no form of visual experience, then deficits caused by blindsight give us insights into the processes occurring within visual experience that we can incorporate into artificial neural networks. This article has been structured into three parts. Section 2 is a review of blindsight research, looking specifically at the errors occurring during this condition compared to normal vision. Section 3 identifies overall patterns from Section 2 to generate insights for computational models of vision. Section 4 demonstrates the utility of examining biological research to inform artificial intelligence research by examining computation models of visual attention relevant to one of the insights generated in Section 3. The research covered in Section 4 shows that incorporating one of our insights into computational vision does benefit those models. Future research will be required to determine whether our other insights are as valuable.

* Preprint of an article published in Journal of Artificial Intelligence and Consciousness, 2021 doi.org/10.1142/S2705078521500156 \c{opyright} copyright World Scientific Publishing Company www.worldscientific.com/worldscinet/jaic

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge