Paolo Testolina

Enabling Site-Specific Cellular Network Simulation Through Ray-Tracing-Driven ns-3

Aug 06, 2025

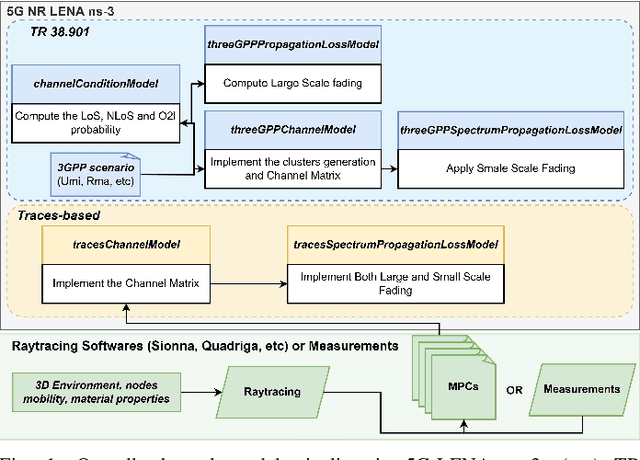

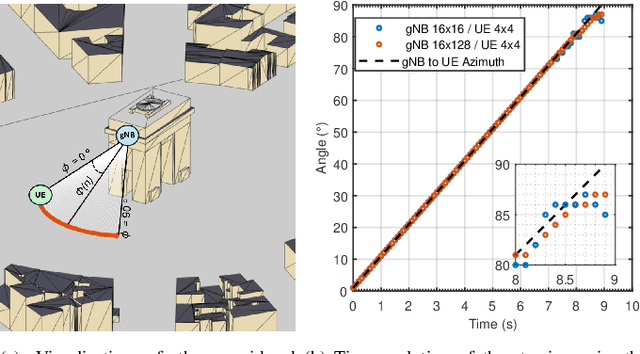

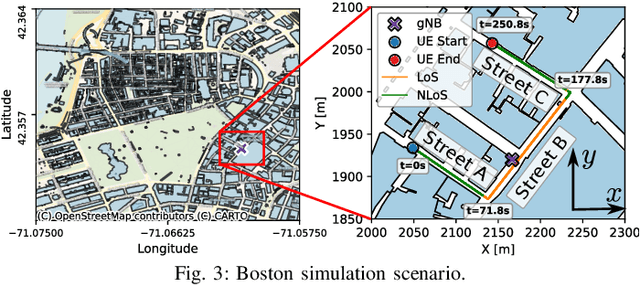

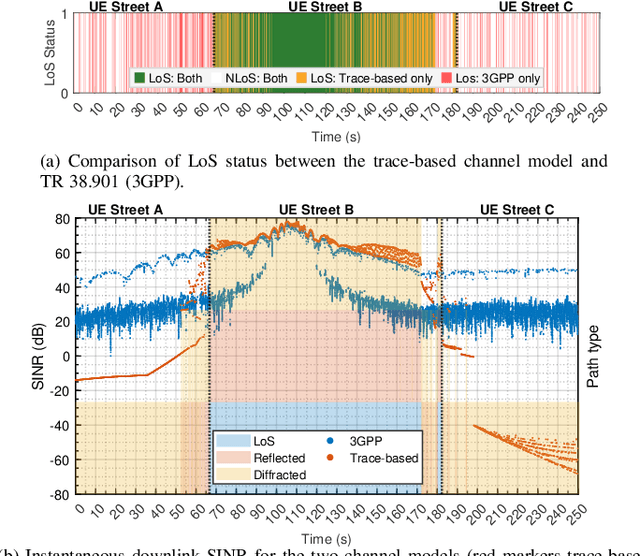

Abstract: Evaluating cellular systems, from 5G New Radio (NR) and 5G-Advanced to 6G, is challenging because performance emerges from the tight coupling of propagation, beam management, scheduling, and higher-layer interactions. System-level simulation is therefore indispensable, yet the vast majority of studies rely on the statistical 3GPP channel models. These are well suited to capturing average behavior across many statistical realizations, but cannot reproduce site-specific phenomena such as corner diffraction, street-canyon blockage, or the deterministic line-of-sight conditions and angle-of-departure/arrival relationships that drive directional links. This paper extends 5G-LENA, an NR module for the system-level Network Simulator 3 (ns-3), with a trace-based channel model that processes the Multipath Components (MPCs) obtained from external ray-tracers (e.g., Sionna Ray Tracer (RT)) or measurement campaigns. Our module constructs frequency-domain channel matrices and feeds them to the existing Physical (PHY)/Medium Access Control (MAC) stack without any further modifications. The result is a geometry-based channel model that remains fully compatible with the standard 3GPP implementation in 5G-LENA, while delivering site-specific geometric fidelity. This new module provides a key building block toward Digital Twin (DT) capabilities by offering realistic site-specific channel modeling, unlocking studies that require site awareness, including beam management, blockage mitigation, and environment-aware sensing. We demonstrate its capabilities for precise beam-steering validation and end-to-end metric analysis. In both cases, the trace-driven engine exposes performance inflections that the statistical model does not exhibit, confirming its value for high-fidelity system-level cellular network research and as a step toward DT applications.
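As a rough illustration of how multipath components can be turned into a frequency-domain channel, the sketch below sums the contribution of each path at each frequency, $H(f) = \sum_k a_k e^{-j 2\pi f \tau_k}$. It is a simplified single-antenna example, not the module described in the abstract: the function name, the `(gain, delay)` trace layout, and the two-path values are all assumptions made for illustration, and the frequencies are treated as baseband offsets.

```python
import cmath
import math


def channel_frequency_response(mpcs, freqs_hz):
    """Return H(f) = sum_k a_k * exp(-j*2*pi*f*tau_k) for each frequency.

    mpcs is a list of (complex_gain, delay_seconds) pairs (assumed layout).
    """
    response = []
    for f in freqs_hz:
        h = 0j
        for gain, delay_s in mpcs:
            # Each path adds its gain, rotated by the phase its delay causes at f.
            h += gain * cmath.exp(-2j * math.pi * f * delay_s)
        response.append(h)
    return response


# Hypothetical two-path trace: a direct path and a weaker path delayed by 50 ns.
mpcs = [(1.0 + 0j, 0.0), (0.5 + 0j, 50e-9)]
freqs = [0.0, 10e6, 20e6]  # baseband frequency offsets in Hz
H = channel_frequency_response(mpcs, freqs)
```

With these values the two paths add constructively at 0 Hz and 20 MHz and destructively at 10 MHz, which is the kind of frequency selectivity a trace-driven model carries over from the geometry. A multi-antenna version would additionally weight each path by the array responses at its angles of departure and arrival.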

Teleoperated Driving: a New Challenge for 3D Object Detection in Compressed Point Clouds

Jun 13, 2025

Abstract: In recent years, the development of interconnected devices has expanded in many fields, from infotainment to education and industrial applications. This trend has been accelerated by the increased number of sensors and the accessibility of powerful hardware and software. One area that significantly benefits from these advancements is Teleoperated Driving (TD). In this scenario, a controller safely drives a vehicle remotely, leveraging sensor data generated onboard the vehicle and exchanged via Vehicle-to-Everything (V2X) communications. In this work, we tackle the problem of detecting the presence of cars and pedestrians from point cloud data to enable safe TD operations. More specifically, we exploit the SELMA dataset, a multimodal, open-source, synthetic dataset for autonomous driving, which we expanded by including the ground-truth bounding boxes of 3D objects to support object detection. We analyze the performance of state-of-the-art compression algorithms and object detectors under several metrics, including compression efficiency, (de)compression and inference time, and detection accuracy. Moreover, we measure the impact of compression and detection on the V2X network in terms of data rate and latency with respect to 3GPP requirements for TD applications.

Agentic Semantic Control for Autonomous Wireless Space Networks: Extending Space-O-RAN with MCP-Driven Distributed Intelligence

Jun 12, 2025

Abstract: Lunar surface operations impose stringent requirements on wireless communication systems, including autonomy, robustness to disruption, and the ability to adapt to environmental and mission-driven context. While Space-O-RAN provides a distributed orchestration model aligned with 3GPP standards, its decision logic is limited to static policies and lacks semantic integration. We propose a novel extension incorporating a semantic agentic layer enabled by the Model Context Protocol (MCP) and Agent-to-Agent (A2A) communication protocols, allowing context-aware decision making across real-time, near-real-time, and non-real-time control layers. Distributed cognitive agents deployed in rovers, landers, and lunar base stations implement wireless-aware coordination strategies, including delay-adaptive reasoning and bandwidth-aware semantic compression, while interacting with multiple MCP servers to reason over telemetry, locomotion planning, and mission constraints.

Space-O-RAN: Enabling Intelligent, Open, and Interoperable Non Terrestrial Networks in 6G

Feb 21, 2025

Abstract: Non-terrestrial networks (NTNs) are essential for ubiquitous connectivity, providing coverage in remote and underserved areas. However, since NTNs are currently operated independently, they face challenges such as isolation, limited scalability, and high operational costs. Integrating satellite constellations with terrestrial networks offers a way to address these limitations while enabling adaptive and cost-efficient connectivity through the application of Artificial Intelligence (AI) models. This paper introduces Space-O-RAN, a framework that extends Open Radio Access Network (RAN) principles to NTNs. It employs hierarchical closed-loop control with distributed Space RAN Intelligent Controllers (Space-RICs) to dynamically manage and optimize operations across both domains. To enable adaptive resource allocation and network orchestration, the proposed architecture integrates real-time satellite optimization and control with AI-driven management and digital twin (DT) modeling. It incorporates distributed Space Applications (sApps) and dApps to ensure robust performance in highly dynamic orbital environments. A core feature is dynamic link-interface mapping, which allows network functions to adapt to specific application requirements and changing link conditions using all physical links on the satellite. Simulation results evaluate its feasibility by analyzing latency constraints across different NTN link types, demonstrating that intra-cluster coordination operates within viable signaling delay bounds, while offloading non-real-time tasks to ground infrastructure enhances scalability toward sixth-generation (6G) networks.

BostonTwin: the Boston Digital Twin for Ray-Tracing in 6G Networks

Mar 18, 2024

Abstract: Digital twins are now a staple of wireless network design and evolution. Creating an accurate digital copy of a real system offers numerous opportunities to study and analyze its performance and issues. It also allows designing and testing new solutions in a risk-free environment, and applying them back to the real system after validation. A candidate technology that will heavily rely on digital twins for design and deployment is 6G, which promises robust and ubiquitous networks for eXtended Reality (XR) and immersive communications solutions. In this paper, we present BostonTwin, a dataset that merges a high-fidelity 3D model of the city of Boston, MA, with the existing geospatial data on cellular base station deployments, in a ray-tracing-ready format. Thus, BostonTwin not only enables instantaneous rendering of and programmatic access to the building models, but also allows for an accurate representation of the electromagnetic propagation environment in the real-world city of Boston. The level of detail and accuracy of this characterization is crucial to designing 6G networks that can support the strict requirements of sensitive and high-bandwidth applications, such as XR and immersive communication.

Modeling Interference for the Coexistence of 6G Networks and Passive Sensing Systems

Aug 07, 2023

Abstract: Future wireless networks and sensing systems will benefit from access to large chunks of spectrum above 100 GHz, to achieve terabit-per-second data rates in 6th Generation (6G) cellular systems and improve the accuracy and reach of Earth exploration, sensing, and radio astronomy applications. These are extremely sensitive to interference from artificial signals, thus the spectrum above 100 GHz features several bands which are protected from active transmissions under current spectrum regulations. To provide more agile access to the spectrum for both services, active and passive users will have to coexist without harming passive sensing operations. In this paper, we provide the first, fundamental analysis of Radio Frequency Interference (RFI) that large-scale terrestrial deployments introduce in different satellite sensing systems now orbiting the Earth. We develop a geometry-based analysis and extend it into a data-driven model which accounts for realistic propagation, building obstruction, and ground reflection, for network topologies with up to $10^5$ nodes in more than $85$ km$^2$. We show that the presence of harmful RFI depends on several factors, including network load, density and topology, satellite orientation, and building density. The results and methodology provide the foundation for the development of coexistence solutions and spectrum policy towards 6G.

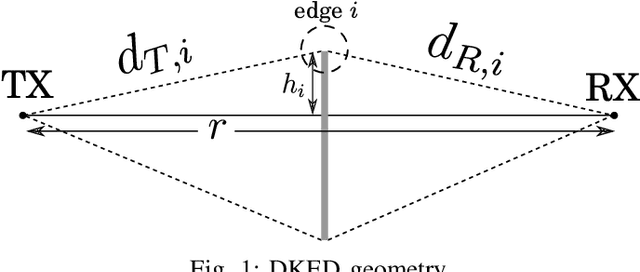

An Open Framework to Model Diffraction by Dynamic Blockers in Millimeter Wave Simulations

Jun 10, 2022

Abstract: The millimeter wave (mmWave) band will be exploited to address the growing demand for high data rates and low latency. The higher frequencies, however, are prone to limitations on the propagation of the signal in the environment. Thus, highly directional beamforming is needed to increase the antenna gain. Another crucial problem of the mmWave frequencies is their vulnerability to blockage by physical obstacles. To this end, we studied the problem of modeling the impact of second-order effects on mmWave channels, specifically the susceptibility of the mmWave signals to physical blockers. With respect to existing works on this topic, our project focuses on scenarios where mmWaves interact with multiple, dynamic blockers. Our open source software includes diffraction-based blockage models and interfaces directly with an open source Radio Frequency (RF) ray-tracing software.

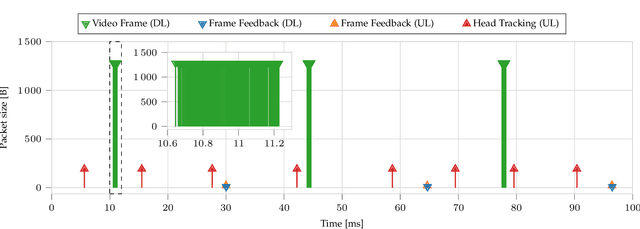

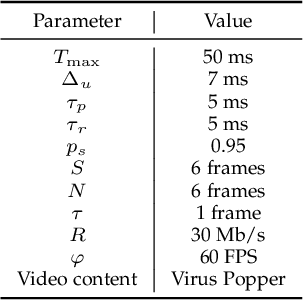

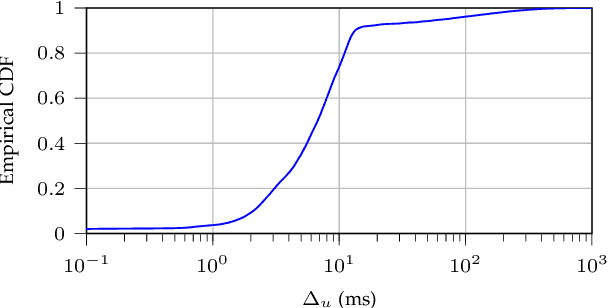

Temporal Characterization of VR Traffic for Network Slicing Requirement Definition

Jun 01, 2022

Abstract: Over the past few years, the concept of VR has attracted increasing interest thanks to its extensive industrial and commercial applications. Currently, the 3D models of the virtual scenes are generally stored in the VR visor itself, which operates as a standalone device. However, applications that entail multi-party interactions will likely require the scene to be processed by an external server and then streamed to the visors. In turn, the stringent Quality of Service (QoS) constraints imposed by VR's interactive nature require Network Slicing (NS) solutions, for which profiling the traffic generated by the VR application is crucial. To this end, we collected more than 4 hours of traces in a real setup and analyzed their temporal correlation. More specifically, we focused on the CBR encoding mode, which should generate more predictable traffic streams. From the collected data, we then distilled two prediction models for future frame size, which can be instrumental in the design of dynamic resource allocation algorithms. Our results show that even the state-of-the-art H.264 CBR mode can have significant fluctuations, which can impact the NS optimization. We then exploited the proposed models to dynamically determine the Service Level Agreement (SLA) parameters in an NS scenario, providing service with the required QoS while minimizing resource usage.

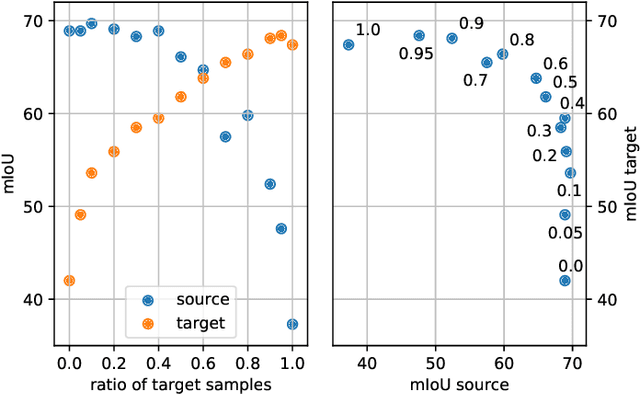

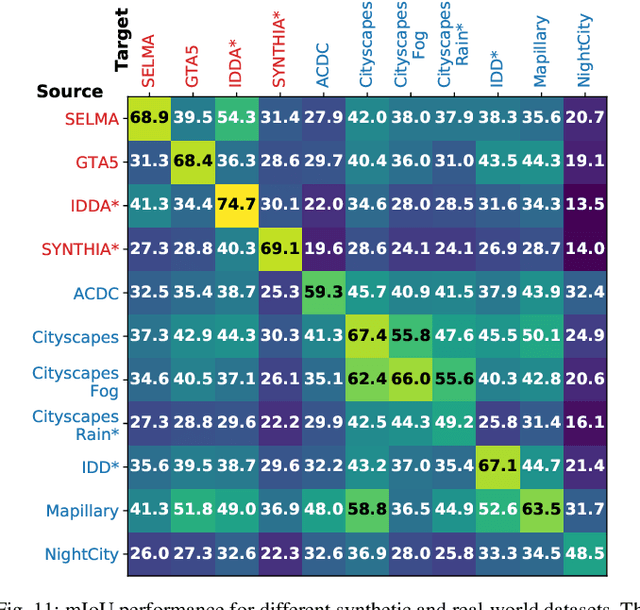

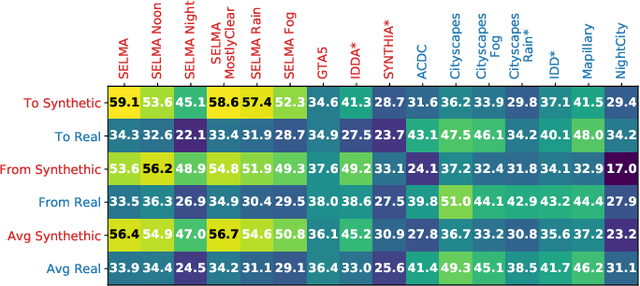

SELMA: SEmantic Large-scale Multimodal Acquisitions in Variable Weather, Daytime and Viewpoints

Apr 20, 2022

Abstract: Accurate scene understanding from multiple sensors mounted on cars is a key requirement for autonomous driving systems. Nowadays, this task is mainly performed through data-hungry deep learning techniques that need very large amounts of data to be trained. Due to the high cost of performing segmentation labeling, many synthetic datasets have been proposed. However, most of them miss the multi-sensor nature of the data, and do not capture the significant changes introduced by the variation of daytime and weather conditions. To fill these gaps, we introduce SELMA, a novel synthetic dataset for semantic segmentation that contains more than 30K unique waypoints acquired from 24 different sensors including RGB, depth, semantic cameras and LiDARs, in 27 different atmospheric and daytime conditions, for a total of more than 20M samples. SELMA is based on CARLA, an open-source simulator for generating synthetic data in autonomous driving scenarios, that we modified to increase the variability and the diversity in the scenes and class sets, and to align it with other benchmark datasets. As shown by the experimental evaluation, SELMA allows the efficient training of standard and multi-modal deep learning architectures, and achieves remarkable results on real-world data. SELMA is free and publicly available, thus supporting open science and research.

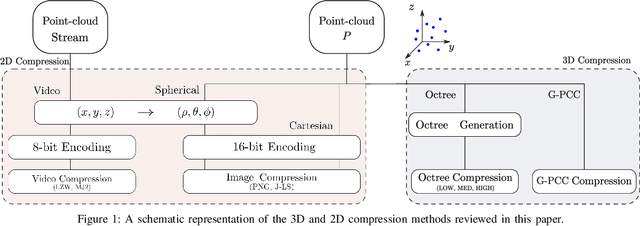

Point Cloud Compression for Efficient Data Broadcasting: A Performance Comparison

Feb 01, 2022

Abstract: The worldwide commercialization of fifth generation (5G) wireless networks and the exciting possibilities offered by connected and autonomous vehicles (CAVs) are pushing toward the deployment of heterogeneous sensors for tracking dynamic objects in the automotive environment. Among them, Light Detection and Ranging (LiDAR) sensors are witnessing a surge in popularity as their application to vehicular networks seems particularly promising. LiDARs can indeed produce a three-dimensional (3D) mapping of the surrounding environment, which can be used for object detection, recognition, and topography. These data are encoded as a point cloud which, when transmitted, may pose significant challenges to the communication systems as it can easily congest the wireless channel. Along these lines, this paper investigates how to compress point clouds in a fast and efficient way. Both 2D- and 3D-oriented approaches are considered, and the performance of the corresponding techniques is analyzed in terms of (de)compression time, efficiency, and quality of the decompressed frame compared to the original. We demonstrate that, thanks to the matrix form in which LiDAR frames are saved, compression methods that are typically applied to 2D images give results equivalent to, if not better than, those specifically designed for 3D point clouds.
