Nathan Hatch

University of Washington

Long Range Navigator (LRN): Extending robot planning horizons beyond metric maps

Apr 17, 2025Abstract:A robot navigating an outdoor environment with no prior knowledge of the space must rely on its local sensing to perceive its surroundings and plan. This can come in the form of a local metric map or local policy with some fixed horizon. Beyond that, there is a fog of unknown space marked with some fixed cost. A limited planning horizon can often result in myopic decisions leading the robot off course or worse, into very difficult terrain. Ideally, we would like the robot to have full knowledge that can be orders of magnitude larger than a local cost map. In practice, this is intractable due to sparse sensing information and often computationally expensive. In this work, we make a key observation that long-range navigation only necessitates identifying good frontier directions for planning instead of full map knowledge. To this end, we propose Long Range Navigator (LRN), that learns an intermediate affordance representation mapping high-dimensional camera images to `affordable' frontiers for planning, and then optimizing for maximum alignment with the desired goal. LRN notably is trained entirely on unlabeled ego-centric videos making it easy to scale and adapt to new platforms. Through extensive off-road experiments on Spot and a Big Vehicle, we find that augmenting existing navigation stacks with LRN reduces human interventions at test-time and leads to faster decision making indicating the relevance of LRN. https://personalrobotics.github.io/lrn

TerrainNet: Visual Modeling of Complex Terrain for High-speed, Off-road Navigation

Mar 28, 2023

Abstract:Effective use of camera-based vision systems is essential for robust performance in autonomous off-road driving, particularly in the high-speed regime. Despite success in structured, on-road settings, current end-to-end approaches for scene prediction have yet to be successfully adapted for complex outdoor terrain. To this end, we present TerrainNet, a vision-based terrain perception system for semantic and geometric terrain prediction for aggressive, off-road navigation. The approach relies on several key insights and practical considerations for achieving reliable terrain modeling. The network includes a multi-headed output representation to capture fine- and coarse-grained terrain features necessary for estimating traversability. Accurate depth estimation is achieved using self-supervised depth completion with multi-view RGB and stereo inputs. Requirements for real-time performance and fast inference speeds are met using efficient, learned image feature projections. Furthermore, the model is trained on a large-scale, real-world off-road dataset collected across a variety of diverse outdoor environments. We show how TerrainNet can also be used for costmap prediction and provide a detailed framework for integration into a planning module. We demonstrate the performance of TerrainNet through extensive comparison to current state-of-the-art baselines for camera-only scene prediction. Finally, we showcase the effectiveness of integrating TerrainNet within a complete autonomous-driving stack by conducting a real-world vehicle test in a challenging off-road scenario.

The Value of Planning for Infinite-Horizon Model Predictive Control

Apr 07, 2021

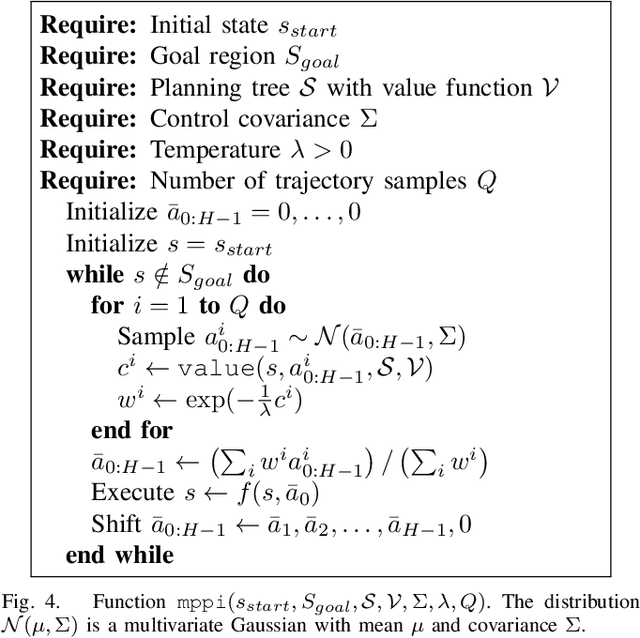

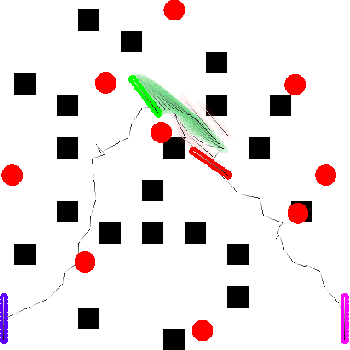

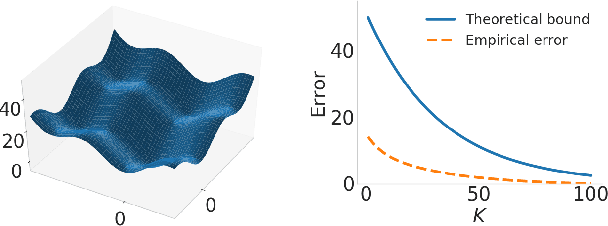

Abstract:Model Predictive Control (MPC) is a classic tool for optimal control of complex, real-world systems. Although it has been successfully applied to a wide range of challenging tasks in robotics, it is fundamentally limited by the prediction horizon, which, if too short, will result in myopic decisions. Recently, several papers have suggested using a learned value function as the terminal cost for MPC. If the value function is accurate, it effectively allows MPC to reason over an infinite horizon. Unfortunately, Reinforcement Learning (RL) solutions to value function approximation can be difficult to realize for robotics tasks. In this paper, we suggest a more efficient method for value function approximation that applies to goal-directed problems, like reaching and navigation. In these problems, MPC is often formulated to track a path or trajectory returned by a planner. However, this strategy is brittle in that unexpected perturbations to the robot will require replanning, which can be costly at runtime. Instead, we show how the intermediate data structures used by modern planners can be interpreted as an approximate value function. We show that that this value function can be used by MPC directly, resulting in more efficient and resilient behavior at runtime.

Truncated Back-propagation for Bilevel Optimization

Oct 25, 2018

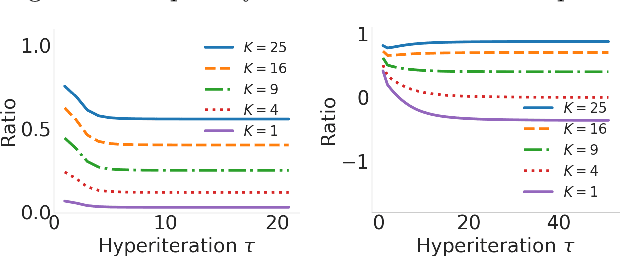

Abstract:Bilevel optimization has been recently revisited for designing and analyzing algorithms in hyperparameter tuning and meta learning tasks. However, due to its nested structure, evaluating exact gradients for high-dimensional problems is computationally challenging. One heuristic to circumvent this difficulty is to use the approximate gradient given by performing truncated back-propagation through the iterative optimization procedure that solves the lower-level problem. Although promising empirical performance has been reported, its theoretical properties are still unclear. In this paper, we analyze the properties of this family of approximate gradients and establish sufficient conditions for convergence. We validate this on several hyperparameter tuning and meta learning tasks. We find that optimization with the approximate gradient computed using few-step back-propagation often performs comparably to optimization with the exact gradient, while requiring far less memory and half the computation time.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge