Natalie Collina

The Sample Complexity of Multicalibration

Apr 23, 2026Abstract:We study the minimax sample complexity of multicalibration in the batch setting. A learner observes $n$ i.i.d. samples from an unknown distribution and must output a (possibly randomized) predictor whose population multicalibration error, measured by Expected Calibration Error (ECE), is at most $\varepsilon$ with respect to a given family of groups. For every fixed $κ> 0$, in the regime $|G|\le \varepsilon^{-κ}$, we prove that $\widetildeΘ(\varepsilon^{-3})$ samples are necessary and sufficient, up to polylogarithmic factors. The lower bound holds even for randomized predictors, and the upper bound is realized by a randomized predictor obtained via an online-to-batch reduction. This separates the sample complexity of multicalibration from that of marginal calibration, which scales as $\widetildeΘ(\varepsilon^{-2})$, and shows that mean-ECE multicalibration is as difficult in the batch setting as it is in the online setting, in contrast to marginal calibration which is strictly more difficult in the online setting. In contrast we observe that for $κ= 0$, the sample complexity of multicalibration remains $\widetildeΘ(\varepsilon^{-2})$ exhibiting a sharp threshold phenomenon. More generally, we establish matching upper and lower bounds, up to polylogarithmic factors, for a weighted $L_p$ multicalibration metric for all $1 \le p \le 2$, with optimal exponent $3/p$. We also extend the lower-bound template to a regular class of elicitable properties, and combine it with the online upper bounds of Hu et al. (2025) to obtain matching bounds for calibrating properties including expectiles and bounded-density quantiles.

Recommending Best Paper Awards for ML/AI Conferences via the Isotonic Mechanism

Jan 21, 2026Abstract:Machine learning and artificial intelligence conferences such as NeurIPS and ICML now regularly receive tens of thousands of submissions, posing significant challenges to maintaining the quality and consistency of the peer review process. This challenge is particularly acute for best paper awards, which are an important part of the peer review process, yet whose selection has increasingly become a subject of debate in recent years. In this paper, we introduce an author-assisted mechanism to facilitate the selection of best paper awards. Our method employs the Isotonic Mechanism for eliciting authors' assessments of their own submissions in the form of a ranking, which is subsequently utilized to adjust the raw review scores for optimal estimation of the submissions' ground-truth quality. We demonstrate that authors are incentivized to report truthfully when their utility is a convex additive function of the adjusted scores, and we validate this convexity assumption for best paper awards using publicly accessible review data of ICLR from 2019 to 2023 and NeurIPS from 2021 to 2023. Crucially, in the special case where an author has a single quota -- that is, may nominate only one paper -- we prove that truthfulness holds even when the utility function is merely nondecreasing and additive. This finding represents a substantial relaxation of the assumptions required in prior work. For practical implementation, we extend our mechanism to accommodate the common scenario of overlapping authorship. Finally, simulation results demonstrate that our mechanism significantly improves the quality of papers selected for awards.

How to Find Fantastic Papers: Self-Rankings as a Powerful Predictor of Scientific Impact Beyond Peer Review

Oct 02, 2025Abstract:Peer review in academic research aims not only to ensure factual correctness but also to identify work of high scientific potential that can shape future research directions. This task is especially critical in fast-moving fields such as artificial intelligence (AI), yet it has become increasingly difficult given the rapid growth of submissions. In this paper, we investigate an underexplored measure for identifying high-impact research: authors' own rankings of their multiple submissions to the same AI conference. Grounded in game-theoretic reasoning, we hypothesize that self-rankings are informative because authors possess unique understanding of their work's conceptual depth and long-term promise. To test this hypothesis, we conducted a large-scale experiment at a leading AI conference, where 1,342 researchers self-ranked their 2,592 submissions by perceived quality. Tracking outcomes over more than a year, we found that papers ranked highest by their authors received twice as many citations as their lowest-ranked counterparts; self-rankings were especially effective at identifying highly cited papers (those with over 150 citations). Moreover, we showed that self-rankings outperformed peer review scores in predicting future citation counts. Our results remained robust after accounting for confounders such as preprint posting time and self-citations. Together, these findings demonstrate that authors' self-rankings provide a reliable and valuable complement to peer review for identifying and elevating high-impact research in AI.

Emergent Alignment via Competition

Sep 18, 2025Abstract:Aligning AI systems with human values remains a fundamental challenge, but does our inability to create perfectly aligned models preclude obtaining the benefits of alignment? We study a strategic setting where a human user interacts with multiple differently misaligned AI agents, none of which are individually well-aligned. Our key insight is that when the users utility lies approximately within the convex hull of the agents utilities, a condition that becomes easier to satisfy as model diversity increases, strategic competition can yield outcomes comparable to interacting with a perfectly aligned model. We model this as a multi-leader Stackelberg game, extending Bayesian persuasion to multi-round conversations between differently informed parties, and prove three results: (1) when perfect alignment would allow the user to learn her Bayes-optimal action, she can also do so in all equilibria under the convex hull condition (2) under weaker assumptions requiring only approximate utility learning, a non-strategic user employing quantal response achieves near-optimal utility in all equilibria and (3) when the user selects the best single AI after an evaluation period, equilibrium guarantees remain near-optimal without further distributional assumptions. We complement the theory with two sets of experiments.

Collaborative Prediction: Tractable Information Aggregation via Agreement

Apr 08, 2025Abstract:We give efficient "collaboration protocols" through which two parties, who observe different features about the same instances, can interact to arrive at predictions that are more accurate than either could have obtained on their own. The parties only need to iteratively share and update their own label predictions-without either party ever having to share the actual features that they observe. Our protocols are efficient reductions to the problem of learning on each party's feature space alone, and so can be used even in settings in which each party's feature space is illegible to the other-which arises in models of human/AI interaction and in multi-modal learning. The communication requirements of our protocols are independent of the dimensionality of the data. In an online adversarial setting we show how to give regret bounds on the predictions that the parties arrive at with respect to a class of benchmark policies defined on the joint feature space of the two parties, despite the fact that neither party has access to this joint feature space. We also give simpler algorithms for the same task in the batch setting in which we assume that there is a fixed but unknown data distribution. We generalize our protocols to a decision theoretic setting with high dimensional outcome spaces, where parties communicate only "best response actions." Our theorems give a computationally and statistically tractable generalization of past work on information aggregation amongst Bayesians who share a common and correct prior, as part of a literature studying "agreement" in the style of Aumann's agreement theorem. Our results require no knowledge of (or even the existence of) a prior distribution and are computationally efficient. Nevertheless we show how to lift our theorems back to this classical Bayesian setting, and in doing so, give new information aggregation theorems for Bayesian agreement.

Swap Regret and Correlated Equilibria Beyond Normal-Form Games

Feb 27, 2025Abstract:Swap regret is a notion that has proven itself to be central to the study of general-sum normal-form games, with swap-regret minimization leading to convergence to the set of correlated equilibria and guaranteeing non-manipulability against a self-interested opponent. However, the situation for more general classes of games -- such as Bayesian games and extensive-form games -- is less clear-cut, with multiple candidate definitions for swap-regret but no known efficiently minimizable variant of swap regret that implies analogous non-manipulability guarantees. In this paper, we present a new variant of swap regret for polytope games that we call ``profile swap regret'', with the property that obtaining sublinear profile swap regret is both necessary and sufficient for any learning algorithm to be non-manipulable by an opponent (resolving an open problem of Mansour et al., 2022). Although we show profile swap regret is NP-hard to compute given a transcript of play, we show it is nonetheless possible to design efficient learning algorithms that guarantee at most $O(\sqrt{T})$ profile swap regret. Finally, we explore the correlated equilibrium notion induced by low-profile-swap-regret play, and demonstrate a gap between the set of outcomes that can be implemented by this learning process and the set of outcomes that can be implemented by a third-party mediator (in contrast to the situation in normal-form games).

Learning to Play Against Unknown Opponents

Dec 24, 2024

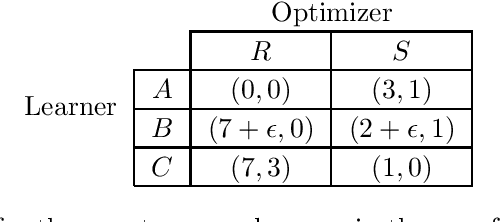

Abstract:We consider the problem of a learning agent who has to repeatedly play a general sum game against a strategic opponent who acts to maximize their own payoff by optimally responding against the learner's algorithm. The learning agent knows their own payoff function, but is uncertain about the payoff of their opponent (knowing only that it is drawn from some distribution $\mathcal{D}$). What learning algorithm should the agent run in order to maximize their own total utility? We demonstrate how to construct an $\varepsilon$-optimal learning algorithm (obtaining average utility within $\varepsilon$ of the optimal utility) for this problem in time polynomial in the size of the input and $1/\varepsilon$ when either the size of the game or the support of $\mathcal{D}$ is constant. When the learning algorithm is further constrained to be a no-regret algorithm, we demonstrate how to efficiently construct an optimal learning algorithm (asymptotically achieving the optimal utility) in polynomial time, independent of any other assumptions. Both results make use of recently developed machinery that converts the analysis of learning algorithms to the study of the class of corresponding geometric objects known as menus.

Tractable Agreement Protocols

Nov 29, 2024Abstract:We present an efficient reduction that converts any machine learning algorithm into an interactive protocol, enabling collaboration with another party (e.g., a human) to achieve consensus on predictions and improve accuracy. This approach imposes calibration conditions on each party, which are computationally and statistically tractable relaxations of Bayesian rationality. These conditions are sensible even in prior-free settings, representing a significant generalization of Aumann's classic "agreement theorem." In our protocol, the model first provides a prediction. The human then responds by either agreeing or offering feedback. The model updates its state and revises its prediction, while the human may adjust their beliefs. This iterative process continues until the two parties reach agreement. Initially, we study a setting that extends Aumann's Agreement Theorem, where parties aim to agree on a one-dimensional expectation by iteratively sharing their current estimates. Here, we recover the convergence theorem of Aaronson'05 under weaker assumptions. We then address the case where parties hold beliefs over distributions with d outcomes, exploring two feedback mechanisms. The first involves vector-valued estimates of predictions, while the second adopts a decision-theoretic approach: the human, needing to take an action from a finite set based on utility, communicates their utility-maximizing action at each round. In this setup, the number of rounds until agreement remains independent of d. Finally, we generalize to scenarios with more than two parties, where computational complexity scales linearly with the number of participants. Our protocols rely on simple, efficient conditions and produce predictions that surpass the accuracy of any individual party's alone.

Algorithmic Collusion Without Threats

Sep 06, 2024Abstract:There has been substantial recent concern that pricing algorithms might learn to ``collude.'' Supra-competitive prices can emerge as a Nash equilibrium of repeated pricing games, in which sellers play strategies which threaten to punish their competitors who refuse to support high prices, and these strategies can be automatically learned. In fact, a standard economic intuition is that supra-competitive prices emerge from either the use of threats, or a failure of one party to optimize their payoff. Is this intuition correct? Would preventing threats in algorithmic decision-making prevent supra-competitive prices when sellers are optimizing for their own revenue? No. We show that supra-competitive prices can emerge even when both players are using algorithms which do not encode threats, and which optimize for their own revenue. We study sequential pricing games in which a first mover deploys an algorithm and then a second mover optimizes within the resulting environment. We show that if the first mover deploys any algorithm with a no-regret guarantee, and then the second mover even approximately optimizes within this now static environment, monopoly-like prices arise. The result holds for any no-regret learning algorithm deployed by the first mover and for any pricing policy of the second mover that obtains them profit at least as high as a random pricing would -- and hence the result applies even when the second mover is optimizing only within a space of non-responsive pricing distributions which are incapable of encoding threats. In fact, there exists a set of strategies, neither of which explicitly encode threats that form a Nash equilibrium of the simultaneous pricing game in algorithm space, and lead to near monopoly prices. This suggests that the definition of ``algorithmic collusion'' may need to be expanded, to include strategies without explicitly encoded threats.

Analysis of the ICML 2023 Ranking Data: Can Authors' Opinions of Their Own Papers Assist Peer Review in Machine Learning?

Aug 24, 2024Abstract:We conducted an experiment during the review process of the 2023 International Conference on Machine Learning (ICML) that requested authors with multiple submissions to rank their own papers based on perceived quality. We received 1,342 rankings, each from a distinct author, pertaining to 2,592 submissions. In this paper, we present an empirical analysis of how author-provided rankings could be leveraged to improve peer review processes at machine learning conferences. We focus on the Isotonic Mechanism, which calibrates raw review scores using author-provided rankings. Our analysis demonstrates that the ranking-calibrated scores outperform raw scores in estimating the ground truth ``expected review scores'' in both squared and absolute error metrics. Moreover, we propose several cautious, low-risk approaches to using the Isotonic Mechanism and author-provided rankings in peer review processes, including assisting senior area chairs' oversight of area chairs' recommendations, supporting the selection of paper awards, and guiding the recruitment of emergency reviewers. We conclude the paper by addressing the study's limitations and proposing future research directions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge