Sampath Kannan

Algorithmic Collusion Without Threats

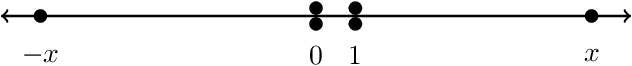

Sep 06, 2024

Abstract: There has been substantial recent concern that pricing algorithms might learn to "collude." Supra-competitive prices can emerge as a Nash equilibrium of repeated pricing games, in which sellers play strategies that threaten to punish competitors who refuse to support high prices, and these strategies can be automatically learned. In fact, a standard economic intuition is that supra-competitive prices emerge from either the use of threats or a failure of one party to optimize their payoff. Is this intuition correct? Would preventing threats in algorithmic decision-making prevent supra-competitive prices when sellers are optimizing for their own revenue? No. We show that supra-competitive prices can emerge even when both players are using algorithms which do not encode threats, and which optimize for their own revenue. We study sequential pricing games in which a first mover deploys an algorithm and then a second mover optimizes within the resulting environment. We show that if the first mover deploys any algorithm with a no-regret guarantee, and then the second mover even approximately optimizes within this now static environment, monopoly-like prices arise. The result holds for any no-regret learning algorithm deployed by the first mover and for any pricing policy of the second mover that earns the second mover a profit at least as high as random pricing would, and hence the result applies even when the second mover is optimizing only within a space of non-responsive pricing distributions which are incapable of encoding threats. In fact, there exists a set of strategies, neither of which explicitly encodes threats, that form a Nash equilibrium of the simultaneous pricing game in algorithm space and lead to near-monopoly prices. This suggests that the definition of "algorithmic collusion" may need to be expanded to include strategies without explicitly encoded threats.

Oracle Efficient Algorithms for Groupwise Regret

Oct 07, 2023

Abstract: We study the problem of online prediction, in which at each time step $t$, an individual $x_t$ arrives, whose label we must predict. Each individual is associated with various groups, defined based on their features such as age, sex, race, etc., which may intersect. Our goal is to make predictions that have regret guarantees not just overall but also simultaneously on each sub-sequence comprised of the members of any single group. Previous work such as [Blum & Lykouris] and [Lee et al.] provides attractive regret guarantees for these problems; however, these are computationally intractable on large model classes. We show that a simple modification of the sleeping experts technique of [Blum & Lykouris] yields an efficient reduction to the well-understood problem of obtaining diminishing external regret absent group considerations. Our approach gives regret guarantees similar to those of [Blum & Lykouris]; however, we run in time linear in the number of groups, and are oracle-efficient in the hypothesis class. This in particular implies that our algorithm is efficient whenever the number of groups is polynomially bounded and the external-regret problem can be solved efficiently, an improvement on [Blum & Lykouris]'s stronger condition that the model class must be small. Our approach can handle online linear regression and online combinatorial optimization problems like online shortest paths. Beyond providing theoretical regret bounds, we evaluate this algorithm with an extensive set of experiments on synthetic data and on two real data sets, Medical costs and the Adult income dataset, both instantiated with intersecting groups defined in terms of race, sex, and other demographic characteristics. We find that uniformly across groups, our algorithm gives substantial error improvements compared to running a standard online linear regression algorithm with no groupwise regret guarantees.

Fair Prediction with Endogenous Behavior

Feb 18, 2020

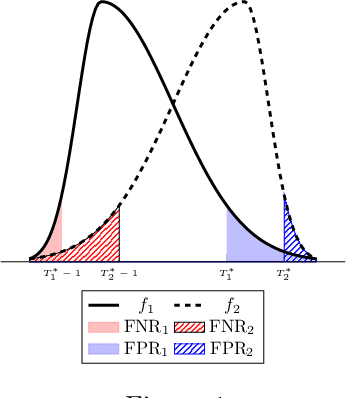

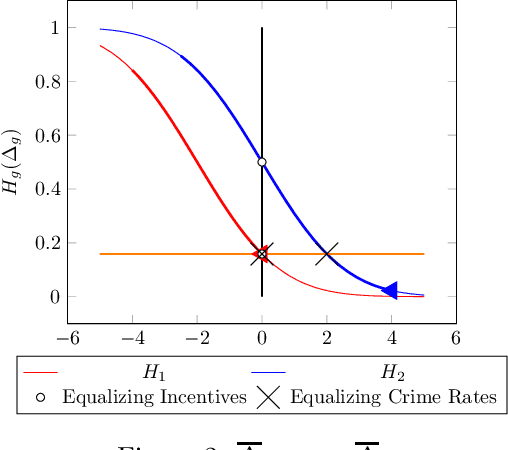

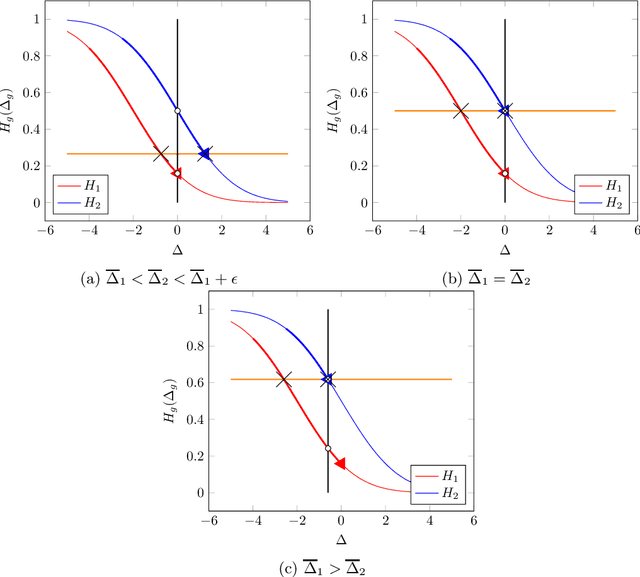

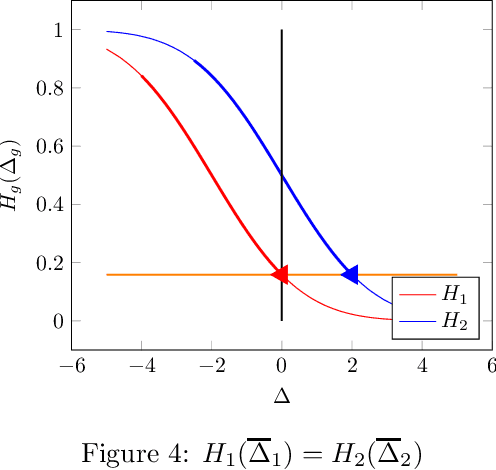

Abstract: There is increasing regulatory interest in whether machine learning algorithms deployed in consequential domains (e.g., in criminal justice) treat different demographic groups "fairly." However, there are several proposed notions of fairness, typically mutually incompatible. Using criminal justice as an example, we study a model in which society chooses an incarceration rule. Agents of different demographic groups differ in their outside options (e.g., opportunity for legal employment) and decide whether to commit crimes. We show that equalizing type I and type II errors across groups is consistent with the goal of minimizing the overall crime rate; other popular notions of fairness are not.

Pipeline Interventions

Feb 16, 2020

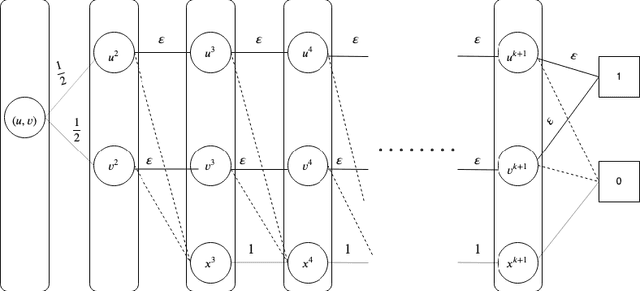

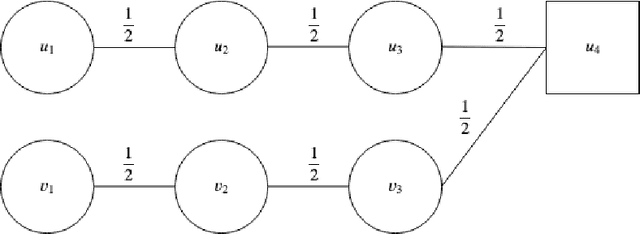

Abstract: We introduce the *pipeline intervention* problem, defined by a layered directed acyclic graph and a set of stochastic matrices governing transitions between successive layers. The graph is a stylized model for how people from different populations are presented opportunities, eventually leading to some reward. In our model, individuals are born into an initial position (i.e., some node in the first layer of the graph) according to a fixed probability distribution, and then stochastically progress through the graph according to the transition matrices, until they reach a node in the final layer of the graph; each node in the final layer has a *reward* associated with it. The pipeline intervention problem asks how to best make costly changes to the transition matrices governing people's stochastic transitions through the graph, subject to a budget constraint. We consider two objectives: social welfare maximization, and a fairness-motivated maximin objective that seeks to maximize the value to the population (starting node) with the *least* expected value. We consider two variants of the maximin objective that turn out to be distinct, depending on whether we demand a deterministic solution or allow randomization. For each objective, we give an efficient approximation algorithm (an additive FPTAS) for constant-width networks. We also tightly characterize the "price of fairness" in our setting: the ratio between the highest achievable social welfare and the highest social welfare consistent with a maximin optimal solution. Finally, we show that for polynomial-width networks, even approximating the maximin objective to any constant factor is NP-hard, even for networks with constant depth. This shows that the restriction on the width in our positive results is essential.
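As a rough illustration of the model in this abstract, the sketch below evaluates the two objectives for a fixed set of transition matrices. It does not solve the intervention problem itself, and it is not the paper's algorithm. The function names and the list-of-lists matrix representation are assumptions made for this example.

```python
def mat_vec(matrix, vec):
    """Multiply a row-stochastic matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vec)) for row in matrix]


def expected_values(transitions, rewards):
    """Expected final reward for each node in the first layer.

    transitions[i][u][v] is the probability of moving from node u in
    layer i to node v in layer i + 1; rewards[v] is the reward at node v
    of the final layer. Values are propagated backwards, layer by layer.
    """
    values = list(rewards)
    for matrix in reversed(transitions):
        values = mat_vec(matrix, values)
    return values


def social_welfare(initial_dist, transitions, rewards):
    """Expected reward of an individual drawn from the initial distribution."""
    values = expected_values(transitions, rewards)
    return sum(p * v for p, v in zip(initial_dist, values))


def maximin_value(transitions, rewards):
    """Expected reward of the worst-off starting node."""
    return min(expected_values(transitions, rewards))


# Hypothetical two-transition pipeline with two nodes per layer.
T1 = [[1.0, 0.0], [0.5, 0.5]]
T2 = [[0.5, 0.5], [0.0, 1.0]]
rewards = [1.0, 0.0]
values = expected_values([T1, T2], rewards)
welfare = social_welfare([0.5, 0.5], [T1, T2], rewards)
worst = maximin_value([T1, T2], rewards)
```

An intervention in this picture would modify entries of `T1` or `T2` at some cost, and one would compare `welfare` or `worst` before and after the change.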

A Center in Your Neighborhood: Fairness in Facility Location

Aug 30, 2019

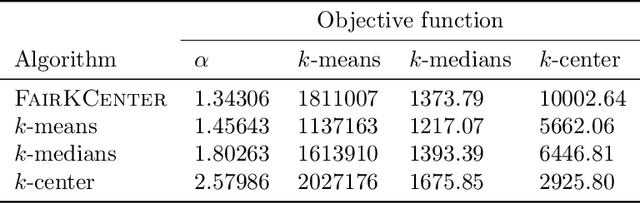

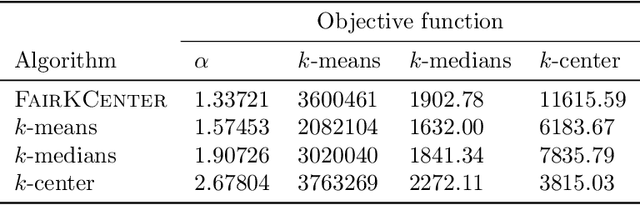

Abstract: When selecting locations for a set of facilities, standard clustering algorithms may place unfair burden on some individuals and neighborhoods. We formulate a fairness concept that takes local population densities into account. In particular, given $k$ facilities to locate and a population of size $n$, we define the "neighborhood radius" of an individual $i$ as the minimum radius of a ball centered at $i$ that contains at least $n/k$ individuals. Our objective is to ensure that each individual has a facility within at most a small constant factor of her neighborhood radius. We present several theoretical results: we show that optimizing this factor is NP-hard; we give an approximation algorithm that guarantees a factor of at most 2 in all metric spaces; and we prove matching lower bounds in some metric spaces. We apply a variant of this algorithm to real-world address data, showing that it behaves quite differently from standard clustering algorithms, outperforms them on our objective function, and balances the load between facilities more evenly.
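The neighborhood radius defined in this abstract can be computed directly from pairwise distances. The sketch below is an illustration only, under three assumptions not stated in the abstract: points live in Euclidean space, an individual counts toward her own ball, and $n/k$ is rounded up. The function names are hypothetical, and the code only evaluates a given set of centers rather than choosing them.

```python
import math


def neighborhood_radii(points, k):
    """Neighborhood radius of every point.

    For each point, this is the smallest radius of a closed ball around it
    that contains at least ceil(n / k) points, the point itself included.
    """
    n = len(points)
    need = math.ceil(n / k)
    radii = []
    for p in points:
        dists = sorted(math.dist(p, q) for q in points)
        radii.append(dists[need - 1])  # distance to the need-th closest point
    return radii


def fairness_factor(points, centers, radii):
    """Worst ratio of distance-to-nearest-center to neighborhood radius."""
    worst = 0.0
    for p, r in zip(points, radii):
        d = min(math.dist(p, c) for c in centers)
        if r > 0:
            worst = max(worst, d / r)
        elif d > 0:
            return math.inf  # zero radius but no facility at the point
    return worst


# Hypothetical data: three nearby individuals and one isolated individual.
points = [(0, 0), (1, 0), (2, 0), (10, 0)]
radii = neighborhood_radii(points, k=2)
factor = fairness_factor(points, [(1, 0), (10, 0)], radii)
```

Here the isolated individual gets a large neighborhood radius, so the objective tolerates a facility that is farther from her than from individuals in the dense cluster.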

Quantifying the Burden of Exploration and the Unfairness of Free Riding

Oct 20, 2018

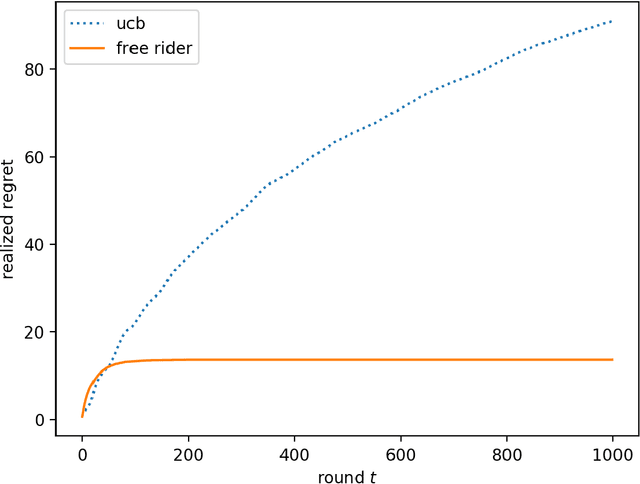

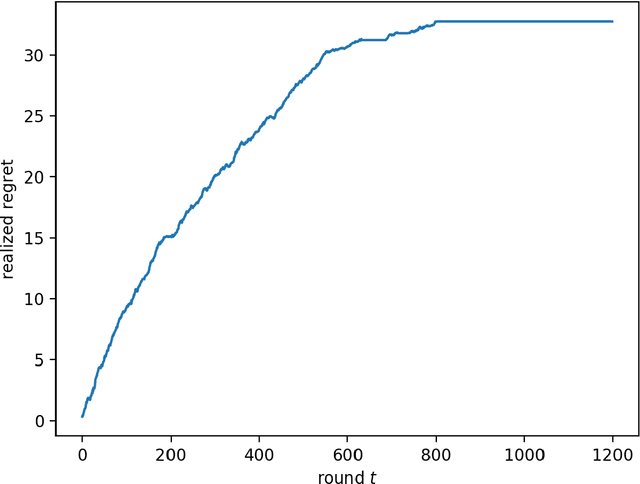

Abstract: We consider the multi-armed bandit setting with a twist. Rather than having just one decision maker deciding which arm to pull in each round, we have $n$ different decision makers (agents). In the simple stochastic setting, we show that one of the agents (called the free rider), who has access to the history of other agents playing some zero-regret algorithm, can achieve just $O(1)$ regret, as opposed to the regret lower bound of $\Omega(\log T)$ when one decision maker is playing in isolation. In the linear contextual setting, we show that if the other agents play a particular, popular zero-regret algorithm (UCB), then the free rider can again achieve $O(1)$ regret. In order to prove this result, we give a deterministic lower bound on the number of times each suboptimal arm must be pulled in UCB. In contrast, we show that the free rider cannot beat the standard single-player regret bounds in certain partial information settings.

Downstream Effects of Affirmative Action

Aug 27, 2018

Abstract: We study a two-stage model, in which students are 1) admitted to college on the basis of an entrance exam which is a noisy signal about their qualifications (type), and then 2) those students who were admitted to college can be hired by an employer as a function of their college grades, which are an independently drawn noisy signal of their type. Students are drawn from one of two populations, which might have different type distributions. We assume that the employer at the end of the pipeline is rational, in the sense that it computes a posterior distribution on student type conditional on all information that it has available (college admissions, grades, and group membership), and makes a decision based on posterior expectation. We then study what kinds of fairness goals can be achieved by the college by setting its admissions rule and grading policy. For example, the college might have the goal of guaranteeing equal opportunity across populations: that the probability of passing through the pipeline and being hired by the employer should be independent of group membership, conditioned on type. Alternatively, the college might have the goal of incentivizing the employer to have a group-blind hiring rule. We show that both goals can be achieved when the college does not report grades. On the other hand, we show that under reasonable conditions, these goals are impossible to achieve even in isolation when the college uses an (even minimally) informative grading policy.

A Smoothed Analysis of the Greedy Algorithm for the Linear Contextual Bandit Problem

Jan 10, 2018

Abstract: Bandit learning is characterized by the tension between long-term exploration and short-term exploitation. However, as has recently been noted, in settings in which the choices of the learning algorithm correspond to important decisions about individual people (such as criminal recidivism prediction, lending, and sequential drug trials), exploration corresponds to explicitly sacrificing the well-being of one individual for the potential future benefit of others. This raises a fairness concern. In such settings, one might like to run a "greedy" algorithm, which always makes the (myopically) optimal decision for the individuals at hand, but doing this can result in a catastrophic failure to learn. In this paper, we consider the linear contextual bandit problem and revisit the performance of the greedy algorithm. We give a smoothed analysis, showing that even when contexts may be chosen by an adversary, small perturbations of the adversary's choices suffice for the algorithm to achieve "no regret", perhaps (depending on the specifics of the setting) with a constant amount of initial training data. This suggests that "generically" (i.e., in slightly perturbed environments), exploration and exploitation need not be in conflict in the linear setting.
