Kaicheng Zhang

Stuart-Landau Oscillatory Graph Neural Network

Nov 11, 2025

Abstract:Oscillatory Graph Neural Networks (OGNNs) are an emerging class of physics-inspired architectures designed to mitigate oversmoothing and vanishing gradient problems in deep GNNs. In this work, we introduce the Complex-Valued Stuart-Landau Graph Neural Network (SLGNN), a novel architecture grounded in Stuart-Landau oscillator dynamics. Stuart-Landau oscillators are canonical models of limit-cycle behavior near Hopf bifurcations, which are fundamental to synchronization theory and are widely used in e.g. neuroscience for mesoscopic brain modeling. Unlike harmonic oscillators and phase-only Kuramoto models, Stuart-Landau oscillators retain both amplitude and phase dynamics, enabling rich phenomena such as amplitude regulation and multistable synchronization. The proposed SLGNN generalizes existing phase-centric Kuramoto-based OGNNs by allowing node feature amplitudes to evolve dynamically according to Stuart-Landau dynamics, with explicit tunable hyperparameters (such as the Hopf-parameter and the coupling strength) providing additional control over the interplay between feature amplitudes and network structure. We conduct extensive experiments across node classification, graph classification, and graph regression tasks, demonstrating that SLGNN outperforms existing OGNNs and establishes a novel, expressive, and theoretically grounded framework for deep oscillatory architectures on graphs.
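To make the amplitude-and-phase behaviour described in this abstract concrete, the following is a minimal sketch of the textbook Stuart-Landau oscillator on a graph, dz_i/dt = (mu + i*omega) z_i - |z_i|^2 z_i + K * sum_j A_ij (z_j - z_i), integrated with explicit Euler steps. It is not the SLGNN layer from the paper: the diffusive coupling term, the function names, and the default values of `mu` (Hopf parameter), `omega`, `coupling`, and `dt` are assumptions chosen for illustration only.

```python
def stuart_landau_step(z, adjacency, mu, omega, coupling, dt):
    """One explicit Euler step for diffusively coupled Stuart-Landau oscillators.

    z is a list of complex node states; adjacency is a dense 0/1 matrix.
    The coupling form is an illustrative assumption, not the paper's layer.
    """
    n = len(z)
    new_z = []
    for i in range(n):
        # Local dynamics: growth/rotation minus cubic amplitude saturation.
        local = (mu + 1j * omega) * z[i] - (abs(z[i]) ** 2) * z[i]
        # Diffusive pull towards neighbouring node states.
        diffusion = sum(adjacency[i][j] * (z[j] - z[i]) for j in range(n))
        new_z.append(z[i] + dt * (local + coupling * diffusion))
    return new_z


def simulate(z0, adjacency, mu=1.0, omega=1.0, coupling=0.5, dt=0.01, steps=5000):
    """Integrate the coupled system for a fixed number of Euler steps."""
    z = list(z0)
    for _ in range(steps):
        z = stuart_landau_step(z, adjacency, mu, omega, coupling, dt)
    return z


if __name__ == "__main__":
    # Two connected nodes with different initial amplitudes and phases.
    final = simulate([0.1 + 0j, 0.3 + 0.2j], [[0, 1], [1, 0]])
    for value in final:
        print(abs(value), value)
```

For `mu > 0` each amplitude settles near `sqrt(mu)` (the limit cycle) while the phases keep rotating, which is the amplitude regulation that phase-only Kuramoto models cannot express.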

Wasserstein Transfer Learning

May 23, 2025

Abstract:Transfer learning is a powerful paradigm for leveraging knowledge from source domains to enhance learning in a target domain. However, traditional transfer learning approaches often focus on scalar or multivariate data within Euclidean spaces, limiting their applicability to complex data structures such as probability distributions. To address this, we introduce a novel framework for transfer learning in regression models, where outputs are probability distributions residing in the Wasserstein space. When the informative subset of transferable source domains is known, we propose an estimator with provable asymptotic convergence rates, quantifying the impact of domain similarity on transfer efficiency. For cases where the informative subset is unknown, we develop a data-driven transfer learning procedure designed to mitigate negative transfer. The proposed methods are supported by rigorous theoretical analysis and are validated through extensive simulations and real-world applications.
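As a small illustration of what it means for outputs to live in the Wasserstein space, the sketch below computes the 2-Wasserstein distance between two one-dimensional empirical distributions with the same number of samples. In that special case the distance reduces to the root-mean-square difference between sorted samples (matched quantiles). The function name is hypothetical, and this shows only the underlying metric, not the transfer learning estimator proposed in the paper.

```python
import math


def wasserstein2_1d(samples_p, samples_q):
    """2-Wasserstein distance between two equal-size 1D empirical distributions.

    In one dimension the optimal coupling pairs the k-th smallest sample of
    one distribution with the k-th smallest sample of the other.
    """
    if len(samples_p) != len(samples_q) or not samples_p:
        raise ValueError("expects two non-empty samples of equal size")
    xs, ys = sorted(samples_p), sorted(samples_q)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(xs, ys)) / len(xs))


if __name__ == "__main__":
    # Shifting every sample by 1 moves the distribution a distance of 1.
    print(wasserstein2_1d([0.0, 1.0, 2.0], [3.0, 1.0, 2.0]))
```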

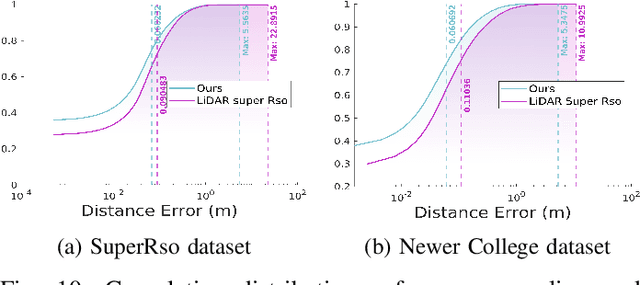

Digital Beamforming Enhanced Radar Odometry

Mar 17, 2025

Abstract:Radar has become an essential sensor for autonomous navigation, especially in challenging environments where camera and LiDAR sensors fail. 4D single-chip millimeter-wave radar systems, in particular, have drawn increasing attention thanks to their ability to provide spatial and Doppler information with low hardware cost and power consumption. However, most single-chip radar systems using traditional signal processing, such as Fast Fourier Transform, suffer from limited spatial resolution in radar detection, significantly limiting the performance of radar-based odometry and Simultaneous Localization and Mapping (SLAM) systems. In this paper, we develop a novel radar signal processing pipeline that integrates spatial domain beamforming techniques, and extend it to 3D Direction of Arrival estimation. Experiments using public datasets are conducted to evaluate and compare the performance of our proposed signal processing pipeline against traditional methodologies. These tests specifically focus on assessing structural precision across diverse scenes and measuring odometry accuracy in different radar odometry systems. This research demonstrates the feasibility of achieving more accurate radar odometry by simply replacing the standard FFT-based processing with the proposed pipeline. The codes are available at GitHub*.

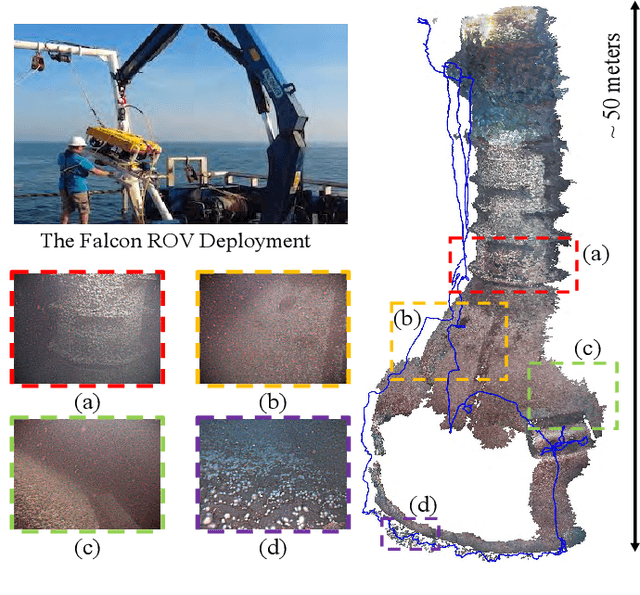

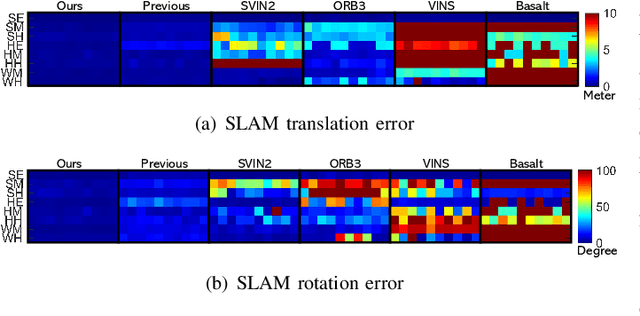

AQUA-SLAM: Tightly-Coupled Underwater Acoustic-Visual-Inertial SLAM with Sensor Calibration

Mar 14, 2025

Abstract:Underwater environments pose significant challenges for visual Simultaneous Localization and Mapping (SLAM) systems due to limited visibility, inadequate illumination, and sporadic loss of structural features in images. Addressing these challenges, this paper introduces a novel, tightly-coupled Acoustic-Visual-Inertial SLAM approach, termed AQUA-SLAM, to fuse a Doppler Velocity Log (DVL), a stereo camera, and an Inertial Measurement Unit (IMU) within a graph optimization framework. Moreover, we propose an efficient sensor calibration technique, encompassing multi-sensor extrinsic calibration (among the DVL, camera and IMU) and DVL transducer misalignment calibration, with a fast linear approximation procedure for real-time online execution. The proposed methods are extensively evaluated in a tank environment with ground truth, and validated for offshore applications in the North Sea. The results demonstrate that our method surpasses current state-of-the-art underwater and visual-inertial SLAM systems in terms of localization accuracy and robustness. The proposed system will be made open-source for the community.

On the Vulnerability of Concept Erasure in Diffusion Models

Feb 24, 2025

Abstract:The proliferation of text-to-image diffusion models has raised significant privacy and security concerns, particularly regarding the generation of copyrighted or harmful images. To address these issues, research on machine unlearning has developed various concept erasure methods, which aim to remove the effect of unwanted data through post-hoc training. However, we show these erasure techniques are vulnerable, where images of supposedly erased concepts can still be generated using adversarially crafted prompts. We introduce RECORD, a coordinate-descent-based algorithm that discovers prompts capable of eliciting the generation of erased content. We demonstrate that RECORD significantly beats the attack success rate of current state-of-the-art attack methods. Furthermore, our findings reveal that models subjected to concept erasure are more susceptible to adversarial attacks than previously anticipated, highlighting the urgency for more robust unlearning approaches. We open source all our code at https://github.com/LucasBeerens/RECORD

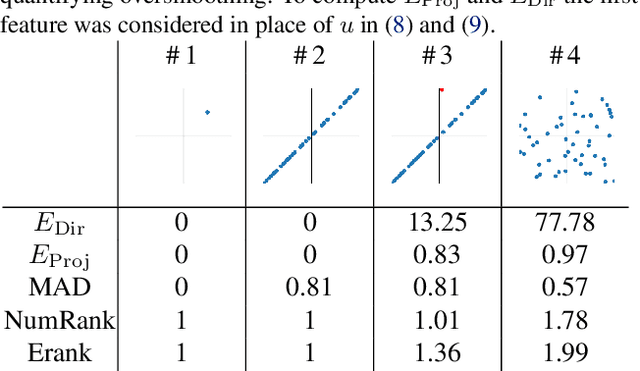

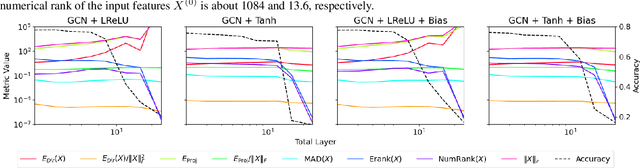

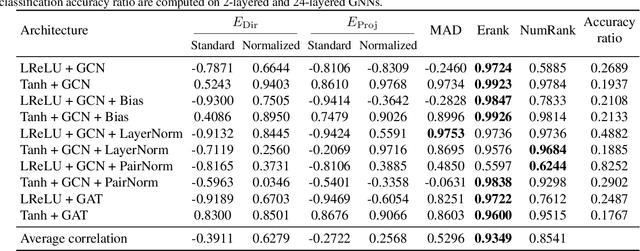

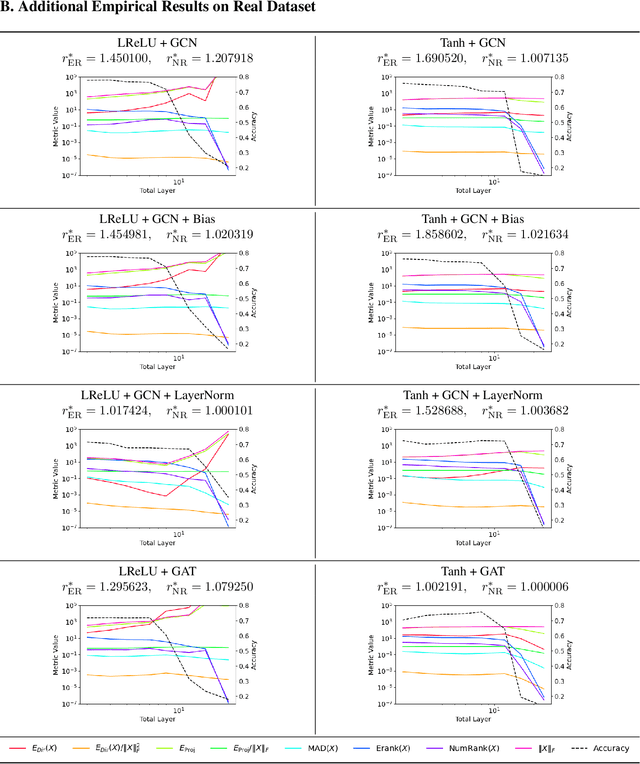

Rethinking Oversmoothing in Graph Neural Networks: A Rank-Based Perspective

Feb 07, 2025

Abstract:Oversmoothing is a fundamental challenge in graph neural networks (GNNs): as the number of layers increases, node embeddings become increasingly similar, and model performance drops sharply. Traditionally, oversmoothing has been quantified using metrics that measure the similarity of neighbouring node features, such as the Dirichlet energy. While these metrics are related to oversmoothing, we argue they have critical limitations and fail to reliably capture oversmoothing in realistic scenarios. For instance, they provide meaningful insights only for very deep networks and under somewhat strict conditions on the norm of network weights and feature representations. As an alternative, we propose measuring oversmoothing by examining the numerical or effective rank of the feature representations. We provide theoretical support for this approach, demonstrating that the numerical rank of feature representations converges to one for a broad family of nonlinear activation functions under the assumption of nonnegative trained weights. To the best of our knowledge, this is the first result that proves the occurrence of oversmoothing without assumptions on the boundedness of the weight matrices. Along with the theoretical findings, we provide extensive numerical evaluation across diverse graph architectures. Our results show that rank-based metrics consistently capture oversmoothing, whereas energy-based metrics often fail. Notably, we reveal that a significant drop in the rank aligns closely with performance degradation, even in scenarios where energy metrics remain unchanged.
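The two families of metrics contrasted in this abstract can be sketched in a few lines. The example below computes the Dirichlet energy (sum of squared feature differences over edges) and one common notion of numerical rank, the stable rank ||X||_F^2 / ||X||_2^2, with the spectral norm estimated by power iteration. The paper's exact definitions and normalisations may differ; the function names, the power-iteration estimate, and the toy mean-aggregation step are assumptions for illustration.

```python
import math


def dirichlet_energy(features, edges):
    """Sum over edges (i, j) of the squared distance between node features."""
    return sum(
        sum((a - b) ** 2 for a, b in zip(features[i], features[j]))
        for i, j in edges
    )


def spectral_norm_squared(features, iterations=200):
    """Largest eigenvalue of X^T X, estimated by power iteration."""
    dim = len(features[0])
    v = [1.0 + 0.1 * k for k in range(dim)]  # slightly asymmetric start vector
    norm = math.sqrt(sum(c * c for c in v))
    v = [c / norm for c in v]
    for _ in range(iterations):
        xv = [sum(row[k] * v[k] for k in range(dim)) for row in features]
        w = [sum(features[r][k] * xv[r] for r in range(len(features)))
             for k in range(dim)]
        norm = math.sqrt(sum(c * c for c in w))
        if norm == 0.0:
            return 0.0
        v = [c / norm for c in w]
    xv = [sum(row[k] * v[k] for k in range(dim)) for row in features]
    return sum(c * c for c in xv)  # Rayleigh quotient for unit-norm v


def stable_rank(features):
    """Numerical (stable) rank: squared Frobenius norm over squared spectral norm."""
    frob_sq = sum(c * c for row in features for c in row)
    spec_sq = spectral_norm_squared(features)
    return frob_sq / spec_sq if spec_sq > 0.0 else 0.0


def mean_aggregate(features, neighbours):
    """Toy smoothing step: each node averages itself and its neighbours."""
    out = []
    for i, row in enumerate(features):
        group = [row] + [features[j] for j in neighbours[i]]
        out.append([sum(col) / len(group) for col in zip(*group)])
    return out


if __name__ == "__main__":
    # Path graph 0 - 1 - 2 with one-hot initial features.
    x = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    neighbours = {0: [1], 1: [0, 2], 2: [1]}
    edges = [(0, 1), (1, 2)]
    for layer in range(21):
        if layer % 5 == 0:
            print(layer, dirichlet_energy(x, edges), stable_rank(x))
        x = mean_aggregate(x, neighbours)
```

In this linear toy setting both quantities shrink together as features collapse towards a rank-one matrix; the abstract's claim concerns realistic trained networks, where the energy can stay flat while the rank still drops.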

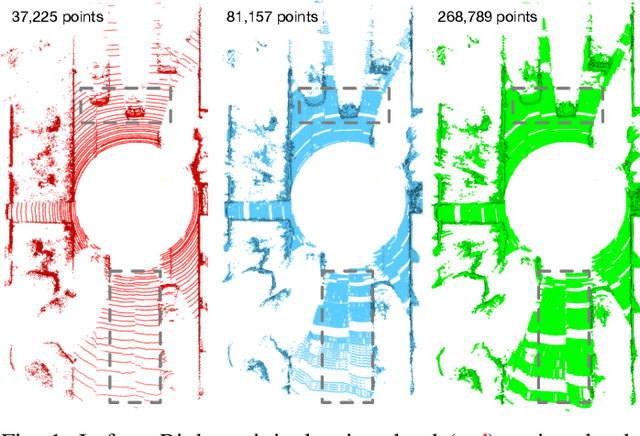

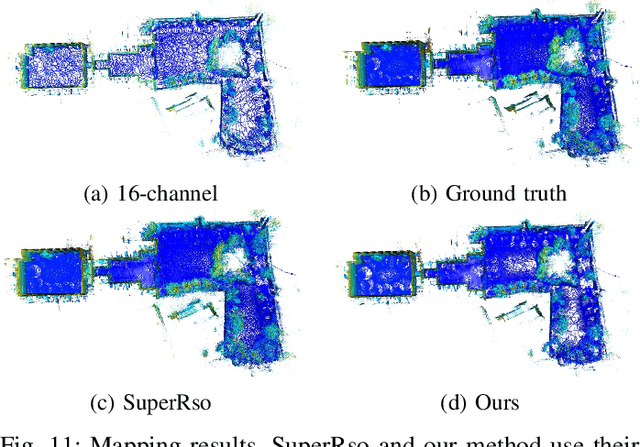

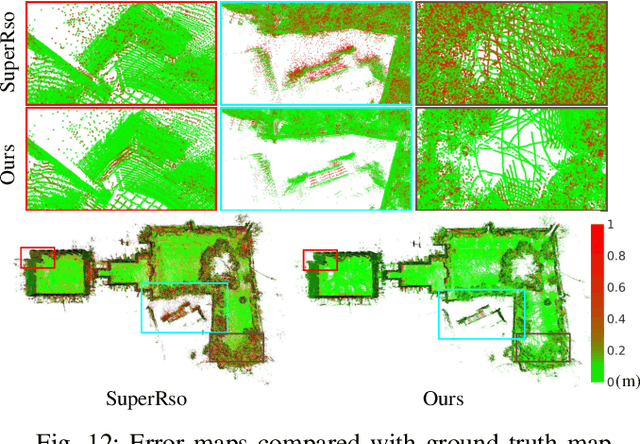

CURL: Continuous, Ultra-compact Representation for LiDAR

May 12, 2022

Abstract:Increasing the density of the 3D LiDAR point cloud is appealing for many applications in robotics. However, high-density LiDAR sensors are usually costly and still limited to a level of coverage per scan (e.g., 128 channels). Meanwhile, denser point cloud scans and maps mean larger volumes to store and longer times to transmit. Existing works focus on either improving point cloud density or compressing its size. This paper aims to design a novel 3D point cloud representation that can continuously increase point cloud density while reducing its storage and transmitting size. The pipeline of the proposed Continuous, Ultra-compact Representation of LiDAR (CURL) includes four main steps: meshing, upsampling, encoding, and continuous reconstruction. It is capable of transforming a 3D LiDAR scan or map into a compact spherical harmonics representation which can be used or transmitted in low latency to continuously reconstruct a much denser 3D point cloud. Extensive experiments on four public datasets, covering college gardens, city streets, and indoor rooms, demonstrate that much denser 3D point clouds can be accurately reconstructed using the proposed CURL representation while achieving up to 80% storage space-saving. We open-source the CURL codes for the community.

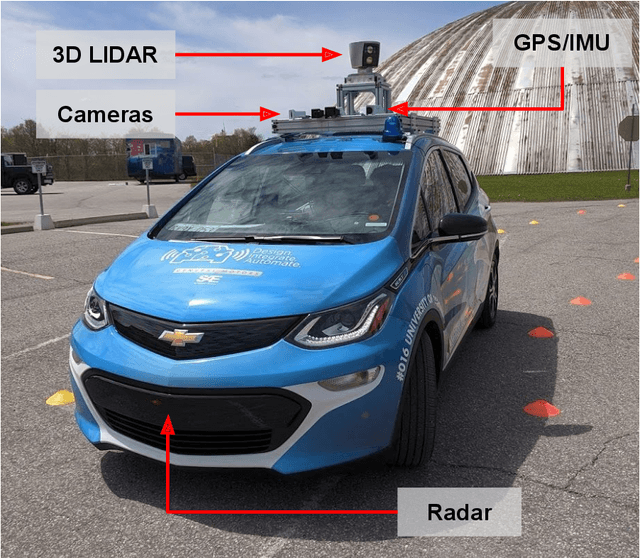

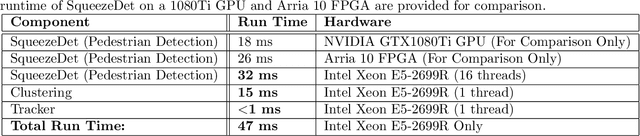

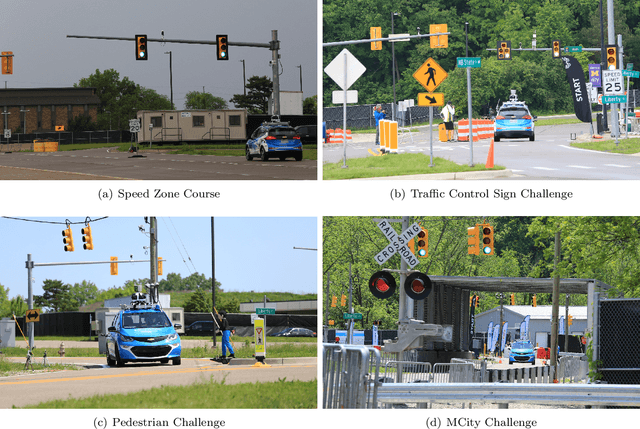

Zeus: A System Description of the Two-Time Winner of the Collegiate SAE AutoDrive Competition

Apr 19, 2020

Abstract:The SAE AutoDrive Challenge is a three-year collegiate competition to develop a self-driving car by 2020. The second year of the competition was held in June 2019 at MCity, a mock town built for self-driving car testing at the University of Michigan. Teams were required to autonomously navigate a series of intersections while handling pedestrians, traffic lights, and traffic signs. Zeus is aUToronto's winning entry in the AutoDrive Challenge. This article describes the system design and development of Zeus as well as many of the lessons learned along the way. This includes details on the team's organizational structure, sensor suite, software components, and performance at the Year 2 competition. With a team of mostly undergraduates and minimal resources, aUToronto has made progress towards a functioning self-driving vehicle, in just two years. This article may prove valuable to researchers looking to develop their own self-driving platform.
