Jiancheng Zhao

AniCrafter: Customizing Realistic Human-Centric Animation via Avatar-Background Conditioning in Video Diffusion Models

May 26, 2025

Abstract: Recent advances in video diffusion models have significantly improved character animation techniques. However, current approaches rely on basic structural conditions such as DWPose or SMPL-X to animate character images, limiting their effectiveness in open-domain scenarios with dynamic backgrounds or challenging human poses. In this paper, we introduce $\textbf{AniCrafter}$, a diffusion-based human-centric animation model that can seamlessly integrate and animate a given character into open-domain dynamic backgrounds while following given human motion sequences. Built on cutting-edge Image-to-Video (I2V) diffusion architectures, our model incorporates an innovative "avatar-background" conditioning mechanism that reframes open-domain human-centric animation as a restoration task, enabling more stable and versatile animation outputs. Experimental results demonstrate the superior performance of our method. Code will be available at https://github.com/MyNiuuu/AniCrafter.
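The abstract does not describe how the avatar and background conditions are built. The sketch below is only an illustration of the restoration framing. It assumes the condition is a rough clip made by alpha-compositing a rendered avatar onto each background frame, which a restoration-style model would then refine. The function names and the compositing scheme are hypothetical and are not taken from AniCrafter's code.

```python
import numpy as np

def compose_condition_frame(background, avatar_rgb, avatar_alpha):
    """Alpha-composite a rendered avatar over one background frame (hypothetical).

    background, avatar_rgb: (H, W, 3) float arrays in [0, 1]
    avatar_alpha: (H, W) float array in [0, 1]; 1 where the avatar covers the pixel
    """
    a = avatar_alpha[..., None]  # broadcast the mask over the RGB channels
    return a * avatar_rgb + (1.0 - a) * background

def compose_condition_video(backgrounds, avatar_rgbs, avatar_alphas):
    """Stack per-frame composites into a (T, H, W, 3) conditioning clip."""
    return np.stack([
        compose_condition_frame(b, r, m)
        for b, r, m in zip(backgrounds, avatar_rgbs, avatar_alphas)
    ])
```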

All-in-One Transferring Image Compression from Human Perception to Multi-Machine Perception

Apr 17, 2025

Abstract: Efficiently transferring Learned Image Compression (LIC) models from human perception to machine perception is an emerging challenge in vision-centric representation learning. Existing approaches typically adapt LIC to downstream tasks in a single-task manner, which is inefficient, lacks task interaction, and results in multiple task-specific bitstreams. To address these limitations, we propose an asymmetric adaptor framework that supports multi-task adaptation within a single model. Our method introduces a shared adaptor to learn general semantic features and task-specific adaptors to preserve task-level distinctions. With only lightweight plug-in modules and a frozen base codec, our method achieves strong performance across multiple tasks while maintaining compression efficiency. Experiments on the PASCAL-Context benchmark demonstrate that our method outperforms both Fully Fine-Tuned and other Parameter-Efficient Fine-Tuning (PEFT) baselines, validating the effectiveness of multi-vision transferring.
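Here is a minimal NumPy sketch of the asymmetric adaptor idea. It assumes residual bottleneck adaptors: a frozen base transform feeds one wider shared adaptor, followed by a narrower adaptor per task. The class names, bottleneck sizes, zero initialization, and task names are illustrative assumptions, not details from the paper.

```python
import numpy as np

class Adaptor:
    """Hypothetical residual bottleneck adaptor: x + relu(x @ down) @ up."""

    def __init__(self, dim, bottleneck, rng):
        self.down = rng.normal(scale=0.02, size=(dim, bottleneck))
        self.up = np.zeros((bottleneck, dim))  # zero init: starts as the identity map

    def __call__(self, x):
        return x + np.maximum(x @ self.down, 0.0) @ self.up

    def num_params(self):
        return self.down.size + self.up.size

class AsymmetricAdaptedCodec:
    """Frozen base features -> one shared adaptor -> one small adaptor per task."""

    def __init__(self, dim, tasks, shared_bottleneck=16, task_bottleneck=4, seed=0):
        rng = np.random.default_rng(seed)
        self.frozen = rng.normal(size=(dim, dim))  # stand-in for the frozen codec; never updated
        self.shared = Adaptor(dim, shared_bottleneck, rng)
        self.task_adaptors = {t: Adaptor(dim, task_bottleneck, rng) for t in tasks}

    def features(self, x, task):
        general = self.shared(x @ self.frozen)    # general semantics shared by all tasks
        return self.task_adaptors[task](general)  # task-level refinement

    def trainable_params(self):
        return self.shared.num_params() + sum(a.num_params() for a in self.task_adaptors.values())
```

The base weights are frozen, so only the shared and per-task adaptors count as trainable parameters. Adding a task therefore costs one small adaptor rather than another fine-tuned codec.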

Tree-NeRV: A Tree-Structured Neural Representation for Efficient Non-Uniform Video Encoding

Apr 17, 2025

Abstract: Implicit Neural Representations for Videos (NeRV) have emerged as a powerful paradigm for video representation, enabling direct mappings from frame indices to video frames. However, existing NeRV-based methods do not fully exploit temporal redundancy, as they rely on uniform sampling along the temporal axis, leading to suboptimal rate-distortion (RD) performance. To address this limitation, we propose Tree-NeRV, a novel tree-structured feature representation for efficient and adaptive video encoding. Unlike conventional approaches, Tree-NeRV organizes feature representations within a Binary Search Tree (BST), enabling non-uniform sampling along the temporal axis. Additionally, we introduce an optimization-driven sampling strategy, dynamically allocating higher sampling density to regions with greater temporal variation. Extensive experiments demonstrate that Tree-NeRV achieves superior compression efficiency and reconstruction quality, outperforming prior uniform sampling-based methods. Code will be released.
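The following toy sketch shows non-uniform temporal sampling with a binary search tree, assuming each node stores a feature for one frame index. It replaces the paper's optimization-driven sampling with a simple threshold rule. An interval is subdivided only when its summed per-frame variation exceeds `threshold`. Features for unsampled frames are linearly interpolated between the nearest stored frames, which are found by BST search. All names and the interpolation scheme are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

@dataclass
class Node:
    t: int                          # frame index: the BST key
    feature: List[float]            # would be a learnable feature in a real model
    left: Optional["Node"] = None
    right: Optional["Node"] = None

def build(lo: int, hi: int, variation: List[float], threshold: float,
          make_feature: Callable[[int], List[float]]) -> Optional[Node]:
    """Store the midpoint of [lo, hi]; subdivide only where temporal variation is high."""
    if lo > hi:
        return None
    mid = (lo + hi) // 2
    node = Node(mid, make_feature(mid))
    if sum(variation[lo:hi + 1]) > threshold:
        node.left = build(lo, mid - 1, variation, threshold, make_feature)
        node.right = build(mid + 1, hi, variation, threshold, make_feature)
    return node

def keys(node: Optional[Node]) -> List[int]:
    """In-order traversal: the sampled frame indices in ascending order."""
    return [] if node is None else keys(node.left) + [node.t] + keys(node.right)

def query(root: Node, t: int) -> List[float]:
    """BST search for the nearest stored frames around t, then linear interpolation."""
    lower = upper = None
    node = root
    while node is not None:
        if node.t == t:
            return node.feature
        if t < node.t:
            upper, node = node, node.left   # node is the best successor so far
        else:
            lower, node = node, node.right  # node is the best predecessor so far
    if lower is None or upper is None:      # outside the sampled range: clamp
        return (lower or upper).feature
    w = (t - lower.t) / (upper.t - lower.t)
    return [(1 - w) * a + w * b for a, b in zip(lower.feature, upper.feature)]
```

Consider a variation profile that is zero for frames 0 to 7 and one for frames 8 to 15, with `threshold=1.5`. The static half is covered by two nodes (frames 3 and 7), while every frame in the dynamic half gets its own node.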

MSPLoRA: A Multi-Scale Pyramid Low-Rank Adaptation for Efficient Model Fine-Tuning

Mar 27, 2025

Abstract: Parameter-Efficient Fine-Tuning (PEFT) has become an essential approach for adapting large-scale pre-trained models while reducing computational costs. Among PEFT methods, LoRA significantly reduces trainable parameters by decomposing weight updates into low-rank matrices. However, traditional LoRA applies a fixed rank across all layers, failing to account for the varying complexity of hierarchical information, which leads to inefficient adaptation and redundancy. To address this, we propose MSPLoRA (Multi-Scale Pyramid LoRA), which introduces Global Shared LoRA, Mid-Level Shared LoRA, and Layer-Specific LoRA to capture global patterns, mid-level features, and fine-grained information, respectively. This hierarchical structure reduces inter-layer redundancy while maintaining strong adaptation capability. Experiments on various NLP tasks demonstrate that MSPLoRA achieves more efficient adaptation and better performance while significantly reducing the number of trainable parameters. Furthermore, additional analyses based on Singular Value Decomposition validate its information decoupling ability, highlighting MSPLoRA as a scalable and effective optimization strategy for parameter-efficient fine-tuning in large language models. Our code is available at https://github.com/Oblivioniss/MSPLoRA.
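Here is a minimal NumPy sketch of a multi-scale low-rank update. It assumes each layer's weight update is the sum of three LoRA pairs: one shared globally, one shared by a contiguous group of layers, and one specific to the layer. The group size, the ranks, and the zero-initialized `B` matrices are illustrative assumptions rather than MSPLoRA's published configuration.

```python
import numpy as np

def lora_pair(d_out, d_in, rank, rng):
    """Return (B, A) with B zero-initialized, so B @ A starts at zero."""
    return np.zeros((d_out, rank)), rng.normal(scale=0.01, size=(rank, d_in))

class MultiScaleLoRA:
    """Per-layer update = global shared + group shared + layer-specific low-rank terms."""

    def __init__(self, num_layers, d, r_global, r_mid, r_layer, group_size, seed=0):
        rng = np.random.default_rng(seed)
        self.group_size = group_size
        n_groups = -(-num_layers // group_size)            # ceiling division
        self.global_pair = lora_pair(d, d, r_global, rng)  # shared by every layer
        self.mid_pairs = [lora_pair(d, d, r_mid, rng) for _ in range(n_groups)]
        self.layer_pairs = [lora_pair(d, d, r_layer, rng) for _ in range(num_layers)]

    def pairs_for(self, layer):
        return [self.global_pair, self.mid_pairs[layer // self.group_size], self.layer_pairs[layer]]

    def delta(self, layer):
        """Delta W_l = B_g A_g + B_m(l) A_m(l) + B_l A_l."""
        return sum(B @ A for B, A in self.pairs_for(layer))

    def num_params(self):
        pairs = [self.global_pair, *self.mid_pairs, *self.layer_pairs]
        return sum(B.size + A.size for B, A in pairs)
```

Take 12 layers of width 768 with ranks 8/4/2 and groups of 4 layers. This sketch then has 67,584 trainable parameters, versus 147,456 for a uniform rank-8 LoRA on the same layers. Each layer's update can still reach rank 14.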

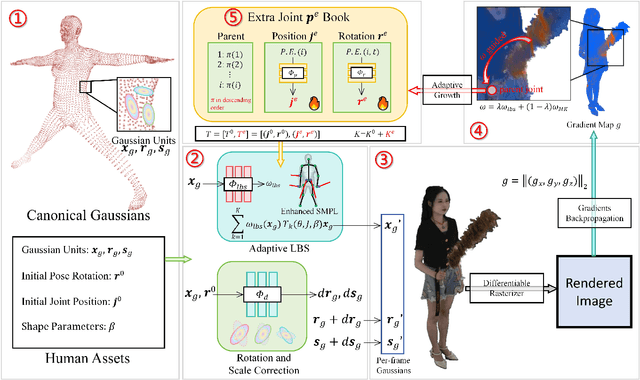

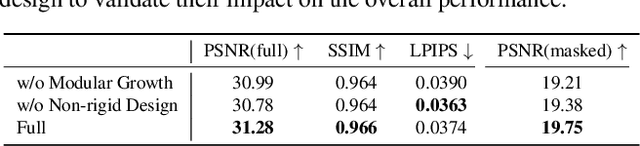

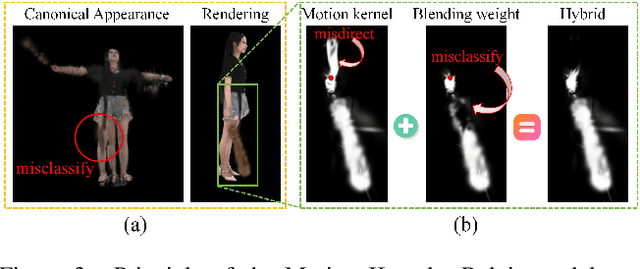

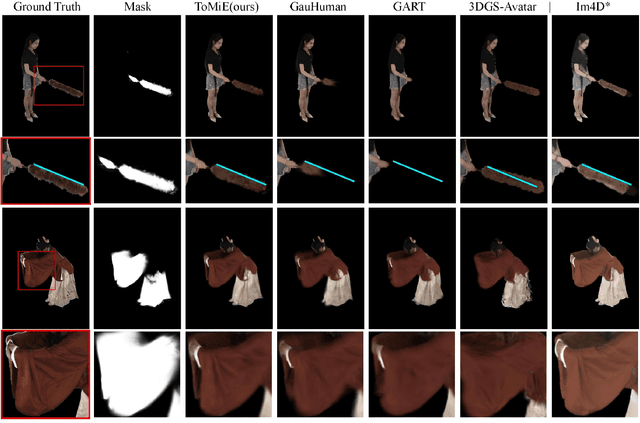

ToMiE: Towards Modular Growth in Enhanced SMPL Skeleton for 3D Human with Animatable Garments

Oct 10, 2024

Abstract: In this paper, we highlight a critical yet often overlooked factor in most 3D human tasks, namely modeling humans with complex garments. The parameterized formulation of SMPL is known to fit human skin well, whereas complex garments, e.g., hand-held objects and loose-fitting garments, are difficult to model within this unified framework because their movements are usually decoupled from the human body. To enhance the capability of the SMPL skeleton in this situation, we propose a modular growth strategy that enables the joint tree of the skeleton to expand adaptively. Specifically, our method, called ToMiE, consists of parent joint localization and external joint optimization. For parent joint localization, we employ a gradient-based approach guided by both LBS blending weights and motion kernels. Once the external joints are obtained, we optimize their transformations in SE(3) across different frames, enabling rendering and explicit animation. ToMiE outperforms other methods across various cases with garments, not only in rendering quality but also by offering free animation of grown joints, thereby enhancing the expressive ability of the SMPL skeleton for a broader range of applications.
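This NumPy sketch shows how a grown joint can plug into linear blend skinning (LBS). It rests on three assumptions:

- The parent joint is already known; ToMiE's gradient-based parent localization is not reproduced.
- The new joint takes a user-given share of each vertex's blend weight.
- Each per-frame SE(3) offset is expressed in rest-pose world coordinates, so transforms compose along the joint tree by matrix multiplication.

All function names are hypothetical.

```python
import numpy as np

def translation(x, y, z):
    """4x4 SE(3) matrix with identity rotation."""
    T = np.eye(4)
    T[:3, 3] = [x, y, z]
    return T

def grow_joint(parents, weights, parent_idx, new_column):
    """Attach an external joint under parent_idx; each vertex's weights still sum to 1."""
    c = np.asarray(new_column, dtype=float)[:, None]
    return parents + [parent_idx], np.hstack([weights * (1.0 - c), c])

def chain(parents, deltas):
    """Skinning transforms S_k = S_parent(k) @ D_k, assuming parents[k] < k.

    Each D_k is a per-frame SE(3) offset in rest-pose world coordinates, so a grown
    joint applies its own offset first and is then carried along by its parent.
    """
    S = []
    for k, p in enumerate(parents):
        S.append(deltas[k] if p < 0 else S[p] @ deltas[k])
    return np.stack(S)

def lbs(vertices, weights, transforms):
    """Linear blend skinning: v' = sum_k w_k S_k v in homogeneous coordinates."""
    v_h = np.hstack([vertices, np.ones((len(vertices), 1))])   # (V, 4)
    blended = np.einsum("vk,kij->vij", weights, transforms)     # (V, 4, 4)
    return np.einsum("vij,vj->vi", blended, v_h)[:, :3]
```

Consider a root translated by (1, 0, 0) and a grown child offset by (0, 0, 1). A vertex bound entirely to the child moves by (1, 0, 1), inheriting the parent's motion.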

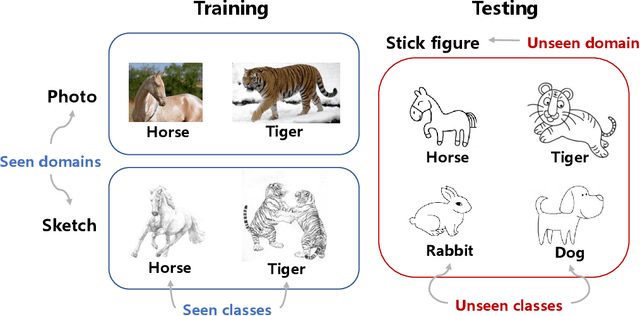

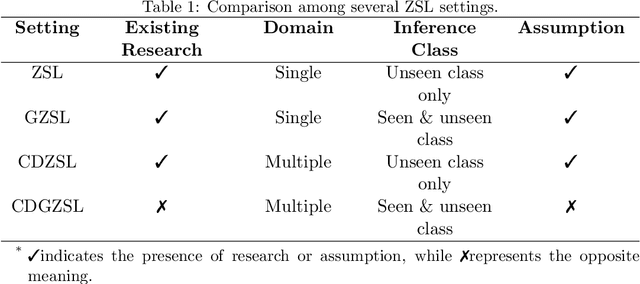

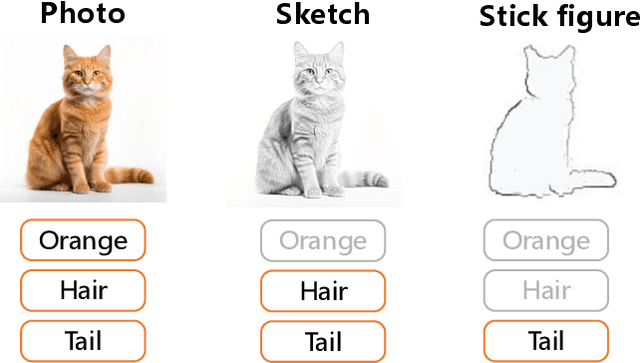

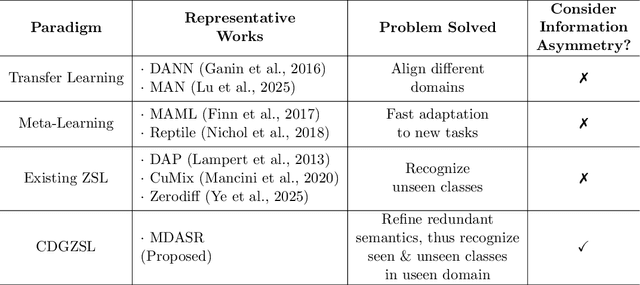

Less but Better: Enabling Generalized Zero-shot Learning Towards Unseen Domains by Intrinsic Learning from Redundant LLM Semantics

Mar 21, 2024

Abstract: Generalized zero-shot learning (GZSL) focuses on recognizing both seen and unseen classes under the domain shift problem (DSP), in which data from unseen classes may be misclassified as seen classes. However, existing GZSL methods are still limited to seen domains. In this work, we pioneer cross-domain GZSL (CDGZSL), which extends GZSL to unseen domains. Unlike existing GZSL methods, which alleviate the DSP by generating features of unseen classes from their semantics, CDGZSL must construct a common feature space across domains and acquire the corresponding intrinsic semantics shared among domains in order to transfer from seen to unseen domains. Considering the information asymmetry problem caused by redundant class semantics annotated with large language models (LLMs), we present Meta Domain Alignment Semantic Refinement (MDASR). Technically, MDASR consists of two parts. Inter-class Similarity Alignment (ISA) eliminates the non-intrinsic semantics not shared across all domains under the guidance of inter-class feature relationships. Unseen-class Meta Generation (UMG) preserves intrinsic semantics to maintain connectivity between seen and unseen classes by simulating feature generation. MDASR effectively aligns the redundant semantic space with the common feature space, mitigating the information asymmetry in CDGZSL. The effectiveness of MDASR is demonstrated on the Office-Home and Mini-DomainNet datasets, and we have shared the LLM-based semantics for these datasets as a benchmark.
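The abstract says ISA works under the guidance of inter-class feature relationships but gives no formula. As a hypothetical illustration, one way to check whether class semantics agree with the feature space is to compare their inter-class cosine-similarity matrices. A method in this spirit could then favor semantic components that shrink the gap. The loss below is an assumption, not MDASR's objective.

```python
import numpy as np

def cosine_similarity_matrix(x):
    """Pairwise cosine similarity between the rows of x (one row per class)."""
    x = x / np.linalg.norm(x, axis=1, keepdims=True)
    return x @ x.T

def similarity_alignment_loss(class_semantics, class_feature_means):
    """Mean squared gap between inter-class similarities in semantic vs. feature space."""
    s = cosine_similarity_matrix(class_semantics)
    f = cosine_similarity_matrix(class_feature_means)
    off_diag = ~np.eye(len(s), dtype=bool)   # self-similarity is always 1, so skip it
    return float(np.mean((s[off_diag] - f[off_diag]) ** 2))
```

Cosine similarity is invariant to rotations. Features related to the semantics by an orthogonal transform therefore give a loss of (numerically) zero.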

Learning to better see the unseen: Broad-Deep Mixed Anti-Forgetting Framework for Incremental Zero-Shot Fault Diagnosis

Mar 18, 2024

Abstract: Zero-shot fault diagnosis (ZSFD) can identify unseen faults by predicting fault attributes labeled by human experts. We first identify the need for ZSFD to handle continuous changes in industrial processes, i.e., the model's ability to adapt to new fault categories and attributes without forgetting the diagnostic ability it learned previously. The existing ZSFD paradigm cannot learn from evolving streams of training data in industrial scenarios. To overcome this, we propose the incremental ZSFD (IZSFD) paradigm for the first time; it incorporates category increment and attribute increment for both the traditional ZSFD and generalized ZSFD paradigms. To achieve IZSFD, we present a broad-deep mixed anti-forgetting framework (BDMAFF) that aims to learn from new fault categories and attributes. To tackle forgetting, BDMAFF effectively accumulates previously acquired knowledge from two perspectives: features and attribute prototypes. The feature memory is established through a deep generative model that employs anti-forgetting training strategies, ensuring that the generation quality for historical categories is supervised and maintained. The diagnosis model SEEs the UNSEEN faults with the help of samples generated by the generative model. The attribute prototype memory is established through a diagnosis model inspired by the broad learning system. Unlike traditional incremental learning algorithms, BDMAFF introduces a memory-driven iterative update strategy for the diagnosis model. This strategy allows the model to learn new faults and attributes without storing all historical training samples. The effectiveness of the proposed method is verified on a real hydraulic system and the Tennessee-Eastman benchmark process.
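The abstract does not specify BDMAFF's memory-driven update. As a stand-in, the sketch below uses a different but well-known technique in the same closed-form spirit as broad learning systems: a ridge regression from features to attributes. Its sufficient statistics (X^T X and X^T A) are accumulated batch by batch, so earlier training samples never need to be stored. Diagnosis then assigns each sample to the nearest attribute prototype, which also covers classes with no training data. Class and function names are hypothetical.

```python
import numpy as np

class IncrementalAttributeRegressor:
    """Ridge regression from features to attributes, refit from running sufficient statistics."""

    def __init__(self, n_features, n_attributes, l2=1e-2):
        self.xtx = l2 * np.eye(n_features)            # ridge term plus accumulated X^T X
        self.xty = np.zeros((n_features, n_attributes))
        self.W = np.zeros((n_features, n_attributes))

    def partial_fit(self, X, A):
        # Only X^T X and X^T A are kept, so earlier batches can be discarded.
        self.xtx += X.T @ X
        self.xty += X.T @ A
        self.W = np.linalg.solve(self.xtx, self.xty)
        return self

    def predict_attributes(self, X):
        return X @ self.W

def diagnose(model, X, prototypes):
    """Return, per sample, the index of the nearest attribute prototype (Euclidean)."""
    pred = model.predict_attributes(X)
    dists = np.linalg.norm(pred[:, None, :] - prototypes[None, :, :], axis=2)
    return np.argmin(dists, axis=1)
```

Fitting in two batches gives the same weights as fitting all the data at once. That equivalence is what lets old samples be discarded.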

Addressing Domain Shift via Knowledge Space Sharing for Generalized Zero-Shot Industrial Fault Diagnosis

Jun 04, 2023

Abstract: Fault diagnosis is a critical aspect of industrial safety, and supervised industrial fault diagnosis has been extensively researched. However, obtaining fault samples of all categories for model training can be challenging due to cost and safety concerns. As a result, generalized zero-shot industrial fault diagnosis, which aims to diagnose both seen and unseen faults, has gained attention. Nevertheless, the lack of training data for unseen faults poses a challenging domain shift problem (DSP), in which unseen faults are often identified as seen faults. In this article, we propose a knowledge space sharing (KSS) model to address the DSP in the generalized zero-shot industrial fault diagnosis task. The KSS model includes a generation mechanism (KSS-G) and a discrimination mechanism (KSS-D). KSS-G generates samples for rare faults by recombining transferable attribute features extracted from seen samples under the guidance of auxiliary knowledge. KSS-D is trained in a supervised way with the help of the generated samples and aims to address the DSP by modeling seen categories in the knowledge space. KSS-D avoids misclassifying rare faults as seen faults while still identifying seen fault samples. We conduct generalized zero-shot diagnosis experiments on the benchmark Tennessee-Eastman process, and the results show that our approach outperforms state-of-the-art methods for the generalized zero-shot industrial fault diagnosis problem.
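The abstract does not state how KSS-G represents or combines attribute features, so the sketch below is an illustration only. It assumes a linear model in which a sample's feature is the sum of per-attribute feature vectors. Under that assumption, attribute features can be estimated from seen faults by least squares. They can then be recombined according to an unseen fault's attribute vector.

```python
import numpy as np

def attribute_feature_bank(X, A):
    """Fit X ≈ A @ F by least squares; row k of F is a feature for attribute k."""
    F, *_ = np.linalg.lstsq(A, X, rcond=None)
    return F

def generate_fault_samples(F, attribute_vector, n, noise_std, rng):
    """Recombine attribute features for a (possibly unseen) fault, plus Gaussian noise."""
    mean = np.asarray(attribute_vector, dtype=float) @ F
    return mean + noise_std * rng.normal(size=(n, F.shape[1]))
```

This only works if the seen faults' attribute vectors span the attribute space. Otherwise some attribute features cannot be identified from seen data alone.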

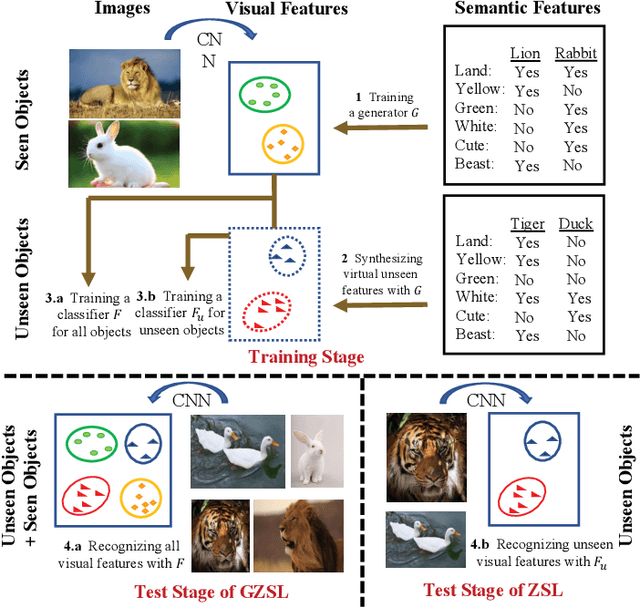

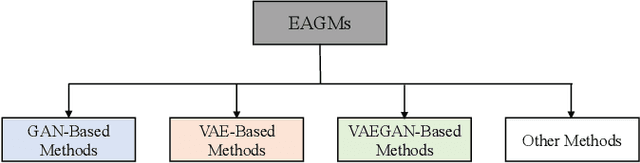

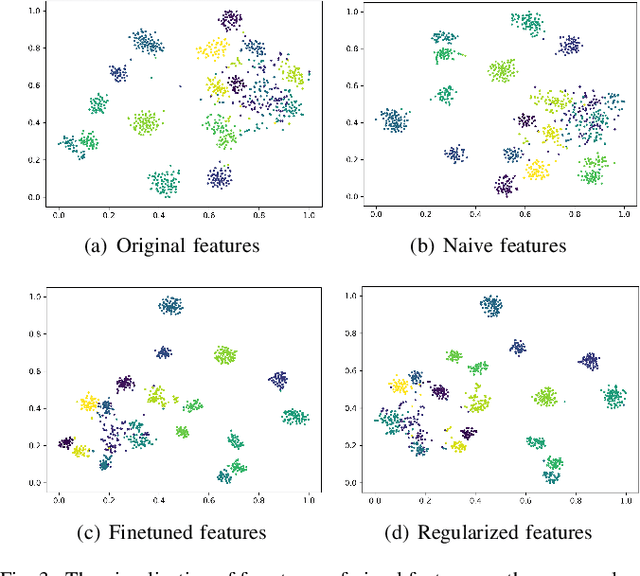

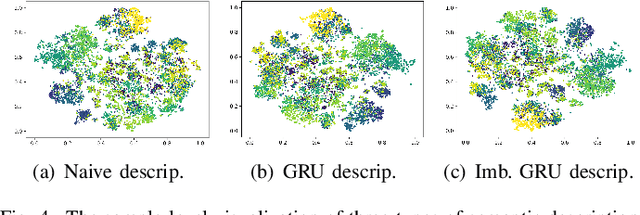

A Systematic Evaluation and Benchmark for Embedding-Aware Generative Models: Features, Models, and Any-shot Scenarios

Feb 16, 2023

Abstract: The embedding-aware generative model (EAGM) addresses the data insufficiency problem in zero-shot learning (ZSL) by constructing a generator between the semantic and visual feature spaces. Thanks to the predefined benchmarks and protocols, the number of proposed EAGMs for ZSL is increasing rapidly. We argue that it is time to take a step back and reconsider the embedding-aware generative paradigm. The main contribution of this paper is two-fold. First, while most researchers focus on improving EAGMs, the embedding features in benchmark datasets have been largely overlooked, which potentially limits the performance of EAGMs. We therefore conduct a systematic evaluation of ten representative EAGMs and show that even embarrassingly simple modifications to the embedding features can remarkably improve the performance of EAGMs for ZSL. Hence, more attention should be paid to the current embedding features in benchmark datasets. Second, based on five benchmark datasets, each with six any-shot learning scenarios, we systematically compare the performance of ten typical EAGMs for the first time and provide a strong baseline for zero-shot learning (ZSL) and few-shot learning (FSL). Meanwhile, we provide a comprehensive generative model repository, namely the generative any-shot learning (GASL) repository. It contains the models, features, parameters, and scenarios of EAGMs for ZSL and FSL. All results in this paper can be readily reproduced with a single command line based on GASL.
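Here is a minimal sketch of the embedding-aware generative pipeline. It uses a linear ridge map from class semantics to class-mean visual features in place of the learned neural generators that EAGMs actually use. Synthetic features for unseen classes are then drawn around the generated means. `l2_normalize` illustrates one kind of simple embedding-feature modification; the abstract does not say which modifications the paper evaluates. All names are hypothetical.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    """Scale each row to unit length (one example of a simple embedding-feature tweak)."""
    return x / np.maximum(np.linalg.norm(x, axis=1, keepdims=True), eps)

def fit_linear_generator(S, V, l2=1e-6):
    """Ridge map G from class semantics S (C, s) to class-mean visual features V (C, d)."""
    return np.linalg.solve(S.T @ S + l2 * np.eye(S.shape[1]), S.T @ V)

def synthesize(G, semantics, n_per_class, noise_std, rng):
    """Draw synthetic visual features for each class around its generated mean."""
    means = semantics @ G
    feats = np.repeat(means, n_per_class, axis=0)
    labels = np.repeat(np.arange(len(semantics)), n_per_class)
    return feats + noise_std * rng.normal(size=feats.shape), labels
```

The synthetic unseen-class features and labels can then train any ordinary classifier, which is the step that lets a generative model turn ZSL into a supervised problem.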
