Liangjun Feng

Cube: A Roblox View of 3D Intelligence

Mar 19, 2025

Abstract:Foundation models trained on vast amounts of data have demonstrated remarkable reasoning and generation capabilities in the domains of text, images, audio and video. Our goal at Roblox is to build such a foundation model for 3D intelligence, a model that can support developers in producing all aspects of a Roblox experience, from generating 3D objects and scenes to rigging characters for animation to producing programmatic scripts describing object behaviors. We discuss three key design requirements for such a 3D foundation model and then present our first step towards building such a model. We expect that 3D geometric shapes will be a core data type and describe our solution for 3D shape tokenizer. We show how our tokenization scheme can be used in applications for text-to-shape generation, shape-to-text generation and text-to-scene generation. We demonstrate how these applications can collaborate with existing large language models (LLMs) to perform scene analysis and reasoning. We conclude with a discussion outlining our path to building a fully unified foundation model for 3D intelligence.

Addressing Domain Shift via Knowledge Space Sharing for Generalized Zero-Shot Industrial Fault Diagnosis

Jun 04, 2023Abstract:Fault diagnosis is a critical aspect of industrial safety, and supervised industrial fault diagnosis has been extensively researched. However, obtaining fault samples of all categories for model training can be challenging due to cost and safety concerns. As a result, the generalized zero-shot industrial fault diagnosis has gained attention as it aims to diagnose both seen and unseen faults. Nevertheless, the lack of unseen fault data for training poses a challenging domain shift problem (DSP), where unseen faults are often identified as seen faults. In this article, we propose a knowledge space sharing (KSS) model to address the DSP in the generalized zero-shot industrial fault diagnosis task. The KSS model includes a generation mechanism (KSS-G) and a discrimination mechanism (KSS-D). KSS-G generates samples for rare faults by recombining transferable attribute features extracted from seen samples under the guidance of auxiliary knowledge. KSS-D is trained in a supervised way with the help of generated samples, which aims to address the DSP by modeling seen categories in the knowledge space. KSS-D avoids misclassifying rare faults as seen faults and identifies seen fault samples. We conduct generalized zero-shot diagnosis experiments on the benchmark Tennessee-Eastman process, and our results show that our approach outperforms state-of-the-art methods for the generalized zero-shot industrial fault diagnosis problem.

A Systematic Evaluation and Benchmark for Embedding-Aware Generative Models: Features, Models, and Any-shot Scenarios

Feb 16, 2023

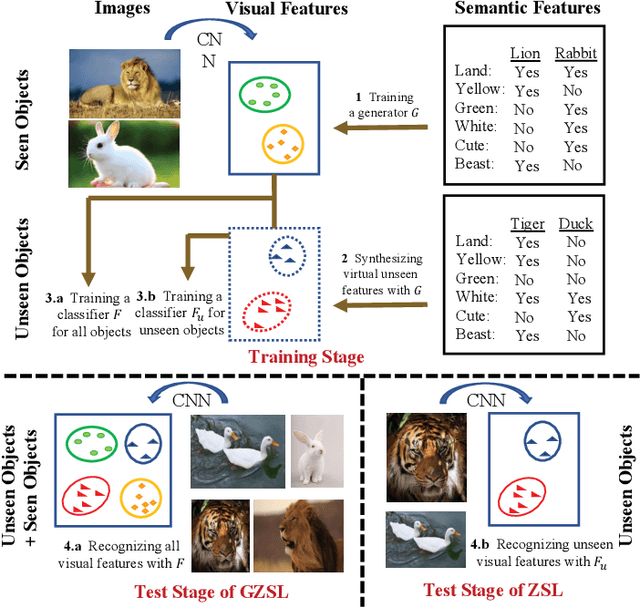

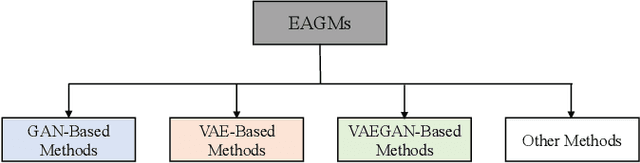

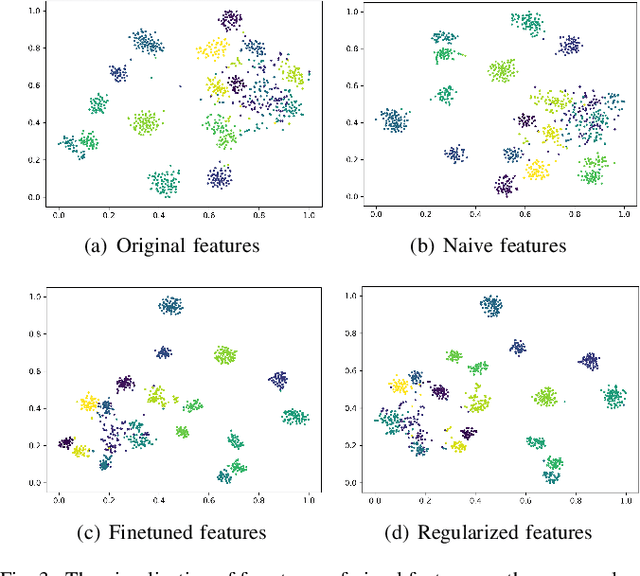

Abstract:Embedding-aware generative model (EAGM) addresses the data insufficiency problem for zero-shot learning (ZSL) by constructing a generator between semantic and visual feature spaces. Thanks to the predefined benchmark and protocols, the number of proposed EAGMs for ZSL is increasing rapidly. We argue that it is time to take a step back and reconsider the embedding-aware generative paradigm. The main work of this paper is two-fold. First, the embedding features in benchmark datasets are somehow overlooked, which potentially limits the performance of EAGMs, while most researchers focus on how to improve EAGMs. Therefore, we conduct a systematic evaluation of ten representative EAGMs and prove that even embarrassedly simple modifications on the embedding features can improve the performance of EAGMs for ZSL remarkably. So it's time to pay more attention to the current embedding features in benchmark datasets. Second, based on five benchmark datasets, each with six any-shot learning scenarios, we systematically compare the performance of ten typical EAGMs for the first time, and we give a strong baseline for zero-shot learning (ZSL) and few-shot learning (FSL). Meanwhile, a comprehensive generative model repository, namely, generative any-shot learning (GASL) repository, is provided, which contains the models, features, parameters, and scenarios of EAGMs for ZSL and FSL. Any results in this paper can be readily reproduced with only one command line based on GASL.

Bias-Eliminated Semantic Refinement for Any-Shot Learning

Feb 10, 2022

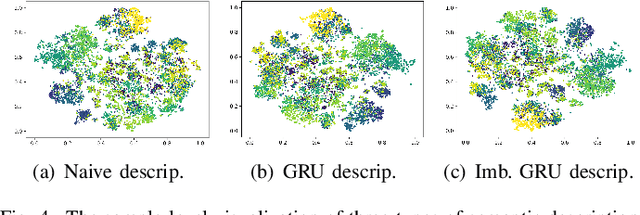

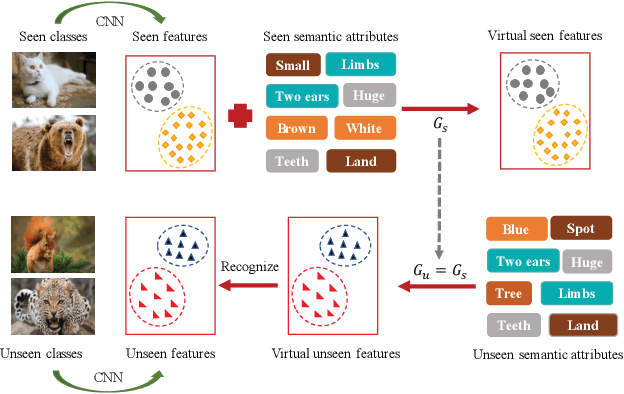

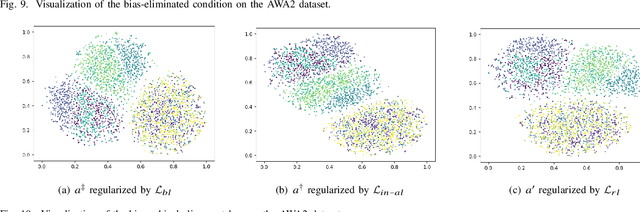

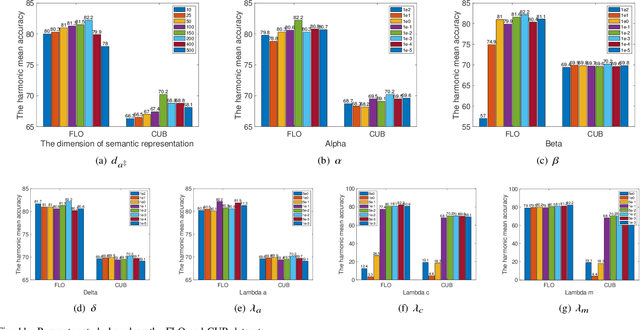

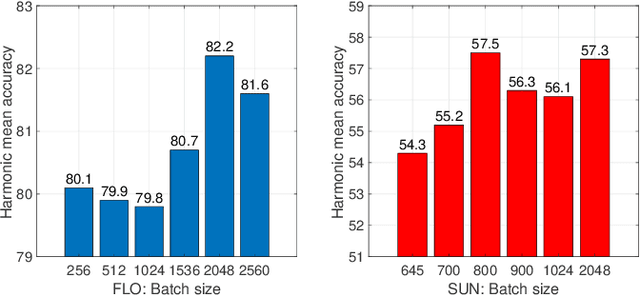

Abstract:When training samples are scarce, the semantic embedding technique, ie, describing class labels with attributes, provides a condition to generate visual features for unseen objects by transferring the knowledge from seen objects. However, semantic descriptions are usually obtained in an external paradigm, such as manual annotation, resulting in weak consistency between descriptions and visual features. In this paper, we refine the coarse-grained semantic description for any-shot learning tasks, ie, zero-shot learning (ZSL), generalized zero-shot learning (GZSL), and few-shot learning (FSL). A new model, namely, the semantic refinement Wasserstein generative adversarial network (SRWGAN) model, is designed with the proposed multihead representation and hierarchical alignment techniques. Unlike conventional methods, semantic refinement is performed with the aim of identifying a bias-eliminated condition for disjoint-class feature generation and is applicable in both inductive and transductive settings. We extensively evaluate model performance on six benchmark datasets and observe state-of-the-art results for any-shot learning; eg, we obtain 70.2% harmonic accuracy for the Caltech UCSD Birds (CUB) dataset and 82.2% harmonic accuracy for the Oxford Flowers (FLO) dataset in the standard GZSL setting. Various visualizations are also provided to show the bias-eliminated generation of SRWGAN. Our code is available.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge