Hongting Zhou

LogicMP: A Neuro-symbolic Approach for Encoding First-order Logic Constraints

Sep 29, 2023

Abstract:Integrating first-order logic constraints (FOLCs) with neural networks is a crucial but challenging problem since it involves modeling intricate correlations to satisfy the constraints. This paper proposes a novel neural layer, LogicMP, whose layers perform mean-field variational inference over a Markov Logic Network (MLN). It can be plugged into any off-the-shelf neural network to encode FOLCs while retaining modularity and efficiency. By exploiting the structure and symmetries in MLNs, we theoretically demonstrate that our well-designed, efficient mean-field iterations effectively mitigate the difficulty of MLN inference, reducing the inference from sequential calculation to a series of parallel tensor operations. Empirical results in three kinds of tasks over graphs, images, and text show that LogicMP outperforms advanced competitors in both performance and efficiency.

PKGM: A Pre-trained Knowledge Graph Model for E-commerce Application

Mar 02, 2022

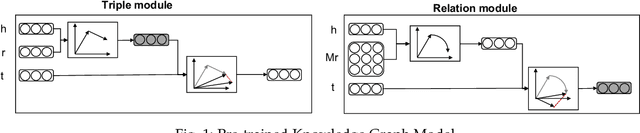

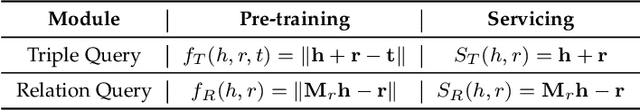

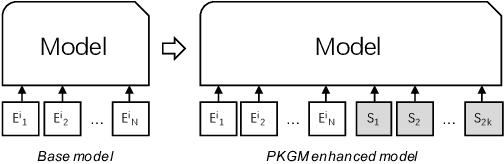

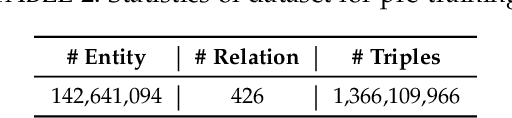

Abstract:In recent years, knowledge graphs have been widely applied as a uniform way to organize data and have enhanced many tasks requiring knowledge. On the online shopping platform Taobao, we built a billion-scale e-commerce product knowledge graph. It organizes data uniformly and provides item knowledge services for various tasks such as item recommendation. Usually, such knowledge services are provided through triple data, but this implementation requires (1) tedious data selection work on the product knowledge graph and (2) task model design work to infuse the knowledge from those triples. More importantly, the product knowledge graph is far from complete, resulting in error propagation to knowledge-enhanced tasks. To avoid these problems, we propose a Pre-trained Knowledge Graph Model (PKGM) for the billion-scale product knowledge graph. On the one hand, it can provide item knowledge services in a uniform way, with service vectors for embedding-based and item-knowledge-related task models, without accessing triple data. On the other hand, its service is provided based on an implicitly completed product knowledge graph, overcoming the common incompleteness issue. We also propose two general ways to integrate the service vectors from PKGM into downstream task models. We test PKGM on five knowledge-related tasks: item classification, item resolution, item recommendation, scene detection, and sequential recommendation. Experimental results show that PKGM introduces significant performance gains on these tasks, illustrating the usefulness of service vectors from PKGM.

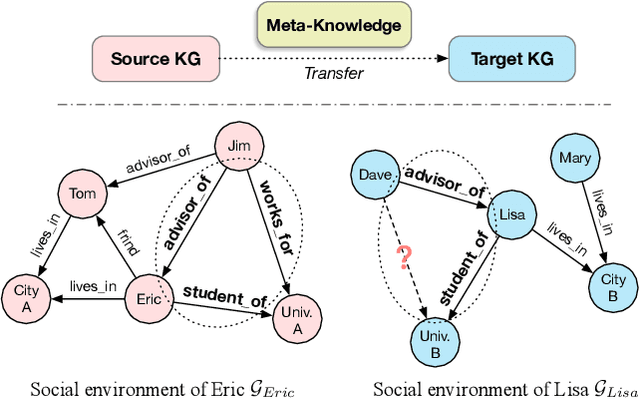

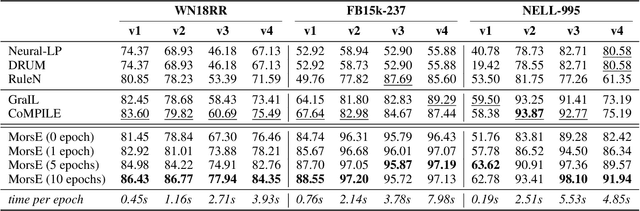

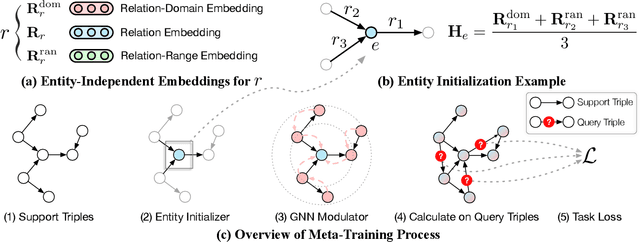

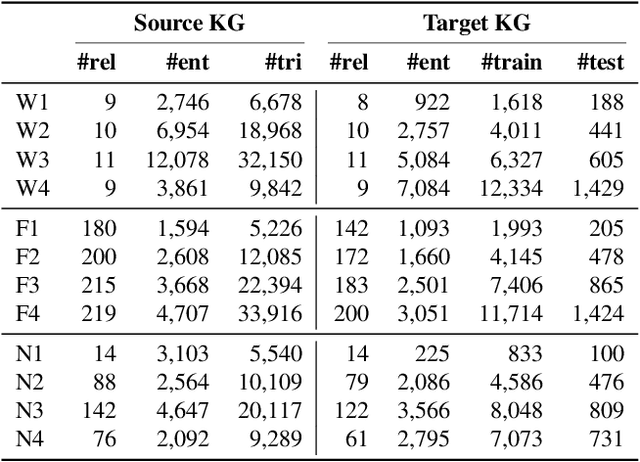

Standing on the Shoulders of Predecessors: Meta-Knowledge Transfer for Knowledge Graphs

Oct 27, 2021

Abstract:Knowledge graphs (KGs) have become widespread, and various knowledge graphs are constructed incessantly to support many in-KG and out-of-KG applications. During the construction of KGs, although new KGs may contain new entities with respect to constructed KGs, some entity-independent knowledge can be transferred from constructed KGs to new KGs. We call such knowledge meta-knowledge, and refer to the problem of transferring meta-knowledge from constructed (source) KGs to new (target) KGs to improve the performance of tasks on target KGs as meta-knowledge transfer for knowledge graphs. However, there is no available general framework that can tackle meta-knowledge transfer for both in-KG and out-of-KG tasks uniformly. Therefore, in this paper, we propose a framework, MorsE, which means conducting Meta-Learning for Meta-Knowledge Transfer via Knowledge Graph Embedding. MorsE represents the meta-knowledge via Knowledge Graph Embedding and learns the meta-knowledge by Meta-Learning. Specifically, MorsE uses an entity initializer and a Graph Neural Network (GNN) modulator to entity-independently obtain entity embeddings given a KG and is trained following the meta-learning setting to gain the ability of effectively obtaining embeddings. Experimental results on meta-knowledge transfer for both in-KG and out-of-KG tasks show that MorsE is able to learn and transfer meta-knowledge between KGs effectively, and outperforms existing state-of-the-art models.
