Haeil Lee

StablePrompt: Automatic Prompt Tuning using Reinforcement Learning for Large Language Models

Oct 10, 2024

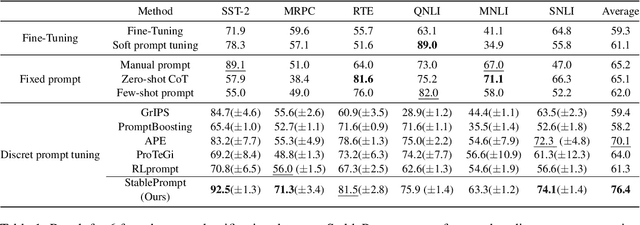

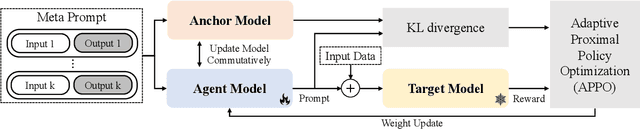

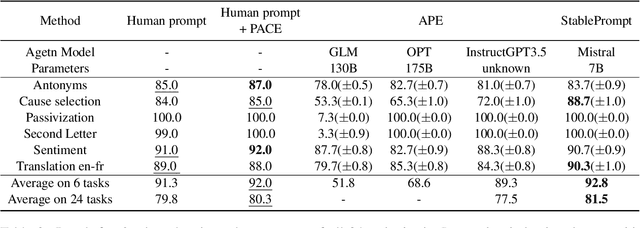

Abstract: Finding appropriate prompts for a specific task has become an important issue as the usage of Large Language Models (LLMs) has expanded. Reinforcement Learning (RL) is widely used for prompt tuning, but its inherent instability and environmental dependency make it difficult to use in practice. In this paper, we propose StablePrompt, which strikes a balance between training stability and search space, mitigating the instability of RL and producing high-performance prompts. We formulate prompt tuning as an online RL problem between the agent and target LLM and introduce Adaptive Proximal Policy Optimization (APPO). APPO introduces an LLM anchor model to adaptively adjust the rate of policy updates. This allows for flexible prompt search while preserving the linguistic ability of the pre-trained LLM. StablePrompt outperforms previous methods on various tasks including text classification, question answering, and text generation. Our code can be found on GitHub.

Beta Sampling is All You Need: Efficient Image Generation Strategy for Diffusion Models using Stepwise Spectral Analysis

Jul 16, 2024

Abstract: Generative diffusion models have emerged as a powerful tool for high-quality image synthesis, yet their iterative nature demands significant computational resources. This paper proposes an efficient time step sampling method based on an image spectral analysis of the diffusion process, aimed at optimizing the denoising process. Instead of the traditional uniform distribution-based time step sampling, we introduce a Beta distribution-like sampling technique that prioritizes critical steps in the early and late stages of the process. Our hypothesis is that certain steps exhibit significant changes in image content, while others contribute minimally. We validated our approach using Fourier transforms to measure frequency response changes at each step, revealing substantial low-frequency changes early on and high-frequency adjustments later. Experiments with ADM and Stable Diffusion demonstrated that our Beta Sampling method consistently outperforms uniform sampling, achieving better FID and IS scores, and offers competitive efficiency relative to state-of-the-art methods like AutoDiffusion. This work provides a practical framework for enhancing diffusion model efficiency by focusing computational resources on the most impactful steps, with potential for further optimization and broader application.
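As a rough illustration of Beta-like time step selection, the sketch below draws a reduced set of denoising steps from a Beta distribution whose mass sits near both ends of the schedule. The function name `beta_timesteps`, the shape parameters `alpha = beta = 0.5`, and the 1000-step schedule are assumptions chosen for illustration; the abstract does not state the values or the exact procedure the paper uses.

```python
import random


def beta_timesteps(num_steps, total_steps=1000, alpha=0.5, beta=0.5, seed=0):
    """Pick `num_steps` distinct time steps in [0, total_steps).

    With alpha < 1 and beta < 1 the Beta density is U-shaped, so draws
    cluster near the start and the end of the schedule.
    (Parameter values are illustrative assumptions, not the paper's.)
    """
    if num_steps > total_steps:
        raise ValueError("num_steps cannot exceed total_steps")
    rng = random.Random(seed)
    chosen = set()
    while len(chosen) < num_steps:
        u = rng.betavariate(alpha, beta)  # value in [0, 1]
        chosen.add(min(int(u * total_steps), total_steps - 1))
    # Denoising runs from the noisiest step (large t) down to t = 0.
    return sorted(chosen, reverse=True)


steps = beta_timesteps(10)
print(steps)
```

A uniform baseline would instead space the same number of steps evenly over the schedule; the comparison in the abstract is between these two ways of spending a fixed step budget.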

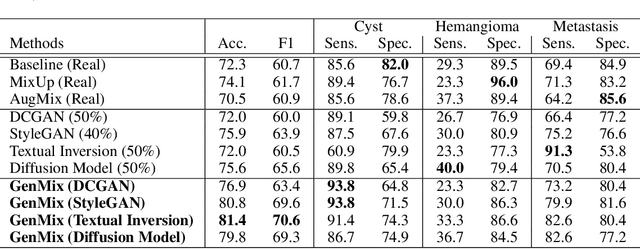

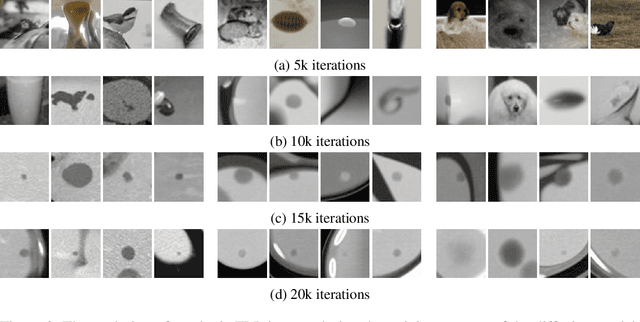

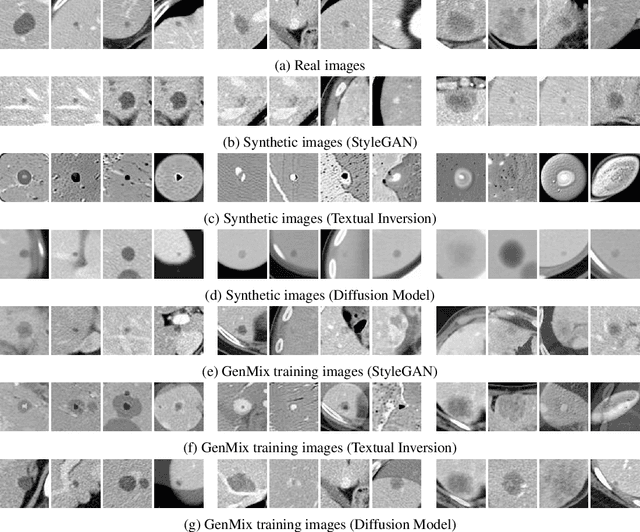

GenMix: Combining Generative and Mixture Data Augmentation for Medical Image Classification

May 31, 2024

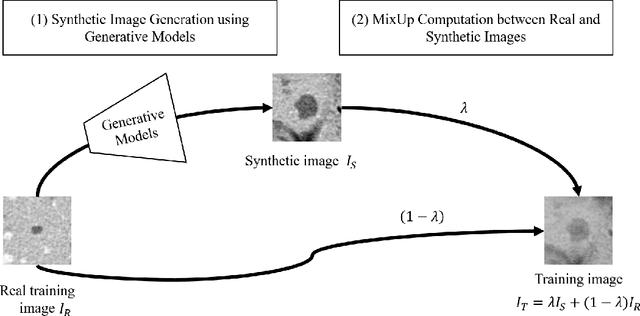

Abstract: In this paper, we propose a novel data augmentation technique called GenMix, which combines generative and mixture approaches to leverage the strengths of both methods. While generative models excel at creating new data patterns, they face challenges such as mode collapse in GANs and difficulties in training diffusion models, especially with limited medical imaging data. On the other hand, mixture models enhance class boundary regions but tend to favor the major class in scenarios with class imbalance. To address these limitations, GenMix integrates both approaches to complement each other. GenMix operates in two stages: (1) training a generative model to produce synthetic images, and (2) performing mixup between synthetic and real data. This process improves the quality and diversity of synthetic data while simultaneously benefiting from the new pattern learning of generative models and the boundary enhancement of mixture models. We validate the effectiveness of our method on the task of classifying focal liver lesions (FLLs) in CT images. Our results demonstrate that GenMix enhances the performance of various generative models, including DCGAN, StyleGAN, Textual Inversion, and Diffusion Models. Notably, the proposed method with Textual Inversion outperforms other methods without fine-tuning the diffusion model on the FLL dataset.
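The second stage, mixup between synthetic and real data, can be sketched as a convex combination of one real and one synthetic sample and of their labels. This is a minimal sketch on flat lists of floats; the helper names `sample_lambda` and `mixup`, and drawing the mixing weight from Beta(alpha, alpha) as in standard mixup, are assumptions rather than details given in the abstract.

```python
import random


def sample_lambda(alpha, rng):
    """Draw a mixing weight from Beta(alpha, alpha) (standard mixup convention, assumed here)."""
    return rng.betavariate(alpha, alpha)


def mixup(real, synthetic, real_label, synthetic_label, lam):
    """Blend a real and a synthetic sample, and their one-hot labels, with weight `lam`."""
    mixed = [lam * r + (1.0 - lam) * s for r, s in zip(real, synthetic)]
    mixed_label = [lam * a + (1.0 - lam) * b
                   for a, b in zip(real_label, synthetic_label)]
    return mixed, mixed_label


# Toy example: two-pixel "images" with one-hot labels for two classes.
real_image, real_label = [1.0, 0.0], [1.0, 0.0]
synthetic_image, synthetic_label = [0.0, 1.0], [0.0, 1.0]

lam = sample_lambda(1.0, random.Random(0))
print(mixup(real_image, synthetic_image, real_label, synthetic_label, lam))
```

In practice the synthetic sample would come from the generative model trained in the first stage; here it is a hard-coded placeholder.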

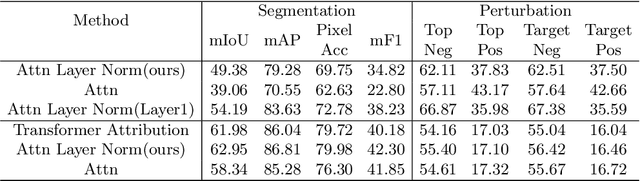

Inspecting Explainability of Transformer Models with Additional Statistical Information

Nov 19, 2023

Abstract: Transformers have become more popular in the vision domain in recent years, so there is a need for an effective way to interpret Transformer models through visualization. In recent work, Chefer et al. visualize Transformers on vision and multi-modal tasks effectively by combining attention layers to show the importance of each image patch. However, when applied to other Transformer variants such as the Swin Transformer, this method cannot focus on the predicted object. Our method, by considering the statistics of tokens in layer normalization layers, shows a strong ability to explain the Swin Transformer and ViT.

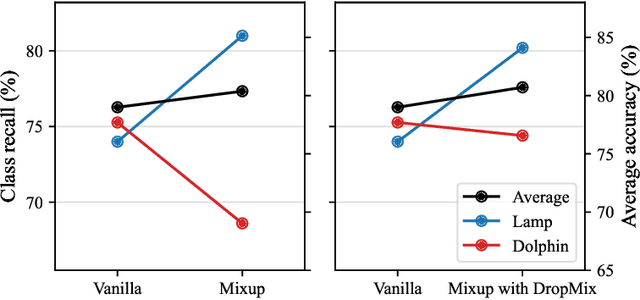

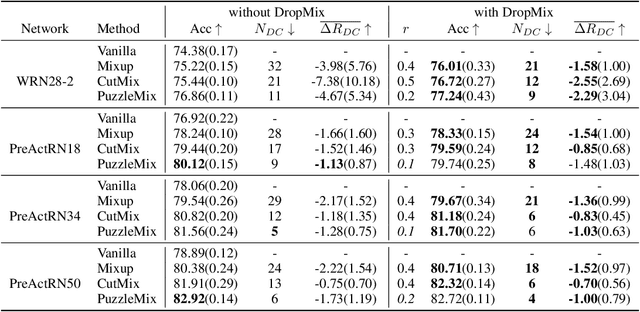

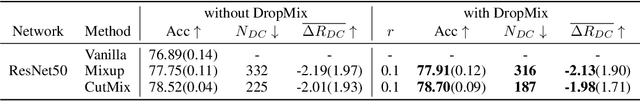

DropMix: Reducing Class Dependency in Mixed Sample Data Augmentation

Jul 18, 2023

Abstract: Mixed sample data augmentation (MSDA) is a widely used technique that has been found to improve performance in a variety of tasks. However, in this paper, we show that the effects of MSDA are class-dependent, with some classes seeing an improvement in performance while others experience a decline. To reduce class dependency, we propose the DropMix method, which excludes a specific percentage of data from the MSDA computation. By training on a combination of MSDA and non-MSDA data, the proposed method not only improves the performance of classes that were previously degraded by MSDA, but also increases overall average accuracy, as shown in experiments on two datasets (CIFAR-100 and ImageNet) using three MSDA methods (Mixup, CutMix and PuzzleMix).
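The idea of excluding a fixed percentage of data from the MSDA computation can be sketched per batch: a fraction `drop_rate` of samples is passed through unchanged and the rest are mixed. This is a hedged sketch, not the paper's procedure. The function names, the random choice of which samples to exclude, and the use of Mixup with a single Beta-distributed weight per batch are all assumptions.

```python
import random


def mixup_pair(a, b, lam):
    """Mixup of two (features, one_hot_label) samples with weight `lam`."""
    (xa, ya), (xb, yb) = a, b
    x = [lam * p + (1.0 - lam) * q for p, q in zip(xa, xb)]
    y = [lam * p + (1.0 - lam) * q for p, q in zip(ya, yb)]
    return x, y


def dropmix_batch(batch, drop_rate, alpha=1.0, seed=0):
    """Split a batch into untouched samples and mixed samples.

    `drop_rate` is the fraction of the batch excluded from mixing
    (how samples are selected is an assumption: uniformly at random).
    """
    rng = random.Random(seed)
    order = list(range(len(batch)))
    rng.shuffle(order)
    n_plain = round(len(batch) * drop_rate)
    plain = [batch[i] for i in order[:n_plain]]
    to_mix = [batch[i] for i in order[n_plain:]]
    partners = to_mix[:]
    rng.shuffle(partners)  # random mixing partner for each kept sample
    lam = rng.betavariate(alpha, alpha)
    mixed = [mixup_pair(a, b, lam) for a, b in zip(to_mix, partners)]
    return plain, mixed


# Toy batch: 10 samples, 2 features each, 2 classes.
batch = [([float(i), float(i + 1)], [1.0, 0.0] if i % 2 == 0 else [0.0, 1.0])
         for i in range(10)]
plain, mixed = dropmix_batch(batch, drop_rate=0.3)
print(len(plain), len(mixed))
```

Training would then compute the loss on both groups together, so the model sees a combination of MSDA and non-MSDA data in every batch.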

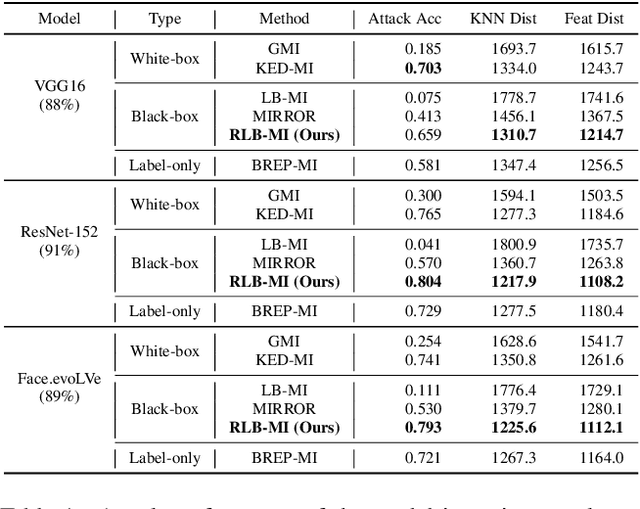

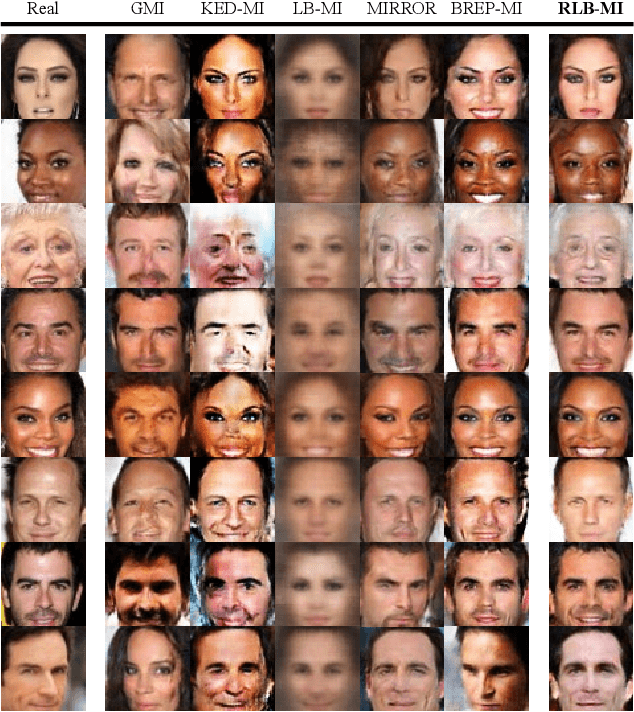

Reinforcement Learning-Based Black-Box Model Inversion Attacks

Apr 10, 2023

Abstract: Model inversion attacks are a type of privacy attack that reconstructs private data used to train a machine learning model, solely by accessing the model. Recently, white-box model inversion attacks leveraging Generative Adversarial Networks (GANs) to distill knowledge from public datasets have been receiving great attention because of their excellent attack performance. On the other hand, current black-box model inversion attacks that utilize GANs suffer from issues such as being unable to guarantee the completion of the attack process within a predetermined number of query accesses or to achieve the same level of performance as white-box attacks. To overcome these limitations, we propose a reinforcement learning-based black-box model inversion attack. We formulate the latent space search as a Markov Decision Process (MDP) problem and solve it with reinforcement learning. Our method utilizes the confidence scores of the generated images to provide rewards to an agent. Finally, the private data can be reconstructed using the latent vectors found by the agent trained in the MDP. The experimental results on various datasets and models demonstrate that our attack successfully recovers the private information of the target model by achieving state-of-the-art attack performance. We emphasize the importance of studies on privacy-preserving machine learning by proposing a more advanced black-box model inversion attack.

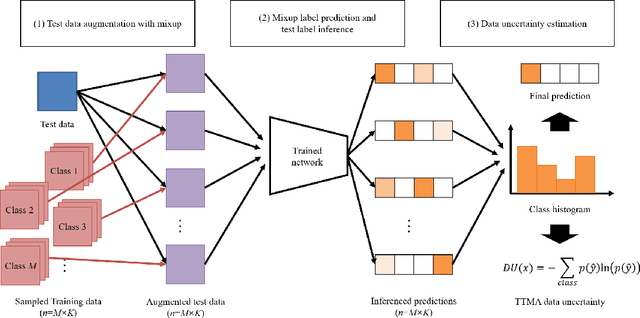

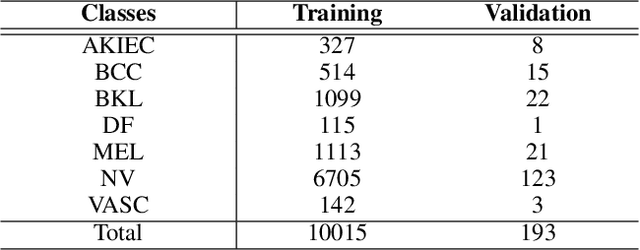

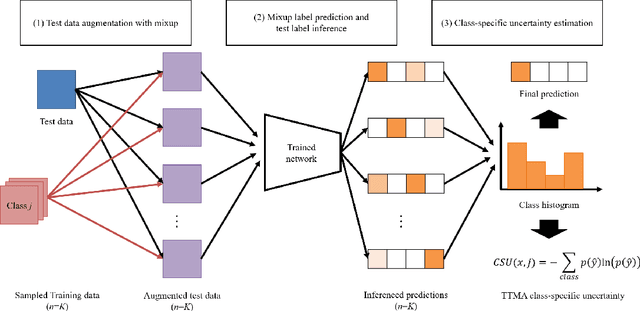

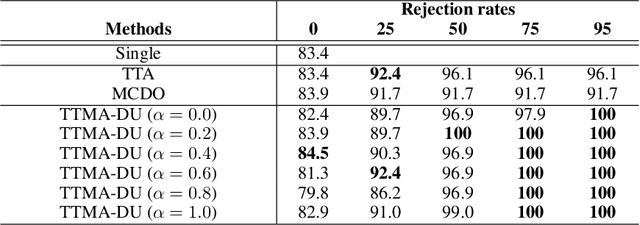

Test-Time Mixup Augmentation for Data and Class-Specific Uncertainty Estimation in Multi-Class Image Classification

Dec 01, 2022

Abstract: Uncertainty estimation of a trained deep learning network provides important information for improving the learning efficiency or evaluating the reliability of the network prediction. In this paper, we propose a method for uncertainty estimation in multi-class image classification using test-time mixup augmentation (TTMA). To improve on the ability of the existing aleatoric uncertainty to discriminate between correct and incorrect predictions, we propose a data uncertainty obtained by applying mixup augmentation to the test data and measuring the entropy of the histogram of predicted labels. In addition to the data uncertainty, we propose a class-specific uncertainty representing the aleatoric uncertainty associated with a specific class, which can provide information on the class confusion and class similarity of the trained network. The proposed methods are validated on two public datasets, the ISIC-18 skin lesion diagnosis dataset and the CIFAR-100 real-world image classification dataset. The experiments demonstrate that (1) the proposed data uncertainty better separates correct and incorrect predictions than the existing uncertainty measures thanks to the mixup perturbation, and (2) the proposed class-specific uncertainty provides information on the class confusion and class similarity of the trained network for both datasets.
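The data uncertainty described above, the entropy of the histogram of labels predicted for mixup-perturbed copies of a test sample, can be sketched as follows. The classifier `predict`, the fixed mixing weight `lam`, and the choice of mixing partners are hypothetical stand-ins; the abstract does not specify them.

```python
import math
from collections import Counter


def label_histogram_entropy(predicted_labels):
    """Shannon entropy (in nats) of the empirical distribution of predicted labels."""
    if not predicted_labels:
        raise ValueError("need at least one prediction")
    total = len(predicted_labels)
    counts = Counter(predicted_labels)
    return -sum((c / total) * math.log(c / total) for c in counts.values())


def ttma_data_uncertainty(test_x, partners, predict, lam=0.8):
    """Mix the test sample with each partner, classify, and score label disagreement.

    `predict` is a hypothetical classifier mapping a feature list to a label.
    """
    labels = []
    for partner in partners:
        mixed = [lam * a + (1.0 - lam) * b for a, b in zip(test_x, partner)]
        labels.append(predict(mixed))
    return label_histogram_entropy(labels)


# Toy classifier: label 1 if the first feature exceeds 0.5, else label 0.
def toy_predict(x):
    return 1 if x[0] > 0.5 else 0


partners = [[0.0, 0.0], [1.0, 1.0], [0.2, 0.9], [0.9, 0.1]]
print(ttma_data_uncertainty([0.55, 0.5], partners, toy_predict))
```

A sample whose predicted label is unchanged under every perturbation gets entropy 0, while a sample near a decision boundary, whose label flips under mixing, gets a higher score.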

Noisy Label Classification using Label Noise Selection with Test-Time Augmentation Cross-Entropy and NoiseMix Learning

Dec 01, 2022

Abstract: As the size of the datasets used in deep learning increases, the noisy label problem, that is, making deep learning robust to incorrectly labeled data, has become an important task. In this paper, we propose a method of learning from noisy label data using label noise selection with test-time augmentation (TTA) cross-entropy and classifier learning with the NoiseMix method. In the label noise selection, we propose TTA cross-entropy, which measures the cross-entropy of predictions on test-time augmented training data. In the classifier learning, we propose the NoiseMix method, based on the MixUp and BalancedMix methods, which mixes samples from the noisy and the clean label data. In experiments on the ISIC-18 public skin lesion diagnosis dataset, the proposed TTA cross-entropy outperformed the conventional cross-entropy and the TTA uncertainty in detecting label noise data in the label noise selection process. Moreover, the proposed NoiseMix not only outperformed the state-of-the-art methods in classification performance but also showed the most robustness to label noise in the classifier learning.

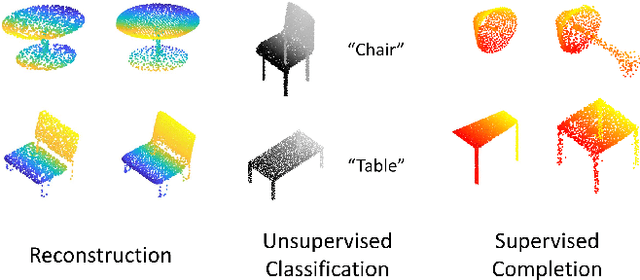

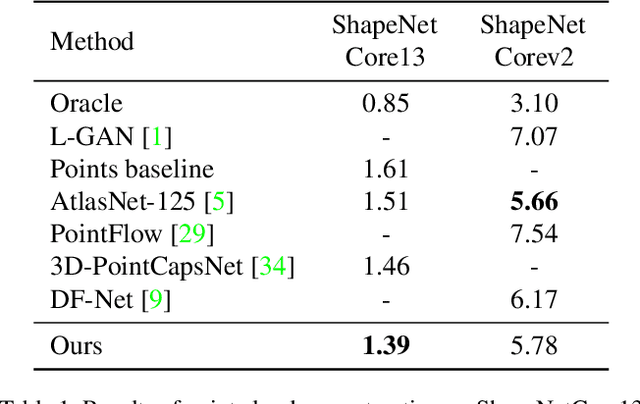

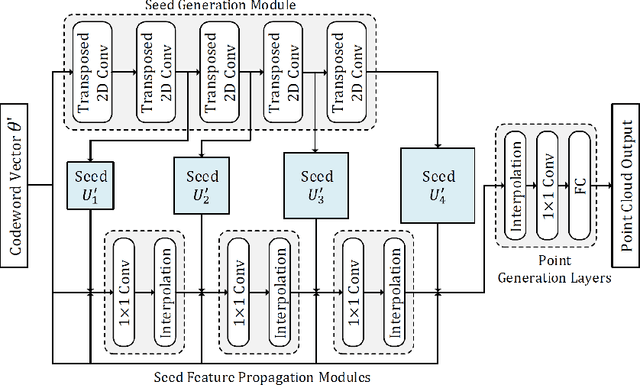

Progressive Seed Generation Auto-encoder for Unsupervised Point Cloud Learning

Dec 09, 2021

Abstract: With the development of 3D scanning technologies, 3D vision tasks have become a popular research area. Owing to the large amount of data acquired by sensors, unsupervised learning is essential for understanding and utilizing point clouds without an expensive annotation process. In this paper, we propose a novel framework and an effective auto-encoder architecture named "PSG-Net" for reconstruction-based learning of point clouds. Unlike existing studies that used fixed or random 2D points, our framework generates input-dependent point-wise features for the latent point set. PSG-Net uses the encoded input to produce point-wise features through the seed generation module and extracts richer features in multiple stages with gradually increasing resolution by applying the seed feature propagation module progressively. We demonstrate the effectiveness of PSG-Net experimentally; PSG-Net shows state-of-the-art performance in point cloud reconstruction and unsupervised classification, and achieves comparable performance to counterpart methods in supervised completion.

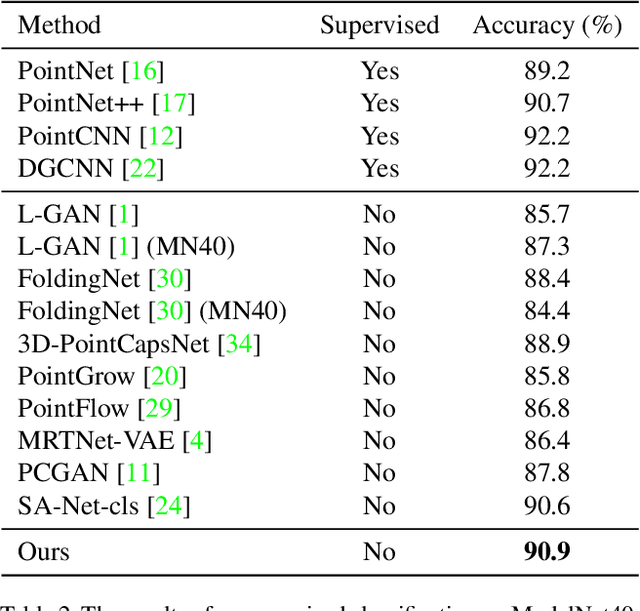

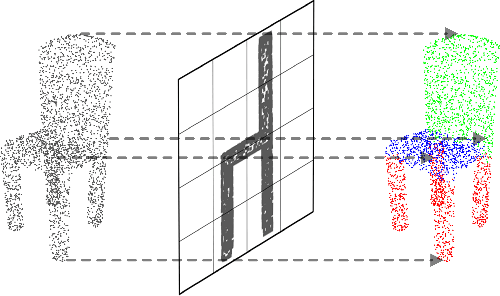

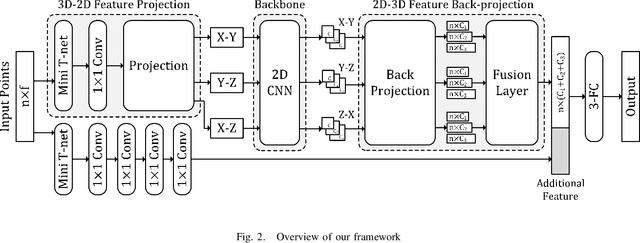

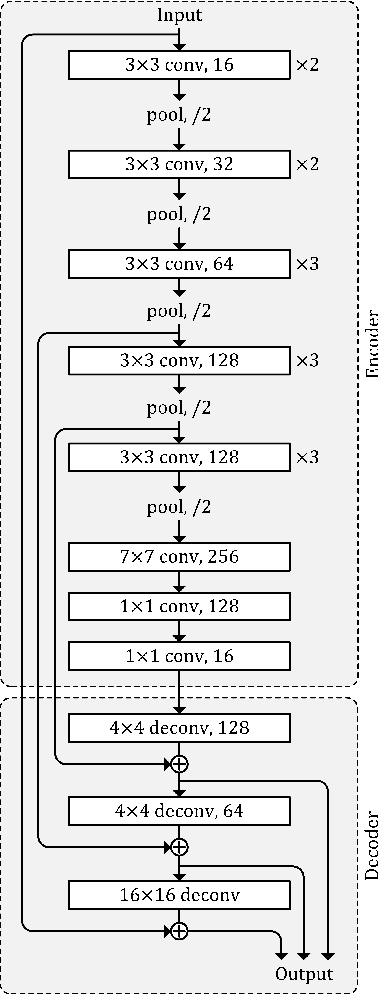

PBP-Net: Point Projection and Back-Projection Network for 3D Point Cloud Segmentation

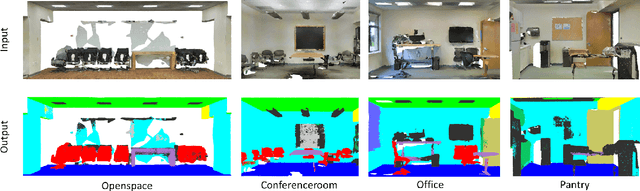

Nov 02, 2020

Abstract: Following considerable development in 3D scanning technologies, many studies have recently been proposed with various approaches for 3D vision tasks, including some methods that utilize 2D convolutional neural networks (CNNs). However, even though 2D CNNs have achieved high performance in many 2D vision tasks, existing works have not effectively applied them to 3D vision tasks. In particular, segmentation has not been well studied because of the difficulty of dense prediction for each point, which requires rich feature representation. In this paper, we propose a simple and efficient architecture named point projection and back-projection network (PBP-Net), which leverages 2D CNNs for 3D point cloud segmentation. Three modules are introduced, which respectively project the 3D point cloud onto 2D planes, extract features using a 2D CNN backbone, and back-project the features onto the original 3D point cloud. To demonstrate effective 3D feature extraction using a 2D CNN, we perform various experiments including comparison to recent methods. We analyze the proposed modules through ablation studies and perform experiments on object part segmentation (ShapeNet-Part dataset) and indoor scene semantic segmentation (S3DIS dataset). The experimental results show that the proposed PBP-Net achieves comparable performance to existing state-of-the-art methods.
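The projection and back-projection steps can be illustrated with a minimal sketch: each 3D point is mapped to a pixel on a 2D grid, and per-pixel features are later gathered back to the points through the same pixel indices. The orthographic projection, the assumption that coordinates are normalized to [0, 1), and the function names are illustrative choices, not the paper's modules; the 2D CNN is replaced by a hard-coded feature map.

```python
def project_to_plane(points, resolution, axes=(0, 1)):
    """Orthographically project 3D points (coordinates assumed in [0, 1)) onto a grid.

    `axes` selects which two coordinates span the plane, e.g. (0, 1) for xy.
    Returns one (u, v) pixel index per point.
    """
    pixels = []
    for p in points:
        u = min(int(p[axes[0]] * resolution), resolution - 1)
        v = min(int(p[axes[1]] * resolution), resolution - 1)
        pixels.append((u, v))
    return pixels


def back_project(feature_map, pixels):
    """Give each point the feature stored at the pixel it was projected to."""
    return [feature_map[u][v] for u, v in pixels]


points = [(0.1, 0.9, 0.5), (0.6, 0.2, 0.3)]
pixels = project_to_plane(points, resolution=4)

# Stand-in for 2D CNN output: one scalar feature per pixel.
feature_map = [[u * 10 + v for v in range(4)] for u in range(4)]
print(pixels, back_project(feature_map, pixels))
```

Projecting onto several planes (for example xy, yz, and xz) and combining the back-projected features per point would give each point information from multiple views.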
