Erik Billing

Advantages of Multimodal versus Verbal-Only Robot-to-Human Communication with an Anthropomorphic Robotic Mock Driver

Jul 03, 2023

Abstract: Robots are increasingly used in shared environments with humans, making effective communication a necessity for successful human-robot interaction. In our work, we study a crucial component: active communication of robot intent. Here, we present an anthropomorphic solution where a humanoid robot communicates the intent of its host robot acting as an "Anthropomorphic Robotic Mock Driver" (ARMoD). We evaluate this approach in two experiments in which participants work alongside a mobile robot on various tasks, while the ARMoD communicates a need for human attention, when required, or gives instructions to collaborate on a joint task. The experiments feature two interaction styles of the ARMoD: a verbal-only mode using only speech and a multimodal mode, additionally including robotic gaze and pointing gestures to support communication and register intent in space. Our results show that the multimodal interaction style, including head movements and eye gaze as well as pointing gestures, leads to more natural fixation behavior. Participants naturally identified and fixated longer on the areas relevant for intent communication, and reacted faster to instructions in collaborative tasks. Our research further indicates that the ARMoD intent communication improves engagement and social interaction with mobile robots in workplace settings.

Ethical Challenges in the Human-Robot Interaction Field

Apr 15, 2021

Abstract: The aim of this extended abstract is to introduce five examples of ethical issues in HRI that could have potential ethical implications, particularly on HRI participants. We consider these examples important to discuss in order to reach a consensus on how to handle them. Due to space limitations, this list is far from exhaustive and we hope that it can lead to a wider discussion that stimulates HRI researchers to think ethically. Previous work has shown a trend of underreporting ethical conduct in the HRI field; in this extended abstract we consider some of the ethical issues that could arise in HRI research.

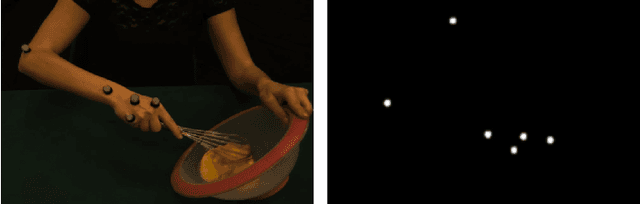

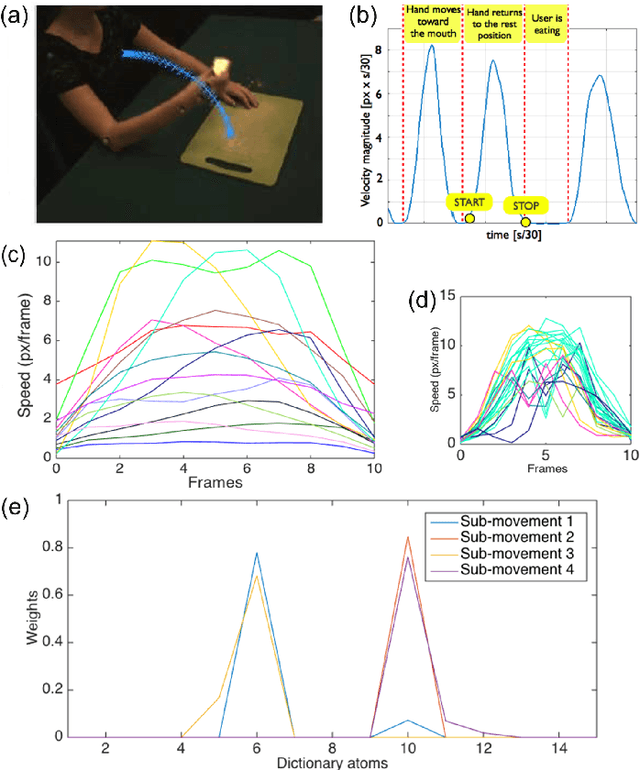

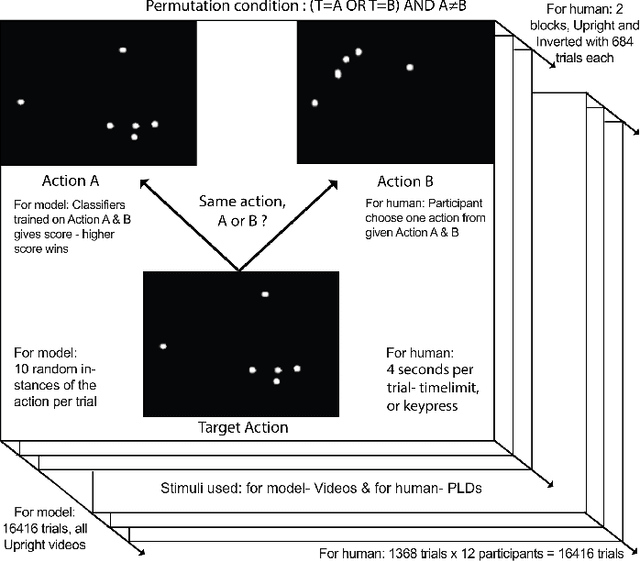

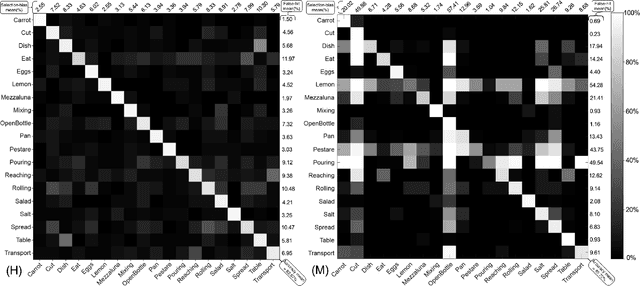

Action similarity judgment based on kinematic primitives

Aug 30, 2020

Abstract: Understanding which features humans rely on in visually recognizing action similarity is a crucial step towards a clearer picture of human action perception from a learning and developmental perspective. In the present work, we investigate to what extent a computational model based on kinematics can determine action similarity and how its performance relates to human similarity judgments of the same actions. To this aim, twelve participants perform an action similarity task, and their performance is compared to that of a computational model solving the same task. The chosen model has its roots in developmental robotics and performs action classification based on learned kinematic primitives. The comparative experiment results show that both the model and human participants can reliably identify whether two actions are the same or not. However, the model produces more false hits and has a greater selection bias than human participants. A possible reason for this is the particular sensitivity of the model towards kinematic primitives of the presented actions. In a second experiment, human participants' performance on an action identification task indicated that they relied solely on kinematic information rather than on action semantics. The results show that both the model and human performance are highly accurate in an action similarity task based on kinematic-level features, which can provide an essential basis for classifying human actions.

Proceedings of the SREC Workshop at HRI 2019

Sep 05, 2019

Abstract: Robot-Assisted Therapy (RAT) has successfully been used in Human-Robot Interaction (HRI) research by including social robots in health-care interventions by virtue of their ability to engage human users in both social and emotional dimensions. Robots used for these tasks must be designed with several user groups in mind, including both individuals receiving therapy and care professionals responsible for the treatment. These robots must also be able to perceive their context of use, recognize human actions and intentions, and follow the therapeutic goals to perform meaningful and personalized treatment. Effective interactions require robots to be capable of coordinated, timely behavior in response to social cues. This means being able to estimate and predict levels of engagement, attention, intentionality and emotional state during human-robot interactions. An additional challenge for social robots in therapy and care is the wide range of needs and conditions the different users can have during their interventions: even if they share the same pathologies, their current requirements and the objectives of their therapies can vary extensively. Therefore, it becomes crucial for robots to adapt their behaviors and interaction scenarios to the specific needs, preferences and requirements of the patients they interact with. This personalization should be considered in terms of the robot behavior and the intervention scenario and must reflect the needs, preferences and requirements of the user.
