Deqing Zou

Revisiting Classification Taxonomy for Grammatical Errors

Feb 18, 2025Abstract:Grammatical error classification plays a crucial role in language learning systems, but existing classification taxonomies often lack rigorous validation, leading to inconsistencies and unreliable feedback. In this paper, we revisit previous classification taxonomies for grammatical errors by introducing a systematic and qualitative evaluation framework. Our approach examines four aspects of a taxonomy, i.e., exclusivity, coverage, balance, and usability. Then, we construct a high-quality grammatical error classification dataset annotated with multiple classification taxonomies and evaluate them grounding on our proposed evaluation framework. Our experiments reveal the drawbacks of existing taxonomies. Our contributions aim to improve the precision and effectiveness of error analysis, providing more understandable and actionable feedback for language learners.

Position: LLMs Can be Good Tutors in Foreign Language Education

Feb 08, 2025Abstract:While recent efforts have begun integrating large language models (LLMs) into foreign language education (FLE), they often rely on traditional approaches to learning tasks without fully embracing educational methodologies, thus lacking adaptability to language learning. To address this gap, we argue that LLMs have the potential to serve as effective tutors in FLE. Specifically, LLMs can play three critical roles: (1) as data enhancers, improving the creation of learning materials or serving as student simulations; (2) as task predictors, serving as learner assessment or optimizing learning pathway; and (3) as agents, enabling personalized and inclusive education. We encourage interdisciplinary research to explore these roles, fostering innovation while addressing challenges and risks, ultimately advancing FLE through the thoughtful integration of LLMs.

Towards Making Deep Learning-based Vulnerability Detectors Robust

Aug 04, 2021

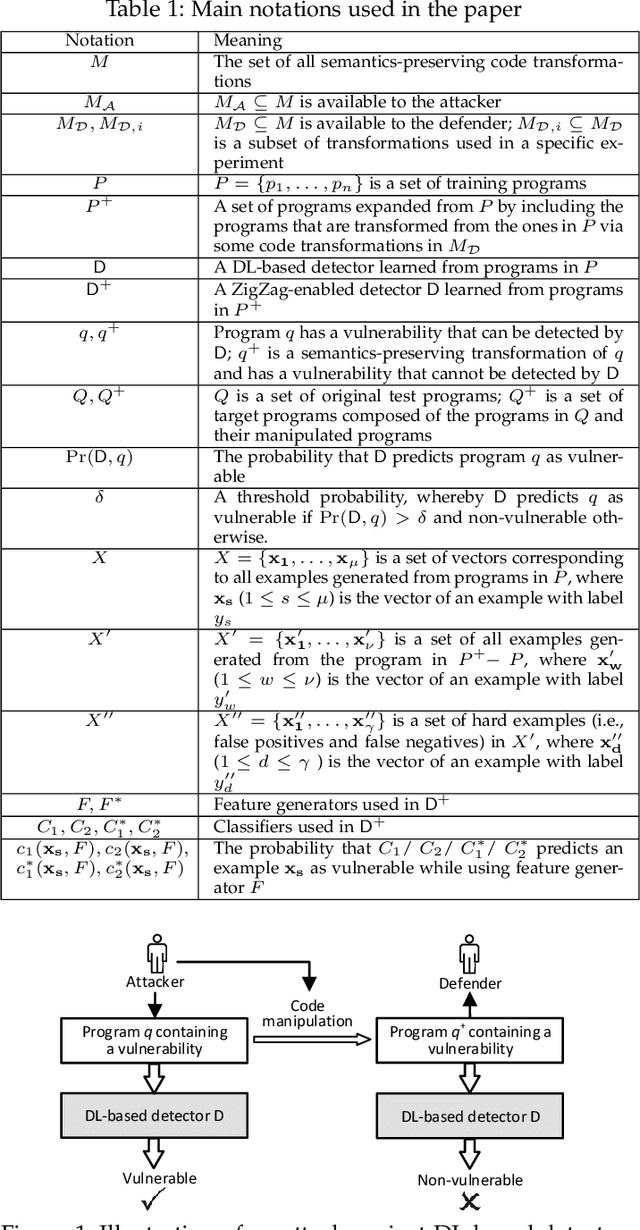

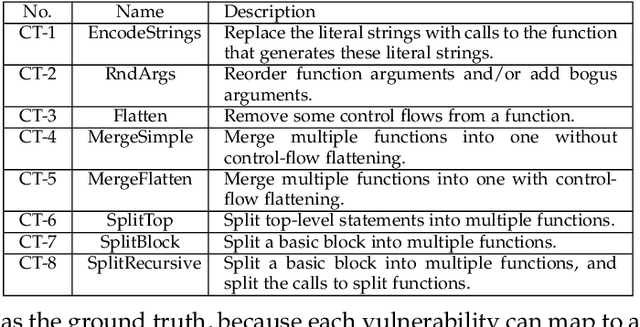

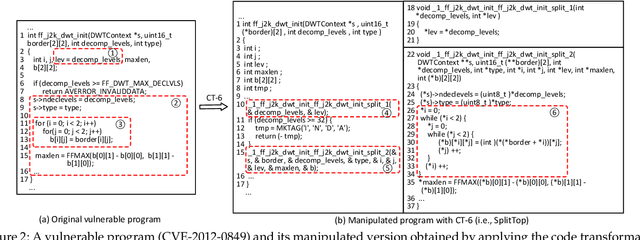

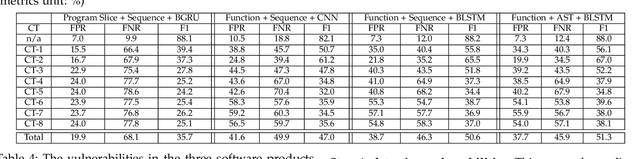

Abstract:Automatically detecting software vulnerabilities in source code is an important problem that has attracted much attention. In particular, deep learning-based vulnerability detectors, or DL-based detectors, are attractive because they do not need human experts to define features or patterns of vulnerabilities. However, such detectors' robustness is unclear. In this paper, we initiate the study in this aspect by demonstrating that DL-based detectors are not robust against simple code transformations, dubbed attacks in this paper, as these transformations may be leveraged for malicious purposes. As a first step towards making DL-based detectors robust against such attacks, we propose an innovative framework, dubbed ZigZag, which is centered at (i) decoupling feature learning and classifier learning and (ii) using a ZigZag-style strategy to iteratively refine them until they converge to robust features and robust classifiers. Experimental results show that the ZigZag framework can substantially improve the robustness of DL-based detectors.

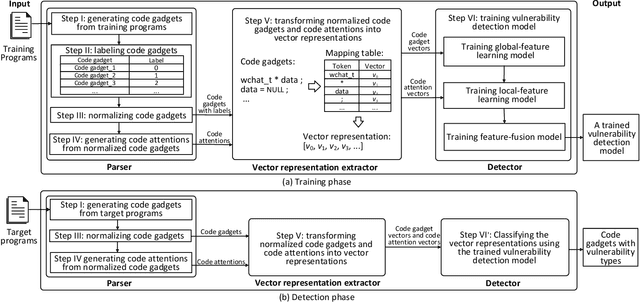

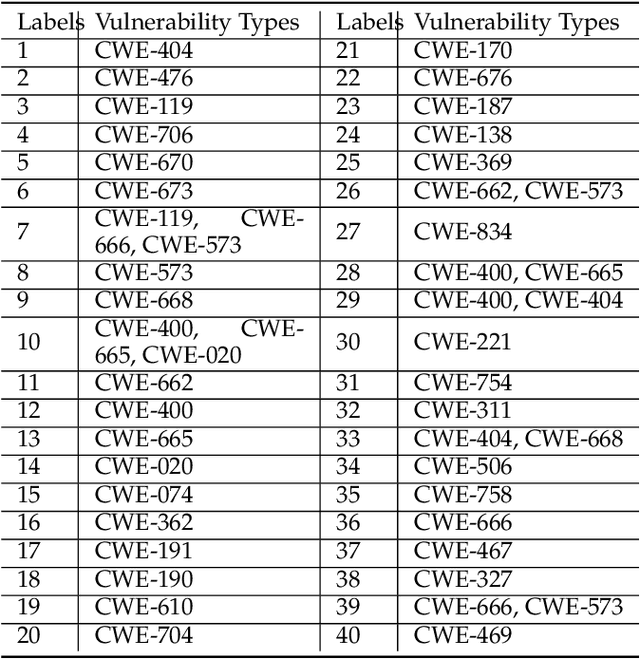

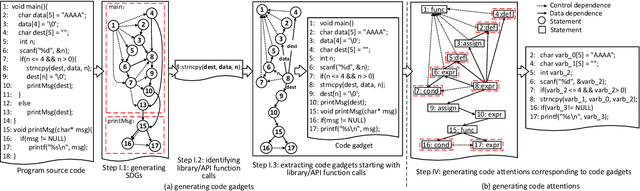

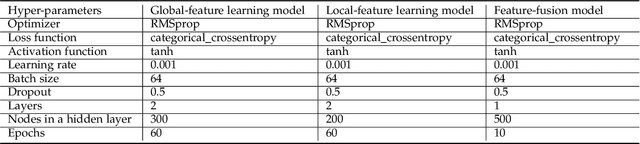

$μ$VulDeePecker: A Deep Learning-Based System for Multiclass Vulnerability Detection

Jan 08, 2020

Abstract:Fine-grained software vulnerability detection is an important and challenging problem. Ideally, a detection system (or detector) not only should be able to detect whether or not a program contains vulnerabilities, but also should be able to pinpoint the type of a vulnerability in question. Existing vulnerability detection methods based on deep learning can detect the presence of vulnerabilities (i.e., addressing the binary classification or detection problem), but cannot pinpoint types of vulnerabilities (i.e., incapable of addressing multiclass classification). In this paper, we propose the first deep learning-based system for multiclass vulnerability detection, dubbed $\mu$VulDeePecker. The key insight underlying $\mu$VulDeePecker is the concept of code attention, which can capture information that can help pinpoint types of vulnerabilities, even when the samples are small. For this purpose, we create a dataset from scratch and use it to evaluate the effectiveness of $\mu$VulDeePecker. Experimental results show that $\mu$VulDeePecker is effective for multiclass vulnerability detection and that accommodating control-dependence (other than data-dependence) can lead to higher detection capabilities.

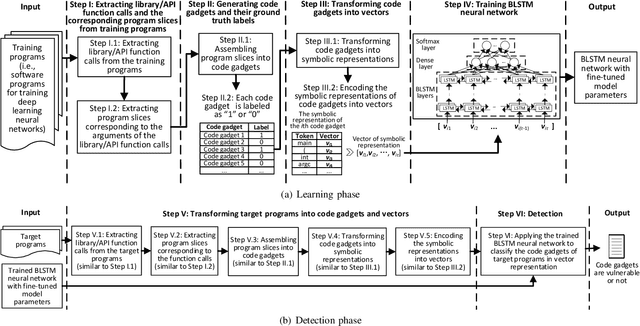

SySeVR: A Framework for Using Deep Learning to Detect Software Vulnerabilities

Sep 21, 2018

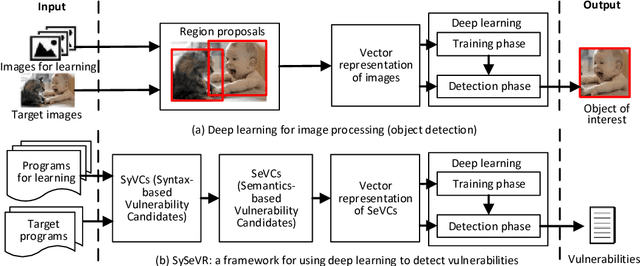

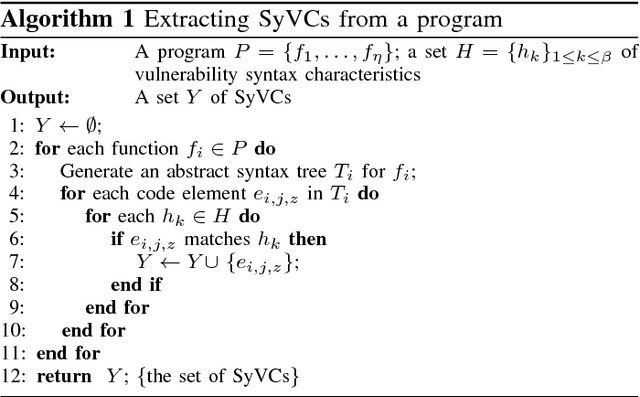

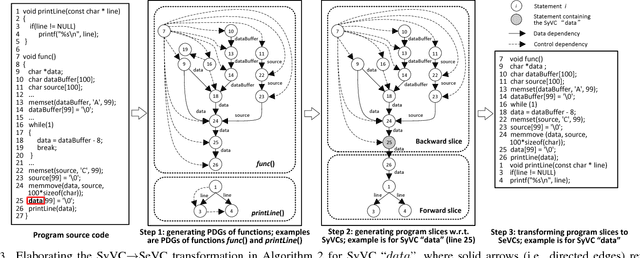

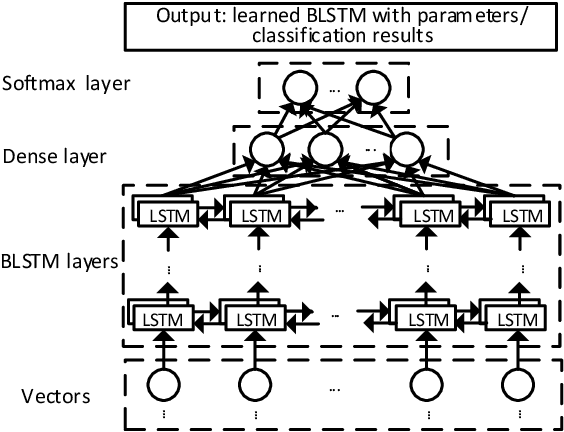

Abstract:The detection of software vulnerabilities (or vulnerabilities for short) is an important problem that has yet to be tackled, as manifested by many vulnerabilities reported on a daily basis. This calls for machine learning methods to automate vulnerability detection. Deep learning is attractive for this purpose because it does not require human experts to manually define features. Despite the tremendous success of deep learning in other domains, its applicability to vulnerability detection is not systematically understood. In order to fill this void, we propose the first systematic framework for using deep learning to detect vulnerabilities. The framework, dubbed Syntax-based, Semantics-based, and Vector Representations (SySeVR), focuses on obtaining program representations that can accommodate syntax and semantic information pertinent to vulnerabilities. Our experiments with 4 software products demonstrate the usefulness of the framework: we detect 15 vulnerabilities that are not reported in the National Vulnerability Database. Among these 15 vulnerabilities, 7 are unknown and have been reported to the vendors, and the other 8 have been "silently" patched by the vendors when releasing newer versions of the products.

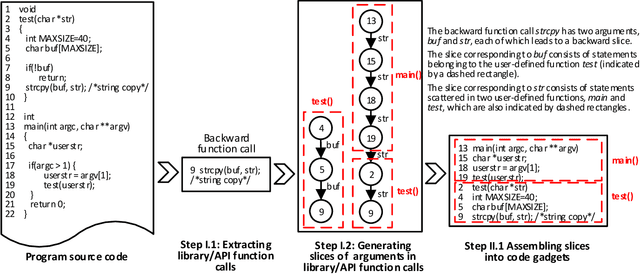

VulDeePecker: A Deep Learning-Based System for Vulnerability Detection

Jan 05, 2018

Abstract:The automatic detection of software vulnerabilities is an important research problem. However, existing solutions to this problem rely on human experts to define features and often miss many vulnerabilities (i.e., incurring high false negative rate). In this paper, we initiate the study of using deep learning-based vulnerability detection to relieve human experts from the tedious and subjective task of manually defining features. Since deep learning is motivated to deal with problems that are very different from the problem of vulnerability detection, we need some guiding principles for applying deep learning to vulnerability detection. In particular, we need to find representations of software programs that are suitable for deep learning. For this purpose, we propose using code gadgets to represent programs and then transform them into vectors, where a code gadget is a number of (not necessarily consecutive) lines of code that are semantically related to each other. This leads to the design and implementation of a deep learning-based vulnerability detection system, called Vulnerability Deep Pecker (VulDeePecker). In order to evaluate VulDeePecker, we present the first vulnerability dataset for deep learning approaches. Experimental results show that VulDeePecker can achieve much fewer false negatives (with reasonable false positives) than other approaches. We further apply VulDeePecker to 3 software products (namely Xen, Seamonkey, and Libav) and detect 4 vulnerabilities, which are not reported in the National Vulnerability Database but were "silently" patched by the vendors when releasing later versions of these products; in contrast, these vulnerabilities are almost entirely missed by the other vulnerability detection systems we experimented with.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge