Daniel Schulz

Second Competition on Presentation Attack Detection on ID Card

Jul 27, 2025

Abstract: This work summarises and reports the results of the second Presentation Attack Detection (PAD) competition on ID cards. This edition introduces three new elements compared with the previous one: (1) an automatic evaluation platform for benchmarking; (2) two tracks, which evaluate algorithms and datasets, respectively; and (3) a new ID card dataset shared with Track 1 teams as the baseline dataset for training and optimisation. Hochschule Darmstadt, Fraunhofer-IGD, and the company Facephi jointly organised this challenge. Twenty teams registered, and 74 submitted models were evaluated. In Track 1, the "Dragons" team took first place with an Average Ranking (AV-Rank) of 40.48% and an Equal Error Rate (EER) of 11.44%. In the more challenging Track 2, the "Incode" team achieved the best results, with an AV-Rank of 14.76% and an EER of 6.36%, improving on the first edition's results of 74.30% AV-Rank and 21.87% EER. These results suggest that PAD on ID cards is improving, but it remains a challenging problem related to the number of available images, especially bona fide images.
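
The EER quoted above is the operating point at which the attack presentation classification error rate (APCER) equals the bona fide presentation classification error rate (BPCER). The competition's exact scoring protocol is not described here, so the following is only a minimal sketch under stated assumptions: higher scores mean "more likely an attack", every observed score is tried as a threshold, and the function names are hypothetical.

```python
def apcer_bpcer(attack_scores, bonafide_scores, threshold):
    """Error rates at one threshold; a sample is flagged as an attack if score >= threshold."""
    # APCER: attack presentations wrongly accepted as bona fide.
    apcer = sum(s < threshold for s in attack_scores) / len(attack_scores)
    # BPCER: bona fide presentations wrongly rejected as attacks.
    bpcer = sum(s >= threshold for s in bonafide_scores) / len(bonafide_scores)
    return apcer, bpcer


def equal_error_rate(attack_scores, bonafide_scores):
    """Approximate the EER by sweeping every observed score as a candidate threshold."""
    candidates = sorted(set(attack_scores) | set(bonafide_scores))
    candidates.append(float("inf"))  # a threshold that flags nothing as an attack
    best = None
    for t in candidates:
        apcer, bpcer = apcer_bpcer(attack_scores, bonafide_scores, t)
        gap = abs(apcer - bpcer)
        # Keep the threshold where APCER and BPCER are closest; report their mean.
        if best is None or gap < best[0]:
            best = (gap, (apcer + bpcer) / 2, t)
    return best[1], best[2]
```

For example, `equal_error_rate([0.9, 0.8, 0.7, 0.3], [0.1, 0.2, 0.4, 0.6])` returns `(0.25, 0.6)`: at threshold 0.6, one of four attacks and one of four bona fide samples are misclassified. Real evaluations usually interpolate between thresholds rather than picking the closest observed score.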

First Competition on Presentation Attack Detection on ID Card

Aug 31, 2024

Abstract: This paper summarises the Competition on Presentation Attack Detection on ID Cards (PAD-IDCard) held at the 2024 International Joint Conference on Biometrics (IJCB 2024). The competition attracted ten registered teams from both academia and industry. In the end, the participating teams made five valid submissions comprising eight models, which the organisers evaluated. The competition provided an independent assessment of current state-of-the-art algorithms. To date, no independent cross-dataset evaluation has been available for ID cards; this work therefore establishes the state of the art in this area. To this end, a sequestered test set and baseline algorithms were used to evaluate and compare all proposals. The sequestered test dataset contains ID cards from four different countries. In summary, a team that chose to remain "Anonymous" achieved the best average ranking of 74.80%, followed very closely by the "IDVC" team with 77.65%.

SynFacePAD 2023: Competition on Face Presentation Attack Detection Based on Privacy-aware Synthetic Training Data

Nov 09, 2023

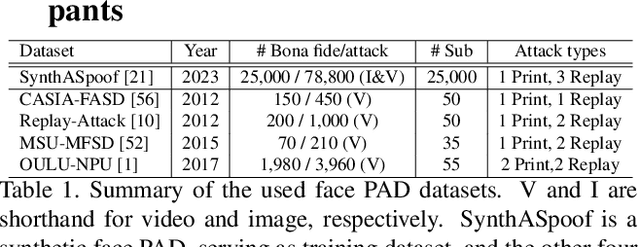

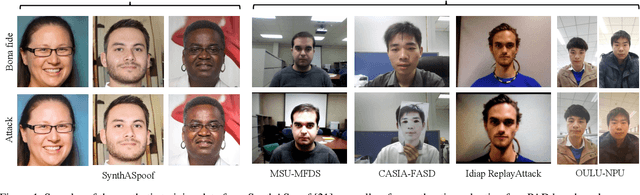

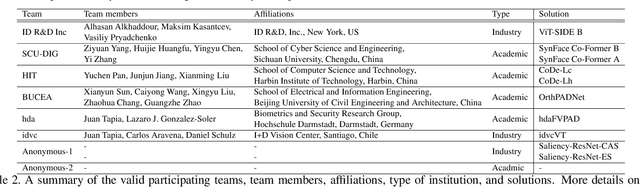

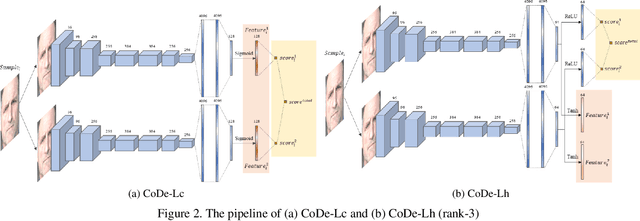

Abstract: This paper presents a summary of the Competition on Face Presentation Attack Detection Based on Privacy-aware Synthetic Training Data (SynFacePAD 2023), held at the 2023 International Joint Conference on Biometrics (IJCB 2023). The competition attracted 8 participating teams with valid submissions from academia and industry. It aimed to attract solutions that detect face presentation attacks while relying on synthetic training data, a choice motivated by the privacy, legal, and ethical concerns associated with personal data. To achieve this, participants were limited to training on synthetic data provided by the organizers. The submitted solutions introduced novel approaches that outperformed the considered baseline on the investigated benchmarks.

Iris Liveness Detection Competition (LivDet-Iris) -- The 2023 Edition

Oct 06, 2023

Abstract: This paper describes the results of the 2023 edition of the "LivDet" series of iris presentation attack detection (PAD) competitions. New elements in this fifth competition include (1) GAN-generated iris images as a category of presentation attack instruments (PAI), and (2) an evaluation of human accuracy at detecting PAI as a reference benchmark. Clarkson University and the University of Notre Dame contributed image datasets for the competition, composed of samples representing seven different PAI categories, as well as baseline PAD algorithms. Fraunhofer IGD, Beijing University of Civil Engineering and Architecture, and Hochschule Darmstadt contributed results for a total of eight PAD algorithms. Accuracy results are analyzed per PAI type and compared with human accuracy. Overall, the Fraunhofer IGD algorithm, which uses an attention-based pixel-wise binary supervision network, showed the best weighted accuracy (average classification error rate of 37.31%), while the algorithm from Beijing University of Civil Engineering and Architecture won when each PAI was given equal weight (average classification error rate of 22.15%). These results suggest that iris PAD is still a challenging problem.
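
The two winners above differ only in how attack errors are aggregated. The abstract does not give the formula, so as an illustrative assumption the sketch below takes the average classification error rate as the mean of APCER and BPCER, and contrasts pooling attack errors over all samples (larger PAI categories dominate) with averaging APCER per PAI category (each category counts equally). The function names and the example counts are hypothetical.

```python
def acer_pooled(errors_by_pai, counts_by_pai, bpcer):
    """ACER with APCER pooled over all attack samples (big PAI categories weigh more)."""
    apcer = sum(errors_by_pai.values()) / sum(counts_by_pai.values())
    return (apcer + bpcer) / 2


def acer_equal_pai(errors_by_pai, counts_by_pai, bpcer):
    """ACER with APCER averaged over PAI categories, each category weighted equally."""
    per_pai = [errors_by_pai[p] / counts_by_pai[p] for p in counts_by_pai]
    apcer = sum(per_pai) / len(per_pai)
    return (apcer + bpcer) / 2


# Hypothetical counts: misclassified attacks and total attacks per PAI category.
errors = {"printout": 10, "gan": 40}
counts = {"printout": 100, "gan": 50}
print(acer_pooled(errors, counts, bpcer=0.1))     # pooled APCER = 50 / 150
print(acer_equal_pai(errors, counts, bpcer=0.1))  # per-PAI APCER = (0.1 + 0.8) / 2
```

With these made-up numbers the equal-weight figure (0.275) is worse than the pooled one (about 0.217), because the small GAN category has a high error rate; this is how the same set of algorithms can be ranked differently under the two schemes.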

Single Morphing Attack Detection using Siamese Network and Few-shot Learning

Jun 22, 2022

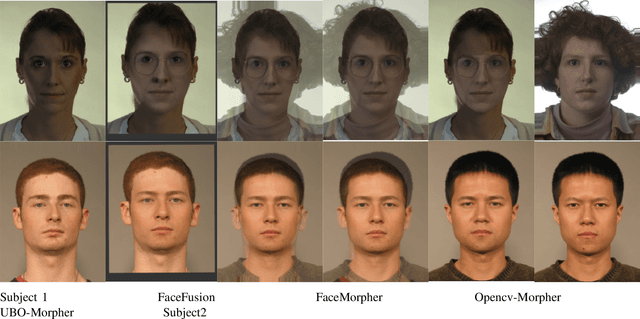

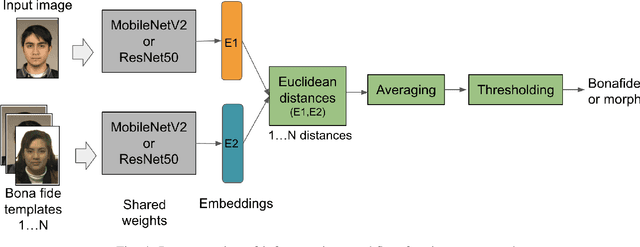

Abstract: Face morphing attacks present a concrete and severe threat to face verification systems, and detecting them is challenging. Reliable detection mechanisms that hold up under a robust cross-database protocol and unknown morphing tools remain a research challenge. This paper proposes a framework following the few-shot learning approach, which shares image information through a siamese network trained with a triplet semi-hard loss to tackle morphing attack detection and boost the clustering-based classification process. The network compares a bona fide or potentially morphed image with triplets of morphed and bona fide face images. Our results show that the network clusters the data points and assigns them to classes, yielding a lower equal error rate in a cross-database scenario in which only a small number of images from the unknown database is shared. Few-shot learning helps to boost the learning process. In cross-dataset experiments trained on FRGCv2 and tested on the FERET and AMSL open-access databases, the BPCER10 was reduced from 43% to 4.91% with ResNet50 and to 5.50% with MobileNetV2.
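
The triplet semi-hard loss named above can be illustrated on fixed embeddings. This is a minimal pure-Python sketch, not the paper's implementation (which trains ResNet50 and MobileNetV2 backbones): it uses Euclidean distance, treats every same-label pair as an anchor-positive pair, and for each pair picks the closest negative that is still farther away than the positive, which is a semi-hard negative whenever one lies within the margin. If no negative is farther, it falls back to the farthest negative; that fallback, the margin value, and the function names are assumptions of this sketch.

```python
import math
from itertools import permutations


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def triplet_semi_hard_loss(embeddings, labels, margin=0.5):
    """Mean triplet loss over anchor-positive pairs with semi-hard negative selection."""
    losses = []
    n = len(embeddings)
    for i, j in permutations(range(n), 2):
        if labels[i] != labels[j]:
            continue  # (i, j) must share a label, e.g. both bona fide or both morphed
        d_ap = euclidean(embeddings[i], embeddings[j])
        neg_dists = [euclidean(embeddings[i], embeddings[k])
                     for k in range(n) if labels[k] != labels[i]]
        if not neg_dists:
            continue
        # Closest negative that is still farther than the positive.
        farther = [d for d in neg_dists if d > d_ap]
        d_an = min(farther) if farther else max(neg_dists)  # assumed fallback
        losses.append(max(d_ap - d_an + margin, 0.0))
    return sum(losses) / len(losses) if losses else 0.0
```

With two bona fide embeddings at 0.0 and 1.0 and one morph at 0.5 (`labels=[0, 0, 1]`, margin 0.5), no negative is farther than the positive, so each pair contributes a loss of 1.0; well-separated clusters such as `[[0.0], [0.2], [1.0], [1.1]]` with labels `[0, 0, 1, 1]` give a loss of 0.0.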

Towards an Efficient Semantic Segmentation Method of ID Cards for Verification Systems

Nov 24, 2021

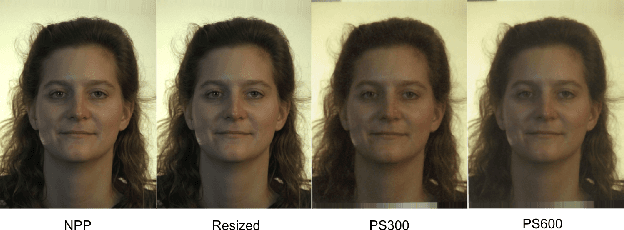

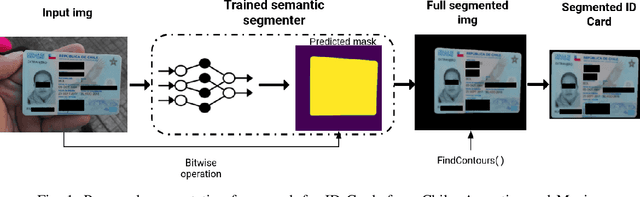

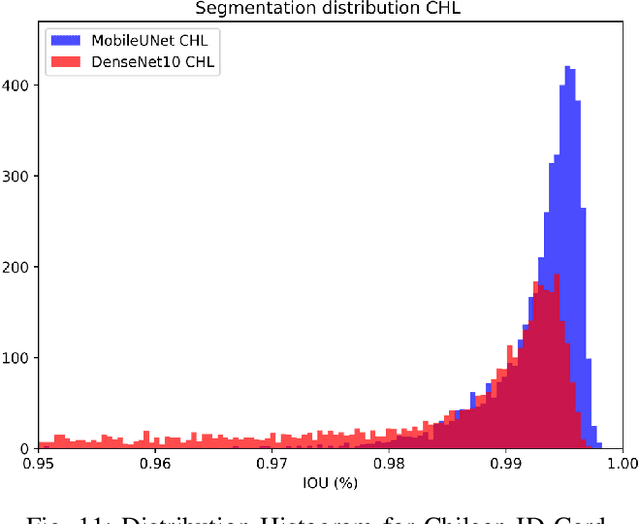

Abstract: Removing the background in ID card images is a real challenge for remote verification systems, because many re-digitalised images present cluttered backgrounds, poor illumination, distortion, and occlusions. The background in ID card images confuses classifiers and text extraction. Due to the lack of images available for research, this field remains an open problem in computer vision. This work proposes a method for removing the background using semantic segmentation of ID cards. The work uses images captured in the wild during real operation: a manually labelled dataset of 45,007 images covering five types of ID cards from three countries (Chile, Argentina, and Mexico), including typical presentation attack scenarios. This method can help to improve the subsequent stages of a standard identity verification or document tampering detection system. Two deep learning approaches were explored, based on MobileUNet and DenseNet10. The best results were obtained with MobileUNet, which has 6.5 million parameters. For Chilean ID cards, the mean Intersection over Union (IoU) was 0.9926 on a private test set of 4,988 images. On the fused multi-country dataset of ID card images from Chile, Argentina, and Mexico, the best IoU reached 0.9911. The proposed methods are lightweight enough for real-time operation on mobile devices.
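
The IoU figures above compare a predicted card mask with the manually labelled one. A minimal sketch for binary masks, assuming masks are nested lists of 0/1 values with 1 marking the card and that the mean is taken over images (the abstract does not say whether it averages over images, classes, or both); the function names are hypothetical.

```python
def mask_iou(pred, target):
    """IoU of the foreground (value 1) between two equally sized binary masks."""
    inter = union = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            inter += int(bool(p) and bool(t))  # pixel is card in both masks
            union += int(bool(p) or bool(t))   # pixel is card in either mask
    # Both masks empty: treat as a perfect match.
    return inter / union if union else 1.0


def mean_iou(pairs):
    """Average the per-image IoU over (prediction, ground truth) pairs."""
    scores = [mask_iou(p, t) for p, t in pairs]
    return sum(scores) / len(scores)


pred = [[1, 1, 0],
        [0, 1, 0]]
truth = [[1, 0, 0],
         [0, 1, 1]]
print(mask_iou(pred, truth))                   # 2 shared pixels / 4 in the union
print(mean_iou([(pred, truth), (truth, truth)]))
```

Here `mask_iou(pred, truth)` is 0.5, and averaging it with a perfect prediction gives a mean IoU of 0.75.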
