Chunhe Xia

Dexterous Hand Manipulation via Efficient Imitation-Bootstrapped Online Reinforcement Learning

Mar 06, 2025

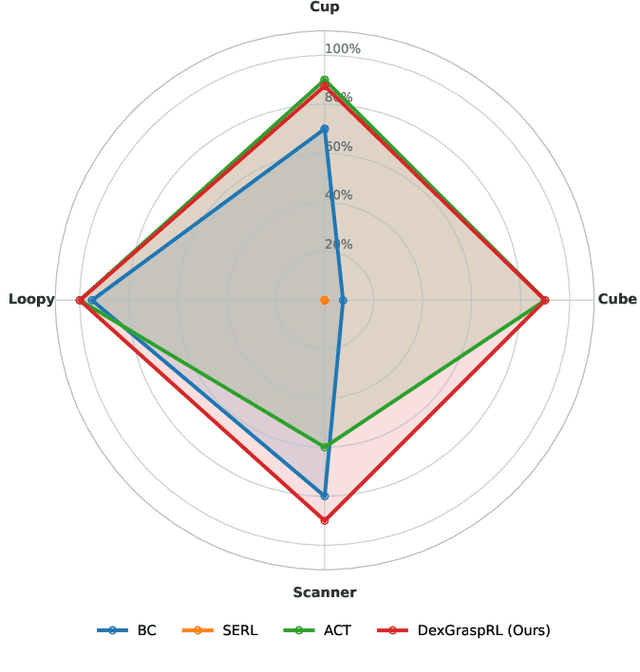

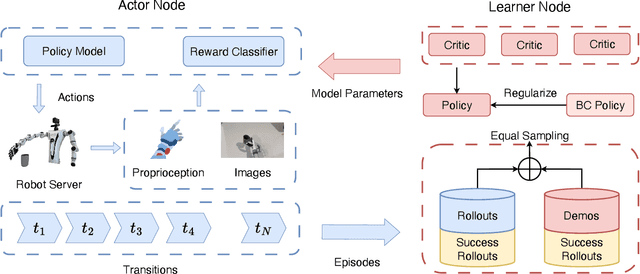

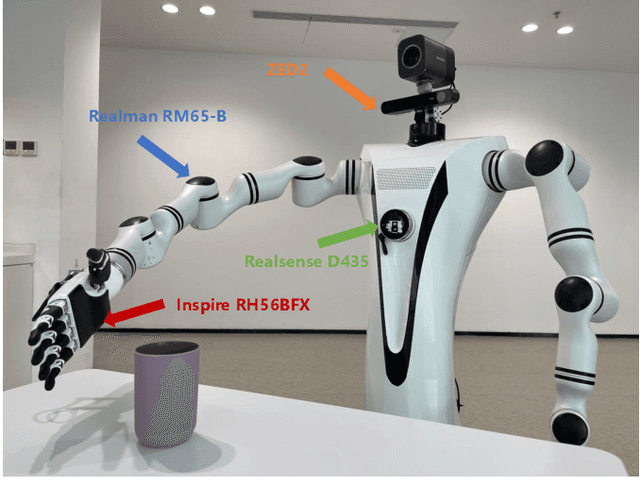

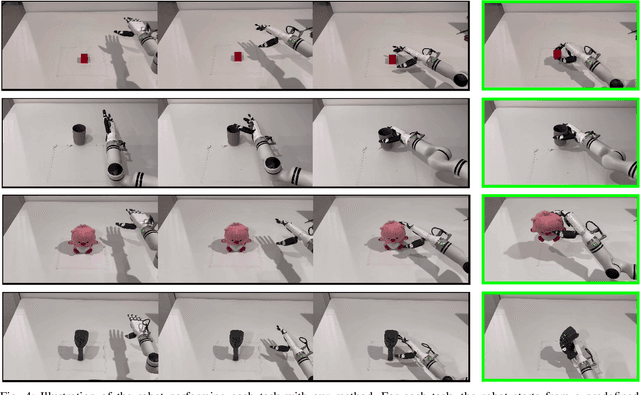

Abstract: Dexterous hand manipulation in real-world scenarios presents considerable challenges due to its demands for both dexterity and precision. While imitation learning approaches have thoroughly examined these challenges, they still require a significant number of expert demonstrations and have a limited performance upper bound. In this paper, we propose a novel and efficient Imitation-Bootstrapped Online Reinforcement Learning (IBORL) method tailored for robotic dexterous hand manipulation in real-world environments. Specifically, we pretrain the policy using a limited set of expert demonstrations and subsequently finetune it through direct reinforcement learning in the real world. To address the catastrophic forgetting that arises from the distribution shift between expert demonstrations and real-world environments, we design a regularization term that balances the exploration of novel behaviors with the preservation of the pretrained policy. Our real-world experiments demonstrate that our method significantly outperforms existing approaches, achieving an almost 100% success rate and a 23% improvement in cycle time. Furthermore, by finetuning with online reinforcement learning, our method surpasses the expert demonstrations and uncovers superior policies. Our code and empirical results are available at https://hggforget.github.io/iborl.github.io/.
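The abstract does not give the form of the regularization term. As a minimal sketch, assuming a KL penalty that pulls the finetuned policy toward the frozen pretrained (imitation) policy, which is a common choice and not necessarily the one IBORL uses, a regularized actor loss for a discrete action space could look like this. The function names and the weight `beta` are hypothetical.

```python
import math
from typing import Sequence


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) for two discrete action distributions over the same actions.

    Assumes q[i] > 0 wherever p[i] > 0.
    """
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def regularized_actor_loss(
    policy_probs: Sequence[float],
    pretrained_probs: Sequence[float],
    action: int,
    advantage: float,
    beta: float,
) -> float:
    """Hypothetical finetuning loss (an assumption, not IBORL's published term).

    The policy-gradient term favors actions with positive advantage
    (exploring new behavior); the KL term, weighted by beta, penalizes
    drifting away from the frozen pretrained policy.
    """
    pg_loss = -advantage * math.log(policy_probs[action])
    return pg_loss + beta * kl_divergence(policy_probs, pretrained_probs)
```

Setting `beta = 0` recovers unregularized finetuning, where the pretrained behavior can be forgotten; a larger `beta` keeps the finetuned policy closer to what was learned from the demonstrations.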

PPBFL: A Privacy Protected Blockchain-based Federated Learning Model

Jan 08, 2024

Abstract: With the rapid development of machine learning and a growing concern for data privacy, federated learning has become a focal point of attention. However, attacks on model parameters and a lack of incentive mechanisms hinder the effectiveness of federated learning. Therefore, we propose a Privacy Protected Blockchain-based Federated Learning Model (PPBFL) to enhance the security of federated learning and encourage active participation of nodes in model training. Blockchain technology ensures the integrity of model parameters stored in the InterPlanetary File System (IPFS), providing protection against tampering. Within the blockchain, we introduce a Proof of Training Work (PoTW) consensus algorithm tailored for federated learning, aiming to incentivize training nodes. This algorithm rewards nodes with greater computational power, promoting increased participation and effort in the federated learning process. A novel adaptive differential privacy algorithm is applied to both local and global models. This safeguards the privacy of local data at training clients, preventing malicious nodes from launching inference attacks. Additionally, it enhances the security of the global model, preventing the potential security degradation that results from combining numerous local models; the possibility of this degradation follows from the composition theorem. By introducing reverse noise in the global model, a zero-bias estimate of the differential privacy noise between local and global models is achieved. Furthermore, we propose a new mixed-transaction mechanism based on ring signatures to better protect the identity privacy of local training clients. Security analysis and experimental results demonstrate that PPBFL, compared to baseline methods, not only exhibits superior model performance but also achieves higher security.
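The abstract states that PoTW rewards nodes with greater computational power but does not give the payout rule. A minimal sketch, assuming rewards are simply proportional to each node's reported training work (the function name, the work measure, and the block-reward parameter are all hypothetical):

```python
from typing import Dict


def allocate_rewards(training_work: Dict[str, float], block_reward: float) -> Dict[str, float]:
    """Split a block reward in proportion to each node's measured training work.

    Hypothetical rule for illustration only: how PoTW actually measures work
    and pays rewards is not specified in the abstract.
    """
    total = sum(training_work.values())
    if total <= 0:
        raise ValueError("total training work must be positive")
    # Each node receives its share of the total work, scaled to the reward.
    return {node: block_reward * work / total for node, work in training_work.items()}
```

For example, `allocate_rewards({"a": 3.0, "b": 1.0}, 100.0)` gives node `a` 75.0 and node `b` 25.0, so contributing more work earns proportionally more.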

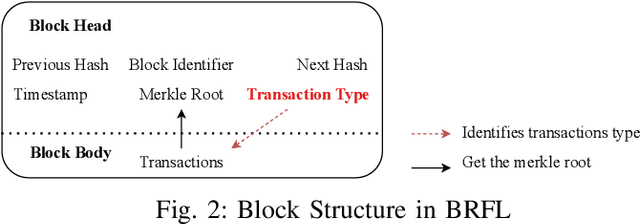

FBChain: A Blockchain-based Federated Learning Model with Efficiency and Secure Communication

Nov 21, 2023

Abstract: Privacy and security in the parameter transmission process of federated learning are currently among the most prominent concerns. Unprotected communication methods cause two thorny problems: "parameter-leakage" and "inefficient-communication". This article proposes the Blockchain-based Federated Learning (FBChain) model for federated learning parameter communication to overcome these two problems. First, we use the immutability of blockchain to store the global model and the hash values of local model parameters, preventing tampering during communication; we protect data privacy by encrypting parameters and verify data consistency by comparing the hash values of local parameters, thus addressing the "parameter-leakage" problem. Second, the Proof of Weighted Link Speed (PoWLS) consensus algorithm selects nodes with higher weighted link speeds to aggregate the global model and package blocks, thereby solving the "inefficient-communication" problem. Experimental results demonstrate the effectiveness of the proposed FBChain model and its ability to improve model communication efficiency in federated learning.
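The hash-based consistency check amounts to hashing the local parameters, recording the digest, and recomputing it on receipt. A minimal sketch, assuming parameters are flattened to a list of floats and hashed with SHA-256 (the abstract names neither the serialization nor the hash function, and the function names are hypothetical); encryption and the on-chain storage itself are omitted.

```python
import hashlib
import struct
from typing import Sequence


def parameter_digest(params: Sequence[float]) -> str:
    """SHA-256 hex digest of a flat parameter vector.

    Parameters are packed as little-endian float64 so identical values
    always produce identical bytes, and therefore identical digests.
    """
    payload = struct.pack(f"<{len(params)}d", *params)
    return hashlib.sha256(payload).hexdigest()


def verify_parameters(received: Sequence[float], recorded_digest: str) -> bool:
    """Check received parameters against the digest recorded on-chain."""
    return parameter_digest(received) == recorded_digest
```

Any change to a single parameter, however small, changes the digest, so tampering in transit is detected. The digest alone does not hide the parameter values, which is why the abstract pairs it with encryption.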

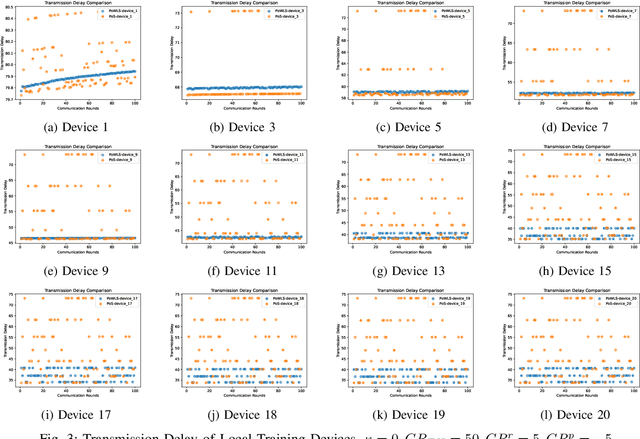

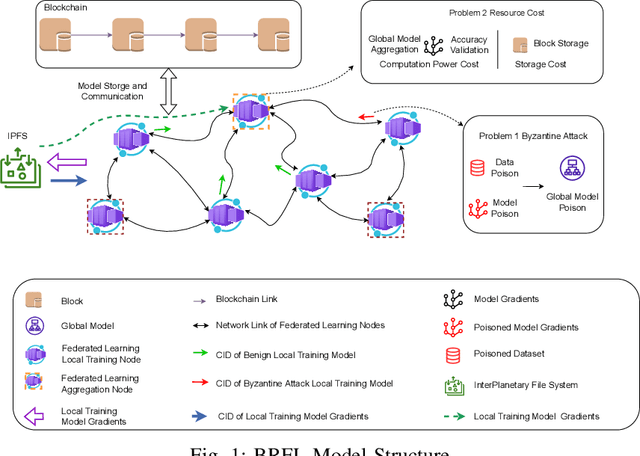

BRFL: A Blockchain-based Byzantine-Robust Federated Learning Model

Oct 20, 2023

Abstract: With the increasing importance of machine learning, the privacy and security of training data have become critical. Federated learning, which stores data in distributed nodes and shares only model parameters, has gained significant attention for addressing this concern. However, federated learning faces the Byzantine Attack Problem, in which malicious local models can compromise the global model's performance during aggregation. This article proposes the Blockchain-based Byzantine-Robust Federated Learning (BRFL) model, which combines federated learning with blockchain technology. This integration enables traceability of malicious models and provides incentives for local training clients. Our approach selects the aggregation node based on Pearson's correlation coefficient, performs spectral clustering, and calculates the average gradient within each cluster, validating its accuracy on the local datasets of the aggregation nodes. Experimental results on public datasets demonstrate the superior Byzantine robustness of our secure aggregation algorithm compared to other baseline Byzantine-robust aggregation methods, and show the effectiveness of our proposed model in addressing the resource consumption problem.
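The abstract does not say exactly how the correlation coefficient is used to pick the aggregation node. A minimal sketch, assuming the client whose gradient has the highest average Pearson correlation with the other clients' gradients is chosen, and that cluster labels come from a separate clustering step (spectral clustering in BRFL, not implemented here). All function names are hypothetical.

```python
import math
from typing import Dict, List, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of two equal-length vectors (each must be non-constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def select_aggregator(grads: List[Sequence[float]]) -> int:
    """Hypothetical rule: index of the client whose gradient correlates most,
    on average, with every other client's gradient (needs at least 2 clients)."""
    def mean_corr(i: int) -> float:
        others = [pearson(grads[i], g) for j, g in enumerate(grads) if j != i]
        return sum(others) / len(others)
    return max(range(len(grads)), key=mean_corr)


def cluster_means(grads: List[Sequence[float]], labels: List[int]) -> Dict[int, List[float]]:
    """Average gradient within each cluster, given precomputed cluster labels."""
    sums: Dict[int, List[float]] = {}
    counts: Dict[int, int] = {}
    for g, c in zip(grads, labels):
        if c not in sums:
            sums[c] = [0.0] * len(g)
            counts[c] = 0
        sums[c] = [s + v for s, v in zip(sums[c], g)]
        counts[c] += 1
    return {c: [s / counts[c] for s in sums[c]] for c in sums}
```

Under this rule, a client whose gradient points against the majority (a likely Byzantine node) has a low average correlation and is not chosen to aggregate.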

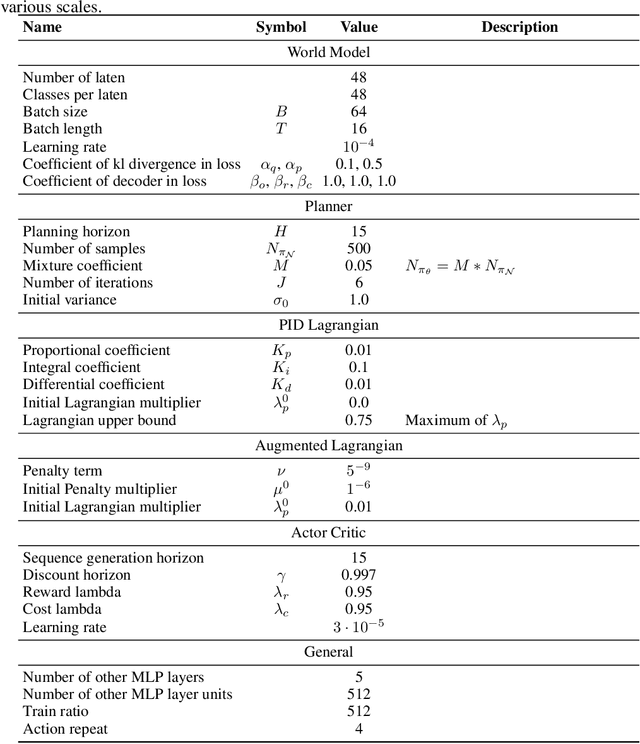

Safe DreamerV3: Safe Reinforcement Learning with World Models

Jul 14, 2023

Abstract: The widespread application of Reinforcement Learning (RL) in real-world situations has yet to come to fruition, largely because RL fails to satisfy the essential safety demands of such systems. Existing safe reinforcement learning (SafeRL) methods, which employ cost functions to enhance safety, fail to achieve zero cost in complex scenarios, including vision-only tasks, even with comprehensive data sampling and training. To address this, we introduce Safe DreamerV3, a novel algorithm that integrates both Lagrangian-based and planning-based methods within a world model. Our method represents a significant advancement in SafeRL as the first algorithm to achieve nearly zero cost in both low-dimensional and vision-only tasks within the Safety-Gymnasium benchmark. Our project website is available at https://sites.google.com/view/safedreamerv3.
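The abstract names a Lagrangian-based component but gives no update rule. As a sketch of that half only, assuming the standard projected dual-ascent update on a single Lagrange multiplier (Safe DreamerV3 may use a different variant, and the planning component is not shown); the function and parameter names are hypothetical.

```python
def lagrangian_objective(reward_return: float, cost_return: float, multiplier: float) -> float:
    """Quantity the policy maximizes: reward minus the multiplier-weighted cost."""
    return reward_return - multiplier * cost_return


def update_multiplier(multiplier: float, cost_return: float, cost_limit: float, lr: float) -> float:
    """One projected dual-ascent step on the Lagrange multiplier.

    The multiplier grows while the observed cost exceeds the limit and
    shrinks, but never below zero, once the constraint is satisfied.
    """
    return max(0.0, multiplier + lr * (cost_return - cost_limit))
```

With `cost_limit = 0.0`, matching the zero-cost target, any positive observed cost keeps raising the multiplier and therefore the penalty the policy pays for incurring cost.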