Che-Kai Liu

REASON: Accelerating Probabilistic Logical Reasoning for Scalable Neuro-Symbolic Intelligence

Jan 28, 2026Abstract:Neuro-symbolic AI systems integrate neural perception with symbolic reasoning to enable data-efficient, interpretable, and robust intelligence beyond purely neural models. Although this compositional paradigm has shown superior performance in domains such as reasoning, planning, and verification, its deployment remains challenging due to severe inefficiencies in symbolic and probabilistic inference. Through systematic analysis of representative neuro-symbolic workloads, we identify probabilistic logical reasoning as the inefficiency bottleneck, characterized by irregular control flow, low arithmetic intensity, uncoalesced memory accesses, and poor hardware utilization on CPUs and GPUs. This paper presents REASON, an integrated acceleration framework for probabilistic logical reasoning in neuro-symbolic AI. REASON introduces a unified directed acyclic graph representation that captures common structure across symbolic and probabilistic models, coupled with adaptive pruning and regularization. At the architecture level, REASON features a reconfigurable, tree-based processing fabric optimized for irregular traversal, symbolic deduction, and probabilistic aggregation. At the system level, REASON is tightly integrated with GPU streaming multiprocessors through a programmable interface and multi-level pipeline that efficiently orchestrates compositional execution. Evaluated across six neuro-symbolic workloads, REASON achieves 12-50x speedup and 310-681x energy efficiency over desktop and edge GPUs under TSMC 28 nm node. REASON enables real-time probabilistic logical reasoning, completing end-to-end tasks in 0.8 s with 6 mm2 area and 2.12 W power, demonstrating that targeted acceleration of probabilistic logical reasoning is critical for practical and scalable neuro-symbolic AI and positioning REASON as a foundational system architecture for next-generation cognitive intelligence.

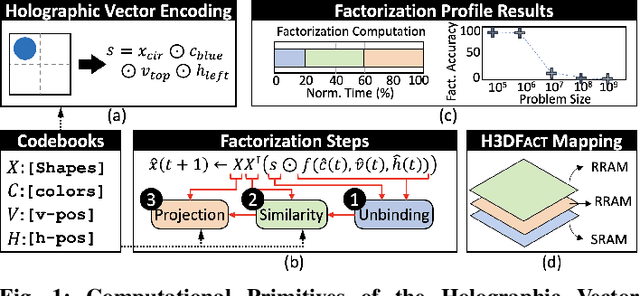

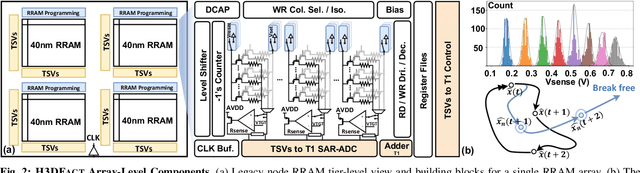

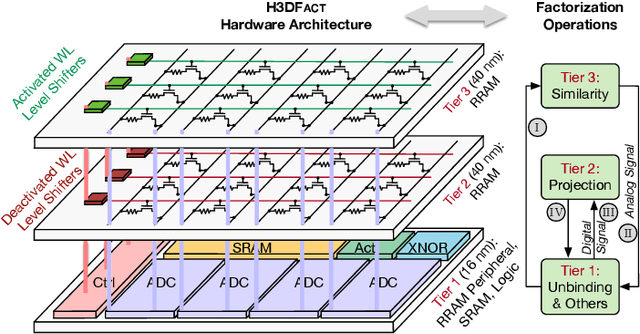

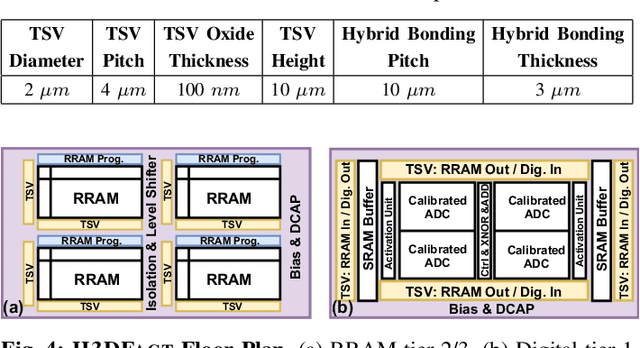

H3DFact: Heterogeneous 3D Integrated CIM for Factorization with Holographic Perceptual Representations

Apr 05, 2024

Abstract:Disentangling attributes of various sensory signals is central to human-like perception and reasoning and a critical task for higher-order cognitive and neuro-symbolic AI systems. An elegant approach to represent this intricate factorization is via high-dimensional holographic vectors drawing on brain-inspired vector symbolic architectures. However, holographic factorization involves iterative computation with high-dimensional matrix-vector multiplications and suffers from non-convergence problems. In this paper, we present H3DFact, a heterogeneous 3D integrated in-memory compute engine capable of efficiently factorizing high-dimensional holographic representations. H3DFact exploits the computation-in-superposition capability of holographic vectors and the intrinsic stochasticity associated with memristive-based 3D compute-in-memory. Evaluated on large-scale factorization and perceptual problems, H3DFact demonstrates superior capability in factorization accuracy and operational capacity by up to five orders of magnitude, with 5.5x compute density, 1.2x energy efficiency improvements, and 5.9x less silicon footprint compared to iso-capacity 2D designs.

Towards Cognitive AI Systems: a Survey and Prospective on Neuro-Symbolic AI

Jan 02, 2024Abstract:The remarkable advancements in artificial intelligence (AI), primarily driven by deep neural networks, have significantly impacted various aspects of our lives. However, the current challenges surrounding unsustainable computational trajectories, limited robustness, and a lack of explainability call for the development of next-generation AI systems. Neuro-symbolic AI (NSAI) emerges as a promising paradigm, fusing neural, symbolic, and probabilistic approaches to enhance interpretability, robustness, and trustworthiness while facilitating learning from much less data. Recent NSAI systems have demonstrated great potential in collaborative human-AI scenarios with reasoning and cognitive capabilities. In this paper, we provide a systematic review of recent progress in NSAI and analyze the performance characteristics and computational operators of NSAI models. Furthermore, we discuss the challenges and potential future directions of NSAI from both system and architectural perspectives.

Towards Efficient Hyperdimensional Computing Using Photonics

Nov 29, 2023

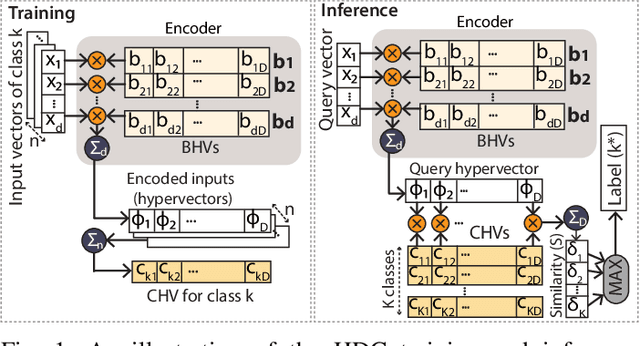

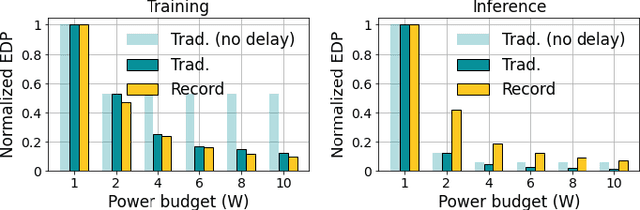

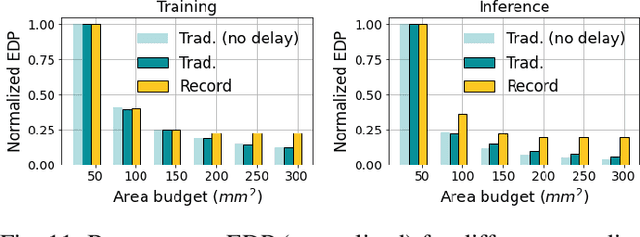

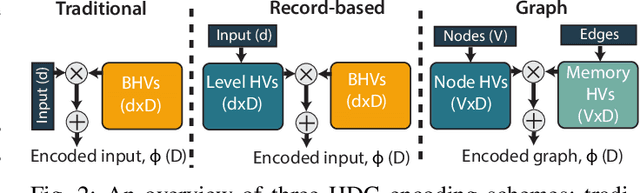

Abstract:Over the past few years, silicon photonics-based computing has emerged as a promising alternative to CMOS-based computing for Deep Neural Networks (DNN). Unfortunately, the non-linear operations and the high-precision requirements of DNNs make it extremely challenging to design efficient silicon photonics-based systems for DNN inference and training. Hyperdimensional Computing (HDC) is an emerging, brain-inspired machine learning technique that enjoys several advantages over existing DNNs, including being lightweight, requiring low-precision operands, and being robust to noise introduced by the nonidealities in the hardware. For HDC, computing in-memory (CiM) approaches have been widely used, as CiM reduces the data transfer cost if the operands can fit into the memory. However, inefficient multi-bit operations, high write latency, and low endurance make CiM ill-suited for HDC. On the other hand, the existing electro-photonic DNN accelerators are inefficient for HDC because they are specifically optimized for matrix multiplication in DNNs and consume a lot of power with high-precision data converters. In this paper, we argue that photonic computing and HDC complement each other better than photonic computing and DNNs, or CiM and HDC. We propose PhotoHDC, the first-ever electro-photonic accelerator for HDC training and inference, supporting the basic, record-based, and graph encoding schemes. Evaluating with popular datasets, we show that our accelerator can achieve two to five orders of magnitude lower EDP than the state-of-the-art electro-photonic DNN accelerators for implementing HDC training and inference. PhotoHDC also achieves four orders of magnitude lower energy-delay product than CiM-based accelerators for both HDC training and inference.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge