Andreas Velten

A comprehensive study of time-of-flight non-line-of-sight imaging

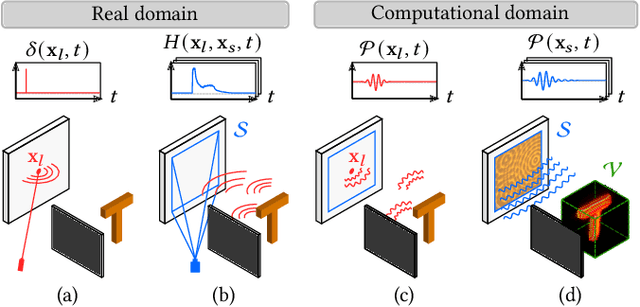

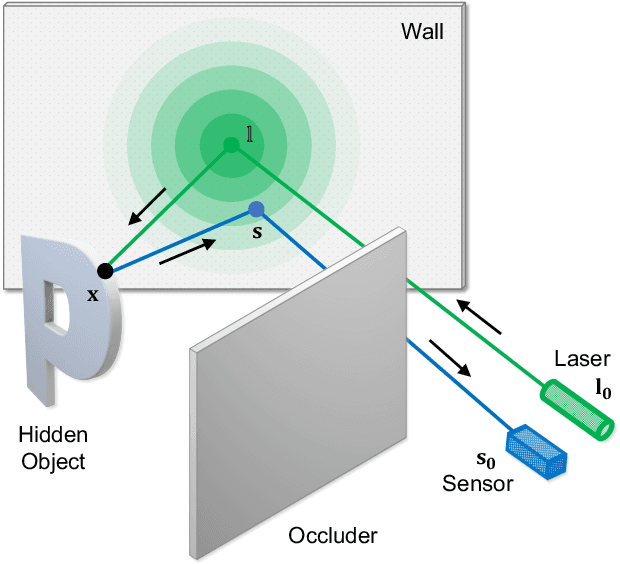

Mar 10, 2026

Abstract: Time-of-Flight non-line-of-sight (ToF NLOS) imaging techniques provide state-of-the-art reconstructions of scenes hidden around corners by inverting the optical path of indirect photons scattered by visible surfaces and measured by picosecond-resolution sensors. The emergence of a wide range of ToF NLOS imaging methods with heterogeneous formulations and hardware implementations obscures the assessment of both their theoretical and experimental aspects. We present a comprehensive study of a representative set of ToF NLOS imaging methods by discussing their similarities and differences under a common formulation and hardware. We first outline the problem statement under a common general forward model for ToF NLOS measurements, and the typical assumptions that yield tractable inverse models. We discuss the relationship of the resulting simplified forward and inverse models to a family of Radon transforms, and how migrating these to the frequency domain relates to recent phasor-based virtual line-of-sight imaging models for NLOS imaging that obey the constraints of conventional lens-based imaging systems. We then evaluate the performance of the selected methods on hidden scenes captured under the same hardware setup and similar photon counts. Our experiments show that existing methods share similar limitations on spatial resolution, visibility, and sensitivity to noise when operating under equal hardware constraints, with particular differences that stem from method-specific parameters. We expect our methodology to become a reference in future research on ToF NLOS imaging for obtaining objective comparisons of existing and new methods.
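A toy example can illustrate the link between the simplified forward model and Radon transforms. The sketch below does not reproduce any of the surveyed methods. It assumes:

- a 2D ("flatland") confocal scan along a relay line at z = 0;
- normalized units with c = 1;
- no radiometric falloff;
- nearest-bin time quantization.

Under these assumptions, each transient integrates hidden albedo along a circle, which is a discrete spherical Radon transform. Plain backprojection is the adjoint of that operator. All function names and grid values are hypothetical.

```python
import numpy as np

C = 1.0  # speed of light in normalized units (assumption)


def confocal_bins(relay_x, px, pz, dt):
    """Time-bin index of the round trip relay point -> hidden point (px, pz) -> same relay point."""
    r = np.sqrt((px - relay_x) ** 2 + pz ** 2)
    return np.round(2.0 * r / (C * dt)).astype(int)


def forward(points, albedo, relay_x, dt, n_bins):
    """Discrete spherical-Radon forward model: each hidden point adds its albedo
    to the time bin matching its round-trip distance (falloff ignored)."""
    H = np.zeros((relay_x.size, n_bins))
    rows = np.arange(relay_x.size)
    for (px, pz), a in zip(points, albedo):
        bins = confocal_bins(relay_x, px, pz, dt)
        valid = bins < n_bins
        H[rows[valid], bins[valid]] += a
    return H


def backproject(H, relay_x, grid_x, grid_z, dt):
    """Adjoint of forward(): each voxel sums the histogram bins it would have contributed to."""
    n_bins = H.shape[1]
    rows = np.arange(relay_x.size)
    vol = np.zeros((grid_z.size, grid_x.size))
    for iz, pz in enumerate(grid_z):
        for ix, px in enumerate(grid_x):
            bins = confocal_bins(relay_x, px, pz, dt)
            valid = bins < n_bins
            vol[iz, ix] = H[rows[valid], bins[valid]].sum()
    return vol


relay_x = np.linspace(-1.0, 1.0, 64)   # scanned points on the relay wall
grid_x = np.linspace(-1.0, 1.0, 41)    # hidden-volume grid, lateral axis
grid_z = np.linspace(0.5, 1.5, 21)     # hidden-volume grid, depth axis
dt, n_bins = 0.005, 1024               # bin width and histogram length (illustrative)

target = (grid_x[25], grid_z[10])      # single point target at (0.25, 1.0)
H = forward([target], [1.0], relay_x, dt, n_bins)
vol = backproject(H, relay_x, grid_x, grid_z, dt)
peak = np.unravel_index(np.argmax(vol), vol.shape)
```

For a single point target, the backprojected volume peaks at the target voxel, where all 64 transients agree. Practical reconstructions add falloff terms and filtering on top of this basic operator.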

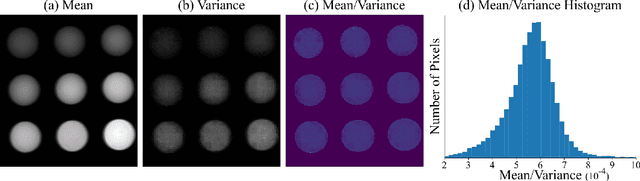

Robust 3D Object Detection using Probabilistic Point Clouds from Single-Photon LiDARs

Jul 31, 2025

Abstract: LiDAR-based 3D sensors provide point clouds, a canonical 3D representation used in various scene understanding tasks. Modern LiDARs face key challenges in several real-world scenarios, such as long-distance or low-albedo objects, producing sparse or erroneous point clouds. These errors, which are rooted in the noisy raw LiDAR measurements, get propagated to downstream perception models, resulting in potentially severe loss of accuracy. This is because conventional 3D processing pipelines do not retain any uncertainty information from the raw measurements when constructing point clouds. We propose Probabilistic Point Clouds (PPC), a novel 3D scene representation where each point is augmented with a probability attribute that encapsulates the measurement uncertainty (or confidence) in the raw data. We further introduce inference approaches that leverage PPC for robust 3D object detection; these methods are versatile and can be used as computationally lightweight drop-in modules in 3D inference pipelines. We demonstrate, via both simulations and real captures, that PPC-based 3D inference methods outperform several baselines using LiDAR as well as camera-LiDAR fusion models, across challenging indoor and outdoor scenarios involving small, distant, and low-albedo objects, as well as strong ambient light. Our project webpage is at https://bhavyagoyal.github.io/ppc.
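The central idea of PPC is to attach a per-point confidence derived from the raw measurement. That idea can be sketched as a data layout. The confidence rule below is a hypothetical heuristic chosen for illustration:

- count the photons in a window around the histogram peak;
- subtract a flat background estimated from the remaining bins;
- divide by the total photon count.

The paper's actual probability estimate may differ. The sketch also assumes one return per pixel and known unit ray directions.

```python
import numpy as np


def histogram_to_ppc(hist, pixel_dirs, bin_range_m, window=2):
    """Turn per-pixel photon histograms into a point cloud with a probability column.

    hist:        (P, B) photon counts per pixel and time bin
    pixel_dirs:  (P, 3) unit ray direction of each pixel
    bin_range_m: range covered by one time bin, i.e. c * bin_duration / 2
    Returns a (P, 4) array of [x, y, z, p].
    """
    hist = np.asarray(hist, dtype=float)
    P, B = hist.shape
    peak = hist.argmax(axis=1)
    bins = np.arange(B)
    in_win = np.abs(bins[None, :] - peak[:, None]) <= window
    signal = (hist * in_win).sum(axis=1)
    total = hist.sum(axis=1)
    # Hypothetical confidence: fraction of all photons that exceed a flat
    # background level estimated from the bins outside the peak window.
    n_in = in_win.sum(axis=1)
    bg_per_bin = (total - signal) / np.maximum(B - n_in, 1)
    excess = np.clip(signal - bg_per_bin * n_in, 0.0, None)
    p = excess / np.maximum(total, 1.0)
    depth = (peak + 0.5) * bin_range_m          # range at the center of the peak bin
    xyz = pixel_dirs * depth[:, None]
    return np.column_stack([xyz, p])


# Two pixels: one with a clear return in bin 40, one with only ambient light.
hist = np.full((2, 100), 3.0)
hist[0, 40] += 50.0
dirs = np.array([[0.0, 0.0, 1.0], [0.0, 0.0, 1.0]])
ppc = histogram_to_ppc(hist, dirs, bin_range_m=0.15)
```

A downstream detector could, for example, consume the fourth column as an extra per-point feature instead of discarding it.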

Optimized Sampling for Non-Line-of-Sight Imaging Using Modified Fast Fourier Transforms

Jan 09, 2025

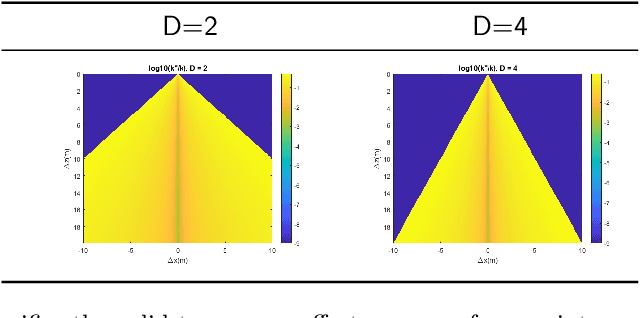

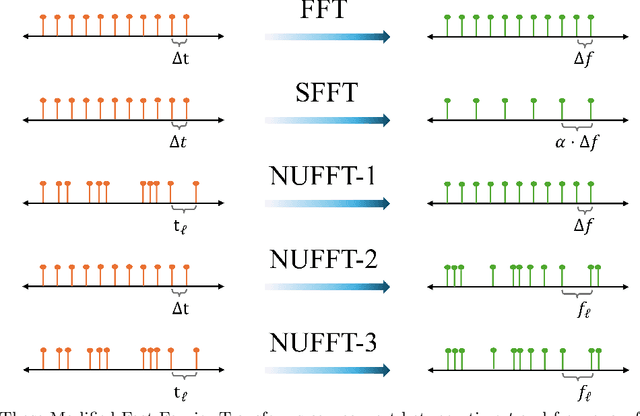

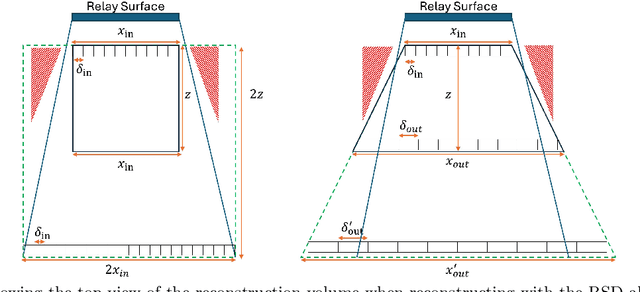

Abstract: Non-line-of-sight (NLOS) imaging systems collect light at a diffuse relay surface and input this measurement into computational algorithms that output a 3D volumetric reconstruction. These algorithms utilize the Fast Fourier Transform (FFT) to accelerate the reconstruction process but require both input and output to be sampled spatially on uniform grids. However, the geometry of NLOS imaging inherently results in non-uniform sampling on the relay surface when using multi-pixel detector arrays, even though such arrays significantly reduce acquisition times. Furthermore, using these arrays increases the data rate required for sensor readout, posing challenges for real-world deployment. In this work, we utilize the phasor field framework to demonstrate that existing NLOS imaging setups typically oversample the relay surface spatially, which explains why the measurement can be compressed without significantly sacrificing reconstruction quality. This enables us to utilize the Non-Uniform Fast Fourier Transform (NUFFT) to reconstruct from sparse measurements acquired from irregularly sampled relay surfaces of arbitrary shapes. Furthermore, we utilize the NUFFT to reconstruct at arbitrary locations in the hidden volume, enabling flexible sampling schemes for both the input and output. Finally, we utilize the Scaled Fast Fourier Transform (SFFT) to reconstruct larger volumes without increasing the number of samples stored in memory. All algorithms introduced in this paper preserve the computational complexity of FFT-based methods, ensuring scalability for practical NLOS imaging applications.
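The NUFFT mentioned above is a fast approximation of a non-uniform discrete Fourier transform (NUDFT). The sketch below does two things:

- It evaluates that sum directly in O(MN) time as a reference.
- It computes a DFT on a rescaled frequency grid with Bluestein's chirp-z algorithm in O(N log N).

The chirp-z version is a stand-in chosen to illustrate the idea of a scaled transform; it is an assumption that the paper's SFFT behaves the same way. Function names are hypothetical. Production NUFFT libraries use gridding with spreading kernels rather than the direct sum.

```python
import numpy as np


def nudft_type1(positions, values, n_modes):
    """Direct O(M*N) non-uniform DFT: F[k] = sum_j c_j * exp(-2*pi*i*k*x_j).

    positions: sample locations x_j, normally in [0, 1), with any spacing
    values:    complex samples c_j
    A NUFFT approximates this same sum much faster.
    """
    k = np.arange(n_modes)
    return np.exp(-2j * np.pi * np.outer(k, positions)) @ values


def scaled_dft(x, alpha):
    """X[k] = sum_n x[n] * exp(-2*pi*i*alpha*n*k/N) via Bluestein's chirp-z trick.

    alpha = 1 reproduces np.fft.fft; other values zoom the output frequency
    grid while still costing O(N log N).
    """
    x = np.asarray(x, dtype=complex)
    N = x.size
    n = np.arange(N)
    chirp = np.exp(-1j * np.pi * alpha * n**2 / N)   # w**(n**2) with w = exp(-i*pi*alpha/N)
    L = 1 << int(np.ceil(np.log2(2 * N - 1)))        # FFT length that avoids circular wrap-around
    b = np.zeros(L, dtype=complex)
    b[:N] = np.conj(chirp)                           # w**(-m**2) for m = 0..N-1
    b[L - N + 1:] = np.conj(chirp[1:][::-1])         # w**(-m**2) for m = -(N-1)..-1
    conv = np.fft.ifft(np.fft.fft(x * chirp, L) * np.fft.fft(b))
    return chirp * conv[:N]


rng = np.random.default_rng(0)
c = rng.standard_normal(16) + 1j * rng.standard_normal(16)
jittered = (np.arange(16) + rng.uniform(0.0, 0.5, 16)) / 16   # irregular sample positions
F_irregular = nudft_type1(jittered, c, 16)
X_zoomed = scaled_dft(c, 0.5)   # output frequencies spaced half as far apart as the FFT's
```

On a uniform grid (x_j = j/N), `nudft_type1` reduces to the ordinary FFT. Likewise, `scaled_dft(c, 1.0)` matches `np.fft.fft(c)`.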

Iterating the Transient Light Transport Matrix for Non-Line-of-Sight Imaging

Dec 13, 2024

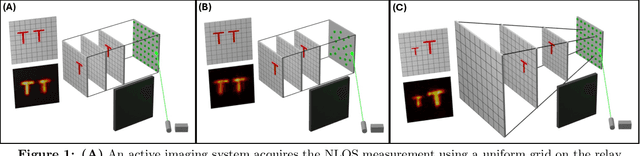

Abstract: Active imaging systems sample the Transient Light Transport Matrix (TLTM) of a scene by sequentially illuminating various positions in the scene with a controllable light source, and then measuring the resulting spatiotemporal light transport with time-of-flight (ToF) sensors. Time-resolved non-line-of-sight (NLOS) imaging employs an active imaging system that measures part of the TLTM of an intermediary relay surface, and uses the indirect reflections of light encoded within this TLTM to "see around corners". Such imaging systems have applications in diverse areas such as disaster response, remote surveillance, and autonomous navigation. While existing NLOS imaging systems usually measure a subset of the full TLTM, the development of customized gated Single Photon Avalanche Diode (SPAD) arrays [riccardo_fast-gated_2022] has made it feasible to probe the full measurement space. In this work, we demonstrate that the full TLTM on the relay surface can be processed with efficient algorithms to computationally focus and detect our illumination in different parts of the hidden scene, turning the relay surface into a second-order active imaging system. These algorithms allow us to iterate on the measured, first-order TLTM and extract a **second-order TLTM for surfaces in the hidden scene**. We showcase three applications of TLTMs in NLOS imaging: (1) scene relighting with novel illumination, (2) separation of direct and indirect components of light transport in the hidden scene, and (3) dual photography. Additionally, we empirically demonstrate that SPAD arrays enable parallel acquisition of photons, effectively mitigating long acquisition times.
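The idea of computationally focusing illumination and detection at hidden points can be sketched for a single temporal frequency, in the spirit of phasor-field imaging. The sketch assumes:

- a flatland relay line;
- point scatterers;
- a single wavenumber k;
- no radiometric falloff.

Under these assumptions, the first-order TLTM entry H[i, j] links illumination at relay point j to detection at relay point i. Applying phase-conjugate focusing weights on both sides of H gives one entry of a hypothetical second-order matrix between hidden points u and v. This illustrates the idea under those assumptions; it is not the paper's algorithm.

```python
import numpy as np


def phasor_tltm(relay, scatterers, albedo, k):
    """Monochromatic first-order TLTM: H[i, j] links illumination at relay point j
    to detection at relay point i (single wavenumber k, no falloff: assumptions)."""
    H = np.zeros((len(relay), len(relay)), dtype=complex)
    for s, a in zip(scatterers, albedo):
        prop = np.exp(-1j * k * np.linalg.norm(relay - s, axis=1))  # one-way relay <-> scatterer phase
        H += a * np.outer(prop, prop)
    return H


def focus_weights(relay, point, k):
    """Phase-conjugate weights that make a virtual wave from the relay focus at `point`."""
    return np.exp(1j * k * np.linalg.norm(relay - point, axis=1))


def second_order_entry(H, relay, u, v, k):
    """Hypothetical second-order entry: illumination focused at u, detection focused at v."""
    return focus_weights(relay, v, k) @ H @ focus_weights(relay, u, k)


k = 2 * np.pi / 0.1                                     # assumed virtual wavelength of 0.1
relay = np.column_stack([np.linspace(-1.0, 1.0, 64), np.zeros(64)])
xs, zs = np.linspace(-1.0, 1.0, 41), np.linspace(0.5, 1.5, 21)
target = np.array([xs[25], zs[10]])                     # single hidden scatterer
H = phasor_tltm(relay, [target], [1.0], k)

# Confocal-style image: the diagonal u = v of the second-order matrix.
img = np.array([[abs(second_order_entry(H, relay, np.array([x, z]), np.array([x, z]), k))
                 for x in xs] for z in zs])
```

Evaluating the diagonal over a grid produces an image that peaks at the scatterer. Off-diagonal entries pair illumination focused at one hidden point with detection focused at another.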

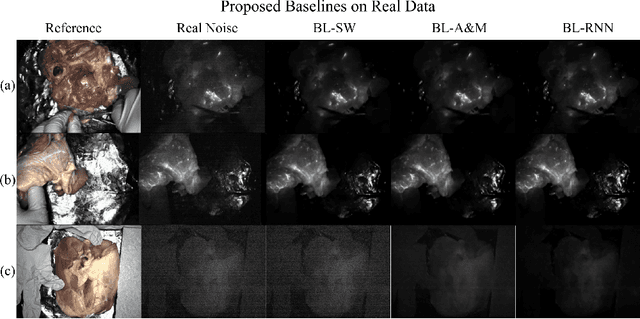

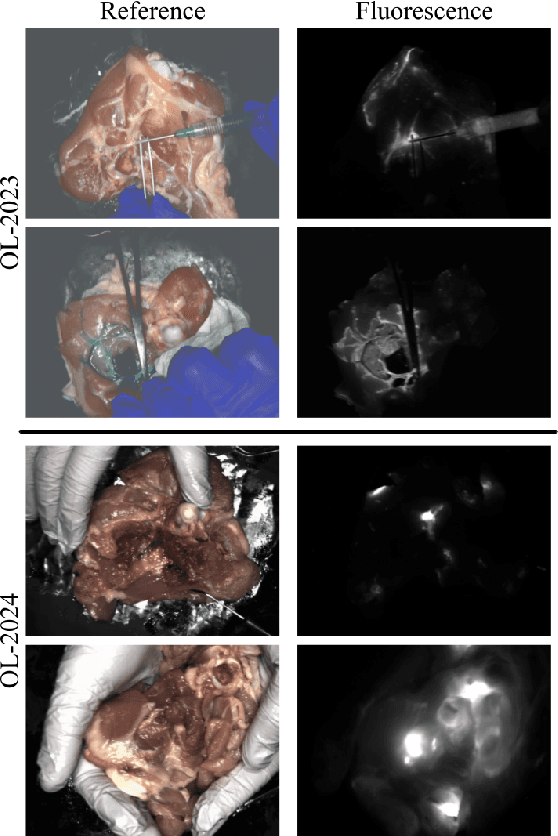

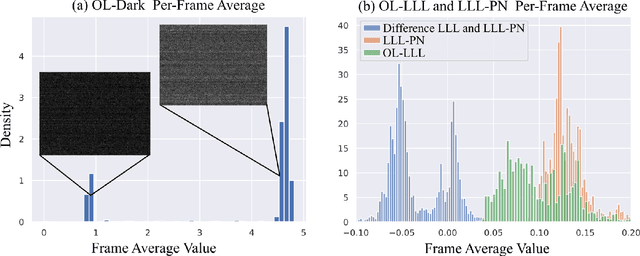

Video Denoising in Fluorescence Guided Surgery

Nov 14, 2024

Abstract: Fluorescence guided surgery (FGS) is a promising surgical technique that gives surgeons a unique view of tissue, which guides their practice by delineating tissue types and diseased areas. As new fluorescent contrast agents with low fluorescent photon yields are developed, it becomes increasingly important to develop computational models that allow FGS systems to maintain good video quality in real-time environments. To further complicate this task, FGS suffers from a difficult bias noise term from laser leakage light (LLL), which represents unfiltered excitation light and can be on the order of the fluorescent signal. Most conventional video denoising methods focus on zero-mean noise and non-causal processing, both of which are violated in FGS. Fortunately, FGS systems often also capture a co-located reference video, which we use to simulate the LLL and assist in the denoising process. In this work, we propose an accurate noise simulation pipeline that includes LLL and propose three baseline deep-learning-based algorithms for FGS video denoising.
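A toy noise model makes the bias argument concrete. The sketch below is a hypothetical simplification, not the paper's pipeline. It assumes:

- the LLL is proportional to the co-located reference frame, with scale `leak_ratio`;
- Poisson shot noise acts on signal plus leakage;
- Gaussian read noise is added on top.

All parameter values are illustrative.

```python
import numpy as np


def simulate_fgs_frame(fluor, reference, leak_ratio, gain, read_sigma, rng):
    """Hypothetical FGS noise model with laser leakage light (LLL).

    fluor:      clean fluorescence frame (expected photo-electrons)
    reference:  co-located reference frame, used as a proxy for the spatial LLL pattern
    leak_ratio: assumed scale from reference intensity to leaked photo-electrons
    """
    lll = leak_ratio * reference                    # non-zero-mean bias term
    photons = rng.poisson(fluor + lll)              # shot noise on signal plus leakage
    return gain * photons + rng.normal(0.0, read_sigma, size=fluor.shape)


rng = np.random.default_rng(0)
fluor = np.full((256, 256), 5.0)                    # weak fluorescence signal
reference = np.full((256, 256), 20.0)               # reference video frame
noisy = simulate_fgs_frame(fluor, reference, leak_ratio=0.3, gain=1.0, read_sigma=1.0, rng=rng)
residual = noisy - fluor                            # what a denoiser would have to remove
```

The mean residual is about 6 photo-electrons rather than zero. A denoiser built on a zero-mean noise assumption does not model this bias.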

Sparsity aware coding for single photon sensitive vision using Selective Sensing

Aug 09, 2023

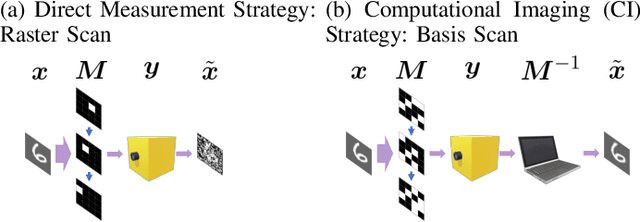

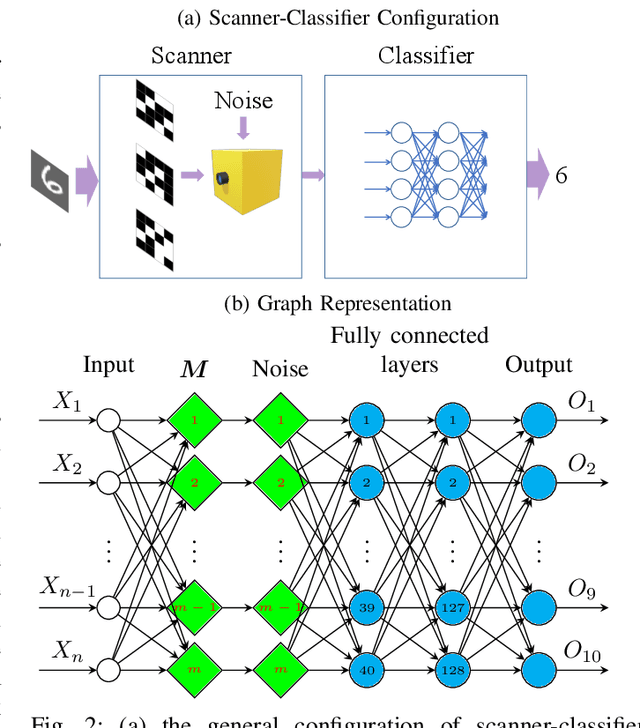

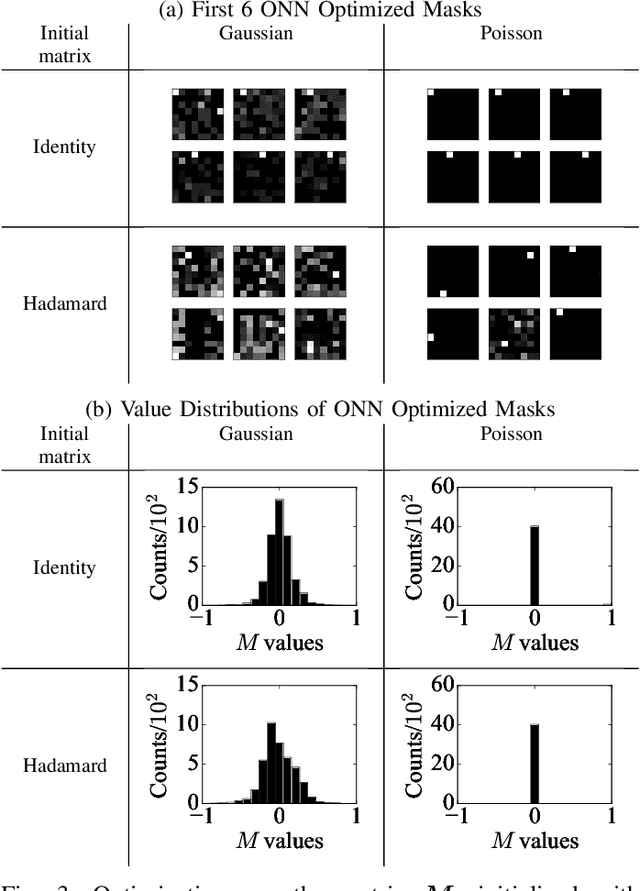

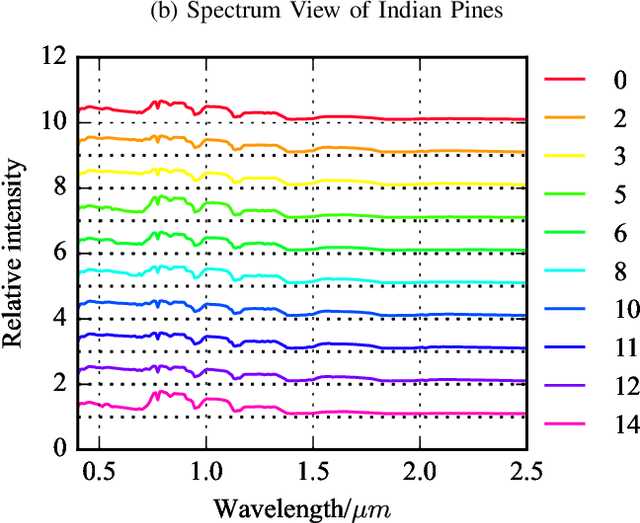

Abstract: Optical coding has been widely adopted to improve imaging techniques. Traditional coding strategies developed under additive Gaussian noise fail to perform optimally in the presence of Poisson noise, and previous studies have observed that coding performance varies significantly between these two noise models. In this work, we introduce a novel approach called selective sensing, which leverages training data to learn priors and optimizes the coding strategies for downstream classification tasks. By adapting to the specific characteristics of photon-counting sensors, the proposed method aims to improve coding performance under Poisson noise and enhance overall classification accuracy. Experimental and simulated results demonstrate the effectiveness of selective sensing in comparison to traditional coding strategies, highlighting its potential for practical applications in photon-counting scenarios where Poisson noise is prevalent.
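The premise that codes designed for Gaussian noise can fail under Poisson noise can be checked with the classic comparison between raster sampling and Hadamard (S-matrix) multiplexing. This sketch illustrates that premise only; it does not implement selective sensing. It assumes:

- a uniform scene of 7 pixels;
- unit-variance Gaussian noise in the first case;
- pure shot noise in the second case.

For each case, it computes the exact per-pixel error of the unbiased linear estimate from its covariance.

```python
import numpy as np


def s_matrix(order):
    """S-matrix of size (order - 1) built from a Sylvester Hadamard matrix; order must be a power of 2."""
    H = np.array([[1.0]])
    while H.shape[0] < order:
        H = np.block([[H, H], [H, -H]])
    return (1.0 - H[1:, 1:]) / 2.0               # +1 -> 0 (mask closed), -1 -> 1 (mask open)


def per_pixel_mse(A, x, noise):
    """Mean per-pixel variance of the unbiased estimate A^{-1} y, with y = A x + noise."""
    if noise == "gaussian":
        cov_y = np.eye(A.shape[0])               # unit-variance additive noise
    else:
        cov_y = np.diag(A @ x)                   # Poisson: variance equals the expected count
    A_inv = np.linalg.inv(A)
    return np.trace(A_inv @ cov_y @ A_inv.T) / x.size


x = np.full(7, 10.0)                             # uniform scene, 10 expected photons per pixel
results = {
    (name, noise): per_pixel_mse(A, x, noise)
    for name, A in [("raster", np.eye(7)), ("S-matrix", s_matrix(8))]
    for noise in ["gaussian", "poisson"]
}
```

Under Gaussian noise, the S-matrix lowers the per-pixel error (0.4375 versus 1.0). Under Poisson noise, it raises it (17.5 versus 10.0), because each multiplexed measurement also collects the shot noise of every open pixel.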

Virtual Mirrors: Non-Line-of-Sight Imaging Beyond the Third Bounce

Jul 26, 2023

Abstract: Non-line-of-sight (NLOS) imaging methods are capable of reconstructing complex scenes that are not visible to an observer using indirect illumination. However, they assume only third-bounce illumination, so they are currently limited to single-corner configurations, and present limited visibility when imaging surfaces at certain orientations. To reason about and tackle these limitations, we make the key observation that planar diffuse surfaces behave specularly at wavelengths used in the computational wave-based NLOS imaging domain. We call such surfaces virtual mirrors. We leverage this observation to expand the capabilities of NLOS imaging using illumination beyond the third bounce, addressing two problems: imaging single-corner objects at limited visibility angles, and imaging objects hidden behind two corners. To image objects at limited visibility angles, we first analyze the reflections of the known illuminated point on surfaces of the scene as an estimator of the position and orientation of objects with limited visibility. We then image those limited visibility objects by computationally building secondary apertures at other surfaces that observe the target object from a direct visibility perspective. Beyond single-corner NLOS imaging, we exploit the specular behavior of virtual mirrors to image objects hidden behind a second corner by imaging the space behind such virtual mirrors, where the mirror image of objects hidden around two corners is formed. No specular surfaces were involved in the making of this paper.
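If a planar diffuse surface acts as a mirror at phasor-field wavelengths, an object seen through that extra bounce is reconstructed at its mirror-image position behind the surface. Mapping such a reconstruction back to the object's true location is then a plane reflection, sketched below. The wall parameters are assumed to be known, for example estimated from an earlier reconstruction. The coordinates are illustrative.

```python
import numpy as np


def mirror_point(p, plane_point, plane_normal):
    """Reflect point p across the plane through plane_point with normal plane_normal."""
    n = np.asarray(plane_normal, dtype=float)
    n = n / np.linalg.norm(n)                    # ensure a unit normal
    p = np.asarray(p, dtype=float)
    return p - 2.0 * np.dot(p - plane_point, n) * n


wall_point = np.array([2.0, 0.0, 0.0])           # assumed planar wall x = 2 ("virtual mirror")
wall_normal = np.array([1.0, 0.0, 0.0])
reconstructed = np.array([3.0, 3.0, 0.5])        # image formed behind the virtual mirror
true_position = mirror_point(reconstructed, wall_point, wall_normal)
```

Reflecting twice across the same plane returns the original point. This gives a quick sanity check on the estimated wall parameters.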

Physics to the Rescue: Deep Non-line-of-sight Reconstruction for High-speed Imaging

May 03, 2022

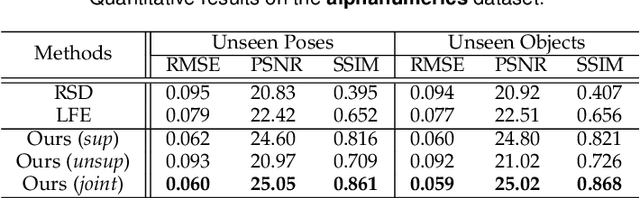

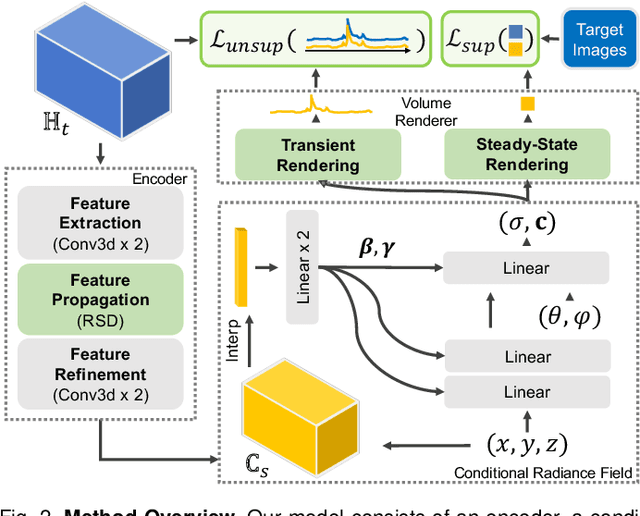

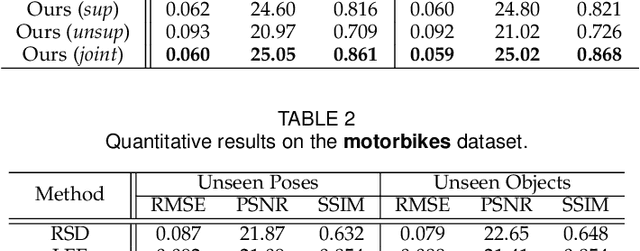

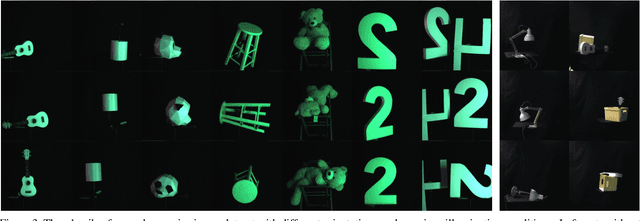

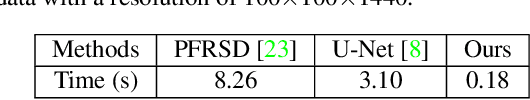

Abstract: The computational approach to imaging around the corner, or non-line-of-sight (NLOS) imaging, is becoming a reality thanks to major advances in imaging hardware and reconstruction algorithms. In a recent development toward practical NLOS imaging, Nam et al. demonstrated a high-speed non-confocal imaging system that operates at 5 Hz, 100x faster than the prior art. This enormous gain in acquisition rate, however, necessitates numerous approximations in light transport, breaking many existing NLOS reconstruction methods that assume an idealized image formation model. To bridge the gap, we present a novel deep model that incorporates the complementary physics priors of wave propagation and volume rendering into a neural network for high-quality and robust NLOS reconstruction. This orchestrated design regularizes the solution space by relaxing the image formation model, resulting in a deep model that generalizes well on real captures despite being exclusively trained on synthetic data. Further, we devise a unified learning framework that enables our model to be flexibly trained using diverse supervision signals, including target intensity images or even raw NLOS transient measurements. Once trained, our model renders both intensity and depth images at inference time in a single forward pass and can process more than 5 captures per second on a high-end GPU. Through extensive qualitative and quantitative experiments, we show that our method outperforms prior physics- and learning-based approaches on both synthetic and real measurements. We anticipate that our method, along with the fast capturing system, will accelerate future development of NLOS imaging for real-world applications that require high-speed imaging.
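The abstract names volume rendering as one physics prior and says the model outputs both intensity and depth. The standard discrete emission-absorption model popularized by neural radiance fields produces both quantities from one density and color profile along a ray. The sketch below shows that quadrature; whether the paper uses exactly this formulation is an assumption.

```python
import numpy as np


def render_ray(sigma, color, t):
    """Emission-absorption volume rendering along one ray.

    sigma: (S,) densities at sample depths t; color: (S,) intensities at those samples.
    Returns (intensity, expected depth) via standard alpha compositing.
    """
    delta = np.diff(t, append=t[-1] + (t[-1] - t[-2]))              # spacing between samples
    alpha = 1.0 - np.exp(-sigma * delta)                            # opacity of each segment
    trans = np.concatenate([[1.0], np.cumprod(1.0 - alpha)[:-1]])   # light surviving to each sample
    weights = trans * alpha
    return weights @ color, weights @ t


t = np.array([1.0, 1.5, 2.0, 2.5, 3.0])
sigma = np.array([0.0, 0.0, 1e6, 0.0, 0.0])     # one opaque sample at depth 2.0
color = np.array([0.1, 0.2, 0.7, 0.4, 0.3])
intensity, depth = render_ray(sigma, color, t)
```

With a single opaque sample, all weight falls on that sample. The rendered intensity is then its color and the rendered depth is its position along the ray.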

Towards Non-Line-of-Sight Photography

Sep 16, 2021

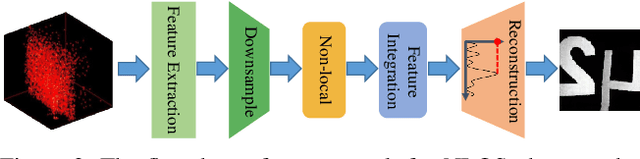

Abstract: Non-line-of-sight (NLOS) imaging is based on capturing the multi-bounce indirect reflections from hidden objects. Active NLOS imaging systems rely on capturing the time of flight of light through the scene, and have shown great promise for the accurate and robust reconstruction of hidden scenes without the need for specialized scene setups or prior assumptions. Although existing methods can reconstruct 3D geometries of the hidden scene with excellent depth resolution, accurately recovering object textures and appearance with high lateral resolution remains a challenging problem. In this work, we propose a new problem formulation, called NLOS photography, to specifically address this deficiency. Rather than performing an intermediate estimate of the 3D scene geometry, our method follows a data-driven approach and directly reconstructs 2D images of an NLOS scene that closely resemble pictures taken with a conventional camera from the location of the relay wall. This formulation greatly simplifies the challenging reconstruction problem by bypassing the explicit modeling of 3D geometry, and enables the learning of a deep model with a relatively small training dataset. The results are NLOS reconstructions of unprecedented lateral resolution and image quality.

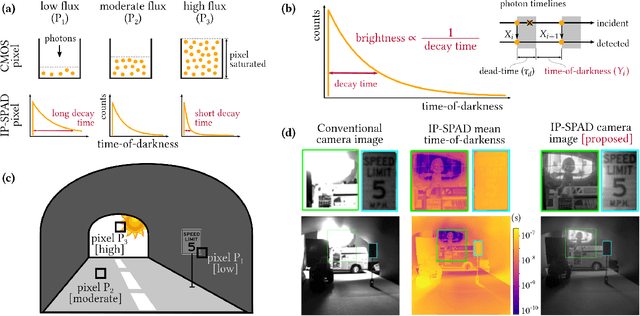

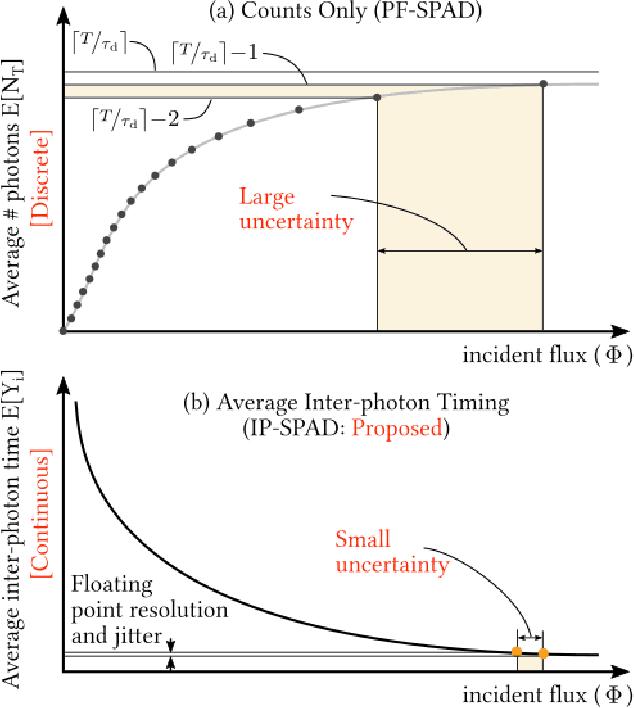

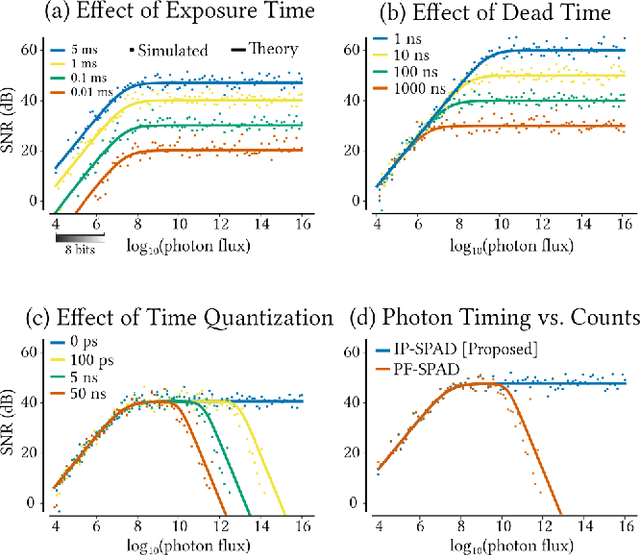

Passive Inter-Photon Imaging

Apr 11, 2021

Abstract: Digital camera pixels measure image intensities by converting incident light energy into an analog electrical current, and then digitizing it into a fixed-width binary representation. This direct measurement method, while conceptually simple, suffers from limited dynamic range and poor performance under extreme illumination -- electronic noise dominates under low illumination, and pixel full-well capacity results in saturation under bright illumination. We propose a novel intensity cue based on measuring inter-photon timing, defined as the time delay between detection of successive photons. Based on the statistics of inter-photon times measured by a time-resolved single-photon sensor, we develop theory and algorithms for a scene brightness estimator which works over extreme dynamic range; we experimentally demonstrate imaging scenes with a dynamic range of over ten million to one. The proposed techniques, aided by the emergence of single-photon sensors such as single-photon avalanche diodes (SPADs) with picosecond timing resolution, will have implications for a wide range of imaging applications: robotics, consumer photography, astronomy, microscopy and biomedical imaging.
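An idealized SPAD model can illustrate the inter-photon cue. The sketch assumes:

- a non-paralyzable dead time;
- Poisson photon arrivals;
- perfect timing and unit detection efficiency;
- no dark counts.

Under these assumptions, each inter-detection time is the dead time plus an exponential waiting time. The maximum-likelihood flux estimate is then the number of intervals divided by their total length after subtracting the dead time. The paper's estimator accounts for more effects, and all parameter values are illustrative.

```python
import numpy as np


def simulate_inter_photon_times(flux, dead_time, n, rng):
    """Inter-detection times of a free-running SPAD: after each detection the pixel
    is blind for dead_time, then waits an exponential time (Poisson arrivals)."""
    return dead_time + rng.exponential(scale=1.0 / flux, size=n)


def flux_from_inter_photon(times, dead_time):
    """Maximum-likelihood flux estimate for the shifted-exponential model above."""
    return times.size / np.sum(times - dead_time)


def flux_from_counts(times):
    """Naive estimate: detections per unit time (saturates near 1 / dead_time)."""
    return times.size / np.sum(times)


rng = np.random.default_rng(0)
dead_time = 100e-9                               # assumed 100 ns dead time
estimates = {}
for flux in (1e5, 1e9):                          # photons per second (illustrative)
    times = simulate_inter_photon_times(flux, dead_time, 100_000, rng)
    estimates[flux] = (flux_from_inter_photon(times, dead_time), flux_from_counts(times))
```

At 10^9 photons per second, the counting estimate cannot exceed 1 / dead_time = 10^7. The inter-photon estimate still tracks the true flux, which is the dynamic-range argument of the abstract in miniature.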
