Adrià Colomé

Beyond Static Perception: Integrating Temporal Context into VLMs for Cloth Folding

May 12, 2025

Abstract: Manipulating clothes is challenging due to their complex dynamics, high deformability, and frequent self-occlusions. Garments exhibit a nearly infinite number of configurations, making explicit state representations difficult to define. In this paper, we analyze BiFold, a model that predicts language-conditioned pick-and-place actions from visual observations, while implicitly encoding garment state through end-to-end learning. To address scenarios such as crumpled garments or recovery from failed manipulations, BiFold leverages temporal context to improve state estimation. We examine the internal representations of the model and present evidence that its fine-tuning and temporal context enable effective alignment between text and image regions, as well as temporal consistency.

BiFold: Bimanual Cloth Folding with Language Guidance

Jan 27, 2025

Abstract: Cloth folding is a complex task due to the inevitable self-occlusions of clothes, their complicated dynamics, and the disparate materials, geometries, and textures that garments can have. In this work, we learn folding actions conditioned on text commands. Translating high-level, abstract instructions into precise robotic actions requires sophisticated language understanding and manipulation capabilities. To do that, we leverage a pre-trained vision-language model and repurpose it to predict manipulation actions. Our model, BiFold, can take context into account and achieves state-of-the-art performance on an existing language-conditioned folding benchmark. Given the lack of annotated bimanual folding data, we devise a procedure to automatically parse actions of a simulated dataset and tag them with aligned text instructions. BiFold attains the best performance on our dataset and can transfer to new instructions, garments, and environments.

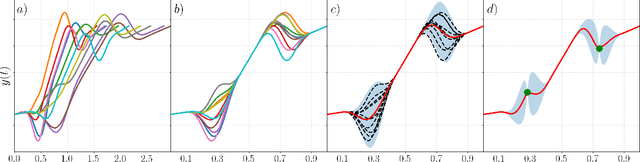

Linear quadratic control of nonlinear systems with Koopman operator learning and the Nyström method

Mar 05, 2024

Abstract: In this paper, we study how the Koopman operator framework can be combined with kernel methods to effectively control nonlinear dynamical systems. While kernel methods typically have large computational requirements, we show how random subspaces (Nyström approximation) can be used to achieve huge computational savings while preserving accuracy. Our main technical contribution is deriving theoretical guarantees on the effect of the Nyström approximation. More precisely, we study the linear quadratic regulator problem, showing that both the approximated Riccati operator and the regulator objective, for the associated solution of the optimal control problem, converge at the rate $m^{-1/2}$, where $m$ is the random subspace size. Theoretical findings are complemented by numerical experiments corroborating our results.
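
The Nyström idea mentioned in the abstract can be illustrated on a plain kernel matrix. The following is a minimal sketch, not the paper's Koopman or LQR pipeline: the Gaussian kernel, the uniform landmark sampling, the jitter term, and all function names are assumptions chosen for illustration.

```python
import numpy as np

def gaussian_kernel(X, Y, lengthscale=1.0):
    """Gaussian (RBF) kernel matrix between the rows of X and the rows of Y."""
    sq_dists = (
        np.sum(X**2, axis=1)[:, None]
        + np.sum(Y**2, axis=1)[None, :]
        - 2.0 * X @ Y.T
    )
    return np.exp(-np.maximum(sq_dists, 0.0) / (2.0 * lengthscale**2))

def nystrom_approximation(X, m, lengthscale=1.0, jitter=1e-8, seed=0):
    """Approximate the n x n kernel matrix of X using m random landmark points.

    Returns K_nm (K_mm + jitter * I)^{-1} K_nm^T. The jitter is an assumed
    regularizer that keeps the linear solve numerically stable.
    """
    rng = np.random.default_rng(seed)
    idx = rng.choice(X.shape[0], size=m, replace=False)  # uniform sampling
    landmarks = X[idx]
    K_nm = gaussian_kernel(X, landmarks, lengthscale)
    K_mm = gaussian_kernel(landmarks, landmarks, lengthscale)
    return K_nm @ np.linalg.solve(K_mm + jitter * np.eye(m), K_nm.T)

# Hypothetical data: 200 points in the plane.
X = np.random.default_rng(0).normal(size=(200, 2))
K = gaussian_kernel(X, X)

# Relative Frobenius error of the approximation for growing subspace size m.
errors = {
    m: np.linalg.norm(K - nystrom_approximation(X, m)) / np.linalg.norm(K)
    for m in (5, 20, 80)
}
```

Only the `m x m` landmark block is inverted, which is where the computational savings come from when `m` is much smaller than the number of samples.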

Benchmarking the Sim-to-Real Gap in Cloth Manipulation

Oct 14, 2023

Abstract: Realistic physics engines play a crucial role in learning to manipulate deformable objects such as garments in simulation. By training in simulation, researchers can circumvent challenges such as sensing the deformation of the object in the real world. In spite of the extensive use of simulations for this task, few works have evaluated the reality gap between deformable object simulators and real-world data. We present a benchmark dataset to evaluate the sim-to-real gap in cloth manipulation. The dataset is collected by performing a dynamic cloth manipulation task involving contact with a rigid table. We use the dataset to evaluate the reality gap, computational time, and simulation stability of four popular deformable object simulators: MuJoCo, Bullet, Flex, and SOFA. Additionally, we discuss the benefits and drawbacks of each simulator. The benchmark dataset is open-source. Supplementary material, videos, and code can be found at https://sites.google.com/view/cloth-sim2real-benchmark.

Deformable Surface Reconstruction via Riemannian Metric Preservation

Dec 22, 2022

Abstract: Estimating the pose of an object from a monocular image is an inverse problem fundamental in computer vision. The ill-posed nature of this problem requires incorporating deformation priors to solve it. In practice, many materials do not perceptibly shrink or extend when manipulated, constituting a powerful and well-known prior. Mathematically, this translates to the preservation of the Riemannian metric. Neural networks offer the perfect playground to solve the surface reconstruction problem as they can approximate surfaces with arbitrary precision and allow the computation of differential geometry quantities. This paper presents an approach to inferring continuous deformable surfaces from a sequence of images, which is benchmarked against several techniques and obtains state-of-the-art performance without the need for offline training.
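
The metric-preservation prior can be written out in standard differential-geometry terms. The notation below is an assumption for illustration and is not necessarily the paper's: $\varphi_t : \Omega \subset \mathbb{R}^2 \to \mathbb{R}^3$ parametrizes the surface at time $t$, and $J_{\varphi_t}$ is its $3 \times 2$ Jacobian.

$$
g_t(u, v) = J_{\varphi_t}(u, v)^{\top} J_{\varphi_t}(u, v),
\qquad
g_t(u, v) = g_0(u, v) \quad \text{for all } (u, v) \in \Omega .
$$

A material that neither shrinks nor stretches keeps the induced metric $g_t$ equal to that of the reference configuration. One hypothetical way to turn this into a penalty for a learned surface is

$$
\mathcal{L}_{\text{metric}} = \int_{\Omega} \left\lVert J_{\varphi_t}^{\top} J_{\varphi_t} - J_{\varphi_0}^{\top} J_{\varphi_0} \right\rVert_F^2 \, \mathrm{d}u \, \mathrm{d}v ,
$$

which vanishes exactly when the deformation is isometric.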

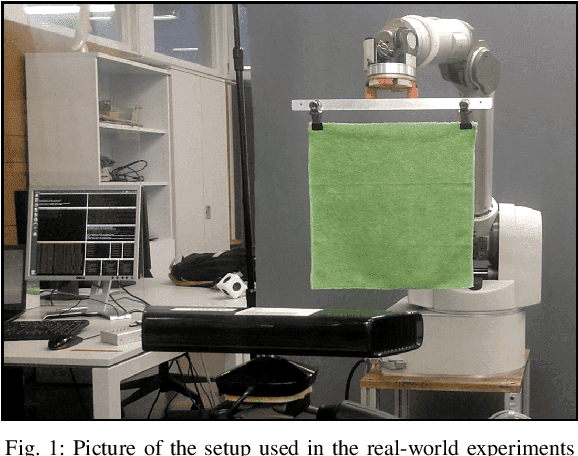

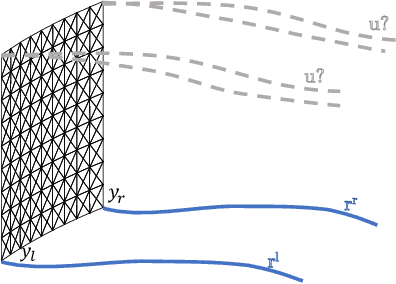

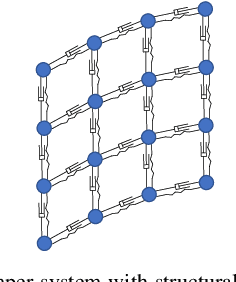

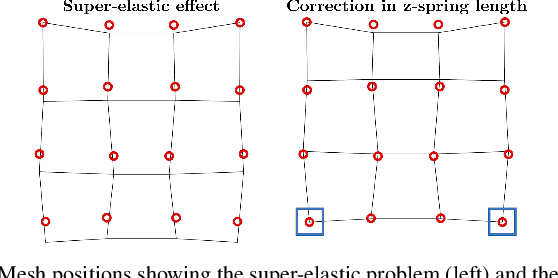

Model Predictive Control for Dynamic Cloth Manipulation: Parameter Learning and Experimental Validation

Sep 20, 2022

Abstract: Robotic cloth manipulation is a relevant and challenging problem for autonomous robotic systems. Highly deformable objects such as textile items can adopt multiple configurations and shapes during their manipulation. Hence, robots should not only understand the current cloth configuration but also be able to predict the possible future behaviors of the cloth. This paper addresses the problem of indirectly controlling the configuration of certain points of a textile object by applying actions on other parts of the object, using a Model Predictive Control (MPC) strategy that also makes it possible to foresee the behavior of the indirectly controlled points. The designed controller finds the optimal control signals to attain the desired future target configuration. The scenario explored in this paper considers tracking a reference trajectory with the lower corners of a square piece of cloth by grasping its upper corners. To do so, we propose and validate a linear cloth model that allows solving the MPC-related optimization problem in real time. Reinforcement Learning (RL) techniques are used to learn the optimal parameters of the proposed cloth model and also to tune the resulting MPC. After obtaining accurate tracking results in simulation, the full control scheme was implemented and executed on a real robot, obtaining accurate tracking even in adverse conditions. While total observed errors reach the 5 cm mark for a 30x30 cm cloth, an analysis shows that the MPC contributes less than 30% to that value.
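
To make the role of a linear model in MPC concrete, here is a minimal sketch of unconstrained reference tracking over a finite horizon. It is not the paper's controller or cloth model: the double-integrator system, the cost weights, and all function names are assumptions for illustration. With a linear model, the predicted states are an affine function of the input sequence, so the optimization reduces to a regularized least-squares problem that is cheap enough to solve at every control step.

```python
import numpy as np

def build_prediction_matrices(A, B, N):
    """Stack the model x_{k+1} = A x_k + B u_k over N steps.

    Returns Phi, Gamma such that [x_1; ...; x_N] = Phi @ x_0 + Gamma @ [u_0; ...; u_{N-1}].
    """
    n, m = B.shape
    Phi = np.zeros((N * n, n))
    Gamma = np.zeros((N * n, N * m))
    for i in range(N):
        Phi[i * n:(i + 1) * n, :] = np.linalg.matrix_power(A, i + 1)
        for j in range(i + 1):
            Gamma[i * n:(i + 1) * n, j * m:(j + 1) * m] = (
                np.linalg.matrix_power(A, i - j) @ B
            )
    return Phi, Gamma

def mpc_step(A, B, C, x0, ref, N, rho=1e-3):
    """Return the first input of the sequence minimizing
    sum_k ||C x_k - ref_k||^2 + rho * ||u_k||^2 over the horizon.

    ref has shape (N, p): the desired outputs for the next N steps.
    """
    Phi, Gamma = build_prediction_matrices(A, B, N)
    C_bar = np.kron(np.eye(N), C)          # apply C to every predicted state
    G = C_bar @ Gamma                      # maps inputs to predicted outputs
    residual = ref.reshape(-1) - C_bar @ Phi @ x0
    m = B.shape[1]
    U = np.linalg.solve(G.T @ G + rho * np.eye(N * m), G.T @ residual)
    return U[:m]                           # receding horizon: apply only u_0

# Hypothetical plant: a double integrator sampled at dt = 0.1 s.
dt = 0.1
A = np.array([[1.0, dt], [0.0, 1.0]])
B = np.array([[0.5 * dt**2], [dt]])
C = np.array([[1.0, 0.0]])                 # only the position is tracked

x0 = np.array([0.0, 0.0])
horizon = 15
ref = np.ones((horizon, 1))                # hold position 1.0
u0 = mpc_step(A, B, C, x0, ref, horizon)
```

In a receding-horizon loop, `mpc_step` would be called again at the next sample with the newly measured state and a shifted reference.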

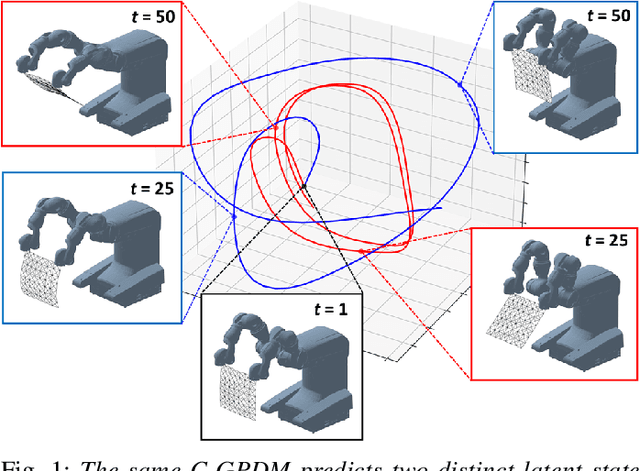

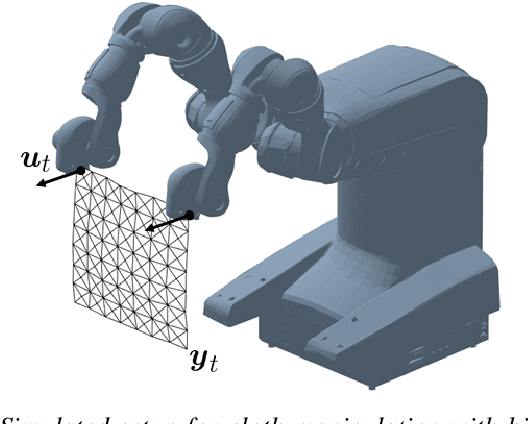

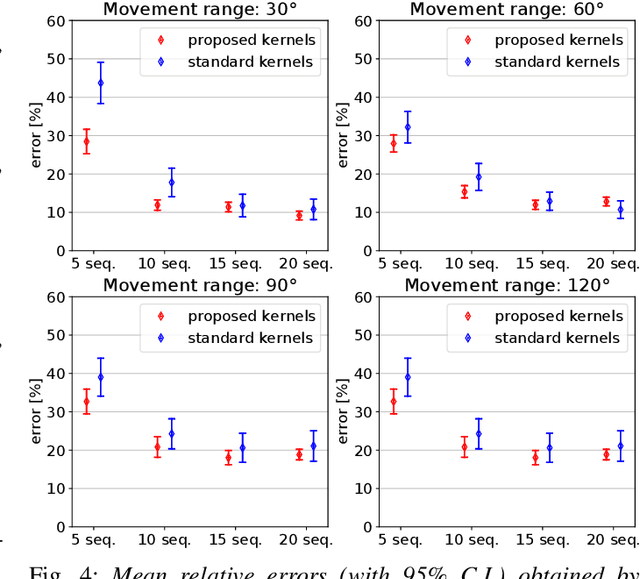

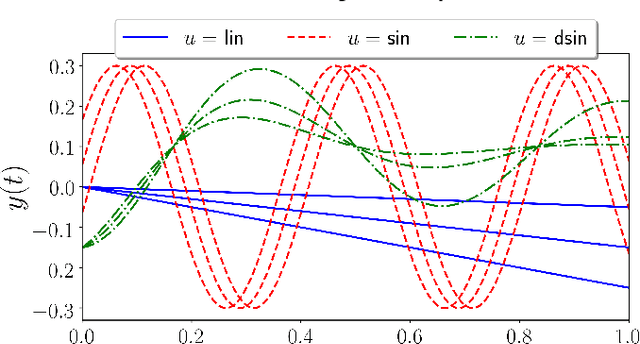

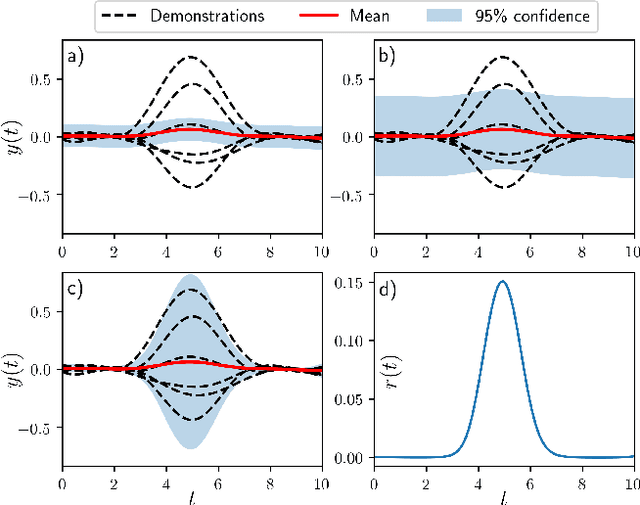

Controlled Gaussian Process Dynamical Models with Application to Robotic Cloth Manipulation

Mar 11, 2021

Abstract: Over the last few years, robotic cloth manipulation has gained relevance within the research community. While significant advances have been made in robotic manipulation of rigid objects, the manipulation of non-rigid objects such as cloth garments is still a challenging problem. The uncertainty about how cloth behaves often requires the use of model-based approaches. However, cloth models have a very high dimensionality. Therefore, it is difficult to find a middle ground between providing a manipulator with a dynamics model of cloth and working with a state space of tractable dimensionality. For this reason, most cloth manipulation approaches in the literature perform static or quasi-static manipulation. In this paper, we propose a variation of Gaussian Process Dynamical Models (GPDMs) to model cloth dynamics in a low-dimensional manifold. GPDMs project a high-dimensional state space into a lower-dimensional latent space that is capable of retaining the dynamic properties. Building on this approach, we add control variables to the original formulation. In this way, it is possible to take into account the robot commands exerted on the cloth dynamics. We call this new version Controlled Gaussian Process Dynamical Model (C-GPDM). Moreover, we propose an alternative kernel representation for the model, characterized by a richer parameterization than the one employed in the majority of previous GPDM realizations. The modeling capacity of our proposal has been tested in a simulated scenario, where C-GPDM proved to be capable of generalizing over a considerably wide range of movements and correctly predicting the cloth oscillations generated by previously unseen sequences of control actions.

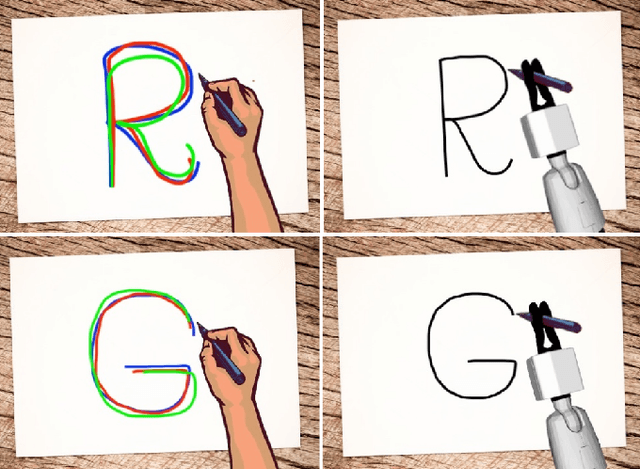

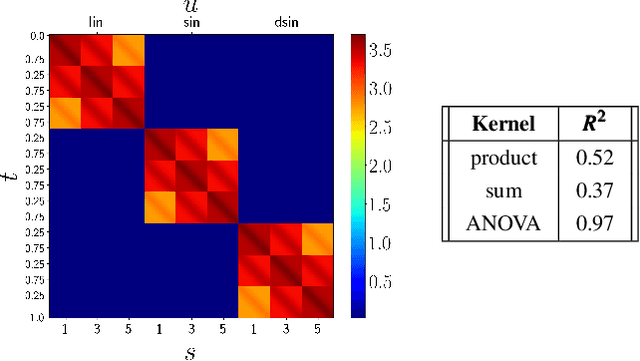

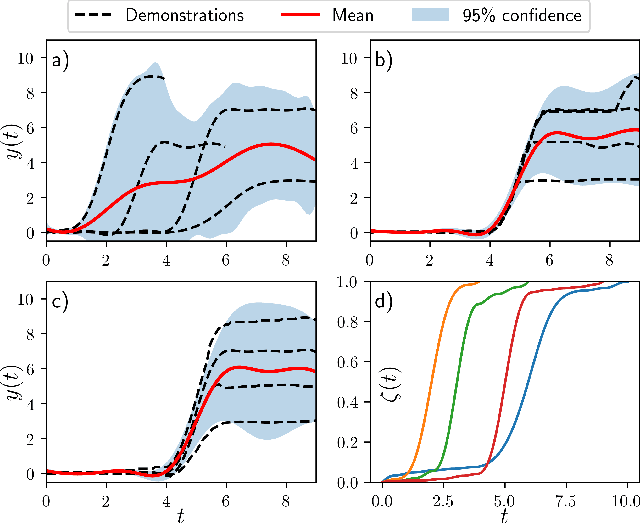

Task-Adaptive Robot Learning from Demonstration under Replication with Gaussian Process Models

Oct 15, 2020

Abstract: Learning from Demonstration (LfD) is a paradigm that allows robots to learn complex manipulation tasks that cannot be easily scripted, but can be demonstrated by a human teacher. One of the challenges of LfD is to enable robots to acquire skills that can be adapted to different scenarios. In this paper, we propose to achieve this by exploiting the variations in the demonstrations to retrieve an adaptive and robust policy, using Gaussian Process (GP) models. Adaptability is enhanced by incorporating task parameters into the model, which encode different specifications within the same task. With our formulation, these parameters can be real, integer, or categorical. Furthermore, we propose a GP design that exploits the structure of replications, i.e., repeated demonstrations under identical conditions within the data. Our method significantly reduces the computational cost of model fitting in complex tasks, where replications are essential to obtain a robust model. We illustrate our approach through several experiments on a handwritten letter demonstration dataset.

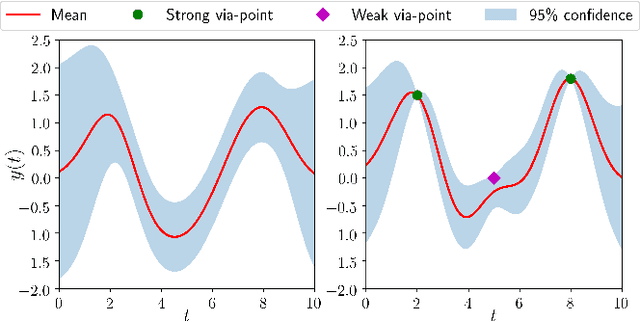

Gaussian-Process-based Robot Learning from Demonstration

Feb 23, 2020

Abstract: Endowed with higher levels of autonomy, robots are required to perform increasingly complex manipulation tasks. Learning from demonstration is emerging as a promising paradigm for easily extending robot capabilities so that they adapt to unseen scenarios. We present a novel Gaussian-Process-based approach for learning manipulation skills from observations of a human teacher. This probabilistic representation allows generalizing over multiple demonstrations and encoding uncertainty variability along the different phases of the task. In this paper, we address how Gaussian Processes can be used to effectively learn a policy from trajectories in task space. We also present a method to efficiently adapt the policy to fulfill new requirements, and to modulate the robot behavior as a function of task uncertainty. This approach is illustrated through a real-world application using the TIAGo robot.
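
As a rough illustration of how a Gaussian Process can summarize several demonstrations and expose uncertainty, the sketch below fits standard GP regression to synthetic one-dimensional trajectories. It is not the paper's formulation: the squared-exponential kernel, its hyperparameters, the synthetic demonstrations, and the function names are all assumptions.

```python
import numpy as np

def rbf(a, b, lengthscale=0.2, variance=1.0):
    """Squared-exponential kernel between two 1-D arrays of time stamps."""
    d = a[:, None] - b[None, :]
    return variance * np.exp(-0.5 * (d / lengthscale) ** 2)

def gp_posterior(t_train, y_train, t_test, noise=1e-2):
    """Posterior mean and variance of a zero-mean GP at the test times."""
    K = rbf(t_train, t_train) + noise * np.eye(len(t_train))
    K_s = rbf(t_train, t_test)
    L = np.linalg.cholesky(K)                       # K = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.diag(rbf(t_test, t_test)) - np.sum(v**2, axis=0)
    return mean, var

# Three hypothetical noisy demonstrations that only cover t in [0, 0.6].
rng = np.random.default_rng(0)
t_demo = np.linspace(0.0, 0.6, 20)
t_train = np.tile(t_demo, 3)
y_train = np.sin(2.0 * np.pi * t_train) + 0.05 * rng.normal(size=t_train.size)

# Query one time inside the demonstrated range and one outside it.
t_test = np.array([0.3, 1.0])
mean, var = gp_posterior(t_train, y_train, t_test)
```

The predictive variance is small where the demonstrations agree and grows away from the demonstrated range, which is the kind of signal that could be used to modulate behavior as a function of uncertainty.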

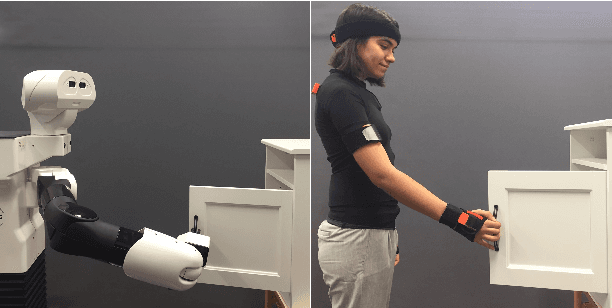

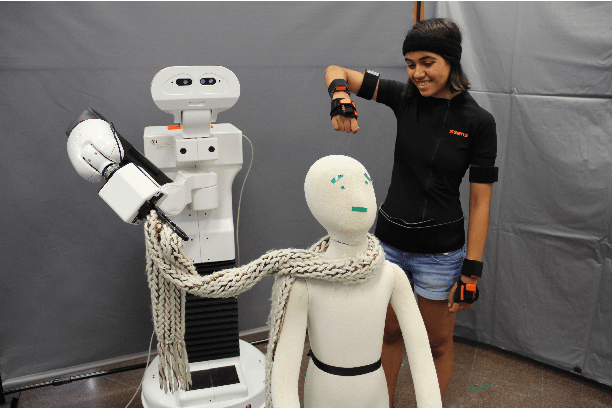

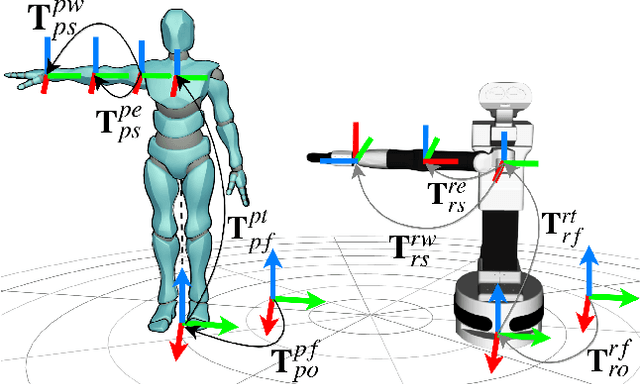

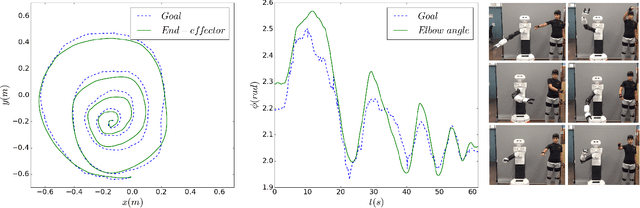

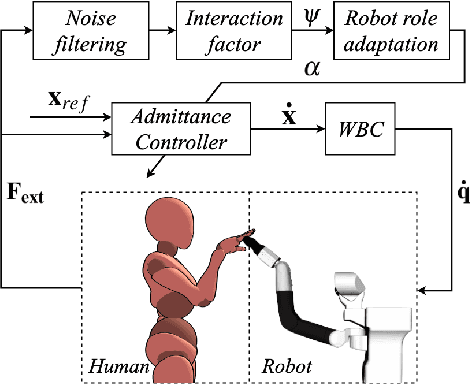

A Robot Teleoperation Framework for Human Motion Transfer

Sep 13, 2019

Abstract: Transferring human motion to a mobile robotic manipulator and ensuring safe physical human-robot interaction are crucial steps towards automating complex manipulation tasks in human-shared environments. In this work, we present a robot whole-body teleoperation framework for human motion transfer. We propose a general solution to the correspondence problem: a mapping that defines an equivalence between the robot and observed human posture. To achieve real-time teleoperation and effective redundancy resolution, we make use of the whole-body paradigm with an adequate task hierarchy, and present a differential drive control algorithm for the wheeled robot base. To ensure safe physical human-robot interaction, we propose a variable admittance controller that stably adapts the dynamics of the end-effector to switch between stiff and compliant behaviors. We validate our approach through several experiments using the TIAGo robot. Results show effective real-time imitation and dynamic behavior adaptation. This could be an easy way for a non-expert to teach a rough manipulation skill to an assistive robot.
