Yungi Kim

LP Data Pipeline: Lightweight, Purpose-driven Data Pipeline for Large Language Models

Nov 18, 2024Abstract:Creating high-quality, large-scale datasets for large language models (LLMs) often relies on resource-intensive, GPU-accelerated models for quality filtering, making the process time-consuming and costly. This dependence on GPUs limits accessibility for organizations lacking significant computational infrastructure. To address this issue, we introduce the Lightweight, Purpose-driven (LP) Data Pipeline, a framework that operates entirely on CPUs to streamline the processes of dataset extraction, filtering, and curation. Based on our four core principles, the LP Data Pipeline significantly reduces preparation time and cost while maintaining high data quality. Importantly, our pipeline enables the creation of purpose-driven datasets tailored to specific domains and languages, enhancing the applicability of LLMs in specialized contexts. We anticipate that our pipeline will lower the barriers to LLM development, enabling a wide range of organizations to access LLMs more easily.

Open Ko-LLM Leaderboard2: Bridging Foundational and Practical Evaluation for Korean LLMs

Oct 16, 2024

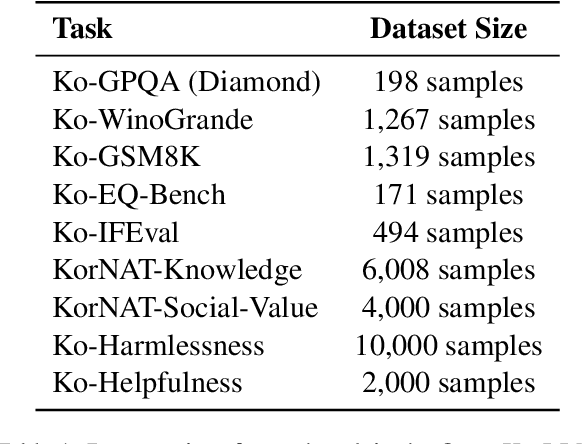

Abstract:The Open Ko-LLM Leaderboard has been instrumental in benchmarking Korean Large Language Models (LLMs), yet it has certain limitations. Notably, the disconnect between quantitative improvements on the overly academic leaderboard benchmarks and the qualitative impact of the models should be addressed. Furthermore, the benchmark suite is largely composed of translated versions of their English counterparts, which may not fully capture the intricacies of the Korean language. To address these issues, we propose Open Ko-LLM Leaderboard2, an improved version of the earlier Open Ko-LLM Leaderboard. The original benchmarks are entirely replaced with new tasks that are more closely aligned with real-world capabilities. Additionally, four new native Korean benchmarks are introduced to better reflect the distinct characteristics of the Korean language. Through these refinements, Open Ko-LLM Leaderboard2 seeks to provide a more meaningful evaluation for advancing Korean LLMs.

Representing the Under-Represented: Cultural and Core Capability Benchmarks for Developing Thai Large Language Models

Oct 07, 2024

Abstract:The rapid advancement of large language models (LLMs) has highlighted the need for robust evaluation frameworks that assess their core capabilities, such as reasoning, knowledge, and commonsense, leading to the inception of certain widely-used benchmark suites such as the H6 benchmark. However, these benchmark suites are primarily built for the English language, and there exists a lack thereof for under-represented languages, in terms of LLM development, such as Thai. On the other hand, developing LLMs for Thai should also include enhancing the cultural understanding as well as core capabilities. To address these dual challenge in Thai LLM research, we propose two key benchmarks: Thai-H6 and Thai Cultural and Linguistic Intelligence Benchmark (ThaiCLI). Through a thorough evaluation of various LLMs with multi-lingual capabilities, we provide a comprehensive analysis of the proposed benchmarks and how they contribute to Thai LLM development. Furthermore, we will make both the datasets and evaluation code publicly available to encourage further research and development for Thai LLMs.

InstaTrans: An Instruction-Aware Translation Framework for Non-English Instruction Datasets

Oct 02, 2024

Abstract:It is challenging to generate high-quality instruction datasets for non-English languages due to tail phenomena, which limit performance on less frequently observed data. To mitigate this issue, we propose translating existing high-quality English instruction datasets as a solution, emphasizing the need for complete and instruction-aware translations to maintain the inherent attributes of these datasets. We claim that fine-tuning LLMs with datasets translated in this way can improve their performance in the target language. To this end, we introduces a new translation framework tailored for instruction datasets, named InstaTrans (INSTruction-Aware TRANSlation). Through extensive experiments, we demonstrate the superiority of InstaTrans over other competitors in terms of completeness and instruction-awareness of translation, highlighting its potential to broaden the accessibility of LLMs across diverse languages at a relatively low cost. Furthermore, we have validated that fine-tuning LLMs with datasets translated by InstaTrans can effectively improve their performance in the target language.

1 Trillion Token (1TT) Platform: A Novel Framework for Efficient Data Sharing and Compensation in Large Language Models

Sep 30, 2024

Abstract:In this paper, we propose the 1 Trillion Token Platform (1TT Platform), a novel framework designed to facilitate efficient data sharing with a transparent and equitable profit-sharing mechanism. The platform fosters collaboration between data contributors, who provide otherwise non-disclosed datasets, and a data consumer, who utilizes these datasets to enhance their own services. Data contributors are compensated in monetary terms, receiving a share of the revenue generated by the services of the data consumer. The data consumer is committed to sharing a portion of the revenue with contributors, according to predefined profit-sharing arrangements. By incorporating a transparent profit-sharing paradigm to incentivize large-scale data sharing, the 1TT Platform creates a collaborative environment to drive the advancement of NLP and LLM technologies.

Rethinking KenLM: Good and Bad Model Ensembles for Efficient Text Quality Filtering in Large Web Corpora

Sep 15, 2024

Abstract:With the increasing demand for substantial amounts of high-quality data to train large language models (LLMs), efficiently filtering large web corpora has become a critical challenge. For this purpose, KenLM, a lightweight n-gram-based language model that operates on CPUs, is widely used. However, the traditional method of training KenLM utilizes only high-quality data and, consequently, does not explicitly learn the linguistic patterns of low-quality data. To address this issue, we propose an ensemble approach that leverages two contrasting KenLMs: (i) Good KenLM, trained on high-quality data; and (ii) Bad KenLM, trained on low-quality data. Experimental results demonstrate that our approach significantly reduces noisy content while preserving high-quality content compared to the traditional KenLM training method. This indicates that our method can be a practical solution with minimal computational overhead for resource-constrained environments.

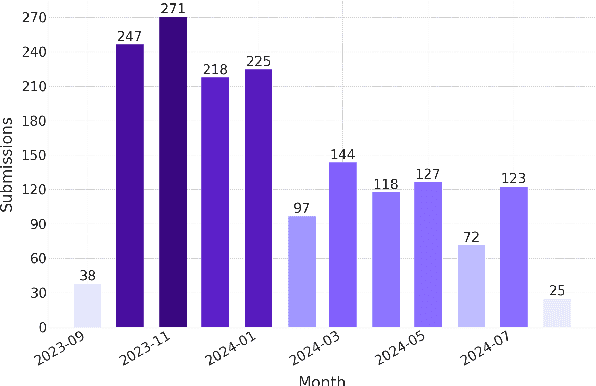

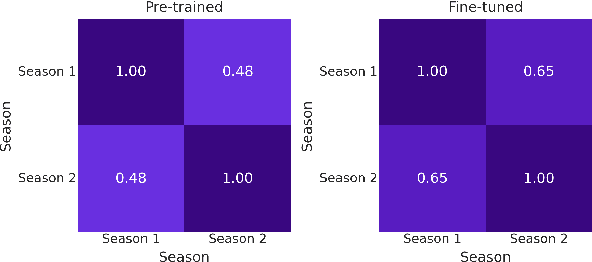

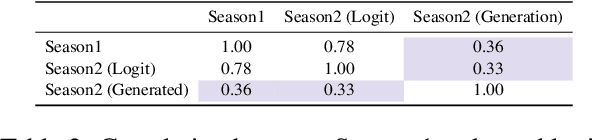

Open Ko-LLM Leaderboard: Evaluating Large Language Models in Korean with Ko-H5 Benchmark

May 31, 2024Abstract:This paper introduces the Open Ko-LLM Leaderboard and the Ko-H5 Benchmark as vital tools for evaluating Large Language Models (LLMs) in Korean. Incorporating private test sets while mirroring the English Open LLM Leaderboard, we establish a robust evaluation framework that has been well integrated in the Korean LLM community. We perform data leakage analysis that shows the benefit of private test sets along with a correlation study within the Ko-H5 benchmark and temporal analyses of the Ko-H5 score. Moreover, we present empirical support for the need to expand beyond set benchmarks. We hope the Open Ko-LLM Leaderboard sets precedent for expanding LLM evaluation to foster more linguistic diversity.

SAAS: Solving Ability Amplification Strategy for Enhanced Mathematical Reasoning in Large Language Models

Apr 08, 2024

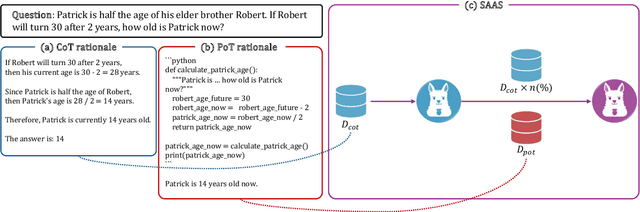

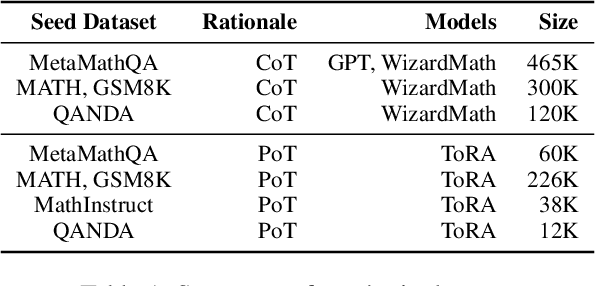

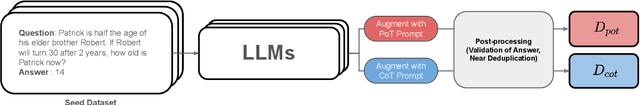

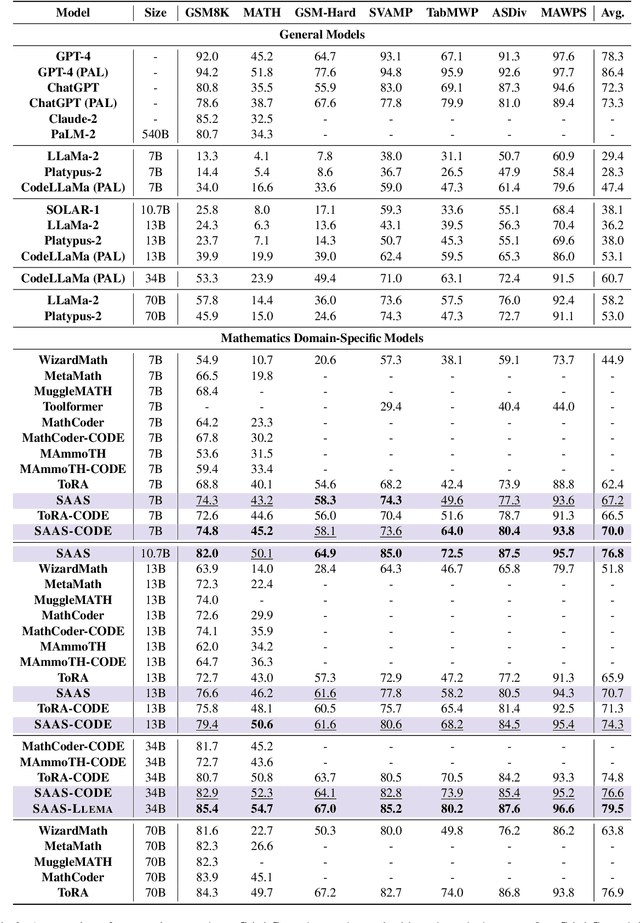

Abstract:This study presents a novel learning approach designed to enhance both mathematical reasoning and problem-solving abilities of Large Language Models (LLMs). We focus on integrating the Chain-of-Thought (CoT) and the Program-of-Thought (PoT) learning, hypothesizing that prioritizing the learning of mathematical reasoning ability is helpful for the amplification of problem-solving ability. Thus, the initial learning with CoT is essential for solving challenging mathematical problems. To this end, we propose a sequential learning approach, named SAAS (Solving Ability Amplification Strategy), which strategically transitions from CoT learning to PoT learning. Our empirical study, involving an extensive performance comparison using several benchmarks, demonstrates that our SAAS achieves state-of-the-art (SOTA) performance. The results underscore the effectiveness of our sequential learning approach, marking a significant advancement in the field of mathematical reasoning in LLMs.

Evalverse: Unified and Accessible Library for Large Language Model Evaluation

Apr 01, 2024

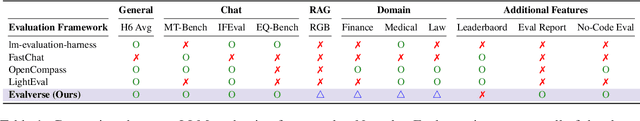

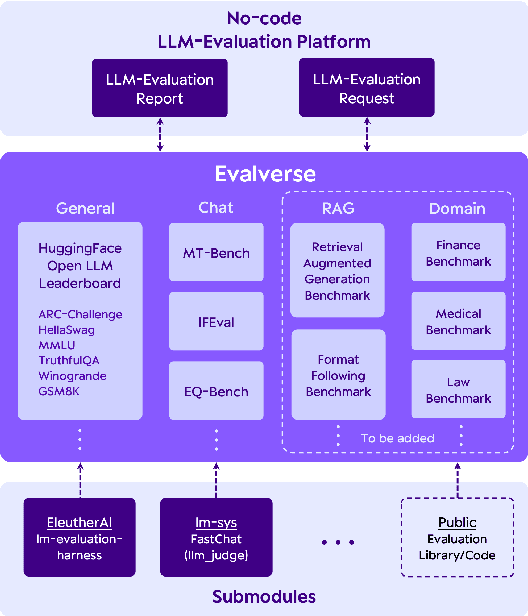

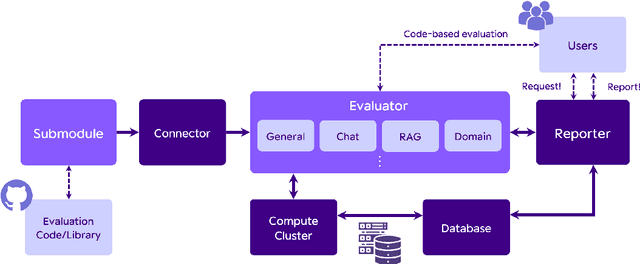

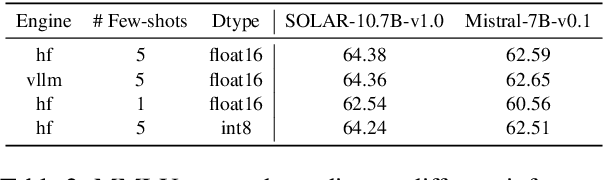

Abstract:This paper introduces Evalverse, a novel library that streamlines the evaluation of Large Language Models (LLMs) by unifying disparate evaluation tools into a single, user-friendly framework. Evalverse enables individuals with limited knowledge of artificial intelligence to easily request LLM evaluations and receive detailed reports, facilitated by an integration with communication platforms like Slack. Thus, Evalverse serves as a powerful tool for the comprehensive assessment of LLMs, offering both researchers and practitioners a centralized and easily accessible evaluation framework. Finally, we also provide a demo video for Evalverse, showcasing its capabilities and implementation in a two-minute format.

Dataverse: Open-Source ETL Pipeline for Large Language Models

Mar 28, 2024Abstract:To address the challenges associated with data processing at scale, we propose Dataverse, a unified open-source Extract-Transform-Load (ETL) pipeline for large language models (LLMs) with a user-friendly design at its core. Easy addition of custom processors with block-based interface in Dataverse allows users to readily and efficiently use Dataverse to build their own ETL pipeline. We hope that Dataverse will serve as a vital tool for LLM development and open source the entire library to welcome community contribution. Additionally, we provide a concise, two-minute video demonstration of our system, illustrating its capabilities and implementation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge