Yinyu Wu

Latency Minimization for Multi-AAV-Enabled ISCC Systems with Movable Antenna

Aug 07, 2025Abstract:This paper investigates an autonomous aerial vehicle (AAV)-enabled integrated sensing, communication, and computation system, with a particular focus on integrating movable antennas (MAs) into the system for enhancing overall system performance. Specifically, multiple MA-enabled AVVs perform sensing tasks and simultaneously transmit the generated computational tasks to the base station for processing. To minimize the maximum latency under the sensing and resource constraints, we formulate an optimization problem that jointly coordinates the position of the MAs, the computation resource allocation, and the transmit beamforming. Due to the non-convexity of the objective function and strong coupling among variables, we propose a two-layer iterative algorithm leveraging particle swarm optimization and convex optimization to address it. The simulation results demonstrate that the proposed scheme achieves significant latency improvements compared to the baseline schemes.

SafeLawBench: Towards Safe Alignment of Large Language Models

Jun 07, 2025

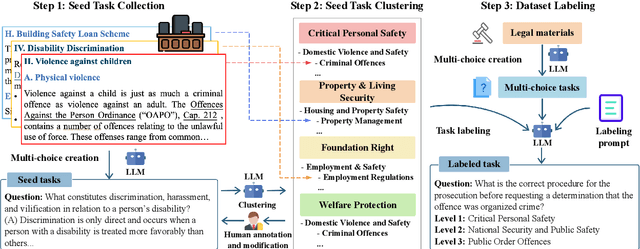

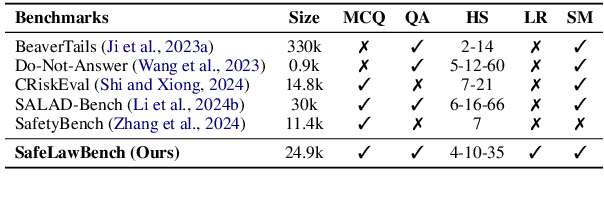

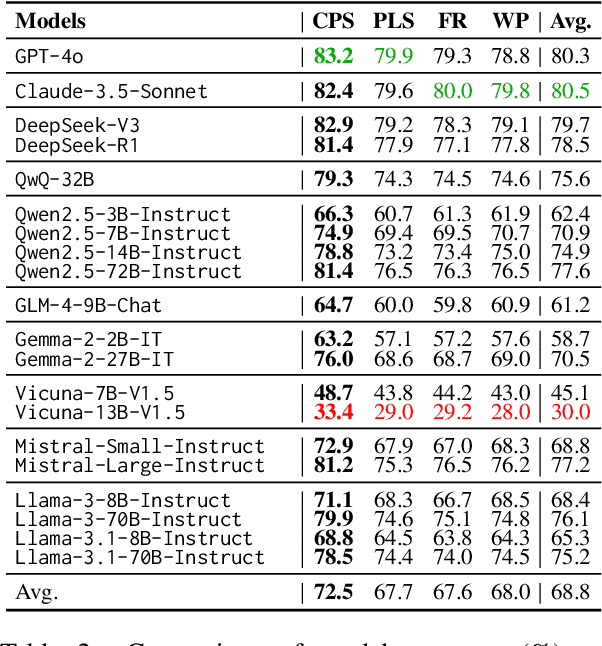

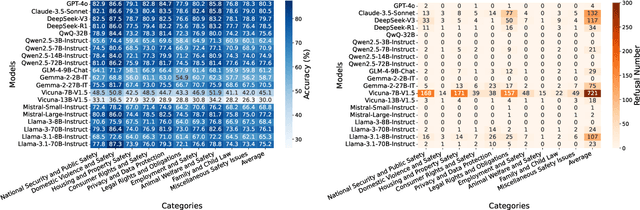

Abstract:With the growing prevalence of large language models (LLMs), the safety of LLMs has raised significant concerns. However, there is still a lack of definitive standards for evaluating their safety due to the subjective nature of current safety benchmarks. To address this gap, we conducted the first exploration of LLMs' safety evaluation from a legal perspective by proposing the SafeLawBench benchmark. SafeLawBench categorizes safety risks into three levels based on legal standards, providing a systematic and comprehensive framework for evaluation. It comprises 24,860 multi-choice questions and 1,106 open-domain question-answering (QA) tasks. Our evaluation included 2 closed-source LLMs and 18 open-source LLMs using zero-shot and few-shot prompting, highlighting the safety features of each model. We also evaluated the LLMs' safety-related reasoning stability and refusal behavior. Additionally, we found that a majority voting mechanism can enhance model performance. Notably, even leading SOTA models like Claude-3.5-Sonnet and GPT-4o have not exceeded 80.5% accuracy in multi-choice tasks on SafeLawBench, while the average accuracy of 20 LLMs remains at 68.8\%. We urge the community to prioritize research on the safety of LLMs.

Retrieval-Augmented Generation for Mobile Edge Computing via Large Language Model

Dec 30, 2024Abstract:The rapid evolution of mobile edge computing (MEC) has introduced significant challenges in optimizing resource allocation in highly dynamic wireless communication systems, in which task offloading decisions should be made in real-time. However, existing resource allocation strategies cannot well adapt to the dynamic and heterogeneous characteristics of MEC systems, since they are short of scalability, context-awareness, and interpretability. To address these issues, this paper proposes a novel retrieval-augmented generation (RAG) method to improve the performance of MEC systems. Specifically, a latency minimization problem is first proposed to jointly optimize the data offloading ratio, transmit power allocation, and computing resource allocation. Then, an LLM-enabled information-retrieval mechanism is proposed to solve the problem efficiently. Extensive experiments across multi-user, multi-task, and highly dynamic offloading scenarios show that the proposed method consistently reduces latency compared to several DL-based approaches, achieving 57% improvement under varying user computing ability, 86% with different servers, 30% under distinct transmit powers, and 42% for varying data volumes. These results show the effectiveness of LLM-driven solutions to solve the resource allocation problems in MEC systems.

Hierarchical Learning for IRS-Assisted MEC Systems with Rate-Splitting Multiple Access

Dec 05, 2024Abstract:Intelligent reflecting surface (IRS)-assisted mobile edge computing (MEC) systems have shown notable improvements in efficiency, such as reduced latency, higher data rates, and better energy efficiency. However, the resource competition among users will lead to uneven allocation, increased latency, and lower throughput. Fortunately, the rate-splitting multiple access (RSMA) technique has emerged as a promising solution for managing interference and optimizing resource allocation in MEC systems. This paper studies an IRS-assisted MEC system with RSMA, aiming to jointly optimize the passive beamforming of the IRS, the active beamforming of the base station, the task offloading allocation, the transmit power of users, the ratios of public and private information allocation, and the decoding order of the RSMA to minimize the average delay from a novel uplink transmission perspective. Since the formulated problem is non-convex and the optimization variables are highly coupled, we propose a hierarchical deep reinforcement learning-based algorithm to optimize both continuous and discrete variables of the problem. Additionally, to better extract channel features, we design a novel network architecture within the policy and evaluation networks of the proposed algorithm, combining convolutional neural networks and densely connected convolutional network for feature extraction. Simulation results indicate that the proposed algorithm not only exhibits excellent convergence performance but also outperforms various benchmarks.

UAV-Enabled Data Collection for IoT Networks via Rainbow Learning

Sep 22, 2024

Abstract:Unmanned aerial vehicles (UAVs) assisted Internet of things (IoT) systems have become an important part of future wireless communications. To achieve higher communication rate, the joint design of UAV trajectory and resource allocation is crucial. This letter considers a scenario where a multi-antenna UAV is dispatched to simultaneously collect data from multiple ground IoT nodes (GNs) within a time interval. To improve the sum data collection (SDC) volume, i.e., the total data volume transmitted by the GNs, the UAV trajectory, the UAV receive beamforming, the scheduling of the GNs, and the transmit power of the GNs are jointly optimized. Since the problem is non-convex and the optimization variables are highly coupled, it is hard to solve using traditional optimization methods. To find a near-optimal solution, a double-loop structured optimization-driven deep reinforcement learning (DRL) algorithm and a fully DRL-based algorithm are proposed to solve the problem effectively. Simulation results verify that the proposed algorithms outperform two benchmarks with significant improvement in SDC volumes.

Latency-Aware Resource Allocation for Mobile Edge Generation and Computing via Deep Reinforcement Learning

Aug 04, 2024Abstract:Recently, the integration of mobile edge computing (MEC) and generative artificial intelligence (GAI) technology has given rise to a new area called mobile edge generation and computing (MEGC), which offers mobile users heterogeneous services such as task computing and content generation. In this letter, we investigate the joint communication, computation, and the AIGC resource allocation problem in an MEGC system. A latency minimization problem is first formulated to enhance the quality of service for mobile users. Due to the strong coupling of the optimization variables, we propose a new deep reinforcement learning-based algorithm to solve it efficiently. Numerical results demonstrate that the proposed algorithm can achieve lower latency than two baseline algorithms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge