Tianshu Hao

OpenClinicalAI: enabling AI to diagnose diseases in real-world clinical settings

Sep 09, 2021

Abstract: This paper quantitatively reveals that state-of-the-art and state-of-the-practice AI systems achieve acceptable performance only under the stringent condition that all categories of subjects are known, which we call closed clinical settings, and fail to work in real-world clinical settings. Compared to the diagnosis task in the closed setting, real-world clinical settings pose severe challenges, and we must treat them differently. We build a clinical AI benchmark named Clinical AIBench to set up real-world clinical settings and facilitate research. We propose an open, dynamic machine learning framework and develop an AI system named OpenClinicalAI to diagnose diseases in real-world clinical settings. The first versions of Clinical AIBench and OpenClinicalAI target Alzheimer's disease. In the real-world clinical setting, OpenClinicalAI significantly outperforms the state-of-the-art AI system. In addition, OpenClinicalAI develops personalized diagnosis strategies to avoid unnecessary testing and collaborates seamlessly with clinicians. It is a promising candidate for embedding in current medical systems to improve medical services.

Shift-and-Balance Attention

Mar 24, 2021

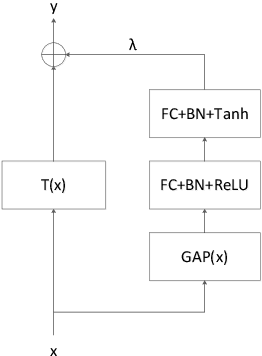

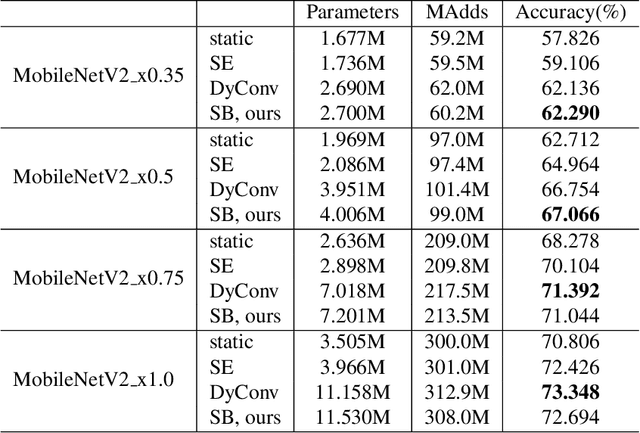

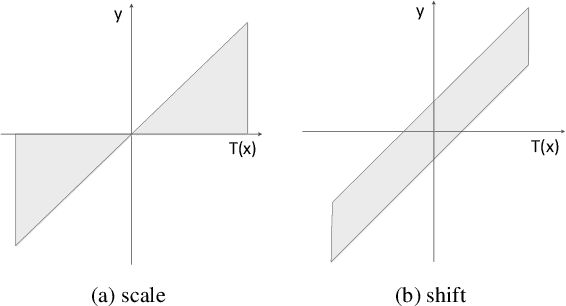

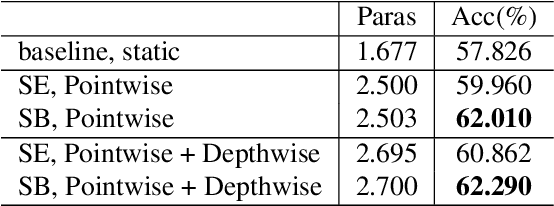

Abstract: Attention is an effective mechanism for improving the capability of deep models. Squeeze-and-Excite (SE) introduces a lightweight attention branch to enhance the network's representational power. The attention branch is gated using the Sigmoid function and multiplied by the feature map's trunk branch. This design is too sensitive to coordinate and balance the contributions of the trunk and attention branches. To control the attention branch's influence, we propose a new attention method called Shift-and-Balance (SB). Unlike Squeeze-and-Excite, the attention branch is regulated by a learned control factor that controls the balance, and is then added to the feature map's trunk branch. Experiments show that Shift-and-Balance attention significantly improves accuracy over Squeeze-and-Excite when applied in more layers, increasing the size and capacity of a network. Moreover, Shift-and-Balance attention achieves better or comparable accuracy relative to the state-of-the-art Dynamic Convolution.
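The contrast between the two attention styles described in this abstract can be illustrated with a minimal pure-Python sketch. It is not the authors' implementation: the function names, the bottleneck weights `w1` and `w2`, the per-channel `control` vector, and the use of `tanh` to bound the attention signal before scaling are all assumptions made for illustration. The abstract states only that the SE branch is Sigmoid-gated and multiplied into the trunk, while the SB branch is regulated by a learned control factor and added to the trunk.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def squeeze(feature_map):
    """Global average pooling: one scalar per channel (channels x H x W input)."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in feature_map]


def excite(pooled, w1, w2):
    """Two-layer bottleneck with ReLU; returns one raw attention value per channel."""
    hidden = [max(0.0, sum(w * p for w, p in zip(row, pooled))) for row in w1]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w2]


def se_attention(feature_map, w1, w2):
    """Squeeze-and-Excite style: Sigmoid gate multiplied into the trunk branch."""
    gates = [sigmoid(z) for z in excite(squeeze(feature_map), w1, w2)]
    return [[[v * g for v in row] for row in ch] for ch, g in zip(feature_map, gates)]


def sb_attention(feature_map, w1, w2, control):
    """Shift-and-Balance style: attention scaled by a control factor, then added.

    Assumption: tanh bounds the attention signal; `control` stands in for the
    learned per-channel control factor mentioned in the abstract.
    """
    raw = excite(squeeze(feature_map), w1, w2)
    shifts = [c * math.tanh(z) for c, z in zip(control, raw)]
    return [[[v + s for v in row] for row in ch] for ch, s in zip(feature_map, shifts)]


# Toy input: 2 channels of 2x2 values, with hypothetical hand-picked weights.
x = [[[1.0, 2.0], [3.0, 4.0]], [[0.0, 0.0], [0.0, 0.0]]]
w1 = [[1.0, 0.0]]        # 2 channels -> 1 hidden unit
w2 = [[1.0], [-1.0]]     # 1 hidden unit -> 2 channels
se_out = se_attention(x, w1, w2)
sb_out = sb_attention(x, w1, w2, control=[0.1, 0.1])
```

In this sketch a control factor of zero leaves the trunk unchanged, whereas the multiplicative SE gate always rescales it; that difference is one way to read the abstract's point about balancing the two branches.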

AIBench: An Industry Standard AI Benchmark Suite from Internet Services

Apr 30, 2020

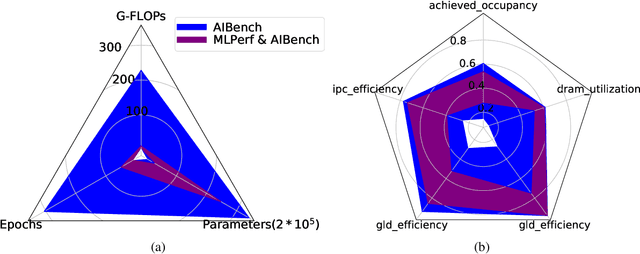

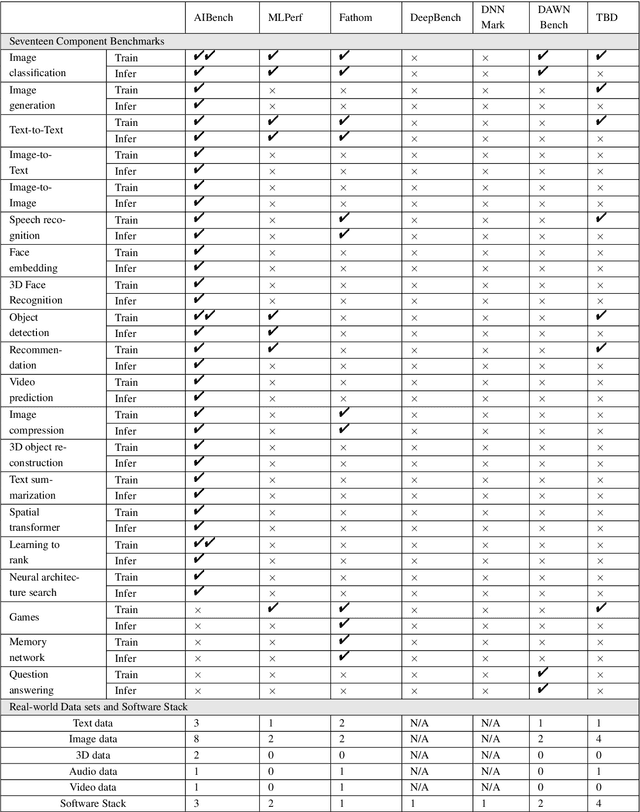

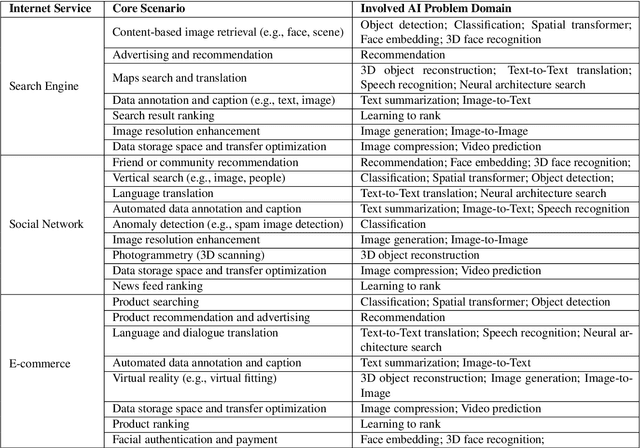

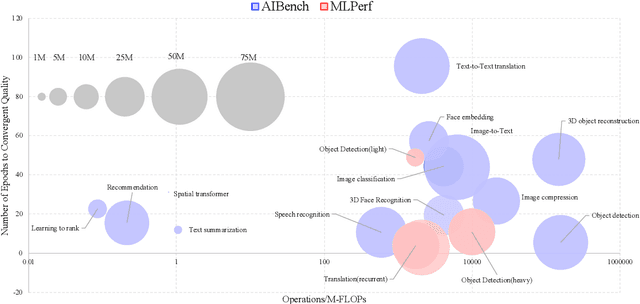

Abstract: The booming success of machine learning in different domains boosts industry-scale deployments of innovative AI algorithms, systems, and architectures, and thus the importance of benchmarking grows. However, the confidential nature of the workloads, the paramount importance of the representativeness and diversity of benchmarks, and the prohibitive cost of training a state-of-the-art model mutually aggravate the AI benchmarking challenges. In this paper, we present a balanced AI benchmarking methodology for meeting the subtly different requirements of different stages in developing a new system or architecture and in ranking or purchasing commercial off-the-shelf ones. Performing an exhaustive survey of the most important AI domain, Internet services, with seventeen industry partners, we identify and include seventeen representative AI tasks to guarantee the representativeness and diversity of the benchmarks. Meanwhile, to reduce the benchmarking cost, we select a minimum benchmark subset of three tasks according to the following criteria: diversity of model complexity, computational cost, and convergence rate; repeatability; and whether widely accepted metrics exist. We contribute by far the most comprehensive AI benchmark suite, AIBench. The evaluations show that AIBench outperforms MLPerf in terms of the diversity and representativeness of model complexity, computational cost, convergence rate, computation and memory access patterns, and hotspot functions. Compared with the full AIBench benchmarks, the subset reduces the benchmarking cost by 41% while maintaining the primary workload characteristics. The specifications, source code, and performance numbers are publicly available from the web site http://www.benchcouncil.org/AIBench/index.html.

AIBench: An Agile Domain-specific Benchmarking Methodology and an AI Benchmark Suite

Feb 17, 2020

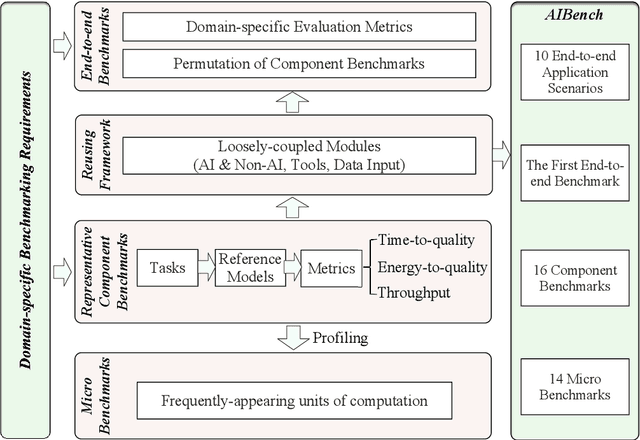

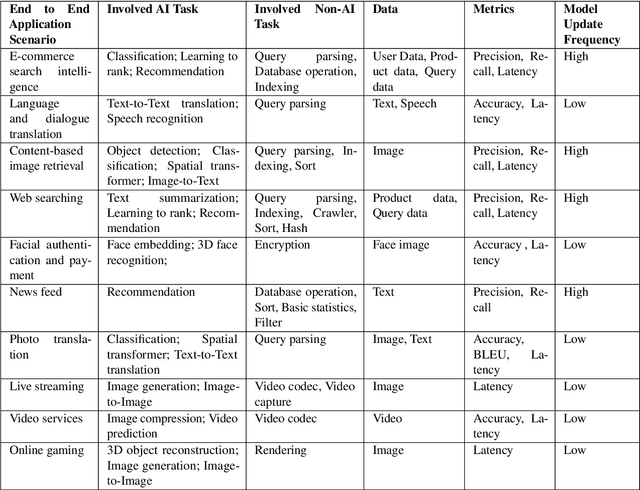

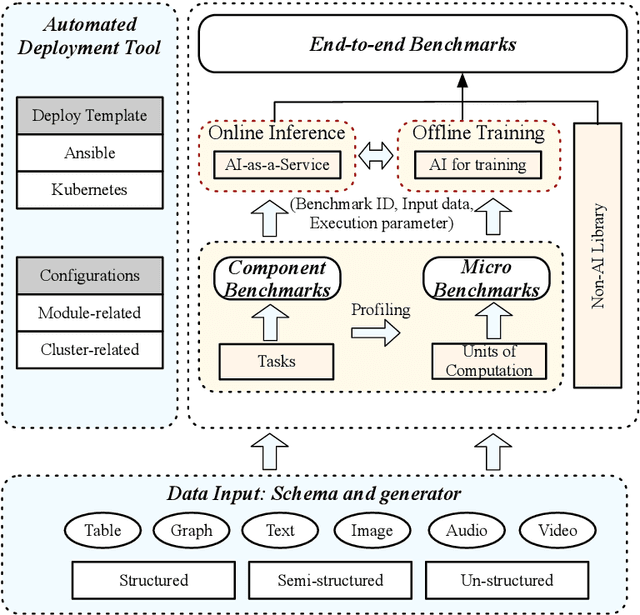

Abstract: Domain-specific software and hardware co-design is encouraging, as it is much easier to achieve efficiency for fewer tasks. Agile domain-specific benchmarking speeds up the process, as it provides not only relevant design inputs but also relevant metrics and tools. Unfortunately, modern workloads like big data, AI, and Internet services dwarf traditional ones in terms of code size, deployment scale, and execution path, and hence raise serious benchmarking challenges. This paper proposes an agile domain-specific benchmarking methodology. Together with seventeen industry partners, we identify ten important end-to-end application scenarios, from which sixteen representative AI tasks are distilled as the AI component benchmarks. We propose the permutations of essential AI and non-AI component benchmarks as end-to-end benchmarks. An end-to-end benchmark is a distillation of the essential attributes of an industry-scale application. We design and implement a highly extensible, configurable, and flexible benchmark framework, on the basis of which we propose a guideline for building end-to-end benchmarks and present the first end-to-end Internet service AI benchmark. The preliminary evaluation shows the value of our benchmark suite, AIBench, against MLPerf and TailBench for hardware and software designers, micro-architectural researchers, and code developers. The specifications, source code, testbed, and results are publicly available from the web site http://www.benchcouncil.org/AIBench/index.html.

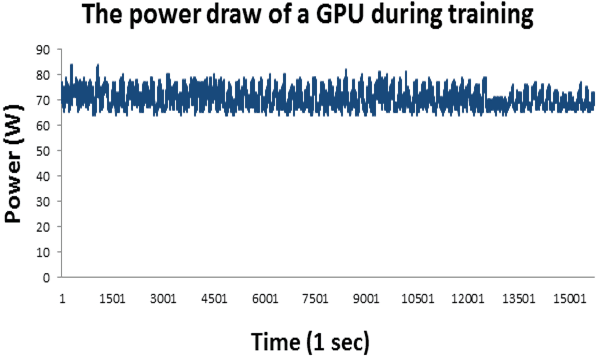

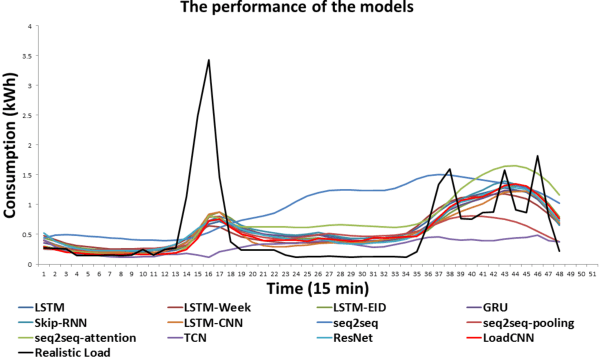

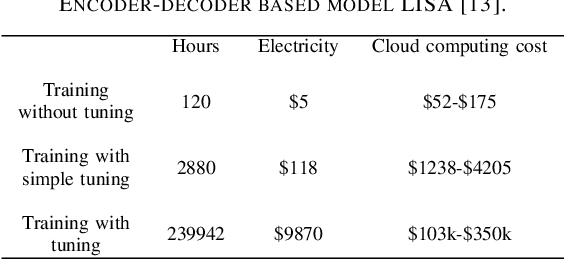

LoadCNN: A Efficient Green Deep Learning Model for Day-ahead Individual Resident Load Forecasting

Aug 01, 2019

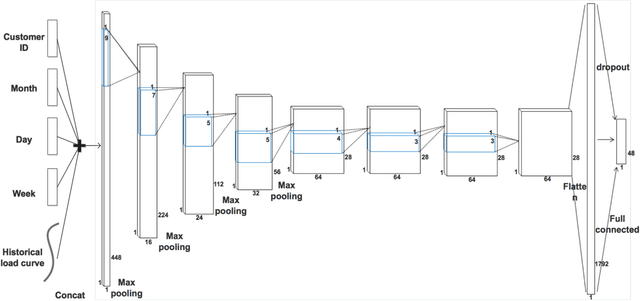

Abstract: Accurate day-ahead load forecasting for individual residents is very important to various smart grid applications. As a powerful machine learning technology, deep learning has shown great advantages in the load forecasting task. However, deep learning is computationally hungry: it requires a large amount of training time, consumes considerable energy, and emits a large amount of CO2. This aggravates the energy crisis and incurs a substantial cost to the environment. As a result, deep learning methods are difficult to popularize and apply in a real smart grid environment. In this paper, to reduce training time, energy consumption, and CO2 emissions, we propose an efficient green model based on a convolutional neural network, namely LoadCNN, for next-day load forecasting of individual residents. The training time, energy consumption, and CO2 emissions of LoadCNN are only approximately 1/70 of the corresponding indicators of other state-of-the-art models. Meanwhile, it achieves state-of-the-art performance in terms of prediction accuracy. LoadCNN is the first load forecasting model that simultaneously considers prediction accuracy, training time, energy efficiency, and environmental costs. It is an efficient green model that can be deployed quickly, cost-effectively, and in an environmentally friendly way in a realistic smart grid environment.

A new direction to promote the implementation of artificial intelligence in natural clinical settings

May 08, 2019

Abstract: Artificial intelligence (AI) researchers claim that they have made great 'achievements' in clinical realms. However, clinicians point out that the so-called 'achievements' cannot be implemented in natural clinical settings. The root cause of this huge gap is that many essential features of natural clinical tasks are overlooked by AI system developers without a medical background. In this paper, we propose that a clinical benchmark suite is a novel and promising direction for capturing the essential features of real-world clinical tasks, and hence qualifies for guiding the development of AI systems and promoting the implementation of AI in real-world clinical practice.
