Tianhao Shi

Latent Inter-User Difference Modeling for LLM Personalization

Jul 28, 2025Abstract:Large language models (LLMs) are increasingly integrated into users' daily lives, leading to a growing demand for personalized outputs. Previous work focuses on leveraging a user's own history, overlooking inter-user differences that are crucial for effective personalization. While recent work has attempted to model such differences, the reliance on language-based prompts often hampers the effective extraction of meaningful distinctions. To address these issues, we propose Difference-aware Embedding-based Personalization (DEP), a framework that models inter-user differences in the latent space instead of relying on language prompts. DEP constructs soft prompts by contrasting a user's embedding with those of peers who engaged with similar content, highlighting relative behavioral signals. A sparse autoencoder then filters and compresses both user-specific and difference-aware embeddings, preserving only task-relevant features before injecting them into a frozen LLM. Experiments on personalized review generation show that DEP consistently outperforms baseline methods across multiple metrics. Our code is available at https://github.com/SnowCharmQ/DEP.

Leveraging Memory Retrieval to Enhance LLM-based Generative Recommendation

Dec 23, 2024

Abstract:Leveraging Large Language Models (LLMs) to harness user-item interaction histories for item generation has emerged as a promising paradigm in generative recommendation. However, the limited context window of LLMs often restricts them to focusing on recent user interactions only, leading to the neglect of long-term interests involved in the longer histories. To address this challenge, we propose a novel Automatic Memory-Retrieval framework (AutoMR), which is capable of storing long-term interests in the memory and extracting relevant information from it for next-item generation within LLMs. Extensive experimental results on two real-world datasets demonstrate the effectiveness of our proposed AutoMR framework in utilizing long-term interests for generative recommendation.

Fair Recommendations with Limited Sensitive Attributes: A Distributionally Robust Optimization Approach

May 02, 2024

Abstract:As recommender systems are indispensable in various domains such as job searching and e-commerce, providing equitable recommendations to users with different sensitive attributes becomes an imperative requirement. Prior approaches for enhancing fairness in recommender systems presume the availability of all sensitive attributes, which can be difficult to obtain due to privacy concerns or inadequate means of capturing these attributes. In practice, the efficacy of these approaches is limited, pushing us to investigate ways of promoting fairness with limited sensitive attribute information. Toward this goal, it is important to reconstruct missing sensitive attributes. Nevertheless, reconstruction errors are inevitable due to the complexity of real-world sensitive attribute reconstruction problems and legal regulations. Thus, we pursue fair learning methods that are robust to reconstruction errors. To this end, we propose Distributionally Robust Fair Optimization (DRFO), which minimizes the worst-case unfairness over all potential probability distributions of missing sensitive attributes instead of the reconstructed one to account for the impact of the reconstruction errors. We provide theoretical and empirical evidence to demonstrate that our method can effectively ensure fairness in recommender systems when only limited sensitive attributes are accessible.

SphereHead: Stable 3D Full-head Synthesis with Spherical Tri-plane Representation

Apr 08, 2024

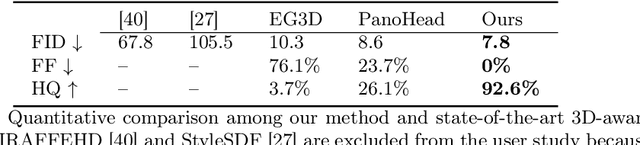

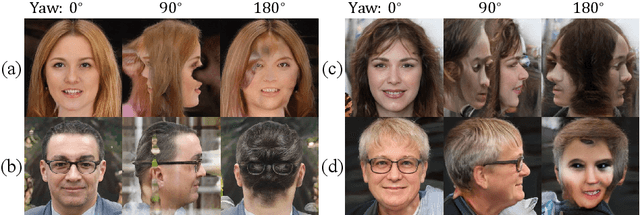

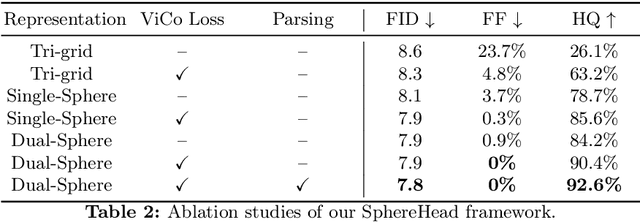

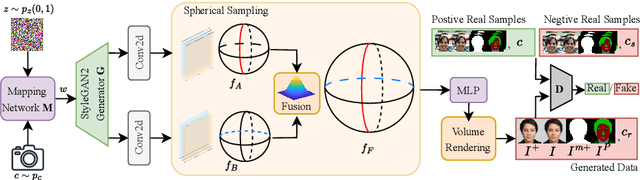

Abstract:While recent advances in 3D-aware Generative Adversarial Networks (GANs) have aided the development of near-frontal view human face synthesis, the challenge of comprehensively synthesizing a full 3D head viewable from all angles still persists. Although PanoHead proves the possibilities of using a large-scale dataset with images of both frontal and back views for full-head synthesis, it often causes artifacts for back views. Based on our in-depth analysis, we found the reasons are mainly twofold. First, from network architecture perspective, we found each plane in the utilized tri-plane/tri-grid representation space tends to confuse the features from both sides, causing "mirroring" artifacts (e.g., the glasses appear in the back). Second, from data supervision aspect, we found that existing discriminator training in 3D GANs mainly focuses on the quality of the rendered image itself, and does not care much about its plausibility with the perspective from which it was rendered. This makes it possible to generate "face" in non-frontal views, due to its easiness to fool the discriminator. In response, we propose SphereHead, a novel tri-plane representation in the spherical coordinate system that fits the human head's geometric characteristics and efficiently mitigates many of the generated artifacts. We further introduce a view-image consistency loss for the discriminator to emphasize the correspondence of the camera parameters and the images. The combination of these efforts results in visually superior outcomes with significantly fewer artifacts. Our code and dataset are publicly available at https://lhyfst.github.io/spherehead.

Preliminary Study on Incremental Learning for Large Language Model-based Recommender Systems

Dec 25, 2023Abstract:Adapting Large Language Models for recommendation (LLM4Rec)has garnered substantial attention and demonstrated promising results. However, the challenges of practically deploying LLM4Rec are largely unexplored, with the need for incremental adaptation to evolving user preferences being a critical concern. Nevertheless, the suitability of traditional incremental learning within LLM4Rec remains ambiguous, given the unique characteristics of LLMs. In this study, we empirically evaluate the commonly used incremental learning strategies (full retraining and fine-tuning) for LLM4Rec. Surprisingly, neither approach leads to evident improvements in LLM4Rec's performance. Rather than directly dismissing the role of incremental learning, we ascribe this lack of anticipated performance improvement to the mismatch between the LLM4Recarchitecture and incremental learning: LLM4Rec employs a single adaptation module for learning recommendation, hampering its ability to simultaneously capture long-term and short-term user preferences in the incremental learning context. To validate this speculation, we develop a Long- and Short-term Adaptation-aware Tuning (LSAT) framework for LLM4Rec incremental learning. Instead of relying on a single adaptation module, LSAT utilizes two adaptation modules to separately learn long-term and short-term user preferences. Empirical results demonstrate that LSAT could enhance performance, validating our speculation.

Reformulating CTR Prediction: Learning Invariant Feature Interactions for Recommendation

Apr 26, 2023Abstract:Click-Through Rate (CTR) prediction plays a core role in recommender systems, serving as the final-stage filter to rank items for a user. The key to addressing the CTR task is learning feature interactions that are useful for prediction, which is typically achieved by fitting historical click data with the Empirical Risk Minimization (ERM) paradigm. Representative methods include Factorization Machines and Deep Interest Network, which have achieved wide success in industrial applications. However, such a manner inevitably learns unstable feature interactions, i.e., the ones that exhibit strong correlations in historical data but generalize poorly for future serving. In this work, we reformulate the CTR task -- instead of pursuing ERM on historical data, we split the historical data chronologically into several periods (a.k.a, environments), aiming to learn feature interactions that are stable across periods. Such feature interactions are supposed to generalize better to predict future behavior data. Nevertheless, a technical challenge is that existing invariant learning solutions like Invariant Risk Minimization are not applicable, since the click data entangles both environment-invariant and environment-specific correlations. To address this dilemma, we propose Disentangled Invariant Learning (DIL) which disentangles feature embeddings to capture the two types of correlations separately. To improve the modeling efficiency, we further design LightDIL which performs the disentanglement at the higher level of the feature field. Extensive experiments demonstrate the effectiveness of DIL in learning stable feature interactions for CTR. We release the code at https://github.com/zyang1580/DIL.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge