Tajamul Ashraf

MATRIX: Multimodal Agent Tuning for Robust Tool-Use Reasoning

Oct 09, 2025Abstract:Vision language models (VLMs) are increasingly deployed as controllers with access to external tools for complex reasoning and decision-making, yet their effectiveness remains limited by the scarcity of high-quality multimodal trajectories and the cost of manual annotation. We address this challenge with a vision-centric agent tuning framework that automatically synthesizes multimodal trajectories, generates step-wise preference pairs, and trains a VLM controller for robust tool-use reasoning. Our pipeline first constructs M-TRACE, a large-scale dataset of 28.5K multimodal tasks with 177K verified trajectories, enabling imitation-based trajectory tuning. Building on this, we develop MATRIX Agent, a controller finetuned on M-TRACE for step-wise tool reasoning. To achieve finer alignment, we further introduce Pref-X, a set of 11K automatically generated preference pairs, and optimize MATRIX on it via step-wise preference learning. Across three benchmarks, Agent-X, GTA, and GAIA, MATRIX consistently surpasses both open- and closed-source VLMs, demonstrating scalable and effective multimodal tool use. Our data and code is avaliable at https://github.com/mbzuai-oryx/MATRIX.

MIRA: A Novel Framework for Fusing Modalities in Medical RAG

Jul 10, 2025Abstract:Multimodal Large Language Models (MLLMs) have significantly advanced AI-assisted medical diagnosis, but they often generate factually inconsistent responses that deviate from established medical knowledge. Retrieval-Augmented Generation (RAG) enhances factual accuracy by integrating external sources, but it presents two key challenges. First, insufficient retrieval can miss critical information, whereas excessive retrieval can introduce irrelevant or misleading content, disrupting model output. Second, even when the model initially provides correct answers, over-reliance on retrieved data can lead to factual errors. To address these issues, we introduce the Multimodal Intelligent Retrieval and Augmentation (MIRA) framework, designed to optimize factual accuracy in MLLM. MIRA consists of two key components: (1) a calibrated Rethinking and Rearrangement module that dynamically adjusts the number of retrieved contexts to manage factual risk, and (2) A medical RAG framework integrating image embeddings and a medical knowledge base with a query-rewrite module for efficient multimodal reasoning. This enables the model to effectively integrate both its inherent knowledge and external references. Our evaluation of publicly available medical VQA and report generation benchmarks demonstrates that MIRA substantially enhances factual accuracy and overall performance, achieving new state-of-the-art results. Code is released at https://github.com/mbzuai-oryx/MIRA.

TITAN: Query-Token based Domain Adaptive Adversarial Learning

Jun 26, 2025Abstract:We focus on the source-free domain adaptive object detection (SF-DAOD) problem when source data is unavailable during adaptation and the model must adapt to an unlabeled target domain. The majority of approaches for the problem employ a self-supervised approach using a student-teacher (ST) framework where pseudo-labels are generated via a source-pretrained model for further fine-tuning. We observe that the performance of a student model often degrades drastically, due to the collapse of the teacher model, primarily caused by high noise in pseudo-labels, resulting from domain bias, discrepancies, and a significant domain shift across domains. To obtain reliable pseudo-labels, we propose a Target-based Iterative Query-Token Adversarial Network (TITAN), which separates the target images into two subsets: those similar to the source (easy) and those dissimilar (hard). We propose a strategy to estimate variance to partition the target domain. This approach leverages the insight that higher detection variances correspond to higher recall and greater similarity to the source domain. Also, we incorporate query-token-based adversarial modules into a student-teacher baseline framework to reduce the domain gaps between two feature representations. Experiments conducted on four natural imaging datasets and two challenging medical datasets have substantiated the superior performance of TITAN compared to existing state-of-the-art (SOTA) methodologies. We report an mAP improvement of +22.7, +22.2, +21.1, and +3.7 percent over the current SOTA on C2F, C2B, S2C, and K2C benchmarks, respectively.

Agent-X: Evaluating Deep Multimodal Reasoning in Vision-Centric Agentic Tasks

May 30, 2025Abstract:Deep reasoning is fundamental for solving complex tasks, especially in vision-centric scenarios that demand sequential, multimodal understanding. However, existing benchmarks typically evaluate agents with fully synthetic, single-turn queries, limited visual modalities, and lack a framework to assess reasoning quality over multiple steps as required in real-world settings. To address this, we introduce Agent-X, a large-scale benchmark for evaluating vision-centric agents multi-step and deep reasoning capabilities in real-world, multimodal settings. Agent- X features 828 agentic tasks with authentic visual contexts, including images, multi-image comparisons, videos, and instructional text. These tasks span six major agentic environments: general visual reasoning, web browsing, security and surveillance, autonomous driving, sports, and math reasoning. Our benchmark requires agents to integrate tool use with explicit, stepwise decision-making in these diverse settings. In addition, we propose a fine-grained, step-level evaluation framework that assesses the correctness and logical coherence of each reasoning step and the effectiveness of tool usage throughout the task. Our results reveal that even the best-performing models, including GPT, Gemini, and Qwen families, struggle to solve multi-step vision tasks, achieving less than 50% full-chain success. These findings highlight key bottlenecks in current LMM reasoning and tool-use capabilities and identify future research directions in vision-centric agentic reasoning models. Our data and code are publicly available at https://github.com/mbzuai-oryx/Agent-X

ATR-Bench: A Federated Learning Benchmark for Adaptation, Trust, and Reasoning

May 22, 2025Abstract:Federated Learning (FL) has emerged as a promising paradigm for collaborative model training while preserving data privacy across decentralized participants. As FL adoption grows, numerous techniques have been proposed to tackle its practical challenges. However, the lack of standardized evaluation across key dimensions hampers systematic progress and fair comparison of FL methods. In this work, we introduce ATR-Bench, a unified framework for analyzing federated learning through three foundational dimensions: Adaptation, Trust, and Reasoning. We provide an in-depth examination of the conceptual foundations, task formulations, and open research challenges associated with each theme. We have extensively benchmarked representative methods and datasets for adaptation to heterogeneous clients and trustworthiness in adversarial or unreliable environments. Due to the lack of reliable metrics and models for reasoning in FL, we only provide literature-driven insights for this dimension. ATR-Bench lays the groundwork for a systematic and holistic evaluation of federated learning with real-world relevance. We will make our complete codebase publicly accessible and a curated repository that continuously tracks new developments and research in the FL literature.

Context Aware Grounded Teacher for Source Free Object Detection

Apr 21, 2025

Abstract:We focus on the Source Free Object Detection (SFOD) problem, when source data is unavailable during adaptation, and the model must adapt to the unlabeled target domain. In medical imaging, several approaches have leveraged a semi-supervised student-teacher architecture to bridge domain discrepancy. Context imbalance in labeled training data and significant domain shifts between domains can lead to biased teacher models that produce inaccurate pseudolabels, degrading the student model's performance and causing a mode collapse. Class imbalance, particularly when one class significantly outnumbers another, leads to contextual bias. To tackle the problem of context bias and the significant performance drop of the student model in the SFOD setting, we introduce Grounded Teacher (GT) as a standard framework. In this study, we model contextual relationships using a dedicated relational context module and leverage it to mitigate inherent biases in the model. This approach enables us to apply augmentations to closely related classes, across and within domains, enhancing the performance of underrepresented classes while keeping the effect on dominant classes minimal. We further improve the quality of predictions by implementing an expert foundational branch to supervise the student model. We validate the effectiveness of our approach in mitigating context bias under the SFOD setting through experiments on three medical datasets supported by comprehensive ablation studies. All relevant resources, including preprocessed data, trained model weights, and code, are publicly available at this https://github.com/Tajamul21/Grounded_Teacher.

LLM Post-Training: A Deep Dive into Reasoning Large Language Models

Feb 28, 2025Abstract:Large Language Models (LLMs) have transformed the natural language processing landscape and brought to life diverse applications. Pretraining on vast web-scale data has laid the foundation for these models, yet the research community is now increasingly shifting focus toward post-training techniques to achieve further breakthroughs. While pretraining provides a broad linguistic foundation, post-training methods enable LLMs to refine their knowledge, improve reasoning, enhance factual accuracy, and align more effectively with user intents and ethical considerations. Fine-tuning, reinforcement learning, and test-time scaling have emerged as critical strategies for optimizing LLMs performance, ensuring robustness, and improving adaptability across various real-world tasks. This survey provides a systematic exploration of post-training methodologies, analyzing their role in refining LLMs beyond pretraining, addressing key challenges such as catastrophic forgetting, reward hacking, and inference-time trade-offs. We highlight emerging directions in model alignment, scalable adaptation, and inference-time reasoning, and outline future research directions. We also provide a public repository to continually track developments in this fast-evolving field: https://github.com/mbzuai-oryx/Awesome-LLM-Post-training.

Phase-Informed Tool Segmentation for Manual Small-Incision Cataract Surgery

Nov 25, 2024

Abstract:Cataract surgery is the most common surgical procedure globally, with a disproportionately higher burden in developing countries. While automated surgical video analysis has been explored in general surgery, its application to ophthalmic procedures remains limited. Existing works primarily focus on Phaco cataract surgery, an expensive technique not accessible in regions where cataract treatment is most needed. In contrast, Manual Small-Incision Cataract Surgery (MSICS) is the preferred low-cost, faster alternative in high-volume settings and for challenging cases. However, no dataset exists for MSICS. To address this gap, we introduce Cataract-MSICS, the first comprehensive dataset containing 53 surgical videos annotated for 18 surgical phases and 3,527 frames with 13 surgical tools at the pixel level. We benchmark this dataset on state-of-the-art models and present ToolSeg, a novel framework that enhances tool segmentation by introducing a phase-conditional decoder and a simple yet effective semi-supervised setup leveraging pseudo-labels from foundation models. Our approach significantly improves segmentation performance, achieving a $23.77\%$ to $38.10\%$ increase in mean Dice scores, with a notable boost for tools that are less prevalent and small. Furthermore, we demonstrate that ToolSeg generalizes to other surgical settings, showcasing its effectiveness on the CaDIS dataset.

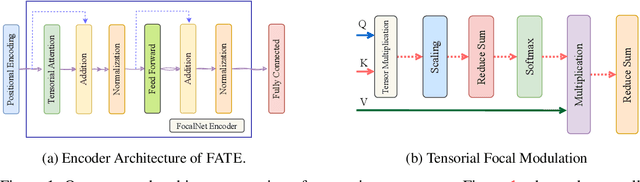

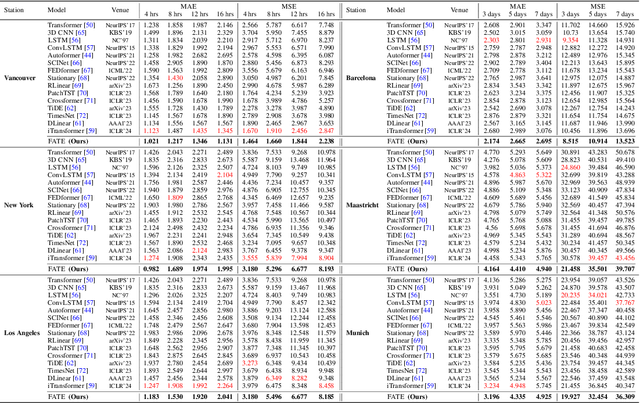

FATE: Focal-modulated Attention Encoder for Temperature Prediction

Aug 21, 2024

Abstract:One of the major challenges of the twenty-first century is climate change, evidenced by rising sea levels, melting glaciers, and increased storm frequency. Accurate temperature forecasting is vital for understanding and mitigating these impacts. Traditional data-driven models often use recurrent neural networks (RNNs) but face limitations in parallelization, especially with longer sequences. To address this, we introduce a novel approach based on the FocalNet Transformer architecture. Our Focal modulation Attention Encoder (FATE) framework operates in a multi-tensor format, utilizing tensorized modulation to capture spatial and temporal nuances in meteorological data. Comparative evaluations against existing transformer encoders, 3D CNNs, LSTM, and ConvLSTM models show that FATE excels at identifying complex patterns in temperature data. Additionally, we present a new labeled dataset, the Climate Change Parameter dataset (CCPD), containing 40 years of data from Jammu and Kashmir on seven climate-related parameters. Experiments with real-world temperature datasets from the USA, Canada, and Europe show accuracy improvements of 12\%, 23\%, and 28\%, respectively, over current state-of-the-art models. Our CCPD dataset also achieved a 24\% improvement in accuracy. To support reproducible research, we have released the source code and pre-trained FATE model at \href{https://github.com/Tajamul21/FATE}{https://github.com/Tajamul21/FATE}.

HF-Fed: Hierarchical based customized Federated Learning Framework for X-Ray Imaging

Jul 25, 2024Abstract:In clinical applications, X-ray technology is vital for noninvasive examinations like mammography, providing essential anatomical information. However, the radiation risk associated with X-ray procedures raises concerns. X-ray reconstruction is crucial in medical imaging for detailed visual representations of internal structures, aiding diagnosis and treatment without invasive procedures. Recent advancements in deep learning (DL) have shown promise in X-ray reconstruction, but conventional DL methods often require centralized aggregation of large datasets, leading to domain shifts and privacy issues. To address these challenges, we introduce the Hierarchical Framework-based Federated Learning method (HF-Fed) for customized X-ray imaging. HF-Fed tackles X-ray imaging optimization by decomposing the problem into local data adaptation and holistic X-ray imaging. It employs a hospital-specific hierarchical framework and a shared common imaging network called Network of Networks (NoN) to acquire stable features from diverse data distributions. The hierarchical hypernetwork extracts domain-specific hyperparameters, conditioning the NoN for customized X-ray reconstruction. Experimental results demonstrate HF-Fed's competitive performance, offering a promising solution for enhancing X-ray imaging without data sharing. This study significantly contributes to the literature on federated learning in healthcare, providing valuable insights for policymakers and healthcare providers. The source code and pre-trained HF-Fed model are available at \url{https://tisharepo.github.io/Webpage/}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge