Richa Gabha

D-MASTER: Mask Annealed Transformer for Unsupervised Domain Adaptation in Breast Cancer Detection from Mammograms

Jul 09, 2024

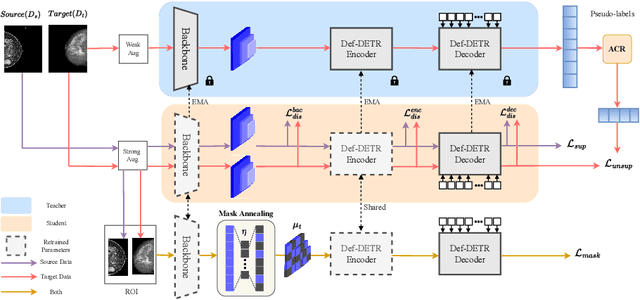

Abstract: We focus on the problem of Unsupervised Domain Adaptation (UDA) for breast cancer detection from mammograms (BCDM). Recent advancements have shown that masked image modeling serves as a robust pretext task for UDA. However, when applied to cross-domain BCDM, these techniques struggle with breast abnormalities such as masses, asymmetries, and micro-calcifications, in part because the regions of interest are typically much smaller than those in natural images. This often results in more false positives per image (FPI) and significant noise in the pseudo-labels typically used to bootstrap such techniques. Recognizing these challenges, we introduce a transformer-based Domain-invariant Mask Annealed Student Teacher autoencoder (D-MASTER) framework. D-MASTER adaptively masks and reconstructs multi-scale feature maps, enhancing the model's ability to capture reliable target domain features. D-MASTER also includes adaptive confidence refinement to filter pseudo-labels, ensuring only high-quality detections are considered. We also provide a bounding box annotated subset of 1000 mammograms from the RSNA Breast Screening Dataset (referred to as RSNA-BSD1K) to support further research in BCDM. We evaluate D-MASTER on multiple BCDM datasets acquired from diverse domains. Experimental results show a significant improvement of 9% and 13% in sensitivity at 0.3 FPI over state-of-the-art UDA techniques on the publicly available benchmark INBreast and DDSM datasets, respectively. We also report an improvement of 11% and 17% on the in-house and RSNA-BSD1K datasets, respectively. The source code, pre-trained D-MASTER model, and RSNA-BSD1K dataset annotations are available at https://dmaster-iitd.github.io/webpage.
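The headline metric, sensitivity at 0.3 FPI, can be illustrated with a minimal sketch. This is not the authors' evaluation code: the function name `sensitivity_at_fpi`, its inputs, and the simplification that every true-positive box matches a distinct lesion are assumptions made for illustration, and tied scores are not handled specially.

```python
from typing import List, Tuple


def sensitivity_at_fpi(
    detections: List[Tuple[float, bool]],
    num_lesions: int,
    num_images: int,
    max_fpi: float = 0.3,
) -> float:
    """Fraction of lesions detected while staying within a false-positive budget.

    detections: (confidence score, is_true_positive) for every predicted box
                across the whole evaluation set (hypothetical input format).
    Assumes each true-positive box corresponds to a distinct lesion.
    """
    if num_lesions <= 0 or num_images <= 0:
        raise ValueError("num_lesions and num_images must be positive")

    # Total number of false positives allowed over the dataset.
    max_false_positives = max_fpi * num_images

    # Lower the score threshold one detection at a time, highest score first.
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)

    true_positives = 0
    false_positives = 0
    best_sensitivity = 0.0
    for _score, is_true_positive in ranked:
        if is_true_positive:
            true_positives += 1
        else:
            false_positives += 1
        if false_positives > max_false_positives:
            break  # budget exceeded; keep the last sensitivity within budget
        best_sensitivity = true_positives / num_lesions
    return best_sensitivity
```

For example, with 10 images and 5 lesions the budget is 3 false positives in total, so the function reports the highest sensitivity reached before a fourth false positive is admitted.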
