Subhash Nerella

MANDARIN: Mixture-of-Experts Framework for Dynamic Delirium and Coma Prediction in ICU Patients: Development and Validation of an Acute Brain Dysfunction Prediction Model

Mar 08, 2025Abstract:Acute brain dysfunction (ABD) is a common, severe ICU complication, presenting as delirium or coma and leading to prolonged stays, increased mortality, and cognitive decline. Traditional screening tools like the Glasgow Coma Scale (GCS), Confusion Assessment Method (CAM), and Richmond Agitation-Sedation Scale (RASS) rely on intermittent assessments, causing delays and inconsistencies. In this study, we propose MANDARIN (Mixture-of-Experts Framework for Dynamic Delirium and Coma Prediction in ICU Patients), a 1.5M-parameter mixture-of-experts neural network to predict ABD in real-time among ICU patients. The model integrates temporal and static data from the ICU to predict the brain status in the next 12 to 72 hours, using a multi-branch approach to account for current brain status. The MANDARIN model was trained on data from 92,734 patients (132,997 ICU admissions) from 2 hospitals between 2008-2019 and validated externally on data from 11,719 patients (14,519 ICU admissions) from 15 hospitals and prospectively on data from 304 patients (503 ICU admissions) from one hospital in 2021-2024. Three datasets were used: the University of Florida Health (UFH) dataset, the electronic ICU Collaborative Research Database (eICU), and the Medical Information Mart for Intensive Care (MIMIC)-IV dataset. MANDARIN significantly outperforms the baseline neurological assessment scores (GCS, CAM, and RASS) for delirium prediction in both external (AUROC 75.5% CI: 74.2%-76.8% vs 68.3% CI: 66.9%-69.5%) and prospective (AUROC 82.0% CI: 74.8%-89.2% vs 72.7% CI: 65.5%-81.0%) cohorts, as well as for coma prediction (external AUROC 87.3% CI: 85.9%-89.0% vs 72.8% CI: 70.6%-74.9%, and prospective AUROC 93.4% CI: 88.5%-97.9% vs 67.7% CI: 57.7%-76.8%) with a 12-hour lead time. This tool has the potential to assist clinicians in decision-making by continuously monitoring the brain status of patients in the ICU.

MANGO: Multimodal Acuity traNsformer for intelliGent ICU Outcomes

Dec 13, 2024

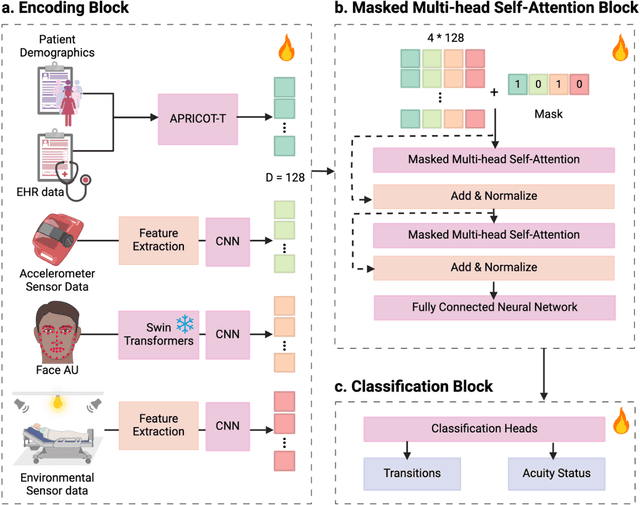

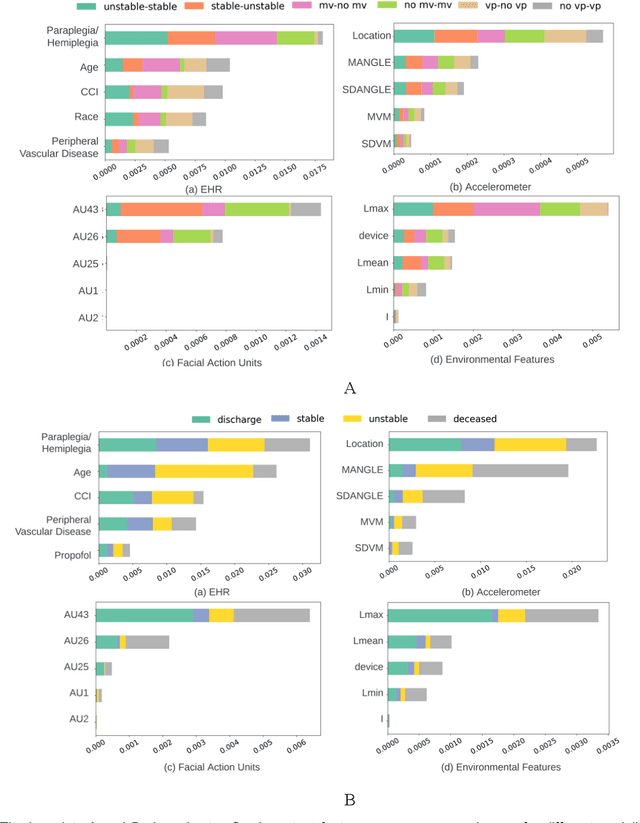

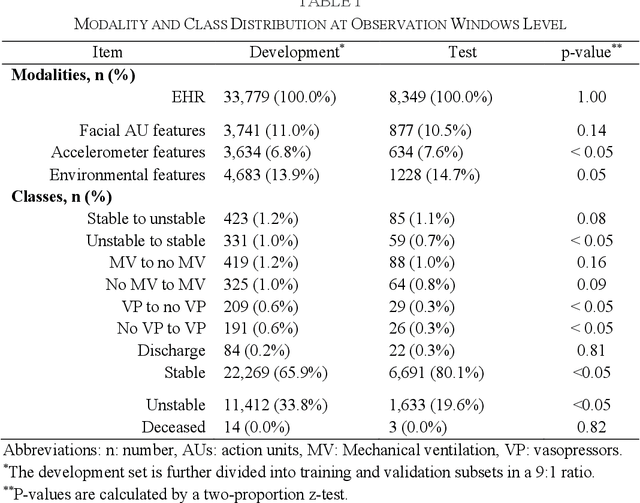

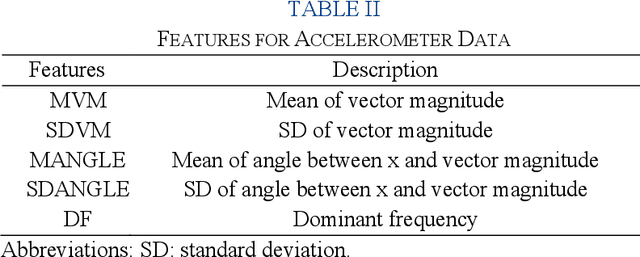

Abstract:Estimation of patient acuity in the Intensive Care Unit (ICU) is vital to ensure timely and appropriate interventions. Advances in artificial intelligence (AI) technologies have significantly improved the accuracy of acuity predictions. However, prior studies using machine learning for acuity prediction have predominantly relied on electronic health records (EHR) data, often overlooking other critical aspects of ICU stay, such as patient mobility, environmental factors, and facial cues indicating pain or agitation. To address this gap, we present MANGO: the Multimodal Acuity traNsformer for intelliGent ICU Outcomes, designed to enhance the prediction of patient acuity states, transitions, and the need for life-sustaining therapy. We collected a multimodal dataset ICU-Multimodal, incorporating four key modalities, EHR data, wearable sensor data, video of patient's facial cues, and ambient sensor data, which we utilized to train MANGO. The MANGO model employs a multimodal feature fusion network powered by Transformer masked self-attention method, enabling it to capture and learn complex interactions across these diverse data modalities even when some modalities are absent. Our results demonstrated that integrating multiple modalities significantly improved the model's ability to predict acuity status, transitions, and the need for life-sustaining therapy. The best-performing models achieved an area under the receiver operating characteristic curve (AUROC) of 0.76 (95% CI: 0.72-0.79) for predicting transitions in acuity status and the need for life-sustaining therapy, while 0.82 (95% CI: 0.69-0.89) for acuity status prediction...

DeLLiriuM: A large language model for delirium prediction in the ICU using structured EHR

Oct 22, 2024

Abstract:Delirium is an acute confusional state that has been shown to affect up to 31% of patients in the intensive care unit (ICU). Early detection of this condition could lead to more timely interventions and improved health outcomes. While artificial intelligence (AI) models have shown great potential for ICU delirium prediction using structured electronic health records (EHR), most of them have not explored the use of state-of-the-art AI models, have been limited to single hospitals, or have been developed and validated on small cohorts. The use of large language models (LLM), models with hundreds of millions to billions of parameters, with structured EHR data could potentially lead to improved predictive performance. In this study, we propose DeLLiriuM, a novel LLM-based delirium prediction model using EHR data available in the first 24 hours of ICU admission to predict the probability of a patient developing delirium during the rest of their ICU admission. We develop and validate DeLLiriuM on ICU admissions from 104,303 patients pertaining to 195 hospitals across three large databases: the eICU Collaborative Research Database, the Medical Information Mart for Intensive Care (MIMIC)-IV, and the University of Florida Health's Integrated Data Repository. The performance measured by the area under the receiver operating characteristic curve (AUROC) showed that DeLLiriuM outperformed all baselines in two external validation sets, with 0.77 (95% confidence interval 0.76-0.78) and 0.84 (95% confidence interval 0.83-0.85) across 77,543 patients spanning 194 hospitals. To the best of our knowledge, DeLLiriuM is the first LLM-based delirium prediction tool for the ICU based on structured EHR data, outperforming deep learning baselines which employ structured features and can provide helpful information to clinicians for timely interventions.

Leveraging Computer Vision in the Intensive Care Unit (ICU) for Examining Visitation and Mobility

Mar 10, 2024

Abstract:Despite the importance of closely monitoring patients in the Intensive Care Unit (ICU), many aspects are still assessed in a limited manner due to the time constraints imposed on healthcare providers. For example, although excessive visitations during rest hours can potentially exacerbate the risk of circadian rhythm disruption and delirium, it is not captured in the ICU. Likewise, while mobility can be an important indicator of recovery or deterioration in ICU patients, it is only captured sporadically or not captured at all. In the past few years, the computer vision field has found application in many domains by reducing the human burden. Using computer vision systems in the ICU can also potentially enable non-existing assessments or enhance the frequency and accuracy of existing assessments while reducing the staff workload. In this study, we leverage a state-of-the-art noninvasive computer vision system based on depth imaging to characterize ICU visitations and patients' mobility. We then examine the relationship between visitation and several patient outcomes, such as pain, acuity, and delirium. We found an association between deteriorating patient acuity and the incidence of delirium with increased visitations. In contrast, self-reported pain, reported using the Defense and Veteran Pain Rating Scale (DVPRS), was correlated with decreased visitations. Our findings highlight the feasibility and potential of using noninvasive autonomous systems to monitor ICU patients.

Detecting Visual Cues in the Intensive Care Unit and Association with Patient Clinical Status

Nov 01, 2023

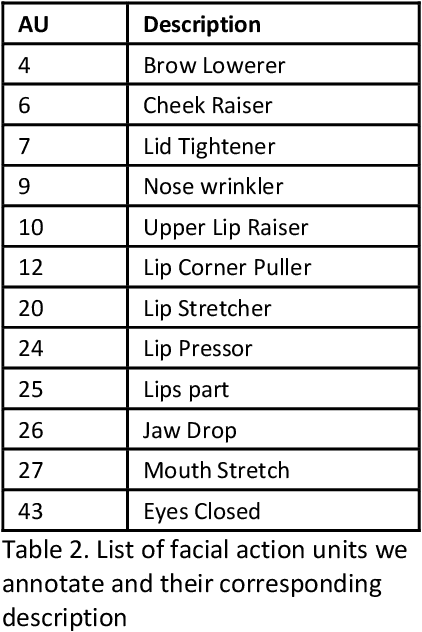

Abstract:Intensive Care Units (ICU) provide close supervision and continuous care to patients with life-threatening conditions. However, continuous patient assessment in the ICU is still limited due to time constraints and the workload on healthcare providers. Existing patient assessments in the ICU such as pain or mobility assessment are mostly sporadic and administered manually, thus introducing the potential for human errors. Developing Artificial intelligence (AI) tools that can augment human assessments in the ICU can be beneficial for providing more objective and granular monitoring capabilities. For example, capturing the variations in a patient's facial cues related to pain or agitation can help in adjusting pain-related medications or detecting agitation-inducing conditions such as delirium. Additionally, subtle changes in visual cues during or prior to adverse clinical events could potentially aid in continuous patient monitoring when combined with high-resolution physiological signals and Electronic Health Record (EHR) data. In this paper, we examined the association between visual cues and patient condition including acuity status, acute brain dysfunction, and pain. We leveraged our AU-ICU dataset with 107,064 frames collected in the ICU annotated with facial action units (AUs) labels by trained annotators. We developed a new "masked loss computation" technique that addresses the data imbalance problem by maximizing data resource utilization. We trained the model using our AU-ICU dataset in conjunction with three external datasets to detect 18 AUs. The SWIN Transformer model achieved 0.57 mean F1-score and 0.89 mean accuracy on the test set. Additionally, we performed AU inference on 634,054 frames to evaluate the association between facial AUs and clinically important patient conditions such as acuity status, acute brain dysfunction, and pain.

Transformers in Healthcare: A Survey

Jun 30, 2023

Abstract:With Artificial Intelligence (AI) increasingly permeating various aspects of society, including healthcare, the adoption of the Transformers neural network architecture is rapidly changing many applications. Transformer is a type of deep learning architecture initially developed to solve general-purpose Natural Language Processing (NLP) tasks and has subsequently been adapted in many fields, including healthcare. In this survey paper, we provide an overview of how this architecture has been adopted to analyze various forms of data, including medical imaging, structured and unstructured Electronic Health Records (EHR), social media, physiological signals, and biomolecular sequences. Those models could help in clinical diagnosis, report generation, data reconstruction, and drug/protein synthesis. We identified relevant studies using the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines. We also discuss the benefits and limitations of using transformers in healthcare and examine issues such as computational cost, model interpretability, fairness, alignment with human values, ethical implications, and environmental impact.

AI-Enhanced Intensive Care Unit: Revolutionizing Patient Care with Pervasive Sensing

Mar 11, 2023

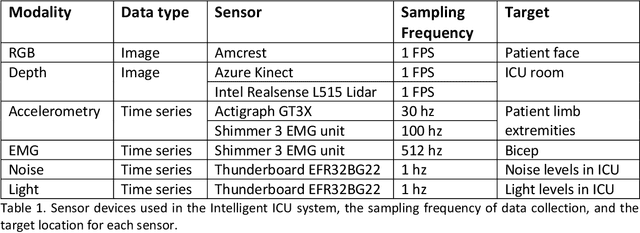

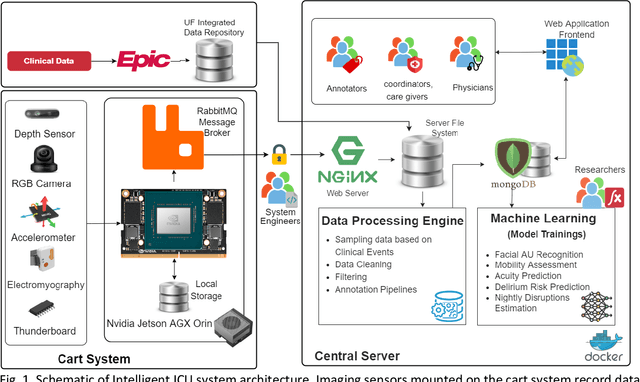

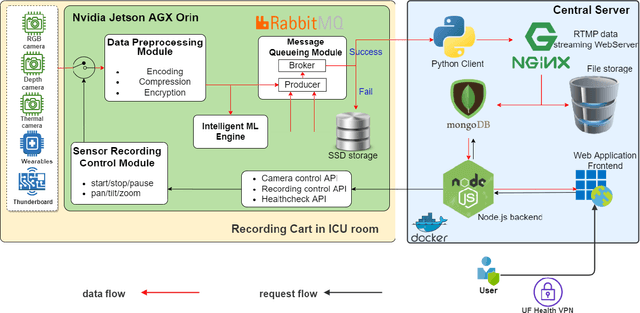

Abstract:The intensive care unit (ICU) is a specialized hospital space where critically ill patients receive intensive care and monitoring. Comprehensive monitoring is imperative in assessing patients conditions, in particular acuity, and ultimately the quality of care. However, the extent of patient monitoring in the ICU is limited due to time constraints and the workload on healthcare providers. Currently, visual assessments for acuity, including fine details such as facial expressions, posture, and mobility, are sporadically captured, or not captured at all. These manual observations are subjective to the individual, prone to documentation errors, and overburden care providers with the additional workload. Artificial Intelligence (AI) enabled systems has the potential to augment the patient visual monitoring and assessment due to their exceptional learning capabilities. Such systems require robust annotated data to train. To this end, we have developed pervasive sensing and data processing system which collects data from multiple modalities depth images, color RGB images, accelerometry, electromyography, sound pressure, and light levels in ICU for developing intelligent monitoring systems for continuous and granular acuity, delirium risk, pain, and mobility assessment. This paper presents the Intelligent Intensive Care Unit (I2CU) system architecture we developed for real-time patient monitoring and visual assessment.

Predicting risk of delirium from ambient noise and light information in the ICU

Mar 11, 2023

Abstract:Existing Intensive Care Unit (ICU) delirium prediction models do not consider environmental factors despite strong evidence of their influence on delirium. This study reports the first deep-learning based delirium prediction model for ICU patients using only ambient noise and light information. Ambient light and noise intensities were measured from ICU rooms of 102 patients from May 2021 to September 2022 using Thunderboard, ActiGraph sensors and an iPod with AudioTools application. These measurements were divided into daytime (0700 to 1859) and nighttime (1900 to 0659). Deep learning models were trained using this data to predict the incidence of delirium during ICU stay or within 4 days of discharge. Finally, outcome scores were analyzed to evaluate the importance and directionality of every feature. Daytime noise levels were significantly higher than nighttime noise levels. When using only noise features or a combination of noise and light features 1-D convolutional neural networks (CNN) achieved the strongest performance: AUC=0.77, 0.74; Sensitivity=0.60, 0.56; Specificity=0.74, 0.74; Precision=0.46, 0.40 respectively. Using only light features, Long Short-Term Memory (LSTM) networks performed best: AUC=0.80, Sensitivity=0.60, Specificity=0.77, Precision=0.37. Maximum nighttime and minimum daytime noise levels were the strongest positive and negative predictors of delirium respectively. Nighttime light level was a stronger predictor of delirium than daytime light level. Total influence of light features outweighed that of noise features on the second and fourth day of ICU stay. This study shows that ambient light and noise intensities are strong predictors of long-term delirium incidence in the ICU. It reveals that daytime and nighttime environmental factors might influence delirium differently and that the importance of light and noise levels vary over the course of an ICU stay.

End-to-End Machine Learning Framework for Facial AU Detection in Intensive Care Units

Nov 12, 2022

Abstract:Pain is a common occurrence among patients admitted to Intensive Care Units. Pain assessment in ICU patients still remains a challenge for clinicians and ICU staff, specifically in cases of non-verbal sedated, mechanically ventilated, and intubated patients. Current manual observation-based pain assessment tools are limited by the frequency of pain observations administered and are subjective to the observer. Facial behavior is a major component in observation-based tools. Furthermore, previous literature shows the feasibility of painful facial expression detection using facial action units (AUs). However, these approaches are limited to controlled or semi-controlled environments and have never been validated in clinical settings. In this study, we present our Pain-ICU dataset, the largest dataset available targeting facial behavior analysis in the dynamic ICU environment. Our dataset comprises 76,388 patient facial image frames annotated with AUs obtained from 49 adult patients admitted to ICUs at the University of Florida Health Shands hospital. In this work, we evaluated two vision transformer models, namely ViT and SWIN, for AU detection on our Pain-ICU dataset and also external datasets. We developed a completely end-to-end AU detection pipeline with the objective of performing real-time AU detection in the ICU. The SWIN transformer Base variant achieved 0.88 F1-score and 0.85 accuracy on the held-out test partition of the Pain-ICU dataset.

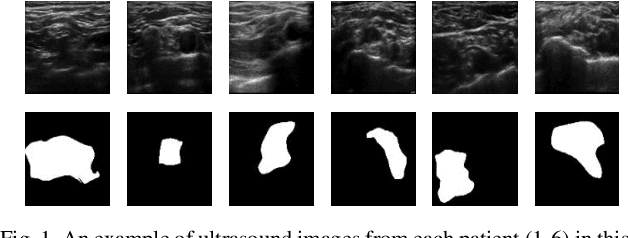

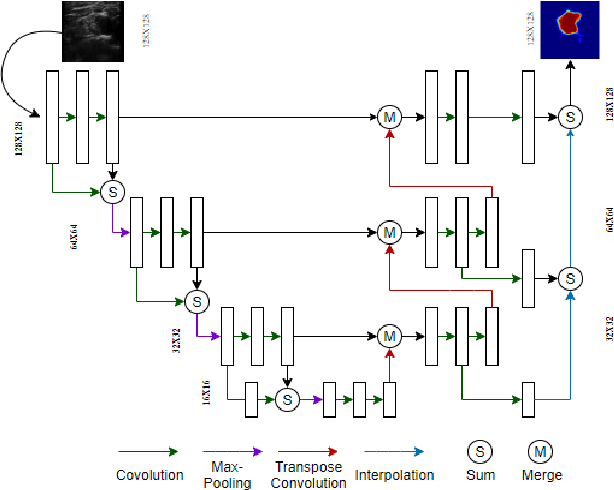

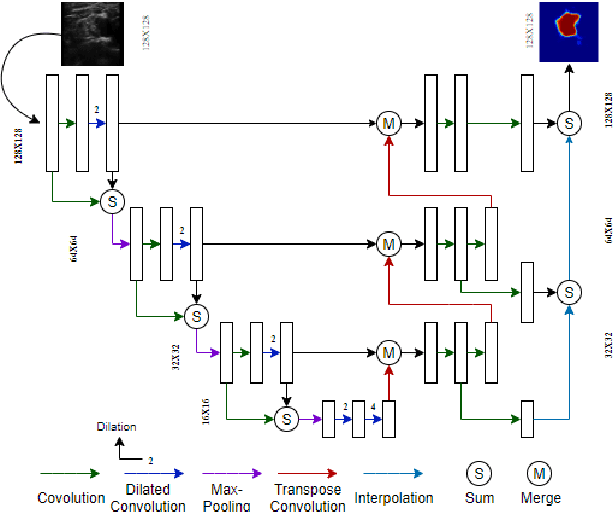

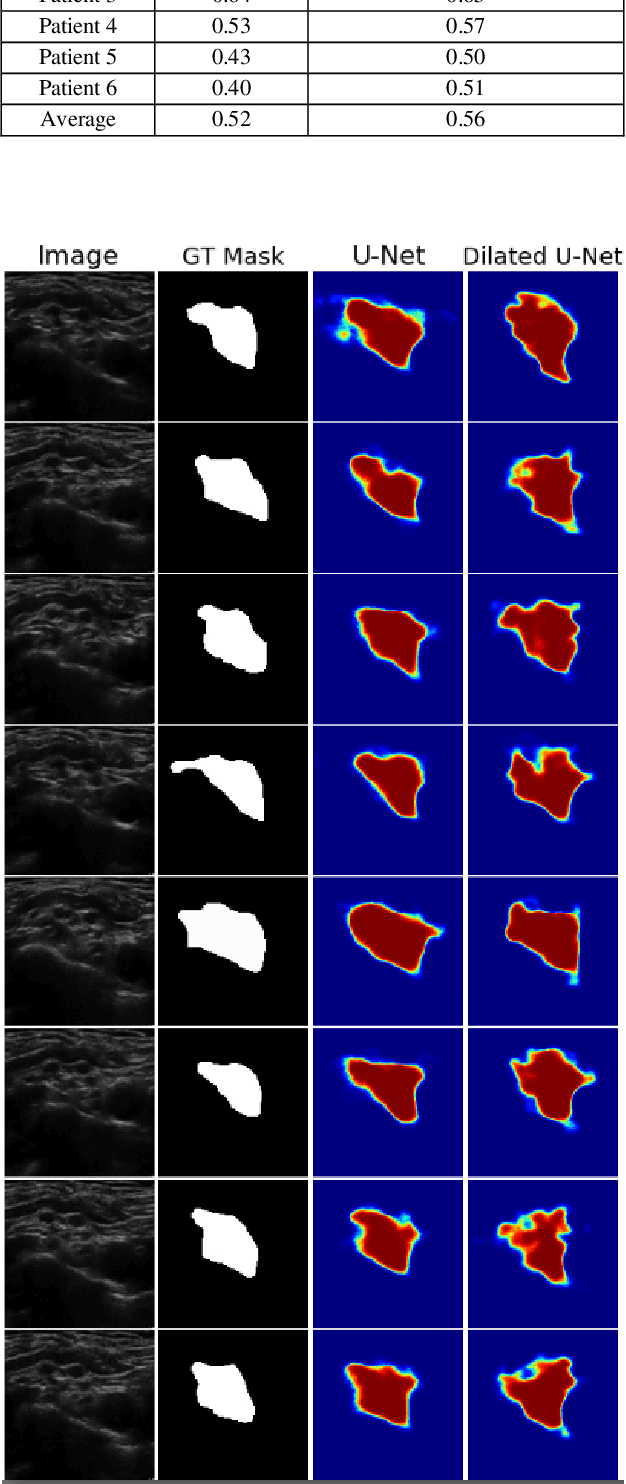

Automatic Ultrasound Image Segmentation of Supraclavicular Nerve Using Dilated U-Net Deep Learning Architecture

Aug 09, 2022

Abstract:Automated object recognition in medical images can facilitate medical diagnosis and treatment. In this paper, we automatically segmented supraclavicular nerves in ultrasound images to assist in injecting peripheral nerve blocks. Nerve blocks are generally used for pain treatment after surgery, where ultrasound guidance is used to inject local anesthetics next to target nerves. This treatment blocks the transmission of pain signals to the brain, which can help improve the rate of recovery from surgery and significantly decrease the requirement for postoperative opioids. However, Ultrasound Guided Regional Anesthesia (UGRA) requires anesthesiologists to visually recognize the actual nerve position in the ultrasound images. This is a complex task given the myriad visual presentations of nerves in ultrasound images, and their visual similarity to many neighboring tissues. In this study, we used an automated nerve detection system for the UGRA Nerve Block treatment. The system can recognize the position of the nerve in ultrasound images using Deep Learning techniques. We developed a model to capture features of nerves by training two deep neural networks with skip connections: two extended U-Net architectures with and without dilated convolutions. This solution could potentially lead to an improved blockade of targeted nerves in regional anesthesia.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge