Patrick Tighe

Peri-AIIMS: Perioperative Artificial Intelligence Driven Integrated Modeling of Surgeries using Anesthetic, Physical and Cognitive Statuses for Predicting Hospital Outcomes

Oct 29, 2024

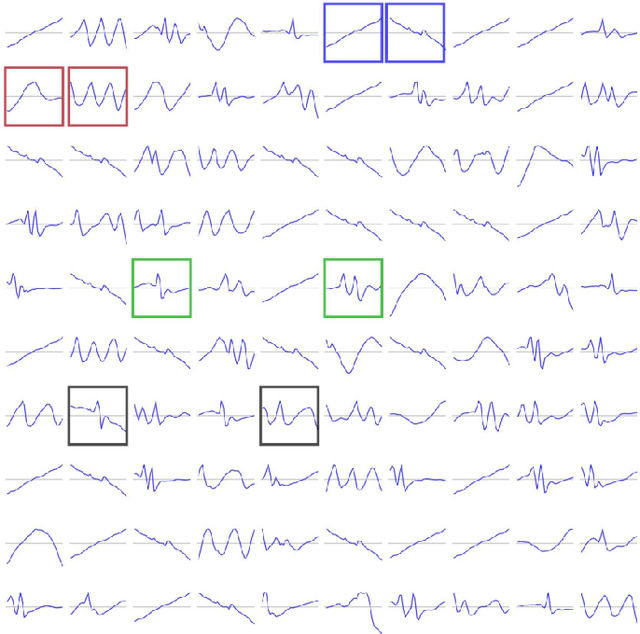

Abstract:The association between preoperative cognitive status and surgical outcomes is a critical, yet scarcely explored area of research. Linking intraoperative data with postoperative outcomes is a promising and low-cost way of evaluating long-term impacts of surgical interventions. In this study, we evaluated how preoperative cognitive status as measured by the clock drawing test contributed to predicting length of hospital stay, hospital charges, average pain experienced during follow-up, and 1-year mortality over and above intraoperative variables, demographics, preoperative physical status and comorbidities. We expanded our analysis to 6 specific surgical groups where sufficient data was available for cross-validation. The clock drawing images were represented by 10 constructional features discovered by a semi-supervised deep learning algorithm, previously validated to differentiate between dementia and non-dementia patients. Different machine learning models were trained to classify postoperative outcomes in hold-out test sets. The models were compared on relative performance, time complexity, and interpretability. Shapley Additive Explanations (SHAP) analysis was used to find the most predictive features for classifying different outcomes in different surgical contexts. Relative classification performances achieved by different feature sets showed that the perioperative cognitive dataset, which included clock drawing features in addition to intraoperative variables, demographics, and comorbidities, served as the best dataset for 12 of 18 possible surgery-outcome combinations...

XTSFormer: Cross-Temporal-Scale Transformer for Irregular Time Event Prediction

Feb 03, 2024

Abstract:Event prediction aims to forecast the time and type of a future event based on a historical event sequence. Despite its significance, several challenges exist, including the irregularity of time intervals between consecutive events, the existence of cycles, periodicity, and multi-scale event interactions, as well as the high computational costs for long event sequences. Existing neural temporal point processes (TPPs) methods do not capture the multi-scale nature of event interactions, which is common in many real-world applications such as clinical event data. To address these issues, we propose the cross-temporal-scale transformer (XTSFormer), designed specifically for irregularly timed event data. Our model comprises two vital components: a novel Feature-based Cycle-aware Time Positional Encoding (FCPE) that adeptly captures the cyclical nature of time, and a hierarchical multi-scale temporal attention mechanism. These scales are determined by a bottom-up clustering algorithm. Extensive experiments on several real-world datasets show that our XTSFormer outperforms several baseline methods in prediction performance.

Detecting Visual Cues in the Intensive Care Unit and Association with Patient Clinical Status

Nov 01, 2023

Abstract:Intensive Care Units (ICU) provide close supervision and continuous care to patients with life-threatening conditions. However, continuous patient assessment in the ICU is still limited due to time constraints and the workload on healthcare providers. Existing patient assessments in the ICU such as pain or mobility assessment are mostly sporadic and administered manually, thus introducing the potential for human errors. Developing Artificial intelligence (AI) tools that can augment human assessments in the ICU can be beneficial for providing more objective and granular monitoring capabilities. For example, capturing the variations in a patient's facial cues related to pain or agitation can help in adjusting pain-related medications or detecting agitation-inducing conditions such as delirium. Additionally, subtle changes in visual cues during or prior to adverse clinical events could potentially aid in continuous patient monitoring when combined with high-resolution physiological signals and Electronic Health Record (EHR) data. In this paper, we examined the association between visual cues and patient condition including acuity status, acute brain dysfunction, and pain. We leveraged our AU-ICU dataset with 107,064 frames collected in the ICU annotated with facial action units (AUs) labels by trained annotators. We developed a new "masked loss computation" technique that addresses the data imbalance problem by maximizing data resource utilization. We trained the model using our AU-ICU dataset in conjunction with three external datasets to detect 18 AUs. The SWIN Transformer model achieved 0.57 mean F1-score and 0.89 mean accuracy on the test set. Additionally, we performed AU inference on 634,054 frames to evaluate the association between facial AUs and clinically important patient conditions such as acuity status, acute brain dysfunction, and pain.

End-to-End Machine Learning Framework for Facial AU Detection in Intensive Care Units

Nov 12, 2022

Abstract:Pain is a common occurrence among patients admitted to Intensive Care Units. Pain assessment in ICU patients remains a challenge for clinicians and ICU staff, specifically in cases of non-verbal, sedated, mechanically ventilated, and intubated patients. Current manual observation-based pain assessment tools are limited by the frequency of pain observations administered and are subjective. Facial behavior is a major component in observation-based tools. Furthermore, previous literature shows the feasibility of painful facial expression detection using facial action units (AUs). However, these approaches are limited to controlled or semi-controlled environments and have never been validated in clinical settings. In this study, we present our Pain-ICU dataset, the largest dataset available targeting facial behavior analysis in the dynamic ICU environment. Our dataset comprises 76,388 patient facial image frames annotated with AUs obtained from 49 adult patients admitted to ICUs at the University of Florida Health Shands hospital. In this work, we evaluated two vision transformer models, namely ViT and SWIN, for AU detection on our Pain-ICU dataset and on external datasets. We developed a completely end-to-end AU detection pipeline with the objective of performing real-time AU detection in the ICU. The SWIN transformer Base variant achieved 0.88 F1-score and 0.85 accuracy on the held-out test partition of the Pain-ICU dataset.

Automatic Ultrasound Image Segmentation of Supraclavicular Nerve Using Dilated U-Net Deep Learning Architecture

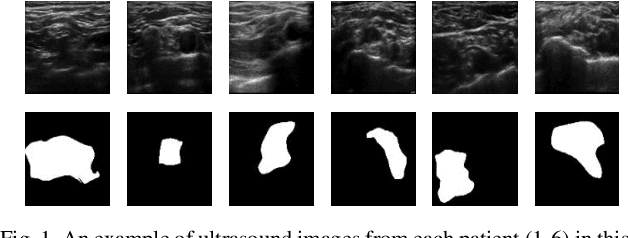

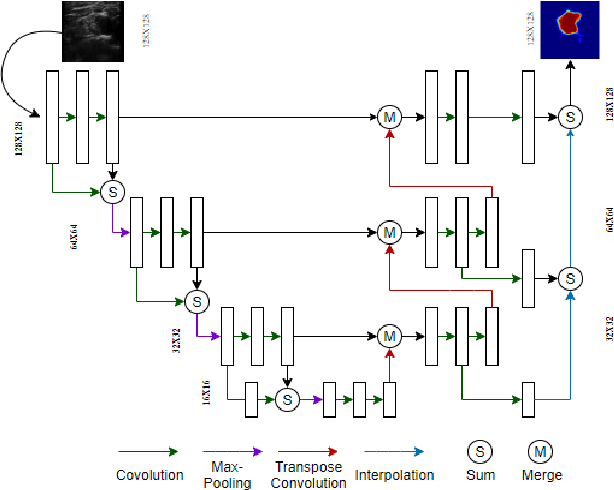

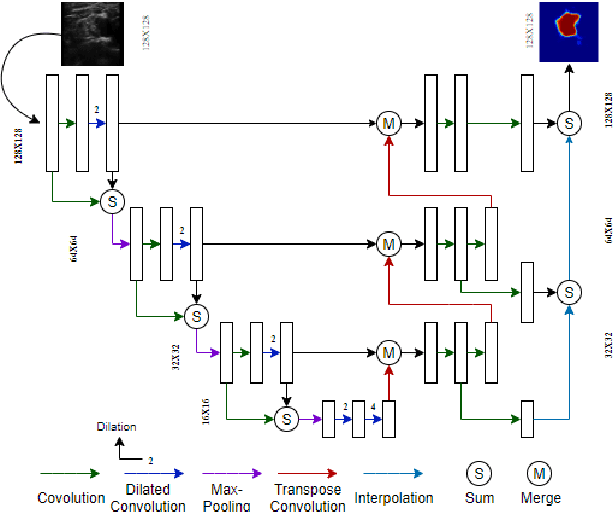

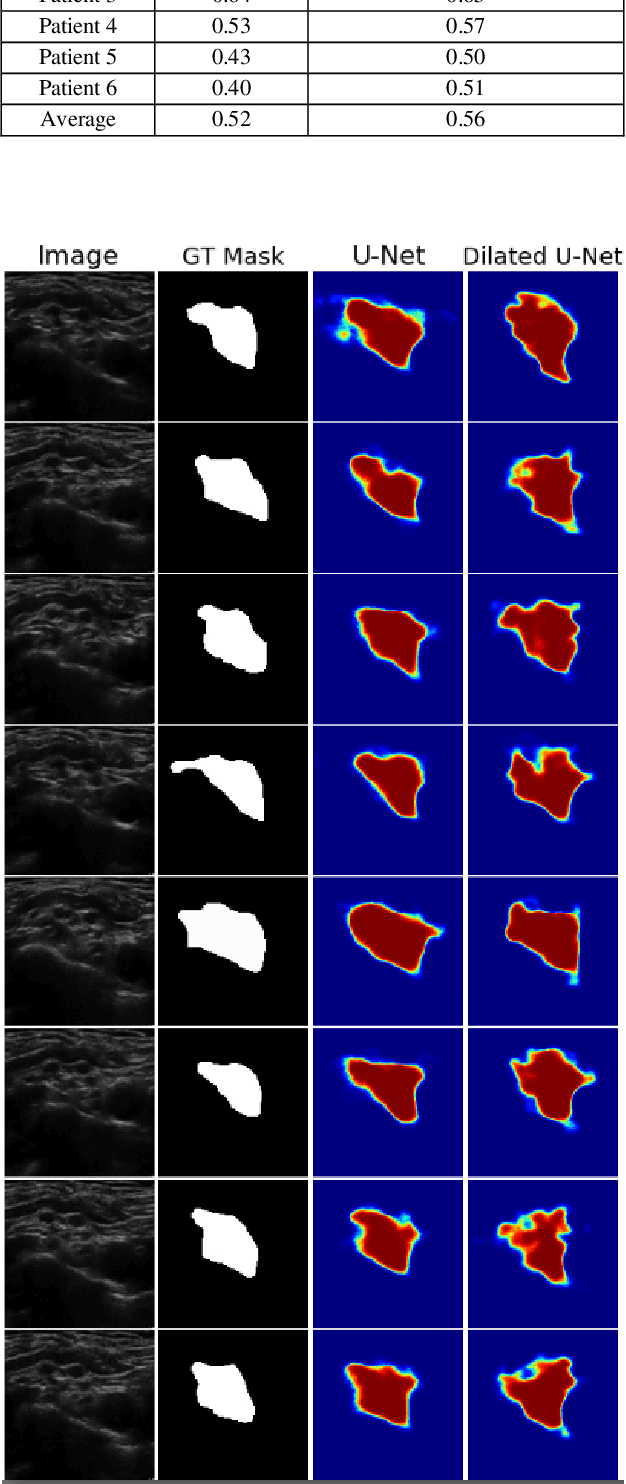

Aug 09, 2022

Abstract:Automated object recognition in medical images can facilitate medical diagnosis and treatment. In this paper, we automatically segmented supraclavicular nerves in ultrasound images to assist in injecting peripheral nerve blocks. Nerve blocks are generally used for pain treatment after surgery, where ultrasound guidance is used to inject local anesthetics next to target nerves. This treatment blocks the transmission of pain signals to the brain, which can help improve the rate of recovery from surgery and significantly decrease the requirement for postoperative opioids. However, Ultrasound Guided Regional Anesthesia (UGRA) requires anesthesiologists to visually recognize the actual nerve position in the ultrasound images. This is a complex task given the myriad visual presentations of nerves in ultrasound images, and their visual similarity to many neighboring tissues. In this study, we used an automated nerve detection system for the UGRA Nerve Block treatment. The system can recognize the position of the nerve in ultrasound images using Deep Learning techniques. We developed a model to capture features of nerves by training two deep neural networks with skip connections: two extended U-Net architectures with and without dilated convolutions. This solution could potentially lead to an improved blockade of targeted nerves in regional anesthesia.

Facial Action Unit Detection on ICU Data for Pain Assessment

Apr 24, 2020

Abstract:Current pain assessment methods rely on patient self-report or on assessment by an observer such as an Intensive Care Unit (ICU) nurse. Patient self-report is subjective and suffers from poor recall. Pain assessment by manual observation is limited by the number of administrations per day and by staff workload. Previous studies showed the feasibility of automatic pain assessment by detecting facial action units (AUs), since pain is associated with certain AUs. This method of pain assessment can overcome the pitfalls of present-day pain assessment techniques. However, all previous studies are limited to data from controlled environments. In this study, we evaluated the performance of OpenFace, an open-source facial behavior analysis tool, and AU R-CNN on real-world ICU data. The presence of assisted breathing devices, the variable lighting of ICUs, and patient orientation with respect to the camera significantly affected the performance of the models, even though these models have shown state-of-the-art results in facial behavior analysis tasks. We show that a clinically acceptable automated pain assessment system needs to be trained on real-world ICU data.

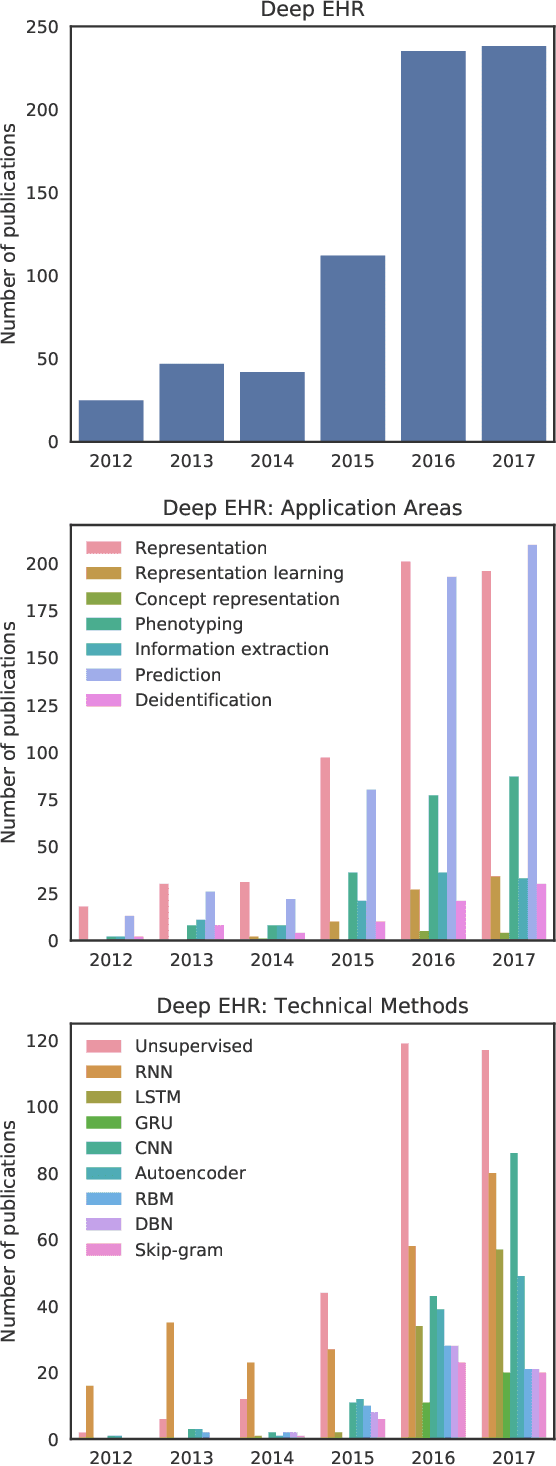

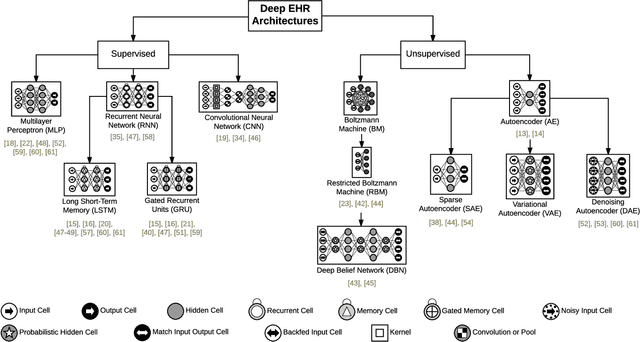

Deep EHR: A Survey of Recent Advances in Deep Learning Techniques for Electronic Health Record Analysis

Feb 24, 2018

Abstract:The past decade has seen an explosion in the amount of digital information stored in electronic health records (EHR). While primarily designed for archiving patient clinical information and administrative healthcare tasks, many researchers have found secondary use of these records for various clinical informatics tasks. Over the same period, the machine learning community has seen widespread advances in deep learning techniques, which also have been successfully applied to the vast amount of EHR data. In this paper, we review these deep EHR systems, examining architectures, technical aspects, and clinical applications. We also identify shortcomings of current techniques and discuss avenues of future research for EHR-based deep learning.
