Sheroze Sheriffdeen

Reading Recognition in the Wild

May 30, 2025

Abstract: To enable egocentric contextual AI in always-on smart glasses, it is crucial to be able to keep a record of the user's interactions with the world, including during reading. In this paper, we introduce a new task of reading recognition to determine when the user is reading. We first introduce the first-of-its-kind large-scale multimodal Reading in the Wild dataset, containing 100 hours of reading and non-reading videos in diverse and realistic scenarios. We then identify three modalities (egocentric RGB, eye gaze, head pose) that can be used to solve the task, and present a flexible transformer model that performs the task using these modalities, either individually or combined. We show that these modalities are relevant and complementary to the task, and investigate how to efficiently and effectively encode each modality. Additionally, we show the usefulness of this dataset towards classifying types of reading, extending current reading understanding studies conducted in constrained settings to larger scale, diversity and realism. Code, model, and data will be public.

Robust, High-Precision GNSS Carrier-Phase Positioning with Visual-Inertial Fusion

Mar 02, 2023

Abstract: Robust, high-precision global localization is fundamental to a wide range of outdoor robotics applications. Conventional fusion methods use low-accuracy pseudorange-based GNSS measurements ($\gg 5$ m errors) and can only yield a coarse registration to the global earth-centered-earth-fixed (ECEF) frame. In this paper, we leverage high-precision GNSS carrier-phase positioning and aid it with local visual-inertial odometry (VIO) tracking using an extended Kalman filter (EKF) framework that better resolves the integer ambiguity associated with GNSS carrier-phase measurements. We also propose an algorithm for GNSS-antenna-to-IMU extrinsics calibration to accurately align VIO to the ECEF frame. Together, our system achieves robust global positioning, demonstrated by real-world hardware experiments in severely occluded urban canyons, and outperforms the state-of-the-art RTKLIB by a significant margin in terms of integer ambiguity solution fix rate and positioning RMSE.

Solving Forward and Inverse Problems Using Autoencoders

Jan 06, 2020

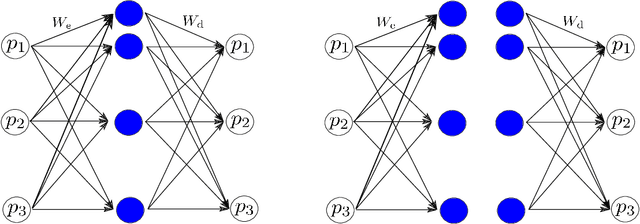

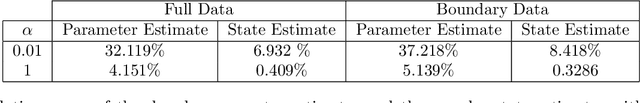

Abstract: This work develops model-aware autoencoder networks as a new method for solving scientific forward and inverse problems. Autoencoders are unsupervised neural networks that are able to learn new representations of data through appropriately selected architecture and regularization. The resulting mappings to and from the latent representation can be used to encode and decode the data. In our work, we set the data space to be the parameter space of a parameter of interest we wish to invert for. Further, as a way to encode the underlying physical model into the autoencoder, we enforce the latent space of an autoencoder to be the space of observations of physically-governed phenomena. In doing so, we leverage the well-known capability of a deep neural network as a universal function operator to simultaneously obtain both the parameter-to-observation and observation-to-parameter map. The results suggest that this simultaneous learning interacts synergistically to improve the inversion capability of the autoencoder.

Accelerating PDE-constrained Inverse Solutions with Deep Learning and Reduced Order Models

Dec 17, 2019

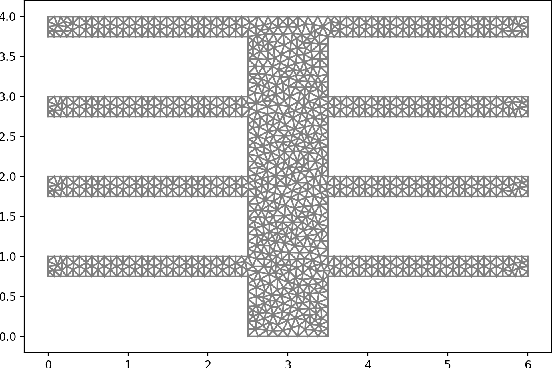

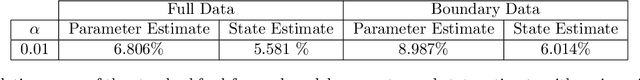

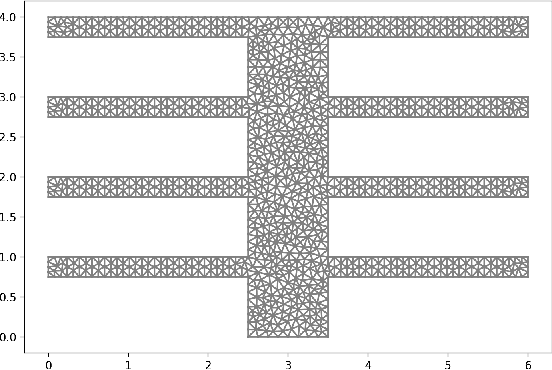

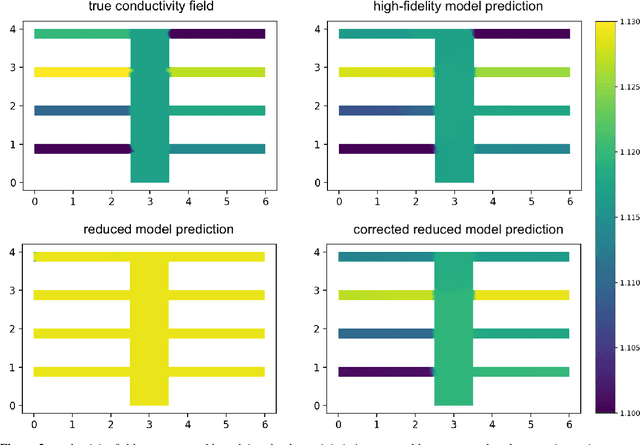

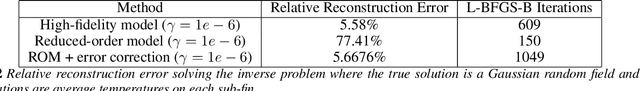

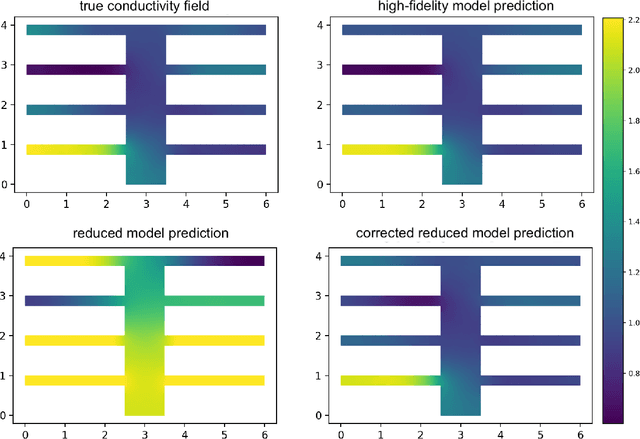

Abstract: Inverse problems are pervasive mathematical methods for inferring knowledge from observational and experimental data by leveraging simulations and models. Unlike direct inference methods, inverse problem approaches typically require many forward model solves, usually governed by Partial Differential Equations (PDEs). This is a crucial bottleneck in determining the feasibility of such methods. While machine learning (ML) methods, such as deep neural networks (DNNs), can be employed to learn nonlinear forward models, designing a network architecture that preserves accuracy while generalizing to new parameter regimes is a daunting task. Furthermore, due to the computationally expensive nature of forward models, state-of-the-art black-box ML methods would require an unrealistic amount of work in order to obtain an accurate surrogate model. On the other hand, standard Reduced-Order Models (ROMs) accurately capture supposedly important physics of the forward model in the reduced subspaces, but otherwise could be inaccurate elsewhere. In this paper, we propose to enlarge the validity of ROMs and hence improve the accuracy outside the reduced subspaces by incorporating a data-driven ML technique. In particular, we focus on a goal-oriented approach that substantially improves the accuracy of reduced models by learning the error between the forward model and the ROM outputs. Once an ML-enhanced ROM is constructed, it can accelerate the performance of solving many-query problems in parametrized forward and inverse problems. Numerical results for inverse problems governed by elliptic PDEs and parametrized neutron transport equations will be presented to support our approach.
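
The idea of learning the error between the forward model and the ROM output can be illustrated with a toy sketch. Everything in it is an assumption made for illustration and is not taken from the paper: `forward_model` and `reduced_model` are hypothetical scalar functions standing in for a PDE solve and its ROM, and a least-squares polynomial fit stands in for the neural network that would learn the error.

```python
import numpy as np

def forward_model(theta):
    # Hypothetical "expensive" quantity of interest (stand-in for a PDE solve).
    return np.sin(3.0 * theta) + 0.5 * theta**2

def reduced_model(theta):
    # Hypothetical cheap surrogate: accurate near theta = 0, inaccurate elsewhere.
    return 3.0 * theta + 0.5 * theta**2

# Sample the parameter space and record the ROM error at each training point.
theta_train = np.linspace(-1.0, 1.0, 21)
error_train = forward_model(theta_train) - reduced_model(theta_train)

# Fit a degree-7 polynomial to the error (assumed stand-in for a learned model).
coeffs = np.polyfit(theta_train, error_train, deg=7)

def corrected_model(theta):
    # ROM output plus the learned error correction.
    return reduced_model(theta) + np.polyval(coeffs, theta)

# Compare worst-case errors on a finer grid of unseen parameters.
theta_test = np.linspace(-1.0, 1.0, 200)
rom_error = np.max(np.abs(forward_model(theta_test) - reduced_model(theta_test)))
corrected_error = np.max(np.abs(forward_model(theta_test) - corrected_model(theta_test)))

print(f"max ROM error:       {rom_error:.3e}")
print(f"max corrected error: {corrected_error:.3e}")
```

In this toy setting the corrected surrogate costs little more to evaluate than the ROM itself, which is the property that would matter in a many-query inverse problem.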
