Qingsong Wang

Neural Network Training via Stochastic Alternating Minimization with Trainable Step Sizes

Aug 06, 2025

Abstract:The training of deep neural networks is inherently a nonconvex optimization problem, yet standard approaches such as stochastic gradient descent (SGD) require simultaneous updates to all parameters, often leading to unstable convergence and high computational cost. To address these issues, we propose a novel method, Stochastic Alternating Minimization with Trainable Step Sizes (SAMT), which updates network parameters in an alternating manner by treating the weights of each layer as a block. By decomposing the overall optimization into sub-problems corresponding to different blocks, this block-wise alternating strategy reduces per-step computational overhead and enhances training stability in nonconvex settings. To fully leverage these benefits, inspired by meta-learning, we propose a novel adaptive step size strategy that is incorporated into the sub-problem solving steps of the alternating updates. It supports different types of trainable step sizes, including but not limited to scalar, element-wise, row-wise, and column-wise, enabling adaptive step size selection tailored to each block via meta-learning. We further provide a theoretical convergence guarantee for the proposed algorithm, establishing its optimization soundness. Extensive experiments on multiple benchmarks demonstrate that SAMT achieves better generalization performance with fewer parameter updates compared to state-of-the-art methods, highlighting its effectiveness and potential in neural network optimization.
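To make the block-wise idea concrete, here is a minimal sketch of alternating updates on a two-layer ReLU network, where each layer's weights form one block and each block has its own step size. This is not the SAMT algorithm itself: the step sizes are fixed scalars rather than meta-learned, the updates use the full batch rather than stochastic samples, and the function name `alternating_block_descent` and all of its parameters are hypothetical.

```python
import numpy as np

def alternating_block_descent(X, Y, hidden=8, steps=300,
                              step_sizes=(0.02, 0.02), seed=0):
    """Alternate gradient steps over two weight blocks (one per layer).

    Loss: (1 / 2n) * ||relu(X W1) W2 - Y||_F^2.
    Assumption: fixed scalar step size per block (not trainable).
    """
    rng = np.random.default_rng(seed)
    n, d = X.shape
    W1 = rng.normal(scale=0.5, size=(d, hidden))
    W2 = rng.normal(scale=0.5, size=(hidden, Y.shape[1]))
    losses = []
    for _ in range(steps):
        # Block 1: update W1 while W2 is held fixed.
        H = np.maximum(X @ W1, 0.0)
        R = H @ W2 - Y
        grad_W1 = X.T @ ((R @ W2.T) * (H > 0)) / n
        W1 -= step_sizes[0] * grad_W1

        # Block 2: update W2 using the freshly updated W1.
        H = np.maximum(X @ W1, 0.0)
        R = H @ W2 - Y
        grad_W2 = H.T @ R / n
        W2 -= step_sizes[1] * grad_W2

        # Record the loss after both blocks have been updated.
        R = np.maximum(X @ W1, 0.0) @ W2 - Y
        losses.append(0.5 * np.sum(R ** 2) / n)
    return W1, W2, losses
```

Replacing each scalar in `step_sizes` with an array shaped like the block (or like one of its rows or columns) would give the element-wise, row-wise, and column-wise variants the abstract mentions; how those arrays are learned is specific to the paper and is not attempted here.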

An Accelerated Bregman Algorithm for ReLU-based Symmetric Matrix Decomposition

Mar 21, 2025

Abstract:Symmetric matrix decomposition is an active research area in machine learning. This paper focuses on exploiting the low-rank structure of non-negative and sparse symmetric matrices via the rectified linear unit (ReLU) activation function. We propose the ReLU-based nonlinear symmetric matrix decomposition (ReLU-NSMD) model, introduce an accelerated alternating partial Bregman (AAPB) method for its solution, and present the algorithm's convergence results. Our algorithm leverages the Bregman proximal gradient framework to overcome the challenge of estimating the global $L$-smooth constant in the classic proximal gradient algorithm. Numerical experiments on synthetic and real datasets validate the effectiveness of our model and algorithm.
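For orientation, the generic Bregman proximal gradient step for minimizing $f + g$ can be written as follows. This is the standard textbook form, not the AAPB update from the paper; the symbols $f$ (smooth part), $g$ (nonsmooth part), $h$ (kernel generating distance), and $\lambda$ (step size) are notation assumed here for illustration.

$$
D_h(x, y) = h(x) - h(y) - \langle \nabla h(y),\, x - y \rangle,
$$

$$
x^{k+1} \in \arg\min_{x} \; g(x) + \langle \nabla f(x^k),\, x - x^k \rangle + \frac{1}{\lambda} D_h(x, x^k).
$$

With $h(x) = \tfrac{1}{2}\|x\|^2$, $D_h$ reduces to $\tfrac{1}{2}\|x - y\|^2$ and the step becomes the classic proximal gradient update, which requires $\nabla f$ to be globally Lipschitz. The Bregman variant replaces that requirement with the $L$-smooth adaptable condition: $Lh - f$ and $Lh + f$ are convex.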

A Triple-Inertial Accelerated Alternating Optimization Method for Deep Learning Training

Mar 13, 2025

Abstract:The stochastic gradient descent (SGD) algorithm has achieved remarkable success in training deep learning models. However, it has several limitations, including susceptibility to vanishing gradients, sensitivity to input data, and a lack of robust theoretical guarantees. In recent years, alternating minimization (AM) methods have emerged as a promising alternative for model training by employing gradient-free approaches to iteratively update model parameters. Despite their potential, these methods often exhibit slow convergence rates. To address this challenge, we propose a novel Triple-Inertial Accelerated Alternating Minimization (TIAM) framework for neural network training. The TIAM approach incorporates a triple-inertial acceleration strategy with a specialized approximation method, facilitating targeted acceleration of different terms in each sub-problem optimization. This integration improves the efficiency of convergence, achieving superior performance with fewer iterations. Additionally, we provide a convergence analysis of the TIAM algorithm, including its global convergence properties and convergence rate. Extensive experiments validate the effectiveness of the TIAM method, showing significant improvements in generalization capability and computational efficiency compared to existing approaches, particularly when applied to the rectified linear unit (ReLU) and its variants.

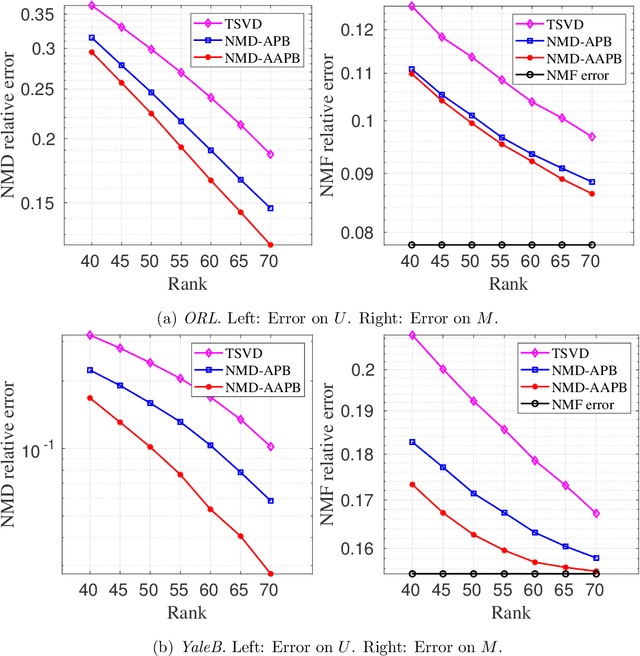

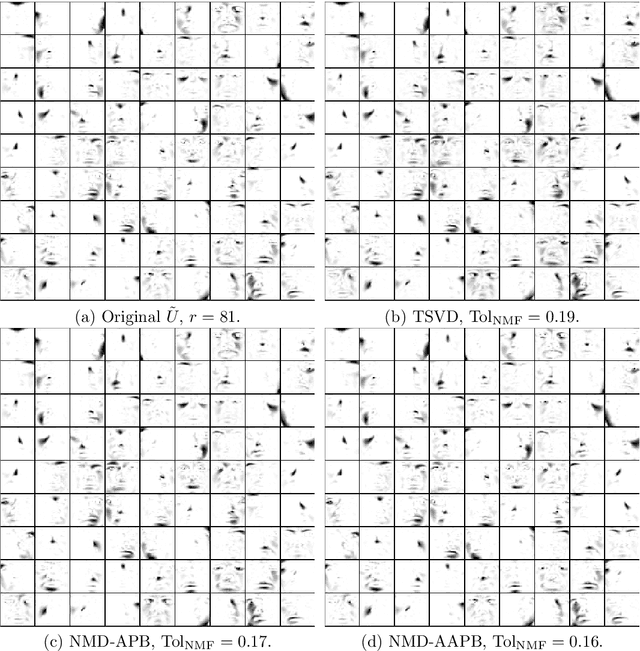

An Accelerated Alternating Partial Bregman Algorithm for ReLU-based Matrix Decomposition

Mar 04, 2025

Abstract:Despite the remarkable success of low-rank estimation in data mining, its effectiveness diminishes when applied to data that inherently lacks low-rank structure. To address this limitation, in this paper, we focus on non-negative sparse matrices and aim to investigate the intrinsic low-rank characteristics of the rectified linear unit (ReLU) activation function. We first propose a novel nonlinear matrix decomposition framework incorporating a comprehensive regularization term designed to simultaneously promote useful structures in clustering and compression tasks, such as low-rankness, sparsity, and non-negativity in the resulting factors. This formulation presents significant computational challenges due to its multi-block structure, non-convexity, non-smoothness, and the absence of global gradient Lipschitz continuity. To address these challenges, we develop an accelerated alternating partial Bregman proximal gradient method (AAPB), whose distinctive feature lies in its capability to enable simultaneous updates of multiple variables. Under mild and theoretically justified assumptions, we establish both sublinear and global convergence properties of the proposed algorithm. Through careful selection of kernel generating distances tailored to various regularization terms, we derive corresponding closed-form solutions while ensuring that the $L$-smooth adaptable property holds for any $L\ge 1$. Numerical experiments on graph regularized clustering and sparse NMF basis compression confirm the effectiveness of our model and algorithm.

An Improved Optimal Proximal Gradient Algorithm for Non-Blind Image Deblurring

Feb 11, 2025

Abstract:Image deblurring remains a central research area within image processing, critical for its role in enhancing image quality and facilitating clearer visual representations across diverse applications. This paper tackles the optimization problem of image deblurring, assuming a known blurring kernel. We introduce an improved optimal proximal gradient algorithm (IOptISTA), which builds upon the optimal gradient method and a weighting matrix, to efficiently address the non-blind image deblurring problem. Based on two regularization cases, namely the $l_1$ norm and total variation norm, we perform numerical experiments to assess the performance of our proposed algorithm. The results indicate that our algorithm yields enhanced PSNR and SSIM values, as well as a reduced tolerance, compared to existing methods.
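As background for the $l_1$ case, the sketch below shows plain ISTA, the basic proximal gradient iteration for $\min_x \tfrac{1}{2}\|Ax - b\|^2 + \lambda\|x\|_1$. It is the classic baseline rather than IOptISTA: there is no acceleration and no weighting matrix, and `A` is a generic dense matrix standing in for a blur operator. The function names are illustrative.

```python
import numpy as np

def soft_threshold(v, tau):
    """Proximal operator of tau * ||.||_1 (element-wise shrinkage)."""
    return np.sign(v) * np.maximum(np.abs(v) - tau, 0.0)

def objective(A, b, lam, x):
    """Value of 0.5 * ||A x - b||^2 + lam * ||x||_1."""
    return 0.5 * np.sum((A @ x - b) ** 2) + lam * np.sum(np.abs(x))

def ista(A, b, lam, iters=500):
    """Plain ISTA with constant step 1/L, starting from zero."""
    # L is the Lipschitz constant of the gradient of the smooth term,
    # i.e. the squared spectral norm of A.
    L = np.linalg.norm(A, 2) ** 2
    x = np.zeros(A.shape[1])
    for _ in range(iters):
        grad = A.T @ (A @ x - b)          # gradient of the smooth term
        x = soft_threshold(x - grad / L, lam / L)
    return x
```

With the step size $1/L$ the objective is non-increasing along the iterates, which is why comparing `objective(A, b, lam, ista(A, b, lam))` against the value at the zero starting point is a reasonable sanity check.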

Elucidating Flow Matching ODE Dynamics with respect to Data Geometries

Dec 25, 2024

Abstract:Diffusion-based generative models have become the standard for image generation. ODE-based samplers and flow matching models improve efficiency, in comparison to diffusion models, by reducing sampling steps through learned vector fields. However, the theoretical foundations of flow matching models remain limited, particularly regarding the convergence of individual sample trajectories at terminal time - a critical property that impacts sample quality and is a critical assumption for models like the consistency model. In this paper, we advance the theory of flow matching models through a comprehensive analysis of sample trajectories, centered on the denoiser that drives ODE dynamics. We establish the existence, uniqueness and convergence of ODE trajectories at terminal time, ensuring stable sampling outcomes under minimal assumptions. Our analysis reveals how trajectories evolve from capturing global data features to local structures, providing the geometric characterization of per-sample behavior in flow matching models. We also explain the memorization phenomenon in diffusion-based training through our terminal time analysis. These findings bridge critical gaps in understanding flow matching models, with practical implications for sampling stability and model design.

A Momentum Accelerated Algorithm for ReLU-based Nonlinear Matrix Decomposition

Feb 04, 2024

Abstract:Recently, there has been a growing interest in the exploration of Nonlinear Matrix Decomposition (NMD) due to its close ties with neural networks. NMD aims to find a low-rank matrix from a sparse nonnegative matrix with a per-element nonlinear function. A typical choice is the Rectified Linear Unit (ReLU) activation function. To address over-fitting in the existing ReLU-based NMD model (ReLU-NMD), we propose a Tikhonov regularized ReLU-NMD model, referred to as ReLU-NMD-T. Subsequently, we introduce a momentum accelerated algorithm for handling the ReLU-NMD-T model. A distinctive feature, setting our work apart from most existing studies, is the incorporation of both positive and negative momentum parameters in our algorithm. Our numerical experiments on real-world datasets show the effectiveness of the proposed model and algorithm. Moreover, the code is available at https://github.com/nothing2wang/NMD-TM.
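The premise behind ReLU-based NMD, that a sparse nonnegative matrix can hide a low-rank matrix behind an element-wise ReLU, can be illustrated with a small synthetic example. The sketch below only generates such data; it does not implement ReLU-NMD-T or the momentum algorithm, and the function name and default sizes are hypothetical.

```python
import numpy as np

def relu_low_rank_example(m=30, n=20, r=3, seed=0):
    """Build a rank-r matrix Theta and its element-wise ReLU X = max(0, Theta)."""
    rng = np.random.default_rng(seed)
    W = rng.normal(size=(m, r))
    H = rng.normal(size=(r, n))
    Theta = W @ H                 # low-rank "latent" matrix, rank at most r
    X = np.maximum(Theta, 0.0)    # sparse, nonnegative observation
    return Theta, X

Theta, X = relu_low_rank_example()
print("rank(Theta):", np.linalg.matrix_rank(Theta))
print("rank(X):", np.linalg.matrix_rank(X))
print("fraction of zeros in X:", np.mean(X == 0))
```

For Gaussian factors, roughly half of the entries of `X` are zero and its numerical rank is typically well above `r`, so a direct low-rank fit to `X` would miss the structure that fitting `max(0, Theta)` can recover.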

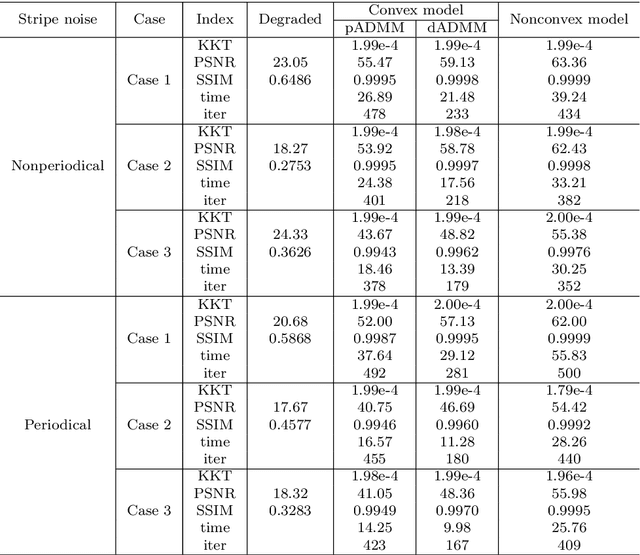

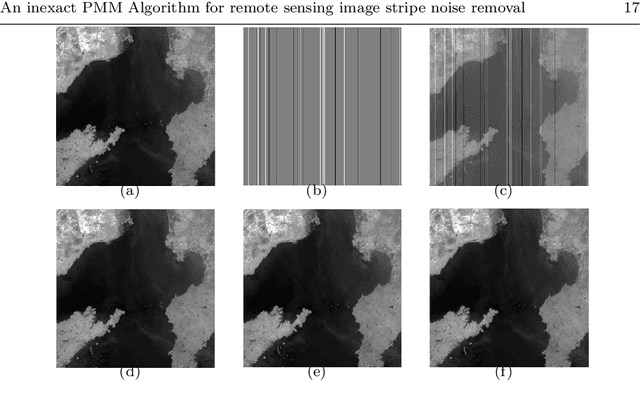

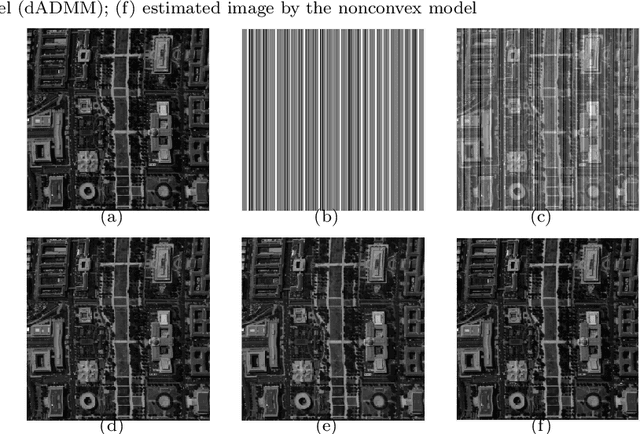

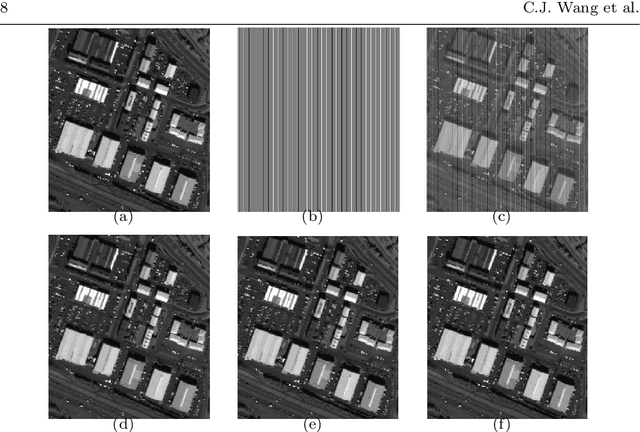

An inexact proximal majorization-minimization Algorithm for remote sensing image stripe noise removal

Aug 17, 2023

Abstract:The stripe noise existing in remote sensing images badly degrades the visual quality and restricts the precision of data analysis. Therefore, many destriping models have been proposed in recent years. In contrast to these existing models, in this paper, we propose a nonconvex model with a DC function (i.e., the difference of convex functions) structure to remove the stripe noise. To solve this model, we make use of the DC structure and apply an inexact proximal majorization-minimization algorithm with each inner subproblem solved by the alternating direction method of multipliers. It is worth mentioning that we design an implementable stopping criterion for the inner subproblem, while the convergence can still be guaranteed. Numerical experiments demonstrate the superiority of the proposed model and algorithm.

It begins with a boundary: A geometric view on probabilistically robust learning

May 30, 2023

Abstract:Although deep neural networks have achieved super-human performance on many classification tasks, they often exhibit a worrying lack of robustness towards adversarially generated examples. Thus, considerable effort has been invested into reformulating Empirical Risk Minimization (ERM) into an adversarially robust framework. Recently, attention has shifted towards approaches which interpolate between the robustness offered by adversarial training and the higher clean accuracy and faster training times of ERM. In this paper, we take a fresh and geometric view on one such method -- Probabilistically Robust Learning (PRL) (Robey et al., ICML, 2022). We propose a geometric framework for understanding PRL, which allows us to identify a subtle flaw in its original formulation and to introduce a family of probabilistic nonlocal perimeter functionals to address this. We prove existence of solutions using novel relaxation methods and study properties as well as local limits of the introduced perimeters.

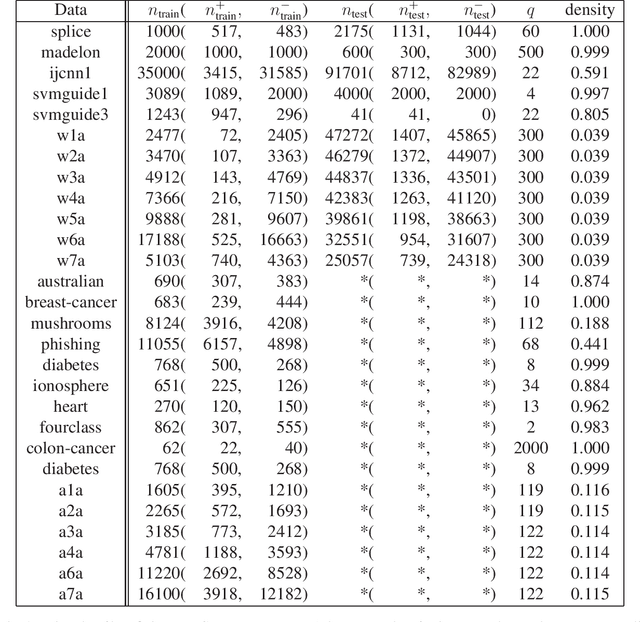

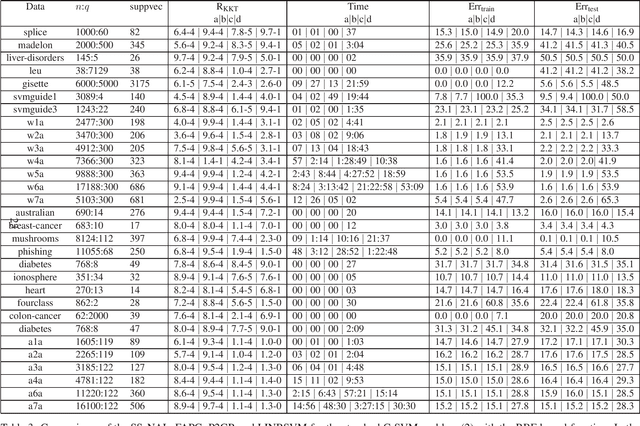

A sparse semismooth Newton based augmented Lagrangian method for large-scale support vector machines

Oct 03, 2019

Abstract:Support vector machines (SVMs) are successful modeling and prediction tools with a variety of applications. Previous work has demonstrated the superiority of SVMs in dealing with high-dimensional, low-sample-size problems. However, the numerical difficulties of SVMs become severe as the sample size increases. Although there exist many solvers for SVMs, only a few of them are designed by exploiting the special structures of SVMs. In this paper, we propose a highly efficient sparse semismooth Newton based augmented Lagrangian method for solving a large-scale convex quadratic programming problem with a linear equality constraint and a simple box constraint, which is generated from the dual problems of the SVMs. By leveraging the primal-dual error bound result, the fast local convergence rate of the augmented Lagrangian method can be guaranteed. Furthermore, by exploiting the second-order sparsity of the problem when using the semismooth Newton method, the algorithm can efficiently solve the aforementioned difficult problems. Finally, numerical comparisons demonstrate that the proposed algorithm outperforms the current state-of-the-art solvers for the large-scale SVMs.
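For reference, the standard soft-margin (C-SVM) dual is one well-known instance of a convex quadratic program with a single linear equality constraint and a box constraint. The paper's exact formulation may differ; the symbols $\alpha$, $Q$, $e$, $y$, $C$, and the kernel $K$ are the usual textbook notation, assumed here.

$$
\min_{\alpha \in \mathbb{R}^n} \; \frac{1}{2}\,\alpha^\top Q\,\alpha - e^\top \alpha
\quad \text{s.t.} \quad y^\top \alpha = 0, \qquad 0 \le \alpha_i \le C, \; i = 1, \dots, n,
$$

where $Q_{ij} = y_i y_j K(x_i, x_j)$, $e$ is the all-ones vector, and $y_i \in \{-1, +1\}$ are the labels. At a solution many $\alpha_i$ sit exactly at the bounds $0$ or $C$; sparsity of this kind is the sort of structure a semismooth Newton method can exploit.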
