Pranay Tankala

The Importance of Being Smoothly Calibrated

Mar 16, 2026

Abstract:Recent work has highlighted the centrality of smooth calibration [Kakade and Foster, 2008] as a robust measure of calibration error. We generalize, unify, and extend previous results on smooth calibration, both as a robust calibration measure, and as a step towards omniprediction, which enables predictions with low regret for downstream decision makers seeking to optimize some proper loss unknown to the predictor. We present a new omniprediction guarantee for smoothly calibrated predictors, for the class of all bounded proper losses. We smooth the predictor by adding some noise to it, and compete against smoothed versions of any benchmark predictor on the space, where we add some noise to the predictor and then post-process it arbitrarily. The omniprediction error is bounded by the smooth calibration error of the predictor and the earth mover's distance from the benchmark. We exhibit instances showing that this dependence cannot, in general, be improved. We show how this unifies and extends prior results [Foster and Vohra, 1998; Hartline, Wu, and Yang, 2025] on omniprediction from smooth calibration. We present a crisp new characterization of smooth calibration in terms of the earth mover's distance to the closest perfectly calibrated joint distribution of predictions and labels. This also yields a simpler proof of the relation to the lower distance to calibration from [Blasiok, Gopalan, Hu, and Nakkiran, 2023]. We use this to show that the upper distance to calibration can be estimated within a quadratic factor with sample complexity independent of the support size of the predictions. This is in contrast to the distance to calibration, where the corresponding problem was known to be information-theoretically impossible: no finite number of samples suffice [Blasiok, Gopalan, Hu, and Nakkiran, 2023].
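
For readers new to the term, the smooth calibration error is usually defined as below. The notation is an assumption chosen for illustration and does not come from the abstract: $f(x) \in [0,1]$ is the prediction, $y \in \{0,1\}$ is the label, and $\mathcal{W}$ is the set of 1-Lipschitz functions $w : [0,1] \to [-1,1]$.

$$
\mathsf{smCE}(f) \;=\; \sup_{w \in \mathcal{W}} \; \mathbb{E}\big[\, w(f(x)) \,\big(y - f(x)\big) \,\big]
$$

Under this definition a perfectly calibrated predictor, one with $\mathbb{E}[y \mid f(x)] = f(x)$, has $\mathsf{smCE}(f) = 0$. The Lipschitz constraint on $w$ is what makes the measure insensitive to small perturbations of the predictions.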

Efficient Calibration for Decision Making

Nov 17, 2025

Abstract:A decision-theoretic characterization of perfect calibration is that an agent seeking to minimize a proper loss in expectation cannot improve their outcome by post-processing a perfectly calibrated predictor. Hu and Wu (FOCS'24) use this to define an approximate calibration measure called calibration decision loss ($\mathsf{CDL}$), which measures the maximal improvement achievable by any post-processing over any proper loss. Unfortunately, $\mathsf{CDL}$ turns out to be intractable to even weakly approximate in the offline setting, given black-box access to the predictions and labels. We suggest circumventing this by restricting attention to structured families of post-processing functions $K$. We define the calibration decision loss relative to $K$, denoted $\mathsf{CDL}_K$, where we consider all proper losses but restrict post-processings to a structured family $K$. We develop a comprehensive theory of when $\mathsf{CDL}_K$ is information-theoretically and computationally tractable, and use it to prove both upper and lower bounds for natural classes $K$. In addition to introducing new definitions and algorithmic techniques to the theory of calibration for decision making, our results give rigorous guarantees for some widely used recalibration procedures in machine learning.

Efficient Testing Implies Structured Symmetry

Nov 05, 2025

Abstract:Given a small random sample of $n$-bit strings labeled by an unknown Boolean function, which properties of this function can be tested computationally efficiently? We show an equivalence between properties that are efficiently testable from few samples and properties with structured symmetry, which depend only on the function's average values on parts of a low-complexity partition of the domain. Without the efficiency constraint, a similar characterization in terms of unstructured symmetry was obtained by Blais and Yoshida (2019). Our main technical tool is supersimulation, which builds on methods from the algorithmic fairness literature to approximate arbitrarily complex functions by small-circuit simulators that fool significantly larger distinguishers. We extend the characterization along other axes as well. We show that allowing parts to overlap exponentially reduces their required number, broadening the scope of the construction from properties testable with $O(\log n)$ samples to properties testable with $O(n)$ samples. For larger sample sizes, we show that any efficient tester is essentially checking for indistinguishability from a bounded collection of small circuits, in the spirit of a characterization of testable graph properties. Finally, we show that our results for Boolean function testing generalize to high-entropy distribution testing on arbitrary domains.

From Fairness to Infinity: Outcome-Indistinguishable (Omni)Prediction in Evolving Graphs

Nov 26, 2024

Abstract:Professional networks provide invaluable entree to opportunity through referrals and introductions. A rich literature shows they also serve to entrench and even exacerbate a status quo of privilege and disadvantage. Hiring platforms, equipped with the ability to nudge link formation, provide a tantalizing opening for beneficial structural change. We anticipate that key to this prospect will be the ability to estimate the likelihood of edge formation in an evolving graph. Outcome-indistinguishable prediction algorithms ensure that the modeled world is indistinguishable from the real world by a family of statistical tests. Omnipredictors ensure that, with appropriate post-processing, predictions yield loss minimization competitive with a benchmark class of predictors for many losses simultaneously. We begin by observing that, by combining a slightly modified form of the online K29 star algorithm of Vovk (2007) with basic facts from the theory of reproducing kernel Hilbert spaces, one can derive simple and efficient online algorithms satisfying outcome indistinguishability and omniprediction, with guarantees that improve upon, or are complementary to, those currently known. This is of independent interest. We apply these techniques to evolving graphs, obtaining online outcome-indistinguishable omnipredictors for rich -- possibly infinite -- sets of distinguishers that capture properties of pairs of nodes, and their neighborhoods. This yields, inter alia, multicalibrated predictions of edge formation with respect to pairs of demographic groups, and the ability to simultaneously optimize loss as measured by a variety of social welfare functions.

Differentially Private Learning Beyond the Classical Dimensionality Regime

Nov 20, 2024

Abstract:We initiate the study of differentially private learning in the proportional dimensionality regime, in which the number of data samples $n$ and problem dimension $d$ approach infinity at rates proportional to one another, meaning that $d / n \to \delta$ as $n \to \infty$ for an arbitrary, given constant $\delta \in (0, \infty)$. This setting is significantly more challenging than that of all prior theoretical work in high-dimensional differentially private learning, which, despite the name, has assumed that $\delta = 0$ or is sufficiently small for problems of sample complexity $O(d)$, a regime typically considered "low-dimensional" or "classical" by modern standards in high-dimensional statistics. We provide sharp theoretical estimates of the error of several well-studied differentially private algorithms for robust linear regression and logistic regression, including output perturbation, objective perturbation, and noisy stochastic gradient descent, in the proportional dimensionality regime. The $1 + o(1)$ factor precision of our error estimates enables a far more nuanced understanding of the price of privacy of these algorithms than that afforded by existing, coarser analyses, which are essentially vacuous in the regime we consider. We incorporate several probabilistic tools that have not previously been used to analyze differentially private learning algorithms, such as a modern Gaussian comparison inequality and recent universality laws with origins in statistical physics.

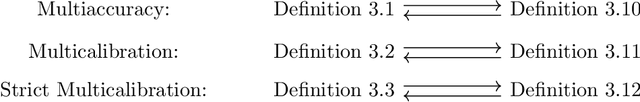

New Insights into Multi-Calibration

Jan 21, 2023

Abstract:We identify a novel connection between the recent literature on multi-group fairness for prediction algorithms and well-established notions of graph regularity from extremal graph theory. We frame our investigation using new, statistical distance-based variants of multi-calibration that are closely related to the concept of outcome indistinguishability. Adopting this perspective leads us naturally not only to our graph theoretic results, but also to new multi-calibration algorithms with improved complexity in certain parameter regimes, and to a generalization of a state-of-the-art result on omniprediction. Along the way, we also unify several prior algorithms for achieving multi-group fairness, as well as their analyses, through the lens of no-regret learning.

Privately Estimating a Gaussian: Efficient, Robust and Optimal

Dec 15, 2022

Abstract:In this work, we give efficient algorithms for privately estimating a Gaussian distribution in both pure and approximate differential privacy (DP) models with optimal dependence on the dimension in the sample complexity. In the pure DP setting, we give an efficient algorithm that estimates an unknown $d$-dimensional Gaussian distribution up to an arbitrarily tiny total variation error using $\widetilde{O}(d^2 \log \kappa)$ samples while tolerating a constant fraction of adversarial outliers. Here, $\kappa$ is the condition number of the target covariance matrix. The sample bound matches the best non-private estimators in the dependence on the dimension (up to a polylogarithmic factor). We prove a new lower bound on differentially private covariance estimation to show that the dependence on the condition number $\kappa$ in the above sample bound is also tight. Prior to our work, only identifiability results (yielding inefficient super-polynomial time algorithms) were known for the problem. In the approximate DP setting, we give an efficient algorithm to estimate an unknown Gaussian distribution up to an arbitrarily tiny total variation error using $\widetilde{O}(d^2)$ samples while tolerating a constant fraction of adversarial outliers. Prior to our work, all efficient approximate DP algorithms incurred a super-quadratic sample cost or were not outlier-robust. For the special case of mean estimation, our algorithm achieves the optimal sample complexity of $\widetilde O(d)$, improving on a $\widetilde O(d^{1.5})$ bound from prior work. Our pure DP algorithm relies on a recursive private preconditioning subroutine that utilizes the recent work on private mean estimation [Hopkins et al., 2022]. Our approximate DP algorithms are based on a substantial upgrade of the method of stabilizing convex relaxations introduced in [Kothari et al., 2022].

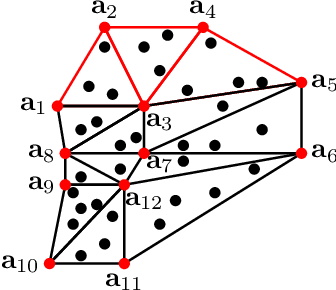

Weighed $\ell_1$ on the simplex: Compressive sensing meets locality

Apr 28, 2021

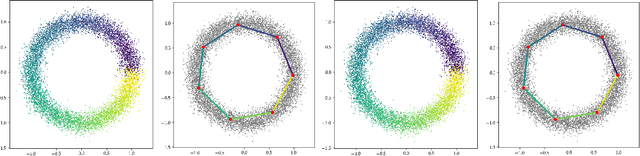

Abstract:Sparse manifold learning algorithms combine techniques in manifold learning and sparse optimization to learn features that could be utilized for downstream tasks. The standard setting of compressive sensing cannot be immediately applied to this setup. Due to the intrinsic geometric structure of data, dictionary atoms might be redundant and do not satisfy the restricted isometry property or coherence condition. In addition, manifold learning emphasizes learning local geometry, which is not reflected in a standard $\ell_1$ minimization problem. We propose weighted $\ell_0$ and weighted $\ell_1$ metrics that encourage representation via neighborhood atoms suited for dictionary-based manifold learning. Assuming that the data is generated from a Delaunay triangulation, we show the equivalence of weighted $\ell_1$ and weighted $\ell_0$. We discuss an optimization program that learns the dictionaries and sparse coefficients and demonstrate the utility of our regularization on synthetic and real datasets.

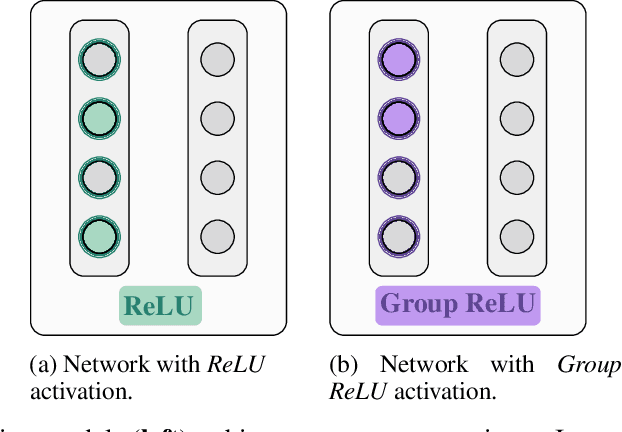

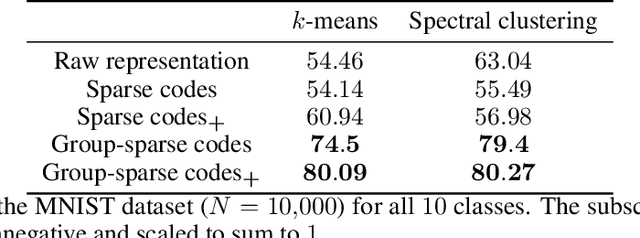

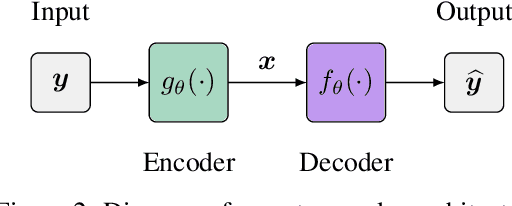

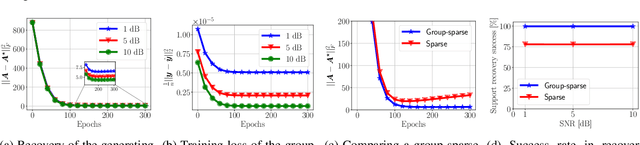

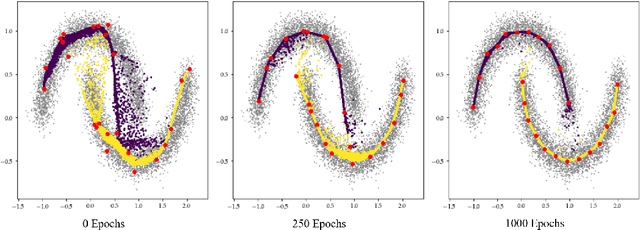

On the convergence of group-sparse autoencoders

Feb 13, 2021

Abstract:Recent approaches in the theoretical analysis of model-based deep learning architectures have studied the convergence of gradient descent in shallow ReLU networks that arise from generative models whose hidden layers are sparse. Motivated by the success of architectures that impose structured forms of sparsity, we introduce and study a group-sparse autoencoder that accounts for a variety of generative models, and utilizes a group-sparse ReLU activation function to force the non-zero units at a given layer to occur in blocks. For clustering models, inputs that result in the same group of active units belong to the same cluster. We proceed to analyze the gradient dynamics of a shallow instance of the proposed autoencoder, trained with data adhering to a group-sparse generative model. In this setting, we theoretically prove the convergence of the network parameters to a neighborhood of the generating matrix. We validate our model through numerical analysis and highlight the superior performance of networks with a group-sparse ReLU compared to networks that utilize traditional ReLUs, both in sparse coding and in parameter recovery tasks. We also provide real data experiments to corroborate the simulated results, and emphasize the clustering capabilities of structured sparsity models.

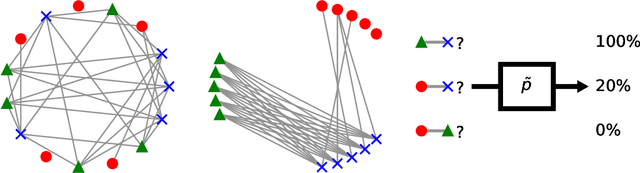

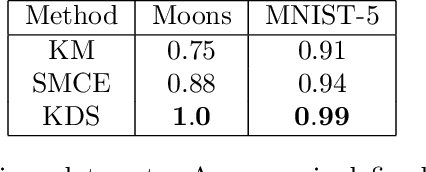

Manifold Learning and Deep Clustering with Local Dictionaries

Dec 03, 2020

Abstract:We introduce a novel clustering algorithm for data sampled from a union of nonlinear manifolds. Our algorithm extends a popular manifold clustering framework, which first computes a sparse similarity graph over the input data and then uses spectral methods to find clusters in this graph. While previous manifold learning algorithms directly compute similarity scores between pairs of data points, our algorithm first augments the data set with a small handful of representative atoms and then computes similarity scores between data points and atoms. To measure similarity, we express each data point as a sparse convex combination of nearby atoms. To learn the atoms, we employ algorithm unrolling, an increasingly popular technique for structured deep learning. Ultimately, this departure from established manifold learning techniques leads to improvements in clustering accuracy and scalability.
