Natasha Fernandes

Generalizable IoT Traffic Representations for Cross-Network Device Identification

Jan 27, 2026

Abstract: Machine learning models have demonstrated strong performance in classifying network traffic and identifying Internet-of-Things (IoT) devices, enabling operators to discover and manage IoT assets at scale. However, many existing approaches rely on end-to-end supervised pipelines or task-specific fine-tuning, resulting in traffic representations that are tightly coupled to labeled datasets and deployment environments, which can limit generalizability. In this paper, we study the problem of learning generalizable traffic representations for IoT device identification. We design compact encoder architectures that learn per-flow embeddings from unlabeled IoT traffic and evaluate them using a frozen-encoder protocol with a simple supervised classifier. Our specific contributions are threefold. (1) We develop unsupervised encoder-decoder models that learn compact traffic representations from unlabeled IoT network flows and assess their quality through reconstruction-based analysis. (2) We show that these learned representations can be used effectively for IoT device-type classification using simple, lightweight classifiers trained on frozen embeddings. (3) We provide a systematic benchmarking study against state-of-the-art pretrained traffic encoders, showing that larger models do not necessarily yield more robust representations for IoT traffic. Using more than 18 million real IoT traffic flows collected across multiple years and deployment environments, we learn traffic representations from unlabeled data and evaluate device-type classification on disjoint labeled subsets, achieving macro F1-scores exceeding 0.9 and demonstrating robustness under cross-environment deployment.
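The macro F1-score used for device-type classification averages the per-class F1 scores with equal weight, so a rare device type counts as much as a common one. A minimal sketch in plain Python; the device labels are hypothetical and the function is an illustration, not code from the paper:

```python
from collections import Counter

def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over every class seen in y_true or y_pred."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class p was wrong
            fn[t] += 1  # true class t was missed
    scores = []
    for c in labels:
        denom = 2 * tp[c] + fp[c] + fn[c]
        scores.append(2 * tp[c] / denom if denom else 0.0)  # F1 = 2TP / (2TP + FP + FN)
    return sum(scores) / len(scores)

# Hypothetical device-type labels, for illustration only.
y_true = ["camera", "camera", "plug", "plug", "hub"]
y_pred = ["camera", "plug", "plug", "plug", "hub"]
print(round(macro_f1(y_true, y_pred), 3))  # → 0.822
```

Here the per-class scores are 2/3 (camera), 0.8 (plug) and 1.0 (hub), so the single camera error pulls the macro average down more than it would pull down plain accuracy.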

What is the Cost of Differential Privacy for Deep Learning-Based Trajectory Generation?

Jun 11, 2025

Abstract: While location trajectories offer valuable insights, they also reveal sensitive personal information. Differential Privacy (DP) offers formal protection, but achieving a favourable utility-privacy trade-off remains challenging. Recent works explore deep learning-based generative models to produce synthetic trajectories. However, current models lack formal privacy guarantees and rely on conditional information derived from real data during generation. This work investigates the utility cost of enforcing DP in such models, addressing three research questions across two datasets and eleven utility metrics. (1) We evaluate how DP-SGD, the standard DP training method for deep learning, affects the utility of state-of-the-art generative models. (2) Since DP-SGD is limited to unconditional models, we propose a novel DP mechanism for conditional generation that provides formal guarantees and assess its impact on utility. (3) We analyse how model types (Diffusion, VAE, and GAN) affect the utility-privacy trade-off. Our results show that DP-SGD significantly impacts performance, although some utility remains if the dataset is sufficiently large. The proposed DP mechanism improves training stability, particularly when combined with DP-SGD, for unstable models such as GANs and on smaller datasets. Diffusion models yield the best utility without guarantees, but with DP-SGD, GANs perform best, indicating that the best non-private model is not necessarily optimal when targeting formal guarantees. In conclusion, DP trajectory generation remains a challenging task, and formal guarantees are currently only feasible with large datasets and in constrained use cases.
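The standard DP-SGD update clips each example's gradient to a norm bound, sums the clipped gradients, adds Gaussian noise scaled to that bound, and averages. A minimal pure-Python sketch of one such step, assuming plain lists as vectors; privacy accounting and minibatch sampling are omitted, the parameter names are illustrative, and the paper's proposed mechanism for conditional generation is not shown:

```python
import math
import random

def clip(grad, max_norm):
    """Scale a per-example gradient so that its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]

def dp_sgd_step(params, per_example_grads, lr, max_norm, noise_multiplier, rng=random):
    """One DP-SGD update: clip each example's gradient, sum, add Gaussian noise, average."""
    clipped = [clip(g, max_norm) for g in per_example_grads]
    batch_size = len(clipped)
    summed = [sum(col) for col in zip(*clipped)]
    sigma = noise_multiplier * max_norm  # noise std is tied to the clipping bound
    noisy_mean = [(s + rng.gauss(0.0, sigma)) / batch_size for s in summed]
    return [p - lr * g for p, g in zip(params, noisy_mean)]

params = [0.5, -0.2]
grads = [[3.0, 4.0], [0.3, 0.4]]  # toy per-example gradients
print(dp_sgd_step(params, grads, lr=0.1, max_norm=1.0, noise_multiplier=1.1))
```

Clipping is what bounds any single example's influence on the sum, and that bound is what the Gaussian noise is calibrated against.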

Empirical Calibration and Metric Differential Privacy in Language Models

Mar 18, 2025

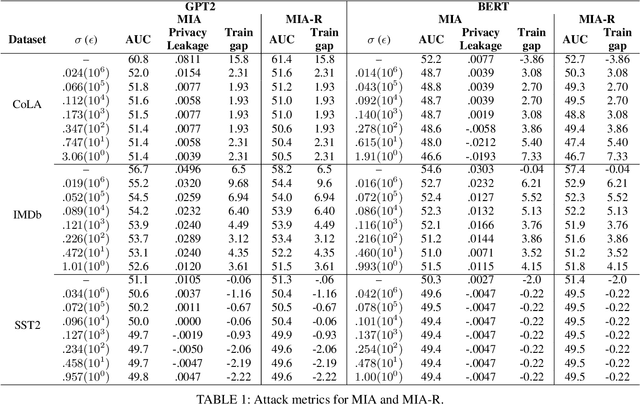

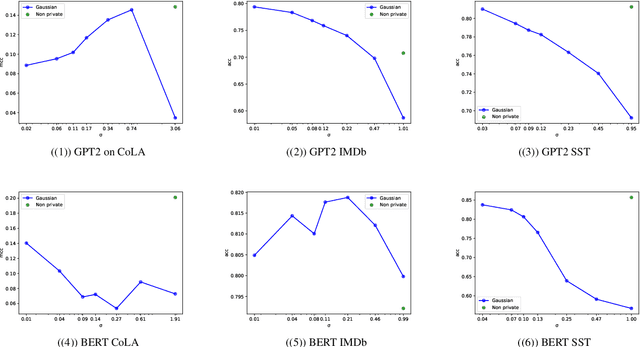

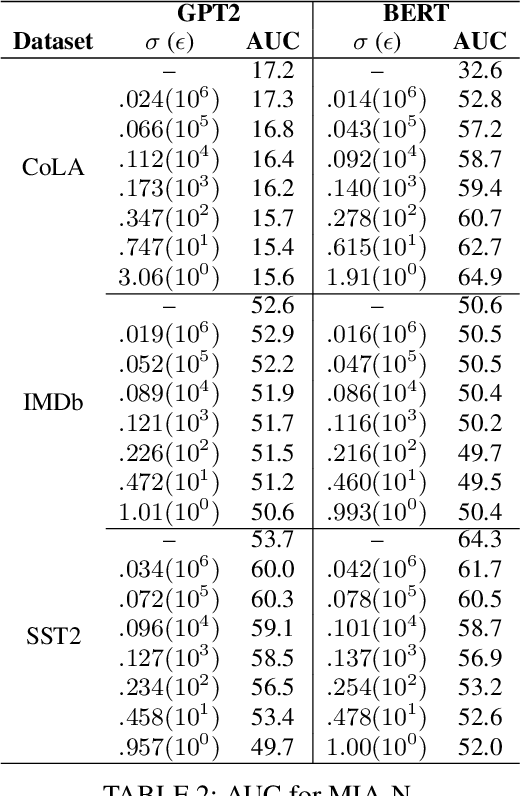

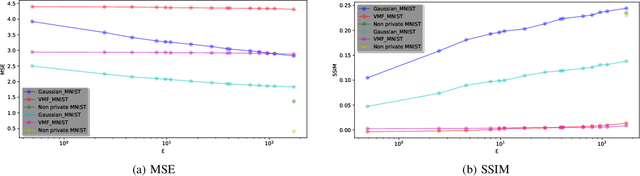

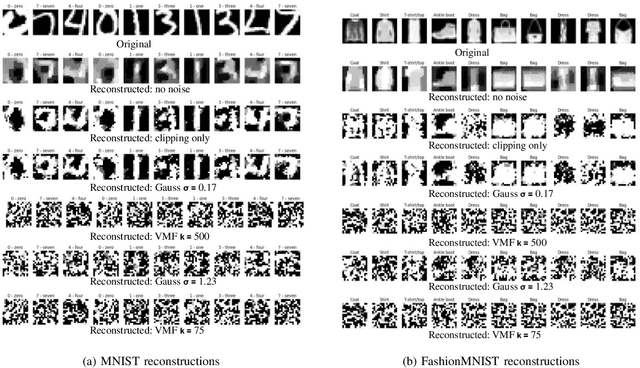

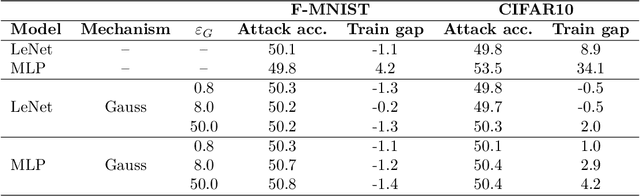

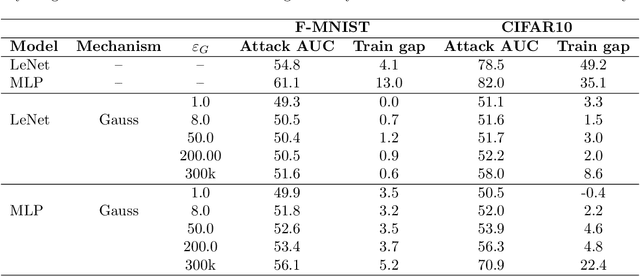

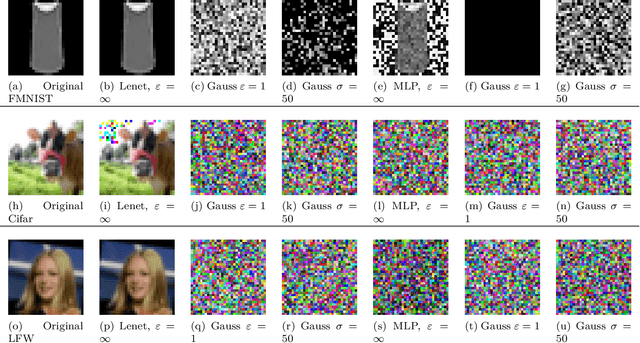

Abstract: NLP models trained with differential privacy (DP) usually adopt the DP-SGD framework, and privacy guarantees are often reported in terms of the privacy budget $\epsilon$. However, $\epsilon$ has no intrinsic meaning, and values are generally not comparable across variants of the framework. Work in image processing has therefore explored how to empirically calibrate noise across frameworks using Membership Inference Attacks (MIAs). However, this kind of calibration has not been established for NLP. In this paper, we show that MIAs offer little help in calibrating privacy, whereas reconstruction attacks are more useful. As a use case, we define a novel kind of directional privacy based on the von Mises-Fisher (VMF) distribution, a metric DP mechanism that perturbs angular distance rather than adding isotropic Gaussian noise, and apply this to NLP architectures. We show that, even though formal guarantees are incomparable, empirical privacy calibration reveals that each mechanism has different areas of strength with respect to utility-privacy trade-offs.
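One standard way to read a reported $\epsilon$ treats membership inference as a hypothesis test: any $(\epsilon,\delta)$-DP mechanism limits an attacker's true-positive rate at false-positive rate FPR to at most $\min(e^{\epsilon}\,\mathrm{FPR} + \delta,\ 1 - e^{-\epsilon}(1 - \delta - \mathrm{FPR}))$. A minimal sketch of that bound, offered as background only; it is not the calibration method of the paper:

```python
import math

def max_mia_tpr(fpr, epsilon, delta=0.0):
    """Upper bound on a membership-inference attack's TPR at a given FPR
    against any (epsilon, delta)-DP mechanism (hypothesis-testing view of DP)."""
    bound1 = math.exp(epsilon) * fpr + delta                 # from P[S|D] <= e^eps P[S|D'] + delta
    bound2 = 1.0 - math.exp(-epsilon) * (1.0 - delta - fpr)  # same inequality on the complement event
    return min(1.0, bound1, bound2)

for eps in (0.5, 1.0, 4.0, 8.0):
    print(eps, round(max_mia_tpr(0.01, eps, 1e-5), 4))
```

At FPR = 0.01 the bound is already close to 1 by $\epsilon = 8$, which is one way of seeing why $\epsilon$ alone says little about practical risk.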

Comparing privacy notions for protection against reconstruction attacks in machine learning

Feb 06, 2025

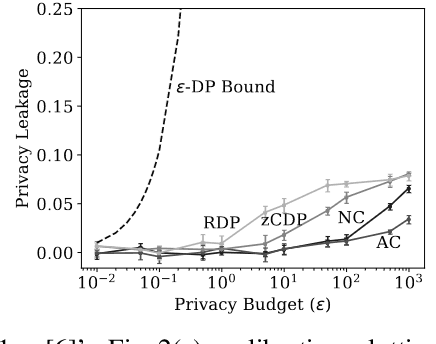

Abstract: Within the machine learning community, reconstruction attacks are a principal concern and have been identified even in federated learning (FL), which was designed with privacy preservation in mind. In response to these threats, the privacy community recommends the use of differential privacy (DP) in the stochastic gradient descent algorithm, termed DP-SGD. However, the proliferation of variants of DP in recent years, such as metric privacy, has made it challenging to conduct a fair comparison between different mechanisms due to the different meanings of the privacy parameters $\epsilon$ and $\delta$ across different variants. Thus, interpreting the practical implications of $\epsilon$ and $\delta$ in the FL context and amongst variants of DP remains ambiguous. In this paper, we lay a foundational framework for comparing mechanisms with differing notions of privacy guarantees, namely $(\epsilon,\delta)$-DP and metric privacy. We provide two foundational means of comparison: firstly, via the well-established $(\epsilon,\delta)$-DP guarantees, made possible through the Rényi differential privacy framework; and secondly, via Bayes' capacity, which we identify as an appropriate measure for reconstruction threats.
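The Rényi DP route can be made concrete for the Gaussian mechanism: with sensitivity 1 and noise standard deviation $\sigma$, one release satisfies $(\alpha, \alpha/(2\sigma^2))$-RDP, RDP composes additively over $T$ releases, and $(\alpha, \rho)$-RDP implies $(\rho + \log(1/\delta)/(\alpha-1), \delta)$-DP. A minimal sketch, assuming full-batch steps with no subsampling (so it overestimates $\epsilon$ relative to subsampled DP-SGD accountants) and an arbitrary grid of orders:

```python
import math

def gaussian_rdp(alpha, noise_multiplier, steps):
    """RDP at order alpha for `steps` compositions of the Gaussian mechanism
    with sensitivity 1 and noise std = noise_multiplier (no subsampling)."""
    return steps * alpha / (2.0 * noise_multiplier ** 2)

def rdp_to_dp(noise_multiplier, steps, delta, orders=None):
    """Convert to (epsilon, delta)-DP via eps = rdp(alpha) + log(1/delta) / (alpha - 1),
    taking the smallest value over a grid of orders alpha > 1."""
    if orders is None:
        orders = [1.25, 1.5, 2, 3, 4, 6, 8, 16, 32, 64, 128, 256]
    best = math.inf
    for alpha in orders:
        eps = gaussian_rdp(alpha, noise_multiplier, steps) + math.log(1.0 / delta) / (alpha - 1)
        best = min(best, eps)
    return best

print(round(rdp_to_dp(noise_multiplier=10.0, steps=100, delta=1e-5), 3))
```

Minimising over several orders matters: small $\alpha$ inflates the $\log(1/\delta)$ term, while large $\alpha$ inflates the composed RDP term.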

Bayes' capacity as a measure for reconstruction attacks in federated learning

Jun 19, 2024

Abstract: Within the machine learning community, reconstruction attacks are a principal concern and have been identified even in federated learning, which was designed with privacy preservation in mind. In federated learning, it has been shown that an adversary with knowledge of the machine learning architecture is able to infer the exact value of a training element given an observation of the weight updates performed during stochastic gradient descent. In response to these threats, the privacy community recommends the use of differential privacy in the stochastic gradient descent algorithm, termed DP-SGD. However, DP has not yet been formally established as an effective countermeasure against reconstruction attacks. In this paper, we formalise the reconstruction threat model using the information-theoretic framework of quantitative information flow. We show that Bayes' capacity, related to the Sibson mutual information of order infinity, represents a tight upper bound on the leakage of the DP-SGD algorithm to an adversary interested in performing a reconstruction attack. We provide empirical results demonstrating the effectiveness of this measure for comparing mechanisms against reconstruction threats.
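For a finite channel matrix $C$ with $C_{x,y} = P(y \mid x)$, Bayes' capacity has a standard closed form in quantitative information flow: the multiplicative Bayes capacity is $\sum_y \max_x C_{x,y}$, and its logarithm coincides with the Sibson mutual information of order infinity for any full-support prior. A minimal sketch for finite channels only; the DP-SGD channel analysed in the paper is continuous, so the randomized-response example here is purely illustrative:

```python
import math

def multiplicative_bayes_capacity(channel):
    """Sum over outputs y of max over secrets x of channel[x][y].
    channel[x][y] = P(output y | secret x); each row sums to 1."""
    n_outputs = len(channel[0])
    return sum(max(row[y] for row in channel) for y in range(n_outputs))

def bayes_capacity_bits(channel):
    """log2 of the multiplicative Bayes capacity, i.e. the maximum multiplicative
    Bayes leakage over all priors (attained at the uniform prior)."""
    return math.log2(multiplicative_bayes_capacity(channel))

# Toy randomized-response channel on a binary secret (hypothetical example).
p = 0.75
rr = [[p, 1 - p], [1 - p, p]]
print(bayes_capacity_bits(rr))
```

An identity channel on $n$ secrets leaks $\log_2 n$ bits, while a channel whose rows are all equal leaks nothing; randomized response sits between the two.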

Directional Privacy for Deep Learning

Nov 09, 2022

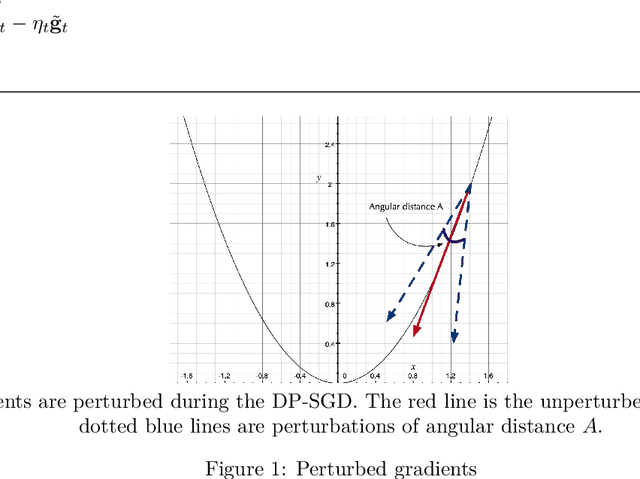

Abstract: Differentially Private Stochastic Gradient Descent (DP-SGD) is a key method for applying privacy in the training of deep learning models. It adds isotropic Gaussian noise to gradients during training, which can perturb these gradients in any direction, damaging utility. Metric DP, however, can provide alternative mechanisms based on arbitrary metrics that might be more suitable. In this paper we apply directional privacy, via a mechanism based on the von Mises-Fisher (VMF) distribution, to perturb gradients in terms of angular distance so that gradient direction is broadly preserved. We show that this provides $\epsilon d$-privacy for deep learning training, rather than the $(\epsilon, \delta)$-privacy of the Gaussian mechanism; and that experimentally, on key datasets, the VMF mechanism can outperform the Gaussian in the utility-privacy trade-off.
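The VMF density on the unit sphere is proportional to $\exp(\kappa \mu^\top x)$ with a normaliser that depends only on $\kappa$, so for two mean directions the density ratio is $\exp(\kappa (\mu - \mu')^\top x) \le \exp(\kappa \lVert \mu - \mu' \rVert_2)$; this is the sense in which the concentration $\kappa$ plays the role of $\epsilon$ for a distance between directions. In two dimensions the VMF reduces to the von Mises distribution, which Python's `random.vonmisesvariate` samples. A minimal 2-D sketch, assuming the gradient's original norm is kept unchanged; the paper works in high dimensions and its exact handling of norms may differ:

```python
import math
import random

def von_mises_perturb_2d(grad, kappa, rng=random):
    """Resample the direction of a 2-D gradient from a von Mises distribution
    centred on its current direction, keeping the original norm (an assumption)."""
    x, y = grad
    norm = math.hypot(x, y)
    if norm == 0.0:
        return [0.0, 0.0]  # a zero gradient has no direction to perturb
    mean_angle = math.atan2(y, x) % (2 * math.pi)
    # Larger kappa concentrates the sampled angle more tightly around mean_angle.
    theta = rng.vonmisesvariate(mean_angle, kappa)
    return [norm * math.cos(theta), norm * math.sin(theta)]

rng = random.Random(0)
for kappa in (1.0, 10.0, 100.0):
    print(kappa, von_mises_perturb_2d([3.0, 4.0], kappa, rng))
```

Unlike isotropic Gaussian noise, every output here has the input's length and differs only in angle, which is what "broadly preserving gradient direction" amounts to when $\kappa$ is large.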

Locality Sensitive Hashing with Extended Differential Privacy

Nov 01, 2020

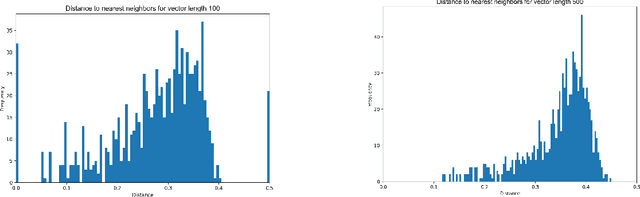

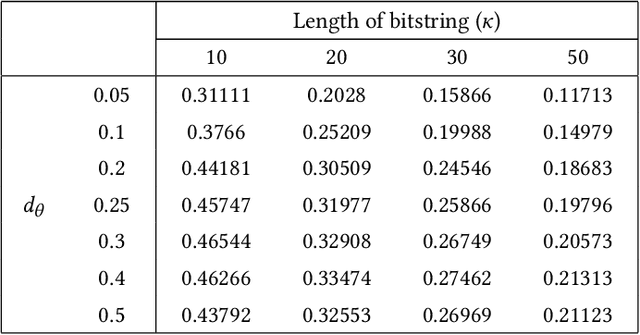

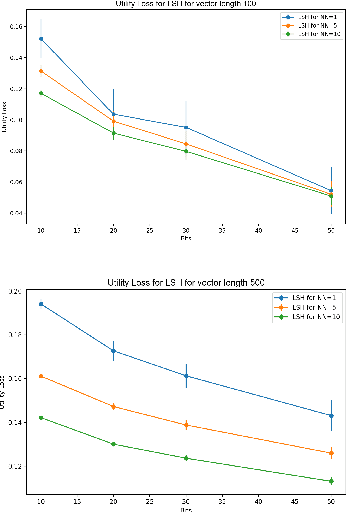

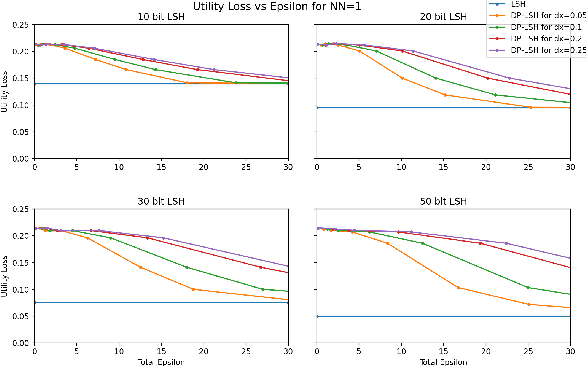

Abstract: Extended differential privacy, a generalization of standard differential privacy (DP) using a general metric rather than the Hamming metric, has been widely studied to provide rigorous privacy guarantees while keeping high utility. However, existing works on extended DP focus on a specific metric such as the Euclidean metric, the $l_1$ metric, and the Earth Mover's metric, and cannot be applied to other metrics. Consequently, existing extended DP mechanisms are limited to a small number of applications such as location-based services and document processing. In this paper, we propose a mechanism providing extended DP with a wide range of metrics. Our mechanism is based on locality sensitive hashing (LSH) and randomized response, and can be applied to a wide variety of metrics including the angular distance (or cosine) metric, Jaccard metric, Earth Mover's metric, and $l_p$ metric. Moreover, our mechanism works well for personal data in a high-dimensional space. We theoretically analyze the privacy properties of our mechanism, introducing new versions of concentrated and probabilistic extended DP to explain the guarantees provided. Finally, we apply our mechanism to friend matching based on high-dimensional personal data with an angular distance metric in the local model. We show that existing local DP mechanisms such as RAPPOR do not work in this application. We also show through experiments that our mechanism makes friend matching possible with rigorous privacy guarantees and high utility.
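Random-hyperplane LSH (SimHash) is one standard LSH family for the angular distance: each bit is the sign of a dot product with a random Gaussian vector, and two vectors at angle $\theta$ disagree on a given bit with probability $\theta/\pi$. A minimal sketch that hashes a vector and then applies randomized response to each bit; the per-bit budget `eps_per_bit`, the helper names and the toy vector are assumptions for illustration, not the paper's exact construction:

```python
import math
import random

def simhash(vec, hyperplanes):
    """Random-hyperplane LSH: one bit per hyperplane, the sign of the dot product."""
    return [1 if sum(v * h for v, h in zip(vec, plane)) >= 0 else 0 for plane in hyperplanes]

def randomized_response(bits, eps_per_bit, rng=random):
    """Keep each bit with probability e^eps / (1 + e^eps), otherwise flip it."""
    keep_prob = 1.0 / (1.0 + math.exp(-eps_per_bit))
    return [b if rng.random() < keep_prob else 1 - b for b in bits]

rng = random.Random(0)
dim, n_bits = 5, 8
# The hyperplanes act as shared public randomness, drawn once for all users.
hyperplanes = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_bits)]
user_vector = [0.2, -1.0, 0.5, 0.0, 0.7]  # hypothetical personal data vector
code = simhash(user_vector, hyperplanes)
print(code, randomized_response(code, eps_per_bit=1.0, rng=rng))
```

Per-bit randomized response is private with respect to the Hamming distance between hash codes; because that distance tracks the angular distance only in probability, a guarantee stated in terms of the angle itself naturally takes a probabilistic or concentrated form, as the abstract describes.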

Generalised Differential Privacy for Text Document Processing

Nov 26, 2018

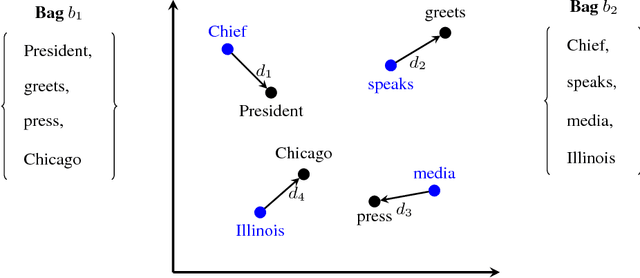

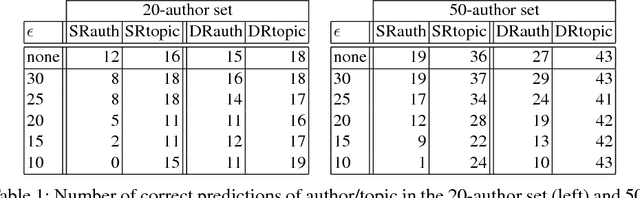

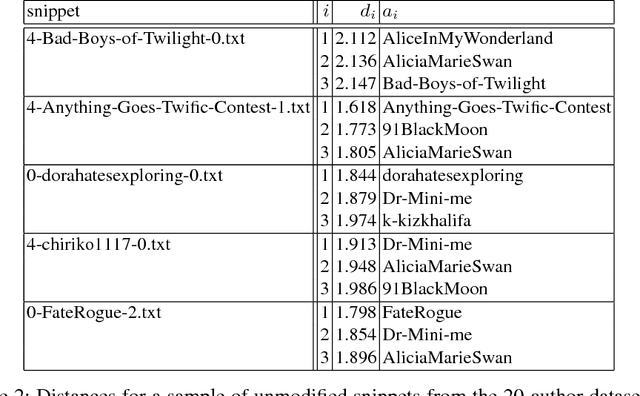

Abstract: We address the problem of how to "obfuscate" texts by removing stylistic clues which can identify authorship, whilst preserving (as much as possible) the content of the text. In this paper we combine ideas from "generalised differential privacy" and machine learning techniques for text processing to model privacy for text documents. We define a privacy mechanism that operates at the level of text documents represented as "bags-of-words"; these representations are typical in machine learning and contain sufficient information to carry out many kinds of classification tasks, including topic identification and authorship attribution (of the original documents). We show that our mechanism satisfies privacy with respect to a metric for semantic similarity, thereby balancing utility, defined by the semantic content of texts, against the obfuscation of stylistic clues. We demonstrate our implementation on a "fan fiction" dataset, confirming that it is indeed possible to disguise writing style effectively whilst preserving enough information and variation for accurate content classification tasks.
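A minimal sketch of a per-word metric-DP mechanism over a bag-of-words, assuming a toy vocabulary with hypothetical 2-D embeddings and Euclidean distance as the semantic metric. Each word $w$ is replaced by $w'$ with probability proportional to $\exp(-\epsilon\, d(w, w')/2)$; the factor $1/2$ absorbs the change in the normalising constant, which by the triangle inequality is at most $e^{\epsilon d/2}$, so each replacement is $\epsilon d$-private. This exponential-mechanism-style construction is an illustration and not necessarily the paper's exact mechanism:

```python
import math
import random
from collections import Counter

# Hypothetical 2-D word embeddings, for illustration only.
EMBEDDINGS = {
    "ship": (1.0, 0.0), "boat": (0.9, 0.1), "vessel": (0.8, 0.2),
    "dragon": (0.0, 1.0), "wyrm": (0.1, 0.9),
}

def dist(a, b):
    """Semantic distance between two words: Euclidean distance of their embeddings."""
    return math.dist(EMBEDDINGS[a], EMBEDDINGS[b])

def perturb_word(word, epsilon, rng=random):
    """Sample a replacement w' with probability proportional to exp(-epsilon * d(word, w') / 2)."""
    vocab = list(EMBEDDINGS)
    weights = [math.exp(-epsilon * dist(word, w) / 2.0) for w in vocab]
    return rng.choices(vocab, weights=weights, k=1)[0]

def obfuscate_bag(text, epsilon, rng=random):
    """Build a bag-of-words and perturb every word occurrence independently."""
    out = Counter()
    for word, count in Counter(text.split()).items():
        for _ in range(count):
            out[perturb_word(word, epsilon, rng)] += 1
    return out

print(obfuscate_bag("ship boat dragon ship", epsilon=2.0, rng=random.Random(0)))
```

Nearby words such as "ship" and "boat" swap often while distant ones such as "ship" and "dragon" rarely do, which is how a semantic metric lets style-bearing word choices blur while topic-level content survives.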